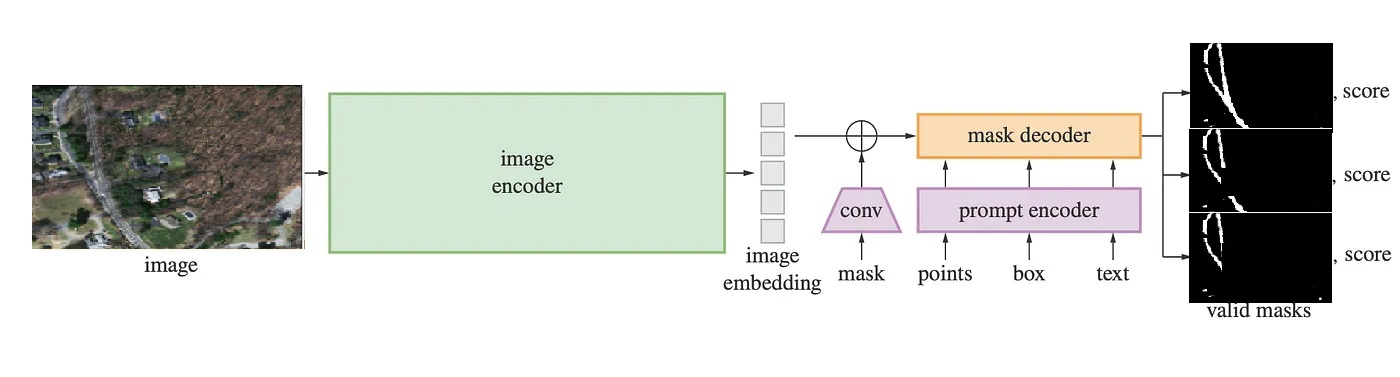

SAM (Segment Anything Model) is a promptable image segmentation model designed to predict pixel-level masks for a wide range of objects within an image. It consists of three parts:

- an image encoder: a heavy vision transformer backbone that generates image features.

- a prompt encoder: a lightweight embedding module that creates sparse and dense embeddings from prompt inputs (points, boxes, and/or masks).

- a mask decoder that takes the outputs of the image encoder and the prompt encoder and produces masks.

The image is passed through the image encoder, and the resulting latent features are combined with the prompt features before being fed into the mask decoder, which generates the output masks.
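To make the data flow concrete, here is a minimal, dependency-free sketch that only traces array shapes through the three stages. It does not load or run SAM. The function names are hypothetical, and the shapes assume the defaults described in the SAM paper (1024x1024 input, 16x16 patches, 256-dimensional embeddings, 4x upsampling in the decoder, three candidate masks). Implementation details such as padding tokens and the batch dimension are left out.

```python
# Toy shape trace of the SAM data flow; no real model is loaded.
# All function names are hypothetical. Shapes assume the defaults reported
# in the SAM paper and ignore the batch dimension.

EMBED_DIM = 256  # channel width shared by image and prompt embeddings
PATCH = 16       # the ViT backbone downsamples the image by this factor


def image_encoder_shape(height, width):
    """Image encoder: image -> (C, H/16, W/16) feature map."""
    return (EMBED_DIM, height // PATCH, width // PATCH)


def prompt_encoder_shapes(num_points, num_boxes, feat_hw):
    """Prompt encoder: sparse tokens for points/boxes, dense grid for masks."""
    # Each point is one token; each box is encoded as two corner tokens.
    num_tokens = num_points + 2 * num_boxes
    sparse = (num_tokens, EMBED_DIM)
    # The dense embedding lives on the same grid as the image features.
    dense = (EMBED_DIM, *feat_hw)
    return sparse, dense


def mask_decoder_shape(image_feats, sparse, dense, num_masks=3):
    """Mask decoder: combine both inputs, return low-resolution mask shape."""
    # Dense prompt features are added element-wise to the image features,
    # so the two shapes must agree.
    assert image_feats == dense
    # Sparse tokens must share the embedding width to attend to the image.
    assert sparse[1] == image_feats[0]
    # The decoder upsamples the feature grid by 4x before predicting masks.
    return (num_masks, image_feats[1] * 4, image_feats[2] * 4)


feats = image_encoder_shape(1024, 1024)
sparse, dense = prompt_encoder_shapes(num_points=2, num_boxes=1, feat_hw=feats[1:])
masks = mask_decoder_shape(feats, sparse, dense)

print("image features:", feats)
print("sparse prompts:", sparse)
print("dense prompts: ", dense)
print("mask logits:   ", masks)
```

The trace shows why the prompt encoder can stay lightweight: the expensive image features are computed once, and each new prompt only changes the small sparse and dense inputs to the decoder.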

SAM paper: https://arxiv.org/pdf/2304.02643.pdf

Link to the dataset used in this demonstration: https://www.kaggle.com/insaff/massachusetts-roads-dataset