Kubernetes Event-driven Autoscaling (KEDA) is a component and extension of the Horizontal Pod Autoscaler (HPA) that can be added to any Kubernetes cluster, including Azure Kubernetes Service (AKS), to reactively scale workloads based on various types of event.

The below video provides a very high-level overview and demo of this scenario:

This repo guides you through the setup of a lab environment to demonstrate the utility of KEDA with AKS. The lab comprises the following resources:

- Azure Storage Account and Queue - The KEDA target which will be monitored by the AKS KEDA operator to determine scaling requirements.

- Azure Container Registry - The registry for building and storing the container images used in this demonstration.

- Azure Kubernetes Service - The compute environment used to demonstrate workload scaling with KEDA.

- Azure Managed Identity - The identity used by the AKS workloads and KEDA operator to authenticate to Azure resources.

- (Optional) Azure Log Analytics Workspace - The log store used by AKS to enable Container Insights and view real-time workload metrics, including autoscaling behaviour.

The objective of this lab is to demonstrate the autoscaling behaviour of KEDA, centered around an Azure storage queue. The queue is monitored by the KEDA operator within the AKS cluster in order to determine scaling requirements.

To simulate a scalable workload, two apps are created to interact with the Azure storage account queue:

- A Message Generator app will send a random number of messages to an Azure storage queue every 60 seconds. The lower and upper bounds for the number of messages can be set by the `MESSAGE_COUNT_PER_MINUTE_MIN` and `MESSAGE_COUNT_PER_MINUTE_MAX` environment variables.

- A Message Processor app will receive messages from an Azure storage queue and simulate some amount of processing time. The processing time per message can be set with the `MESSAGE_PROCESSING_SECONDS` environment variable.

The concept here is that the message generator will post batches of messages into the storage queue every 60 seconds. One or more message processors will continually check the queue and retrieve, process, and delete the messages. However, each message processor is only able to process a set number of messages per second, and so the number of message processors will need to scale in order to work through the messages in the storage queue.

The message generator app will be deployed as a Kubernetes pod on the AKS cluster, and use a workload identity to authenticate and post messages to the storage queue every 60 seconds.

The message processor app will be deployed as a Kubernetes deployment on the AKS cluster, and use a workload identity to authenticate and retrieve, process and delete messages as quickly as it can. The deployment will be KEDA-enabled, allowing KEDA to scale the number of pods in the deployment based on the length of the storage queue. In this way, the deployment will effectively scale up and down based on current demand.

The lab can be tuned to simulate a workload by modifying the environment variables for each app.

- Setting the message generator `MESSAGE_COUNT_PER_MINUTE_MAX` to `120` and `MESSAGE_COUNT_PER_MINUTE_MIN` to `12` with the message processor `MESSAGE_PROCESSING_SECONDS` to `10` (6 messages/minute) will demonstrate a moderately scalable workload and should see KEDA scale the message processor deployment between 2 and 20 pods.

- Setting the message generator `MESSAGE_COUNT_PER_MINUTE_MAX` to `1200` and `MESSAGE_COUNT_PER_MINUTE_MIN` to `120` with the message processor `MESSAGE_PROCESSING_SECONDS` to `10` (6 messages/minute) will demonstrate a highly scalable workload and should see KEDA scale the message processor deployment between 20 and 200 pods.
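The pod ranges quoted in these two scenarios follow from simple arithmetic: a processor that spends `MESSAGE_PROCESSING_SECONDS` on each message handles 60 divided by that many messages per minute. The following is a minimal bash sketch of that estimate. `pods_needed` is a hypothetical helper, not part of the lab, and it ignores polling delay, cooldown, and pod start-up time.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of the lab): estimate how many processor pods
# are needed to keep pace with a given message rate.
#   $1 = messages arriving per minute
#   $2 = seconds spent processing each message
pods_needed() {
  local per_minute=$1 seconds=$2
  # Each pod handles 60/seconds messages per minute, so
  # pods = ceil(per_minute * seconds / 60), using integer arithmetic.
  echo $(( (per_minute * seconds + 59) / 60 ))
}

# Moderate workload: 12 to 120 messages/minute at 10 seconds each.
echo "moderate: $(pods_needed 12 10) to $(pods_needed 120 10) pods"
# High workload: 120 to 1200 messages/minute at 10 seconds each.
echo "high: $(pods_needed 120 10) to $(pods_needed 1200 10) pods"
```

Changing the second argument shows how a shorter or longer processing time shifts the range of pods KEDA would need to reach.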

The following sections walk through the lab setup and configuration. By the end of these steps you should have a fully functioning lab environment with a simulated workload demonstrating the autoscaling capability of KEDA.

- 1. Environment

- 2. Storage

- 3. Container Registry

- 4. Azure Kubernetes Service

- (Optional) 5. Monitoring

- 6. Workload Identity

- 7. Permissions

- 8. Applications

- 9. Deployment

- 10. Load Testing

Note

The variables set during setup steps may be referenced by later steps. Take care to ensure these are not lost or overwritten during deployment.
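One way to guard against losing variables, sketched here on the assumption that you are working in bash, is to save them to a file as you go and source that file in any new shell. The function names and the `keda-lab.env` file name are hypothetical and not part of the lab.

```shell
#!/usr/bin/env bash
# Hypothetical helpers (not part of the lab) for persisting lab variables.
LAB_ENV_FILE="${LAB_ENV_FILE:-./keda-lab.env}"   # assumed file name

# save_lab_vars NAME... : append each named variable to the env file,
# shell-quoted so that it can be sourced back safely.
save_lab_vars() {
  local name
  for name in "$@"; do
    printf '%s=%q\n' "$name" "${!name}" >> "$LAB_ENV_FILE"
  done
}

# load_lab_vars : restore previously saved variables into the current shell.
load_lab_vars() {
  if [ -f "$LAB_ENV_FILE" ]; then
    . "$LAB_ENV_FILE"
  fi
}
```

For example, `save_lab_vars RESOURCE_GROUP_NAME LOCATION` could be run after the first step, and `load_lab_vars` at the start of a new terminal session. Because entries are appended, a variable saved twice takes its most recent value when the file is sourced.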

In this section you configure a resource group to house the lab resources.

Set some variables:

# Modify as preferred:
RESOURCE_GROUP_NAME="rg-aks-keda-demo"
LOCATION="uksouth"

Create an Azure resource group for the lab resources:

az group create \
  --name $RESOURCE_GROUP_NAME \
  --location $LOCATION

In this section you create a storage account and queue to be used by the apps, and monitored by KEDA.
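The storage account name used in this section is built from a prefix plus an 8-character suffix taken from a SHA-256 hash of the resource group ID. Storage account names must be 3 to 24 characters long and contain only lowercase letters and digits, so a custom prefix is worth checking first. The sketch below uses a placeholder resource group ID and a hypothetical `valid_storage_name` helper, neither of which is part of the lab.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of the lab): check a candidate name against the
# storage account naming rules (3-24 characters, lowercase letters and digits).
valid_storage_name() {
  [[ $1 =~ ^[a-z0-9]{3,24}$ ]]
}

# Placeholder resource group ID, for illustration only.
example_rg_id="/subscriptions/00000000-0000-0000-0000-000000000000/resourceGroups/rg-aks-keda-demo"
# Same derivation as the lab: first 8 hex characters of the SHA-256 digest.
example_entropy=$(echo "$example_rg_id" | sha256sum | cut -c1-8)
example_name="stakskedademo${example_entropy}"

if valid_storage_name "$example_name"; then
  echo "$example_name is a valid storage account name (${#example_name} characters)"
else
  echo "$example_name is not a valid storage account name" >&2
fi
```

With the default 13-character prefix the result is 21 characters, which leaves room for a prefix up to 3 characters longer.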

Set some variables:

# Modify as preferred:
STORAGE_ACCOUNT_PREFIX="stakskedademo"
STORAGE_QUEUE_NAME="demo"

# Do not modify:
RESOURCE_GROUP_ID=$(az group show --name $RESOURCE_GROUP_NAME --query id -o tsv)
ENTROPY=$(echo $RESOURCE_GROUP_ID | sha256sum | cut -c1-8)
STORAGE_ACCOUNT_NAME="$STORAGE_ACCOUNT_PREFIX$ENTROPY"

Create an Azure storage account:

az storage account create \
  --name $STORAGE_ACCOUNT_NAME \
  --resource-group $RESOURCE_GROUP_NAME \
  --location $LOCATION \
  --sku "Standard_LRS" \
  --min-tls-version "TLS1_2"

Create an Azure storage queue:

az storage queue create \
  --name $STORAGE_QUEUE_NAME \
  --account-name $STORAGE_ACCOUNT_NAME \
  --auth-mode "login"

In this section you create a container registry to build and host the apps ready for deployment into the AKS cluster.

Set some variables:

# Modify as preferred:
ACR_PREFIX="acrakskedademo"

# Do not modify:
ACR_NAME="$ACR_PREFIX$ENTROPY"

Create an Azure container registry:

az acr create \
  --name $ACR_NAME \
  --location $LOCATION \
  --resource-group $RESOURCE_GROUP_NAME \
  --sku "Basic"

In this section you create an AKS cluster to run the apps. There are several notable configuration options:

- `--enable-managed-identity` creates a managed identity for the AKS cluster to enable the use of Azure RBAC to authenticate and authorise the cluster to interface with other Azure resources.

- `--attach-acr` links the AKS cluster to the container registry and configures the relevant permissions on the container registry for AKS to be able to pull images using its managed identity.

- `--enable-oidc-issuer` and `--enable-workload-identity` provide the ability for Azure user-assigned managed identities to be federated to AKS service accounts and used by pods. Azure RBAC can then be used to authenticate and authorise pods to interface with other Azure resources.

- `--enable-keda` provisions the KEDA components into the AKS cluster `kube-system` namespace. This includes the KEDA operator service account, which can have an Azure user-assigned managed identity federated to it in order for Azure RBAC to be used to authenticate and authorise KEDA to interface with other Azure resources.

- `--enable-cluster-autoscaler` along with `--max-count` and `--min-count` allows the AKS cluster nodepool to automatically scale the number of nodes based on cluster load. If the number of required pods exceeds the current nodepool capacity during the lab, the cluster autoscaling behaviour may be observed.
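Once the cluster has been created later in this section, the options above can be confirmed by querying the cluster resource. The sketch below is one possible check; `show_aks_features` is a hypothetical helper, and the property paths are assumptions about the shape of the `az aks show` output that may differ between CLI versions.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of the lab): print whether the features
# described above are enabled on an existing cluster.
#   $1 = cluster name, $2 = resource group name
show_aks_features() {
  local cluster=$1 group=$2 query
  # The property paths below are assumptions about the `az aks show` output.
  for query in \
    "workloadAutoScalerProfile.keda.enabled" \
    "oidcIssuerProfile.enabled" \
    "securityProfile.workloadIdentity.enabled" \
    "agentPoolProfiles[0].enableAutoScaling"; do
    printf '%s = %s\n' "$query" \
      "$(az aks show --name "$cluster" --resource-group "$group" --query "$query" -o tsv)"
  done
}

# Example call, to be run after the cluster exists:
#   show_aks_features "$AKS_CLUSTER_NAME" "$RESOURCE_GROUP_NAME"
```

Each line should report `true` if the corresponding flag took effect. An empty value suggests either that the feature is disabled or that the property path differs in your CLI version.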

Set some variables:

# Modify as preferred:
AKS_CLUSTER_NAME="aks-keda-demo"

# Do not modify:
ACR_ID=$(az acr show --name $ACR_NAME --resource-group $RESOURCE_GROUP_NAME --query id -o tsv)
USER_ID=$(az ad signed-in-user show --query id -o tsv)

Create an AKS cluster:

az aks create \
  --name $AKS_CLUSTER_NAME \
  --resource-group $RESOURCE_GROUP_NAME \
  --location $LOCATION \
  --attach-acr $ACR_ID \
  --disable-local-accounts \
  --enable-aad \
  --enable-azure-rbac \
  --enable-cluster-autoscaler \
  --enable-keda \
  --enable-managed-identity \
  --enable-oidc-issuer \
  --enable-workload-identity \
  --generate-ssh-keys \
  --min-count 2 \
  --max-count 6 \
  --network-dataplane "cilium" \
  --network-plugin "azure" \
  --network-plugin-mode "overlay" \
  --network-policy "cilium" \
  --node-count 2 \
  --os-sku "AzureLinux" \
  --pod-cidr "172.100.0.0/16" \
  --tier "standard" \
  --zones 1 2 3

Grant yourself the Azure Kubernetes Service RBAC Cluster Admin role on the AKS cluster:

AKS_CLUSTER_ID=$(az aks show --name $AKS_CLUSTER_NAME --resource-group $RESOURCE_GROUP_NAME --query id -o tsv)
az role assignment create \
  --assignee-object-id $USER_ID \
  --assignee-principal-type "User" \
  --role "Azure Kubernetes Service RBAC Cluster Admin" \
  --scope $AKS_CLUSTER_ID

In this optional section you provision a log analytics workspace and install the monitoring addon for AKS to enable container insights. This is recommended to enable a graphical view into the scaling operations during the lab.

Set some variables:

# Modify as preferred:
LOG_WORKSPACE_NAME="log-keda-demo"

Create an Azure log analytics workspace:

az monitor log-analytics workspace create \
  --name $LOG_WORKSPACE_NAME \
  --location $LOCATION \
  --resource-group $RESOURCE_GROUP_NAME

Enable the monitoring addon for AKS:

LOG_WORKSPACE_ID=$(az monitor log-analytics workspace show --name $LOG_WORKSPACE_NAME --resource-group $RESOURCE_GROUP_NAME --query id -o tsv)
az aks enable-addons \
  --name $AKS_CLUSTER_NAME \
  --resource-group $RESOURCE_GROUP_NAME \
  --addon "monitoring" \
  --workspace-resource-id $LOG_WORKSPACE_ID

In this section you provision an Azure user-assigned identity and federate it with AKS service accounts including the KEDA operator and workload service account.

Note

For simplicity, a single user-assigned identity is used for both the KEDA operator service account and workload service account (used by both applications). In practice, a separate user-assigned identity should be created and federated with the KEDA operator to delineate the permissions required by KEDA (usually read-only) and the permissions required by workload applications.

Set some variables:

# Modify as preferred:
NAMESPACE="keda-demo"
WORKLOAD_IDENTITY_NAME="uid-aks-keda-demo"

# Do not modify:
AKS_OIDC_ISSUER=$(az aks show --name $AKS_CLUSTER_NAME --resource-group $RESOURCE_GROUP_NAME --query "oidcIssuerProfile.issuerUrl" -o tsv)

Get your AKS credentials for authentication:

az aks get-credentials \
  --name $AKS_CLUSTER_NAME \
  --resource-group $RESOURCE_GROUP_NAME

Create an AKS namespace:

cat <<EOF | kubectl apply -f -
apiVersion: v1
kind: Namespace
metadata:
  name: "${NAMESPACE}"
EOF

Create an Azure managed identity:

az identity create \
  --name $WORKLOAD_IDENTITY_NAME \
  --resource-group $RESOURCE_GROUP_NAME \
  --location $LOCATION

Create an AKS service account for the workload identity:

WORKLOAD_IDENTITY_CLIENT_ID=$(az identity show --name $WORKLOAD_IDENTITY_NAME --resource-group $RESOURCE_GROUP_NAME --query clientId -o tsv)
WORKLOAD_IDENTITY_TENANT_ID=$(az identity show --name $WORKLOAD_IDENTITY_NAME --resource-group $RESOURCE_GROUP_NAME --query tenantId -o tsv)
cat <<EOF | kubectl apply -f -
apiVersion: v1
kind: ServiceAccount
metadata:
  annotations:
    azure.workload.identity/client-id: "${WORKLOAD_IDENTITY_CLIENT_ID}"
    azure.workload.identity/tenant-id: "${WORKLOAD_IDENTITY_TENANT_ID}"
  name: "${WORKLOAD_IDENTITY_NAME}"
  namespace: "${NAMESPACE}"
EOF

Federate the Azure managed identity and AKS service account:

az identity federated-credential create \
  --name "aks-sa-$WORKLOAD_IDENTITY_NAME" \
  --resource-group $RESOURCE_GROUP_NAME \
  --identity-name $WORKLOAD_IDENTITY_NAME \
  --issuer $AKS_OIDC_ISSUER \
  --subject system:serviceaccount:$NAMESPACE:$WORKLOAD_IDENTITY_NAME \
  --audiences api://AzureADTokenExchange

Federate the Azure managed identity and KEDA operator service account:

az identity federated-credential create \
  --name "aks-sa-keda-operator" \
  --resource-group $RESOURCE_GROUP_NAME \
  --identity-name $WORKLOAD_IDENTITY_NAME \
  --issuer $AKS_OIDC_ISSUER \
  --subject system:serviceaccount:kube-system:keda-operator \
  --audiences api://AzureADTokenExchange

In this section you configure Azure RBAC for both the storage account and container registry, assigning permissions to yourself and to the user-assigned identity used by both the apps and the KEDA operator.

Set some variables:

# Do not modify:
STORAGE_ACCOUNT_ID=$(az storage account show --name $STORAGE_ACCOUNT_NAME --resource-group $RESOURCE_GROUP_NAME --query id -o tsv)
WORKLOAD_IDENTITY_PRINCIPAL_ID=$(az identity show --name $WORKLOAD_IDENTITY_NAME --resource-group $RESOURCE_GROUP_NAME --query principalId -o tsv)

Grant yourself the Storage Queue Data Contributor role on the storage account:

az role assignment create \
  --assignee-object-id $USER_ID \
  --assignee-principal-type "User" \
  --role "Storage Queue Data Contributor" \
  --scope $STORAGE_ACCOUNT_ID

Grant the Azure managed identity the Storage Queue Data Contributor role on the storage account:

az role assignment create \
  --assignee-object-id $WORKLOAD_IDENTITY_PRINCIPAL_ID \
  --assignee-principal-type "ServicePrincipal" \
  --role "Storage Queue Data Contributor" \
  --scope $STORAGE_ACCOUNT_ID

Grant yourself the AcrPush role on the container registry:

az role assignment create \
  --assignee-object-id $USER_ID \
  --assignee-principal-type "User" \
  --role "AcrPush" \
  --scope $ACR_ID

In this section you use the container registry to build the container images ready for deployment into the AKS cluster.

Set some variables:

# Modify as preferred:
MESSAGE_GENERATOR_IMAGE_NAME="az-message-generator"
MESSAGE_PROCESSOR_IMAGE_NAME="az-message-processor"

Build the Azure storage message generator image:

az acr build \
  --registry $ACR_NAME \
  --image $MESSAGE_GENERATOR_IMAGE_NAME:{{.Run.ID}} \
  apps/az-message-generator

Build the Azure storage message processor image:

az acr build \
  --registry $ACR_NAME \
  --image $MESSAGE_PROCESSOR_IMAGE_NAME:{{.Run.ID}} \
  apps/az-message-processor

In this section you deploy the message processor application as a Kubernetes deployment. KEDA is then configured for this deployment by creating both a trigger authentication and a scaled object.

Set some variables:

# Modify as preferred:
AUTH_TRIGGER_NAME="azure-queue-auth"
DEPLOYMENT_NAME="azure-queue-processor"
MESSAGE_PROCESSING_SECONDS="3"
SCALED_OBJECT_NAME="azure-queue-scaler"
SCALING_QUEUE_LENGTH="10"

# Do not modify:
ACR_LOGIN_SERVER=$(az acr show --name $ACR_NAME --resource-group $RESOURCE_GROUP_NAME --query loginServer -o tsv)
MESSAGE_PROCESSOR_IMAGE_TAG=$(az acr repository show-tags --name $ACR_NAME --repository $MESSAGE_PROCESSOR_IMAGE_NAME --orderby time_desc --top 1 --query '[0]' -o tsv)

Create an Azure storage message processor deployment:

cat <<EOF | kubectl apply -f -
apiVersion: apps/v1
kind: Deployment
metadata:
  name: "${DEPLOYMENT_NAME}"
  namespace: "${NAMESPACE}"
spec:
  selector:
    matchLabels:
      app: "${DEPLOYMENT_NAME}"
  template:
    metadata:
      labels:
        app: "${DEPLOYMENT_NAME}"
        azure.workload.identity/use: "true"
    spec:
      serviceAccountName: "${WORKLOAD_IDENTITY_NAME}"
      containers:
        - name: "${MESSAGE_PROCESSOR_IMAGE_NAME}"
          image: "${ACR_LOGIN_SERVER}/${MESSAGE_PROCESSOR_IMAGE_NAME}:${MESSAGE_PROCESSOR_IMAGE_TAG}"
          env:
            - name: AZURE_CLIENT_ID
              value: "${WORKLOAD_IDENTITY_CLIENT_ID}"
            - name: MESSAGE_PROCESSING_SECONDS
              value: "${MESSAGE_PROCESSING_SECONDS}"
            - name: STORAGE_ACCOUNT_NAME
              value: "${STORAGE_ACCOUNT_NAME}"
            - name: STORAGE_QUEUE_NAME
              value: "${STORAGE_QUEUE_NAME}"
EOF

Create a KEDA trigger authentication:

cat <<EOF | kubectl apply -f -
apiVersion: keda.sh/v1alpha1
kind: TriggerAuthentication
metadata:
  name: "${AUTH_TRIGGER_NAME}"
  namespace: "${NAMESPACE}"
spec:
  podIdentity:
    identityId: "${WORKLOAD_IDENTITY_CLIENT_ID}"
    provider: azure-workload
EOF

Create a KEDA scaled object:

cat <<EOF | kubectl apply -f -
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: "${SCALED_OBJECT_NAME}"
  namespace: "${NAMESPACE}"
spec:
  scaleTargetRef:
    name: "${DEPLOYMENT_NAME}"
  pollingInterval: 10
  cooldownPeriod: 60
  minReplicaCount: 1
  maxReplicaCount: 250
  advanced:
    restoreToOriginalReplicaCount: true
    horizontalPodAutoscalerConfig:
      name: "${SCALED_OBJECT_NAME}-hpa"
      behavior:
        scaleDown:
          stabilizationWindowSeconds: 30
          policies:
            - type: Percent
              value: 100
              periodSeconds: 10
  triggers:
    - type: azure-queue
      metadata:
        accountName: "${STORAGE_ACCOUNT_NAME}"
        queueName: "${STORAGE_QUEUE_NAME}"
        queueLength: "${SCALING_QUEUE_LENGTH}"
      authenticationRef:
        name: "${AUTH_TRIGGER_NAME}"
EOF

In this section you deploy the message generator application as a Kubernetes pod to begin load-testing the solution.
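Before generating load, it can help to estimate how the scaled object from the previous section should respond to a given queue length. The sketch below assumes the usual HPA average-value calculation, that is, queue length divided by the target per replica, rounded up and clamped to the replica limits. `desired_replicas` is a hypothetical helper, and the result ignores HPA tolerance, the stabilisation window, and the scale-down policy.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of the lab): approximate the replica count
# requested for a given queue length.
#   $1 = current queue length
#   $2 = target messages per replica (SCALING_QUEUE_LENGTH)
#   $3 = minReplicaCount, $4 = maxReplicaCount
desired_replicas() {
  local queue=$1 target=$2 min=$3 max=$4
  local n=$(( (queue + target - 1) / target ))   # ceil(queue / target)
  if (( n < min )); then n=$min; fi
  if (( n > max )); then n=$max; fi
  echo "$n"
}

# Values taken from the scaled object: target 10, min 1, max 250.
for queue in 0 25 256 5000; do
  echo "queue length $queue -> $(desired_replicas "$queue" 10 1 250) replicas"
done
```

Comparing these estimates with the replica counts observed during the load test gives a rough sense of how closely the deployment tracks the queue.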

Set some variables:

# Modify as preferred:
MESSAGE_COUNT_PER_MINUTE_MAX="256"
MESSAGE_COUNT_PER_MINUTE_MIN="32"

# Do not modify:
MESSAGE_GENERATOR_IMAGE_TAG=$(az acr repository show-tags --name $ACR_NAME --repository $MESSAGE_GENERATOR_IMAGE_NAME --orderby time_desc --top 1 --query '[0]' -o tsv)

Create an Azure storage message generator pod:

cat <<EOF | kubectl apply -f -
apiVersion: v1
kind: Pod
metadata:
  labels:
    app: azure-storage-queue-message-generator
    azure.workload.identity/use: "true"
  name: azure-storage-queue-message-generator
  namespace: $NAMESPACE
spec:
  serviceAccountName: $WORKLOAD_IDENTITY_NAME
  containers:
    - name: $MESSAGE_GENERATOR_IMAGE_NAME
      image: $ACR_LOGIN_SERVER/$MESSAGE_GENERATOR_IMAGE_NAME:$MESSAGE_GENERATOR_IMAGE_TAG
      env:
        - name: AZURE_CLIENT_ID
          value: "${WORKLOAD_IDENTITY_CLIENT_ID}"
        - name: MESSAGE_COUNT_PER_MINUTE_MAX
          value: "${MESSAGE_COUNT_PER_MINUTE_MAX}"
        - name: MESSAGE_COUNT_PER_MINUTE_MIN
          value: "${MESSAGE_COUNT_PER_MINUTE_MIN}"
        - name: STORAGE_ACCOUNT_NAME
          value: "${STORAGE_ACCOUNT_NAME}"
        - name: STORAGE_QUEUE_NAME
          value: "${STORAGE_QUEUE_NAME}"
EOF

Monitor the solution natively using kubectl:

Tip

The following commands are used here to monitor activity:

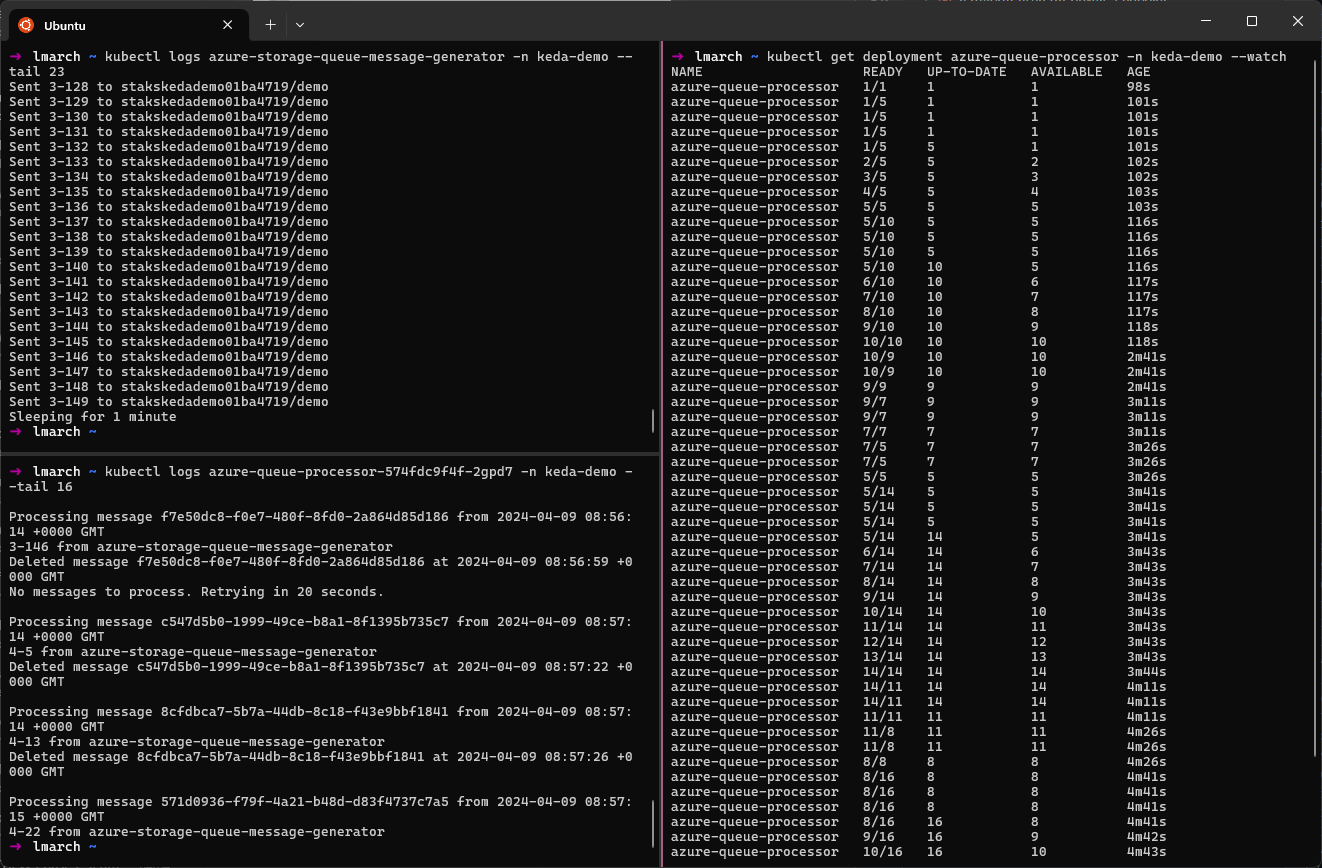

- `kubectl logs azure-storage-queue-message-generator -n keda-demo` - View message generation activity.

- `kubectl logs azure-queue-processor-{podId} -n keda-demo` - View message processing activity for a single message processor instance.

- `kubectl get deployment azure-queue-processor -n keda-demo --watch` - View the change in the number of deployment replicas being managed by KEDA in response to storage queue length.

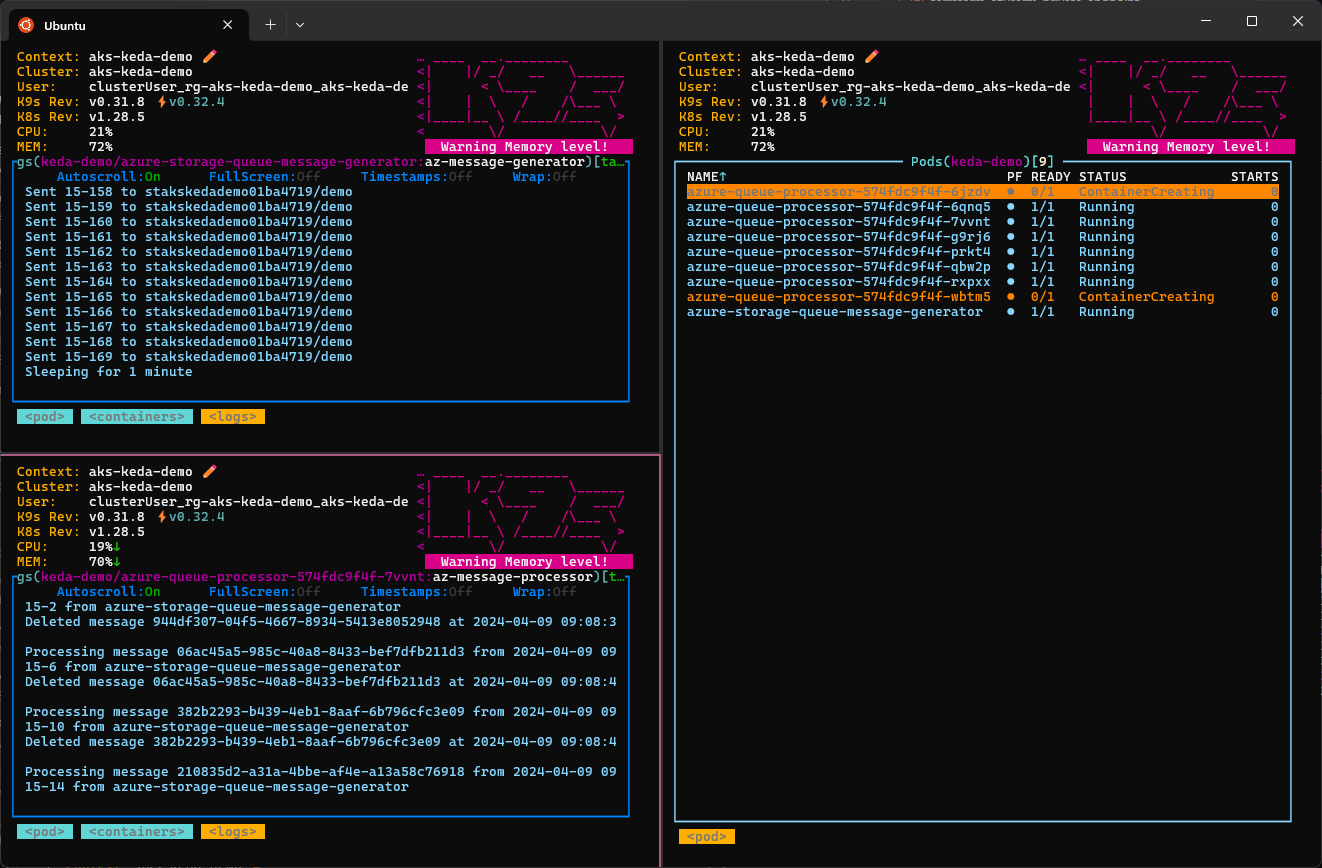

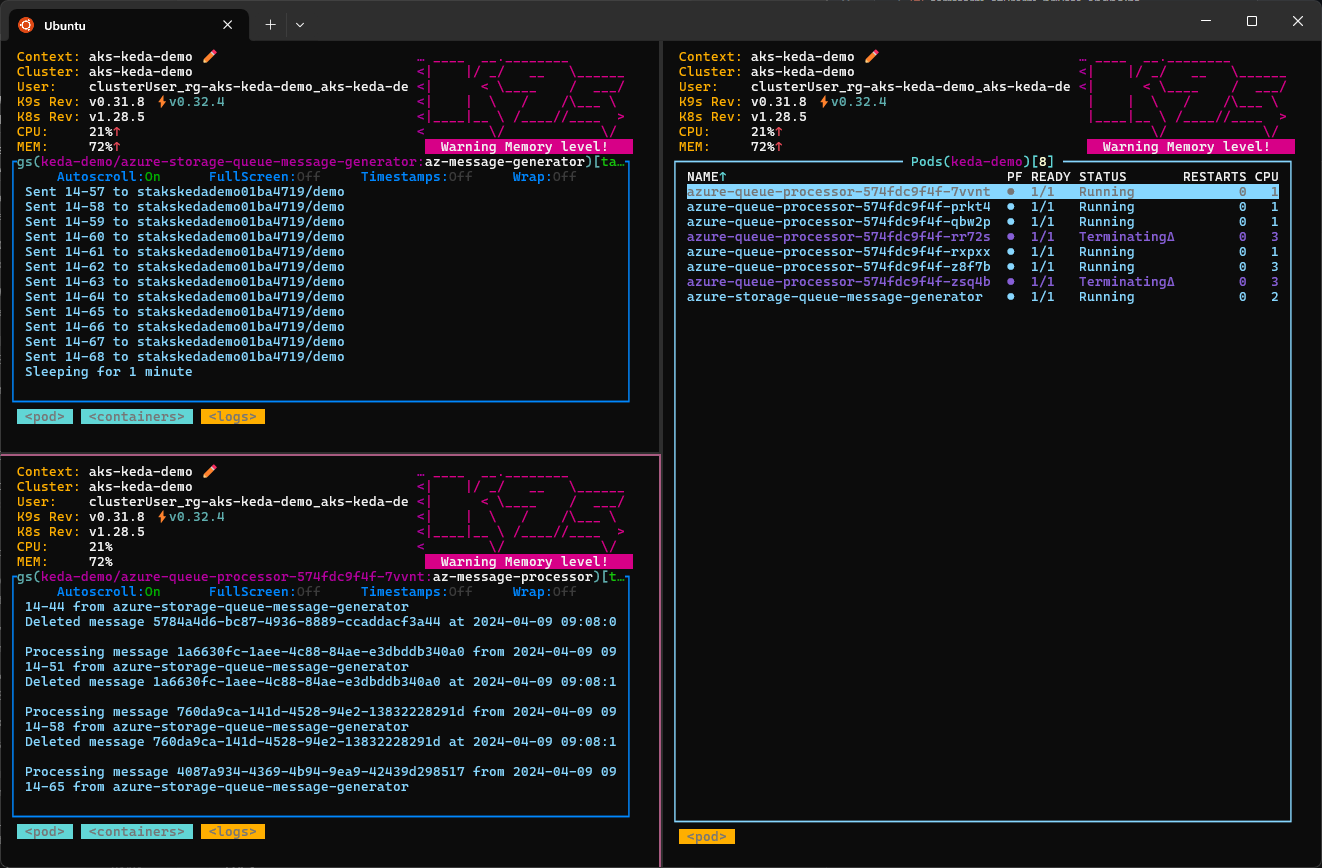
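Alongside the commands listed in the tip, the scaled object and the HPA that KEDA creates for it can be inspected directly. The following is a small sketch that assumes the `keda-demo` namespace used in this lab. `show_scaling_state` is a hypothetical wrapper name, not part of the lab.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of the lab): list the KEDA scaled objects and
# the HPA objects in a namespace.
#   $1 = namespace (defaults to keda-demo)
show_scaling_state() {
  local namespace=${1:-keda-demo}
  # ScaledObject is the KEDA custom resource created earlier in the lab.
  kubectl get scaledobject -n "$namespace"
  # KEDA manages an HPA for each scaled object; its current and target
  # metric values show what is driving the replica count.
  kubectl get hpa -n "$namespace"
}

# Example call:
#   show_scaling_state keda-demo
```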

Monitor the solution using the k9s command-line tool:

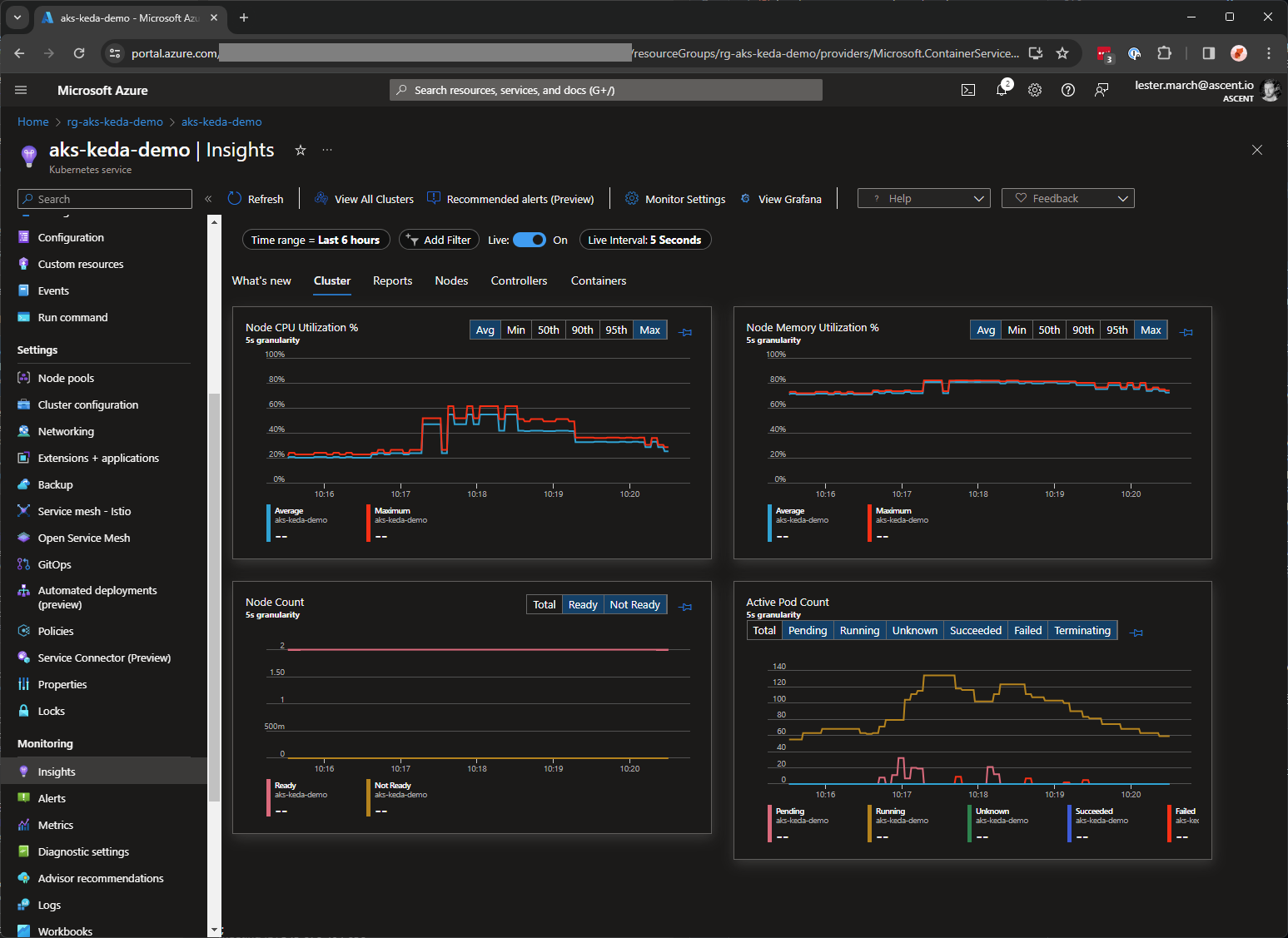

Monitor the solution using container insights (if you followed step 5):