Airflow pipeline that fetches daily new COVID-19 cases for Indian states and stores them in Google BigQuery.

- First, install Airflow on your system:
  `pip install apache-airflow`

- Install the Google Cloud dependencies for this project:
  `pip install 'apache-airflow[gcp]'`

- Check the Airflow version to make sure the installation succeeded:
  `airflow version`

- Create an `airflow_home` folder.

- Then set the Airflow home path:
  - go to the folder in which you created `airflow_home`;
  - open a terminal there and set `AIRFLOW_HOME` with this command:
    `export AIRFLOW_HOME=$(pwd)/airflow_home`

- Then run `airflow initdb`. It creates the Airflow database, the logs and a unit-test config file.

- Create a `dags` folder inside `airflow_home` to store the DAGs.

- Start Airflow by running `airflow webserver` and `airflow scheduler`, each in its own terminal with `AIRFLOW_HOME` exported (otherwise Airflow falls back to `~/airflow`).

- Open `localhost:8080` in your browser; you should see some example DAGs there.
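The setup steps above can be collected into one shell script. This is a minimal sketch that assumes Airflow 1.10.x (the release line that still provides `airflow initdb`; Airflow 2.x renamed it `airflow db init`) and that you run it from the folder that should contain `airflow_home`. It only calls the Airflow CLI when it is installed.

```shell
#!/usr/bin/env bash
# Sketch of the setup steps, assuming Airflow 1.10.x installed with:
#   pip install apache-airflow
#   pip install 'apache-airflow[gcp]'

# Use a project-local Airflow home instead of the default ~/airflow.
AIRFLOW_HOME="$(pwd)/airflow_home"
export AIRFLOW_HOME

# Create airflow_home and the dags folder that the scheduler scans for DAG files.
mkdir -p "$AIRFLOW_HOME/dags"

if command -v airflow >/dev/null 2>&1; then
  airflow version
  # Creates the metadata database, logs and config files in $AIRFLOW_HOME
  # (Airflow 1.10.x syntax; Airflow 2.x uses `airflow db init` instead).
  airflow initdb
else
  echo "airflow CLI not found; install it with pip first." >&2
fi
```

Load it with `source`, for example `source setup_airflow.sh` (a hypothetical file name), so that `AIRFLOW_HOME` stays exported in your current terminal; `bash setup_airflow.sh` would set it only for the script's own process.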

Note: If you get an SQLite-related database exception while running the server, run `airflow initdb` again.
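Instead of keeping two terminals open, the webserver and scheduler can also run in the background. This sketch assumes Airflow 1.10.x, where both commands accept `-D` (`--daemon`), and reuses the project-local `airflow_home` folder when `AIRFLOW_HOME` is not exported yet.

```shell
#!/usr/bin/env bash
# Fall back to the project-local folder if AIRFLOW_HOME is not exported yet.
AIRFLOW_HOME="${AIRFLOW_HOME:-$(pwd)/airflow_home}"
export AIRFLOW_HOME

if command -v airflow >/dev/null 2>&1; then
  # Web UI on http://localhost:8080, detached from the terminal.
  airflow webserver -p 8080 -D
  # The scheduler is the process that actually runs the DAG tasks.
  airflow scheduler -D
else
  echo "airflow CLI not found; install it with pip first." >&2
fi
```

Running both in the foreground, as in the list above, is still handier while debugging, because errors are printed straight to the terminal.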

Then follow these steps to configure the project and make sure you run the correct main file:

- First, create a JSON key file (service account credentials) for the Google BigQuery API and paste it into the `config` folder.

- Create a Google Cloud connection in Airflow (Admin > Connections in the web UI).

- Create the Airflow variables from the JSON file in the `config/Airflow_variables` folder (Admin > Variables, then import the file).

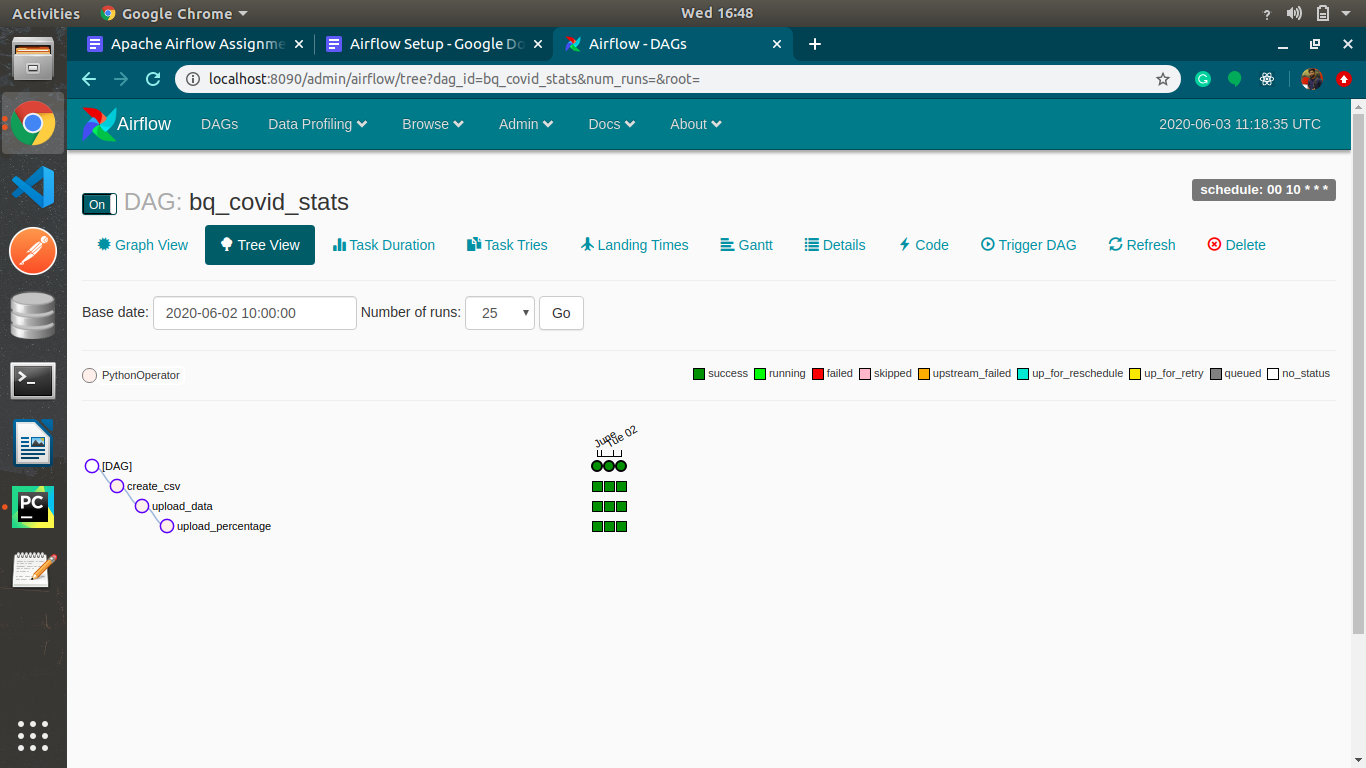

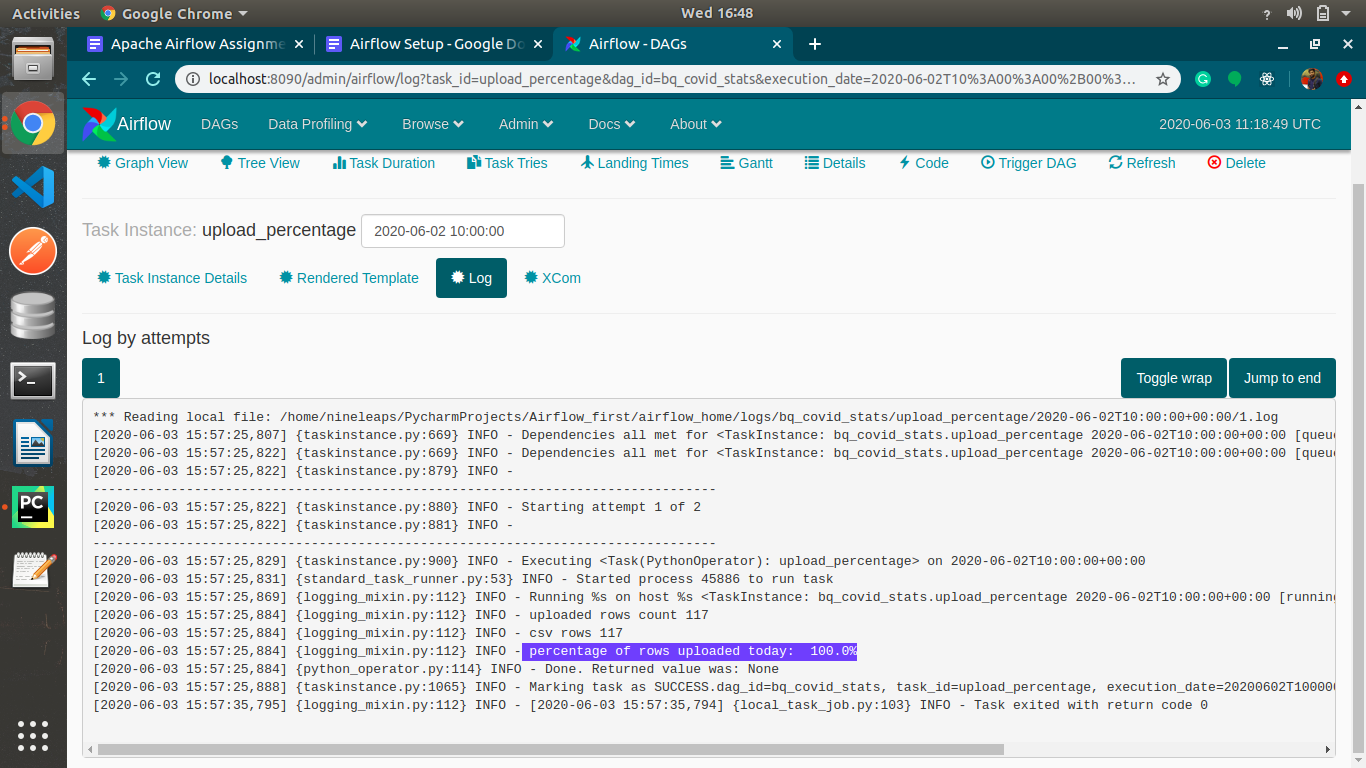

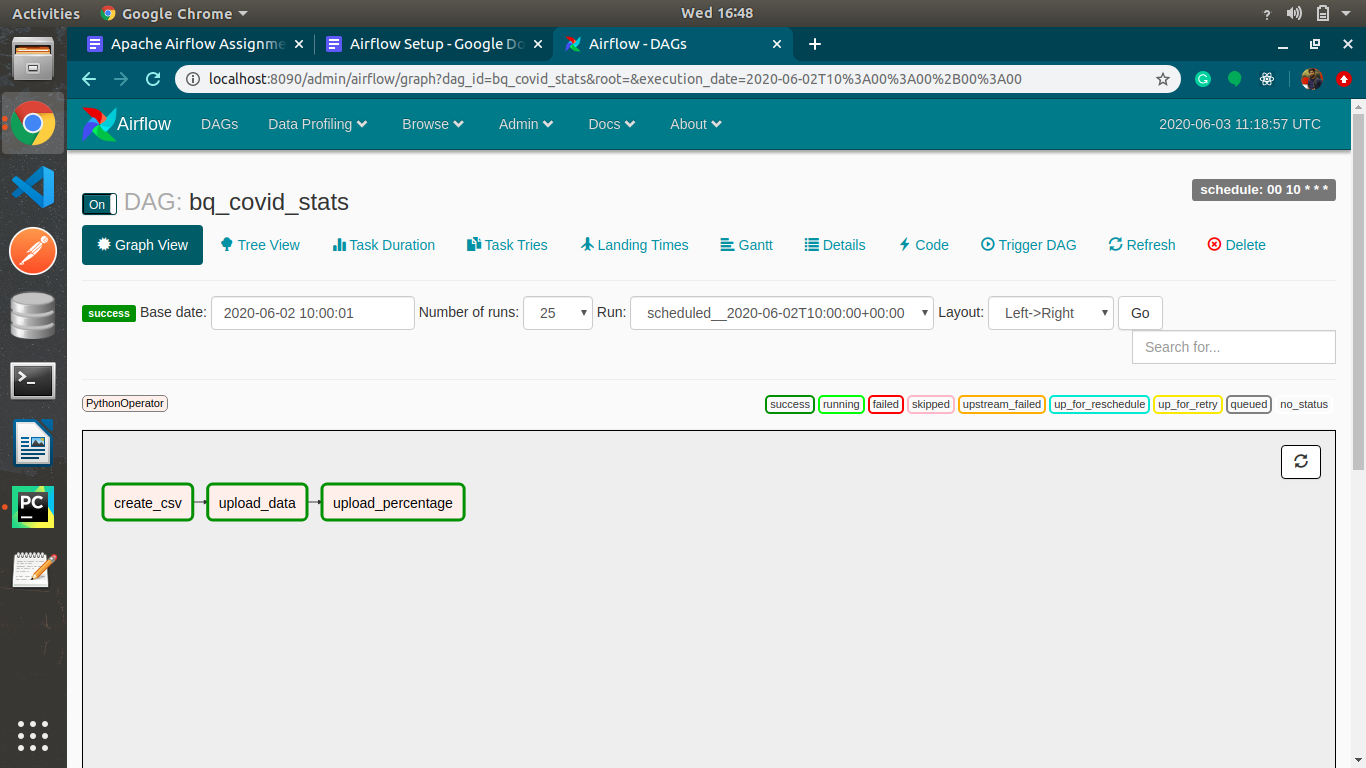
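If you prefer the command line to the web UI, the connection and variables can also be created with the Airflow CLI. This is a sketch for Airflow 1.10.x (Airflow 2.x uses `airflow connections add` and `airflow variables import`). The connection id `google_cloud_default`, the key file `config/service_account.json`, the variables file `config/Airflow_variables/variables.json` and the project id `my-gcp-project` are all hypothetical placeholders; replace them with the names this project's DAGs and config folder actually use.

```shell
#!/usr/bin/env bash
# Hypothetical names: adjust them to match this project's DAGs and config folder.
CONN_ID="google_cloud_default"
KEY_PATH="$(pwd)/config/service_account.json"
PROJECT_ID="my-gcp-project"
VARIABLES_FILE="$(pwd)/config/Airflow_variables/variables.json"

if ! command -v airflow >/dev/null 2>&1; then
  echo "airflow CLI not found; install it with pip first." >&2
elif [ ! -f "$KEY_PATH" ] || [ ! -f "$VARIABLES_FILE" ]; then
  echo "Missing $KEY_PATH or $VARIABLES_FILE" >&2
else
  # Airflow 1.10.x syntax: register a Google Cloud Platform connection
  # that authenticates with the service-account key file.
  airflow connections --add \
    --conn_id "$CONN_ID" \
    --conn_type google_cloud_platform \
    --conn_extra "{\"extra__google_cloud_platform__key_path\": \"$KEY_PATH\", \"extra__google_cloud_platform__project\": \"$PROJECT_ID\"}"
  # Load every key/value pair in the JSON file as an Airflow variable.
  airflow variables --import "$VARIABLES_FILE"
fi
```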

- Paste the project DAGs into the `dags` folder inside `airflow_home`.

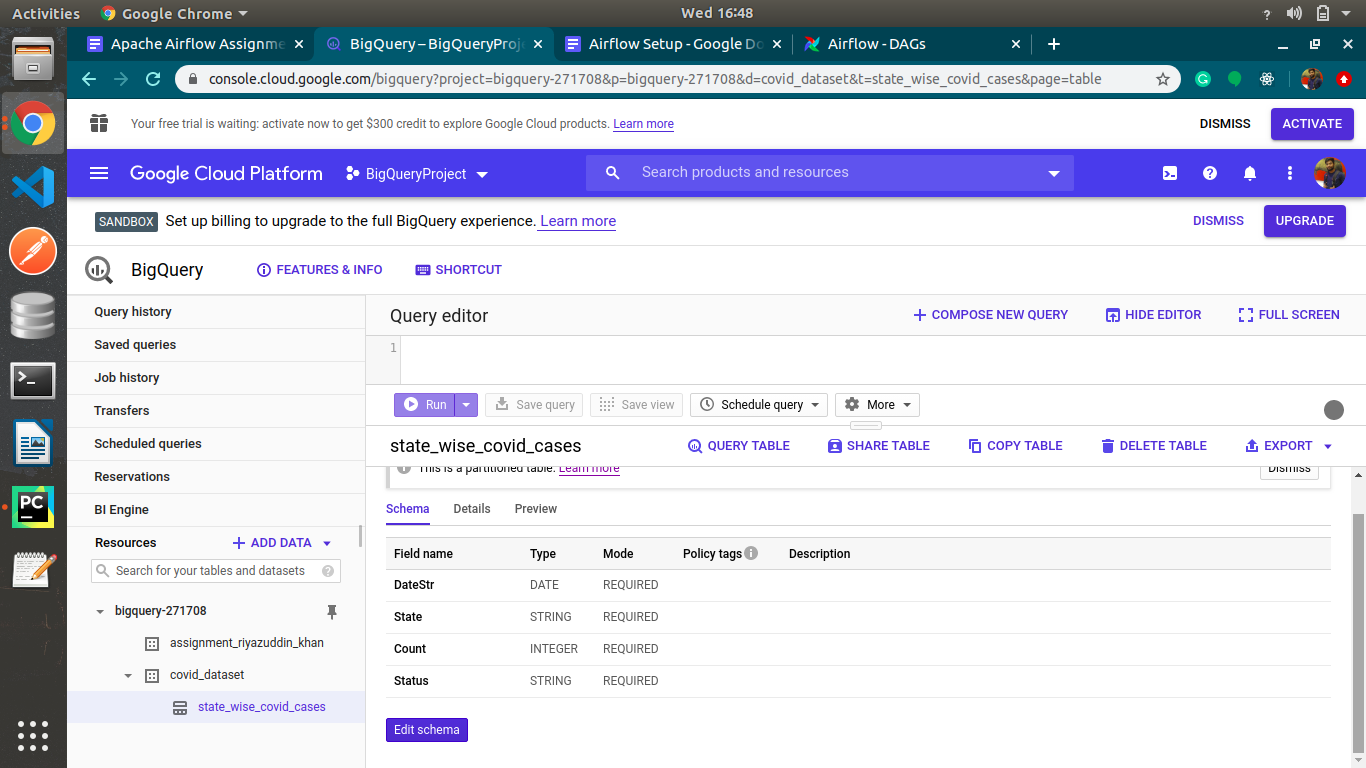

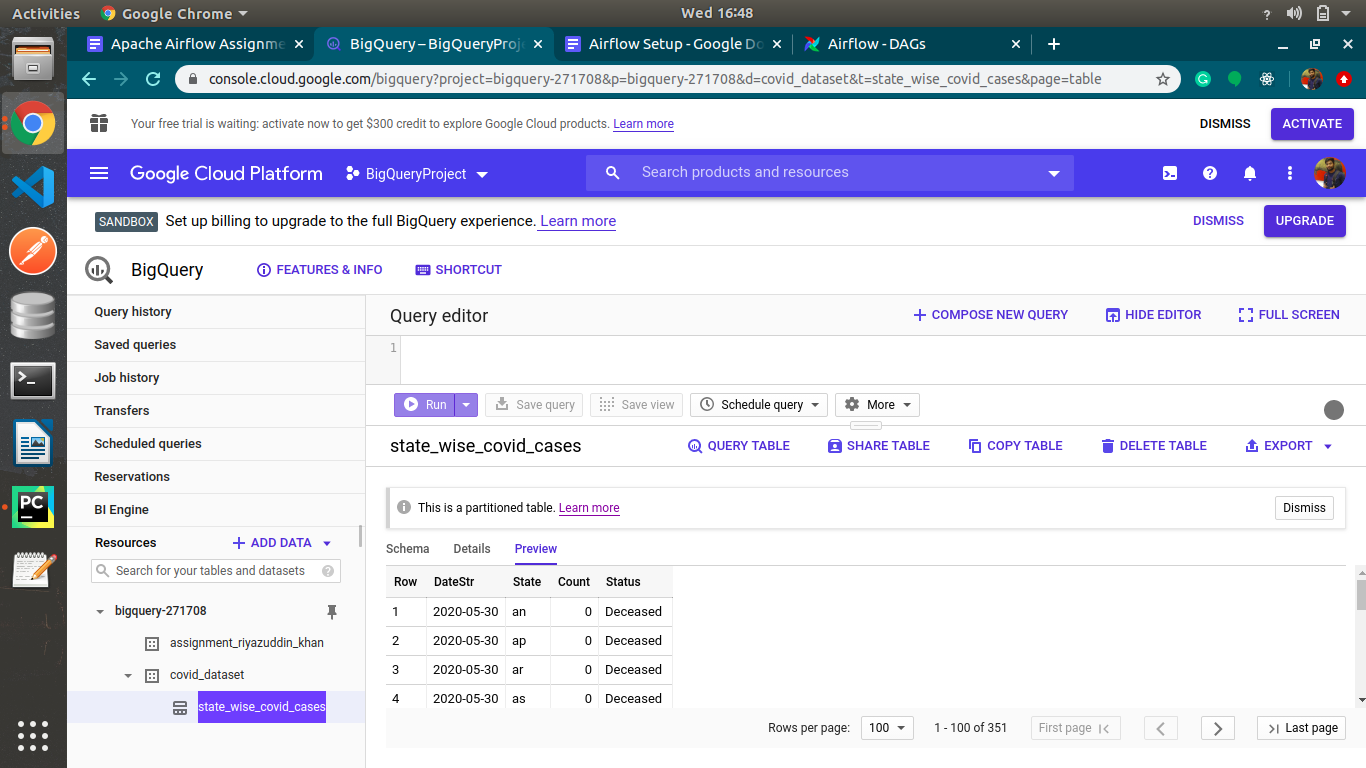
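Copying the DAGs can be scripted too. This sketch assumes the project's DAG files sit in a `dags` folder of this repository (a hypothetical location; point `PROJECT_DAGS` at the real one) and that `AIRFLOW_HOME` is set as in the setup steps. The final check uses the Airflow 1.10.x command; in 2.x it is `airflow dags list`.

```shell
#!/usr/bin/env bash
AIRFLOW_HOME="${AIRFLOW_HOME:-$(pwd)/airflow_home}"
# Hypothetical location of this project's DAG files.
PROJECT_DAGS="${PROJECT_DAGS:-$(pwd)/dags}"

mkdir -p "$AIRFLOW_HOME/dags"

# Copy every Python file; the -e check skips the unexpanded
# pattern when the folder holds no .py files.
for dag_file in "$PROJECT_DAGS"/*.py; do
  [ -e "$dag_file" ] || continue
  cp "$dag_file" "$AIRFLOW_HOME/dags/"
done

# List the DAGs Airflow can parse, to confirm the copied files load.
if command -v airflow >/dev/null 2>&1; then
  airflow list_dags
fi
```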

- (Optional) You can create the dataset and table on Google BigQuery using the `create_table` DAG.

- The main DAG file to run is `covid_19_statewise_bq_table.py`.
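Besides the web UI, the main DAG can be unpaused and triggered from the CLI. This is a sketch for Airflow 1.10.x (2.x uses `airflow dags unpause` and `airflow dags trigger`); it assumes the DAG id matches the file name, which is only a guess, so check the id shown in the web UI first.

```shell
#!/usr/bin/env bash
# Hypothetical DAG id, assumed to match covid_19_statewise_bq_table.py.
MAIN_DAG="covid_19_statewise_bq_table"

if command -v airflow >/dev/null 2>&1; then
  # New DAGs start paused by default, so unpause before triggering.
  airflow unpause "$MAIN_DAG"
  airflow trigger_dag "$MAIN_DAG"
else
  echo "airflow CLI not found; install it with pip first." >&2
fi
```

If you use the optional `create_table` DAG, trigger it the same way first and wait for its run to succeed before triggering the main DAG, so that the dataset and table exist when the main DAG writes to them.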