Implementation of selected Inverse Reinforcement Learning (IRL) algorithms in Python/TensorFlow.

$ python demo.py

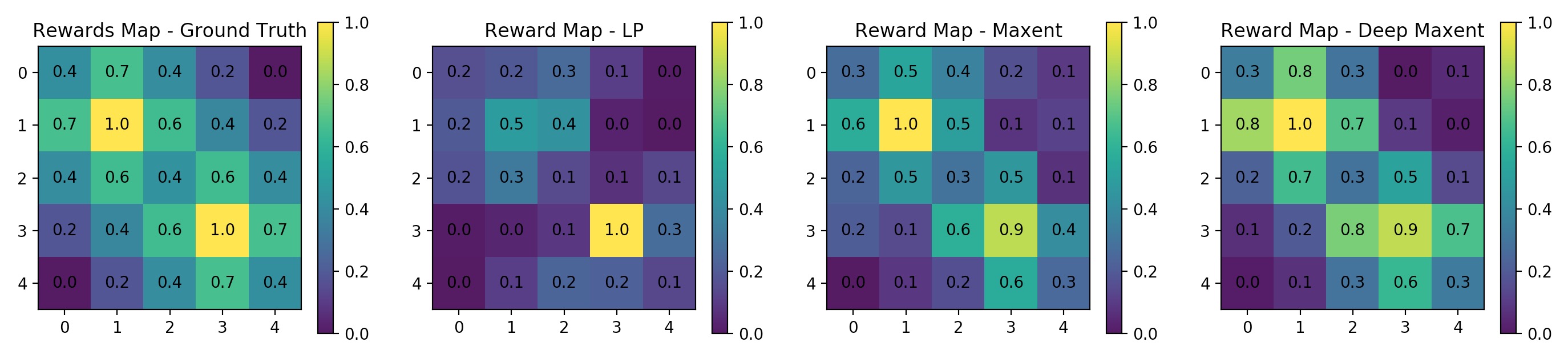

- Linear inverse reinforcement learning (Ng & Russell 2000)

- Maximum entropy inverse reinforcement learning (Ziebart et al. 2008)

- Maximum entropy deep inverse reinforcement learning (Wulfmeier et al. 2015)

- gridworld 2D

- gridworld 1D

- value iteration
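As an illustration of the value iteration item above, here is a minimal sketch on a deterministic 1D gridworld. It is not the code shipped in this repository: the function names (`value_iteration`, `chain_transition`), the state-based reward convention, and the two-action layout (0 = left, 1 = right) are assumptions made for the example.

```python
def value_iteration(n_states, n_actions, transition, reward, gamma=0.9, tol=1e-8):
    """Solve a small MDP by synchronous value iteration.

    transition(s, a) returns a list of (probability, next_state) pairs.
    reward[s] is the reward collected in state s (an assumed convention).
    """
    values = [0.0] * n_states

    def q_value(s, a):
        # Immediate reward plus discounted expected value of the next state.
        return reward[s] + gamma * sum(p * values[s2] for p, s2 in transition(s, a))

    while True:
        new_values = [max(q_value(s, a) for a in range(n_actions))
                      for s in range(n_states)]
        delta = max(abs(n - o) for n, o in zip(new_values, values))
        values = new_values
        if delta < tol:
            break

    # Greedy policy with respect to the converged values.
    policy = [max(range(n_actions), key=lambda a: q_value(s, a))
              for s in range(n_states)]
    return values, policy


def chain_transition(n_states):
    """Deterministic 1D gridworld: action 0 moves left, action 1 moves right."""
    def transition(s, a):
        step = -1 if a == 0 else 1
        next_state = min(max(s + step, 0), n_states - 1)  # walls clamp the move
        return [(1.0, next_state)]
    return transition


if __name__ == "__main__":
    values, policy = value_iteration(
        5, 2, chain_transition(5), [0.0, 0.0, 0.0, 0.0, 1.0], gamma=0.5)
    print(values, policy)
```

With the reward placed only in the rightmost cell, the greedy policy moves right from every state.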

Please cite this work using the following BibTeX entry if you use the software in your publications:

    @software{Lu_yrlu_irl-imitation_Implementation_of_2022,
      author = {Lu, Yiren},
      doi = {10.5281/zenodo.6796157},
      month = {7},
      title = {{yrlu/irl-imitation: Implementation of Inverse Reinforcement Learning (IRL) algorithms in python/Tensorflow}},
      url = {https://github.com/yrlu/irl-imitation},
      version = {1.0.0},
      year = {2017}
    }

- python 2.7

- cvxopt

- Tensorflow 0.12.1

- matplotlib

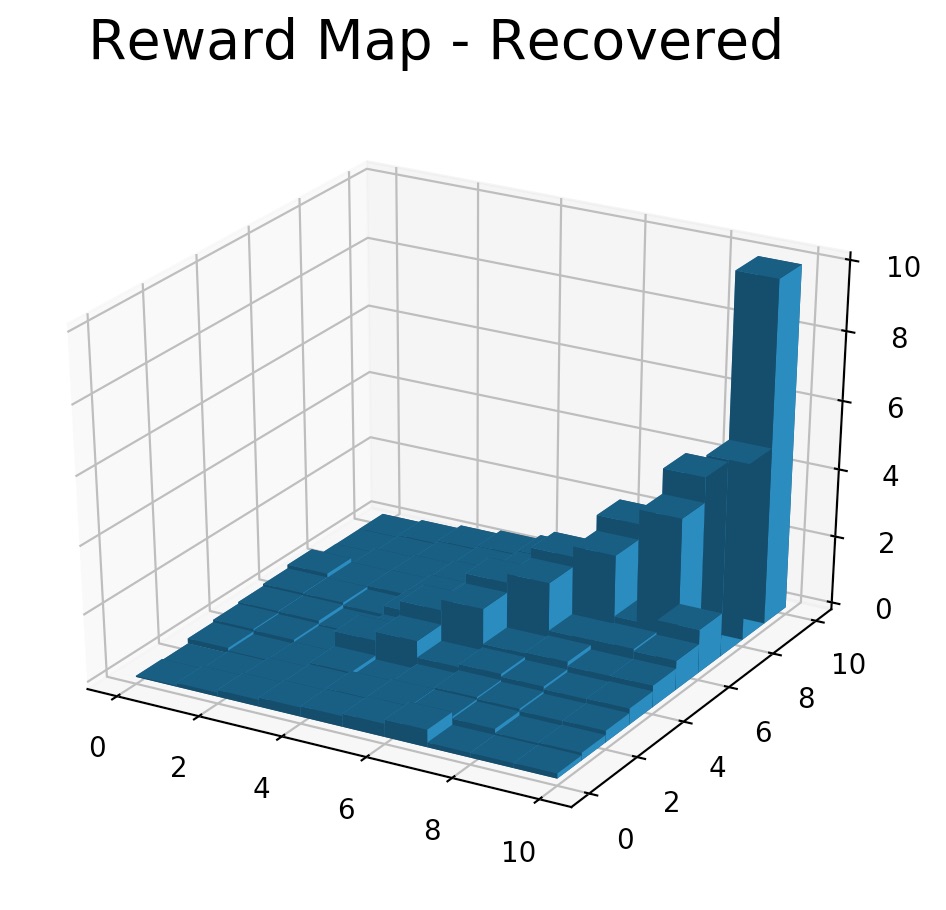

- Follows Algorithm 1 of the Ng & Russell 2000 paper, Algorithms for Inverse Reinforcement Learning

$ python linear_irl_gridworld.py --act_random=0.3 --gamma=0.5 --l1=10 --r_max=10

(This implementation is largely influenced by Matthew Alger's maxent implementation)

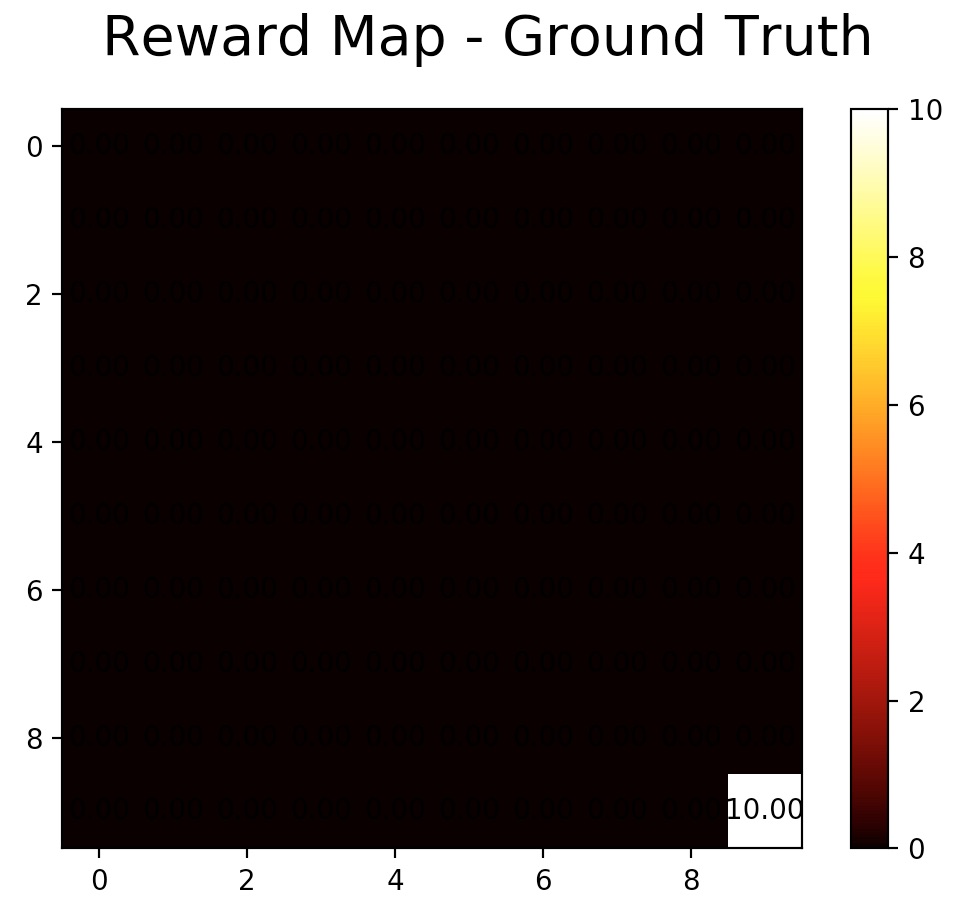

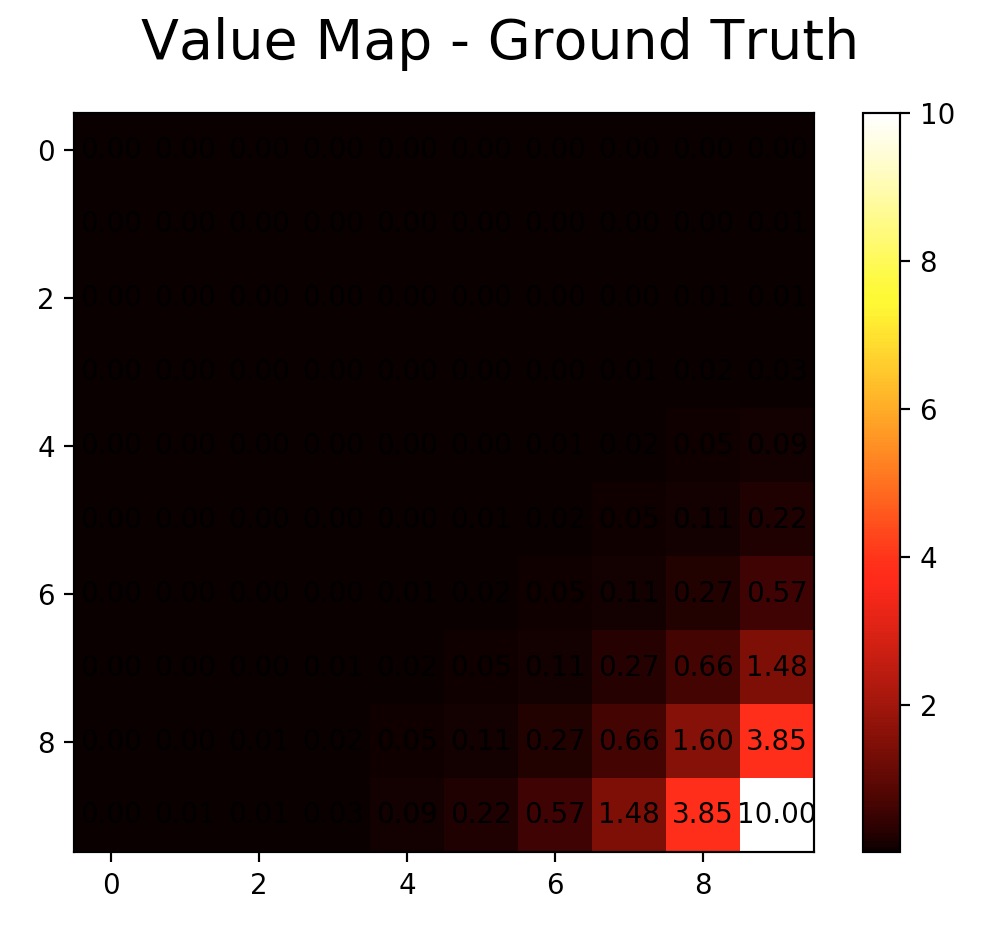

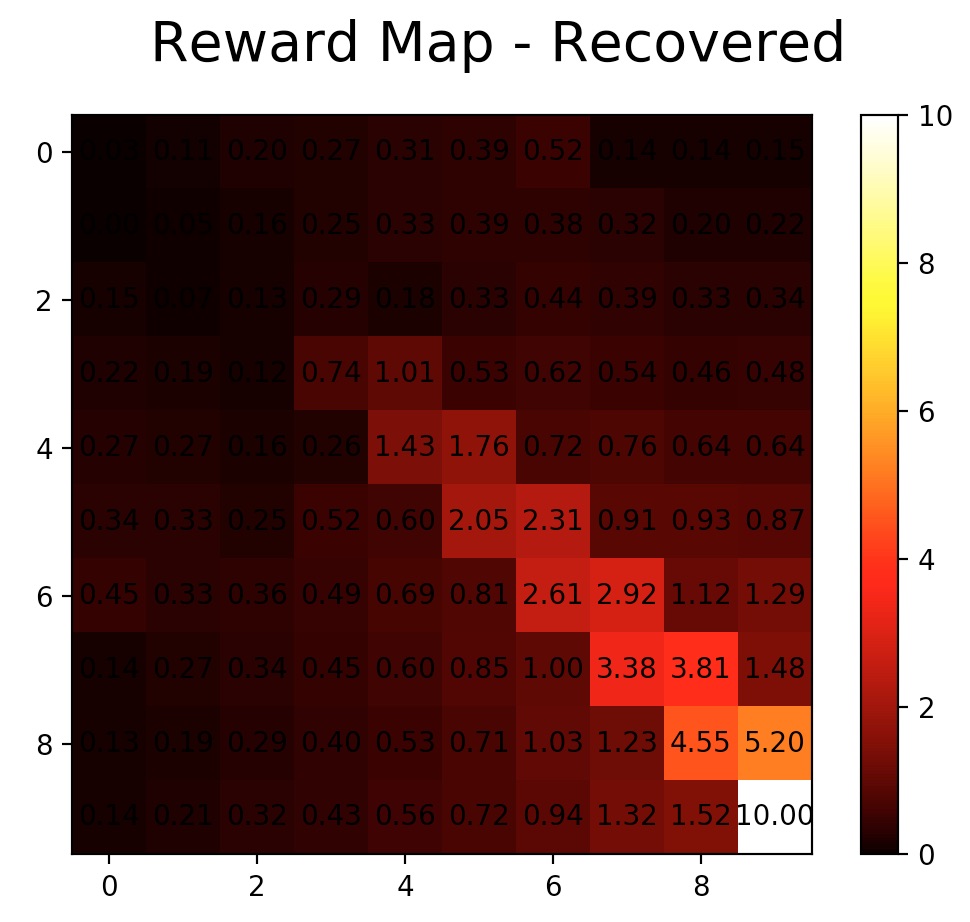

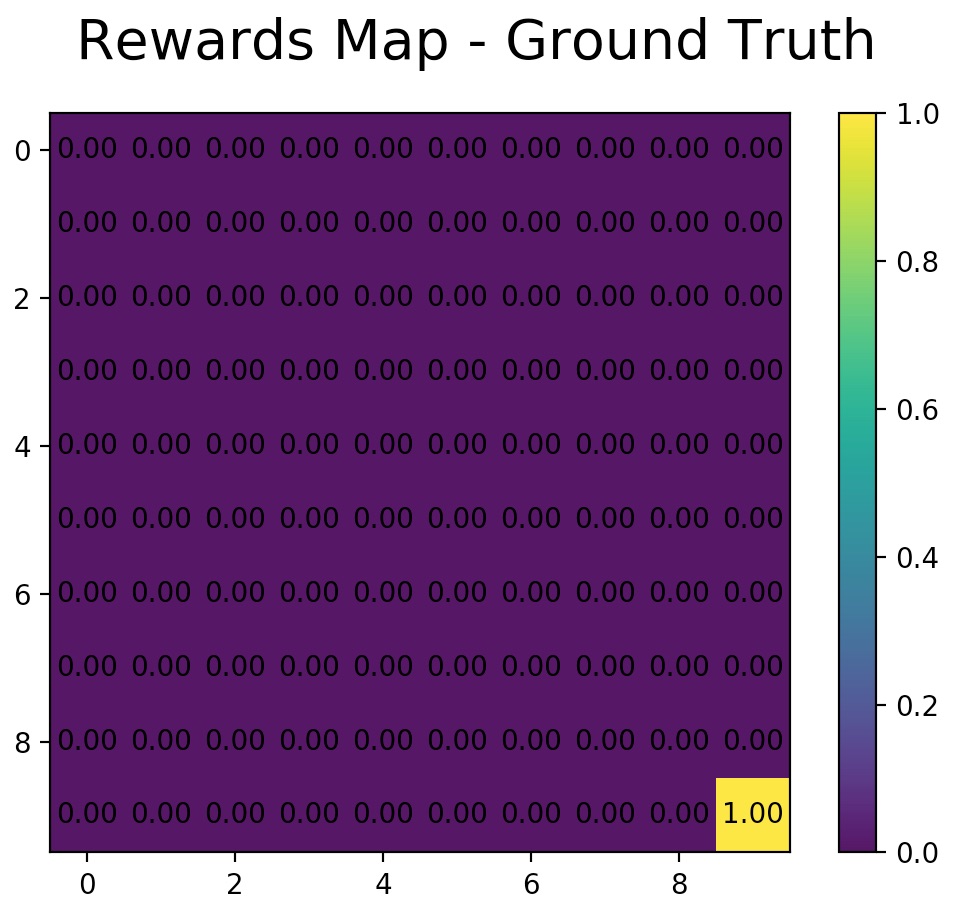

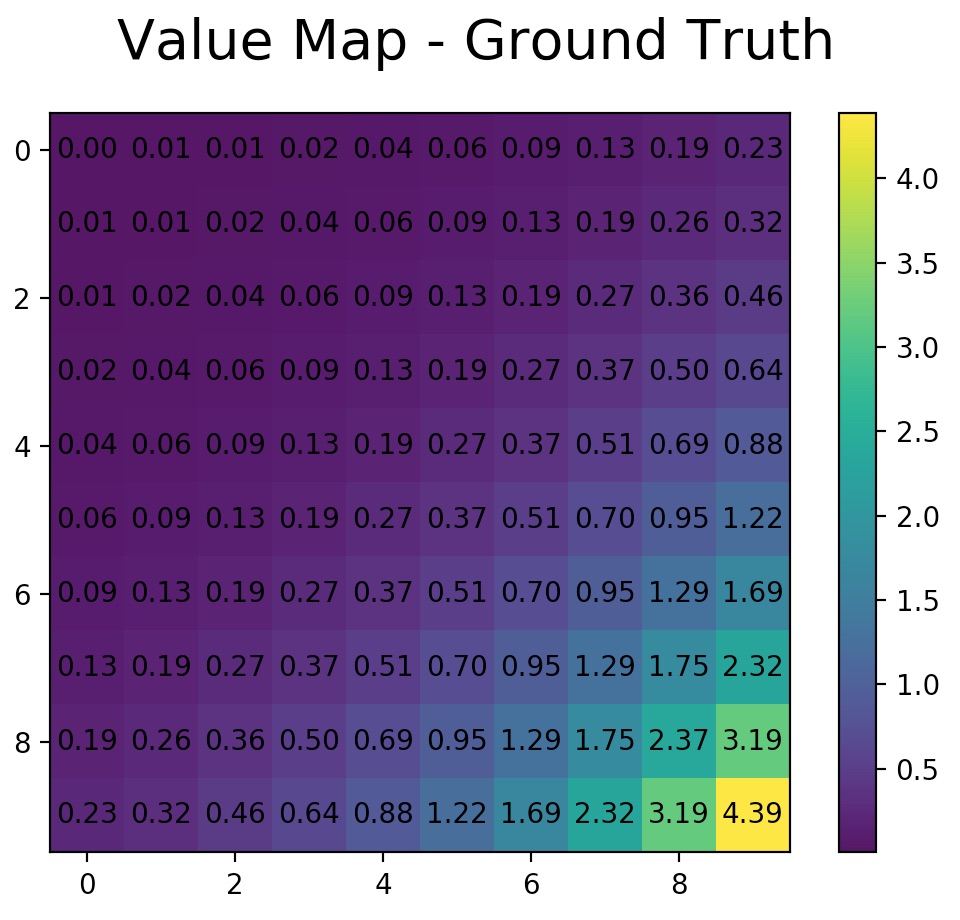

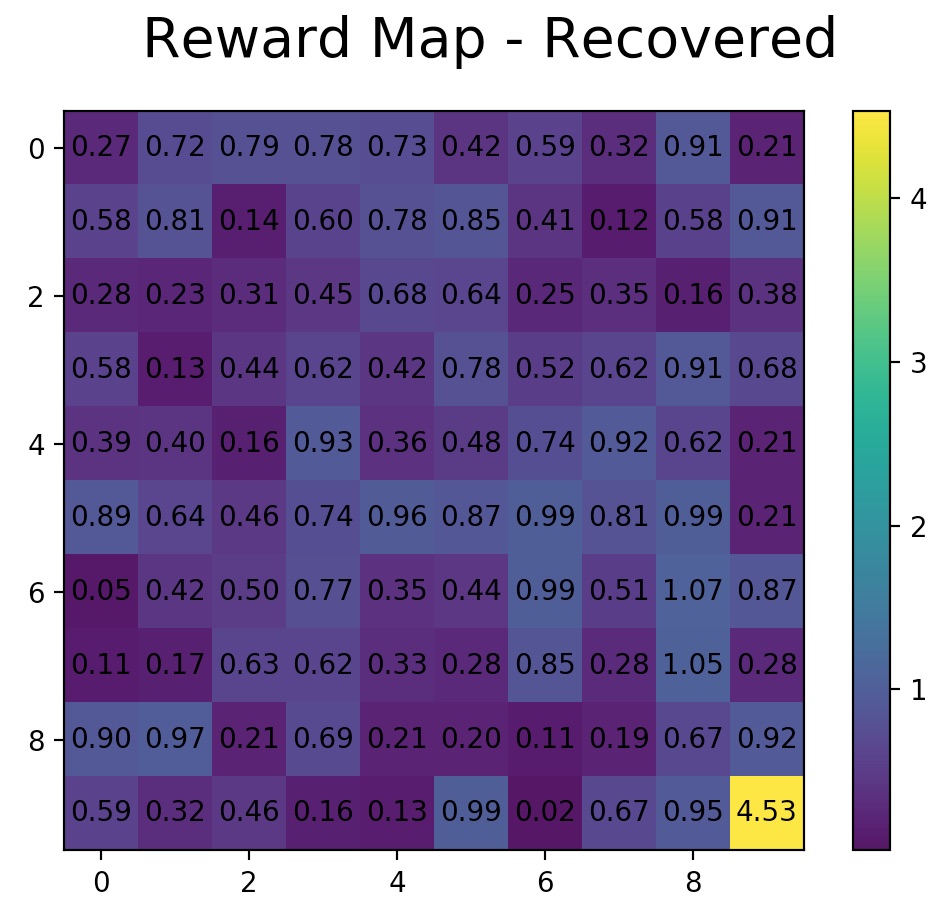

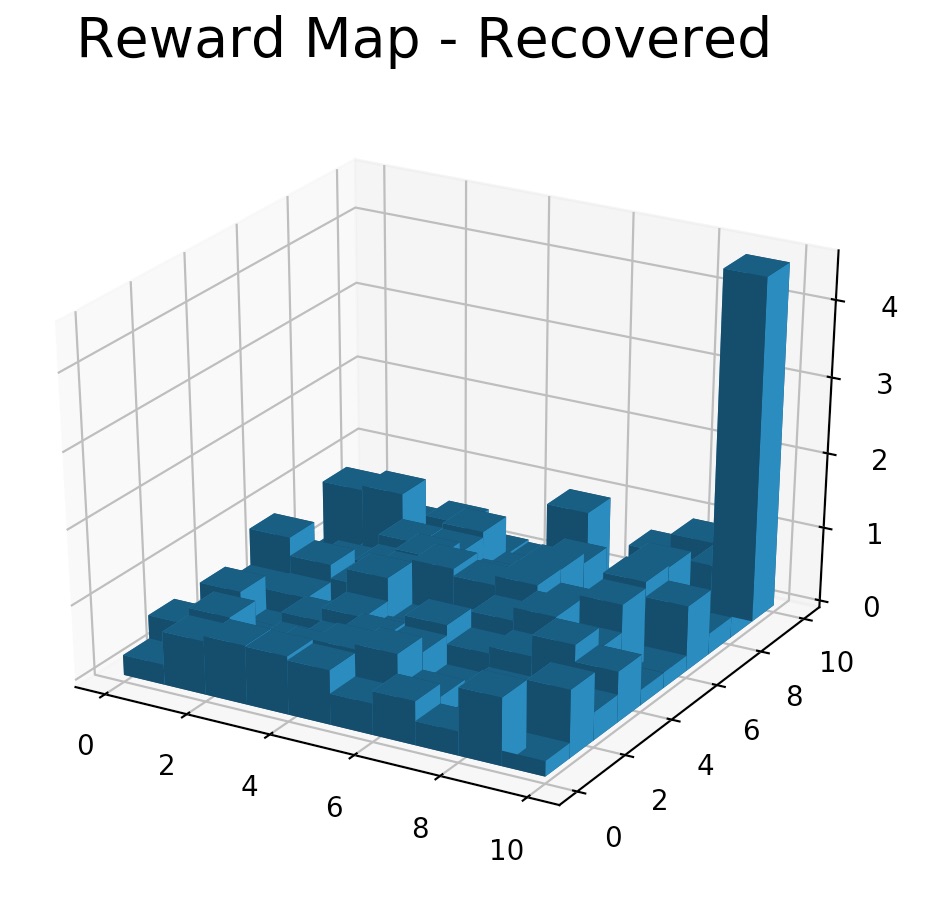

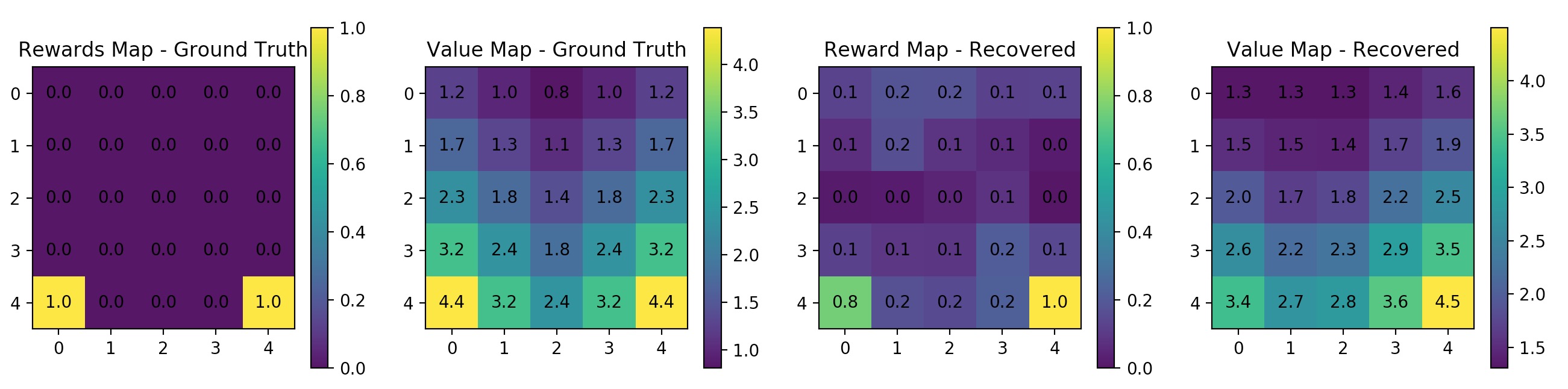

- Follows the Ziebart et al. 2008 paper, Maximum Entropy Inverse Reinforcement Learning

$ python maxent_irl_gridworld.py --help for options descriptions

$ python maxent_irl_gridworld.py --height=10 --width=10 --gamma=0.8 --n_trajs=100 --l_traj=50 --no-rand_start --learning_rate=0.01 --n_iters=20

$ python maxent_irl_gridworld.py --gamma=0.8 --n_trajs=400 --l_traj=50 --rand_start --learning_rate=0.01 --n_iters=20
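For orientation, the core update in maximum entropy IRL with a linear reward is the difference between the expert's feature expectations and the feature expectations under the current reward's expected state visitation frequencies. The sketch below shows only that gradient computation. It is not taken from `maxent_irl_gridworld.py`: the helper names are hypothetical, trajectories are assumed to be plain lists of state indices, and the visitation frequencies are passed in rather than computed from a policy.

```python
def feature_expectations(feature_matrix, trajectories):
    """Average summed feature vector over the demonstrated trajectories.

    feature_matrix[s] is the feature vector of state s.
    trajectories is a list of lists of state indices (an assumed format).
    """
    n_features = len(feature_matrix[0])
    totals = [0.0] * n_features
    for trajectory in trajectories:
        for state in trajectory:
            for k in range(n_features):
                totals[k] += feature_matrix[state][k]
    return [total / len(trajectories) for total in totals]


def maxent_gradient(feature_matrix, expert_fe, state_visitation):
    """Gradient of the MaxEnt log-likelihood w.r.t. linear reward weights.

    state_visitation[s] is the expected visitation frequency of state s
    under the policy induced by the current reward estimate.
    """
    n_states = len(feature_matrix)
    n_features = len(feature_matrix[0])
    expected_fe = [
        sum(state_visitation[s] * feature_matrix[s][k] for s in range(n_states))
        for k in range(n_features)
    ]
    return [expert - expected for expert, expected in zip(expert_fe, expected_fe)]


if __name__ == "__main__":
    identity_features = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    demos = [[0, 1, 2], [0, 2, 2]]
    expert_fe = feature_expectations(identity_features, demos)
    print(maxent_gradient(identity_features, expert_fe, [1.0, 1.0, 1.0]))
```

A positive gradient component means the expert visits the corresponding feature more often than the current reward estimate predicts, so its weight is increased.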

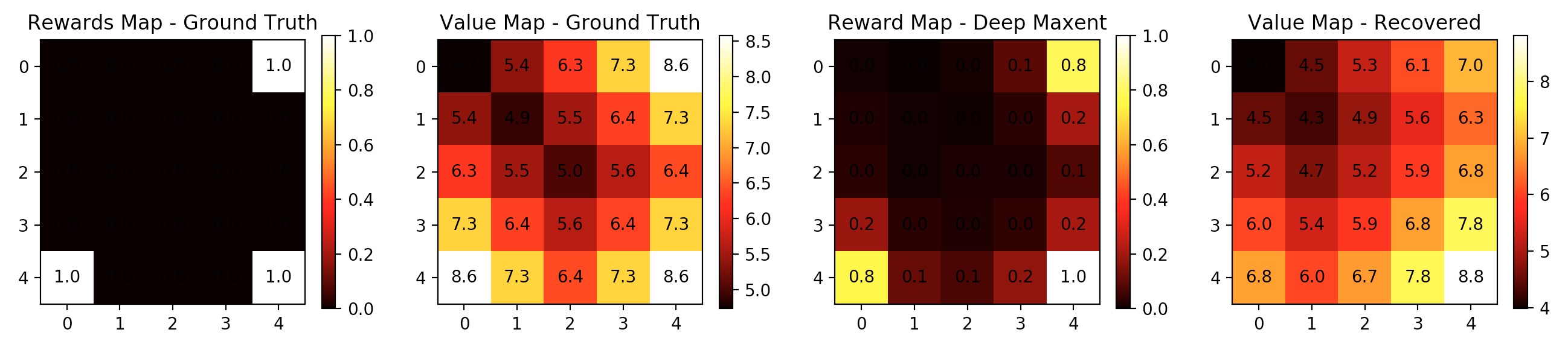

- Follows the Wulfmeier et al. 2015 paper, Maximum Entropy Deep Inverse Reinforcement Learning. Only the fully connected (FC) version is implemented. The implementation does not exactly follow the model proposed in the paper: tweaks include ELU activations, gradient clipping, and L2 regularization.

$ python deep_maxent_irl_gridworld.py --help for options descriptions

$ python deep_maxent_irl_gridworld.py --learning_rate=0.02 --n_trajs=200 --n_iters=20
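The three tweaks mentioned above (ELU activations, gradient clipping, L2 regularization) can be illustrated in plain Python without TensorFlow. This is only a sketch of the general techniques, not the repository's network code: the function names, the choice of clipping by global norm rather than by value, and the default hyperparameters are all assumptions.

```python
import math


def elu(x, alpha=1.0):
    """Exponential linear unit: identity for x > 0, smooth saturation below."""
    return x if x > 0 else alpha * (math.exp(x) - 1.0)


def clip_by_norm(grad, max_norm):
    """Rescale the gradient so its Euclidean norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    if norm <= max_norm or norm == 0.0:
        return list(grad)
    scale = max_norm / norm
    return [g * scale for g in grad]


def regularized_step(weights, grad, learning_rate=0.02, l2=0.1, max_norm=1.0):
    """One gradient-ascent step with an L2 penalty and a clipped gradient.

    The objective is assumed to be (likelihood - 0.5 * l2 * ||w||^2), so the
    penalty contributes -l2 * w to the gradient before clipping.
    """
    penalized = [g - l2 * w for g, w in zip(grad, weights)]
    clipped = clip_by_norm(penalized, max_norm)
    return [w + learning_rate * g for w, g in zip(weights, clipped)]


if __name__ == "__main__":
    print(elu(-1.0), clip_by_norm([3.0, 4.0], 1.0))
    print(regularized_step([0.0, 0.0], [3.0, 4.0], learning_rate=0.5))
```

Clipping bounds the size of each update when the gradient is large, while the L2 term pulls the weights toward zero.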