- DevOps is not a standard or a specification.

- It is not a tool or a particular piece of software.

- It is not something you do with a tool or software.

- DevOps is a cultural shift: it represents a change in mindset.

The usual business process is Customer > Project Manager > Developer > Tester > SysAdmins. Now, let's zoom in on Developers and SysAdmins and their main tasks:

For the Developer

- Building software.

- Adding new features.

For SysAdmins

- Building and maintaining the IT infrastructure.

- Keeping the systems running smoothly, securely, and with as little downtime as possible.

Both of them have something in common: the software.

For the SysAdmin

- Knows very little about the software they need to operate.

For the developer

- Knows very little about the infrastructure where the software is running.

Everyone in the business process works on the software, just in a different capacity. Since the final outcome impacts everyone, it makes sense for all these groups to collaborate. The cultural shift that DevOps brings is also tightly connected to the agile movement.

Where business conditions and requirements change all the time, and where we need to juggle many tools and technologies every day, the best culture is not one of blaming and finger-pointing but one of experimentation and learning from past mistakes. So, we want everyone to collaborate instead of working in silos and stages, and instead of finger-pointing, everyone takes responsibility for the final outcome: a product that works and customers or users who are happy with it. So, DevOps is:

- More than just culture.

- Focused on automating tasks.

- Driven by the fact that manual and repetitive work is a productivity killer.

So, the main concern of this repository is automatically building and deploying software, which falls under a practice called CI/CD. We want to automate as much as possible to save time and put that time to good use instead of manually repeating the same task over and over again. But to automate things we need to get good at:

- Using the shell.

- Working with the CLI tools.

- Reading documentation.

- And writing scripts.

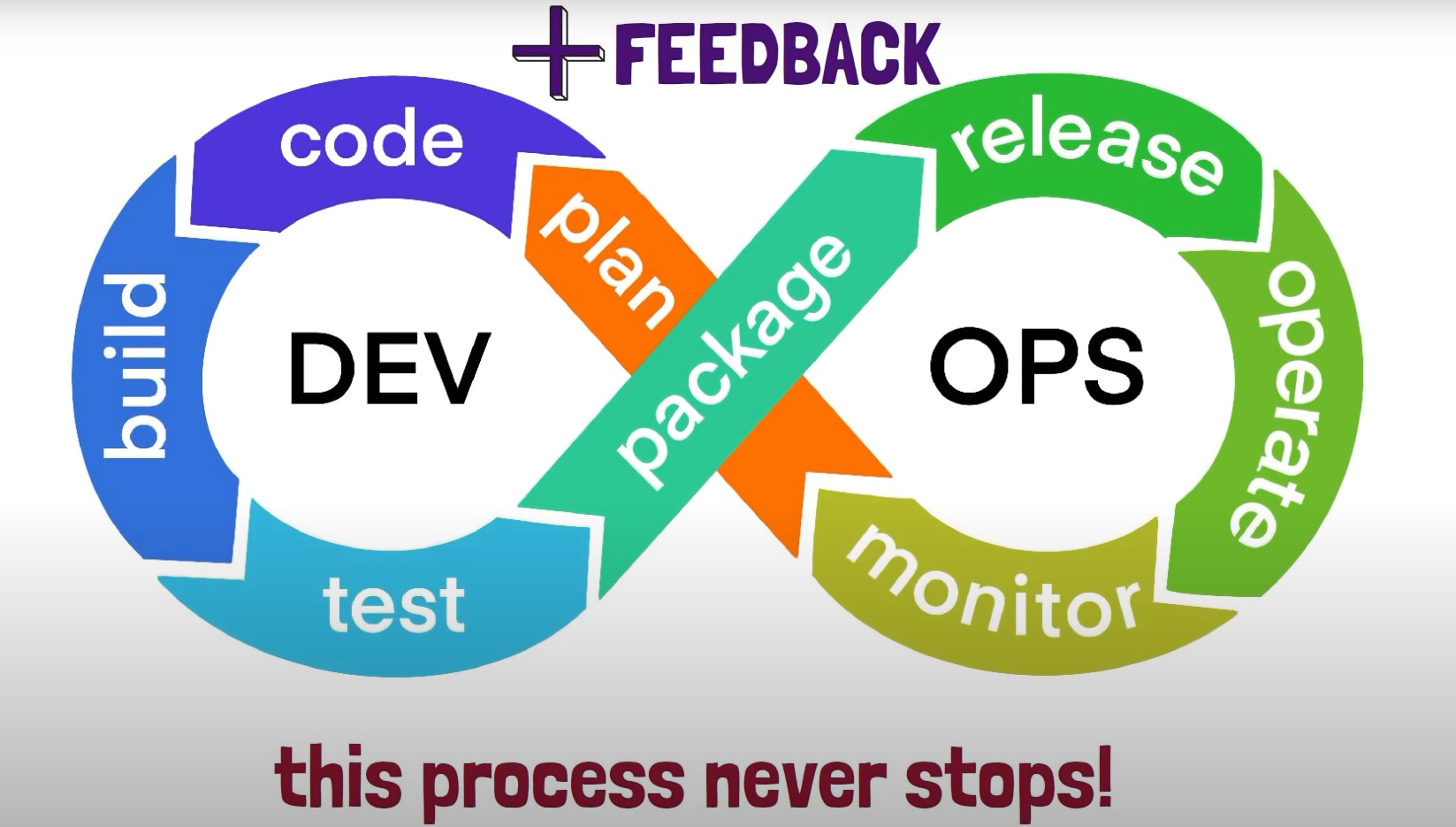

And here's the simple representation of DevOps.

That process never stops; it goes on and on in an endless loop. It means we continue going through these steps with each iteration or new version of the software, and the feedback we get is fed back into the product. So, DevOps goes hand in hand with the agile movement.

- GitLab CI/CD is an automation engine.

- Enables teams to perform DevOps practices.

- Continuous Integration.

- Continuous Delivery/Deployment.

- Automatically build, test, and deploy using a GitLab pipeline.

- A pipeline is configured in the `.gitlab-ci.yml` file in the root of the project repository.

- Automated set of sequential steps to build, test, and deliver/deploy the code.

- A GitLab pipeline has two main components.

- Jobs describe the tasks that need to be done.

- Stages define the order in which jobs will be completed.

- Set of instructions for a program to execute.

- GitLab Runner is the program that executes jobs in a GitLab pipeline.

- Separate program that can be run on your local host, a VM, or even a container.

- GitLab assigns pipeline jobs to available runners at pipeline runtime.

- Open the CI/CD menu, select Pipelines, and check the template example.

- Or open the Editor and select Create new CI/CD pipeline.

- To see a visualization of the pipeline, check the Visualize tab.

- To validate the syntax, check the Lint tab.

- Continuous Integration: the build and test steps.

- Continuous Deployment: after the CI process, deploy to the staging server and then continue automatically to the production server.

- Continuous Delivery: after the CI process, deploy to the staging server, then require a manual action before deploying to the production server.

Here is an example pipeline script in `.gitlab-ci.yml` that can be edited from the Editor.

    stages:          # List of stages for jobs, and their order of execution
      - build
      - test
      - deploy

    build-job:       # This job runs in the build stage, which runs first.
      stage: build
      script:
        - echo "Compiling the code..."
        - echo "Compile complete."

    unit-test-job:   # This job runs in the test stage.
      stage: test    # It only starts when the job in the build stage completes successfully.
      script:
        - echo "Running unit tests... This will take about 60 seconds."
        - echo "Code coverage is 90%"

    lint-test-job:   # This job also runs in the test stage.
      stage: test    # It can run at the same time as unit-test-job (in parallel).
      script:
        - echo "Linting code... This will take about 10 seconds."
        - echo "No lint issues found."

    deploy-job:      # This job runs in the deploy stage.
      stage: deploy  # It only runs when *both* jobs in the test stage complete successfully.
      script:
        - echo "Deploying application..."
        - echo "Application successfully deployed."

Here is the main syntax used in the GitLab CI editor:

- `stages`

  Inside `stages`, there are `build`, `test`, and `deploy`.

  Each job in the pipeline references one of these stages. For example, from the script above:

  - The `build-job` job uses the `build` stage.

  - The `unit-test-job` job uses the `test` stage.

  - The `lint-test-job` job uses the `test` stage.

  - The `deploy-job` job uses the `deploy` stage.

Add a Docker image to execute the pipeline. It acts as the environment in which the CI/CD jobs run. Here's a config example:

    image: python:latest

    stages:          # List of stages for jobs, and their order of execution
      - build
      - test
      - deploy
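The `image` keyword can also be set on a single job, in which case it overrides the global default for that job only. A minimal sketch, assuming a hypothetical job named `frontend-build-job` that needs Node.js instead of Python:

```yaml
# Default image for every job that does not set its own.
image: python:latest

stages:
  - build

# Runs in the default python:latest image.
build-job:
  stage: build
  script:
    - python --version

# Hypothetical job: overrides the default image for this job only.
frontend-build-job:
  stage: build
  image: node:16-alpine
  script:
    - node --version
```

Keeping a global default and overriding it per job avoids repeating the same `image` line in every job.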

Add the variables that the pipeline will use; you can define them in `.gitlab-ci.yml`. Here is an example:

    image: python:latest

    variables:
      USERNAME: rohwid

    stages:          # List of stages for jobs, and their order of execution
      - build
      - test
      - deploy

Or define them in the GitLab repository settings under Settings > CI/CD > Variables. Each variable can be flagged with two options:

- Protected: the variable is only available to pipelines that run on protected branches or tags.

- Masked: the value of the variable is hidden in job logs.
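Whichever way a variable is defined, a job script reads it like a normal shell environment variable. A minimal sketch, assuming the `USERNAME` variable from the example above and a hypothetical job named `greet-job`:

```yaml
variables:
  USERNAME: rohwid

stages:
  - build

# Hypothetical job that only prints the variable.
greet-job:
  stage: build
  script:
    # Variables defined in .gitlab-ci.yml or in Settings > CI/CD
    # are exposed to the script as environment variables.
    - echo "Hello, $USERNAME"
```

Secrets such as access keys are better defined in the project settings than in the file, since `.gitlab-ci.yml` is committed to the repository.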

- Open source application that is used to run jobs in a GitLab CI/CD pipeline.

- Pipeline jobs are assigned to available GitLab Runners.

- The program can be installed on our local machine, a VM, a Docker container, or cloud infrastructure.

- Supported on Linux, Windows, macOS, and FreeBSD.

- GitLab Runners execute the work defined in GitLab pipeline jobs (check the tasks under `script`).

      build-job:       # This job runs in the build stage, which runs first.
        stage: build
        script:
          - echo "Compiling the code..."
          - echo "Compile complete."

Here are the types of GitLab runner.

- Shared: Available to all projects in a GitLab instance.

- Group: Available to all projects in a group.

- Project: For a single project or a set of projects with specific requirements.

The GitLab Runner executor determines the environment in which a job will run.

- In a VM via a hypervisor such as VirtualBox

- Shell

- Remote SSH

- Docker

- Kubernetes

- Custom executor (for environments not supported by the built-in executors)

The runner program has an embedded Prometheus metrics HTTP server for monitoring:

- Runner business logic metrics

- Go-specific process metrics

- General process metrics

- Build version information

What are tags in a GitLab CI/CD pipeline?

- Tags can be added to a GitLab runner and then referenced in a GitLab pipeline.

- Reference tags from within a GitLab pipeline to specify which runners should be used for a job.

- More explanation in the docs.
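A job selects runners through the `tags` keyword. A minimal sketch, assuming a runner was registered with the hypothetical tags `docker` and `aws`; the job is only picked up by a runner that has all of the listed tags:

```yaml
stages:
  - build

build-job:
  stage: build
  # Hypothetical tag names: use the tags assigned to your own runner.
  tags:
    - docker
    - aws
  script:
    - echo "Running on a runner tagged docker and aws"
```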

Why self-host runners?

- Save on costs by using self-managed/hosted runners

- Customization

- Security

Here are the steps:

- Install runner program on target host.

- Choose an executor.

- Register GitLab runner.

- Run a pipeline that utilizes your runner.

Before using a GitLab pipeline, we need:

- GitLab account.

- VM or other infrastructure.

- In this example: I'll be using a Linux machine as the GitLab Runner's host.

- GitLab project.

- Docker installed on target host where GitLab Runner will be installed.

Define the GitLab runners in the GitLab repository settings under Settings > CI/CD > Runners. There are two kinds of runners:

- Specific Runners: set up runners for a specific project on our local machine, a VM, or a Docker container.

- Shared Runners: use the runners provided by GitLab.

Set up a GitLab runner on a VM or AWS EC2 with the Docker executor. Here are the steps:

- Deploy the instance.

- Install Docker.

- Install the gitlab-ci runner package.

- Create a group in GitLab.

- Assign the repository that needs the CI/CD runner to the group.

- Edit the gitlab-ci runner config in `/etc/gitlab-runner/config.toml`.

  - Change the `privileged` parameter to `true`.

Check the docs to set up the cache.

Here are the steps when trying gitlab-ci:

- Create the repository `try-gitlab-ci-1`.

- Create the `.gitlab-ci.yml` file and commit it.

- Create and define the stages.

      stages:
        - build
        - test
        - ship

  Then reference a stage in every pipeline job. The script will look like this:

      build-job:
        stage: build
        script:
          - mkdir build
          - touch build/computer.txt
          - echo "Build the computer..."
          - echo "- CPU" >> build/computer.txt
          - echo "- Mainboard" >> build/computer.txt
          - echo "- RAM" >> build/computer.txt
          - echo "- SSD" >> build/computer.txt
          - echo "- Monitor" >> build/computer.txt
          - echo "- Keyboard" >> build/computer.txt
          - echo "- Mouse" >> build/computer.txt
          - echo "Build complete."

      test-job:
        stage: test
        script:
          - echo "Checking the components... This will take about 10 seconds."
          - test -f build/computer.txt
          - cat build/computer.txt

      ship-job:
        stage: ship
        script:
          - echo "Shipping the computer..."
          - mkdir ship
          - cp build/computer.txt ship
          - echo "The computer successfully shipped."
          - cat ship/computer.txt

- Set up the artifact paths.

      artifacts:
        paths:
          - build

  Then add the artifact paths to each pipeline job that needs them. The script will look like this:

      build-job:
        stage: build
        script:
          - ls
          - mkdir build
          - touch build/computer.txt
          - echo "Build the computer..."
          - echo "- CPU" >> build/computer.txt
          - echo "- Mainboard" >> build/computer.txt
          - echo "- RAM" >> build/computer.txt
          - echo "- SSD" >> build/computer.txt
          - echo "- Monitor" >> build/computer.txt
          - echo "- Keyboard" >> build/computer.txt
          - echo "- Mouse" >> build/computer.txt
          - echo "Build complete."
        artifacts:
          paths:
            - build

- Set up the variables.

      variables:
        BUILD_FILE_NAME: computer.txt

  Then replace the hardcoded values in the config with the variable names.
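With the variable in place, the hardcoded file name can be replaced everywhere it appears. A sketch of how the build and test jobs above might look after the replacement (shortened to two components for brevity):

```yaml
variables:
  BUILD_FILE_NAME: computer.txt

stages:
  - build
  - test

build-job:
  stage: build
  script:
    - mkdir build
    - touch build/$BUILD_FILE_NAME
    - echo "- CPU" >> build/$BUILD_FILE_NAME
    - echo "- Mainboard" >> build/$BUILD_FILE_NAME
  artifacts:
    paths:
      - build

test-job:
  stage: test
  script:
    # The build directory is available here because it was saved as an artifact.
    - test -f build/$BUILD_FILE_NAME
    - cat build/$BUILD_FILE_NAME
```

Renaming the output file then only requires changing the single `BUILD_FILE_NAME` value.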

-

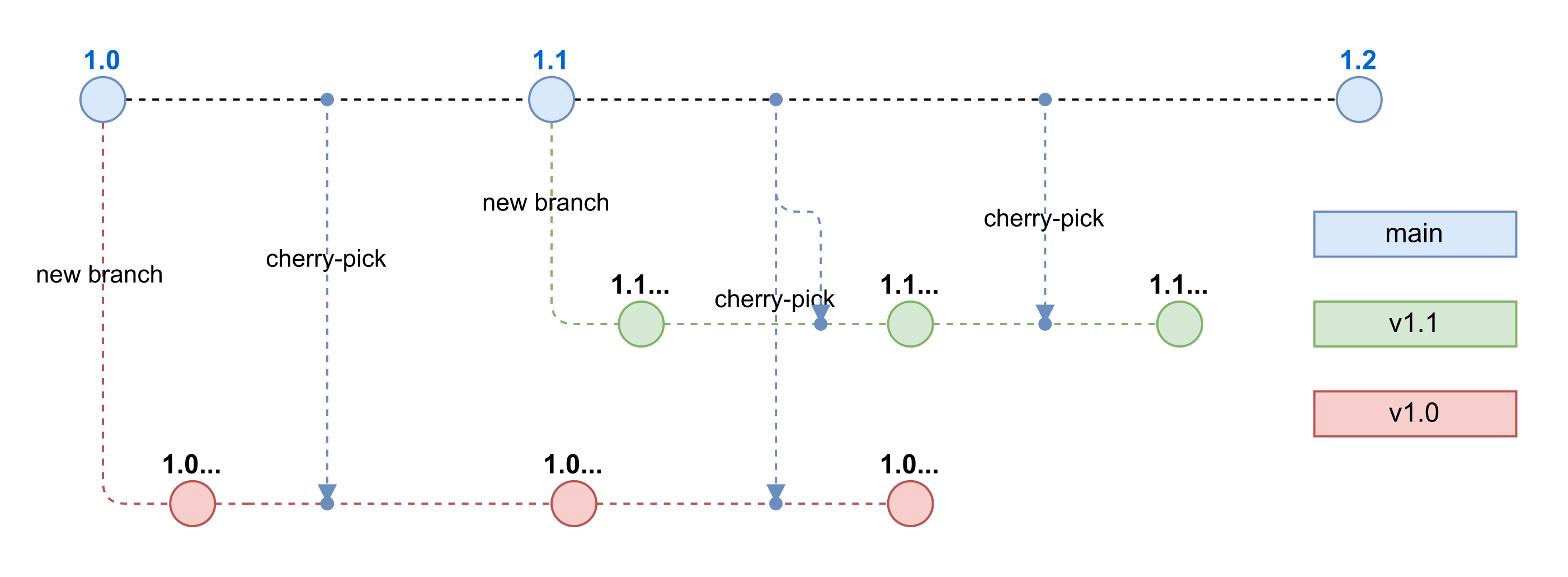

Set the GitLab Setting in General:

- Use Fast-forward merge method.

- Squash commit when mergin into Encourage.

- Merge checks when Pipelines must succeed.

-

Set the GitLab Setting in Repository:

- Allowed to push into No one.

-

That setting allow us to manage the repository easier, just the

mainbranch and the deployed version.

Here is an example of the stages that we built previously:

- Build in the `build` stage (e.g. `build website` or `build-job`).

- Linter test in the `test` stage (e.g. `linter` or `linter-test-job`).

- Unit test in the `test` stage (e.g. `unit test` or `unit-test-job`).

And here are the stages that we will build next.

    stages:
      - build
      - test
      - deploy production
      - test production

Build stage: here is the complete job for the build stage.

    build job:
      image: node:16-alpine
      stage: build
      script:
        - yarn install
        - yarn build
      artifacts:
        paths:
          - build

Now we will add the website test stage (e.g. `test website` or `test-website-job`). For example, this is a backend program that runs at http://localhost:3000.

- Add `&` at the end of the command that starts the program, so that it runs in the background. For example:

      python endpoint.py &

  or

      serve -s build &

- Add a delay after starting the program, because `curl` would otherwise execute immediately, before the program is ready to respond.

      sleep 10

- Test the output with `curl`. For example:

      curl http://localhost:3000 | grep "data"

  So, the complete job will look like this:

      test job:
        image: node:16-alpine
        stage: test
        script:
          - yarn global add serve
          - apk add curl
          - serve -s build &
          - sleep 10
          - curl http://localhost:3000 | grep "React App"

- AWS CLI in the pipeline: this is an example of how to use the AWS CLI in a GitLab CI pipeline.

      deploy to production:
        stage: deploy production
        image:
          name: amazon/aws-cli:2.4.11
          entrypoint: [""]
        script:
          - aws --version

- Masking and protecting the variables: this part was already explained in the Pipeline Environment Variables section. So, you don't need to write the variable in `.gitlab-ci.yml`; you just need to reference it, as in this example:

      deploy to production:
        stage: deploy production
        image:
          name: amazon/aws-cli:2.4.11
          entrypoint: [""]
        script:
          - aws --version
          - echo "Hello S3" > test.txt
          - aws s3 cp test.txt s3://$AWS_S3_BUCKET/test.txt

- Add the AWS credentials in Pipeline Environment Variables like this:

  - `AWS_S3_BUCKET`: disable the Protected option.

  - `AWS_ACCESS_KEY_ID`: disable the Protected option.

  - `AWS_SECRET_ACCESS_KEY`: disable the Protected option and enable Masked.

  - `AWS_DEFAULT_REGION`: disable the Protected option.

- Upload multiple files to AWS S3, and delete remote files that no longer exist locally, with the `sync` command from the AWS CLI.

      deploy to production:
        stage: deploy production
        image:
          name: amazon/aws-cli:2.4.11
          entrypoint: [""]
        script:
          - aws --version
          - echo "Hello S3" > test.txt
          - aws s3 sync build/ s3://$AWS_S3_BUCKET --delete

- Static website hosting on AWS S3 (So.. WOW).

  - In the S3 console, open Properties > Static website hosting, edit it, and select Enable.

  - Set the index document and error document.

  - Open Static website hosting again. There is now a static website hosting URL, but it still can't be accessed if you click on it.

  - Go to the Permissions tab, disable all the Block Public Access settings, and edit the Bucket Policy.

    - Search for and add the bucket action `GetObject` (for example: `"s3:GetObject"`).

    - Change the `Sid` value to `PublicRead` (for example: `"Sid": "PublicRead"`).

    - Change the `Principal` value to `*` (for example: `"Principal": "*"`).

    - Change the `Effect` value to `Allow` (for example: `"Effect": "Allow"`).

    - Change the `Resource` value to the AWS bucket name (for example: `"Resource": "arn:aws:s3:::(aws-s3-bucket-name)/*"`).

  - Now you can access the static website hosted on AWS S3.

- Add the `rules` keyword in `.gitlab-ci.yml` with the script below, filled in with variables from the Predefined variables reference. This makes the current job run only on the main branch.

      rules:
        - if: $CI_COMMIT_REF_NAME == $CI_DEFAULT_BRANCH

  So, in the GitLab config:

      deploy to production:
        stage: deploy production
        image:
          name: amazon/aws-cli:2.4.11
          entrypoint: [""]
        rules:
          - if: $CI_COMMIT_REF_NAME == $CI_DEFAULT_BRANCH
        script:
          - aws --version
          - echo "Hello S3" > test.txt
          - aws s3 sync build/ s3://$AWS_S3_BUCKET --delete

- After deploying to production, add the production test. Before that, define the `variables` as mentioned in Pipeline Environment Variables and add the AWS S3 static website hosting URL.

  - Here is the variable example:

        variables:
          APP_BASE_URL: http://(aws-s3-static-web-hosting-url)

  - Now create the production test in the `test production` stage, which runs after the deployment.

        tests in production:
          image: curlimages/curl
          stage: test production
          rules:
            - if: $CI_COMMIT_REF_NAME == $CI_DEFAULT_BRANCH
          script:
            - curl $APP_BASE_URL | grep "Flask App"

- Add the staging server to deploy.

  - Add new stages named `deploy staging` and `test staging`.

        stages:
          - build
          - test
          - deploy staging
          - test staging
          - deploy production
          - test production

  - Copy the production jobs and change them for staging.

        deploy to staging:
          stage: deploy staging
          image:
            name: amazon/aws-cli:2.4.11
            entrypoint: [""]
          rules:
            - if: $CI_COMMIT_REF_NAME == $CI_DEFAULT_BRANCH
          script:
            - aws --version
            - echo "Hello S3" > test.txt
            - aws s3 sync build/ s3://$AWS_S3_BUCKET_STAGING --delete

    And for the testing in staging:

        tests in staging:
          image: curlimages/curl
          stage: test staging
          rules:
            - if: $CI_COMMIT_REF_NAME == $CI_DEFAULT_BRANCH
          script:
            - curl $APP_BASE_URL_STAGING | grep "Flask App"

  - And here is what the variables should be:

        variables:
          APP_BASE_URL: http://(aws-s3-production-static-web-hosting-url)
          APP_BASE_URL_STAGING: http://(aws-s3-staging-static-web-hosting-url)

- To enable environments, open Deployments > Environments > New environment in the GitLab menu.

- Create the `staging` environment with the name `staging` and fill in the external URL: `http://(aws-s3-staging-static-web-hosting-url)`.

- Create the `production` environment with the name `production` and fill in the external URL: `http://(aws-s3-production-static-web-hosting-url)`.

- Now, remove the variables from `.gitlab-ci.yml`.

      variables:
        APP_BASE_URL: http://(aws-s3-production-static-web-hosting-url)
        APP_BASE_URL_STAGING: http://(aws-s3-staging-static-web-hosting-url)

- Go to Settings > CI/CD.

  - Set each variable to the specific Environment scope defined before (`staging` and `production`).

  - Scope `AWS_S3_BUCKET` to `production`.

  - Rename `AWS_S3_BUCKET_STAGING` to `AWS_S3_BUCKET` and scope it to `staging`.

- Modify the stages defined before by deleting the `test staging` and `test production` stages. The remaining stages will look like this.

      stages:
        - build
        - test
        - deploy staging
        - deploy production

- Modify the job in the `deploy staging` stage by combining it with the job from the `test staging` stage. Change the `$APP_BASE_URL` variable to `$CI_ENVIRONMENT_URL`, which is provided by GitLab (see the Predefined variables reference), and don't forget to add the `environment` keyword with the value `staging`.

      deploy to staging:
        stage: deploy staging
        environment: staging
        image:
          name: amazon/aws-cli:2.4.11
          entrypoint: [""]
        rules:
          - if: $CI_COMMIT_REF_NAME == $CI_DEFAULT_BRANCH
        script:
          - aws --version
          - echo "Hello S3" > test.txt
          - aws s3 sync build/ s3://$AWS_S3_BUCKET --delete
          - curl $CI_ENVIRONMENT_URL | grep "Flask App"

- Modify the job in the `deploy production` stage by combining it with the job from the `test production` stage. Change the `$APP_BASE_URL` variable to `$CI_ENVIRONMENT_URL`, which is provided by GitLab (see the Predefined variables reference), and don't forget to add the `environment` keyword with the value `production`. The bucket stays `$AWS_S3_BUCKET`, which is now scoped to the `production` environment.

      deploy to production:
        stage: deploy production
        environment: production
        image:
          name: amazon/aws-cli:2.4.11
          entrypoint: [""]
        rules:
          - if: $CI_COMMIT_REF_NAME == $CI_DEFAULT_BRANCH
        script:
          - aws --version
          - echo "Hello S3" > test.txt
          - aws s3 sync build/ s3://$AWS_S3_BUCKET --delete
          - curl $CI_ENVIRONMENT_URL | grep "Flask App"
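`$CI_ENVIRONMENT_URL` is filled in from the URL of the environment the job deploys to. As an alternative to creating the environments in the GitLab UI, the name and URL can also be declared in the job itself with `environment:name` and `environment:url`. A minimal sketch, assuming the same placeholder URL as above and a shortened script:

```yaml
deploy to staging:
  stage: deploy staging
  environment:
    name: staging
    # Placeholder: replace with the real static website hosting URL.
    url: http://(aws-s3-staging-static-web-hosting-url)
  image:
    name: amazon/aws-cli:2.4.11
    entrypoint: [""]
  script:
    - aws s3 sync build/ s3://$AWS_S3_BUCKET --delete
    - curl $CI_ENVIRONMENT_URL | grep "Flask App"
```

Declaring the URL in the file keeps it under version control, while setting it in the UI keeps `.gitlab-ci.yml` free of environment-specific values.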

Here are the steps to reuse the configuration.

- Create a new job that holds the shared configuration of `deploy to staging` and `deploy to production`, because those two jobs are identical. Don't forget to add `.` in front of the new job name; it means the job is hidden and does not run on its own.

      .deploy:
        image:
          name: amazon/aws-cli:2.4.11
          entrypoint: [""]
        rules:
          - if: $CI_COMMIT_REF_NAME == $CI_DEFAULT_BRANCH
        script:
          - aws --version
          - echo "Hello S3" > test.txt
          - aws s3 sync build/ s3://$AWS_S3_BUCKET --delete
          - curl $CI_ENVIRONMENT_URL | grep "Flask App"

- Now, change the definitions of `deploy to staging` and `deploy to production`.

  For `deploy to staging`:

      deploy to staging:
        stage: deploy staging
        environment: staging
        extends: .deploy

  For `deploy to production`:

      deploy to production:
        stage: deploy production
        environment: production
        extends: .deploy

Here are the steps to add the app build version.

- Add the `APP_VERSION` variable.

      variables:
        APP_VERSION: $CI_PIPELINE_IID

- Use the `APP_VERSION` variable in every stage.

  - Insert `APP_VERSION` in the `build` stage. The app version can also be checked in the artifacts directory, if artifacts are enabled.

        build website:
          stage: build
          script:
            ...
            - echo $APP_VERSION > build/version.html
          artifacts:
            paths:
              - build

  - Test the build version.

        .deploy:
          image:
            name: amazon/aws-cli:2.4.11
            entrypoint: [""]
          rules:
            - if: $CI_COMMIT_REF_NAME == $CI_DEFAULT_BRANCH
          script:
            - aws --version
            - echo "Hello S3" > test.txt
            - aws s3 sync build/ s3://$AWS_S3_BUCKET --delete
            - curl $CI_ENVIRONMENT_URL | grep "Flask App"
            - curl $CI_ENVIRONMENT_URL/version.html | grep $APP_VERSION

As mentioned above, Continuous Delivery has a step that must be carried out before deploying to the production server, after a successful deployment to the staging server. In this case, just add the `when` keyword with the value `manual` to the `deploy to production` job.

    deploy to production:
      stage: deploy production
      when: manual
      environment: production
      extends: .deploy

Here are my practice steps:

- `try-gitlab-ci-1`: learn how gitlab-ci works.

- `try-gitlab-ci-2`: learn GitLab artifacts, gitlab-ci default variables, and how to set local or hardcoded variables.

- `try-extends-and-variable`: learn to extend jobs and use the gitlab-ci variable feature. In this directory, I tried deploying over SSH from the GitLab runner to the EC2 instance.

- `try-build-image-versioning`: Pending. Focusing on GitHub Actions, because GitLab is not free and is too pricey for large projects.