School of Computer Science and Technology, Harbin Institute of Technology, Shenzhen

*Corresponding author

IEEE Conference on Computer Vision and Pattern Recognition (CVPR) 2024

[Paper] [Project Page] [Video(YouTube)] [Video(bilibili)]

🔥 Details will be released. Stay tuned 🍻 👍

- [02/2024] LION has been accepted by CVPR 2024.

- [11/2023] arXiv paper released.

- [11/2023] Project page released.

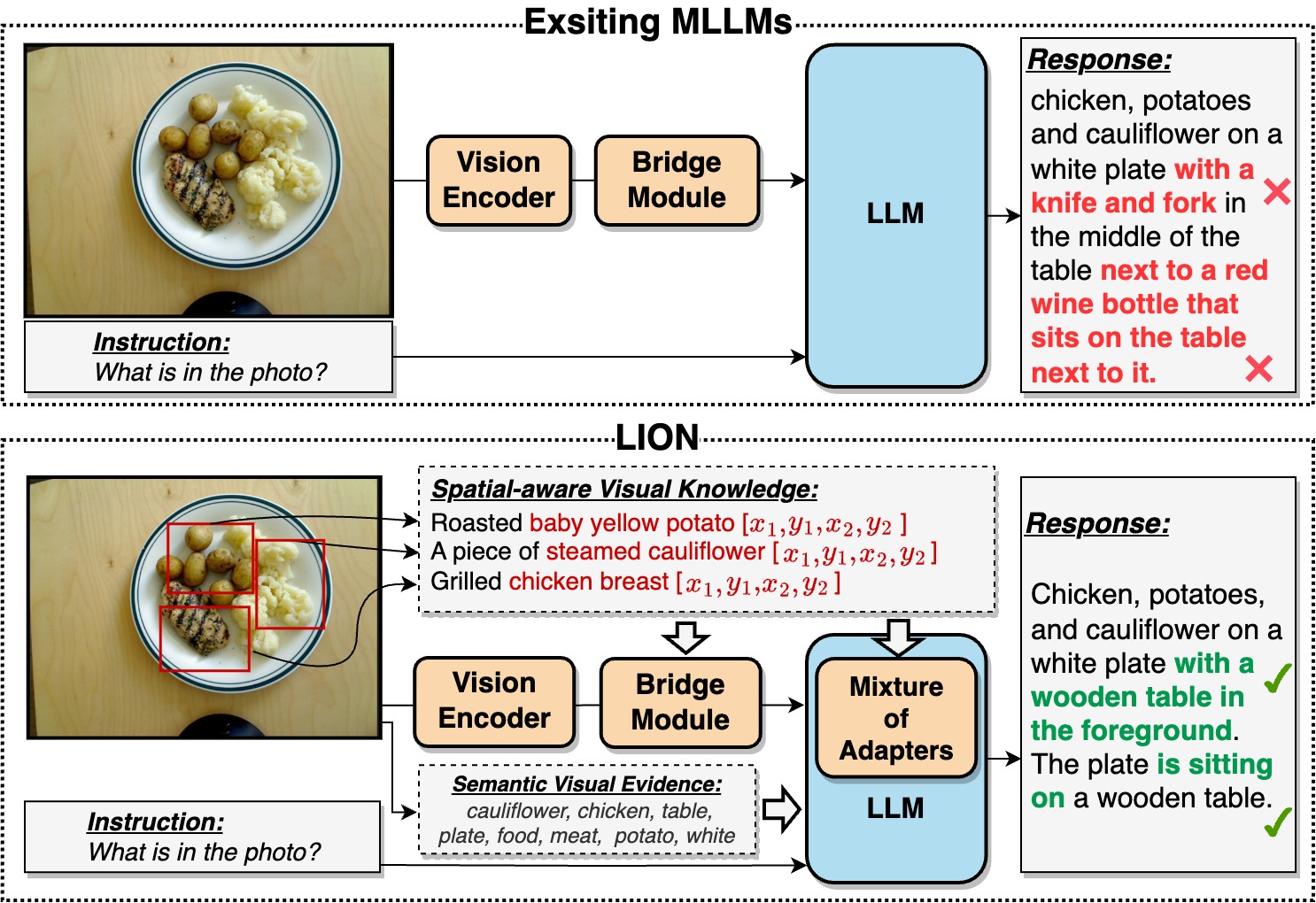

This is the GitHub repository for LION: Empowering Multimodal Large Language Model with Dual-Level Visual Knowledge. In this work, we enhance MLLMs by integrating fine-grained, spatial-aware visual knowledge and high-level semantic visual evidence, which boosts their capabilities and alleviates hallucinations.

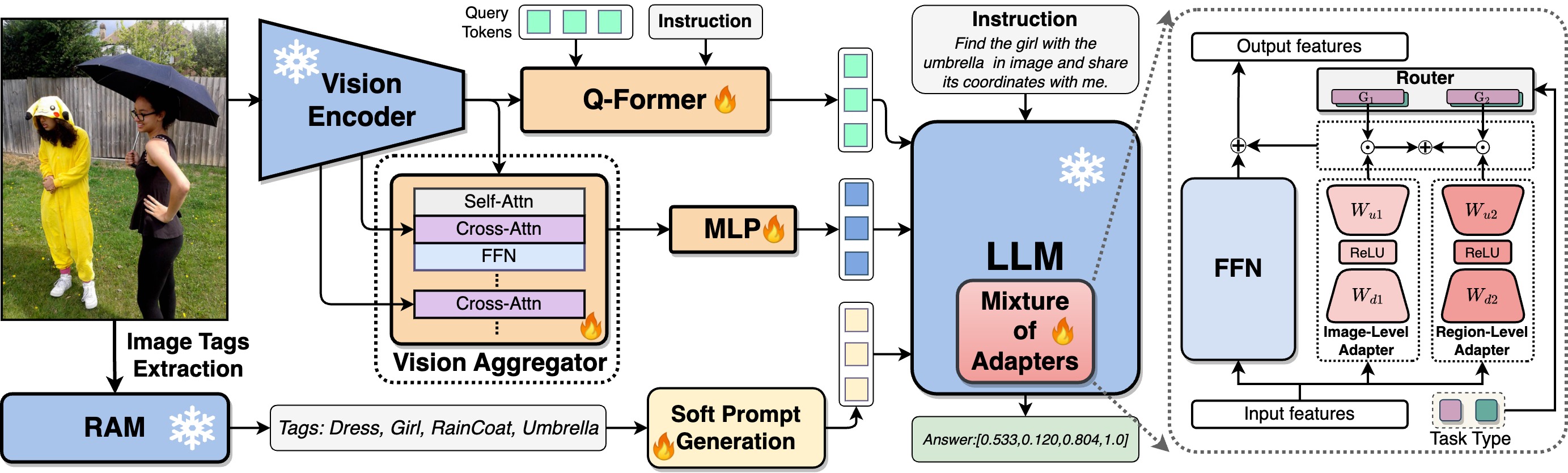

The framework of the proposed LION model:

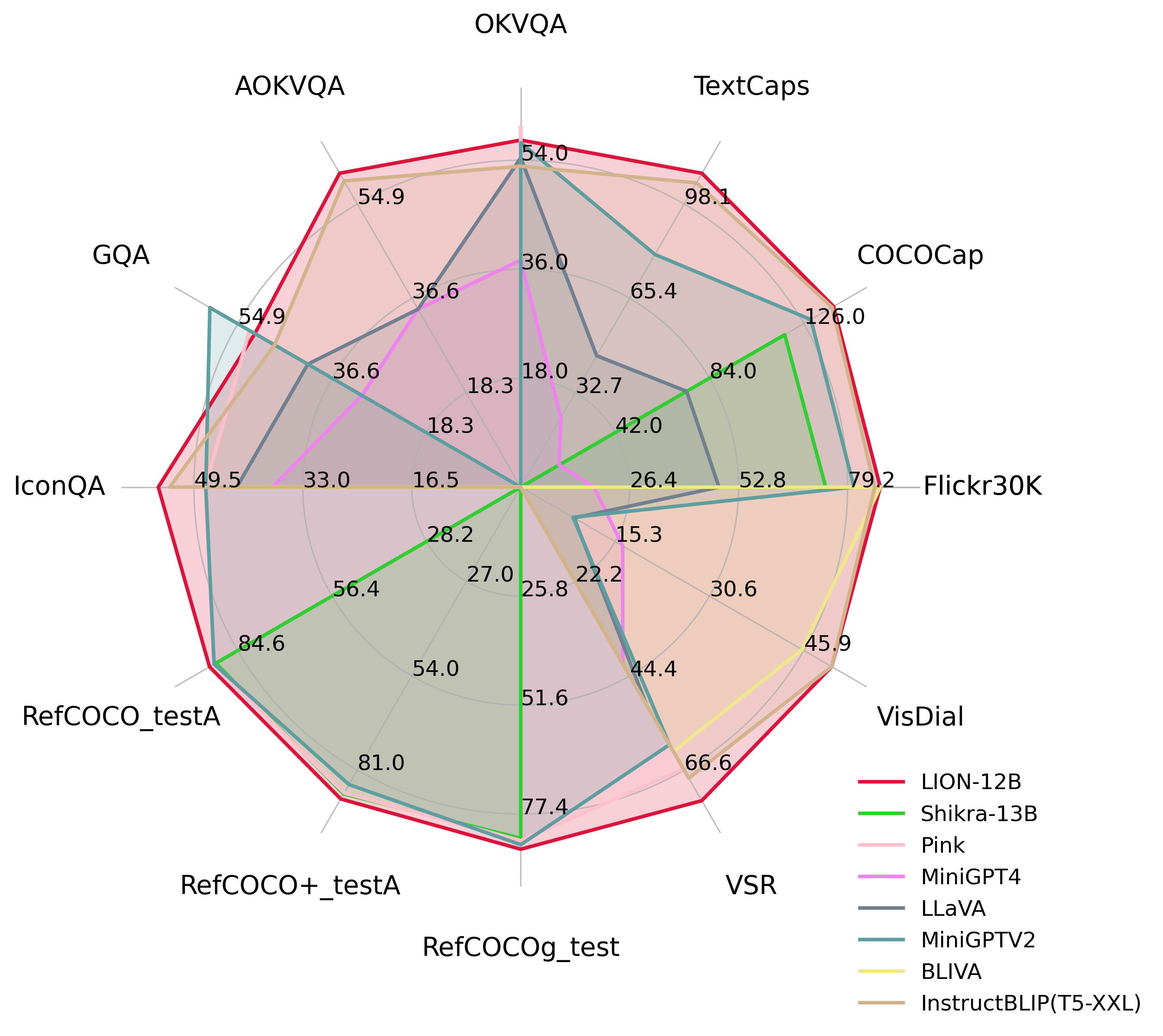

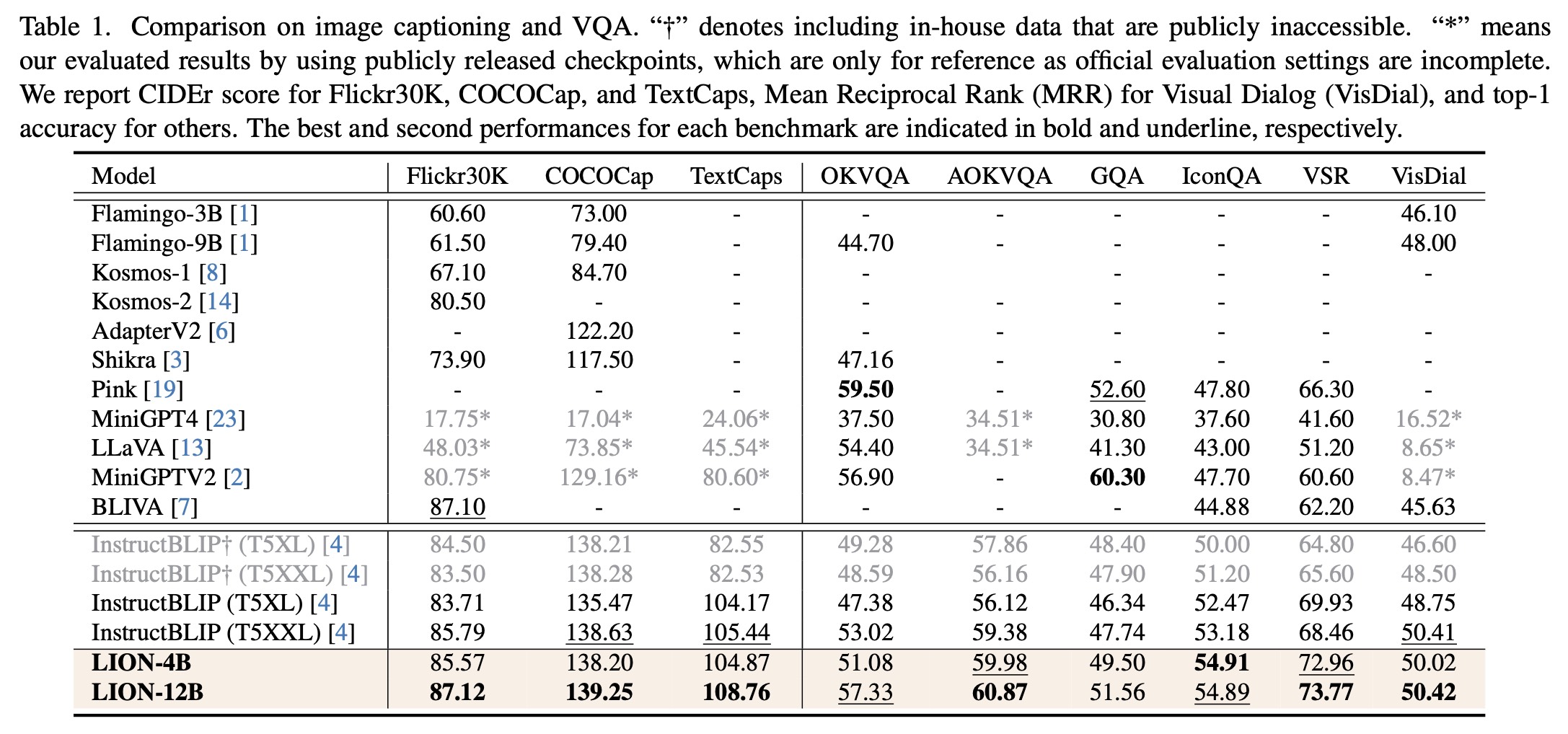

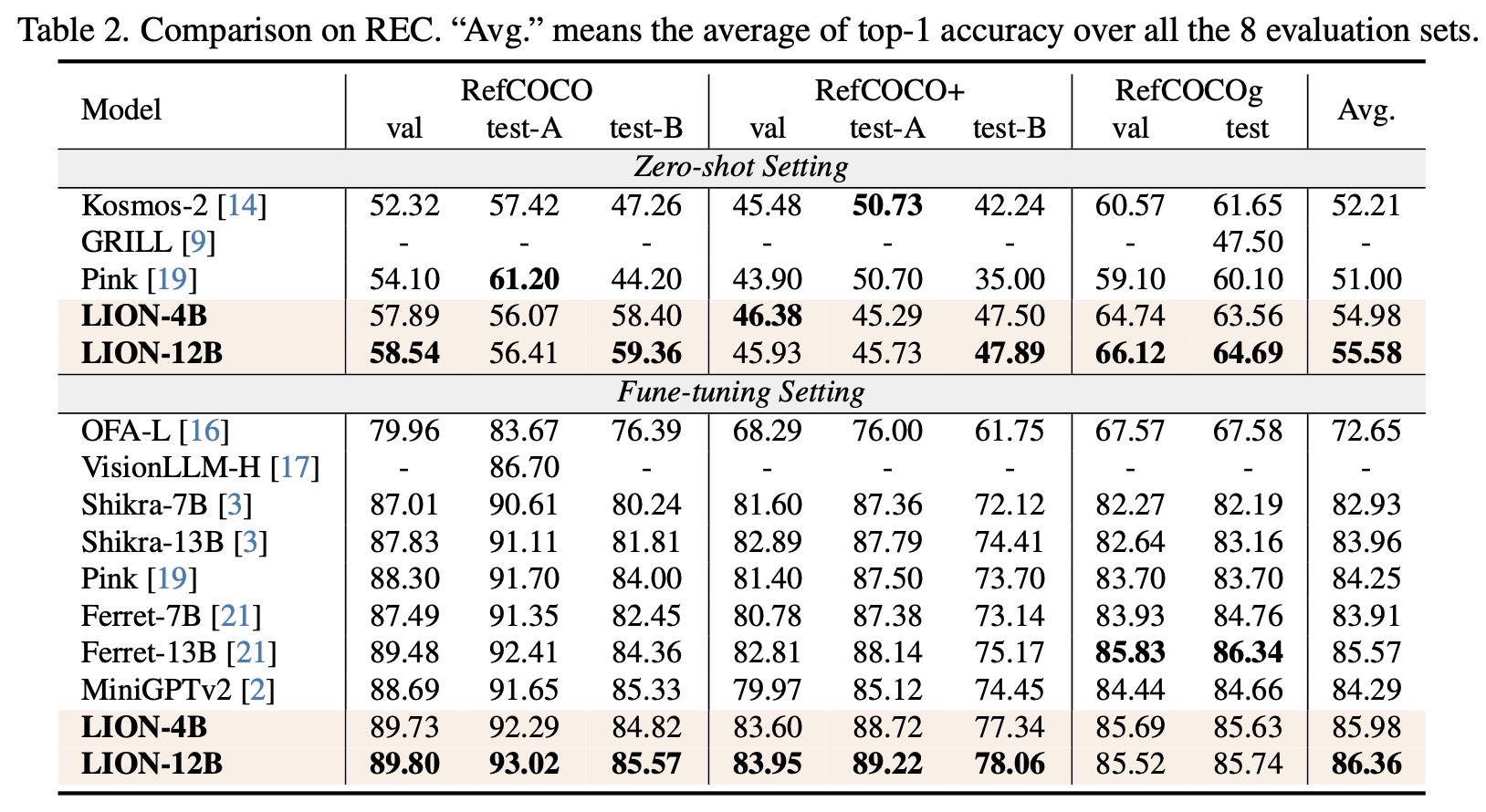

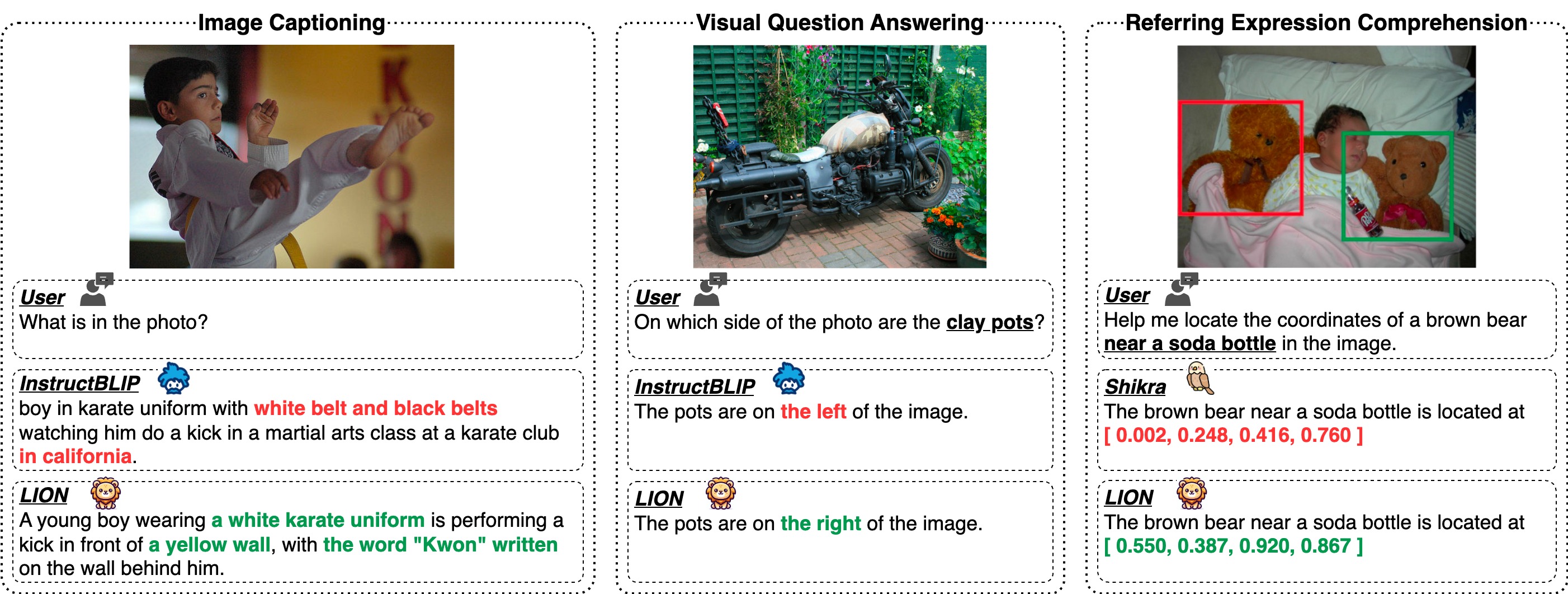

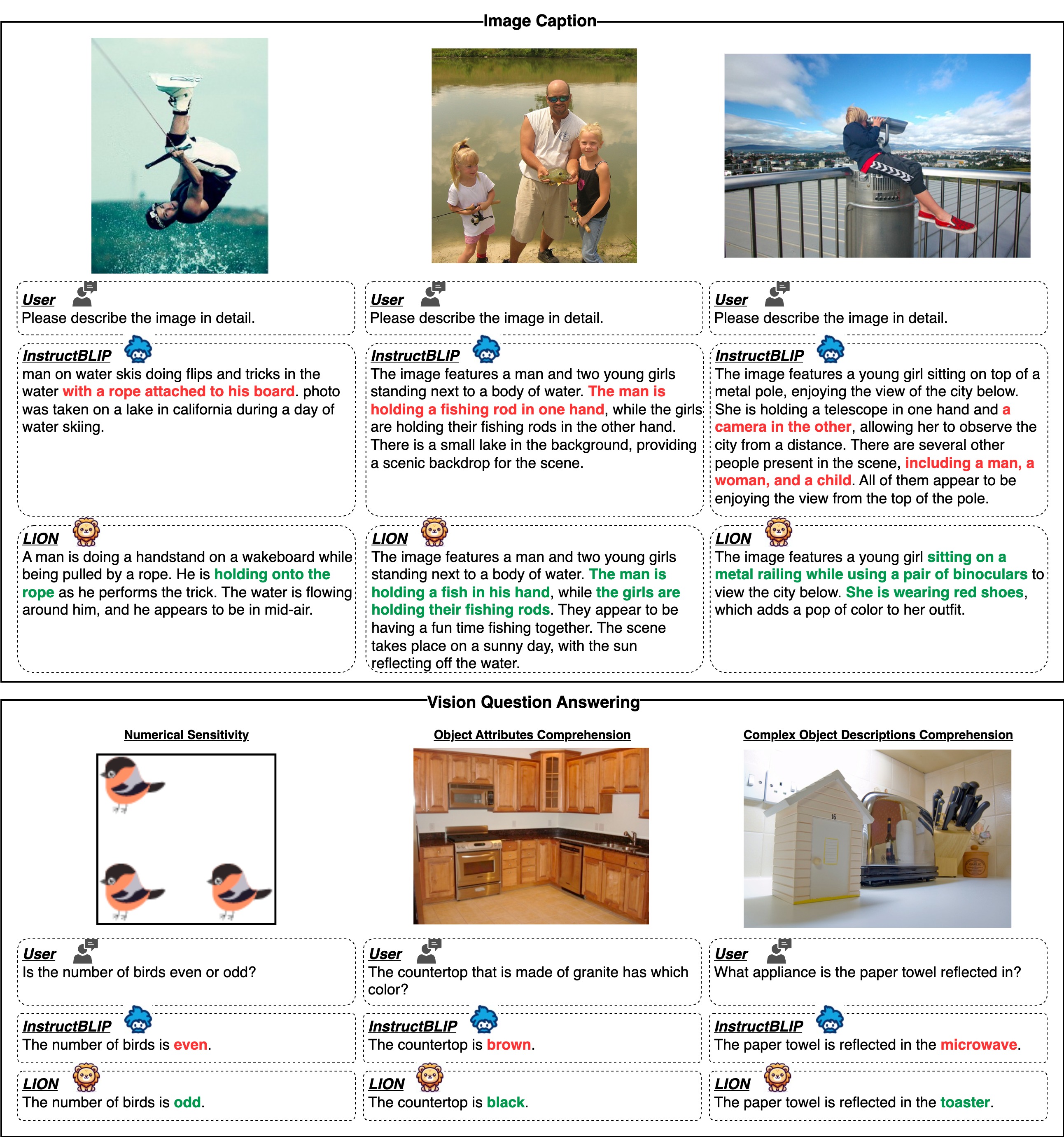

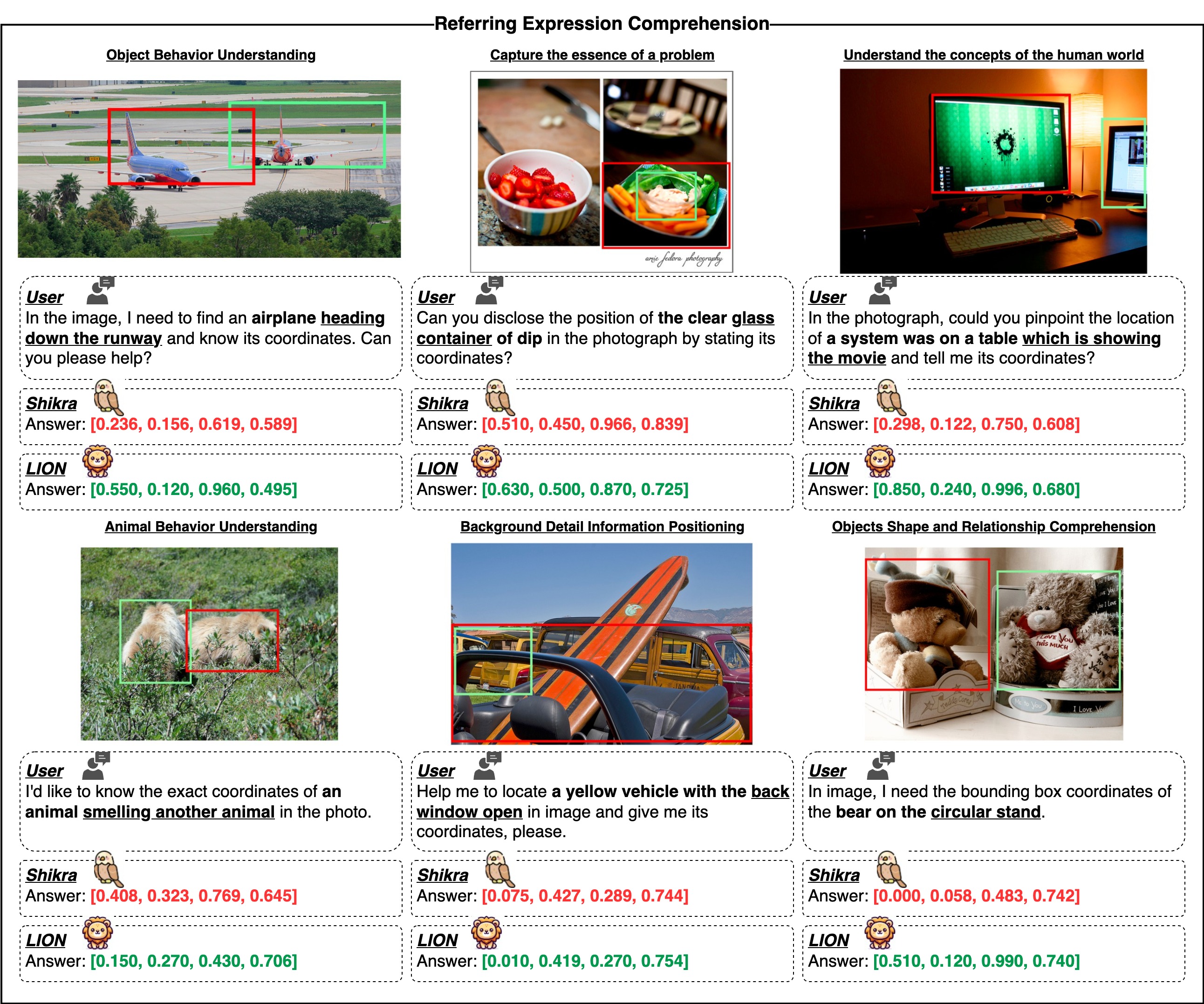

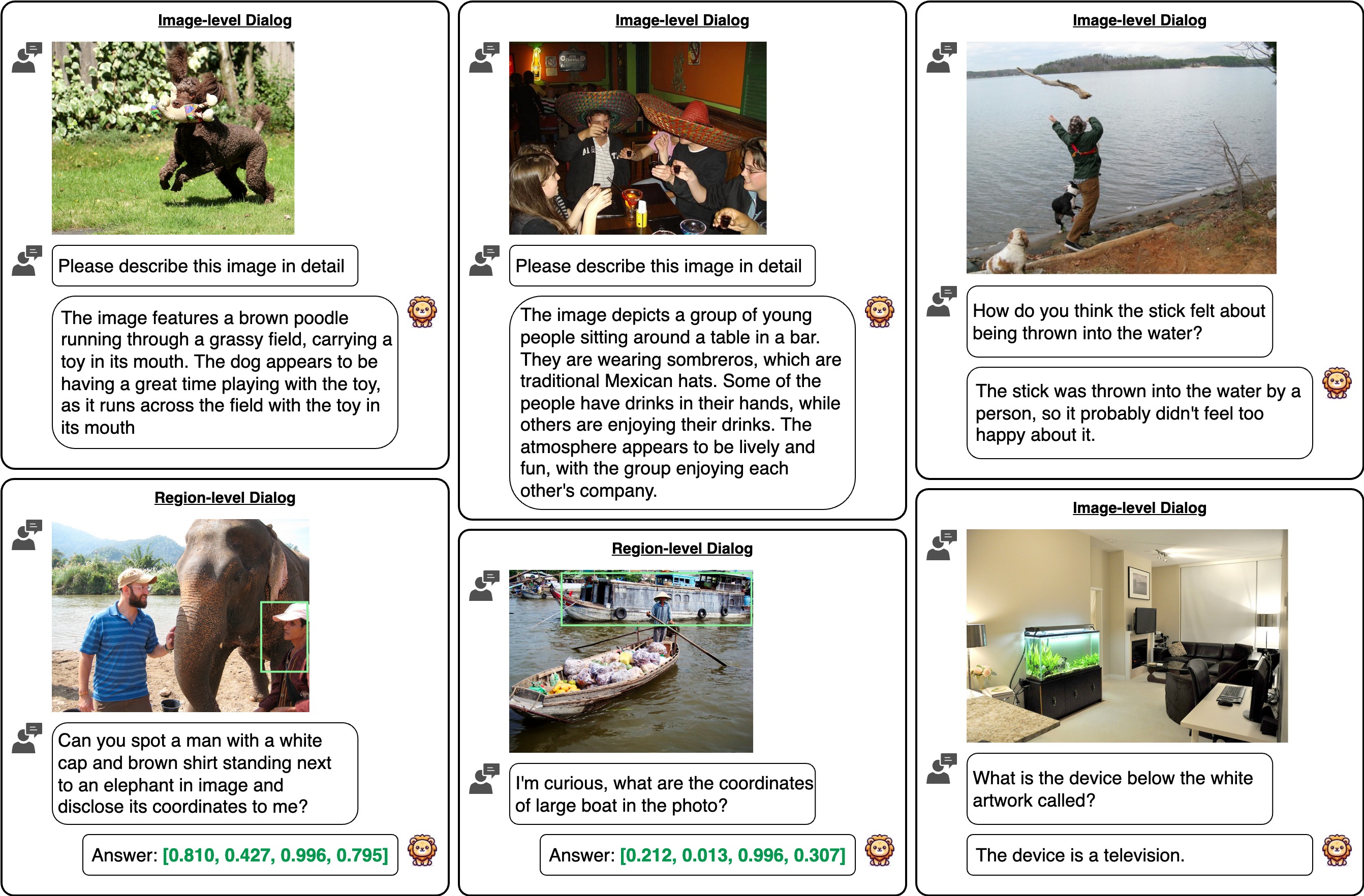

For image-level tasks, we focus on image captioning and Visual Question Answering (VQA). For region-level tasks, we evaluate LION on three referring expression comprehension (REC) datasets: RefCOCO, RefCOCO+, and RefCOCOg. The results, detailed in Tables 1 and 2, show that LION outperforms the baseline models.
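For readers unfamiliar with how REC results are usually scored, here is a minimal sketch of the common convention: a predicted box counts as correct when its intersection-over-union (IoU) with the ground-truth box reaches 0.5. This is an illustrative assumption about the metric, not code from this repository. The function names `box_iou` and `rec_accuracy`, the `(x1, y1, x2, y2)` box format, and the exact threshold comparison are all hypothetical choices.

```python
from typing import Sequence, Tuple

# Assumed box format: (x1, y1, x2, y2) with x1 <= x2 and y1 <= y2.
Box = Tuple[float, float, float, float]


def box_iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    # Corners of the overlapping rectangle (may be empty).
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)

    area_a = max(0.0, a[2] - a[0]) * max(0.0, a[3] - a[1])
    area_b = max(0.0, b[2] - b[0]) * max(0.0, b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def rec_accuracy(
    preds: Sequence[Box], gts: Sequence[Box], threshold: float = 0.5
) -> float:
    """Fraction of predictions whose IoU with the ground truth is >= threshold."""
    if len(preds) != len(gts):
        raise ValueError("preds and gts must have the same length")
    if not preds:
        return 0.0
    hits = sum(box_iou(p, g) >= threshold for p, g in zip(preds, gts))
    return hits / len(preds)


if __name__ == "__main__":
    predictions = [(0, 0, 2, 2), (0, 0, 2, 2)]
    ground_truth = [(0, 0, 2, 2), (1, 1, 3, 3)]
    print(rec_accuracy(predictions, ground_truth))
```

In the usage example, the first prediction matches its target exactly and the second overlaps its target only slightly, so one of the two predictions is counted as correct.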

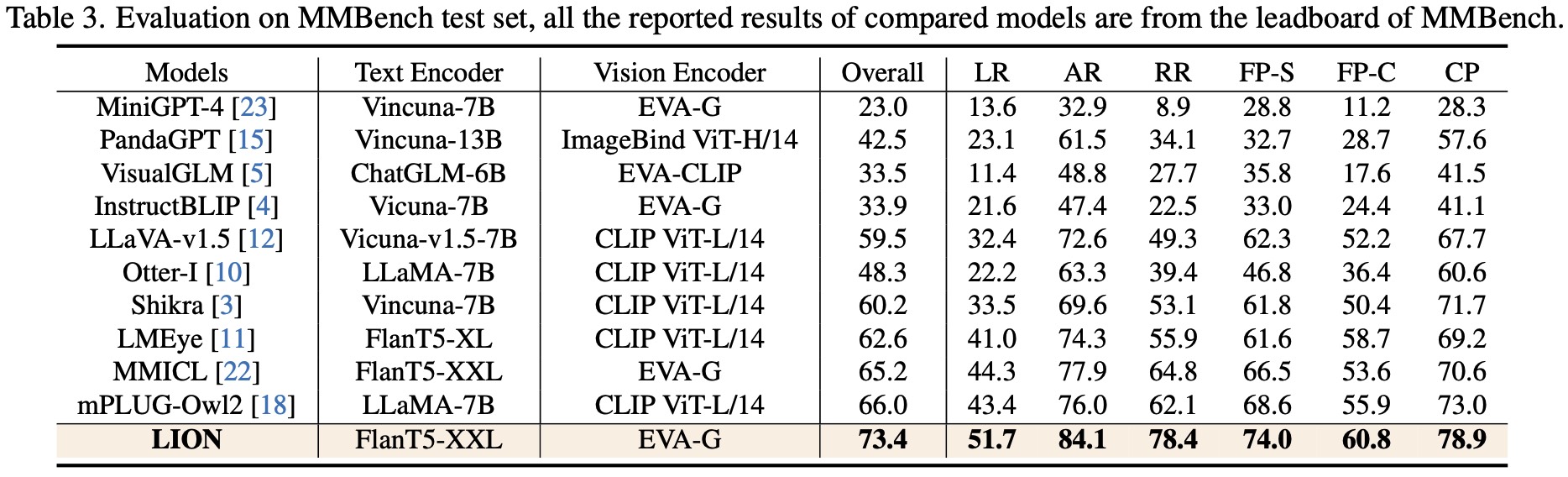

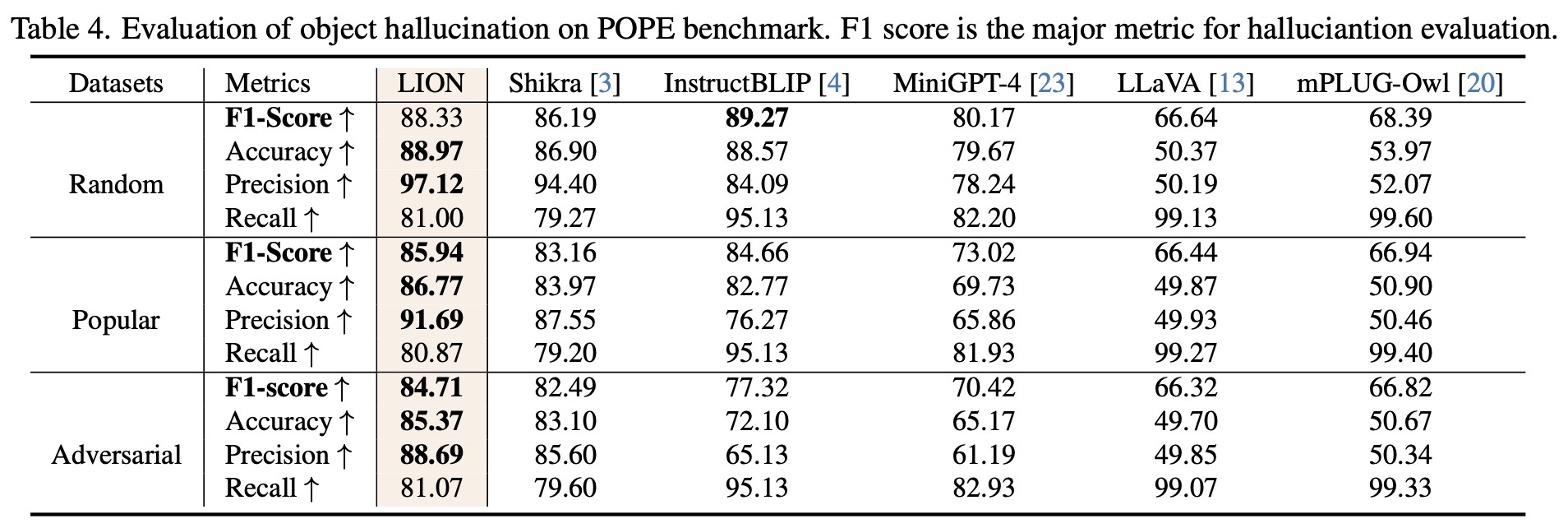

We further evaluate LION on an object hallucination benchmark (POPE) and a popular MLLM benchmark (MMBench). The results in Tables 1 and 2 show that LION performs strongly across various skills and is resistant to hallucinations, particularly in the popular and adversarial settings of POPE.
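POPE frames hallucination evaluation as yes/no questions about whether an object is present in an image, so its scores reduce to binary classification metrics. The sketch below shows how such metrics can be computed from model answers. It is not taken from this repository: the function name `pope_metrics` is hypothetical, and it assumes answers are already normalized to the strings "yes" and "no".

```python
from typing import Dict, Sequence


def pope_metrics(preds: Sequence[str], labels: Sequence[str]) -> Dict[str, float]:
    """Binary metrics for yes/no answers, treating "yes" as the positive class."""
    if len(preds) != len(labels):
        raise ValueError("preds and labels must have the same length")
    if not preds:
        raise ValueError("at least one answer is required")

    tp = sum(p == "yes" and l == "yes" for p, l in zip(preds, labels))
    fp = sum(p == "yes" and l == "no" for p, l in zip(preds, labels))
    fn = sum(p == "no" and l == "yes" for p, l in zip(preds, labels))
    tn = sum(p == "no" and l == "no" for p, l in zip(preds, labels))

    total = len(preds)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (
        2 * precision * recall / (precision + recall)
        if (precision + recall)
        else 0.0
    )
    return {
        "accuracy": (tp + tn) / total,
        "precision": precision,
        "recall": recall,
        "f1": f1,
        # A high yes ratio suggests the model tends to affirm objects
        # regardless of the image, which is a sign of hallucination.
        "yes_ratio": sum(p == "yes" for p in preds) / total,
    }


if __name__ == "__main__":
    answers = ["yes", "yes", "no", "no"]
    truth = ["yes", "no", "yes", "no"]
    print(pope_metrics(answers, truth))
```

Treating "yes" as the positive class means a false positive corresponds to the model affirming an object that is not in the image, which is the hallucination case the benchmark targets.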

If you find this work useful for your research, please kindly cite our paper:

    @inproceedings{chen2024lion,
      title={LION: Empowering Multimodal Large Language Model with Dual-Level Visual Knowledge},
      author={Chen, Gongwei and Shen, Leyang and Shao, Rui and Deng, Xiang and Nie, Liqiang},
      booktitle={IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},
      year={2024}
    }