The lightweight PyTorch wrapper for ML researchers. Scale your models. Write less boilerplate.

PyTorch Lightning template for the MNIST classification problem


- Add learning rate scheduler and logger

- Add custom callback class

- Add early stopping callback

- Add Model Checkpoint

- Add TensorBoard for metric visualisation

- Show how to train, validate, test, and run inference with the model

Lightning is a way to organize your PyTorch code to decouple the science code from the engineering. It's more of a PyTorch style guide than a framework.

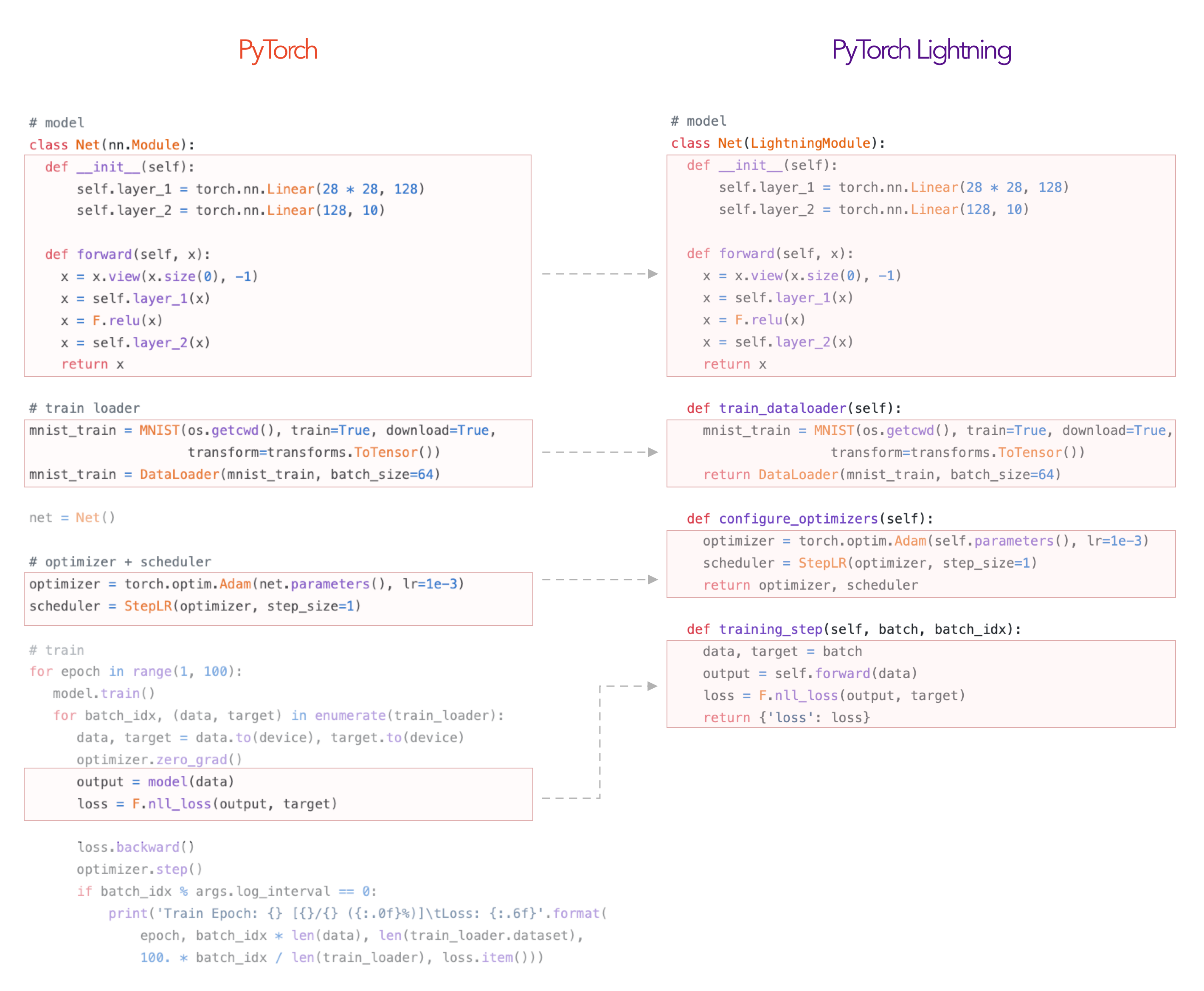

In Lightning, you organize your code into 3 distinct categories:

- Research code (goes in the LightningModule).

- Engineering code (you delete it, and the Trainer handles it).

- Non-essential research code (logging, etc.; this goes in Callbacks).

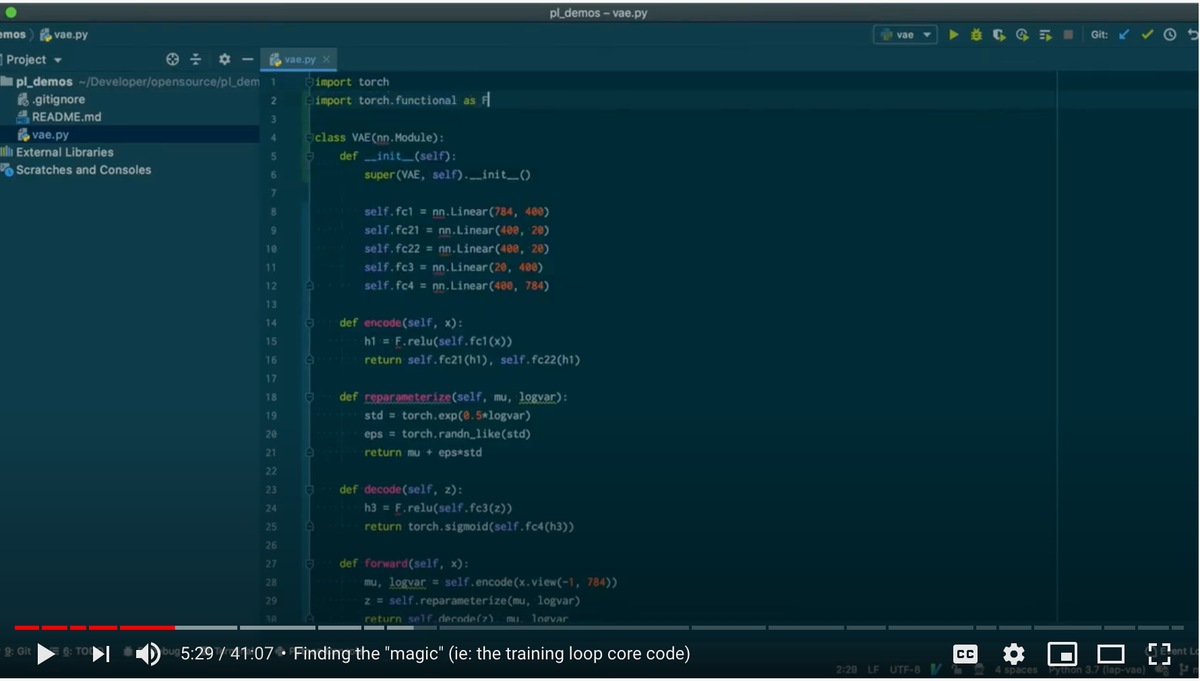

Here's an example of how to refactor your research code into a LightningModule.

The rest of the code is automated by the Trainer!

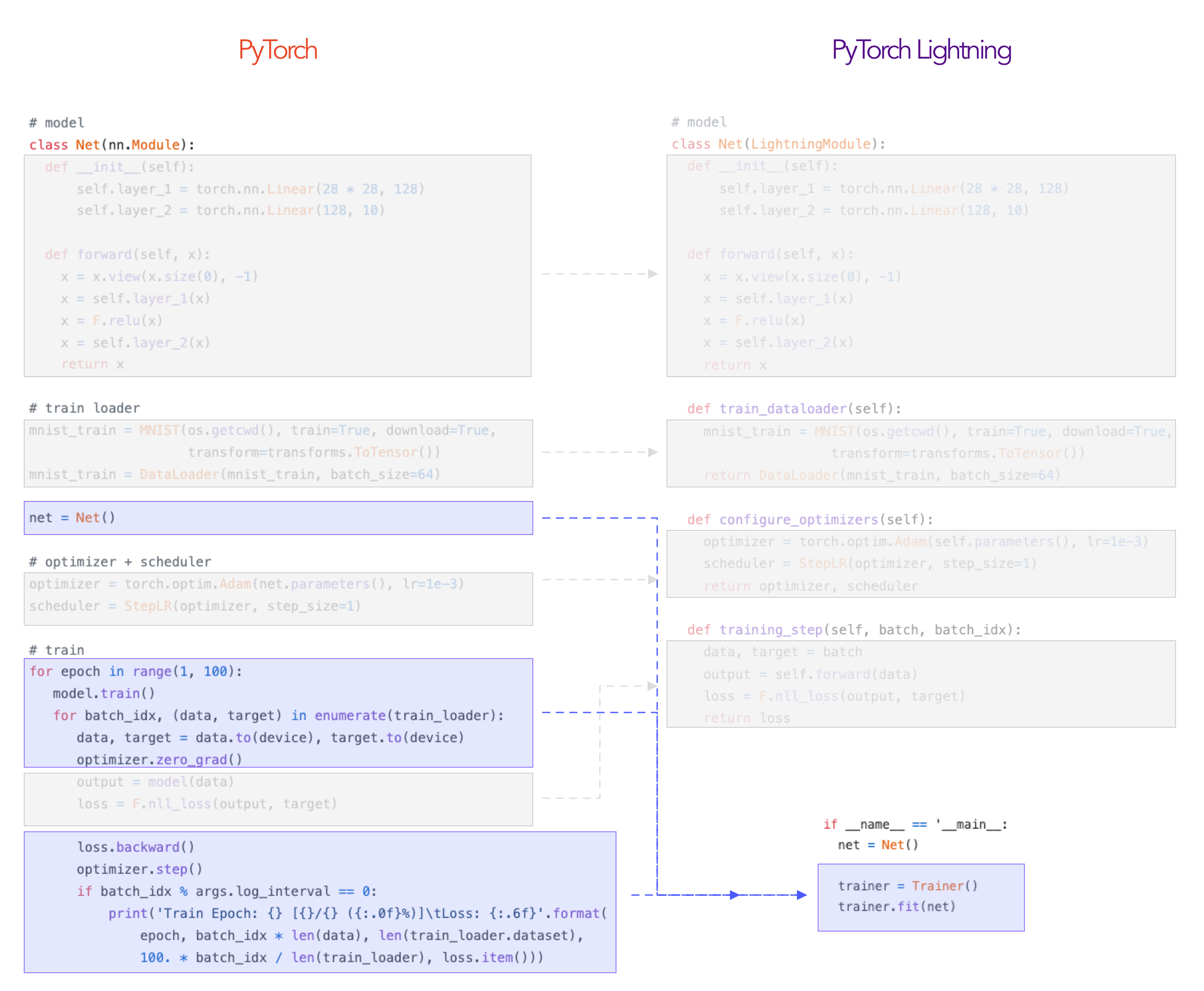

Everything in blue is engineering that Lightning handles for you. This is how Lightning separates the science (red) from the engineering (blue).

Although your research or production project might start simple, once you add things like GPU and TPU training, 16-bit precision, etc., you end up spending more time engineering than researching. Lightning automates and rigorously tests those parts for you.

Install: `pip install pytorch-lightning`

Notebook: `pl_mnist.ipynb`

👤 Ruben Stefanus

- Website: Ruben Stefanus

- Twitter: @mindbelowink

- GitHub: @rubentea16

- LinkedIn: @rubenstefanus

Give a ⭐️ if this project helped you!