Official code repository for the papers:

- Tree Detection and Diameter Estimation Based on Deep Learning, published in Forestry: An International Journal of Forest Research. Preprint version (soon).

- Training Deep Learning Algorithms on Synthetic Forest Images for Tree Detection, presented at the ICRA 2022 IFRRIA Workshop. The video presentation is available.

All our datasets are made available to increase the adoption of deep learning for many precision forestry problems.

| Dataset name | Description | Download |
|---|---|---|
| SynthTree43k | A dataset containing 43 000 synthetic images and over 190 000 annotated trees. Includes images, train, test, and validation splits. | OneDrive |
| CanaTree100 | A dataset containing 100 real images and over 920 annotated trees collected in Canadian forests. Includes images, train, test, and validation splits for all five folds. | OneDrive |

Pre-trained model weights are compatible with Detectron2 config files. All models are trained on our synthetic dataset, SynthTree43k. We provide a demo file to try them out.

| Backbone | Modality | box AP50 | mask AP50 | Download |
|---|---|---|---|---|
| R-50-FPN | RGB | 87.74 | 69.36 | model |
| R-101-FPN | RGB | 88.51 | 70.53 | model |
| X-101-FPN | RGB | 88.91 | 71.07 | model |
| R-50-FPN | Depth | 89.67 | 70.66 | model |
| R-101-FPN | Depth | 89.89 | 71.65 | model |
| X-101-FPN | Depth | 87.41 | 68.19 | model |

Once you have a working Detectron2 and OpenCV installation, running the demo is easy.

To run the demo on a single image:

- Download the pre-trained model weights and save them in the `/output` folder of your local PercepTreeV1 repository.
- Open `demo_single_frame.py`, uncomment the model config corresponding to the pre-trained model weights you downloaded, and comment out the others. The default is X-101. Set `model_name` to the name of your downloaded model, e.g. `'X-101_RGB_60k.pth'`.
- In `demo_single_frame.py`, specify the path to the image you want to try by setting the `image_path` variable.
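
Before launching the single-image demo, it can help to confirm that the weight file and the test image are where the script expects them. The following is a minimal sketch using only the Python standard library. The helper name `check_demo_inputs` and its `repo_root` argument are hypothetical and not part of the repository, and the sketch assumes the `output` folder sits directly under the repository root, as the steps above describe.

```python
from pathlib import Path


def check_demo_inputs(repo_root, model_name, image_path):
    """Return (weights_path, image_path) as Path objects.

    Hypothetical helper, not part of PercepTreeV1. It assumes the weights
    were saved as <repo_root>/output/<model_name> and raises
    FileNotFoundError if either file is missing.
    """
    weights = Path(repo_root) / "output" / model_name
    image = Path(image_path)
    if not weights.is_file():
        raise FileNotFoundError(f"Model weights not found: {weights}")
    if not image.is_file():
        raise FileNotFoundError(f"Image not found: {image}")
    return weights, image
```

For example, `check_demo_inputs(".", "X-101_RGB_60k.pth", image_path)` run from the repository root would fail early with a readable message if the download step was skipped, rather than failing later inside the demo script.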

To run the demo on a video:

- Download the pre-trained model weights and save them in the `/output` folder of your local PercepTreeV1 repository.
- Open `demo_video.py`, uncomment the model config corresponding to the pre-trained model weights you downloaded, and comment out the others. The default is X-101.
- In `demo_video.py`, specify the path to the video you want to try by setting the `video_path` variable.
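
Conceptually, a video demo amounts to running a predictor on each frame. The sketch below illustrates such a loop under stated assumptions: `iter_frames` and `predict_on_frames` are hypothetical names, not functions from `demo_video.py`, and `predict` stands for any callable that accepts a frame, such as a predictor built the way the demo script builds one. OpenCV is imported lazily so the loop logic itself has no dependencies.

```python
def iter_frames(video_path):
    """Yield frames from a video file using OpenCV (hypothetical helper)."""
    import cv2  # imported lazily; requires a working OpenCV installation

    capture = cv2.VideoCapture(str(video_path))
    if not capture.isOpened():
        raise IOError(f"Could not open video: {video_path}")
    try:
        while True:
            ok, frame = capture.read()
            if not ok:  # end of stream or read error
                break
            yield frame
    finally:
        capture.release()


def predict_on_frames(frames, predict, every_nth=1):
    """Yield (frame_index, prediction) for every `every_nth` frame.

    `frames` is any iterable of frames and `predict` is any callable
    taking one frame; both are assumptions of this sketch.
    """
    if every_nth < 1:
        raise ValueError("every_nth must be >= 1")
    for index, frame in enumerate(frames):
        if index % every_nth == 0:
            yield index, predict(frame)
```

A call such as `predict_on_frames(iter_frames(video_path), predictor, every_nth=5)` would run the predictor on every fifth frame, which can be a convenient way to preview results on long videos without a GPU.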

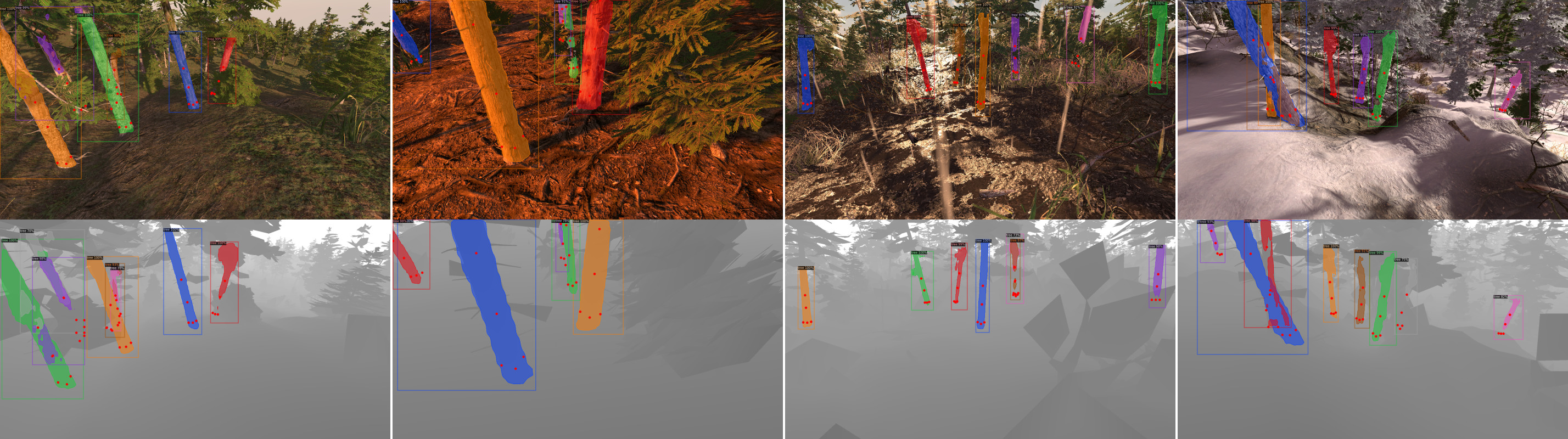

The gif below shows how well the models trained on SynthTree43k transfer to the real world, without any fine-tuning on real-world images.