By Rui Liu, Hanming Deng, Yangyi Huang, Xiaoyu Shi, Lewei Lu, Wenxiu Sun, Xiaogang Wang, Jifeng Dai, Hongsheng Li.

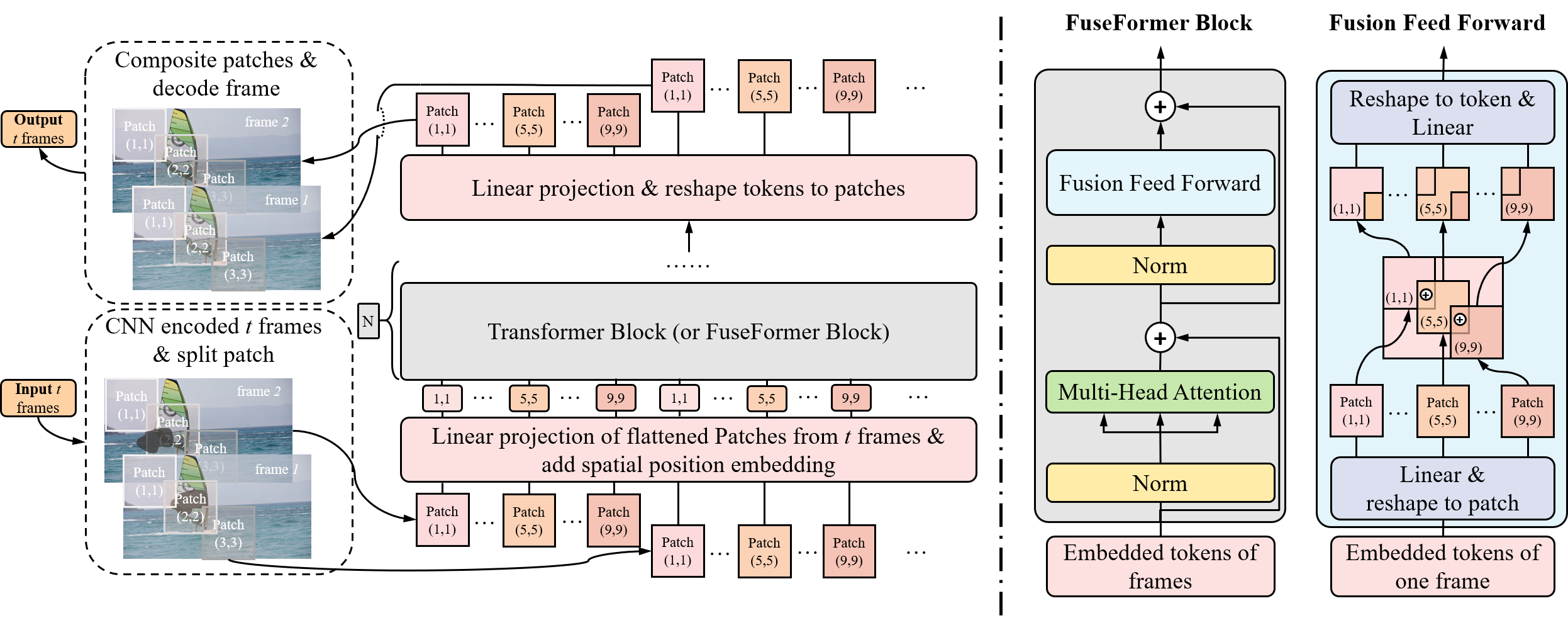

This repo is the official PyTorch implementation of FuseFormer: Fusing Fine-Grained Information in Transformers for Video Inpainting.

- Python >= 3.6

- PyTorch >= 1.0 and the corresponding torchvision (https://pytorch.org/)

- Clone this repo:

git clone https://github.com/ruiliu-ai/FuseFormer.git

- Install other packages:

cd FuseFormer

pip install -r requirements.txt

Download datasets (YouTube-VOS and DAVIS) into the data folder.

mkdir data

Note: We use the 2019 version of the YouTube Video Object Segmentation (YouTube-VOS) dataset.

python train.py -c configs/youtube-vos.json

Download the pre-trained model into the checkpoints folder.

mkdir checkpoints

python test.py -c checkpoints/fuseformer.pth -v data/DAVIS/JPEGImages/blackswan -m data/DAVIS/Annotations/blackswan
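Before running the command above, it can help to check that the frame folder passed to `-v` and the mask folder passed to `-m` line up. The following is a minimal sketch, not part of this repo: the helper names `list_images` and `pair_frames_and_masks` are hypothetical, and it assumes one mask per frame with files matched by sorted filename, which may differ from what `test.py` actually requires.

```python
from pathlib import Path

# Assumed file extensions for frames and masks (not confirmed by the repo).
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def list_images(folder):
    """Return the image files in `folder`, sorted by name."""
    return sorted(
        p for p in Path(folder).iterdir() if p.suffix.lower() in IMAGE_SUFFIXES
    )


def pair_frames_and_masks(video_dir, mask_dir):
    """Pair frames with masks by sorted order; raise if the counts differ."""
    frames = list_images(video_dir)
    masks = list_images(mask_dir)
    if not frames:
        raise ValueError(f"no frames found in {video_dir}")
    if len(frames) != len(masks):
        raise ValueError(f"{len(frames)} frames but {len(masks)} masks")
    return list(zip(frames, masks))
```

For example, `pair_frames_and_masks("data/DAVIS/JPEGImages/blackswan", "data/DAVIS/Annotations/blackswan")` would return the frame/mask pairs or raise a `ValueError` describing the mismatch.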

You can follow the free-form mask generation scheme to synthesize random masks.

Alternatively, download our prepared stationary masks and unzip them into the data folder.
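To illustrate the general idea of free-form masks, here is a minimal sketch that draws a few random brush strokes on a 432x240 grid (the resolution used by the prepared masks). It is an assumption-laden illustration only: the function `free_form_mask`, its parameters, and the square-brush random walk are hypothetical and are not the scheme referenced above or the procedure used to build the prepared masks.

```python
import math
import random


def free_form_mask(width=432, height=240, num_strokes=4, max_steps=12,
                   brush=10, seed=None):
    """Return a height x width grid of 0/1 values; 1 marks pixels to inpaint."""
    rng = random.Random(seed)
    mask = [[0] * width for _ in range(height)]

    def stamp(cx, cy):
        # Paint a filled square brush centred on (cx, cy), clipped to the frame.
        for y in range(max(0, cy - brush), min(height, cy + brush + 1)):
            for x in range(max(0, cx - brush), min(width, cx + brush + 1)):
                mask[y][x] = 1

    for _ in range(num_strokes):
        # Each stroke starts at a random pixel with a random heading.
        x, y = rng.randrange(width), rng.randrange(height)
        angle = rng.uniform(0, 2 * math.pi)
        for _ in range(rng.randint(1, max_steps)):
            stamp(x, y)
            # Turn slightly, then step forward by at most the brush width
            # so consecutive stamps stay connected; clamp to the frame.
            angle += rng.uniform(-0.8, 0.8)
            step = rng.randint(1, 2 * brush)
            x = min(width - 1, max(0, int(x + step * math.cos(angle))))
            y = min(height - 1, max(0, int(y + step * math.sin(angle))))
    return mask
```

Passing a fixed `seed` makes the mask reproducible, which is convenient when the same mask should be reused across runs.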

mv random_mask_stationary_w432_h240 data/

mv random_mask_stationary_youtube_w432_h240 data/

Then download the pre-trained I3D model used for evaluating VFID.

mv i3d_rgb_imagenet.pt checkpoints/

python evaluate.py --model fuseformer --ckpt checkpoints/fuseformer.pth --dataset davis --width 432 --height 240

python evaluate.py --model fuseformer --ckpt checkpoints/fuseformer.pth --dataset youtubevos --width 432 --height 240
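If you want to run both evaluations in one go, the two commands above can be assembled programmatically. This is a small sketch that only reuses the flags shown above; the helper `build_eval_command` is hypothetical and not part of this repo.

```python
def build_eval_command(dataset, ckpt="checkpoints/fuseformer.pth",
                       width=432, height=240):
    """Build the evaluate.py argument list for one dataset."""
    return [
        "python", "evaluate.py",
        "--model", "fuseformer",
        "--ckpt", ckpt,
        "--dataset", dataset,
        "--width", str(width),
        "--height", str(height),
    ]


# Dataset names are the ones used in the commands above.
commands = [build_eval_command(name) for name in ("davis", "youtubevos")]
for cmd in commands:
    # Print each command; to execute it from the repository root instead,
    # pass the list to subprocess.run(cmd, check=True).
    print(" ".join(cmd))
```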

For evaluating warping error, please refer to https://github.com/phoenix104104/fast_blind_video_consistency

If you find FuseFormer useful in your research, please consider citing:

@InProceedings{Liu_2021_FuseFormer,
  title = {FuseFormer: Fusing Fine-Grained Information in Transformers for Video Inpainting},
  author = {Liu, Rui and Deng, Hanming and Huang, Yangyi and Shi, Xiaoyu and Lu, Lewei and Sun, Wenxiu and Wang, Xiaogang and Dai, Jifeng and Li, Hongsheng},
  booktitle = {International Conference on Computer Vision (ICCV)},
  year = {2021}
}

This code borrows heavily from the video inpainting framework Spatial-Temporal Transformer Network (STTN).