Open source, stable-diffusion production server to show how to deploy diffusion models in a real production environment with: load-balancing, gpu-inference, performance-testing, micro-services orchestration and more. All handled easily with the Lightning Apps framework.

Full tutorial on how to build this app.

Muse uses the opensource Stable Diffusion model made available by stability AI. We apply a few fancy tricks to make the inference super fast.

Here's a small snippet showing our model server

@torch.inference_mode()

def predict(self, dreams: List[Data], entry_time: int):

# handle timeout

if time.time() - entry_time > INFERENCE_REQUEST_TIMEOUT:

raise TimeoutException()

# sets up the inference settings

height = width = IMAGE_SIZE

num_inference_steps = 50 if dreams[0].high_quality else 25

prompts = [dream.prompt for dream in dreams]

# GPU inference

if torch.cuda.is_available():

with autocast("cuda"):

torch.cuda.empty_cache()

pil_results = self._model(

prompts,

height=height,

width=width,

num_inference_steps=num_inference_steps,

)

# apply filter

nsfw_content = self._safety_checker(pil_results)

for i, nsfw in enumerate(nsfw_content):

if nsfw:

pil_results[i] = Image.open("assets/nsfw-warning.png")

else:

time.sleep(3)

pil_results = [Image.fromarray(np.random.randint(0, 255, (height, width, 3), dtype="uint8"))] * len(prompts)

# return the model

results = []

for image in pil_results:

buffered = BytesIO()

image.save(buffered, format="PNG")

img_str = base64.b64encode(buffered.getvalue()).decode("utf-8")

# make sure pil_results is a single item array or it'll rewrite image

results.append({"image": f"data:image/png;base64,{img_str}"})

return resultsTo run this app locally, follow these steps:

conda create --name muse_app python=3.9 --yes

conda activate muse_app

git clone https://github.com/Lightning-AI/stable-diffusion-deploy.git

cd stable-diffusion-deploy

bash dev_install.sh

## To run the app locally

python -m lightning run app app.py

## To run the app on the cloud to share it with your peers and users

python -m lightning run app app.py --cloudYou can configure Muse to select customize number of workers, batch size or select the stable diffusion version of your choice. Learn more.

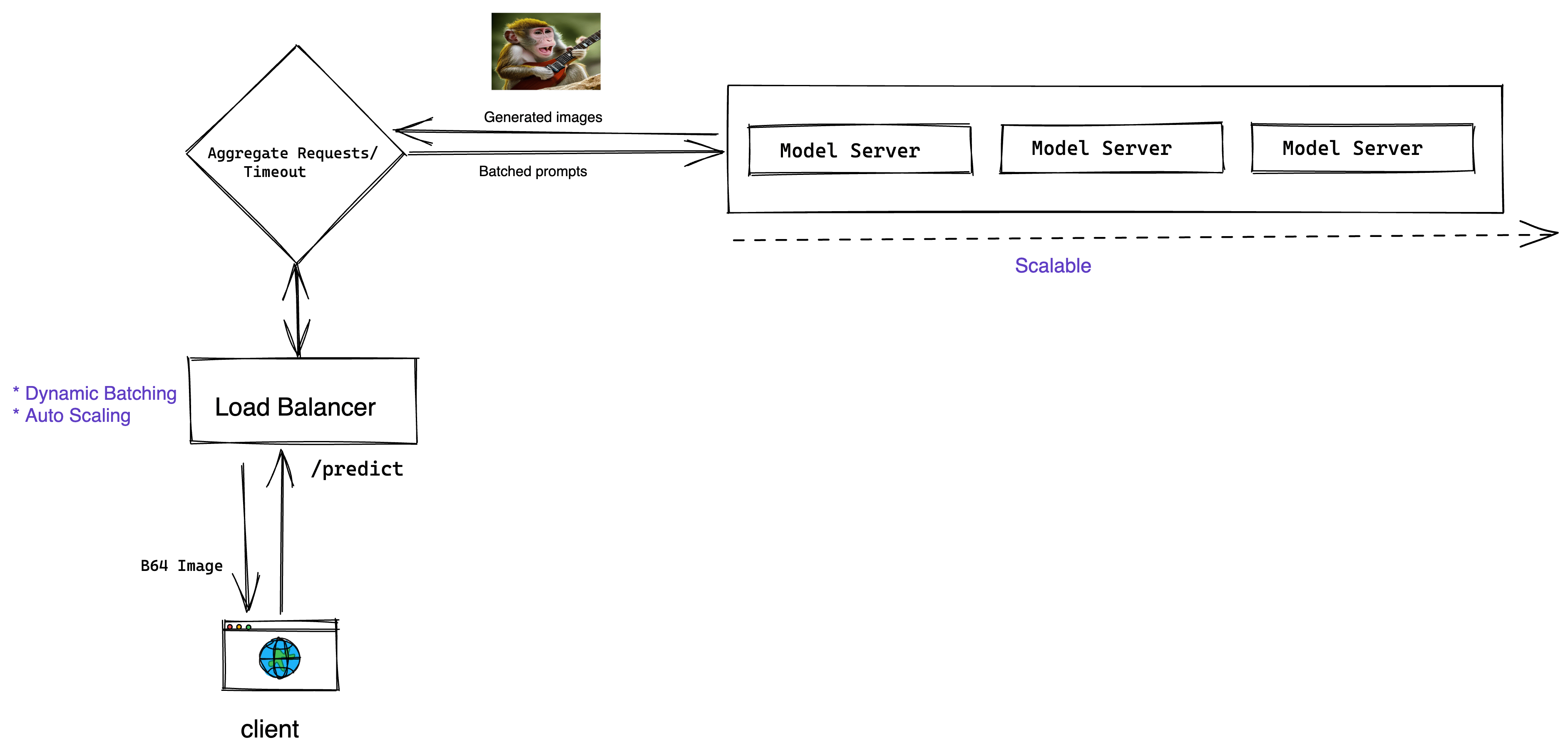

Muse is a blueprint for building diffusion-based production systems with Lightning AI. This app shows you how to:

- Host a multi-tenant Frontend & Backend application architecture

- Full React.js UI

- Micro-services orchestration

- Cloud infrastructure pre-provisioning

- Serves a gpu-powered diffusion model via a REST API

- Dynamic GPU batching for inference requests

- Load balancer that autoscales infrastructure with load-changes

- Load Testing Lightning Component using Locust

- Environment variables to parametrize execution environment

Architecture Diagram of Muse -

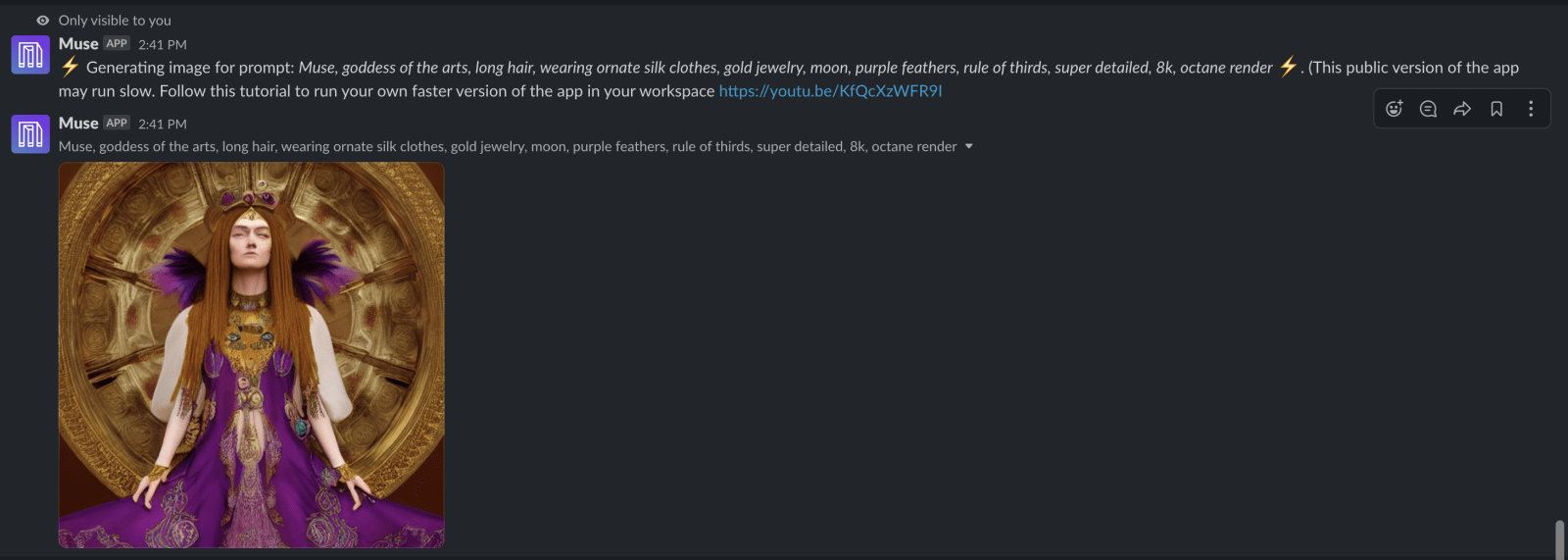

How to integrate Muse to a Slack Workspace

You can integrate this app in your Slack Workspace and send images in Slack channels.

This app uses the Slack Command Bot Component to interact with Slack commands.

You can also check out this video tutorial:

Step 1: Go to https://api.slack.com and create an app.

Step 2: Copy the following tokens and secrets from the Slack API settings by going to https://api.slack.com/apps. These tokens have to be passed either as argument or environment variable to SlackCommandBot class.

Required Token name and environment variables:

- Client ID (SLACK_CLIENT_ID)

- Client Secret (CLIENT_SECRET)

- Signing Secret (SIGNING_SECRET)

- Bot User OAuth Token (BOT_TOKEN)

- App-Level Token (SLACK_TOKEN)

Step 3:

Implement the SlackCommandBot.handle_command(...) method the way you want to interact with the commands.

The return value will be shown only to you.

Step 4: (optional)

If you want your slack app to be distributable to public then you need to

implement SlackCommandBot.save_new_workspace(...) which should save team_id and its corresponding bot_token into a

database.

During the handle_command(...) method you will need to fetch bot_token based on the received team_id.