This repo presents an implementation of InstructGLM, which provides a natural language interface for graph machine learning:

Paper: Natural Language is All a Graph Needs

Paper link: https://arxiv.org/abs/2308.07134

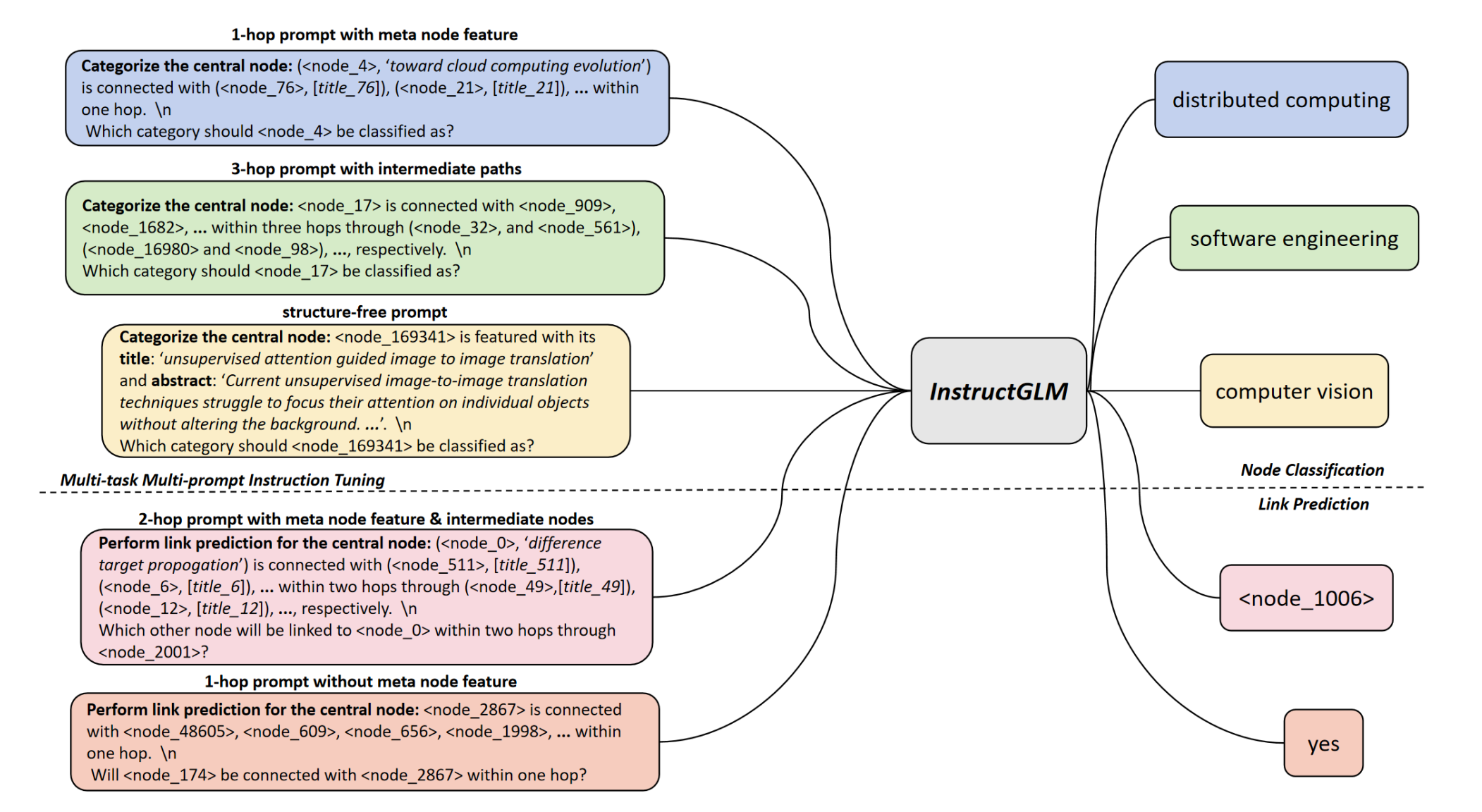

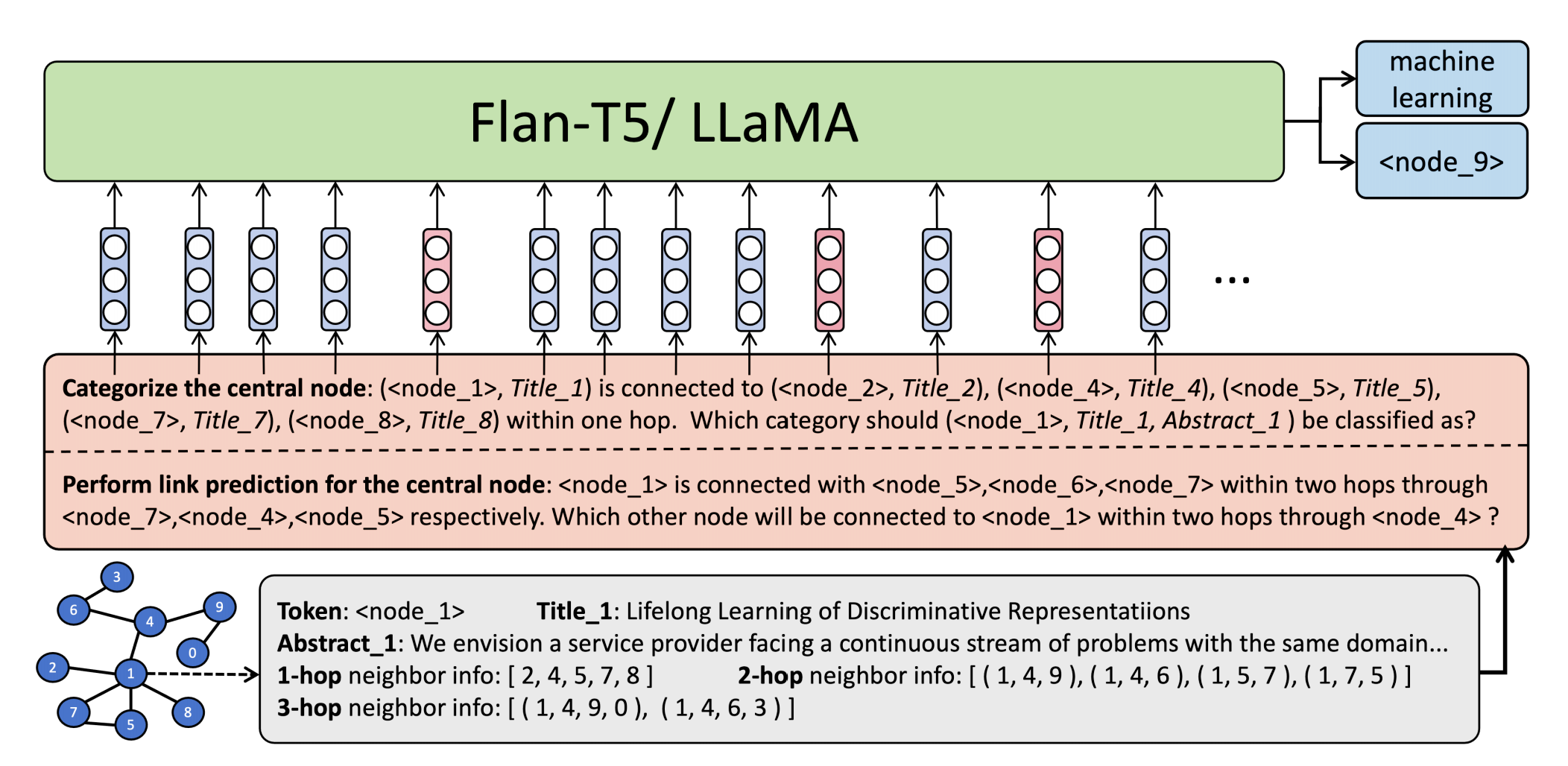

We introduce InstructGLM (Instruction-finetuned Graph Language Model), a framework that uses natural language to describe both graph structure and node features to a generative large language model, and that addresses graph-related problems through instruction tuning. This provides a powerful natural language processing interface for graph machine learning.
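To make the idea concrete, here is a minimal sketch of how a node and its 1-hop neighbors could be turned into a natural language prompt for node classification. It is only an illustration: the function names (`describe_node`, `build_prompt`), the prompt wording, and the toy citation graph are all hypothetical and are not the prompt templates used in this repo or in the paper.

```python
from typing import Dict, List

def describe_node(node: int,
                  titles: Dict[int, str],
                  edges: Dict[int, List[int]],
                  max_neighbors: int = 3) -> str:
    """Describe a node's feature (its title) and its 1-hop neighbors in plain text."""
    neighbors = edges.get(node, [])[:max_neighbors]
    parts = [f'Paper {node} is titled "{titles[node]}".']
    if neighbors:
        listed = "; ".join(f'paper {n} ("{titles[n]}")' for n in neighbors)
        parts.append(f"It is connected to {listed}.")
    else:
        parts.append("It has no connected papers.")
    return " ".join(parts)

def build_prompt(node: int,
                 titles: Dict[int, str],
                 edges: Dict[int, List[int]],
                 labels: List[str]) -> str:
    """Append a classification question to the node description."""
    description = describe_node(node, titles, edges)
    options = ", ".join(labels)
    return (f"{description} Which category does paper {node} belong to? "
            f"Options: {options}.")

# Toy graph (hypothetical data, for illustration only).
titles = {
    0: "A Study of Graph Neural Networks",
    1: "Transformers for Text",
    2: "Image Classification at Scale",
}
edges = {0: [1, 2], 1: [0], 2: [0]}
labels = ["cs.LG", "cs.CL", "cs.CV"]

prompt = build_prompt(0, titles, edges, labels)
print(prompt)
```

A prompt like this, paired with the ground-truth label as the target text, is the kind of input/output pair that instruction tuning would consume. The actual templates in this repo may differ substantially.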

- Clone this repo

git clone https://github.com/agiresearch/InstructGLM.git

- Download the preprocessed data from this Google Drive link and place the Arxiv/ folder in the same directory as the ./scripts folder. If you would like to preprocess your own data, please follow the data_preprocess folder. The related raw data files can be downloaded from this Google Drive link; please place them under ./data_preprocess/Arxiv_preprocess/

- Download the Llama-7b pretrained checkpoint via this Google Drive link, then place the ./7B folder in the same directory as the ./scripts folder.

- Multi-task Multi-prompt Instruction Tuning

bash scripts/train_llama_arxiv.sh 8

Here, 8 is the number of GPUs used for parallel instruction tuning with DDP.

- Validation/Inference

bash scripts/test_llama.sh 8

- Key points are summarized in note.txt

See: Google Drive link.
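Before launching training, it can help to confirm that the downloaded folders ended up where the steps above say they should. The following is a small, hypothetical helper (not part of this repo) that assumes the layout described above: `scripts/`, `Arxiv/`, and `7B/` all sitting side by side in the repository root.

```python
from pathlib import Path
from typing import List, Sequence

# Folder names taken from the setup steps above; the assumption is that
# all three sit side by side in the repository root.
EXPECTED_DIRS = ("scripts", "Arxiv", "7B")

def missing_dirs(repo_root: str,
                 expected: Sequence[str] = EXPECTED_DIRS) -> List[str]:
    """Return the expected folder names that are not present under repo_root."""
    root = Path(repo_root)
    return [name for name in expected if not (root / name).is_dir()]

# Example: check the current working directory.
missing = missing_dirs(".")
if missing:
    print("Missing folders:", ", ".join(missing))
else:
    print("All expected folders are in place.")
```

Run it from the repository root; any folder it reports as missing should be downloaded or moved before running the training or test scripts.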

Please cite the following paper corresponding to the repository:

@article{ye2023natural,
  title={Natural language is all a graph needs},
  author={Ye, Ruosong and Zhang, Caiqi and Wang, Runhui and Xu, Shuyuan and Zhang, Yongfeng},
  journal={arXiv:2308.07134},
  year={2023}
}