Official PyTorch implementation of FasterViT: Fast Vision Transformers with Hierarchical Attention.

Ali Hatamizadeh, Greg Heinrich, Hongxu (Danny) Yin, Andrew Tao, Jose M. Alvarez, Jan Kautz, Pavlo Molchanov.

For business inquiries, please visit our website and submit the form: NVIDIA Research Licensing

FasterViT achieves a new SOTA Pareto front in terms of Top-1 accuracy and throughput without extra training data!

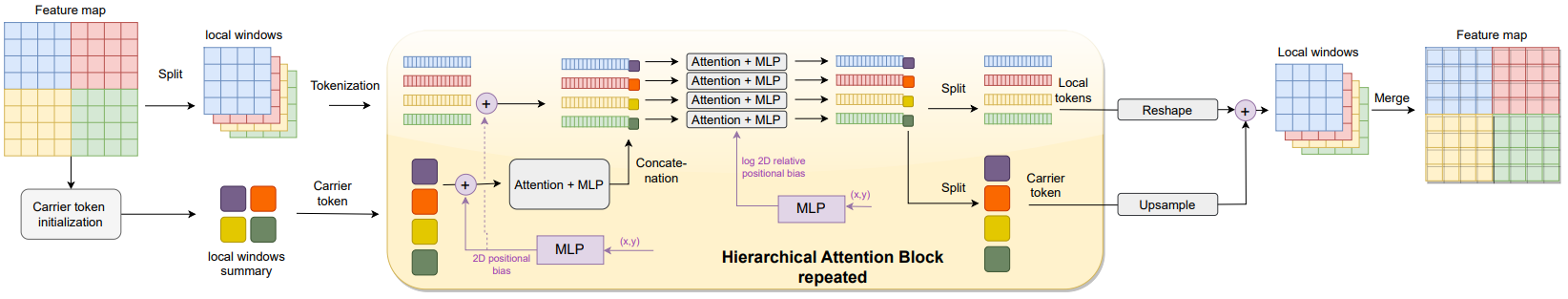

We introduce a new self-attention mechanism, denoted as Hierarchical Attention (HAT), that captures both short- and long-range information by learning cross-window carrier tokens.
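To make the role of carrier tokens more concrete, the sketch below counts query-key pairs for a single attention layer. It uses a simplified model that we assume for illustration only: the token grid is split into non-overlapping windows, each window is summarized by `ct x ct` carrier tokens, all carrier tokens attend to each other globally, and each window then attends over its own local tokens plus its carrier tokens. It ignores heads, channels, and how carrier tokens are pooled, and it is not the FasterViT implementation.

```python
# Simplified, illustrative pair count for a HAT-style layer (assumptions above).


def full_attention_pairs(height: int, width: int) -> int:
    """Query-key pairs if every token attends to every other token."""
    n = height * width
    return n * n


def hierarchical_attention_pairs(height: int, width: int, window: int, ct: int) -> int:
    """Query-key pairs for the simplified hierarchical layer assumed above."""
    if height % window or width % window:
        raise ValueError("token grid must tile exactly into windows")
    num_windows = (height // window) * (width // window)
    per_window = window * window + ct * ct       # local tokens + that window's carriers
    local_pairs = num_windows * per_window ** 2  # attention inside each window
    total_carriers = num_windows * ct * ct
    global_pairs = total_carriers ** 2           # carriers exchange cross-window info
    return local_pairs + global_pairs


full = full_attention_pairs(56, 56)
hat = hierarchical_attention_pairs(56, 56, window=7, ct=2)
print(f"full: {full:,}  hierarchical: {hat:,}  ratio: {full / hat:.1f}x")
```

The grid size (56 x 56), window size (7), and carrier token size (2) are arbitrary example values. The point is that local windows keep the cost close to linear in the number of tokens, while the small set of carrier tokens is what lets information cross window boundaries.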

Note: Please use the latest NVIDIA TensorRT release to enjoy the benefits of optimized FasterViT ops.

- [08.20.2023] 🔥🔥 We have added ImageNet-21K SOTA pre-trained models for various resolutions!

- [07.20.2023] We have created an official NVIDIA FasterViT page on Hugging Face.

- [07.06.2023] FasterViT checkpoints are now also accessible on Hugging Face!

- [07.04.2023] 🔥🔥 ImageNet-pretrained FasterViT models can now be imported with one line of code. Please install the latest FasterViT pip package to use this functionality (it also supports any-resolution FasterViT models).

- [06.30.2023] 🔥🔥 We have further improved the TensorRT throughput of FasterViT models by 10-15% on average across different models. Please use the latest NVIDIA TensorRT release to benefit from these throughput gains.

- [06.29.2023] Any-resolution FasterViT models can now be initialized from models pre-trained at the ImageNet resolution (224 x 224).

- [06.18.2023] We have released the FasterViT pip package!

- [06.17.2023] 🔥 The any-resolution FasterViT model is now available! It can be used for a variety of applications, such as detection, segmentation, or high-resolution fine-tuning with arbitrary input image resolutions.

- [06.09.2023] 🔥🔥 We have released the source code and ImageNet-1K FasterViT models!

Pretrained FasterViT models can be imported with one line of code. First, install FasterViT:

pip install fastervit

Note: If you have already installed the package, please upgrade to fastervit>=0.9.6 to use the pretrained weights.
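The version requirement in the note above can be checked from Python. This is a minimal sketch using the standard library's `importlib.metadata`; the simple dotted-integer comparison is our own assumption and does not handle pre-release tags such as `rc1` (the third-party `packaging.version.Version` class is a more robust choice if it is available).

```python
from importlib.metadata import PackageNotFoundError, version

MIN_VERSION = "0.9.6"  # minimum version stated in the note above


def parse(v: str) -> tuple:
    """Parse a plain release string like '0.9.6' into a comparable tuple."""
    return tuple(int(part) for part in v.split("."))


def meets_minimum(installed: str, minimum: str = MIN_VERSION) -> bool:
    """True if the installed version is at least the minimum version."""
    return parse(installed) >= parse(minimum)


if __name__ == "__main__":
    try:
        installed = version("fastervit")
    except PackageNotFoundError:
        print("fastervit is not installed; run: pip install fastervit")
    else:
        status = "OK" if meets_minimum(installed) else "please upgrade"
        print(f"fastervit {installed}: {status}")
```

Comparing tuples rather than strings avoids the classic pitfall where `"0.9.10" < "0.9.6"` lexicographically.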

A pretrained FasterViT model with default hyperparameters can be created as follows:

>>> from fastervit import create_model
>>> # Define the FasterViT-0 model with 224 x 224 resolution
>>> model = create_model('faster_vit_0_224',
...                      pretrained=True,
...                      model_path="/tmp/faster_vit_0.pth.tar")

model_path sets the location to which the pretrained weights are downloaded.

We can also test the model by passing a dummy input image. The output is the logits:

>>> import torch
>>> image = torch.rand(1, 3, 224, 224)
>>> output = model(image)  # torch.Size([1, 1000])

We can also use the any-resolution FasterViT model to accommodate arbitrary image resolutions. In the following, we define an any-resolution FasterViT-0 model with an input resolution of 576 x 960, window sizes of 12 and 6 in the 3rd and 4th stages, a carrier token size of 2, and an embedding dimension of 64:

>>> from fastervit import create_model
>>> # Define an any-resolution FasterViT-0 model with 576 x 960 resolution
>>> model = create_model('faster_vit_0_any_res',
...                      resolution=[576, 960],
...                      window_size=[7, 7, 12, 6],
...                      ct_size=2,
...                      dim=64,
...                      pretrained=True)

Note that the above model is initialized from the original ImageNet-pretrained FasterViT with an original resolution of 224 x 224. As a result, missing keys and mismatches are expected, since new layers are added (e.g., new carrier tokens).
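A quick way to sanity-check a custom resolution and window size, such as the 576 x 960 configuration above, is to compute the feature-map size at each stage and see how it tiles into windows. This sketch assumes stage strides of 4, 8, 16 and 32 (a stride-4 stem followed by 2x downsampling between stages) and that windowed attention is applied in the 3rd and 4th stages only; both are our assumptions, and whether the library pads or rejects sizes that do not divide evenly is not covered here.

```python
# Assumed stage strides: stride-4 stem, then 2x downsampling per stage.
STAGE_STRIDES = (4, 8, 16, 32)


def stage_resolutions(height, width, strides=STAGE_STRIDES):
    """Feature-map (height, width) at each stage under the assumed strides."""
    return [(height // s, width // s) for s in strides]


def window_grid(height, width, window_size, strides=STAGE_STRIDES, attn_stages=(2, 3)):
    """Number of windows (rows, cols) per attention stage, keyed by 1-based stage."""
    resolutions = stage_resolutions(height, width, strides)
    grids = {}
    for i in attn_stages:
        h, w = resolutions[i]
        win = window_size[i]
        if h % win or w % win:
            raise ValueError(f"stage {i + 1}: {h}x{w} is not divisible by window {win}")
        grids[i + 1] = (h // win, w // win)
    return grids


print(stage_resolutions(576, 960))
print(window_grid(576, 960, [7, 7, 12, 6]))
```

Under these assumptions, 576 x 960 gives 36 x 60 tokens in stage 3 and 18 x 30 in stage 4, so windows of 12 and 6 both yield a 3 x 5 grid of windows.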

We can simply test the model by passing a dummy input image. The output is the logits:

>>> import torch
>>> image = torch.rand(1, 3, 576, 960)
>>> output = model(image)  # torch.Size([1, 1000])

- ImageNet-1K training code

- ImageNet-1K pre-trained models

- Any-resolution FasterViT

- FasterViT pip-package release

- Add capability to initialize any-resolution FasterViT from ImageNet-pretrained weights

- ImageNet-21K pre-trained models

- Detection code + models

FasterViT ImageNet-1K Pretrained Models

| Name | Acc@1 (%) | Acc@5 (%) | Throughput (img/s) | Resolution | #Params (M) | FLOPs (G) | Download |
|---|---|---|---|---|---|---|---|
| FasterViT-0 | 82.1 | 95.9 | 5802 | 224x224 | 31.4 | 3.3 | model |
| FasterViT-1 | 83.2 | 96.5 | 4188 | 224x224 | 53.4 | 5.3 | model |
| FasterViT-2 | 84.2 | 96.8 | 3161 | 224x224 | 75.9 | 8.7 | model |
| FasterViT-3 | 84.9 | 97.2 | 1780 | 224x224 | 159.5 | 18.2 | model |
| FasterViT-4 | 85.4 | 97.3 | 849 | 224x224 | 424.6 | 36.6 | model |
| FasterViT-5 | 85.6 | 97.4 | 449 | 224x224 | 975.5 | 113.0 | model |
| FasterViT-6 | 85.8 | 97.4 | 352 | 224x224 | 1360.0 | 142.0 | model |
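The accuracy/throughput trade-off in the table above can be checked with a small Pareto-front computation. This sketch uses only the Top-1 and throughput numbers copied from the table; it compares FasterViT variants against each other, not against the other architectures behind the SOTA claim, so it illustrates the idea of a Pareto front rather than reproducing that comparison.

```python
# (name, Top-1 accuracy %, throughput img/s) copied from the table above
MODELS = [
    ("FasterViT-0", 82.1, 5802),
    ("FasterViT-1", 83.2, 4188),
    ("FasterViT-2", 84.2, 3161),
    ("FasterViT-3", 84.9, 1780),
    ("FasterViT-4", 85.4, 849),
    ("FasterViT-5", 85.6, 449),
    ("FasterViT-6", 85.8, 352),
]


def pareto_front(models):
    """Names of models not dominated in both accuracy and throughput."""
    front = []
    for name, acc, thr in models:
        dominated = any(
            a2 >= acc and t2 >= thr and (a2 > acc or t2 > thr)
            for n2, a2, t2 in models
            if n2 != name
        )
        if not dominated:
            front.append(name)
    return front


print(pareto_front(MODELS))
```

Because accuracy rises strictly as throughput falls across the seven variants, none of them dominates another and all lie on the front.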

FasterViT ImageNet-21K Pretrained Models (ImageNet-1K Fine-tuned)

| Name | Acc@1 (%) | Acc@5 (%) | Resolution | #Params (M) | FLOPs (G) | Download |
|---|---|---|---|---|---|---|
| FasterViT-4-21K-224 | 86.6 | 97.8 | 224x224 | 271.9 | 40.8 | model |
| FasterViT-4-21K-384 | 87.6 | 98.3 | 384x384 | 271.9 | 120.1 | model |
| FasterViT-4-21K-512 | 87.8 | 98.4 | 512x512 | 271.9 | 213.5 | model |
| FasterViT-4-21K-768 | 87.9 | 98.5 | 768x768 | 271.9 | 480.4 | model |
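A short calculation with the FLOPs column above shows how compute scales with input resolution for the 21K models. The sketch compares each row's FLOPs ratio (relative to 224 x 224) with its pixel-count ratio; the numbers come from the table, while reading the close agreement as "roughly linear in token count" is our interpretation rather than a stated result.

```python
# (square resolution, FLOPs in G) for FasterViT-4-21K, copied from the table above
ROWS = [(224, 40.8), (384, 120.1), (512, 213.5), (768, 480.4)]


def scaling_ratios(rows):
    """(resolution, pixel ratio, FLOPs ratio) relative to the first row."""
    base_res, base_flops = rows[0]
    return [
        (res, (res / base_res) ** 2, flops / base_flops)
        for res, flops in rows[1:]
    ]


for res, pixel_ratio, flops_ratio in scaling_ratios(ROWS):
    print(f"{res}x{res}: pixels x{pixel_ratio:.2f}, FLOPs x{flops_ratio:.2f}")
```

For every row the two ratios agree to within about 0.2%, which is consistent with attention whose cost grows roughly linearly with the number of tokens at these resolutions.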

All models use crop_pct=0.875. Results are obtained by running inference on ImageNet-1K pretrained models without fine-tuning.

| Name | A-Acc@1 (%) | A-Acc@5 (%) | R-Acc@1 (%) | R-Acc@5 (%) | V2-Acc@1 (%) | V2-Acc@5 (%) |
|---|---|---|---|---|---|---|
| FasterViT-0 | 23.9 | 57.6 | 45.9 | 60.4 | 70.9 | 90.0 |
| FasterViT-1 | 31.2 | 63.3 | 47.5 | 61.9 | 72.6 | 91.0 |
| FasterViT-2 | 38.2 | 68.9 | 49.6 | 63.4 | 73.7 | 91.6 |
| FasterViT-3 | 44.2 | 73.0 | 51.9 | 65.6 | 75.0 | 92.2 |
| FasterViT-4 | 49.0 | 75.4 | 56.0 | 69.6 | 75.7 | 92.7 |
| FasterViT-5 | 52.7 | 77.6 | 56.9 | 70.0 | 76.0 | 93.0 |
| FasterViT-6 | 53.7 | 78.4 | 57.1 | 70.1 | 76.1 | 93.0 |

A, R and V2 denote ImageNet-A, ImageNet-R and ImageNet-V2 respectively.
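The crop_pct=0.875 setting mentioned above controls the evaluation preprocessing. Under the usual timm convention (which we assume here), the shorter image side is first resized to floor(img_size / crop_pct) and a centered img_size x img_size crop is then taken. The sketch below reproduces that arithmetic in plain Python; the shorter-side resize rounding follows torchvision-style integer truncation, which is also an assumption.

```python
import math


def eval_resize_size(img_size: int, crop_pct: float) -> int:
    """Shorter-side resize target before center cropping (timm-style)."""
    return math.floor(img_size / crop_pct)


def resized_shape(width: int, height: int, short_side: int):
    """Resize so the shorter side equals short_side, keeping aspect ratio."""
    if width <= height:
        return short_side, int(short_side * height / width)
    return int(short_side * width / height), short_side


def center_crop_box(width: int, height: int, crop: int):
    """(left, top, right, bottom) of a centered crop x crop box."""
    left = (width - crop) // 2
    top = (height - crop) // 2
    return left, top, left + crop, top + crop


size = eval_resize_size(224, 0.875)  # 224 / 0.875 = 256
w, h = resized_shape(640, 480, size)
print(size, (w, h), center_crop_box(w, h, 224))
```

For a 224 x 224 model this means resizing to 256 and keeping the central 87.5% of each side.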

Please see TRAINING.md for detailed training instructions for all models.

The FasterViT models can be evaluated on the ImageNet-1K validation set using the following:

python validate.py \
--model <model-name> \
--checkpoint <checkpoint-path> \
--data_dir <imagenet-path> \
--batch-size <batch-size-per-gpu>

Here --model is the FasterViT variant (e.g. faster_vit_0_224_1k), --checkpoint is the path to the pretrained model weights, --data_dir is the path to the ImageNet-1K validation set, and --batch-size is the batch size per GPU. We also provide a sample script here.
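The Acc@1 and Acc@5 columns in the tables above follow the standard top-k accuracy definition: a sample counts as correct if its true label is among the k highest-scoring logits. The sketch below computes this in plain Python on toy logits; it only illustrates the metric and is not the code inside validate.py, which works on PyTorch tensors and may differ in details such as tie handling.

```python
from typing import Sequence


def topk_correct(logits: Sequence[float], label: int, k: int) -> bool:
    """True if `label` is among the indices of the k largest logits."""
    ranked = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)
    return label in ranked[:k]


def accuracy_at_k(batch_logits, labels, k: int) -> float:
    """Top-k accuracy in percent over a batch."""
    hits = sum(topk_correct(l, y, k) for l, y in zip(batch_logits, labels))
    return 100.0 * hits / len(labels)


# Toy batch: 3 samples, 3 classes (hypothetical values)
logits = [[0.1, 0.7, 0.2], [0.5, 0.3, 0.2], [0.2, 0.3, 0.5]]
labels = [1, 1, 0]
print(accuracy_at_k(logits, labels, k=1), accuracy_at_k(logits, labels, k=2))
```

In the toy batch only the first sample is correct at k=1, while the second also becomes correct at k=2 because its label has the second-highest logit.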

We provide an ONNX conversion script to enable dynamic-batch-size inference. For instance, to generate an ONNX model for faster_vit_0_any_res with resolution 576 x 960 and ONNX opset 17, use the following:

python onnx_convert --model-name faster_vit_0_any_res --resolution-h 576 --resolution-w 960 --onnx-opset 17

To generate FasterViT CoreML models, please install coremltools==5.2.0 and use the following script:

import torch
import coremltools
from fastervit import create_model

# Build FasterViT-0 and switch to inference mode before tracing
model = create_model('faster_vit_0_224').eval()
input_size = 224
bs_size = 1
file_name = 'faster_vit_0_224.mlmodel'

# Trace the model with TorchScript using a dummy input of the target shape
img = torch.randn((bs_size, 3, input_size, input_size), dtype=torch.float32)
model_jit_trace = torch.jit.trace(model, img)

# Convert the traced model, declaring the input as an image of the traced shape
model = coremltools.convert(model_jit_trace, inputs=[coremltools.ImageType(shape=img.shape)])
model.save(file_name)

We recommend benchmarking performance with Xcode 14 or newer.

The dependencies can be installed by running:

pip install -r requirements.txt

We always welcome third-party extensions/implementations and usage for other purposes. If you would like your work to be listed in this repository, please raise an issue and provide us with detailed information.

Please consider citing FasterViT if this repository is useful for your work.

@article{hatamizadeh2023fastervit,
  title={FasterViT: Fast Vision Transformers with Hierarchical Attention},
  author={Hatamizadeh, Ali and Heinrich, Greg and Yin, Hongxu and Tao, Andrew and Alvarez, Jose M and Kautz, Jan and Molchanov, Pavlo},
  journal={arXiv preprint arXiv:2306.06189},
  year={2023}
}

Copyright © 2023, NVIDIA Corporation. All rights reserved.

This work is made available under the NVIDIA Source Code License-NC. Click here to view a copy of this license.

For license information regarding the timm repository, please refer to its repository.

For license information regarding the ImageNet dataset, please see the ImageNet official website.

This repository is built on top of the timm repository. We thank Ross Wightman for creating and maintaining this high-quality library.