Sam Bond-Taylor and Chris G. Willcocks

Published at the International Conference on Learning Representations (ICLR) 2024

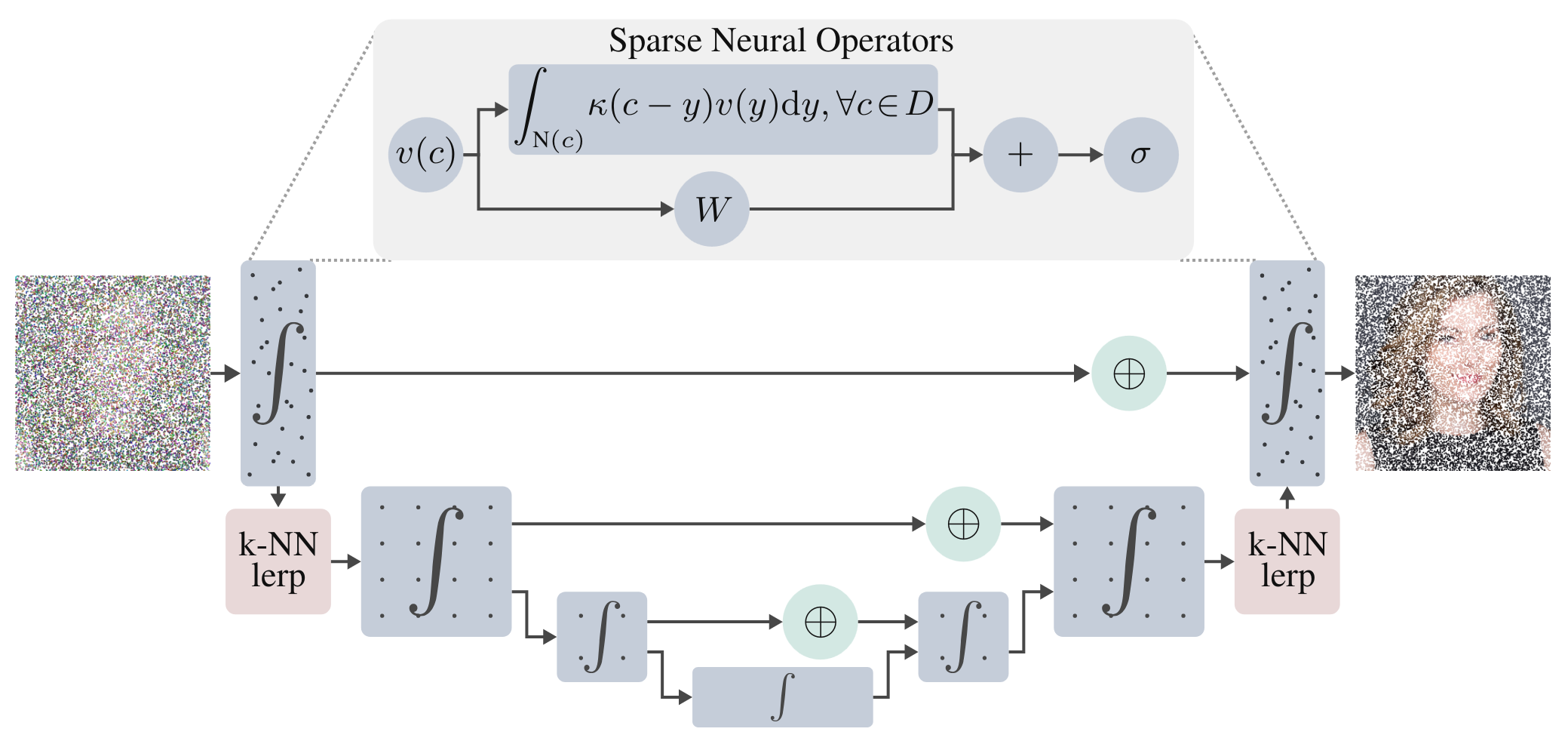

This paper introduces ∞-Diff, a generative diffusion model defined in an infinite-dimensional Hilbert space, which can model infinite resolution data. By training on randomly sampled subsets of coordinates and denoising content only at those locations, we learn a continuous function for arbitrary resolution sampling. Unlike prior neural field-based infinite-dimensional models, which use point-wise functions requiring latent compression, our method employs non-local integral operators to map between Hilbert spaces, allowing spatial context aggregation. This is achieved with an efficient multi-scale function-space architecture that operates directly on raw sparse coordinates, coupled with a mollified diffusion process that smooths out irregularities. Through experiments on high-resolution datasets, we found that even at an 8× subsampling rate, our model retains high-quality diffusion. This leads to significant run-time and memory savings, delivers samples with lower FID scores, and scales beyond the training resolution while retaining detail.
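The coordinate subsampling mentioned above can be pictured with a minimal sketch. This is not the repository's implementation: the function name `sample_coordinates`, the pixel-centre normalisation, and the reading of an 8× rate as "keep one eighth of the pixel locations" are all assumptions made for illustration.

```python
import random


def sample_coordinates(height, width, subsample_rate, rng):
    """Pick a random subset of pixel locations on a height x width grid.

    Returns the flat indices of the kept pixels and their (y, x)
    coordinates normalised to the open interval (0, 1).
    Hypothetical helper, not part of the infty-diff code base.
    """
    total = height * width
    keep = total // subsample_rate  # assumed meaning of an "N x" rate
    flat_indices = rng.sample(range(total), keep)  # sampled without replacement
    coords = []
    for index in flat_indices:
        row, col = divmod(index, width)
        # Use pixel centres so coordinates do not depend on resolution.
        coords.append(((row + 0.5) / height, (col + 0.5) / width))
    return flat_indices, coords


rng = random.Random(0)
flat_indices, coords = sample_coordinates(256, 256, 8, rng)
print(len(coords))  # 8192 locations out of 65536
```

Because the coordinates are normalised rather than tied to a pixel grid, the same function could be queried on a denser grid at sampling time, which is the intuition behind sampling at resolutions other than the training one.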

The easiest way to set up the environment is with conda. To get set up quickly, use miniconda and switch to the libmamba solver to speed up environment solving.

The following commands assume that CUDA 11.7 is installed. If a different version of CUDA is installed, alter requirements.yml accordingly. Run the following commands to clone this repo with git and create the environment.

git clone https://github.com/samb-t/infty-diff && cd infty-diff

conda env create --name infty-diff --file requirements.yml

conda activate infty-diff

As part of the installation, torchsparse and flash-attention are compiled from source, so this may take a while.

By default, torchsparse is installed for efficient sparse convolutions. It was used in all of our experiments because we found it performed best. We also include a depthwise convolution implementation of torchsparse, which we found can outperform dense convolutions in some settings. Other libraries such as spconv and MinkowskiEngine are available and may perform better on your hardware, but we have not tested them thoroughly. When training models, the sparse backend can be selected with --config.model.backend="torchsparse".

To configure the default paths for the datasets used to train the models in this repo, edit the relevant config file, changing the data.root_dir attribute of each dataset you wish to use to the path where that dataset is saved locally.

| Dataset | Official Link | Academic Torrents Link |
|---|---|---|
| FFHQ | Official FFHQ | Academic Torrents FFHQ |
| LSUN | Official LSUN | Academic Torrents LSUN |
| CelebA-HQ | Official CelebA-HQ | - |

This section covers the basic commands for training and generating samples. Image-level models were trained on an A100 80GB, and these commands presume the same level of hardware. If your GPU has less VRAM, you may need to train with smaller batch sizes and/or smaller models than the defaults.

The following command starts training the image-level diffusion model on FFHQ.

python train_inf_ae_diffusion.py --config configs/ffhq_256_config.py --config.run.experiment="ffhq_mollified_256"

Once that has finished, the latent model can be trained with:

python train_latent_diffusion.py --config configs/ffhq_latent_config.py --config.run.experiment="ffhq_mollified_256_sampler" --decoder_config configs/ffhq_256_config.py --decoder_config.run.experiment="ffhq_mollified_256"

ml_collections is used for hyperparameters, so any of them can be overridden from the command line. For example, the batch size can be changed with --config.train.batch_size=32.
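To make the dotted override syntax concrete, here is a rough standard-library sketch of how a flag such as --config.train.batch_size=32 maps onto a nested config. It is not how ml_collections is implemented, and the helper names `apply_override` and `parse_overrides`, as well as the default values in the example config, are hypothetical.

```python
import ast


def apply_override(config, dotted_key, raw_value):
    """Set a nested entry, e.g. 'train.batch_size' -> config['train']['batch_size'].

    Unknown keys raise KeyError so that typos are caught early
    (a design choice of this sketch).
    """
    *parents, leaf = dotted_key.split(".")
    node = config
    for name in parents:
        node = node[name]
    if leaf not in node:
        raise KeyError(f"unknown config field: {dotted_key}")
    try:
        # Interpret numbers, booleans, tuples and so on as Python literals.
        value = ast.literal_eval(raw_value)
    except (ValueError, SyntaxError):
        # Anything else (paths, experiment names) stays a plain string.
        value = raw_value
    node[leaf] = value


def parse_overrides(config, argv, prefix="--config."):
    """Apply every '--config.a.b=value' argument found in argv."""
    for arg in argv:
        if arg.startswith(prefix) and "=" in arg:
            key, raw_value = arg[len(prefix):].split("=", 1)
            apply_override(config, key, raw_value)
    return config


# Hypothetical defaults; the real values live in the repo's config files.
config = {
    "train": {"batch_size": 64},
    "model": {"backend": "torchsparse"},
    "data": {"root_dir": "/path/to/dataset"},
}
parse_overrides(config, ["--config.train.batch_size=32",
                         "--config.data.root_dir=/datasets/ffhq"])
print(config["train"]["batch_size"], config["data"]["root_dir"])
```

The same pattern applies to the other fields mentioned in this README, such as data.root_dir and model.backend.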

After both models have been trained, the following script will generate a folder of samples:

python experiments/generate_samples.py --config configs/ffhq_latent_config.py --config.run.experiment="ffhq_mollified_256_sampler" --decoder_config configs/ffhq_256_config.py --decoder_config.run.experiment="ffhq_mollified_256"

Huge thank you to everyone who makes their code available. In particular, some code is based on

- Improved Denoising Diffusion Probabilistic Models

- Diffusion Autoencoders: Toward a Meaningful and Decodable Representation

- Fourier Neural Operator for Parametric Partial Differential Equations

- Unleashing Transformers: Parallel Token Prediction with Discrete Absorbing Diffusion for Fast High-Resolution Image Generation from Vector-Quantized Codes

@inproceedings{bond2024infty,
  title = {$\infty$-Diff: Infinite Resolution Diffusion with Subsampled Mollified States},
  author = {Sam Bond-Taylor and Chris G. Willcocks},
  booktitle = {International Conference on Learning Representations},
  year = {2024}
}