This repo hosts the official implementation of:

Xingqian Xu, Jiayi Guo, Zhangyang Wang, Gao Huang, Irfan Essa, and Humphrey Shi, Prompt-Free Diffusion: Taking "Text" out of Text-to-Image Diffusion Models, Paper arXiv Link.

- [2023.05.25]: Our demo is running on HuggingFace🤗

- [2023.05.25]: Repo created

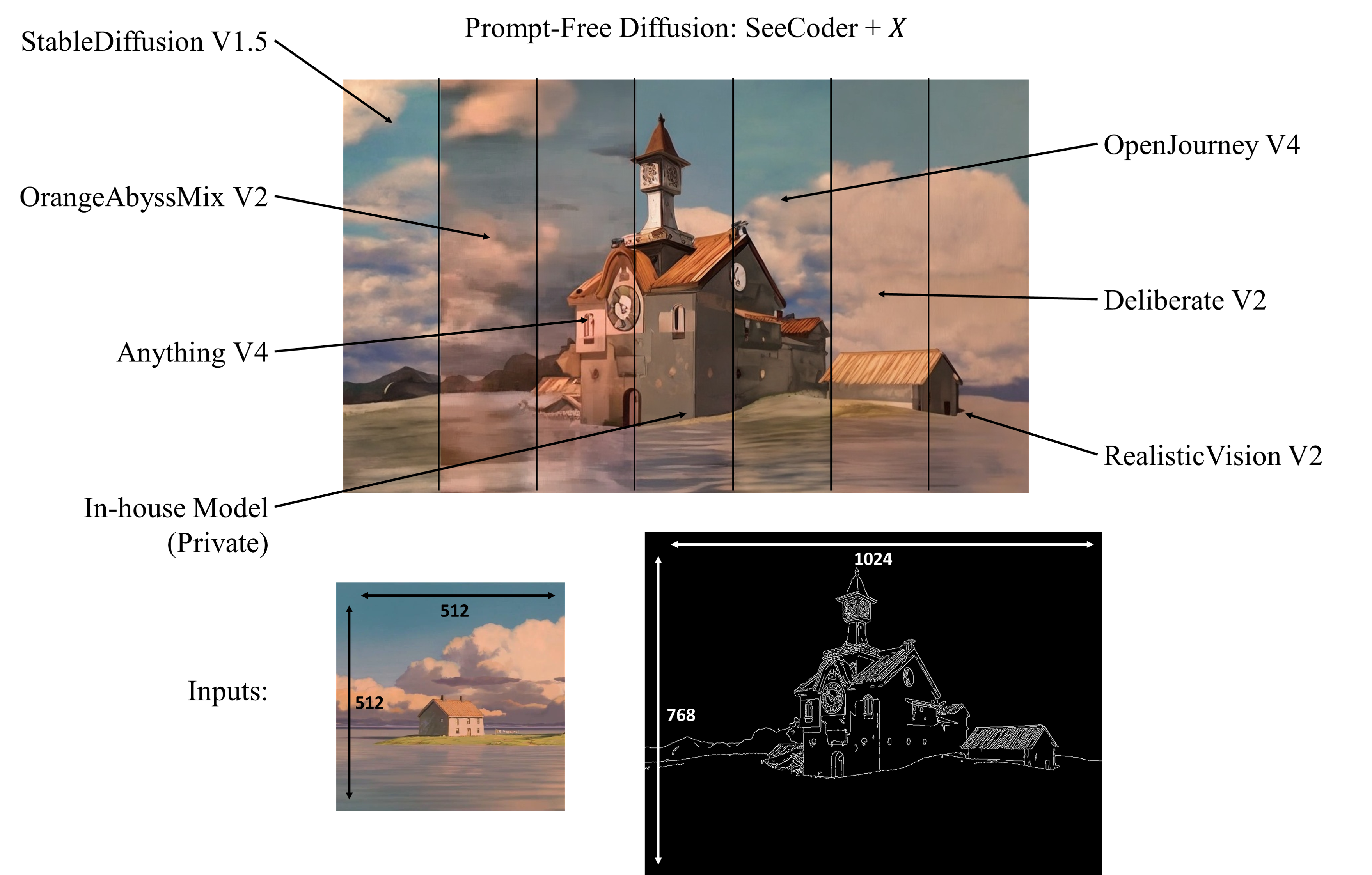

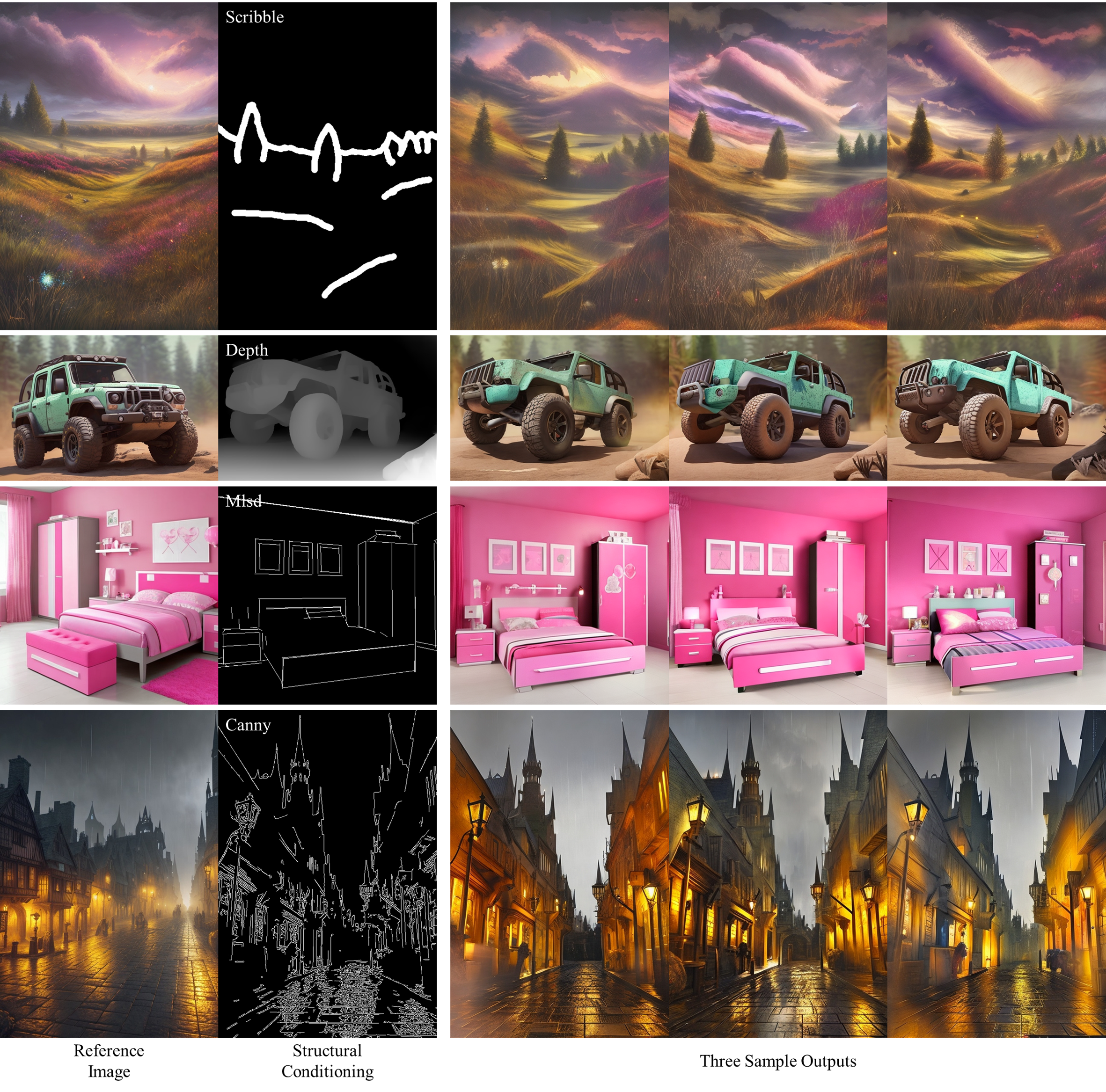

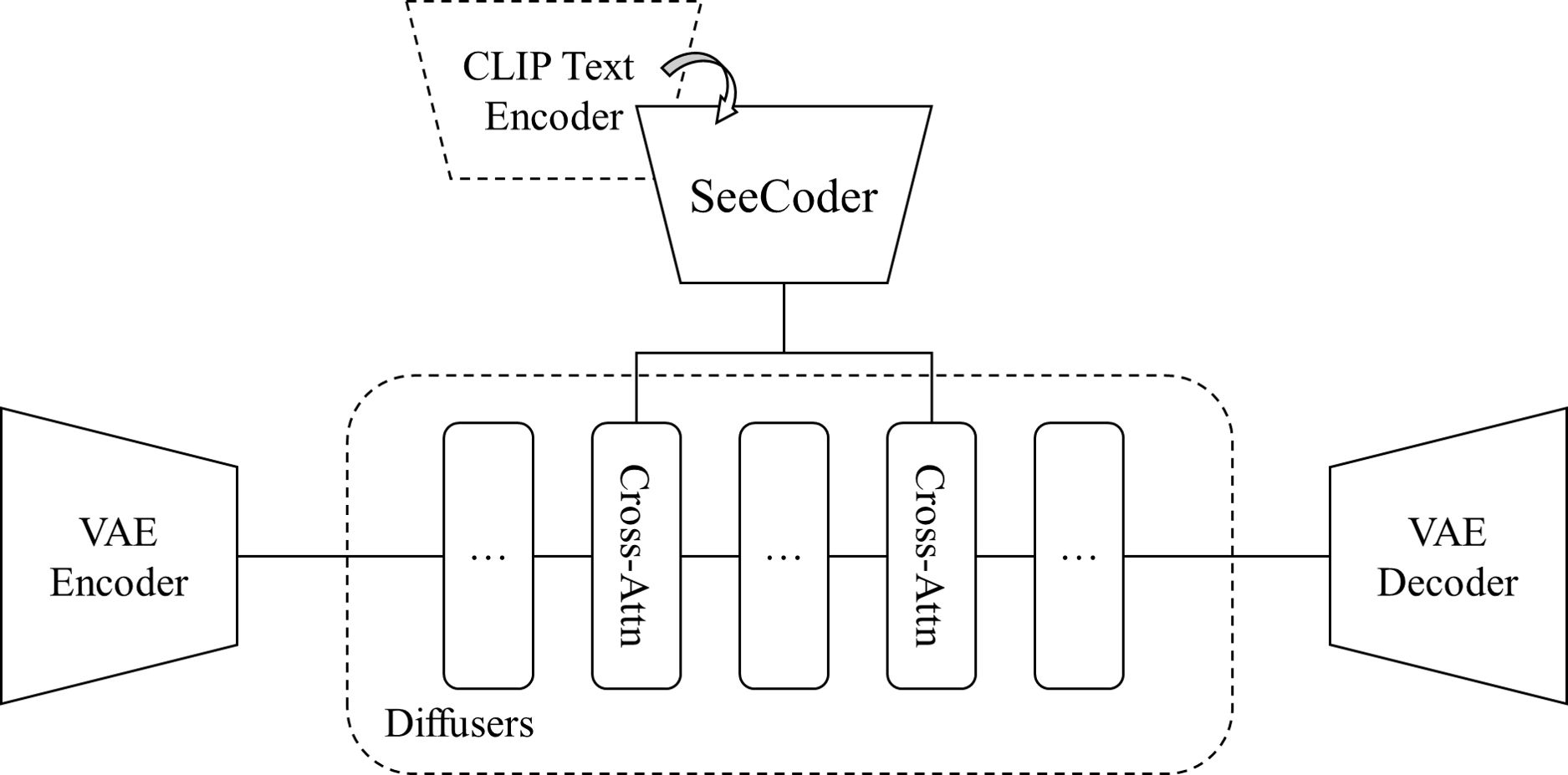

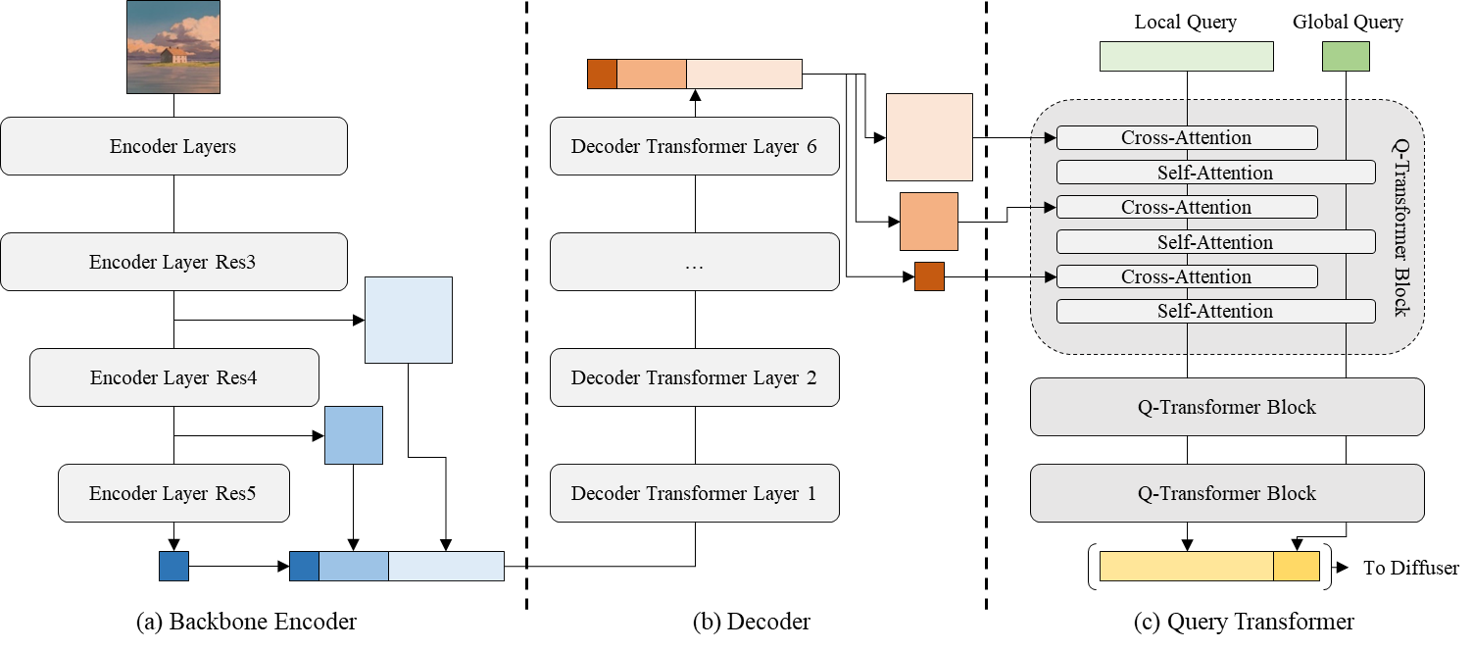

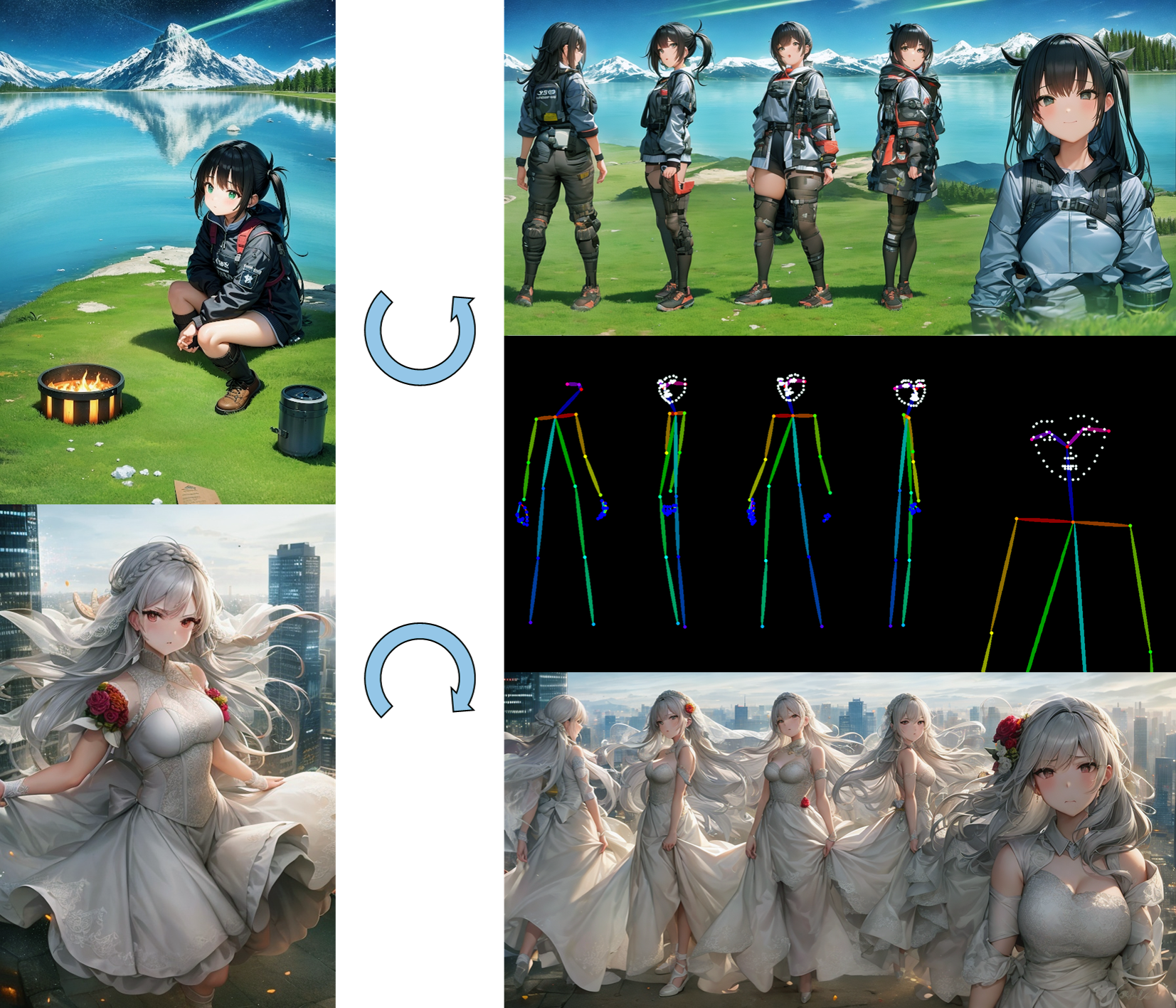

Prompt-Free Diffusion is a diffusion model that relies only on visual inputs to generate new images. The visual input is handled by the Semantic Context Encoder (SeeCoder), which substitutes for the commonly used CLIP-based text encoder. SeeCoder is reusable with most public T2I models as well as adaptive layers such as ControlNet, LoRA, and T2I-Adapter. Just drop in and play!

    conda create -n prompt-free-diffusion python=3.10
    conda activate prompt-free-diffusion
    pip install torch==2.0.0+cu117 torchvision==0.15.1 --extra-index-url https://download.pytorch.org/whl/cu117
    pip install -r requirements.txt
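After installation, it can be useful to confirm that the pinned versions actually landed in the active environment. The following is a minimal sketch, not part of this repo: the helper name `check_versions` is hypothetical, and it compares by prefix on the assumption that the installed `torch` version is reported with a local suffix such as `+cu117`.

```python
from importlib import metadata

# Versions pinned by the install commands above. Compared by prefix because
# the installed version string may carry a local suffix such as "+cu117".
EXPECTED = {"torch": "2.0.0", "torchvision": "0.15.1"}


def check_versions(expected=EXPECTED, lookup=metadata.version):
    """Return {package: (installed_version_or_None, matches_expected)}."""
    report = {}
    for name, wanted in expected.items():
        try:
            installed = lookup(name)
        except metadata.PackageNotFoundError:
            installed = None  # package is not installed in this environment
        ok = installed is not None and installed.startswith(wanted)
        report[name] = (installed, ok)
    return report


if __name__ == "__main__":
    for name, (installed, ok) in check_versions().items():
        status = "ok" if ok else "MISMATCH"
        print(f"{name}: installed={installed} [{status}]")
```

This only inspects package metadata and does not import `torch`. To check that a GPU is visible, `torch.cuda.is_available()` can be called separately once the versions match.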

We provide a WebUI powered by Gradio. Start the WebUI with the following command:

    python app.py

To support the full functionality of our demo, you need the following models located in these paths:

    └── pretrained
        ├── pfd
        │   ├── vae
        │   │   └── sd-v2-0-base-autokl.pth
        │   ├── diffuser
        │   │   ├── AbyssOrangeMix-v2.safetensors
        │   │   ├── AbyssOrangeMix-v3.safetensors
        │   │   ├── Anything-v4.safetensors
        │   │   ├── Deliberate-v2-0.safetensors
        │   │   ├── OpenJouney-v4.safetensors
        │   │   ├── RealisticVision-v2-0.safetensors
        │   │   └── SD-v1-5.safetensors
        │   └── seecoder
        │       ├── seecoder-v1-0.safetensors
        │       ├── seecoder-pa-v1-0.safetensors
        │       └── seecoder-anime-v1-0.safetensors
        └── controlnet
            ├── control_sd15_canny_slimmed.safetensors
            ├── control_sd15_depth_slimmed.safetensors
            ├── control_sd15_hed_slimmed.safetensors
            ├── control_sd15_mlsd_slimmed.safetensors
            ├── control_sd15_normal_slimmed.safetensors
            ├── control_sd15_openpose_slimmed.safetensors
            ├── control_sd15_scribble_slimmed.safetensors
            ├── control_sd15_seg_slimmed.safetensors
            ├── control_v11p_sd15_canny_slimmed.safetensors
            ├── control_v11p_sd15_lineart_slimmed.safetensors
            ├── control_v11p_sd15_mlsd_slimmed.safetensors
            ├── control_v11p_sd15_openpose_slimmed.safetensors
            ├── control_v11p_sd15s2_lineart_anime_slimmed.safetensors
            ├── control_v11p_sd15_softedge_slimmed.safetensors
            └── preprocess
                ├── hed
                │   └── ControlNetHED.pth
                ├── midas
                │   └── dpt_hybrid-midas-501f0c75.pt
                ├── mlsd
                │   └── mlsd_large_512_fp32.pth
                ├── openpose
                │   ├── body_pose_model.pth
                │   ├── facenet.pth
                │   └── hand_pose_model.pth
                └── pidinet
                    └── table5_pidinet.pth

All models can be downloaded from Hugging Face.
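Since the demo expects a fairly long list of files, a quick check for missing ones can save a failed launch. The sketch below is not part of this repo: `find_missing` is a hypothetical helper, the file names are copied from the listing above, and it assumes that `preprocess` sits inside `controlnet` and that `vae`, `diffuser`, and `seecoder` sit inside `pfd`.

```python
from pathlib import Path

# File names copied from the directory listing above (nesting is an assumption).
PFD = {
    "vae": ["sd-v2-0-base-autokl.pth"],
    "diffuser": [
        "AbyssOrangeMix-v2.safetensors", "AbyssOrangeMix-v3.safetensors",
        "Anything-v4.safetensors", "Deliberate-v2-0.safetensors",
        "OpenJouney-v4.safetensors", "RealisticVision-v2-0.safetensors",
        "SD-v1-5.safetensors",
    ],
    "seecoder": [
        "seecoder-v1-0.safetensors", "seecoder-pa-v1-0.safetensors",
        "seecoder-anime-v1-0.safetensors",
    ],
}
CONTROLNET_V10 = ["canny", "depth", "hed", "mlsd", "normal", "openpose",
                  "scribble", "seg"]
CONTROLNET_V11 = ["sd15_canny", "sd15_lineart", "sd15_mlsd", "sd15_openpose",
                  "sd15s2_lineart_anime", "sd15_softedge"]
PREPROCESS = {
    "hed": ["ControlNetHED.pth"],
    "midas": ["dpt_hybrid-midas-501f0c75.pt"],
    "mlsd": ["mlsd_large_512_fp32.pth"],
    "openpose": ["body_pose_model.pth", "facenet.pth", "hand_pose_model.pth"],
    "pidinet": ["table5_pidinet.pth"],
}


def expected_files():
    """Build the list of expected paths, relative to the pretrained/ root."""
    files = [f"pfd/{d}/{n}" for d, names in PFD.items() for n in names]
    files += [f"controlnet/control_sd15_{n}_slimmed.safetensors"
              for n in CONTROLNET_V10]
    files += [f"controlnet/control_v11p_{n}_slimmed.safetensors"
              for n in CONTROLNET_V11]
    files += [f"controlnet/preprocess/{d}/{n}"
              for d, names in PREPROCESS.items() for n in names]
    return files


def find_missing(root="pretrained"):
    """Return the expected relative paths that are not present under root."""
    root = Path(root)
    return [rel for rel in expected_files() if not (root / rel).is_file()]


if __name__ == "__main__":
    missing = find_missing()
    print(f"{len(missing)} of {len(expected_files())} files missing")
    for rel in missing:
        print("  ", rel)
```

Run it from the repository root. An empty list from `find_missing()` means every file in the listing was found.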

We also provide tools to convert pretrained models from sdwebui and the Hugging Face diffusers library to this codebase. To use them, modify the following files:

    └── tools
        ├── get_controlnet.py
        └── model_conversion.py

You are expected to do some custom coding to make them work (e.g., changing the hardcoded input and output file paths).
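When adapting a conversion script to a new checkpoint, it often helps to look at the tensor names and shapes in the source file first. The sketch below is not part of this repo's tools: `read_safetensors_header` and `summarize` are hypothetical helpers. It relies only on the published `.safetensors` layout (an 8-byte little-endian header length followed by a JSON header), so it applies to `.safetensors` files and not to `.pth` checkpoints.

```python
import json
import struct


def read_safetensors_header(path):
    """Return {tensor_name: {"dtype", "shape", "data_offsets"}} for a file."""
    with open(path, "rb") as f:
        # First 8 bytes: unsigned little-endian length of the JSON header.
        (header_len,) = struct.unpack("<Q", f.read(8))
        header = json.loads(f.read(header_len).decode("utf-8"))
    # "__metadata__" is an optional free-form entry, not a tensor.
    header.pop("__metadata__", None)
    return header


def summarize(path, limit=10):
    """Print up to `limit` tensor entries and return the total tensor count."""
    header = read_safetensors_header(path)
    for name in sorted(header)[:limit]:
        info = header[name]
        print(name, info["dtype"], info["shape"])
    return len(header)
```

For example, `summarize("pretrained/pfd/seecoder/seecoder-v1-0.safetensors")` would list the first few tensor names without loading any weights into memory, assuming the file is at that path.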

Parts of this code reorganize or reimplement code from the following repositories: Versatile Diffusion official GitHub and ControlNet sdwebui GitHub, which are in turn greatly influenced by LDM official GitHub and DDPM official GitHub.