Wildlife monitoring

Wildlife monitoring is essential for keeping track of animal movement patterns and population changes. The tricky part, however, can be finding and identifying every species.

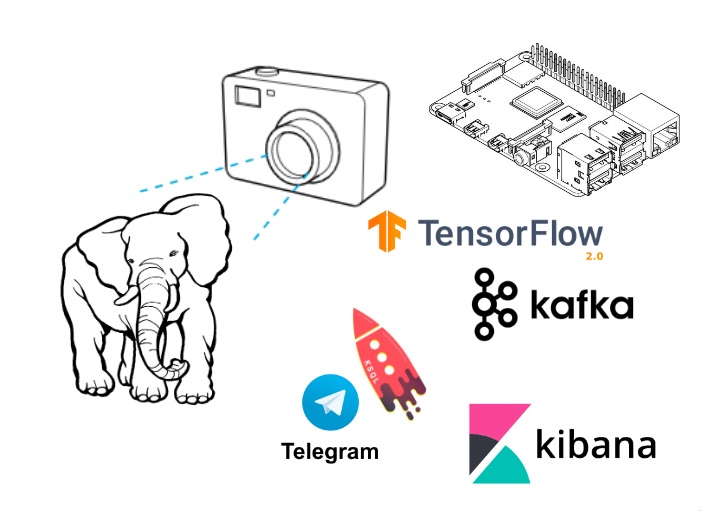

This project demonstrates how to use a Raspberry Pi and camera, Apache Kafka and Kafka Connect to identify and classify animals. ksqlDB is used to see population trends over time, and Kibana dashboards display real-time analytics on this data. There's also instant alerting via Telegram, which sends a push notification to my phone if we discover a rare animal.

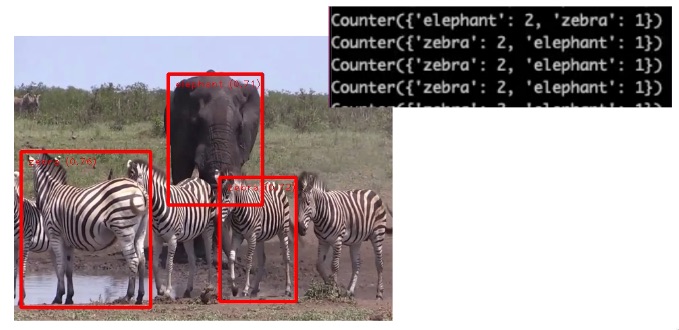

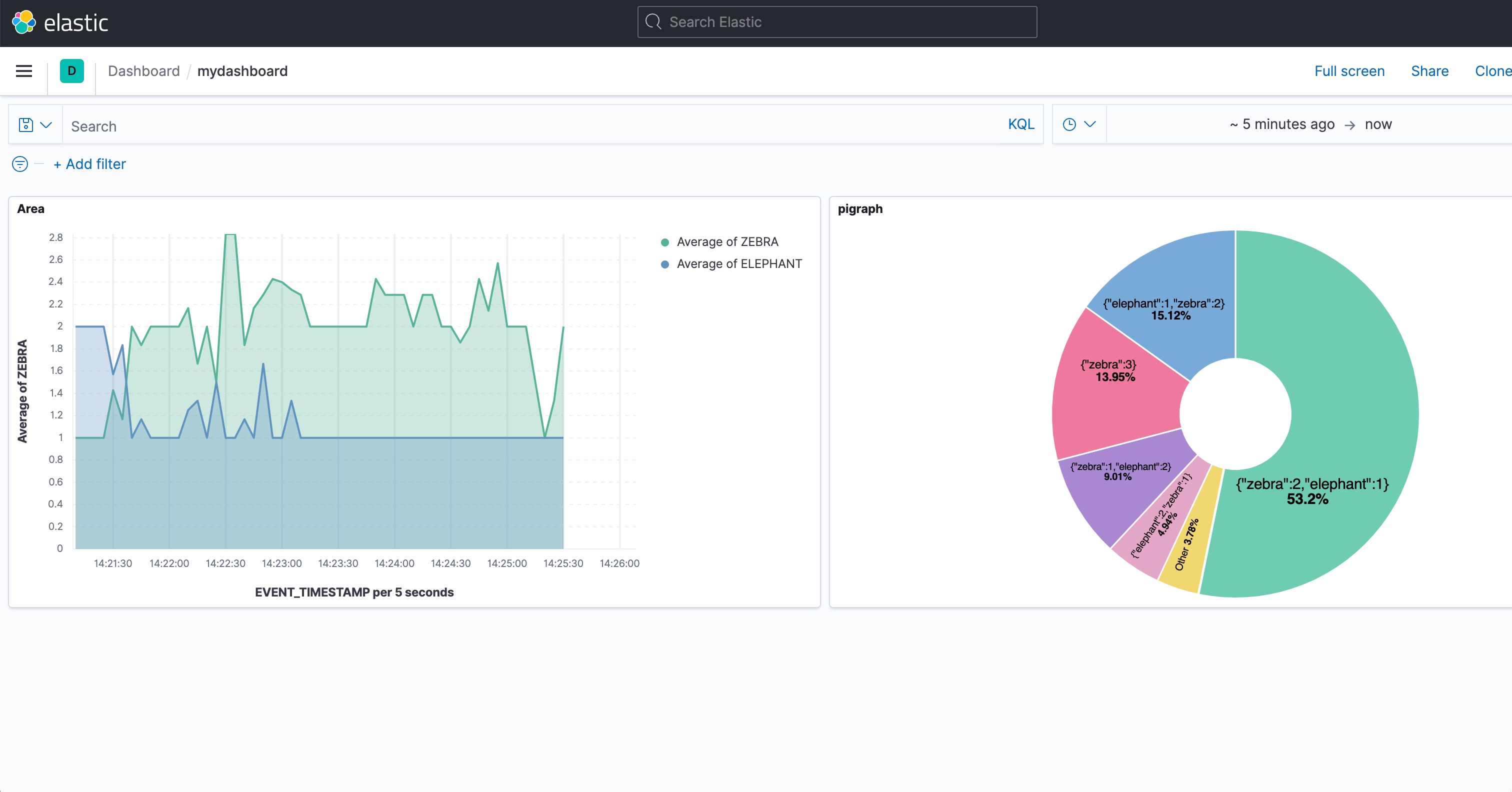

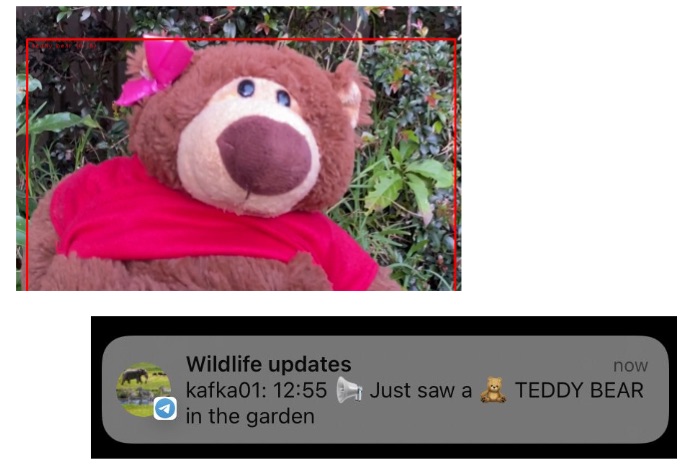

In action

When running end to end, the object detection, the Kafka Connect and ksqlDB processing, and the Kibana dashboards look like this:

Setup Raspberry Pi / Kafka Producer

This code has been tested on a Raspberry Pi 4 and a MacBook Pro (Intel) with Python 3.7.4 and pip 22.1.2.

This example uses TensorFlow Lite with Python on a Raspberry Pi to perform real-time object detection on images streamed from the Pi Camera. Much inspiration was taken from the TensorFlow Lite Python object detection example.
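For each frame, the detector returns a list of detections, and each detection carries one or more scored categories. The sketch below turns such a result into per-animal counts. It assumes each detection has a categories list whose entries expose category_name and score, which is the shape used by the tflite-support task library. The summarise_detections helper and its 0.5 threshold are hypothetical and are not taken from detect.py.

```python
from collections import Counter
from types import SimpleNamespace


def summarise_detections(detections, score_threshold=0.5):
    """Count animals whose best-scoring category meets score_threshold.

    Hypothetical helper: assumes each detection has a `categories` list whose
    entries expose `category_name` and `score`, as tflite-support results do.
    """
    counts = Counter()
    for detection in detections:
        if not detection.categories:
            continue
        # Pick the highest-scoring category rather than assuming list order.
        best = max(detection.categories, key=lambda category: category.score)
        if best.score >= score_threshold:
            counts[best.category_name] += 1
    return counts


if __name__ == "__main__":
    # Stand-in detections for illustration; real ones come from the detector.
    def fake(name, score):
        return SimpleNamespace(categories=[SimpleNamespace(category_name=name, score=score)])

    print(summarise_detections([fake("cat", 0.9), fake("cat", 0.7), fake("elephant", 0.3)]))
    # prints Counter({'cat': 2})
```

The threshold trades missed animals against false alarms, so it is worth tuning against your own camera footage.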

Download the EfficientDet-Lite model

Download a pre-trained TensorFlow Lite detection model, which can detect elephants, cats, etc.
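A failed download can silently leave an HTML error page where the model should be. After running the curl command that follows, you can run a quick sanity check. The sketch below tests for the FlatBuffer file identifier TFL3, which TensorFlow Lite model files carry at byte offset 4. The looks_like_tflite helper is hypothetical.

```python
from pathlib import Path

# TensorFlow Lite models are FlatBuffers whose 4-byte file identifier,
# stored at byte offset 4, is b"TFL3".
TFLITE_IDENTIFIER = b"TFL3"


def looks_like_tflite(path):
    """Return True if the file at `path` carries the TFLite FlatBuffer identifier."""
    with open(path, "rb") as f:
        header = f.read(8)
    return len(header) == 8 and header[4:8] == TFLITE_IDENTIFIER


if __name__ == "__main__":
    model = Path("efficientdet_lite0.tflite")
    if model.exists():
        print(model, "looks valid:", looks_like_tflite(model))
    else:
        print(model, "not found; run the curl command first")
```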

curl -L 'https://tfhub.dev/tensorflow/lite-model/efficientdet/lite0/detection/metadata/1?lite-format=tflite' -o efficientdet_lite0.tflite

Setup virtual Python environment

Use a virtual Python environment to keep dependencies separate.
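Once the environment is activated, you can confirm from Python that it is actually in use. A venv points sys.prefix at the environment directory, while sys.base_prefix still points at the base interpreter. Comparing the two is therefore a simple check. The in_virtualenv name below is a hypothetical helper.

```python
import sys


def in_virtualenv():
    """Return True when running inside a venv created with `python3 -m venv`.

    A venv sets sys.prefix to the environment directory, while
    sys.base_prefix still points at the base interpreter.
    """
    return sys.prefix != sys.base_prefix


if __name__ == "__main__":
    print("Python", sys.version.split()[0])
    print("Inside virtual environment:", in_virtualenv())
```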

python3 -m venv env

source env/bin/activate

python --version

pip --version

Install requirements

python3 -m pip install pip --upgrade

python3 -m pip install -r requirements.txt

Fixes

Fix for Library not loaded: ... libusb-1.0.0.dylib

brew install libusb

Fix for TypeError: create_from_options(): incompatible function arguments.

pip install tflite-support==0.4.0

Run detection loop

Run the detection loop using the camera

python3 detect.py

Run the detection loop using the sample video file

python detect.py --videoFile ./cat.mov

Setup Kafka, Kafka Connect and Kibana

These instructions are for running Kafka, Kafka Connect and Kibana locally using Docker containers.

Download Kafka Connect sinks

Download the HTTP and Elasticsearch Kafka Connect sink connectors (note: these are licensed) and unzip them into the connect_plugin directory.

cd connect_plugin

unzip ~/Downloads/confluentinc-kafka-connect-http-*.zip

unzip ~/Downloads/confluentinc-kafka-connect-elasticsearch-*.zip

cd ..

Start Docker containers

docker compose up -d

Configure brokers

Edit detect.ini to set the Kafka broker address and the camera name.
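The exact contents of detect.ini aren't shown here. The sketch below therefore assumes a hypothetical layout, with a [kafka] section holding bootstrap_servers and a [camera] section holding name, and reads it with the standard library's configparser. Check the real file's section and key names before reusing this.

```python
import configparser

# Hypothetical detect.ini layout; the real file's sections and keys may differ.
EXAMPLE_INI = """
[kafka]
bootstrap_servers = localhost:9092

[camera]
name = backyard
"""


def load_settings(text):
    """Return (bootstrap_servers, camera_name) parsed from INI-formatted text."""
    parser = configparser.ConfigParser()
    parser.read_string(text)
    servers = parser.get("kafka", "bootstrap_servers")
    # Fall back to a generic name if no [camera] section is present.
    camera = parser.get("camera", "name", fallback="camera")
    return servers, camera


if __name__ == "__main__":
    print(load_settings(EXAMPLE_INI))  # prints ('localhost:9092', 'backyard')
```

For the real file, pass the text of detect.ini to load_settings, or call parser.read("detect.ini") directly.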

Check connector plugins are available

Check that the HTTP and Elasticsearch Kafka Connect sink connectors are available
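The same check can also be scripted. Kafka Connect's GET /connector-plugins endpoint returns a JSON list of objects with class, type and version fields. The sketch below reports which of the two sink connector classes are missing. As with the curl command, it assumes the Connect REST API is on localhost:8083.

```python
import json
import urllib.request

# Connector classes provided by the Confluent HTTP and Elasticsearch sink archives.
REQUIRED_PLUGINS = {
    "io.confluent.connect.http.HttpSinkConnector",
    "io.confluent.connect.elasticsearch.ElasticsearchSinkConnector",
}


def missing_plugins(plugins_json):
    """Return the required connector classes absent from a /connector-plugins response."""
    installed = {plugin["class"] for plugin in json.loads(plugins_json)}
    return REQUIRED_PLUGINS - installed


def fetch_plugins(base_url="http://localhost:8083"):
    """Fetch the raw JSON list of installed plugins from the Kafka Connect REST API."""
    with urllib.request.urlopen(f"{base_url}/connector-plugins", timeout=5) as response:
        return response.read().decode("utf-8")


if __name__ == "__main__":
    try:
        print("Missing:", missing_plugins(fetch_plugins()) or "none")
    except OSError as err:  # URLError is a subclass of OSError
        print("Could not reach Kafka Connect:", err)
```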

curl -s "http://localhost:8083/connector-plugins" | jq '.'

Setup topics

Create Kafka topics and populate them with dummy data

./01_create_topic

Setup ksqlDB streams

Connect to the ksqlDB server and create the streams
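If you'd rather not use the interactive CLI, ksqlDB also accepts statements over its REST API. You POST a JSON body holding the statements to the /ksql endpoint. The sketch below builds such a request. It assumes the server listens on ksqlDB's default port 8088, and it uses SHOW STREAMS; as a harmless example statement. The same approach could carry the statements from 02_ksql.ksql, assuming they are plain ksqlDB statements.

```python
import json
import urllib.request


def build_ksql_request(statements, server="http://localhost:8088"):
    """Build a POST request that runs ksqlDB statements via the /ksql REST endpoint."""
    body = json.dumps({"ksql": statements, "streamsProperties": {}}).encode("utf-8")
    return urllib.request.Request(
        f"{server}/ksql",
        data=body,
        headers={
            "Content-Type": "application/vnd.ksql.v1+json; charset=utf-8",
            "Accept": "application/vnd.ksql.v1+json",
        },
        method="POST",
    )


if __name__ == "__main__":
    request = build_ksql_request("SHOW STREAMS;")
    try:
        with urllib.request.urlopen(request, timeout=5) as response:
            print(json.loads(response.read()))
    except OSError as err:
        print("Could not reach ksqlDB:", err)
```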

ksql

run script './02_ksql.ksql';

Load Elastic Dynamic Templates

Load the Elasticsearch dynamic templates, which ensure fields suffixed with TIMESTAMP are created as dates
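For reference, a dynamic template of this kind maps any new field whose name ends in TIMESTAMP to the date type. The sketch below builds a composable index template (Elasticsearch 7.8 and later) containing such a rule. The template name and the animals*/zoo* index patterns are assumptions and are not read from 03_elastic_dynamic_template.

```python
import json

# Hypothetical template name and index patterns.
TEMPLATE_NAME = "kafka-timestamps"

index_template = {
    "index_patterns": ["animals*", "zoo*"],
    "template": {
        "mappings": {
            "dynamic_templates": [
                {
                    "timestamps_as_dates": {
                        # Any new field whose name ends in TIMESTAMP...
                        "match": "*TIMESTAMP",
                        # ...is mapped as a date (ISO-8601 strings or epoch millis by default).
                        "mapping": {"type": "date"},
                    }
                }
            ]
        }
    },
}

if __name__ == "__main__":
    # Body for: PUT http://localhost:9200/_index_template/<TEMPLATE_NAME>
    print(json.dumps(index_template, indent=2))
```

The template has to be in place before the indexes are first created; existing field mappings are not changed retroactively.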

./03_elastic_dynamic_template

Setup Kafka Connect sink to Elastic

Send the animals and zoo topics from Kafka to Elasticsearch
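You create the Elasticsearch sink by POSTing a name and config to Kafka Connect's /connectors endpoint. The sketch below shows a plausible body using standard Confluent Elasticsearch sink settings. It assumes the topics are literally named animals and zoo. The connector name and the connection.url (a Docker Compose service called elasticsearch) are hypothetical, and 04_kafka_to_elastic_sink may set further options.

```python
import json

# Hypothetical connector name and Elasticsearch URL; the topic list follows
# the animals and zoo topics this README sends to Elasticsearch.
connector = {
    "name": "sink-elastic-animals",
    "config": {
        "connector.class": "io.confluent.connect.elasticsearch.ElasticsearchSinkConnector",
        "topics": "animals,zoo",
        "connection.url": "http://elasticsearch:9200",
        # Use topic+partition+offset as the document id rather than the record key.
        "key.ignore": "true",
        # Ignore any record schema and let Elasticsearch infer the mappings,
        # so dynamic templates (e.g. for *TIMESTAMP fields) can apply.
        "schema.ignore": "true",
    },
}

if __name__ == "__main__":
    # Body for: POST http://localhost:8083/connectors (Content-Type: application/json)
    print(json.dumps(connector, indent=2))
```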

./04_kafka_to_elastic_sink

Create indexes in Kibana

Create the index patterns in Kibana
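In Kibana 7.x, index patterns can also be created through the saved objects API (POST /api/saved_objects/index-pattern/<id>). The sketch below builds such a request. Kibana requires the kbn-xsrf header on write requests. The pattern id, title and EVENT_TIMESTAMP time field are hypothetical.

```python
import json
import urllib.request


def build_index_pattern_request(pattern_id, title, time_field, kibana="http://localhost:5601"):
    """Build a Kibana saved-objects API request that creates an index pattern."""
    body = {"attributes": {"title": title, "timeFieldName": time_field}}
    return urllib.request.Request(
        f"{kibana}/api/saved_objects/index-pattern/{pattern_id}",
        data=json.dumps(body).encode("utf-8"),
        # Kibana rejects write requests that lack the kbn-xsrf header.
        headers={"kbn-xsrf": "true", "Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    # Hypothetical id, pattern and time field; match them to your own indexes.
    req = build_index_pattern_request("animals", "animals*", "EVENT_TIMESTAMP")
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
```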

./05_create_kibana_metadata.sh

You can check the Kibana index patterns by visiting http://localhost:5601/app/management/kibana/indexPatterns

Create a visualization

Go to http://localhost:5601/app/lens to create a visualization and dashboard.

Alternatively, go to http://localhost:5601/app/management/kibana/objects, select Import saved objects and upload 06_kibana_export.ndjson.

Setup Telegram

To call Telegram, you must create and use your own bot. Although we're not using this connector, these instructions for creating a bot are great steps to follow. You'll need to know the bot URL and chat_id.
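Once you have a bot, alerts are sent with the Telegram Bot API's sendMessage method, which takes a chat_id and text. The sketch below builds that request. The bot URL has the form https://api.telegram.org/bot<token>. The token and chat_id values shown are placeholders that you must replace.

```python
import json
import urllib.request

# Placeholders: substitute your bot token (from BotFather) and your own chat_id.
BOT_URL = "https://api.telegram.org/bot<YOUR_BOT_TOKEN>"
CHAT_ID = "<YOUR_CHAT_ID>"


def build_alert(text, bot_url=BOT_URL, chat_id=CHAT_ID):
    """Build a Telegram Bot API sendMessage request carrying an alert text."""
    payload = {"chat_id": chat_id, "text": text}
    return urllib.request.Request(
        f"{bot_url}/sendMessage",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    request = build_alert("Rare animal spotted: elephant")
    print(request.full_url)
    # Uncomment once BOT_URL and CHAT_ID hold real values:
    # urllib.request.urlopen(request, timeout=10)
```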

Configure Kafka Connect sink to HTTP

cp 07_teaddybear-telegram-sink-example.json 07_teaddybear-telegram-sink.json

Then edit 07_teaddybear-telegram-sink.json, updating the bot URL and chat_id.

Setup Kafka Connect sink to HTTP

Establish the Kafka Connect sink to HTTP for Telegram

./07_kafka_to_telegram_sink

End to end

Now that everything is set up, it's time to run the detection loop with the Raspberry Pi producing results to Kafka. Pass the --enableKafka flag to write object detections to Kafka as events.
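With --enableKafka set, each detection becomes a Kafka message. The sketch below shows one way to encode a detection as JSON and publish it with a producer that behaves like confluent_kafka.Producer. The field names, the detection_event and publish helpers, and keying by camera name are all assumptions rather than a description of detect.py.

```python
import json
import time


def detection_event(camera, label, score, now_ms=None):
    """Encode one detection as a JSON message value (hypothetical field names)."""
    if now_ms is None:
        now_ms = int(time.time() * 1000)  # epoch milliseconds
    event = {
        "camera_name": camera,
        "object_name": label,
        "score": round(float(score), 3),
        "detection_timestamp": now_ms,
    }
    return json.dumps(event).encode("utf-8")


def publish(producer, topic, camera, label, score):
    """Send one detection, keyed by camera name.

    With Kafka's default partitioner, records sharing a key land on the same
    partition, so each camera's events keep their order. `producer` is assumed
    to behave like confluent_kafka.Producer.
    """
    producer.produce(
        topic,
        key=camera.encode("utf-8"),
        value=detection_event(camera, label, score),
    )
    producer.poll(0)  # serve delivery callbacks without blocking


if __name__ == "__main__":
    print(detection_event("pi-camera", "cat", 0.87, now_ms=0).decode("utf-8"))
```

With a real producer, call producer.flush() before the program exits so that buffered messages are delivered.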

Run the detection loop using the camera

python3 detect.py --enableKafka

Run the detection loop using the sample video file

python detect.py --videoFile ./cat.mov --enableKafka
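The commands in this README rely on two command-line flags, --videoFile and --enableKafka. Below is a minimal argparse sketch of how they could be declared. The help text and defaults are assumptions, and the real detect.py may define further options.

```python
import argparse


def parse_args(argv=None):
    """Parse the --videoFile and --enableKafka flags used in this README (sketch)."""
    parser = argparse.ArgumentParser(description="Run the animal detection loop.")
    parser.add_argument(
        "--videoFile",
        default=None,
        help="Read frames from this video file instead of the camera.",
    )
    parser.add_argument(
        "--enableKafka",
        action="store_true",
        help="Write each object detection to Kafka as an event.",
    )
    return parser.parse_args(argv)


if __name__ == "__main__":
    args = parse_args()
    source = args.videoFile or "camera"
    print(f"source={source} kafka={'on' if args.enableKafka else 'off'}")
```

argparse keeps the camelCase option names as attribute names, so the values are read as args.videoFile and args.enableKafka.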