You've started to investigate hypothesis testing, p-values and their use for accepting or rejecting the null hypothesis. With this, the power of a statistical test measures an experiment's ability to detect a difference, when one exists. In the case of testing whether a coin is fair, the power of our statistical test would be the probability of rejecting the null hypothesis "this coin is fair" when the coin was unfair. As you might assume, the power of this statistical test would thus depend on several factors including our p-value threshold for rejecting the null hypothesis, the size of our sample and the 'level of unfairness' of the coin in question.

You will be able to:

- Define power in relation to p-value and the null hypothesis

- Describe the impact of sample size and effect size on power

- Perform power calculation using SciPy and Python

- Demonstrate the combined effect of sample size and effect size on statistical power using simulations

The power of a statistical test is defined as the probability of rejecting the null hypothesis, given that it is indeed false. As with any probability, the power of a statistical test, therefore, ranges from 0 to 1, with 1 being a perfect test that guarantees rejecting the null hypothesis when it is indeed false.

Intrinsically, this is related to

Note: Ideally,

$\alpha$ and$\beta$ would both be minimized, but this is often costly, impractical or impossible depending on the scenario and required sample sizes.

The effect size is the magnitude of the difference you are testing between the two groups. Thus far, you've mainly been investigating the mean of a sample. For example, after flipping a coin n number of times, you've investigated using a t-test to determine whether the coin is a fair coin (p(heads)=0.5). To do this, you compared the mean of the sample to that of another sample, if comparing coins, or to a know theoretical distribution. Similarly, you might compare the mean income of a sample population to that of a census tract to determine if the populations are statistically different. In such cases, Cohen's D is typically the metric used as the effect size.

Cohen's D is defined as: $ d = \frac{m_1 - m_2}{s}$, where

When looking at the difference of means of two populations, Cohen's D is equal to the difference of the sample means divided by the pooled standard deviation of the samples. The pooled standard deviation of the samples is the average spread of all data points in the two samples around their group mean.

Since

- alpha value

- effect size

- sample size

A fantastic visual representation of these values' effect on one another can be found on Kristoffer Magnusson's website.

Let's look at how power might change in the context of varying effect size. To start, imagine the scenario of trying to detect whether or not a coin is fair. In this scenario, the null-hypothesis would be

To start, you might choose an alpha value that you are willing to accept such as

For example, if we wish to state the alternative hypothesis

$ d = \frac{m_1 - m_2}{s}$

$ d = \frac{.55 - .5}{s}$

Furthermore, since we are dealing with a binomial variable, the standard deviation of the sample should follow the formula

So some potential effect size values for various scenarios might look like this:

import numpy as np

import pandas as pdm1 = .55

m2 = .5

p = m2

rows = []

for n in [10, 20, 50, 500]:

std = np.sqrt(n*p*(1-p))

d = (m1-m2)/std

rows.append({'Effect_Size': d, 'STD': std, 'Num_observations': n})

print('Hypothetical effect sizes for p(heads)=.55 vs p(heads)=.5')

pd.DataFrame(rows)Hypothetical effect sizes for p(heads)=.55 vs p(heads)=.5

.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

| Effect_Size | Num_observations | STD | |

|---|---|---|---|

| 0 | 0.031623 | 10 | 1.581139 |

| 1 | 0.022361 | 20 | 2.236068 |

| 2 | 0.014142 | 50 | 3.535534 |

| 3 | 0.004472 | 500 | 11.180340 |

As a general rule of thumb, all of these effect sizes are quite small. here's the same idea expanded to other alternative hypotheses:

m2 = .5

rows = {}

for n in [10, 20, 50, 500]:

temp_dict = {}

for m1 in [.51, .55, .6, .65, .7, .75, .8, .85, .9]:

p = m1

std = np.sqrt(n*p*(1-p))

d = (m1-m2)/std

temp_dict[m1] = d

rows[n] = temp_dict

print('Hypothetical effect sizes for various alternative hypotheses')

df = pd.DataFrame.from_dict(rows, orient='index')

# df.index = [10,20,50, 500]

# df.index.name = 'Sample_Size'

# df.columns.name = 'Alternative Hypothesis'

dfHypothetical effect sizes for various alternative hypotheses

.dataframe tbody tr th {

vertical-align: top;

}

.dataframe thead th {

text-align: right;

}

| 0.51 | 0.55 | 0.6 | 0.65 | 0.7 | 0.75 | 0.8 | 0.85 | 0.9 | |

|---|---|---|---|---|---|---|---|---|---|

| 10 | 0.006326 | 0.031782 | 0.064550 | 0.099449 | 0.138013 | 0.182574 | 0.237171 | 0.309965 | 0.421637 |

| 20 | 0.004473 | 0.022473 | 0.045644 | 0.070321 | 0.097590 | 0.129099 | 0.167705 | 0.219179 | 0.298142 |

| 50 | 0.002829 | 0.014213 | 0.028868 | 0.044475 | 0.061721 | 0.081650 | 0.106066 | 0.138621 | 0.188562 |

| 500 | 0.000895 | 0.004495 | 0.009129 | 0.014064 | 0.019518 | 0.025820 | 0.033541 | 0.043836 | 0.059628 |

While a bit long winded, you can see that realistic effect sizes for this scenario could be anywhere from 0.05 (or lower) up to approximately .4.

Now that you have some parameter estimates for

As you've also seen, a common statistical test for comparing sample means is the t-test. Statsmodels has some convenient build in functions for calculating the power of a t-test and plotting power curves. Take a look:

from statsmodels.stats.power import TTestIndPower, TTestPower

import matplotlib.pyplot as plt

import seaborn as sns

%matplotlib inline

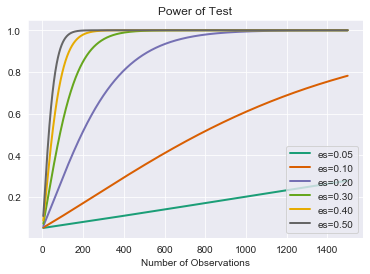

sns.set_style('darkgrid') # Nice background styling on plotspower_analysis = TTestIndPower()power_analysis.plot_power(dep_var='nobs',

nobs = np.array(range(5,1500)),

effect_size=np.array([.05, .1, .2,.3,.4,.5]),

alpha=0.05)

plt.show()As this should demonstrate, detecting small perturbances can be quite difficult!

Similarly, just because a t-test has an incredibly small p-value doesn't necessarily imply a strong statistical test. As is mentioned in the article Using Effect Size - or Why the P Value Is Not Enough, referenced below, using incredibly large sample sizes such as 22,000 can make even the most trivial effect size statistically significant. Realizing these reciprocal relationships and considering all 4 parameters: alpha, effect size, sample size, and power are all important when interpreting the results (such as the p-value) of a statistical test.

In addition to plotting a full curve, you can also calculate specific values. Simply don't specify one of the four parameters.

# Calculate power

power_analysis.solve_power(effect_size=.2, nobs1=80, alpha=.05)0.24175778678474177

# Calculate sample size required

power_analysis.solve_power(effect_size=.2, alpha=.05, power=.8)393.4056989990335

# Calculate minimum effect size to satisfy desired alpha and power as well as respect sample size limitations

power_analysis.solve_power(nobs1=25, alpha=.05, power=.8)0.8087077886680412

# Calculate alpha (less traditional)

power_analysis.solve_power(nobs1=25, effect_size=.3, power=.8)0.6613634273431555

You can also simulate your own data to verify results:

import scipy.stats as stats

def run_ttest_sim(p1, p2, std, nobs, alpha=0.05, n_sim=10**5):

"""p1 and p2 are the underlying means probabilities for 2 normal variables

Samples will be generated using these parameters."""

# Calculate Normalized Effect Size

effect_size = np.abs(p1-p2)/std

# Run a Simulation

# Initialize array to store results

p = (np.empty(n_sim))

p.fill(np.nan)

# Run a for loop for range of values in n_sim

for s in range(n_sim):

control = np.random.normal(loc= p1, scale=std, size=nobs)

experimental = np.random.normal(loc= p2, scale=std, size=nobs)

t_test = stats.ttest_ind(control, experimental)

p[s] = t_test[1]

num_null_rejects = np.sum(p < alpha)

power = num_null_rejects/n_sim

# Store results

stat_dict = {'alpha':alpha,

'nobs':nobs,

'effect_size':effect_size,

'power': power}

return stat_dict

run_ttest_sim(.5, .7, 1, 50){'alpha': 0.05,

'nobs': 50,

'effect_size': 0.19999999999999996,

'power': 0.16879}

And going back to the full stats model implementation for verification:

power_analysis.solve_power(nobs1=50, effect_size=0.19999999999999996, alpha=0.05)0.1676754863454749

power_analysis.solve_power(nobs1=50, effect_size=0.19999999999999996, power=0.16719)0.049779515826212185

power_analysis.solve_power(nobs1=50, power=0.16719, alpha=0.05)0.19959710069445308

power_analysis.solve_power(power=0.16719, effect_size=0.19999999999999996, alpha=0.05)49.80313313853301

- Statsmodels documentation

- Using Effect Size—or Why the P Value Is Not Enough

- Understanding Statistical Power and Significance Testing - an interactive visualization

In this lesson, you learned about the idea of "statistical power" and how sample size, alpha, and effect size impact the power of an experiment. Remember, the power of a statistical test is the probability of rejecting the null hypothesis when it is indeed false.