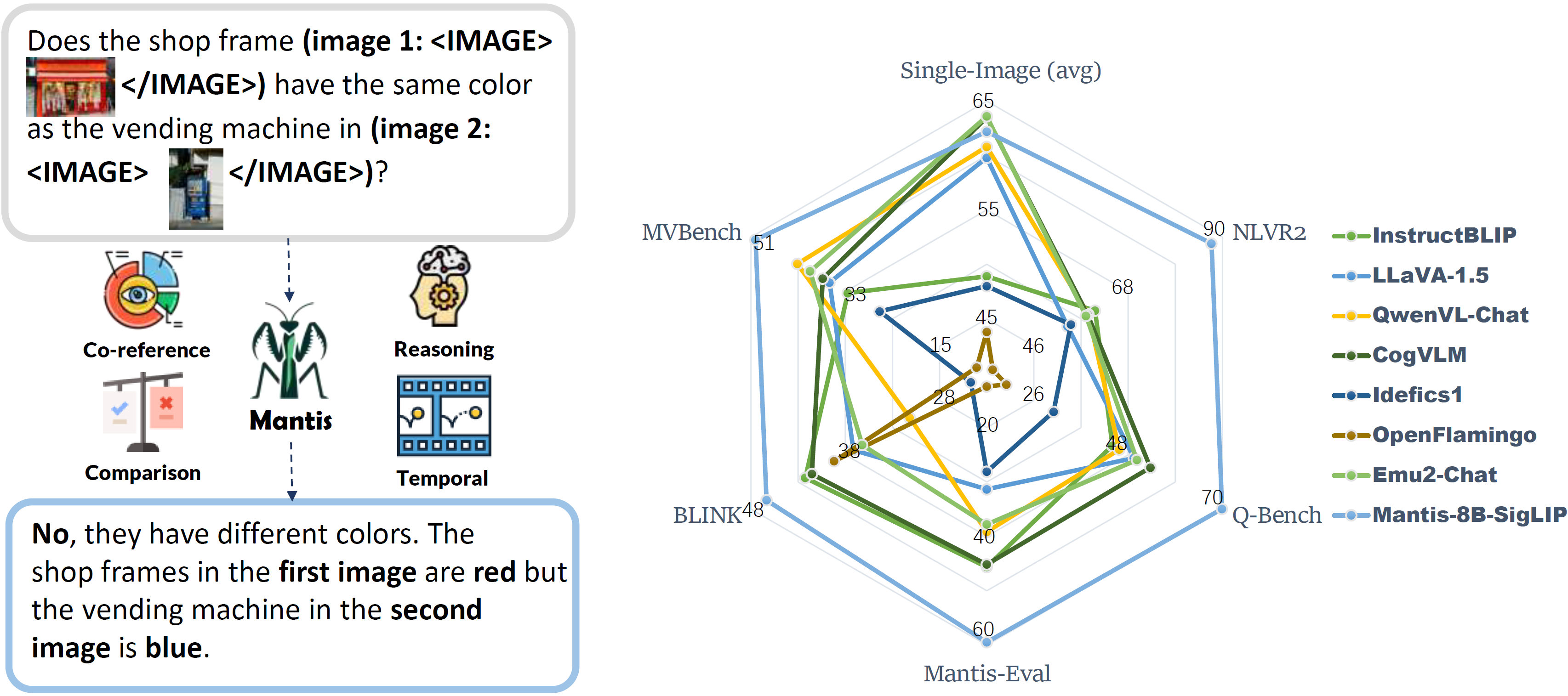

🤔 Recent years have witnessed a wide array of large multimodal models (LMMs) that effectively solve single-image vision-language tasks. However, their ability to solve multi-image vision-language tasks still needs improvement.

😦 Existing multi-image LMMs (e.g., OpenFlamingo, Emu, Idefics) mostly gain their multi-image ability through pre-training on hundreds of millions of noisy interleaved image-text examples from the web, which is neither efficient nor effective.

🔥 Therefore, we present Mantis, a LLaMA-3-based LMM that takes interleaved text and images as input, trained on Mantis-Instruct with academic-level resources (i.e., 36 hours on 16xA100-40G).

🚀 Mantis achieves state-of-the-art performance on 5 multi-image benchmarks (NLVR2, Q-Bench, BLINK, MVBench, Mantis-Eval) while maintaining strong single-image performance on par with CogVLM and Emu2.

- [2024-05-03] We have released our training code, dataset, and evaluation code to the community! Check the following sections for more details.

- [2024-05-02] We release the first multi-image-capable LMM, Mantis-8B, based on LLaMA3! Interact with Mantis-8B-SigLIP on Hugging Face Spaces or the Colab demo.

- [2024-05-02] Mantis's technical report is now available on arXiv. Kudos to the team!

```bash
conda create -n mantis python=3.10
conda activate mantis
pip install -e .
# install flash-attention
pip install flash-attn --no-build-isolation
```

You can run inference with the following command:

```bash
cd examples
python run_mantis.py
```

Install the requirements with the following command:

```bash
pip install -e ".[train,eval]"
cd mantis/train
```

Our training scripts follow the coding format and model structure of Hugging Face. Unlike the LLaVA GitHub repo, you can load our models directly from the Hugging Face model hub.

We support training Mantis on both the Fuyu architecture and the LLaVA architecture. You can train the model with the following commands:

Training Mantis based on LLaMA3 with CLIP/SigLIP encoder:

- Pretrain the Mantis-LLaMA3 multimodal projector on pretrain data (Stage 1):

```bash
bash scripts/pretrain_mllava.sh
```

- Fine-tune the pretrained Mantis-LLaMA3 on Mantis-Instruct (Stage 2):

```bash
bash scripts/train_mllava.sh
```

Training Mantis based on Fuyu-8B:

- Fine-tune Fuyu-8B on Mantis-Instruct to get Mantis-Fuyu:

```bash
bash scripts/train_fuyu.sh
```

Note:

- Our training scripts include bash commands that automatically infer the number of GPUs and the number of GPU nodes used for training, so you only need to modify the data config path and the base model.

- The training data will be downloaded automatically from Hugging Face when you run the training scripts.
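To illustrate what such automatic inference can look like, here is a minimal sketch. It is not copied from the Mantis scripts, which may do this differently. The helper `count_gpus` and the `NNODES` default are hypothetical names introduced for this example; `CUDA_VISIBLE_DEVICES` and `nvidia-smi -L` are the standard NVIDIA mechanisms they rely on.

```bash
#!/usr/bin/env bash
# Hypothetical helper (an assumption, not the Mantis implementation):
# print the number of GPUs visible to this process.
count_gpus() {
    if [ -n "${CUDA_VISIBLE_DEVICES:-}" ]; then
        # Count the comma-separated device IDs, e.g. "0,1,2" -> 3.
        echo "$CUDA_VISIBLE_DEVICES" | tr ',' '\n' | grep -c .
    elif command -v nvidia-smi >/dev/null 2>&1; then
        # `nvidia-smi -L` prints one line per GPU, each starting with "GPU".
        nvidia-smi -L | grep -c '^GPU'
    else
        # No GPU tooling found on this machine.
        echo 0
    fi
}

NUM_GPUS=$(count_gpus)
# Assumed convention: a single node unless the launcher exports NNODES.
NNODES="${NNODES:-1}"
echo "GPUs per node: ${NUM_GPUS}, nodes: ${NNODES}"
```

Restricting a run to a subset of devices then only requires setting `CUDA_VISIBLE_DEVICES` before launching, for example `CUDA_VISIBLE_DEVICES=0,1 bash scripts/train_mllava.sh`.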

See mantis/train/README.md for more details.

Check all the training scripts in mantis/train/scripts.

To reproduce our evaluation results, please check mantis/benchmark/README.md

- 🤗 Mantis-Instruct: 721K text-image interleaved datasets for multi-image instruction tuning

- 🤗 Mantis-Eval: 217 high-quality examples for evaluating LMMs' multi-image skills

We provide the following models in the 🤗 Hugging Face model hub:

The following intermediate checkpoints, saved after pre-training the multimodal projectors, are also available for reproducibility. Please note that these checkpoints still need further fine-tuning on Mantis-Instruct to be useful. They are not working models.

- Thanks to the LLaVA and LLaVA-hf teams for providing the LLaVA codebase and Hugging Face compatibility!

- Thanks to Haoning Wu for providing the MVBench evaluation code!

```bibtex
@article{jiang2024mantis,
  title={MANTIS: Interleaved Multi-Image Instruction Tuning},
  author={Jiang, Dongfu and He, Xuan and Zeng, Huaye and Wei, Cong and Ku, Max and Liu, Qian and Chen, Wenhu},
  journal={arXiv preprint arXiv:2405.01483},
  year={2024}
}
```