This project is a conceptual Node.js analytics web application for a health records system, designed to showcase best-in-class integration of modern cloud technology with legacy mainframe code.

Summit Health is a conceptual healthcare and insurance company. It has been around a long time and holds hundreds of thousands of patient records in a SQL database connected to a zOS mainframe.

Summit's health records look very similar to the health records of most insurance companies.

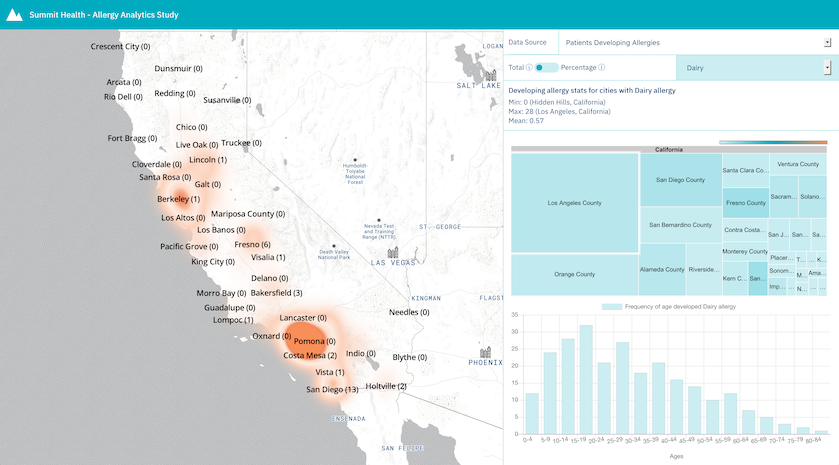

Here's a view a data analyst might see when they interact with the Summit Health Analytics Application:

Summit has recently started to explore how data science and analytics on some of its patient records might surface interesting insights. There is a lot of talk about this among big data companies.

Summit has also heard a lot about cloud computing. There is a lot of legacy code in the mainframe, and it works well for now, but Summit sees a complementary opportunity to explore some data science and analytics in the cloud.

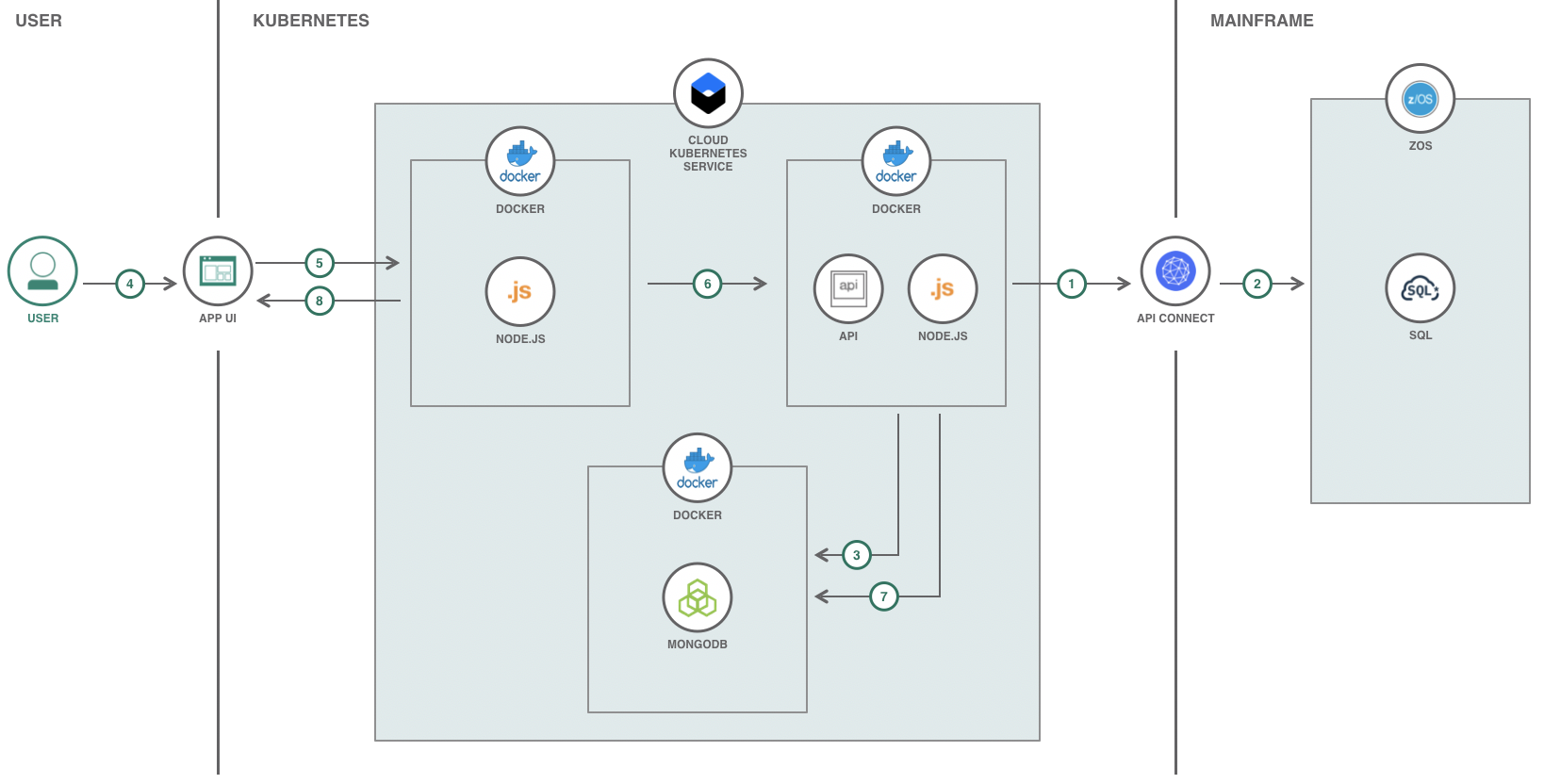

Their CTO sees an architecture for Summit Health like this:

- The Data Service API acts as a data pipeline. When triggered, it updates the data lake with the latest health records data by calling the API Connect APIs associated with the zOS Mainframe.

- The API Connect APIs process the relevant health records data from the zOS Mainframe data warehouse and send it through the data pipeline.

- The Data Service data pipeline processes the zOS Mainframe data warehouse data and updates the MongoDB data lake.

- The user interacts with the UI to view and analyze analytics.

- Node.js handles the functionality of the App UI that the user interacts with, and it is where the API calls are initiated.

- The Node.js data service processes the API calls.

- The data is gathered from the MongoDB data lake in response to the API calls.

- The App UI handles the responses from the API calls.
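The update step in the flow above can be pictured as an upsert keyed on a record identifier. The following is a minimal sketch, not the project's actual implementation: `upsertRecords` and the `patientId` field are hypothetical names, and a plain `Map` stands in for the MongoDB data lake so the example runs without a database.

```javascript
// Hypothetical sketch: apply a batch of health records to a data lake.
// `lake` is a Map standing in for the MongoDB data lake (assumption).
// `patientId` is an assumed key name, not taken from the project.
function upsertRecords(lake, records) {
  let inserted = 0;
  let updated = 0;
  for (const record of records) {
    // A record whose key already exists is counted as an update.
    if (lake.has(record.patientId)) {
      updated += 1;
    } else {
      inserted += 1;
    }
    // Map.set either adds the record or replaces the stored one.
    lake.set(record.patientId, record);
  }
  return { inserted, updated, total: lake.size };
}
```

Running the same batch twice leaves the lake unchanged in size, which is the property a repeated pipeline trigger would likely rely on.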

Follow these steps to set up and run this code pattern locally and on the Cloud. The steps are described in detail below.

Clone the summit-health-analytics repo locally. In a terminal, run:

$ git clone https://github.com/IBM/summit-health-analytics

$ cd summit-health-analytics

For running these services locally without Docker containers, the following will be needed:

- A Mapbox access token is needed to make the API calls that populate the Mapbox map.

- Assign the access token to `mapbox.accessToken` in `/data-service/properties.ini` and to `mapboxAccessToken` in `/web/public/javascripts/properties.js`.
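Before starting the services, it can help to confirm that the token was actually saved. The sketch below checks INI text for a non-empty `mapbox.accessToken`. The exact layout of `properties.ini` is an assumption here: the helper accepts either a `[mapbox]` section with an `accessToken` key or a flat `mapbox.accessToken=` line. `parseIni` and `hasMapboxToken` are hypothetical helpers, not part of the project.

```javascript
// Hypothetical helper: collect INI entries as "section.key" (or "key" when no section is open).
function parseIni(text) {
  const values = {};
  let section = "";
  for (const rawLine of text.split(/\r?\n/)) {
    const line = rawLine.trim();
    // Skip blank lines and comments.
    if (line === "" || line.startsWith(";") || line.startsWith("#")) continue;
    const header = line.match(/^\[(.+)\]$/);
    if (header) {
      section = header[1].trim();
      continue;
    }
    const eq = line.indexOf("=");
    if (eq === -1) continue; // Not a key=value line.
    const key = line.slice(0, eq).trim();
    const value = line.slice(eq + 1).trim();
    values[section ? `${section}.${key}` : key] = value;
  }
  return values;
}

// Returns true when a non-empty mapbox.accessToken is present in the INI text.
function hasMapboxToken(iniText) {
  const token = parseIni(iniText)["mapbox.accessToken"];
  return typeof token === "string" && token.length > 0;
}
```

To use it, read the file with `fs.readFileSync("data-service/properties.ini", "utf8")` and pass the contents to `hasMapboxToken`.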

If your data source for this application is on a zOS Mainframe, follow these steps to populate the data lake and run the application:

- Assign the API Connect URL to `zsystem.api` in `/data-service/properties.ini`.

- Start the application by running `docker-compose up --build` in this repo's root directory.

- Once the containers are created and the application is running, use the Open API Doc (Swagger) at `http://localhost:3000` and API.md for instructions on how to use the APIs.

- Run `curl localhost:3000/api/v1/update -X PUT` to connect to the zOS Mainframe and populate the data lake. For information on the data lake and data service, read the data service README.md.

- Once the data has been populated in the data lake, use `http://localhost:4000` to access the Summit Health Analytics UI. For information on the analytics data and UI, read the web README.md.
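The update call can also be made from Node.js instead of `curl`. This is a minimal sketch, assuming Node.js 18 or later for the global `fetch`. `buildUpdateRequest` and `triggerUpdate` are hypothetical helper names, and the shape of the endpoint's response body is not assumed, so only the HTTP status is inspected.

```javascript
// Build the request for the data service's update endpoint (PUT /api/v1/update).
function buildUpdateRequest(baseUrl) {
  const url = new URL("/api/v1/update", baseUrl).toString();
  return { url, options: { method: "PUT" } };
}

// Send the request and return the HTTP status.
// `fetchImpl` is injectable so the call can be exercised without a running service;
// by default it uses the global fetch available in Node.js 18 and later (assumption).
async function triggerUpdate(baseUrl = "http://localhost:3000", fetchImpl = globalThis.fetch) {
  const { url, options } = buildUpdateRequest(baseUrl);
  const response = await fetchImpl(url, options);
  if (!response.ok) {
    throw new Error(`Update failed with HTTP status ${response.status}`);
  }
  return response.status;
}
```

With the containers running, `triggerUpdate().then(console.log)` would send the same request as the `curl` command above.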

If you do not have a data source for this application and would like to generate mock data, follow these steps to populate the data lake and run the application:

- Start the application by running `docker-compose up --build` in this repo's root directory.

- Once the containers are created and the application is running, use the Open API Doc (Swagger) at `http://localhost:3000` and API.md for instructions on how to use the APIs.

- Use the provided `generate/generate.sh` script to generate and populate data. Read README.md for instructions on how to use the script. For information on the data lake and data service, read the data service README.md.

- Once the data has been populated in the data lake, use `http://localhost:4000` to access the Summit Health Analytics UI. For information on the analytics data and UI, read the web README.md.

- To allow changes to the Data Service or the UI, create a repo on Docker Cloud to which the modified containers will be pushed.

NOTE: If a new repo is used for the Docker containers, the container `image` will need to be changed to the name of the new repo in deploy-dataservice.yml and/or deploy-webapp.yml.

$ export DOCKERHUB_USERNAME=<your-dockerhub-username>

$ docker build -t $DOCKERHUB_USERNAME/summithealthanalyticsdata:latest data-service/

$ docker build -t $DOCKERHUB_USERNAME/summithealthanalyticsweb:latest web/

$ docker login

$ docker push $DOCKERHUB_USERNAME/summithealthanalyticsdata:latest

$ docker push $DOCKERHUB_USERNAME/summithealthanalyticsweb:latest

- Provision the IBM Cloud Kubernetes Service and follow the set of instructions for creating a Container and Cluster based on your cluster type, `Standard` vs `Lite`.

For a Lite cluster:

- Run `bx cs workers mycluster` and locate the `Public IP`. This IP is used to access the Data Service API and the UI. Update the `env` values in both deploy-dataservice.yml and deploy-webapp.yml to the `Public IP`.

- To deploy the services to the IBM Cloud Kubernetes Service, run:

$ kubectl apply -f deploy-mongodb.yml

$ kubectl apply -f deploy-dataservice.yml

$ kubectl apply -f deploy-webapp.yml

Confirm the services are running (this may take a minute):

$ kubectl get pods

- Use `https://PUBLIC_IP:32001` to access the UI and the Open API Doc (Swagger) at `https://PUBLIC_IP:32000` for instructions on how to make API calls.

For a Standard cluster:

- Run `bx cs cluster-get <CLUSTER_NAME>` and locate the `Ingress Subdomain` and `Ingress Secret`. The subdomain is the domain of the URL used to access the Data Service and UI on the Cloud. Update the `env` values in both deploy-dataservice.yml and deploy-webapp.yml to the `Ingress Subdomain`. In addition, update the `host` and `secretName` in ingress-dataservice.yml and ingress-webapp.yml to the `Ingress Subdomain` and `Ingress Secret`.

- To deploy the services to the IBM Cloud Kubernetes Service, run:

$ kubectl apply -f deploy-mongodb.yml

$ kubectl apply -f deploy-dataservice.yml

$ kubectl apply -f deploy-webapp.yml

Confirm the services are running (this may take a minute):

$ kubectl get pods

Update the protocol being used to https:

$ kubectl apply -f ingress-dataservice.yml

$ kubectl apply -f ingress-webapp.yml

- Use `https://<INGRESS_SUBDOMAIN>` to access the UI and the Open API Doc (Swagger) at `https://api.<INGRESS_SUBDOMAIN>` for instructions on how to make API calls.
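The two Kubernetes setups expose the services at different addresses: a worker's public IP with ports 32001 (UI) and 32000 (API), or an Ingress subdomain with an `api.` prefix for the API. The sketch below collects both URL patterns from this README in one place. `serviceUrls` is a hypothetical helper, not part of the project.

```javascript
// Hypothetical helper: derive the UI and API (Swagger) URLs for a deployment.
// Pass `ingressSubdomain` for Ingress-based access, or `publicIp` for access by worker IP.
function serviceUrls({ publicIp, ingressSubdomain }) {
  if (ingressSubdomain) {
    return {
      ui: `https://${ingressSubdomain}`,
      api: `https://api.${ingressSubdomain}`,
    };
  }
  if (publicIp) {
    // Ports 32001 (UI) and 32000 (API) are the ones given in this README.
    return {
      ui: `https://${publicIp}:32001`,
      api: `https://${publicIp}:32000`,
    };
  }
  throw new Error("Provide either publicIp or ingressSubdomain");
}
```

For example, `serviceUrls({ publicIp: "203.0.113.10" })` yields the pair of URLs to open after `kubectl get pods` shows the pods running. The IP here is a documentation placeholder.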

1. Provision two SDK for Node.js applications. One will be for `./data-service` and the other will be for `./web`.

2. Provision a Compose for MongoDB database.

3. Update the following in the manifest.yml file:

   - `name` for both Cloud Foundry application names provisioned from Step 1.

   - `services` with the name of the MongoDB service provisioned from Step 2.

   - `HOST_IP` and `DATA_SERVER` with the host name and domain of the `data-service` from Step 1.

   - `MONGODB` with the HTTPS Connection String of the MongoDB provisioned from Step 2. This can be found under Manage > Overview of the database dashboard.

4. Connect the Compose for MongoDB database with the data service Node.js app by going to Connections on the dashboard of the data service app provisioned and clicking Create Connection. Locate the Compose for MongoDB database you provisioned and press connect.

5. To deploy the services to IBM Cloud Foundry, go to one of the dashboards of the apps provisioned from Step 1 and follow the Getting Started instructions for connecting and logging in to IBM Cloud from the console (Step 3 of Getting Started). Once logged in, run `ibmcloud app push` from the root directory.

6. Use `https://<WEB-HOST-NAME>.<WEB-DOMAIN>` to access the UI and the Open API Doc (Swagger) at `https://<DATA-SERVICE-HOST-NAME>.<DATA-SERVICE-DOMAIN>` for instructions on how to make API calls.

This code pattern is licensed under the Apache License, Version 2. Separate third-party code objects invoked within this code pattern are licensed by their respective providers pursuant to their own separate licenses. Contributions are subject to the Developer Certificate of Origin, Version 1.1 and the Apache License, Version 2.