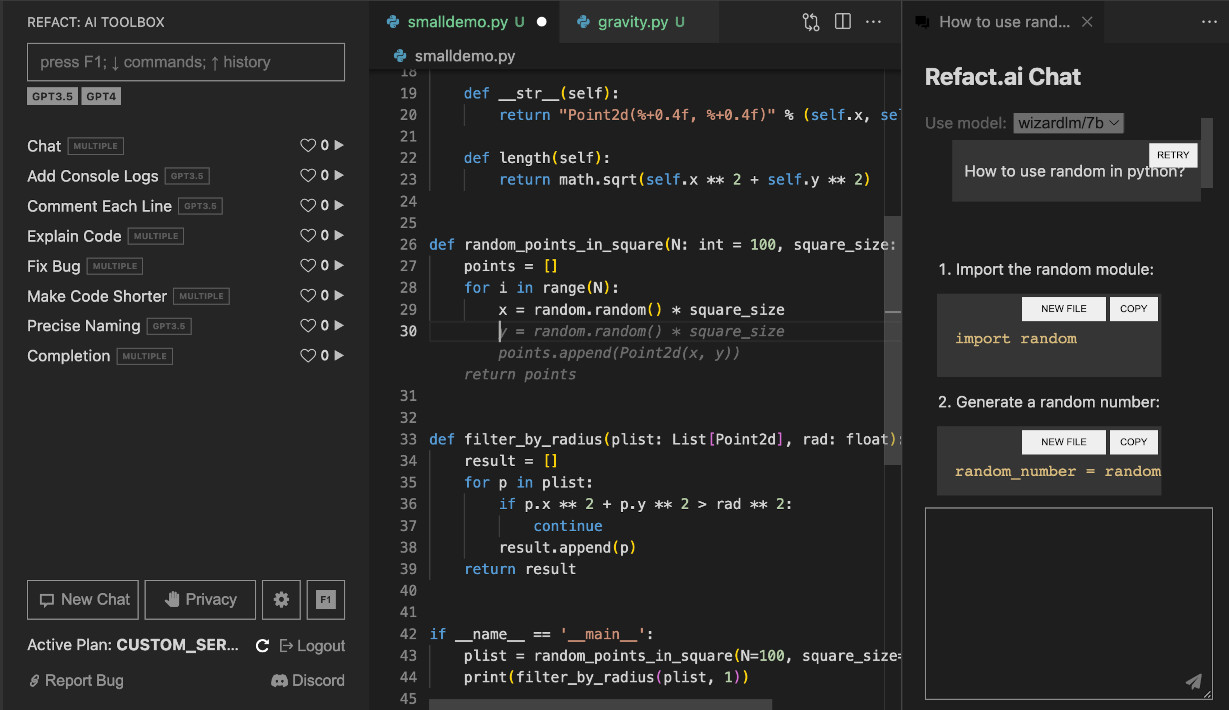

Refact is an open-source Copilot alternative available as a self-hosted or cloud option.

- Autocompletion powered by best-in-class open-source code models

- Context-aware chat on a current file

- Functions to refactor, explain, analyse, and optimise code, and to fix bugs

- Fine-tuning on your codebase (Beta, self-hosted only); see the docs

- Context-aware chat on entire codebase

Download Refact for VS Code or JetBrains.

You can start using Refact Cloud immediately: just create an account at https://refact.ai/.

Instructions below are for the self-hosted version.

The easiest way to run the self-hosted server is to use a pre-built Docker image.

Install Docker with NVIDIA GPU support. On Windows, you need to install WSL 2 first.

Run the Docker container with the following command:

docker run -d --rm -p 8008:8008 -v perm-storage:/perm_storage --gpus all smallcloud/refact_self_hosting

`perm-storage` is a volume that is mounted inside the container. All the configuration files, downloaded weights, and logs are stored here.

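To upgrade, stop the running container with `docker kill XXX`, where XXX is the container ID or name (the `perm-storage` volume will retain your data), run `docker pull smallcloud/refact_self_hosting`, and then start the container again with the same `docker run` command.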
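The upgrade steps can be sketched as a small shell function. This is only an illustration built from the commands shown in this README: the names `refact_upgrade`, `run`, and `DRY_RUN` are hypothetical helpers introduced here, not part of Refact or Docker, and the sketch assumes the container was started with the `docker run` command given earlier.

```shell
#!/bin/sh
# Sketch of the upgrade steps; refact_upgrade, run, and DRY_RUN are
# hypothetical names introduced here, not part of Refact or Docker.
IMAGE="smallcloud/refact_self_hosting"

# Execute a command, or only print it when DRY_RUN=1.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

refact_upgrade() {
    container="${1:-}"   # container ID or name, as listed by `docker ps`
    if [ -z "$container" ]; then
        echo "usage: refact_upgrade CONTAINER" >&2
        return 2
    fi
    # Stop the old container; the perm-storage volume keeps its data.
    run docker kill "$container" || return 1
    # Fetch the newest image, then start it with the same flags as before.
    run docker pull "$IMAGE" || return 1
    run docker run -d --rm -p 8008:8008 -v perm-storage:/perm_storage \
        --gpus all "$IMAGE"
}
```

After sourcing the file, call `refact_upgrade XXX` with the container ID from `docker ps`. With `DRY_RUN=1` set, the function only prints the three commands, which is a cheap way to review them before running anything.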

Now you can visit http://127.0.0.1:8008 to see the server Web GUI.
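If you start the server from a script, a short polling loop can tell you when the Web GUI address begins to answer. The sketch below rests on assumptions: that the GUI answers a plain GET on `/` with a success status, and that `curl` is installed. The function name `wait_for_refact` and its defaults (30 attempts, 2 seconds apart) are hypothetical choices for this example.

```shell
#!/bin/sh
# Poll the Web GUI address until it answers or the attempts run out.
# Assumption: the GUI answers a plain GET on / with a success status.
# wait_for_refact is a hypothetical helper, not part of Refact.
wait_for_refact() {
    url="${1:-http://127.0.0.1:8008}"
    attempts="${2:-30}"
    i=0
    while [ "$i" -lt "$attempts" ]; do
        # -f makes curl return non-zero on HTTP errors; -sS keeps it
        # quiet except for real errors; the response body is discarded.
        if curl -fsS -o /dev/null "$url"; then
            echo "Refact server is up at $url"
            return 0
        fi
        i=$((i + 1))
        sleep 2
    done
    echo "No response from $url after $attempts attempts" >&2
    return 1
}
```

Calling `wait_for_refact` with no arguments checks the default address from this README; pass a different URL or attempt count as the first and second arguments if your setup differs.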

Docker commands super short refresher

Add yourself to the docker group to run docker without sudo (works for Linux):

sudo usermod -aG docker {your user}

List all containers:

docker ps -a

Start and stop existing containers (stop doesn't remove them):

docker start XXX

docker stop XXX

Show messages from a container:

docker logs -f XXX

Remove a container and all its data (except data inside a volume):

docker rm XXX

Inspect or delete a docker volume:

docker volume inspect VVV

docker volume rm VVV

See CONTRIBUTING.md for installation without a docker container.

Go to the plugin settings and set a custom inference URL: http://127.0.0.1:8008

JetBrains

Settings > Tools > Refact.ai > Advanced > Inference URL

VS Code

Extensions > Refact.ai Assistant > Settings > Infurl

Under the hood, Refact uses its own models and the best open-source models.

At the moment, you can choose between the following models:

| Model | Completion | Chat | AI Toolbox | Fine-tuning |
|---|---|---|---|---|
| Refact/1.6B | + | + | | |
| CONTRASTcode/medium/multi | + | + | | |
| CONTRASTcode/3b/multi | + | + | | |
| starcoder/15b/base | + | | | |
| starcoder/15b/plus | + | | | |
| wizardcoder/15b | + | | | |
| codellama/7b | + | | | |
| starchat/15b/beta | + | | | |
| wizardlm/7b | + | | | |
| wizardlm/13b | + | | | |
| llama2/7b | + | | | |
| llama2/13b | + | | | |

Refact is free to use for individuals and small teams under the BSD-3-Clause license. If you wish to use Refact for Enterprise, please contact us.

Q: Can I run a model on CPU?

A: It doesn't run on CPU yet, but it's certainly possible to implement this.

Q: Sharding is disabled, why?

A: It's not ready yet, but it's coming soon.

- Contributing CONTRIBUTING.md

- GitHub issues for bugs and errors

- Community forum for community support and discussions

- Discord for chatting with community members

- Twitter for product news and updates