📚🤖 S2QA: Question Answering on research papers from Semantic Scholar

Have you ever wondered what research papers have to say about your burning questions? Look no further than Semantic Scholar Q&A with GPT-4! 🙌

This Python script lets you enter a question, and it uses the power of Semantic Scholar and OpenAI to generate an answer based on the content of the top research papers. 🤖🔍

🚀 What's New!

- Ollama support. See notebook

- S2QA now supports llama-index! Check out the data loader here on llama hub

- Updated UI with chat interface:

  - Keeps the context

  - Shows only used references

- Index once for each research space; vectors and index are cached

- Full-text support!

💻 Demo Link:

https://shauryr.github.io/s2qa-demo/

👏 Acknowledgements

This project is in collaboration with the Semantic Scholar Team. I am thankful for their support and feedback.

📘 Notebooks

- ollama.ipynb ⭐ Ollama support demo

- chat_qa.ipynb ⭐ sends the context to ChatGPT (using revChatGPT) to generate answers. This gives the best answers and is free. It also seems less prone to hallucinations than the other pipelines in this repo.

- utils.py - contains the functions needed for search and GPT-3 prompting

- s2qa_sources_langchain.ipynb - gets better answers with langchain mapreduce, but is very expensive. Prone to hallucinations.

- s2qa_nb.ipynb - first notebook. Very basic QA. Prone to hallucinations.

🚨 Caution ⚠️

Caution should be exercised when using generative AI for scientific text, as the output generated by such models may not be rigorously fact-checked or peer-reviewed, and may therefore contain errors or inaccuracies that could mislead researchers and readers. Be responsible and use this tool with caution.

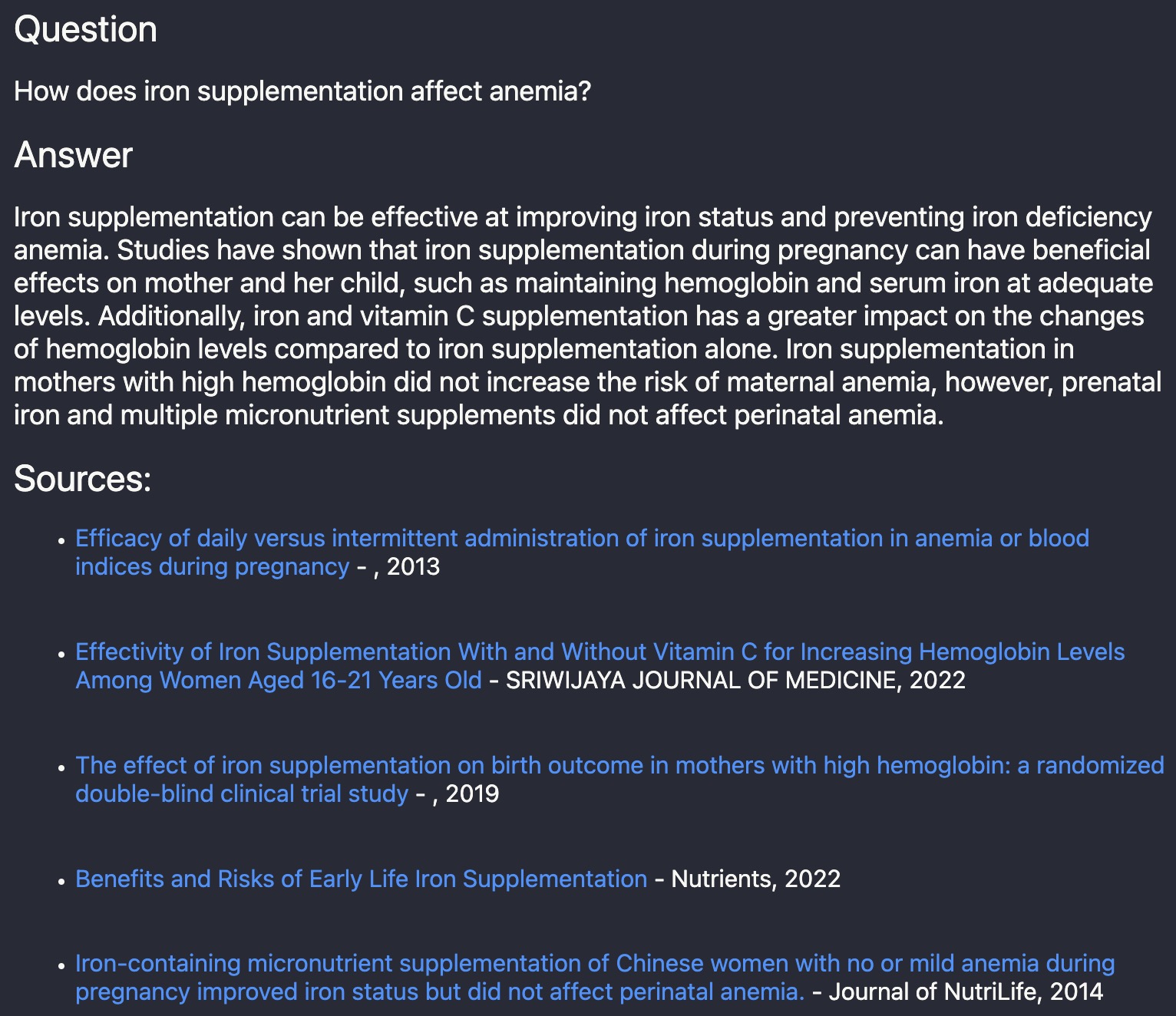

🤖 Examples

❓ Question

what is the impact of transformers on language

🤖 ChatGPT Response:

Transformers have had a significant impact on language in a variety of ways. One of the most prominent areas of impact is in natural language processing (NLP), which has been revolutionized by the development of transformer-based language models. These models have been used for a variety of NLP tasks, including machine translation [1], code summarization [2], detecting patronizing and condescending language [3], and spoken language understanding tasks [4].

Moreover, transformer-based models have been shown to be effective in domain adaptation for bidirectional transformers [7], multi-modal transformer models have been explored for image captioning [8], and pre-training objectives have been used in software engineering to boost performance in code-related tasks [6]. Additionally, transformers have also been used to incorporate document timestamps into language models, which has shown to have a positive impact on downstream classification tasks and masked language modeling [5].

Overall, transformers have had a significant impact on language, particularly in the field of natural language processing, where transformer-based models have been used for a variety of tasks with great success. They have also been explored in other areas such as software engineering and image captioning, showing the versatility of the technology.

[1] A Study on the journey of Natural Language Processing models: from Symbolic Natural Language Processing to Bidirectional Encoder Representations from Transformers - International Journal of Scientific Research in Computer Science Engineering and Information Technology, 2021

[2] Code Summarization: Do Transformers Really Understand Code? - 2022

[3] PALI-NLP at SemEval-2022 Task 4: Discriminative Fine-tuning of Transformers for Patronizing and Condescending Language Detection - International Workshop on Semantic Evaluation, 2022

[4] Benchmarking Transformers-based models on French Spoken Language Understanding tasks - Interspeech, 2022

[5] Temporal Language Modeling for Short Text Document Classification with Transformers - Conference on Computer Science and Information Systems, 2022

[6] Automating Code-Related Tasks Through Transformers: The Impact of Pre-training - ArXiv, 2023

[7] Exploiting Auxiliary Data for Offensive Language Detection with Bidirectional Transformers - WOAH, 2021

[8] What Does a Language-And-Vision Transformer See: The Impact of Semantic Information on Visual Representations - Frontiers in Artificial Intelligence, 2021

🤖 Answers with sources and langchain mapreduce

🧰 Requirements

- OpenAI API key (if you are using langchain)

- Semantic Scholar Academic Graph API key - https://www.semanticscholar.org/product/api

These can be added in constants.py.
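As a rough illustration of keeping the two keys in one place, the sketch below reads them from environment variables and reports which required ones are missing. The names `OPENAI_API_KEY`, `S2_API_KEY`, `load_keys` and `missing_keys` are assumptions made for this example; the actual names used in constants.py may differ.

```python
import os
from typing import Dict, List, Mapping


def load_keys(env: Mapping[str, str] = os.environ) -> Dict[str, str]:
    """Collect both API keys from a mapping (the process environment by default).

    The variable names are hypothetical, not taken from constants.py.
    """
    return {
        "OPENAI_API_KEY": env.get("OPENAI_API_KEY", ""),
        "S2_API_KEY": env.get("S2_API_KEY", ""),
    }


def missing_keys(keys: Mapping[str, str], using_langchain: bool = False) -> List[str]:
    """Return the names of required keys that are empty.

    The OpenAI key is only treated as required when langchain is used.
    """
    required = ["S2_API_KEY"]
    if using_langchain:
        required.append("OPENAI_API_KEY")
    return [name for name in required if not keys.get(name)]
```

Calling `missing_keys(load_keys(), using_langchain=True)` before running a notebook gives an early, readable error instead of a failed API call.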

The main third-party package requirements are tiktoken, openai, transformers and langchain.

To install all the required packages:

pip install -r requirements.txt

📍 Pipeline

1️⃣ Searching: We begin by searching Semantic Scholar for the most relevant and up-to-date papers related to your question.

2️⃣ Re-Ranking: We then use SPECTER to embed these papers and re-rank the search results, so that the papers most relevant to the question appear at the top.

3️⃣ Answering: Finally, we pass the top-ranked papers to GPT-3 with custom prompts to generate an answer to your question based on their content.
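The first two steps can be sketched roughly as follows. This is not the code from utils.py: the function names are hypothetical, and `toy_embed` is a bag-of-words stand-in used only so the example runs without downloading SPECTER. The search URL is the Semantic Scholar Academic Graph paper search endpoint; the request itself is not sent here.

```python
import math
from collections import Counter
from urllib.parse import urlencode

S2_SEARCH_URL = "https://api.semanticscholar.org/graph/v1/paper/search"


def build_search_url(question, limit=10):
    """Step 1: build the paper-search request URL (the API key goes in an `x-api-key` header)."""
    params = {"query": question, "limit": limit, "fields": "title,abstract,year,venue"}
    return S2_SEARCH_URL + "?" + urlencode(params)


def toy_embed(text):
    """Stand-in for SPECTER: word counts, NOT a real document embedding."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a if token in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rerank(question, papers, embed=toy_embed):
    """Step 2: sort papers by similarity between the question and title + abstract."""
    query_vec = embed(question)

    def score(paper):
        return cosine(query_vec, embed(paper["title"] + " " + paper["abstract"]))

    return sorted(papers, key=score, reverse=True)
```

Passing a different `embed` function (for example one backed by a Hugging Face model) changes the ranking without touching the rest of the sketch, as long as `cosine` is adapted to the vector type it returns.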

🖊️ Customizable

- Try other open embedding methods on huggingface to see better re-ranking results.

- Try other prompts or refine the current prompt to get even better answers.
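To experiment with prompts, it can help to keep the instruction text separate from the code that assembles the sources. The sketch below is one possible layout, not the prompt used in this repo: `DEFAULT_INSTRUCTION` and `build_prompt` are hypothetical names, and the wording of the instruction is only an example.

```python
# Example instruction text; an assumption for illustration, not the repo's prompt.
DEFAULT_INSTRUCTION = (
    "Answer the question using only the numbered sources below. "
    "Cite sources as [n]. If the sources do not contain the answer, say so."
)


def build_prompt(question, papers, instruction=DEFAULT_INSTRUCTION):
    """Assemble a prompt with numbered sources so the answer can cite them as [n]."""
    sources = [
        f"[{i}] {paper['title']}: {paper['abstract']}"
        for i, paper in enumerate(papers, start=1)
    ]
    context = "\n".join(sources)
    return f"{instruction}\n\nSources:\n{context}\n\nQuestion: {question}\nAnswer:"
```

Trying a new prompt then only means passing a different `instruction` string, which makes it easier to compare answers for the same question and sources.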