🤖 Gopilot - AI Assistant for Golang

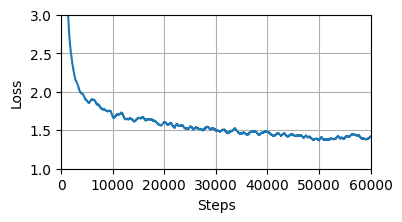

Gopilot is a 290M-parameter language model trained exclusively on Go code on a small research budget (~$100).

Demo of the Gopilot VSCode extension

Overview

Gopilot is a GPT-style Transformer model trained on 20B tokens from the Go split of The Stack Dedup v1.2 dataset, on a single RTX 4090, in less than a week. It comes in two flavours: a model that uses a Hugging Face tokenizer, and a model that uses a custom Go tokenizer that we developed.
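As a sanity check on the training budget, the figures above (20B tokens, under one week, one GPU) imply an average throughput of at least roughly 33,000 tokens per second. The sketch below only does that arithmetic; the helper name is illustrative and not part of the repository.

```python
# Back-of-the-envelope throughput implied by the Overview:
# ~20B tokens processed on one RTX 4090 in under a week.
SECONDS_PER_DAY = 24 * 60 * 60


def min_tokens_per_second(total_tokens: float, days: float) -> float:
    """Average throughput needed to process `total_tokens` within `days` days."""
    return total_tokens / (days * SECONDS_PER_DAY)


if __name__ == "__main__":
    rate = min_tokens_per_second(20e9, 7)
    print(f"needs at least ~{rate:,.0f} tokens/s on average")
```

Since the run took *less* than a week, the real average rate was somewhat higher than this lower bound.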

The pre-training and fine-tuning weights are available in the `gopilot` S3 bucket:

```bash
aws s3 ls s3://gopilot/checkpoints/ --region ap-east-1
```

Installation

You need conda and Go installed on your machine. Install the dependencies with conda using the provided `environment_cpu.yml` (or `environment_cuda.yml` when running on CUDA). These environment files may be out of date, so using the official Docker image is preferred.

Build the Go tokenizer binary:

```bash
# Linux, macOS
go build -o tokenizer/libgotok.so -buildmode=c-shared ./tokenizer/libgotok.go

# Windows
go build -o tokenizer/libgotok.dll -buildmode=c-shared ./tokenizer/libgotok.go
```

Usage
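The Go tokenizer is built with `-buildmode=c-shared`, so Python code can load it through `ctypes`. The sketch below, assuming the library sits at the paths produced by the build commands, picks the right file for the current OS and loads it. The helper names `gotok_library_path` and `load_gotok` are hypothetical, and the repository's own loader may work differently.

```python
import ctypes
import platform
from pathlib import Path
from typing import Optional


def gotok_library_path(system: Optional[str] = None, root: str = "tokenizer") -> Path:
    """Return the shared-library path the go build commands produce for this OS."""
    system = system or platform.system()  # "Linux", "Darwin" (macOS) or "Windows"
    name = "libgotok.dll" if system == "Windows" else "libgotok.so"
    return Path(root) / name


def load_gotok(system: Optional[str] = None) -> ctypes.CDLL:
    """Load the c-shared Go tokenizer; raise OSError if it has not been built yet."""
    path = gotok_library_path(system)
    if not path.exists():
        raise OSError(f"{path} not found: build the Go tokenizer first")
    return ctypes.CDLL(str(path.resolve()))
```

This README does not document which functions `libgotok.go` exports, so the sketch stops at loading the library; calling into it requires setting `argtypes` and `restype` for each exported symbol.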

A CUDA Docker image is also available.

Pre-Training

The pre-training script trains the model until the specified token budget is consumed. It expects a pre-tokenized dataset.
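The batch flags in the pre-training command determine how many tokens each optimizer update sees. The sketch below assumes the usual gradient-accumulation convention (one update per `batch_size × gradient_accumulation_steps` sequences on a single GPU) and fully packed sequences. `CONTEXT_LENGTH = 1024` is a hypothetical placeholder: the real value lives in `model/config/gopilot-290M.yml`, which this README does not show.

```python
# Rough step accounting for the pre-training command.
# CONTEXT_LENGTH is a hypothetical value; read the real one from the model config.
CONTEXT_LENGTH = 1024


def sequences_per_step(batch_size: int, grad_accum_steps: int) -> int:
    """Sequences contributing to one optimizer update (effective batch size)."""
    return batch_size * grad_accum_steps


def optimizer_steps(token_budget: int, batch_size: int,
                    grad_accum_steps: int, context_length: int) -> int:
    """Optimizer updates needed to consume the token budget, rounded up."""
    tokens_per_step = sequences_per_step(batch_size, grad_accum_steps) * context_length
    return -(-token_budget // tokens_per_step)  # ceiling division on integers


if __name__ == "__main__":
    print(sequences_per_step(8, 64))  # 512 sequences per update
    print(optimizer_steps(10_000_000_000, 8, 64, CONTEXT_LENGTH))
```

The same helper applied to the fine-tuning flags (`--batch-size 8`, `--gradient-accumulation-steps 16`) gives an effective batch of 128 sequences.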

```bash
python train.py \
    --model-cf model/config/gopilot-290M.yml \
    --tokenizer hugging-face \
    --tokenizer-cf tokenizer/config/hugging-face.json \
    --s3-dataset-prefix <prefix-of-your-s3-dataset> \
    --s3-bucket <your-s3-bucket> \
    --gradient-accumulation-steps 64 \
    --optimizer sophiag \
    --batch-size 8 \
    --lr 0.0005 \
    --token-budget 10000000000 \
    --device cuda \
    --precision fp16 \
    --s3-checkpoints \
    --warmup 1000 \
    --neptune \
    --compile
```

Fine-tuning

You can fine-tune Gopilot on any JSONL dataset in which each line has the form `{"sample": "package main\nconst Value = 1..."}`. For fine-tuning, we use a mix of pre-training samples and AI-generated samples.
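A fine-tuning dataset in this format can be produced and checked with the standard library alone. The sketch below writes one `{"sample": ...}` object per line and validates a file before training; the function names are hypothetical helpers, not part of `finetune.py`.

```python
import json
from typing import Iterable, Iterator


def write_samples(path: str, sources: Iterable[str]) -> None:
    """Write one {"sample": <Go source>} JSON object per line."""
    with open(path, "w", encoding="utf-8") as f:
        for src in sources:
            # json.dumps escapes newlines, so each record stays on one line.
            f.write(json.dumps({"sample": src}) + "\n")


def read_samples(path: str) -> Iterator[str]:
    """Yield the Go source of each record; reject records without a string "sample"."""
    with open(path, encoding="utf-8") as f:
        for lineno, line in enumerate(f, start=1):
            if not line.strip():
                continue  # tolerate blank lines
            record = json.loads(line)
            if not isinstance(record, dict) or not isinstance(record.get("sample"), str):
                raise ValueError(f"line {lineno}: missing string 'sample' field")
            yield record["sample"]
```

For example, `write_samples("finetune.jsonl", ["package main\nconst Value = 1\n"])` produces a file that `read_samples` reads back unchanged (`finetune.jsonl` is an illustrative name).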

```bash
python finetune.py \
    --model-cf model/config/gopilot-290M.yml \
    --tokenizer-cf tokenizer/config/hugging-face.json \
    --tokenizer hugging-face \
    --in-model-weights checkpoints/hugging-face.pt \
    --out-model-weights checkpoints/hugging-face-ft.pt \
    --dataset-filepath all \
    --gradient-accumulation-steps 16 \
    --batch-size 8 \
    --dropout 0.1 \
    --weight-decay 0.1 \
    --lr 0.000025 \
    --num-epochs 10 \
    --precision fp16 \
    --neptune
```

Evaluation

The evaluation script evaluates the model on the HumanEvalX benchmark. Our best model obtains 7.4% pass@10 and 77.1% compile@10. See the `results` folder for details.
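Metrics such as pass@10 are commonly computed with the unbiased estimator from the Codex paper (Chen et al., 2021): for a problem with n generated samples of which c are correct, pass@k = 1 − C(n−c, k) / C(n, k), averaged over problems. compile@k is the same quantity with c counting samples that compile. The sketch below implements that standard estimator; whether `evaluate.py` computes it this way is an assumption.

```python
from math import comb
from typing import List, Tuple


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k for one problem: n samples generated, c of them correct."""
    if n - c < k:
        return 1.0  # every size-k subset contains at least one correct sample
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(results: List[Tuple[int, int]], k: int) -> float:
    """Average pass@k over problems, given one (n, c) pair per problem."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)
```

When exactly k samples are drawn per problem (n = k = 10), pass@10 reduces to the fraction of problems with at least one correct sample.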

```bash
python evaluate.py \
    --model-cf model/config/gopilot-290M.yml \
    --tokenizer-cf tokenizer/config/gopilot.json \
    --tokenizer gopilot \
    --model-weights /checkpoints/gopilot-ft.pt \
    --device cuda \
    --k 10 \
    --max-new-tokens 128 \
    --verbose
```

Inference Server

The inference server is a simple HTTP server that hosts the model and exposes a `/complete` endpoint to which code samples are submitted for auto-completion. The VSCode extension uses it to provide completions.
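A client for the `/complete` endpoint needs only the standard library. The sketch below is hypothetical throughout: this README does not state the server's host or port, the JSON field it expects, or the response format, so `http://localhost:8000` and the `"prompt"` key are placeholders to adjust against `inference_server.py`.

```python
import json
import urllib.request

# Hypothetical default: host, port and path prefix are not documented here.
DEFAULT_URL = "http://localhost:8000/complete"


def build_complete_request(prompt: str, url: str = DEFAULT_URL) -> urllib.request.Request:
    """Build a JSON POST request carrying the code to auto-complete."""
    body = json.dumps({"prompt": prompt}).encode("utf-8")  # "prompt" key is an assumption
    return urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}, method="POST"
    )


def complete(prompt: str, url: str = DEFAULT_URL, timeout: float = 30.0) -> str:
    """Send the request to a running server and return the raw response body."""
    with urllib.request.urlopen(build_complete_request(prompt, url), timeout=timeout) as resp:
        return resp.read().decode("utf-8")
```

Returning the raw body keeps the sketch independent of the unknown response schema; parse it with `json.loads` once the actual format is confirmed.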

```bash
python inference_server.py \
    --model-cf model/config/gopilot-290M.yml \
    --tokenizer-cf tokenizer/config/gopilot.json \
    --tokenizer gopilot \
    --device mps \
    --checkpoint-path .cache/checkpoints/gopilot-ft.pt
```

VSCode Extension

Check out the Gopilot VSCode extension, which works with the inference server.

Acknowledgements & Notes

- Thanks to Qinkai Zheng for providing guidance and hardware resources.

- We did not check for train/test leakage when running the HumanEvalX evaluation, so these results should not be cited in research.

- This project was carried out as part of the Deep Learning course (80240743-0) at Tsinghua University.

- While the model is fun to play around with, we do not recommend using a model of this size for code completion in your editor. It's a school project!

- Feel free to use the code, tweak the checkpoints, and more!

Future Work

- Release the model weights on Hugging Face

- Quantize the model weights for fast inference

- Interactive online demo

- Try on other languages such as Rust or C++

- Experiment with different tokenization strategies

- Train for longer on more data