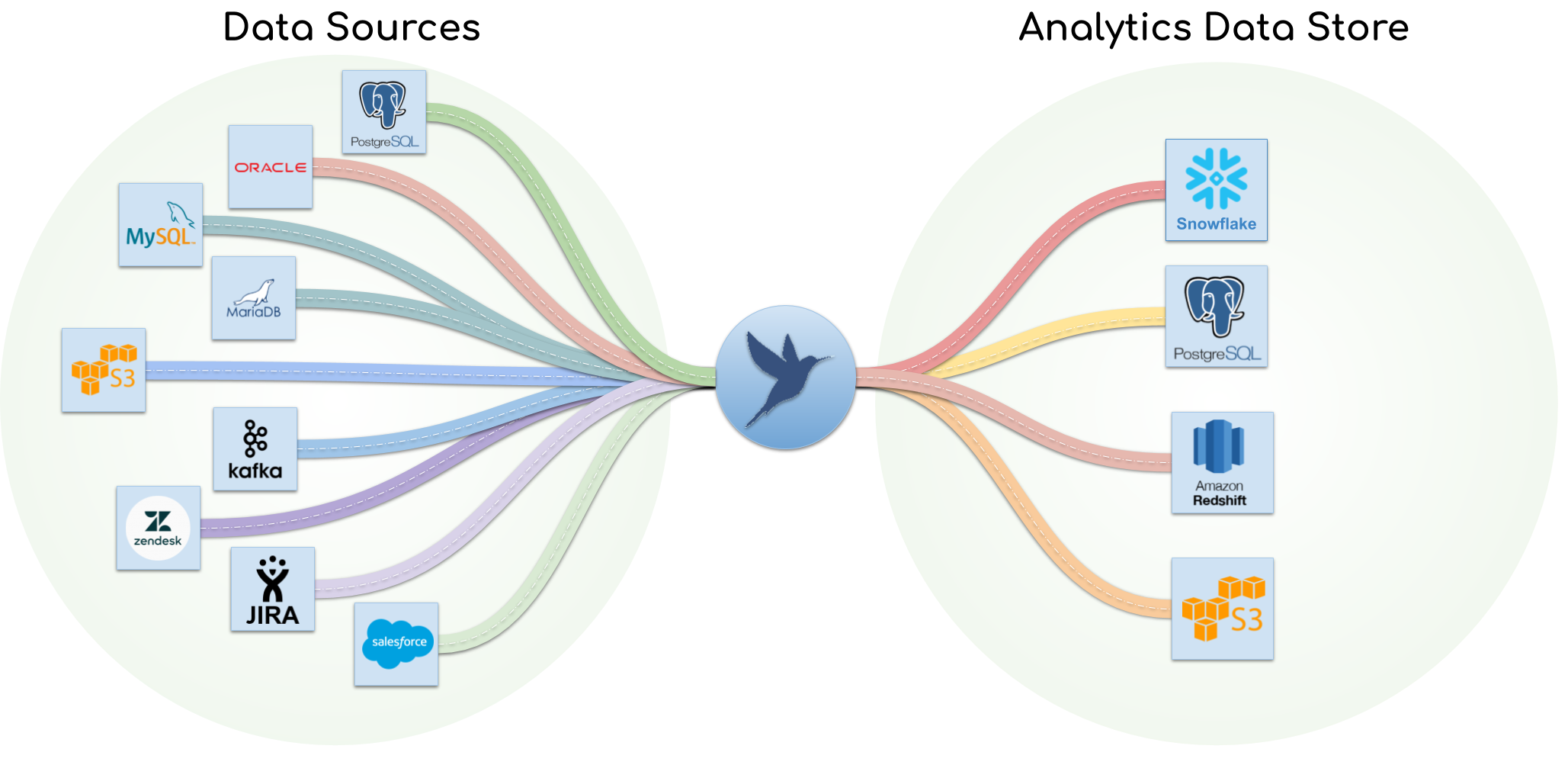

PipelineWise is a Data Pipeline Framework using the Singer.io specification to ingest and replicate data from various sources to various destinations. Documentation is available at https://transferwise.github.io/pipelinewise/

- Built with ELT in mind: PipelineWise fits into the ELT landscape and is not a traditional ETL tool. It aims to reproduce the data from the source in an Analytics-Data-Store in as close to the original format as possible. Some minor load-time transformations are supported, but complex mappings and joins have to be done in the Analytics-Data-Store to extract meaning.

- Managed Schema Changes: When source data changes, PipelineWise detects the change and alters the schema in your Analytics-Data-Store automatically.

- Load-time transformations: The ideal place to obfuscate, mask or filter sensitive data that should never be replicated in the Data Warehouse.

- YAML-based configuration: Data pipelines are defined as YAML files, so the entire configuration can be kept under version control.

- Lightweight: No daemons or database setup are required.

- Extensible: PipelineWise uses Singer.io-compatible tap and target connectors. New connectors can be added to PipelineWise with relatively little effort.

A tap extracts data from any source and writes it to a standard stream in a JSON-based format. A target consumes data from taps and does something with it, such as loading it into a file, API or database.
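The tap and target roles can be illustrated with a minimal sketch. It is not PipelineWise code and does not use any Singer library: the function names `tap` and `target`, the sample `users` rows, and the `bookmarks` layout of the state value are all assumptions made for illustration. Only the three message types (`SCHEMA`, `RECORD`, `STATE`) and their JSON-lines shape follow the Singer.io specification. The schema is kept deliberately minimal.

```python
import json


def tap(rows, stream, key_column, bookmark=None):
    """Hypothetical tap: yield Singer-style messages, one JSON document per line."""
    # Minimal schema for the sketch; a real tap would describe every column.
    schema = {"type": "object", "properties": {key_column: {"type": "integer"}}}
    yield json.dumps({"type": "SCHEMA", "stream": stream,
                      "schema": schema, "key_properties": [key_column]})
    for row in sorted(rows, key=lambda r: r[key_column]):
        # Key-based incremental idea: skip rows at or below the saved bookmark.
        if bookmark is not None and row[key_column] <= bookmark:
            continue
        yield json.dumps({"type": "RECORD", "stream": stream, "record": row})
        bookmark = row[key_column]
    # The layout of the state value is an assumption; the spec leaves it free-form.
    yield json.dumps({"type": "STATE", "value": {"bookmarks": {stream: bookmark}}})


def target(lines):
    """Hypothetical target: collect records per stream and keep the last state."""
    loaded, state = {}, None
    for line in lines:
        message = json.loads(line)
        if message["type"] == "RECORD":
            loaded.setdefault(message["stream"], []).append(message["record"])
        elif message["type"] == "STATE":
            state = message["value"]
    return loaded, state


users = [{"id": 1, "name": "Ada"}, {"id": 2, "name": "Linus"}, {"id": 3, "name": "Grace"}]
# Resume from bookmark 1, so only rows with id 2 and 3 are emitted.
loaded, state = target(tap(users, "users", "id", bookmark=1))
```

In a real pipeline the tap and target are separate processes connected by a pipe. The generator here stands in for that stream so the sketch stays self-contained.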

| Type | Name | Extra | Description |
|---|---|---|---|
| Tap | Postgres | | Extracts data from PostgreSQL databases. Supports Log-Based, Key-Based Incremental and Full Table replication |
| Tap | MySQL | | Extracts data from MySQL databases. Supports Log-Based, Key-Based Incremental and Full Table replication |
| Tap | Kafka | | Extracts data from Kafka topics |
| Tap | S3 CSV | | Extracts data from CSV files in S3 (currently a fork of tap-s3-csv because we wanted to use our own auth method) |
| Tap | Zendesk | | Extracts data from Zendesk using OAuth and Key-Based Incremental replication |
| Tap | Snowflake | | Extracts data from Snowflake databases. Supports Key-Based Incremental and Full Table replication |
| Tap | Salesforce | | Extracts data from Salesforce using the BULK and REST extraction APIs with Key-Based Incremental replication |
| Tap | Jira | | Extracts data from Atlassian Jira using Basic auth or OAuth credentials |
| Tap | MongoDB | | Extracts data from MongoDB databases. Supports Log-Based and Full Table replication |
| Tap | AdWords | Extra | Extracts data from the Google Ads API (formerly Google AdWords) using OAuth. Supports incremental loading based on input state |
| Tap | Google Analytics | Extra | Extracts data from Google Analytics |
| Tap | Oracle | Extra | Extracts data from Oracle databases. Supports Log-Based, Key-Based Incremental and Full Table replication |
| Tap | Zuora | Extra | Extracts data from Zuora using the AQuA and REST extraction APIs with Key-Based Incremental replication |
| Tap | GitHub | Extra | Extracts data from the GitHub API using a Personal Access Token and Key-Based Incremental replication |
| Target | Postgres | | Loads data from any tap into a PostgreSQL database |
| Target | Redshift | | Loads data from any tap into an Amazon Redshift Data Warehouse |
| Target | Snowflake | | Loads data from any tap into a Snowflake Data Warehouse |
| Target | S3 CSV | | Uploads data from any tap to S3 in CSV format |
| Transform | Field | | Transforms fields from any tap and sends the results to any target. Recommended for data masking/obfuscation |

Note: Extra connectors are experimental and written by community contributors. They are not maintained regularly and are not installed by default. To install the extra packages, use the `--connectors=all` option when installing PipelineWise.
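To make the idea of a field transform more concrete, here is a small sketch of a load-time masking step that sits between a tap and a target. It is an illustration only, not the implementation of the Field transform connector: the helper name `mask_record_fields` and the rule names `"hash"` and `"null"` are invented for this example and are not the connector's configuration vocabulary.

```python
import hashlib
import json


def mask_record_fields(line, rules):
    """Hypothetical transform: mask fields in one Singer-style message line.

    `rules` maps stream name -> {field name -> rule}; the rule names are
    assumptions made for this sketch.
    """
    message = json.loads(line)
    if message.get("type") != "RECORD":
        return line  # SCHEMA and STATE messages pass through untouched
    record = message["record"]
    for field, rule in rules.get(message["stream"], {}).items():
        if record.get(field) is None:
            continue  # nothing to mask
        if rule == "hash":
            # Replace the value with its SHA-256 hex digest.
            value = str(record[field]).encode("utf-8")
            record[field] = hashlib.sha256(value).hexdigest()
        elif rule == "null":
            record[field] = None
    return json.dumps(message)


rules = {"users": {"email": "hash", "phone": "null"}}
line = json.dumps({"type": "RECORD", "stream": "users",
                   "record": {"id": 1, "email": "ada@example.com", "phone": "555-0100"}})
masked = json.loads(mask_record_fields(line, rules))
```

Because the step only rewrites `RECORD` messages and forwards everything else unchanged, the sensitive values never reach the target, while the target still sees a valid message stream.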

If you have Docker installed, running PipelineWise from Docker is the easiest and recommended way to get started.

- Build an executable Docker image that has every required dependency and is isolated from your host system:

      $ docker build -t pipelinewise:latest .

- Once the image is ready, create an alias to the Docker wrapper script:

      $ alias pipelinewise="$(pwd)/bin/pipelinewise-docker"

- Check that the installation was successful by running the `pipelinewise status` command:

      $ pipelinewise status
      Tap ID    Tap Type      Target ID     Target Type      Enabled    Status    Last Sync    Last Sync Result
      --------  ------------  ------------  ---------------  ---------  --------  -----------  ------------------
      0 pipeline(s)

You can run any `pipelinewise` command at this point. Tutorials on creating and running pipelines are available at creating pipelines.

Running tests:

- To run unit tests, follow the instructions in the Building from source section.

- To run integration and unit tests, follow the instructions in the Developing with Docker section.

- Make sure that every dependency is installed on your system:

  - Python 3.x

  - python3-dev

  - python3-venv

  - mongo-tools

  - mbuffer

- Run the install script, which installs the PipelineWise CLI and every supported Singer connector into separate virtual environments:

      $ ./install.sh --connectors=all

  Press `Y` to accept the license agreement of the required Singer components. To automate the installation and accept every license agreement, run `./install.sh --acceptlicenses`. Use the optional `--connectors=...,...` argument to install only a specific list of Singer connectors.

- To start the CLI, activate the CLI virtual environment and set the `PIPELINEWISE_HOME` environment variable:

      $ source {ACTUAL_ABSOLUTE_PATH}/.virtualenvs/pipelinewise/bin/activate
      $ export PIPELINEWISE_HOME={ACTUAL_ABSOLUTE_PATH}

  (The `ACTUAL_ABSOLUTE_PATH` differs on every system. The install script prints the correct commands for your system once the installation has completed.)

- Check that the installation was successful by running the `pipelinewise status` command:

      $ pipelinewise status
      Tap ID    Tap Type      Target ID     Target Type      Enabled    Status    Last Sync    Last Sync Result
      --------  ------------  ------------  ---------------  ---------  --------  -----------  ------------------
      0 pipeline(s)

You can run any `pipelinewise` command at this point. Tutorials on creating and running pipelines are available at creating pipelines.

To run unit tests:

    $ pytest --ignore tests/end_to_end

To run unit tests and generate code coverage:

    $ coverage run -m pytest --ignore tests/end_to_end && coverage report

To generate an HTML coverage report:

    $ coverage run -m pytest --ignore tests/end_to_end && coverage html -d coverage_html

Note: The HTML report will be generated in coverage_html/index.html.

To run integration and end-to-end tests:

Use the Docker Development Environment. It spins up a pre-configured PipelineWise project with pre-configured source and target databases in several Docker containers, which the end-to-end test cases require.

If you have Docker and Docker Compose installed, you can create a local development environment that includes the PipelineWise executables and a pre-configured development project with some databases as sources and targets. This makes development more convenient and lets you run integration and end-to-end tests.

For further instructions on setting up a local development environment, see Test Project for Docker Development Environment.

To add new taps and targets follow the instructions on

Apache License Version 2.0

See LICENSE for the full text.