Polars is a blazingly fast DataFrames library implemented in Rust. Its memory model uses Apache Arrow as its backend.

It currently consists of an eager API similar to pandas and a lazy API that is somewhat similar to Spark. Among other things, Polars provides the following functionality.

| Functionality | Eager | Lazy (DataFrame) | Lazy (Series) |
|---|---|---|---|
| Filters | ✔ | ✔ | ✔ |
| Shifts | ✔ | ✔ | ✔ |
| Joins | ✔ | ✔ | |
| GroupBys + aggregations | ✔ | ✔ | |
| Comparisons | ✔ | ✔ | ✔ |
| Arithmetic | ✔ | ✔ | |
| Sorting | ✔ | ✔ | ✔ |
| Reversing | ✔ | ✔ | ✔ |
| Closure application (User Defined Functions) | ✔ | ✔ | |
| SIMD | ✔ | ✔ | |
| Pivots | ✔ | ✗ | |
| Melts | ✔ | ✗ | |
| Filling nulls + fill strategies | ✔ | ✗ | ✔ |
| Aggregations | ✔ | ✔ | ✔ |
| Moving Window aggregates | ✔ | ✗ | ✗ |
| Find unique values | ✔ | ✗ | |
| Rust iterators | ✔ | ✔ | |
| IO (csv, json, parquet, Arrow IPC) | ✔ | ✗ | |
| Query optimization (predicate pushdown) | ✗ | ✔ | |
| Query optimization (projection pushdown) | ✗ | ✔ | |
| Query optimization (type coercion) | ✗ | ✔ | |

Note that almost all eager operations supported on Series/ChunkedArrays can be used in the lazy API via UDFs.

Want to know about all the features Polars supports? Read the docs!
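To make the eager/lazy distinction and the "predicate pushdown" and "projection pushdown" rows more concrete, here is a toy sketch in plain Python. It does not use Polars at all: `ToyLazyFrame`, `scan`, and every method name are hypothetical, and the real optimizer is certainly more involved. The sketch only illustrates the idea that a lazy query records its steps first and applies filters and column selection while scanning, so rows and columns that are not needed are never materialized.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Row = Dict[str, object]


@dataclass
class ToyLazyFrame:
    """Hypothetical stand-in for a lazy query: it records steps, it is not Polars."""

    source: Callable[[], List[Row]]  # deferred scan of the data
    predicates: List[Callable[[Row], bool]] = field(default_factory=list)
    columns: List[str] = field(default_factory=list)

    def filter(self, predicate: Callable[[Row], bool]) -> "ToyLazyFrame":
        # Nothing is evaluated here; the predicate is only recorded.
        return ToyLazyFrame(self.source, self.predicates + [predicate], self.columns)

    def select(self, columns: List[str]) -> "ToyLazyFrame":
        # Likewise, the projection is only recorded.
        return ToyLazyFrame(self.source, self.predicates, list(columns))

    def collect(self) -> List[Row]:
        # Filters and projection are applied during the scan itself,
        # which is the intuition behind pushing them down to the source.
        out = []
        for row in self.source():
            if all(pred(row) for pred in self.predicates):
                keep = self.columns or list(row)
                out.append({name: row[name] for name in keep})
        return out


scan_calls = []


def scan() -> List[Row]:
    # Stand-in for reading a file; records each time it is actually executed.
    scan_calls.append("scan")
    return [{"a": 1, "b": "x"}, {"a": 5, "b": "y"}, {"a": 9, "b": "z"}]


query = ToyLazyFrame(scan).filter(lambda row: row["a"] > 2).select(["b"])
scans_before_collect = len(scan_calls)  # the query is built, but no data was read
result = query.collect()
```

An eager API would instead run each step immediately and hand back a full intermediate result after every call.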

- Installation guide: `pip install py-polars`
- Reference guide
- 10 minutes to py-polars notebook
- Lazy py-polars notebook

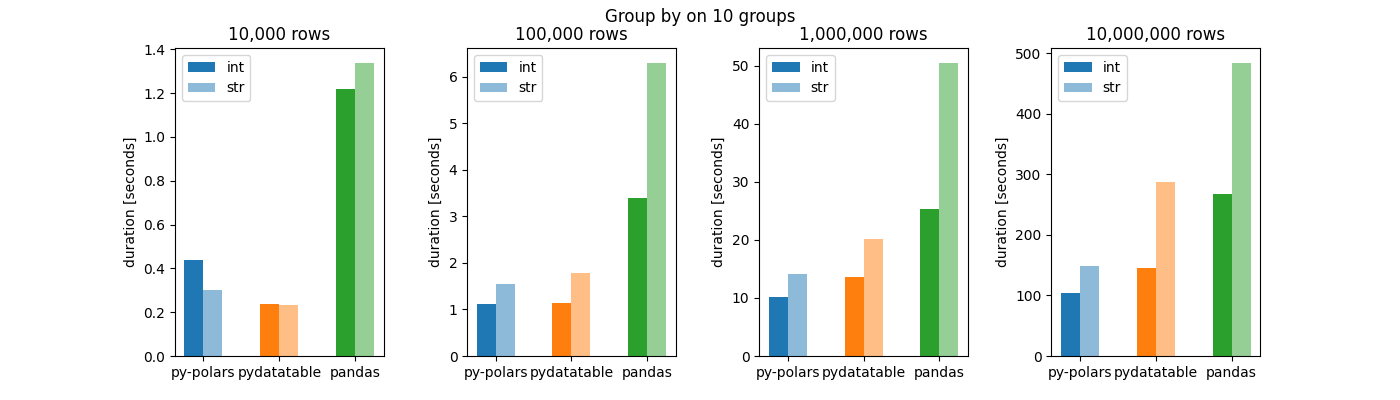

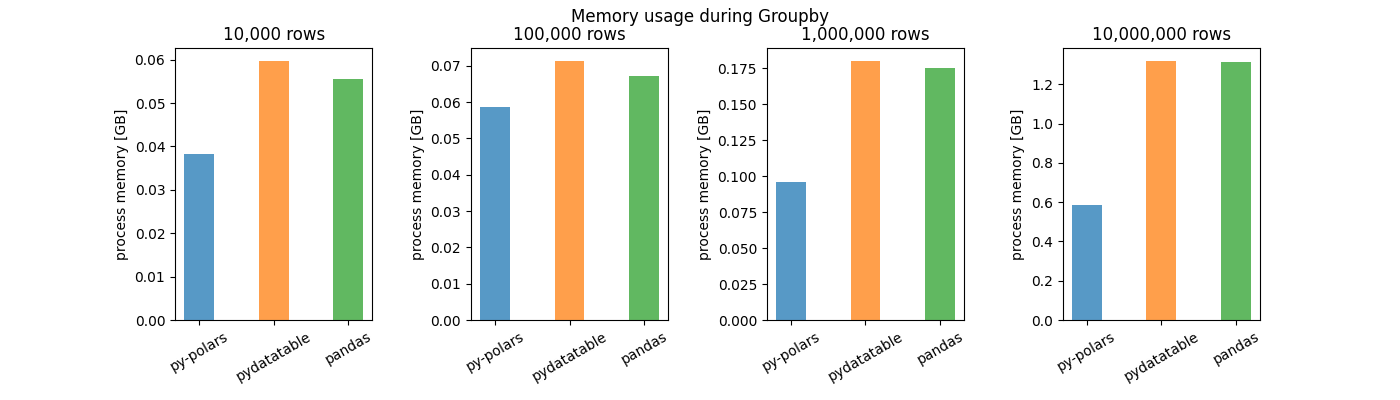

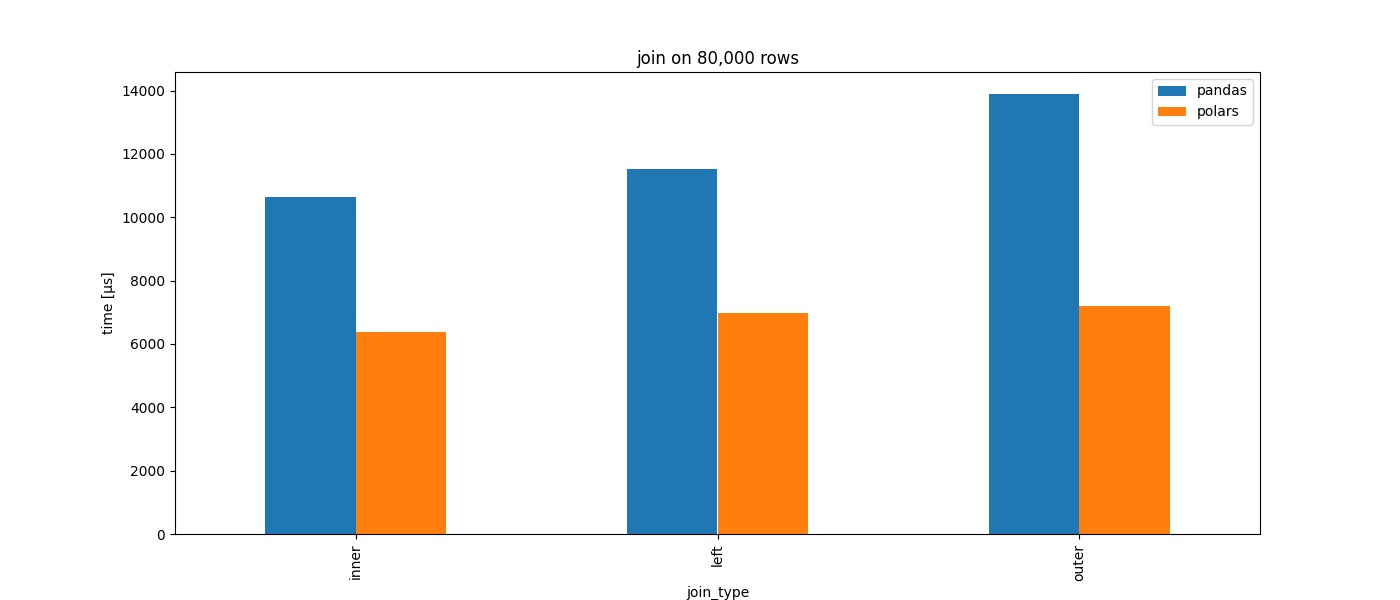

Polars is written to be performant. Below are some comparisons with pandas and pydatatable DataFrame library (lower is better).

Additional cargo features:

- `temporal` (default): Conversions between Chrono and Polars for temporal data
- `simd` (default): SIMD operations
- `parquet`: Read Apache Parquet format
- `random`: Generate arrays with randomly sampled values
- `ndarray`: Convert from `DataFrame` to `ndarray`
- `lazy`: Lazy API
- `strings`: String utilities for `Utf8Chunked`

Want to contribute? Read our contribution guideline.

- `POLARS_PAR_COLUMN_BP`: breakpoint for (some) parallel operations on columns. If the number of columns exceeds this value, the operation runs in parallel.
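
The breakpoint rule can be sketched in a few lines of plain Python. This is not how Polars implements it (Polars is written in Rust and manages its own threads). The sketch assumes the setting is read as an integer from the process environment, and the fallback value `15` and the helper names `column_breakpoint` and `apply_to_columns` are made up for illustration.

```python
import os
from concurrent.futures import ThreadPoolExecutor

ENV_NAME = "POLARS_PAR_COLUMN_BP"
FALLBACK_BREAKPOINT = 15  # hypothetical default, not taken from Polars


def column_breakpoint(environ=os.environ) -> int:
    """Read the breakpoint from the environment, falling back on bad or missing input."""
    raw = environ.get(ENV_NAME)
    try:
        return int(raw) if raw is not None else FALLBACK_BREAKPOINT
    except ValueError:
        return FALLBACK_BREAKPOINT


def apply_to_columns(columns, func, environ=os.environ):
    """Apply func to every column, in parallel only above the breakpoint."""
    if len(columns) > column_breakpoint(environ):
        # Many columns: spread the work over a thread pool.
        with ThreadPoolExecutor() as pool:
            return list(pool.map(func, columns))
    # Few columns: the overhead of parallelism is not worth it.
    return [func(column) for column in columns]


# Two columns with a breakpoint of 1 take the parallel path.
totals = apply_to_columns([[1, 2], [3]], sum, {ENV_NAME: "1"})
```

The point of such a threshold is that parallel dispatch has a fixed cost, so it only pays off once there are enough columns to share the work.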