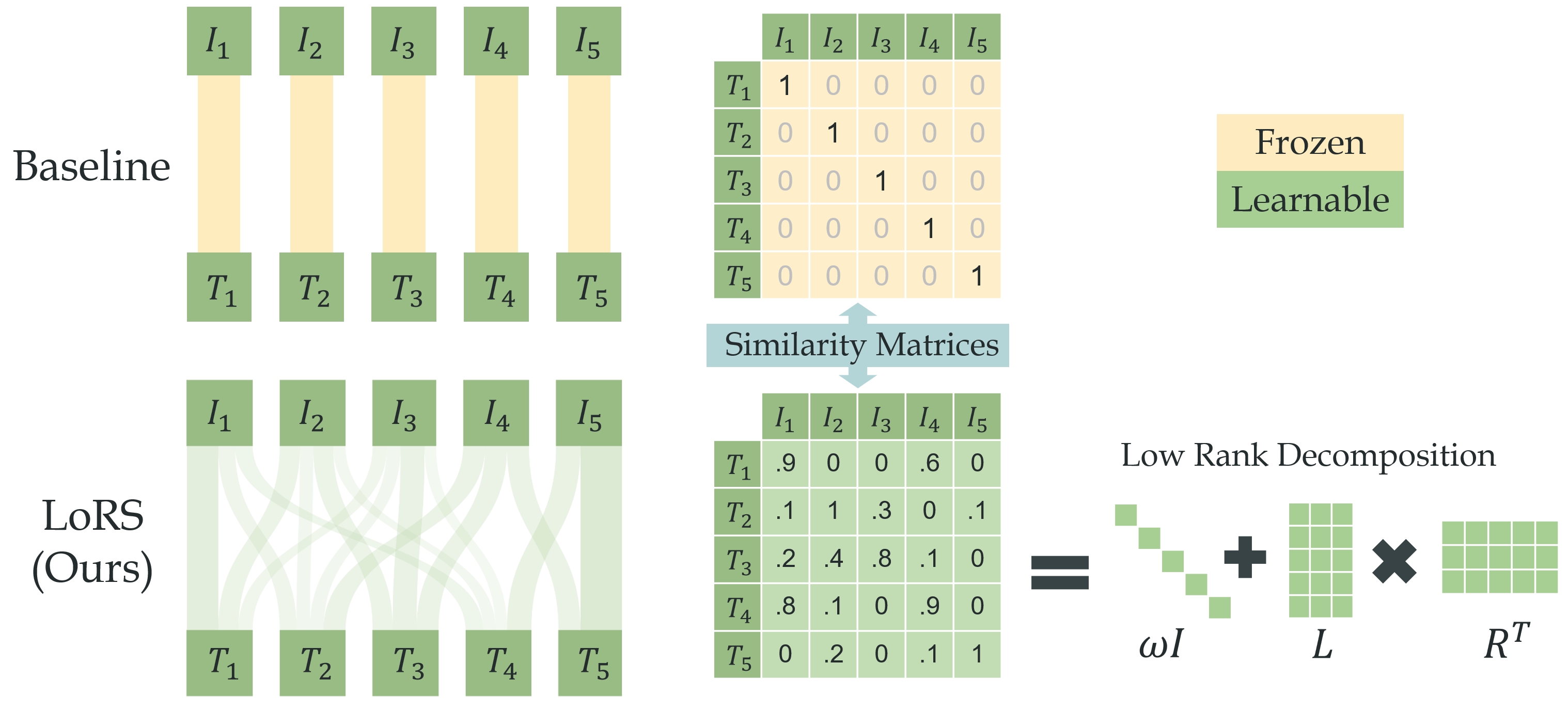

This repo contains the code for our ICML'24 work, LoRS: Low-Rank Similarity Mining for Multimodal Dataset Distillation. LoRS proposes to learn the similarity matrix while distilling the images and texts. This simple, plug-and-play method yields significant performance gains. Please see our paper for further analysis.
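To make the "low-rank" idea concrete, here is a minimal NumPy sketch of a similarity matrix stored as two thin factors. The identity-plus-low-rank form `I + alpha * L @ R.T`, the function name, and the sizes are assumptions chosen for illustration; please refer to the paper and the code in this repo for the actual parameterization.

```python
import numpy as np

def low_rank_similarity(left, right, alpha=1.0):
    """Build S = I + alpha * left @ right.T (assumed form, for illustration)."""
    n = left.shape[0]
    return np.eye(n) + alpha * (left @ right.T)

# Illustrative sizes only: 100 synthetic image-text pairs, rank 10.
n_pairs, rank = 100, 10
rng = np.random.default_rng(0)
left = 0.01 * rng.standard_normal((n_pairs, rank))   # would be learned
right = 0.01 * rng.standard_normal((n_pairs, rank))  # would be learned

sim = low_rank_similarity(left, right)

# Storage cost of the factors versus a dense N x N matrix.
full_params = n_pairs * n_pairs
low_rank_params = 2 * n_pairs * rank
print(sim.shape, full_params, low_rank_params)
```

The point of the sketch is the storage cost: the two factors hold `2 * N * r` values instead of `N * N`, which is what keeps a learned similarity matrix small.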

Requirements: please see requirements.txt.

Pretrained model checkpoints: manually download the BERT and NFNet (from TIMM) checkpoints and put them here:

    distill_utils/checkpoints/
    ├── bert-base-uncased/
    │   ├── config.json
    │   ├── LICENSE.txt
    │   ├── model.onnx
    │   ├── pytorch_model.bin
    │   ├── vocab.txt
    │   └── ......
    └── nfnet_l0_ra2-45c6688d.pth
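Before launching a long run, it may help to confirm that the checkpoints are where the layout above expects them. The following is a small sketch, not part of the repo: the helper name `missing_checkpoints` is hypothetical, and the list of files is copied from the tree above (which of them the code actually loads is an assumption).

```python
from pathlib import Path

# Paths copied from the layout above; which files are strictly required
# by the training code is an assumption.
EXPECTED = [
    "bert-base-uncased/config.json",
    "bert-base-uncased/pytorch_model.bin",
    "bert-base-uncased/vocab.txt",
    "nfnet_l0_ra2-45c6688d.pth",
]

def missing_checkpoints(root="distill_utils/checkpoints", expected=EXPECTED):
    """Return the expected relative paths that do not exist under root."""
    root = Path(root)
    return [rel for rel in expected if not (root / rel).exists()]

if __name__ == "__main__":
    missing = missing_checkpoints()
    print("all checkpoints found" if not missing else f"missing: {missing}")
```

Run it from the repository root so that the relative path `distill_utils/checkpoints` resolves correctly.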

Datasets: please download the Flickr30K: [Train][Val][Test][Images] and COCO: [Train][Val][Test][Images] datasets and put them here:

    ./distill_utils/data/
    ├── Flickr30k/
    │   ├── flickr30k-images/
    │   │   ├── 1234.jpg
    │   │   └── ......
    │   ├── results_20130124.token
    │   └── readme.txt
    └── COCO/
        ├── train2014/
        ├── val2014/
        └── test2014/
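As a quick sanity check on the Flickr30k download, the caption file can be inspected with a few lines of Python. This sketch assumes the usual `results_20130124.token` layout of one caption per line in the form `<image>.jpg#<index><TAB><caption>`; the helper name and the sample captions are made up for illustration, and the repo's own data loaders may read the annotations differently.

```python
from collections import defaultdict

def parse_token_lines(lines):
    """Group captions by image name from lines like 'name.jpg#0<TAB>caption'."""
    captions = defaultdict(list)
    for line in lines:
        line = line.rstrip("\n")
        if not line:
            continue  # skip blank lines
        key, _, caption = line.partition("\t")
        image_name, _, _ = key.partition("#")  # drop the '#<index>' suffix
        captions[image_name].append(caption.strip())
    return dict(captions)

# Made-up sample lines in the assumed format.
sample = [
    "1234.jpg#0\tA dog runs across a field .\n",
    "1234.jpg#1\tA brown dog is running outside .\n",
]
grouped = parse_token_lines(sample)
print(grouped)
```

To check the real file, pass an open file handle instead of `sample`, for example `parse_token_lines(open("distill_utils/data/Flickr30k/results_20130124.token", encoding="utf-8"))`.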

Training the expert buffer: e.g. run `sh sh/buffer_flickr.sh`. Expert training takes days. To speed it up, you can manually split `num_experts` across several runs and launch them as parallel processes.
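One way to plan such a split is to divide the expert indices into contiguous, near-equal chunks, one per process. The helper below is a hypothetical sketch and not part of the repo; how each process is told its share depends on the arguments in `sh/buffer_flickr.sh`, which are not shown here, and the numbers are illustrative.

```python
def split_experts(num_experts, num_workers):
    """Split range(num_experts) into contiguous (start, end) chunks, end exclusive."""
    base, extra = divmod(num_experts, num_workers)
    chunks, start = [], 0
    for worker in range(num_workers):
        # The first `extra` workers take one additional expert each.
        size = base + (1 if worker < extra else 0)
        chunks.append((start, start + size))
        start += size
    return chunks

# Illustrative numbers: 20 experts over 3 processes.
print(split_experts(20, 3))  # [(0, 7), (7, 14), (14, 20)]
```

Each tuple is the half-open range of expert indices that one process would train.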

Distilling with LoRS: e.g. run `sh sh/distill_flickr_lors_100.sh`. Distillation can run on a single RTX 3090/4090 thanks to TESLA.

If you find our work useful and inspiring, please cite our paper:

    @article{xu2024lors,
      title={Low-Rank Similarity Mining for Multimodal Dataset Distillation},
      author={Xu, Yue and Lin, Zhilin and Qiu, Yusong and Lu, Cewu and Li, Yong-Lu},
      journal={arXiv e-prints},
      pages={arXiv--2406},
      year={2024}
    }

We follow the settings and code of VL-Distill and re-implement the algorithm with TESLA. We deeply appreciate their valuable contributions!