As large models are released and iterated upon, they are becoming increasingly intelligent. However, using them raises significant challenges for data security and privacy: sensitive data and environments must remain fully under our control, with no risk of privacy leaks or other security incidents. To address this, we launched the DB-GPT project to build a complete private large-model solution for all database-based scenarios. The solution supports local deployment, so it can run in an independent private environment or be deployed and isolated separately for each business module, keeping large-model capabilities private, secure, and controllable.

DB-GPT is an experimental open-source project that uses locally deployed GPT-style large models to interact with your data and environment. With this solution, there is no risk of data leakage, and your data stays 100% private and secure.

- [2023/06/01]🔥 Building on the Vicuna-13B base model, task-chain calls are implemented through plugins, for example creating a database from a single sentence.

- [2023/06/01]🔥 QLoRA guanaco (7b, 13b, 33b) support.

- [2023/05/28]🔥 Learning from data crawled from the Internet.

- [2023/05/21] Automatic SQL generation and execution.

- [2023/05/15] Chat with documents.

- [2023/05/06] SQL generation and diagnosis.

We have already released several key features, listed below:

- SQL language capabilities
  - SQL generation
  - SQL diagnosis
- Private domain Q&A and data processing
  - Database knowledge Q&A
  - Data processing
- Plugins
  - Support custom plugin execution tasks and natively support the Auto-GPT plugin, such as:
    - Automatic execution of SQL and retrieval of query results
    - Automatic crawling and learning of knowledge
- Unified vector storage/indexing of knowledge base
  - Support for unstructured data such as PDF, Markdown, CSV, and WebURL
- Multi-LLM support
  - Supports multiple large language models, currently Vicuna (7b, 13b), ChatGLM-6b (int4, int8), and guanaco (7b, 13b, 33b)
  - TODO: codegen2, codet5p

Run on an RTX 4090 GPU.

DB-GPT builds a large-model operating environment on FastChat and uses Vicuna as its large language model. In addition, it provides private domain knowledge base question answering through LangChain. It also supports additional plugins, and its design natively supports Auto-GPT plugins.

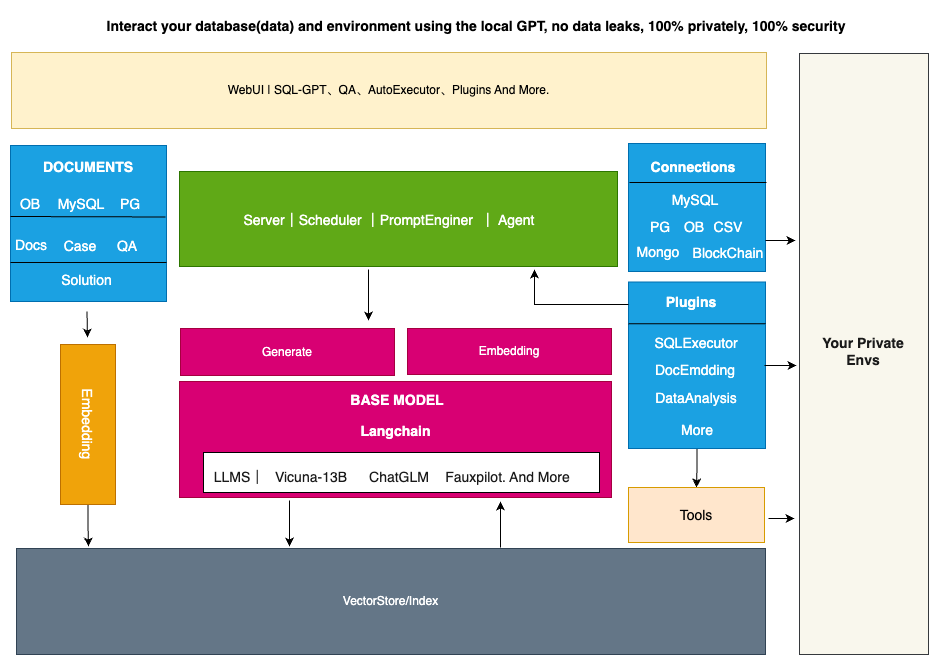

Is the architecture of the entire DB-GPT shown in the following figure:

The core capabilities mainly consist of the following parts:

- Knowledge base capability: Supports private domain knowledge base question-answering capability.

- Large-scale model management capability: Provides a large model operating environment based on FastChat.

- Unified data vector storage and indexing: Provides a uniform way to store and index various data types.

- Connection module: Used to connect different modules and data sources to achieve data flow and interaction.

- Agent and plugins: Provides Agent and plugin mechanisms, allowing users to customize and enhance the system's behavior.

- Prompt generation and optimization: Automatically generates high-quality prompts and optimizes them to improve system response efficiency.

- Multi-platform product interface: Supports various client products, such as web, mobile applications, and desktop applications.

Below is a brief introduction to each module:

Because knowledge bases are currently the most common user scenario, we natively support building and processing them. We also provide several knowledge base management strategies in this project, such as:

- Default built-in knowledge base

- Custom addition of knowledge bases

- Building knowledge bases through plugin capabilities, web crawling, and other scenarios

Users only need to organize their knowledge documents; they can then use our existing capabilities to build the knowledge base the large model requires.
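Building a knowledge base from organized documents usually starts by splitting each document into chunks before they are embedded. The sketch below shows one simple strategy, fixed-size character windows with a small overlap so context is not lost at chunk boundaries. The function name and default sizes are hypothetical and are not taken from DB-GPT's code.

```python
def split_into_chunks(text: str, chunk_size: int = 200, overlap: int = 20) -> list[str]:
    """Split text into character windows of chunk_size that overlap by `overlap` characters.

    Hypothetical helper for illustration; DB-GPT's actual splitter may differ.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    step = chunk_size - overlap  # how far each window advances
    chunks: list[str] = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        # Stop once a window reaches the end of the text.
        if start + chunk_size >= len(text):
            break
    return chunks
```

For example, `split_into_chunks("abcdefghij", chunk_size=4, overlap=1)` returns `['abcd', 'defg', 'ghij']`.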

For the underlying large-model integration, we have designed an open interface that supports various large models. We also apply strict controls and evaluation to the effectiveness of integrated models: in terms of accuracy, an integrated model must reach at least 85% of ChatGPT's capability. By applying these high standards when selecting models, we hope to spare users a tedious testing and evaluation process.
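The open model interface is described here only at a high level. One common way to structure such an interface is a name-to-factory registry, sketched below under the assumption that every model exposes a single `generate(prompt)` method. `LLMAdapter`, `register_model`, `load_model`, and `EchoModel` are hypothetical names, not DB-GPT's actual API; a registry key like this is the kind of value a setting such as LLM_MODEL could select.

```python
from typing import Callable, Protocol


class LLMAdapter(Protocol):
    """Assumed minimal interface every integrated model implements (hypothetical)."""

    def generate(self, prompt: str) -> str: ...


# Maps a model name to a zero-argument factory that builds the adapter.
_REGISTRY: dict[str, Callable[[], LLMAdapter]] = {}


def register_model(name: str):
    """Decorator that registers a model factory under `name`."""
    def decorator(factory):
        _REGISTRY[name] = factory
        return factory
    return decorator


def load_model(name: str) -> LLMAdapter:
    """Instantiate the model registered under `name`."""
    factory = _REGISTRY.get(name)
    if factory is None:
        raise ValueError(f"unknown model {name!r}; known models: {sorted(_REGISTRY)}")
    return factory()


@register_model("echo")
class EchoModel:
    """Hypothetical stand-in model that just echoes the prompt back."""

    def generate(self, prompt: str) -> str:
        return f"echo: {prompt}"
```

With this design, adding a model means registering one new class; callers only ever go through `load_model`, so switching models is a configuration change rather than a code change.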

To make vectorized knowledge easy to manage, we have built in multiple vector storage engines, from memory-based Chroma to distributed Milvus. Users can choose a storage engine according to their own scenario. Storing knowledge vectors is the cornerstone of enhancing AI capabilities: as the intermediate language between humans and large language models, vectors play a very important role in this project.
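To show what a vector storage engine does at its core, here is a toy in-memory store that ranks stored texts by cosine similarity to a query vector. It is a stand-in for illustration only and does not reproduce the Chroma or Milvus APIs; the class and function names are hypothetical, and the vectors are supplied directly instead of coming from an embedding model.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class InMemoryVectorStore:
    """Toy vector store: keeps (text, vector) pairs and does brute-force search."""

    def __init__(self) -> None:
        self._items: list[tuple[str, list[float]]] = []

    def add(self, text: str, vector: list[float]) -> None:
        self._items.append((text, vector))

    def query(self, vector: list[float], k: int = 1) -> list[tuple[str, float]]:
        """Return up to k (text, score) pairs, most similar first."""
        scored = [(text, cosine_similarity(vector, stored)) for text, stored in self._items]
        scored.sort(key=lambda item: item[1], reverse=True)
        return scored[:k]
```

Brute-force search is fine for a handful of vectors; engines like Milvus exist precisely because this linear scan does not scale to large, distributed collections.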

In order to interact more conveniently with users' private environments, the project has designed a connection module, which can support connection to databases, Excel, knowledge bases, and other environments to achieve information and data exchange.
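As an illustration of what a connection module might look like for databases, the sketch below wraps a database connection behind two small methods: listing tables and running a query. It uses the standard-library `sqlite3` module so it runs without a server; the project's own MySQL connector is not shown, and `SQLiteConnector` with its methods is a hypothetical design. The SELECT-only check is a simplistic guard for the example, not a real security boundary.

```python
import sqlite3
from typing import Any


class SQLiteConnector:
    """Hypothetical connector exposing a uniform interface over one data source."""

    def __init__(self, path: str = ":memory:") -> None:
        self.conn = sqlite3.connect(path)

    def table_names(self) -> list[str]:
        """List user tables, e.g. to give the model schema context."""
        rows = self.conn.execute(
            "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
        ).fetchall()
        return [row[0] for row in rows]

    def query(self, sql: str) -> list[tuple[Any, ...]]:
        """Run a read-only query and return all rows."""
        if not sql.lstrip().lower().startswith("select"):
            raise ValueError("only SELECT statements are allowed")
        return self.conn.execute(sql).fetchall()
```

A MySQL or Excel connector could implement the same two methods, so the rest of the system would not need to know which source it is talking to.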

Agents and plugins are central to letting large models work automatically. This project natively supports a plugin mode, so large models can achieve their goals automatically. To take full advantage of the community, the plugin system also natively supports the Auto-GPT plugin ecosystem, meaning Auto-GPT plugins can run directly in this project.
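To make the plugin idea concrete, the sketch below registers plugins by name and dispatches a JSON command emitted by the model, echoing the "create a database with a single sentence" example. The command shape `{"command": {"name": ..., "args": {...}}}` is an assumption chosen for illustration and is not guaranteed to match the format DB-GPT or Auto-GPT plugins actually use; `plugin`, `dispatch`, and `create_database` are hypothetical.

```python
import json
from typing import Callable

# Registry mapping a plugin name to the function that implements it.
PLUGINS: dict[str, Callable[..., str]] = {}


def plugin(name: str):
    """Decorator that registers a function as a named plugin."""
    def decorator(func):
        PLUGINS[name] = func
        return func
    return decorator


@plugin("create_database")
def create_database(name: str) -> str:
    # Hypothetical plugin body: a real plugin would execute this statement.
    return f"CREATE DATABASE {name};"


def dispatch(model_output: str) -> str:
    """Parse the model's JSON command (assumed shape) and run the matching plugin."""
    command = json.loads(model_output)["command"]
    func = PLUGINS.get(command["name"])
    if func is None:
        raise KeyError(f"no plugin named {command['name']!r}")
    return func(**command.get("args", {}))
```

For example, `dispatch('{"command": {"name": "create_database", "args": {"name": "shop"}}}')` returns `"CREATE DATABASE shop;"`.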

Prompts are a key part of the interaction between the large model and the user, and they largely determine the quality and accuracy of the model's answers. This project automatically optimizes prompts based on user input and usage scenarios, making large language models easier and more efficient to use.
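As a concrete illustration of the kind of prompt a text-to-SQL scenario needs, here is a hypothetical template builder that injects table schemas and the user's question. The template wording and the function name `build_sql_prompt` are assumptions, not the prompts DB-GPT ships with.

```python
def build_sql_prompt(question: str, schema: dict[str, list[str]], dialect: str = "MySQL") -> str:
    """Assemble a text-to-SQL prompt from table schemas and a user question (hypothetical template)."""
    # One line per table, e.g. "- users(id, name)", sorted for stable output.
    tables = "\n".join(
        f"- {table}({', '.join(columns)})" for table, columns in sorted(schema.items())
    )
    return (
        f"You are a {dialect} expert. Using only the tables below, "
        "write one SQL query that answers the question.\n"
        f"Tables:\n{tables}\n"
        f"Question: {question}\n"
        "SQL:"
    )
```

Including the schema grounds the model in real table and column names, and ending with `SQL:` nudges it to answer with a query rather than prose.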

TODO: In terms of terminal display, we will provide a multi-platform product interface, including PC, mobile phone, command line, Slack and other platforms.

Because the project aims for over 85% of ChatGPT's performance, it has certain hardware requirements. Overall, however, it can be deployed and used on consumer-grade graphics cards. The specific hardware requirements for deployment are as follows:

| GPU | VRAM Size | Performance |
|---|---|---|
| RTX 4090 | 24 GB | Smooth conversation inference |
| RTX 3090 | 24 GB | Smooth conversation inference, better than V100 |
| V100 | 16 GB | Conversation inference possible, noticeable stutter |

This project relies on a local MySQL database service, which you need to install locally. We recommend using Docker for installation.

$ docker run --name=mysql -p 3306:3306 -e MYSQL_ROOT_PASSWORD=aa123456 -dit mysql:latest

We use the Chroma embedding database as the default vector database, so no special installation is needed. If you choose to connect to another database, follow our tutorial to install and configure it. The entire DB-GPT installation process uses a miniconda3 virtual environment. Create a virtual environment and install the Python dependencies:

python>=3.10

conda create -n dbgpt_env python=3.10

conda activate dbgpt_env

pip install -r requirements.txt
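Before starting DB-GPT, you may want to confirm that the MySQL container started by the docker run command above is accepting connections. This stdlib-only sketch attempts a TCP connection to 127.0.0.1:3306, the host and port published by that command. The helper name `port_open` is hypothetical, and a successful connection only shows that something is listening on the port, not that the MySQL credentials work.

```python
import socket


def port_open(host: str = "127.0.0.1", port: int = 3306, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        # create_connection raises OSError (e.g. ConnectionRefusedError) on failure.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

Calling `port_open()` from the activated environment should return `True` once the container is up.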

You can refer to this document to obtain the Vicuna weights: Vicuna.

If you have difficulty with this step, you can also directly use the model from this link as a replacement.

- Run the model server:

$ python pilot/server/llmserver.py

- Run the Gradio web UI:

$ python pilot/server/webserver.py

Notice: the web server needs to connect to the LLM server, so you must update the .env file: change MODEL_SERVER = "http://127.0.0.1:8000" to your LLM server's address. This step is very important.

We provide a Gradio-based user interface that lets you use DB-GPT directly. Additionally, we have prepared several reference articles (written in Chinese) that introduce the code and principles behind the project.

To use multiple models, modify the LLM_MODEL parameter in the .env configuration file to switch between the models.


To switch languages, modify the LANGUAGE parameter in the .env configuration file. The default is English (en); Chinese is zh, and other languages will be added.
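The LLM_MODEL, LANGUAGE, and MODEL_SERVER settings mentioned above all live in the .env file. The sketch below is a minimal, stdlib-only way to read such KEY=VALUE settings, for example to check what your configuration contains before starting the servers. `read_env` is a hypothetical helper; it handles only simple lines (no variable expansion or multi-line values) and is not necessarily how DB-GPT itself loads .env.

```python
from pathlib import Path


def read_env(path: str = ".env") -> dict[str, str]:
    """Parse simple KEY=VALUE lines, skipping blanks and # comments and stripping quotes."""
    settings: dict[str, str] = {}
    for raw in Path(path).read_text(encoding="utf-8").splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)  # split on the first '=' only
        settings[key.strip()] = value.strip().strip('"').strip("'")
    return settings
```

For example, `read_env(".env").get("LANGUAGE", "en")` returns the configured language code, falling back to the documented default of English.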

1. Place personal knowledge files or folders in the pilot/datasets directory.

2. Set your vector store type in the .env configuration, e.g. VECTOR_STORE_TYPE=Chroma. Chroma and Milvus (version > 2.1) are currently supported.

3. Run the knowledge repository script in the tools directory:

$ python tools/knowledge_init.py

--vector_name: your vector store name (default: default)

--append: append mode; True appends, False does not append (default: False)

4. Add the knowledge repository in the interface by entering its name (enter "default" if you did not specify one), so you can use it for Q&A based on your knowledge base.
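To make the two documented options of the knowledge script concrete, here is a hypothetical argparse declaration that mirrors --vector_name and --append with the defaults listed above. It is not the source of tools/knowledge_init.py; in particular, how the real script converts the --append value is an assumption.

```python
import argparse


def str_to_bool(value: str) -> bool:
    """Convert 'True'/'False'-style strings to bool (hypothetical converter)."""
    lowered = value.lower()
    if lowered in ("true", "1", "yes"):
        return True
    if lowered in ("false", "0", "no"):
        return False
    raise argparse.ArgumentTypeError(f"expected True or False, got {value!r}")


def build_parser() -> argparse.ArgumentParser:
    """Mirror the documented options and defaults (sketch, not the real script)."""
    parser = argparse.ArgumentParser(description="Sketch of knowledge_init.py options")
    parser.add_argument("--vector_name", default="default", help="vector store name")
    parser.add_argument("--append", type=str_to_bool, default=False,
                        help="True: append, False: not append")
    return parser
```

Passing `type=bool` to argparse would not work here, because `bool("False")` is `True`; an explicit converter such as `str_to_bool` avoids that pitfall.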

Note that the default embedding model is text2vec-large-chinese. Because it is a large model, text2vec-base-chinese is recommended if your personal computer's resources are insufficient. Either way, download the model and place it in the models directory.

If nltk-related errors occur while using the knowledge base, you need to install the nltk toolkit data. For more details, refer to the nltk documentation. Run the Python interpreter and type the commands:

>>> import nltk

>>> nltk.download()

This project stands on the shoulders of giants and would not work without the open-source community. Special thanks to the following projects for their excellent contributions to the AI industry:

- FastChat for providing chat services

- vicuna-13b as the base model

- langchain tool chain

- Auto-GPT universal plugin template

- Hugging Face for large model management

- Chroma for vector storage

- Milvus for distributed vector storage

- ChatGLM as the base model

- llama_index for enhancing database-related knowledge using in-context learning based on existing knowledge bases.

- Please run the black formatter (black .) before submitting code.

The MIT License (MIT)

We are working on building a community. If you have any ideas about it, feel free to contact us on Discord.