A gym environment and command-line tool for automating rigid origami crease pattern design.

Note We provide an introduction to the underlying principles of rigid origami and some practical use cases in our paper Automating Rigid Origami Design.

We reformulate the rigid-origami problem as a board game in which an agent interacts with the rigid-origami gym environment according to a set of rules that define an origami-specific problem.

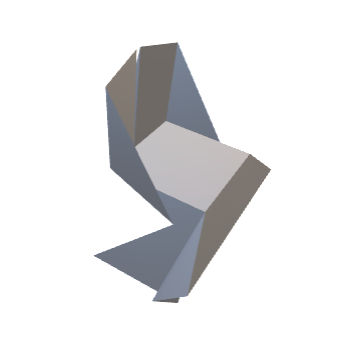

Figure: The rigid origami game for shape approximation. The target shape (a) is approximated by an agent playing the game (b) to find a pattern (c) which, in its folded state (d), approximates the target.

Our command-line tool comprises a set of agents (or classical search methods and algorithms) for 3D shape approximation, packaging, foldable furniture and more.

Note The rigid-origami environment is not limited to these particular origami design challenges and agents. You can also deploy it on your own custom use case.

Note Before installing the dependencies, it is recommended that you set up a conda virtual environment.

Set up and activate the conda environment using the conda_rigid_origami_env.yml package list.

$ conda create --name=rigid-origami --file=conda_rigid_ori_env.yml

$ conda activate rigid-origami

Next, install the gym environment.

(rigid-origami) $ cd gym-rori

(rigid-origami) $ pip install -e .

The environment is now all set up.

As an example, we play the rigid-origami game for shape approximation.

(rigid-origami) $ python main.py --objective=shape-approx --search-algorithm=RDM --num-steps=100000 --board-length=25 --num-symmetries=2 --optimize-psi

Adjust the game objective, agent, or any other conditions by setting specific options.

Note You can utilize the environment for different design tasks or objectives. You can also add a custom reward function in the rewarder.

A non-exhaustive list of the basic game settings is given below.

| Option | Flag | Value | Default value |
|---|---|---|---|
| Name | --name | string | "0" |
| Number of env interactions | --num-steps | int | 500 |
| Game objective | --objective | {shape-approx, packaging, chair, table, shelf, bucket} | shape-approx |
| Agent | --search-algorithm | {RDM, MCTS, evolution, PPO, DFTS, BFTS, human} | RDM |
| Seed pattern | --base | {plain, simple, simple-vert, single, quad} | plain |
| Seed sequence | --start-sequence | list | [] |
| Seed pattern size | --seed-pattern-size | int | 2 |
| Board edge length | --board-length | int | 13 |
| Number of symmetry axes | --num-symmetries | {0,1,2,3} | 2 |
| Maximum number of vertices | --max-vertices | int | 100 |
| (Max.) fold angle | --psi | float | 3.14 |
| Auto optimize fold angle | --optimize-psi | True | |
| Maximum crease length | --cl-max | float | infinity |
| Random seed | --seed | int | 16711 |
| Allow source action | --allow-source-action | string | False |
| Target | --target | string | "target.obj" |
| Target transform | --target-transform | transform | [0,0,0] |
| Auto target mesh transform | --auto-mesh-transform | False | |
| Count interior vertices | --count-interior | False | |
| Mode | --mode | {TRAIN, DEBUG} | TRAIN |
| Resume run | --resume | False | |
| Local directory | --local-dir | string | "cwd" |
| Branching factor | --bf | int | 10 |
| Number of workers (RL) | --num-workers | int | 0 |
| Training iterations (RL) | --training-iteration | int | 100 |
| Number of CPUs (RL) | --ray-num-cpus | int | 1 |
| Number of GPUs (RL) | --ray-num-gpus | int | 0 |
| Animation view | --anim-view | list | [90, -90, 23] |
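To make the option table more concrete, here is a minimal sketch of how a handful of these flags could be declared with Python's `argparse`. It is an illustration only: the function name `build_parser` is hypothetical, only a subset of the options is covered, and treating `--optimize-psi` as a boolean switch is an assumption. The parser in `main.py` may be organized differently.

```python
import argparse

def build_parser():
    # Hypothetical parser mirroring a subset of the option table above;
    # this is NOT the repository's actual argument handling.
    p = argparse.ArgumentParser(description="rigid-origami game (illustrative subset)")
    p.add_argument("--objective", default="shape-approx",
                   choices=["shape-approx", "packaging", "chair", "table", "shelf", "bucket"])
    p.add_argument("--search-algorithm", default="RDM",
                   choices=["RDM", "MCTS", "evolution", "PPO", "DFTS", "BFTS", "human"])
    p.add_argument("--num-steps", type=int, default=500)
    p.add_argument("--board-length", type=int, default=13)
    p.add_argument("--num-symmetries", type=int, default=2, choices=[0, 1, 2, 3])
    # Assumption: the flag is a switch that is off unless given.
    p.add_argument("--optimize-psi", action="store_true")
    return p

# Parse the same options used in the shape-approximation example.
args = build_parser().parse_args([
    "--objective=shape-approx", "--search-algorithm=RDM", "--num-steps=100000",
    "--board-length=25", "--num-symmetries=2", "--optimize-psi",
])
print(args.search_algorithm, args.num_steps, args.board_length, args.optimize_psi)
```

Restricting `--objective` and `--search-algorithm` with `choices` makes a mistyped value fail early with a readable error instead of surfacing deep inside a run.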

Note The complexity of the action and configuration spaces grows exponentially with the board size. Conversely, additional symmetries help reduce the complexity.
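A rough back-of-the-envelope illustration of this effect, under a deliberately crude toy model: assume every board point can independently be used or not, and assume each symmetry axis halves the number of points that must be decided independently. Neither assumption describes the environment's real action space, and the function name `log2_pattern_count` is hypothetical. The sketch only shows why board size hurts and symmetry helps.

```python
def log2_pattern_count(board_length, num_symmetries):
    """Return log2 of a crude upper bound on the number of vertex subsets (toy model)."""
    board_points = board_length ** 2
    # Assumption: each symmetry axis halves the points that are chosen independently.
    free_points = board_points / 2 ** num_symmetries
    # The toy bound is 2 ** free_points, so its log2 is simply free_points.
    return free_points

for n in (13, 25):
    for k in (0, 2):
        exponent = log2_pattern_count(n, k)
        print(f"board-length={n}, symmetries={k}: roughly 2^{exponent:.0f} subsets")
```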

The gym environment class RoriEnv contains the methods for the agents to interact with.

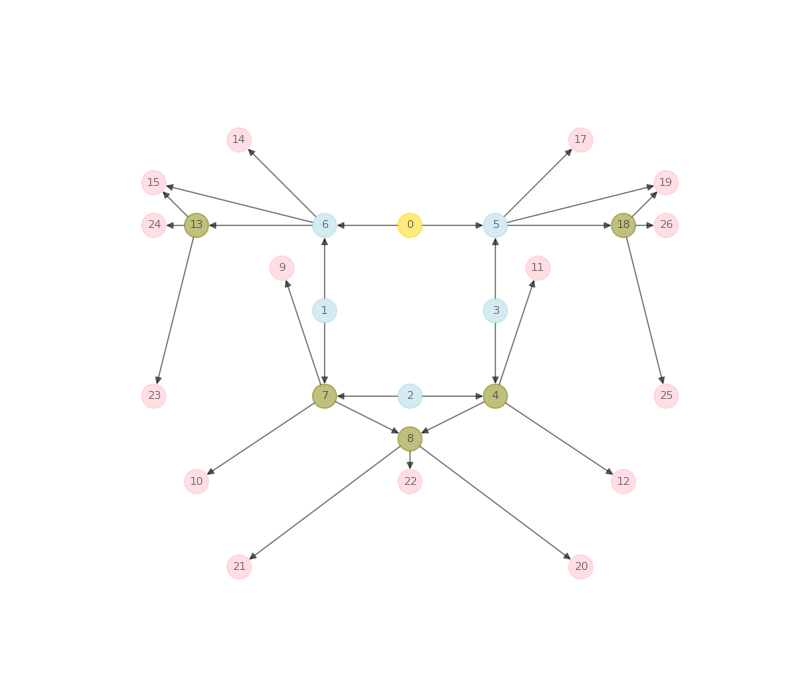

In essence, agents construct graphs of connected single vertices in the environment and receive sparse rewards in return.

Rewards depend on the set objective and rewarder.

Note You can deploy your own custom rewarder. To make it run, you also need to add and call your custom objective from the main.
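As a sketch of what a custom reward for shape approximation might look like, the function below scores a folded pattern by how close its vertices come to a set of target points. The function name, its signature, and the choice of distance measure are all assumptions made for illustration. The rewarder shipped with the environment may use a different interface and metric.

```python
import math

def shape_approx_reward(folded_points, target_points):
    """Hypothetical reward: negative mean distance from each target point
    to its nearest folded vertex (0 is best, more negative is worse)."""
    total = 0.0
    for t in target_points:
        # Distance from this target point to the closest folded vertex.
        total += min(math.dist(t, f) for f in folded_points)
    return -total / len(target_points)

target = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
perfect = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
lifted = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
print(shape_approx_reward(perfect, target), shape_approx_reward(lifted, target))
```

Because rewards are sparse, a function like this would typically be evaluated once, on the folded state at the end of an episode, rather than after every move.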

A game terminates when a terminal state is reached, either because the agent chooses the terminal action or because the a posteriori foldability conditions are violated.
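The two ways a game can end can be sketched with a toy stand-in environment. `ToyBoardEnv`, its `max_vertices` limit, and the 4-tuple returned by `step` (the classic gym convention) are assumptions for illustration. This is not the `RoriEnv` class, whose observations, actions, and rewards are richer.

```python
class ToyBoardEnv:
    """Hypothetical stand-in for a gym-style environment; not RoriEnv."""
    TERMINAL_ACTION = 0

    def __init__(self, max_vertices=5):
        self.max_vertices = max_vertices
        self.vertices = []

    def reset(self):
        self.vertices = []
        return tuple(self.vertices)

    def step(self, action):
        if action == self.TERMINAL_ACTION:
            # The agent chooses to stop; the sparse reward is paid only here.
            reward = float(len(self.vertices))
            return tuple(self.vertices), reward, True, {"reason": "terminal action"}
        self.vertices.append(action)
        if len(self.vertices) > self.max_vertices:
            # Stand-in for a foldability violation detected after the move.
            return tuple(self.vertices), 0.0, True, {"reason": "violation"}
        return tuple(self.vertices), 0.0, False, {}

def play_episode(env, policy):
    """Run one game until a terminal state and return (total reward, reason)."""
    obs = env.reset()
    done, total, info = False, 0.0, {}
    while not done:
        obs, reward, done, info = env.step(policy(obs))
        total += reward
    return total, info["reason"]

# One policy stops after two vertices, the other never stops voluntarily.
print(play_episode(ToyBoardEnv(), lambda obs: 1 if len(obs) < 2 else 0))
print(play_episode(ToyBoardEnv(), lambda obs: 1))
```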

Agents interact with the environment. They can be human or artificial. We provide a list of standard search algorithms as artificial agents.

| Agent | Search Algorithm |
|---|---|
| RDM | Uniform Random Search |
| BFTS | Breadth First Tree Search |
| DFTS | Depth First Tree Search |
| MCTS | Monte Carlo Tree Search |
| evolution | Evolutionary Search |
| PPO | Proximal Policy Optimization (Reinforcement Learning) |

Note You can add your own custom agent or search algorithm in the main.
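To give a feel for how the tree-search agents differ, the sketch below runs breadth-first and depth-first search over a toy tree of action sequences. The only difference between the two is which end of the frontier is expanded next. The function `tree_search`, the toy `expand` and `score` callbacks, and the node budget are hypothetical and do not reflect the agents' actual implementation.

```python
from collections import deque

def tree_search(root, expand, score, max_nodes, depth_first=False):
    """Visit up to max_nodes nodes and return the best (node, score) seen."""
    frontier = deque([root])
    best, best_score = root, score(root)
    visited = 0
    while frontier and visited < max_nodes:
        # Depth-first pops the newest node, breadth-first the oldest.
        node = frontier.pop() if depth_first else frontier.popleft()
        visited += 1
        node_score = score(node)
        if node_score > best_score:
            best, best_score = node, node_score
        frontier.extend(expand(node))
    return best, best_score

# Toy problem: nodes are action sequences of length <= 3 over actions {0, 1, 2}.
def expand(node):
    return [node + (a,) for a in range(3)] if len(node) < 3 else []

def score(node):
    return sum(node)

bfts_result = tree_search((), expand, score, max_nodes=1000)
dfts_result = tree_search((), expand, score, max_nodes=1000, depth_first=True)
print(bfts_result, dfts_result)
```

With a budget large enough to exhaust the tree, both orders find the same best node. With a small budget they explore very different parts of the tree, which is why the choice of agent matters on large boards.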

Rules and symmetry-rules are enforced a priori through action masking, constraining the action space of agents.
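Action masking can be sketched as follows: the environment supplies a boolean mask over the action space, and the agent only ever samples from the entries marked valid. The function name `sample_masked_action` and the flat list-of-booleans mask are assumptions for illustration. The environment's real mask encoding may differ.

```python
import random

def sample_masked_action(mask, rng=random):
    """Pick uniformly among the actions whose mask entry is True."""
    valid = [action for action, allowed in enumerate(mask) if allowed]
    if not valid:
        raise ValueError("no valid action available")
    return rng.choice(valid)

# Hypothetical mask: only actions 0 and 3 comply with the rules in this state.
mask = [True, False, False, True, False]
rng = random.Random(0)
sampled = {sample_masked_action(mask, rng) for _ in range(100)}
print(sampled)
```

Since invalid actions are never proposed, the agent spends none of its interaction budget on moves that the rules would reject anyway.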

A game can, however, still reach a non-foldable state. A particular state is foldable if and only if it complies with the following conditions:

- Faces do not intersect during folding, as verified by a triangle-triangle intersection test.

- The corresponding origami pattern is rigidly foldable, as validated by the kinematic model.

Any violation of the two conditions results in a terminal game state.
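The first condition can be illustrated with a simple triangle-triangle test: two non-coplanar triangles intersect if and only if an edge of one crosses the other, and each edge is checked with a Möller-Trumbore style segment-triangle test. This is a sketch under stated assumptions and not the environment's own test. The helper names are hypothetical, coplanar triangles are reported as non-intersecting, and a real check would also need to skip neighbouring faces that legitimately share a crease.

```python
EPS = 1e-9

def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def segment_hits_triangle(p, q, tri):
    """Moller-Trumbore test restricted to the segment from p to q."""
    a, b, c = tri
    d, e1, e2 = sub(q, p), sub(b, a), sub(c, a)
    h = cross(d, e2)
    det = dot(e1, h)
    if abs(det) < EPS:
        return False  # segment is parallel to the triangle's plane
    s = sub(p, a)
    u = dot(s, h) / det          # first barycentric coordinate
    qv = cross(s, e1)
    v = dot(d, qv) / det         # second barycentric coordinate
    t = dot(e2, qv) / det        # position along the segment
    return u >= 0 and v >= 0 and u + v <= 1 and 0 <= t <= 1

def edges(tri):
    return [(tri[0], tri[1]), (tri[1], tri[2]), (tri[2], tri[0])]

def triangles_intersect(t1, t2):
    """True if any edge of one triangle crosses the other (non-coplanar case)."""
    return (any(segment_hits_triangle(p, q, t2) for p, q in edges(t1))
            or any(segment_hits_triangle(p, q, t1) for p, q in edges(t2)))

flat = ((0, 0, 0), (2, 0, 0), (0, 2, 0))
piercing = ((0.5, 0.5, -1), (0.5, 0.5, 1), (1.5, 0.5, 1))
far_away = ((0.5, 0.5, 4), (0.5, 0.5, 6), (1.5, 0.5, 6))
print(triangles_intersect(flat, piercing), triangles_intersect(flat, far_away))
```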

The best episodes (origami patterns) of each experiment are documented in the results directory. For each best episode, three files are stored: a PNG of the corresponding origami graph, an OBJ of the folded shape, and a GIF of the folding animation.

| Origami Graph (Pattern) | Folded Shape | Folding Animation |
|---|---|---|
| | | |