Vinicio DeSola, Kevin Hanna, Pri Nonis

Goal 1 FinBERT-Prime_128MSL-500K+512MSL-10K vs BERT

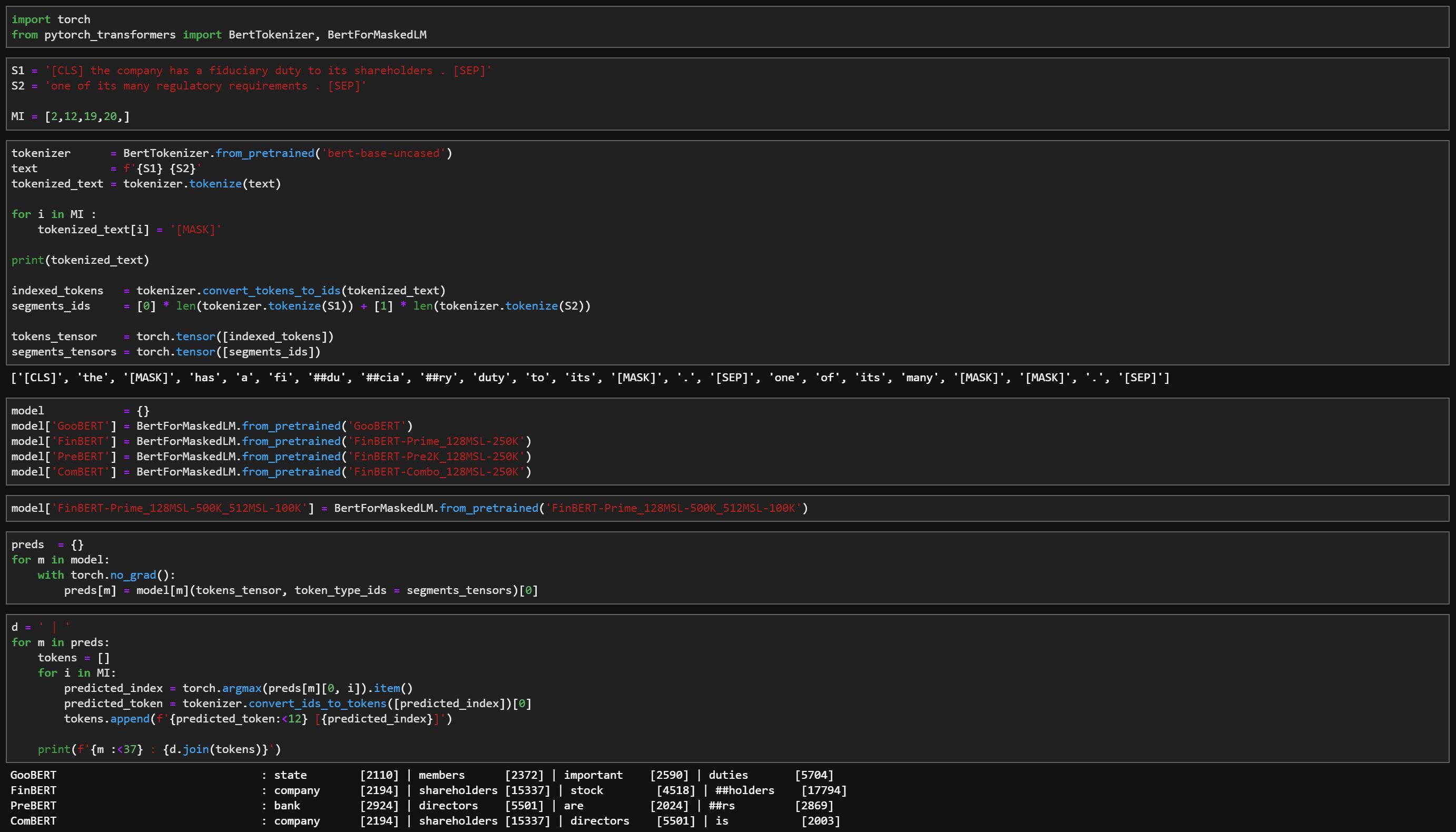

- Compare mask LM prediction accuracy on technical financial sentences

- Compare analogy on financial relationships
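One way the masked LM accuracy comparison could be scored is sketched below. It assumes each model has already produced a ranked list of candidate tokens for every masked position. The function name `masked_lm_accuracy`, the top-k scoring rule, and the toy data are illustrative assumptions, not the project's actual evaluation code or results.

```python
from typing import Sequence


def masked_lm_accuracy(
    ranked_predictions: Sequence[Sequence[str]],
    gold_tokens: Sequence[str],
    k: int = 1,
) -> float:
    """Fraction of masked positions whose gold token is among the top-k candidates."""
    if len(ranked_predictions) != len(gold_tokens):
        raise ValueError("need one ranked candidate list per masked position")
    if not gold_tokens:
        return 0.0
    hits = sum(
        gold in ranked[:k]
        for ranked, gold in zip(ranked_predictions, gold_tokens)
    )
    return hits / len(gold_tokens)


# Toy data, invented for illustration only (not output from any real model).
gold = ["dividend", "liabilities", "amortization"]
model_a = [
    ["dividend", "payment"],
    ["assets", "liabilities"],
    ["depreciation", "interest"],
]
model_b = [
    ["dividend", "bonus"],
    ["liabilities", "assets"],
    ["amortization", "depreciation"],
]

for name, preds in (("model_a", model_a), ("model_b", model_b)):
    top1 = masked_lm_accuracy(preds, gold, k=1)
    top2 = masked_lm_accuracy(preds, gold, k=2)
    print(f"{name}: top-1 = {top1:.2f}, top-2 = {top2:.2f}")
```

Scoring both models on the same masked positions keeps the comparison paired, so a difference in accuracy reflects the models rather than the choice of sentences.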

Goal 2 FinBERT-Prime_128MSL-500K vs FinBERT-Pre2K_128MSL-500K

- Compare mask LM prediction accuracy on financial news from 2019

- Compare analogy on financial relationships and measure the shift in understanding: risk vs climate in 1999 vs 2019
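The analogy test and the shift measurement could be sketched as follows, assuming each model can be reduced to a dictionary mapping a word to a vector. How those vectors are extracted from each model is left out. The helper names (`cosine`, `analogy`, `similarity_shift`) and all vectors are hypothetical, chosen only to make the example run.

```python
import math
from typing import Dict, List

Vector = List[float]


def cosine(u: Vector, v: Vector) -> float:
    """Cosine similarity between two vectors (0.0 if either has zero norm)."""
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm if norm else 0.0


def analogy(emb: Dict[str, Vector], a: str, b: str, c: str) -> str:
    """Answer 'a is to b as c is to ?' with the word closest to b - a + c."""
    target = [bv - av + cv for av, bv, cv in zip(emb[a], emb[b], emb[c])]
    candidates = [w for w in emb if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(emb[w], target))


def similarity_shift(
    emb_old: Dict[str, Vector], emb_new: Dict[str, Vector], w1: str, w2: str
) -> float:
    """Change in within-model similarity of (w1, w2) from the old to the new model."""
    return cosine(emb_new[w1], emb_new[w2]) - cosine(emb_old[w1], emb_old[w2])


# Toy vectors, invented for illustration only.
emb = {
    "bond": [1.0, 0.0, 0.0],
    "coupon": [1.0, 1.0, 0.0],
    "stock": [0.0, 0.0, 1.0],
    "dividend": [0.0, 1.0, 1.0],
    "loan": [1.0, 0.0, 1.0],
}
print(analogy(emb, "bond", "coupon", "stock"))

emb_1999 = {"risk": [1.0, 0.0], "climate": [0.0, 1.0]}
emb_2019 = {"risk": [1.0, 0.0], "climate": [1.0, 1.0]}
print(round(similarity_shift(emb_1999, emb_2019, "risk", "climate"), 3))
```

The shift is computed from similarities taken inside each model. Vectors from two separately trained models do not share a coordinate system, so they are not compared directly.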

Goal 3 FinBERT-Prime_128MSL-500K vs FinBERT-Prime_128MSL-500K+512MSL-10K

- Compare mask LM prediction accuracy on long financial sentences

Goal 4 FinBERT-Combo_128MSL-250K vs FinBERT-Prime_128MSL-500K+512MSL-10K

- Compare mask LM prediction accuracy on financial sentences: can we get the same accuracy with less training by building on original BERT weights?

- Prime: pre-trained from scratch on the 2017, 2018, 2019 SEC 10K dataset

- Pre2K: pre-trained from scratch on the 1998, 1999 SEC 10K dataset

- Combo: pre-training continued from original BERT on the 2017, 2018, 2019 SEC 10K dataset