[CVPR 2021 Oral] Robust Neural Routing Through Space Partitions for Camera Relocalization in Dynamic Indoor Environments

*Siyan Dong, *Qingnan Fan, He Wang, Ji Shi, Li Yi, Thomas Funkhouser, Baoquan Chen, Leonidas J. Guibas

\* Equal contribution

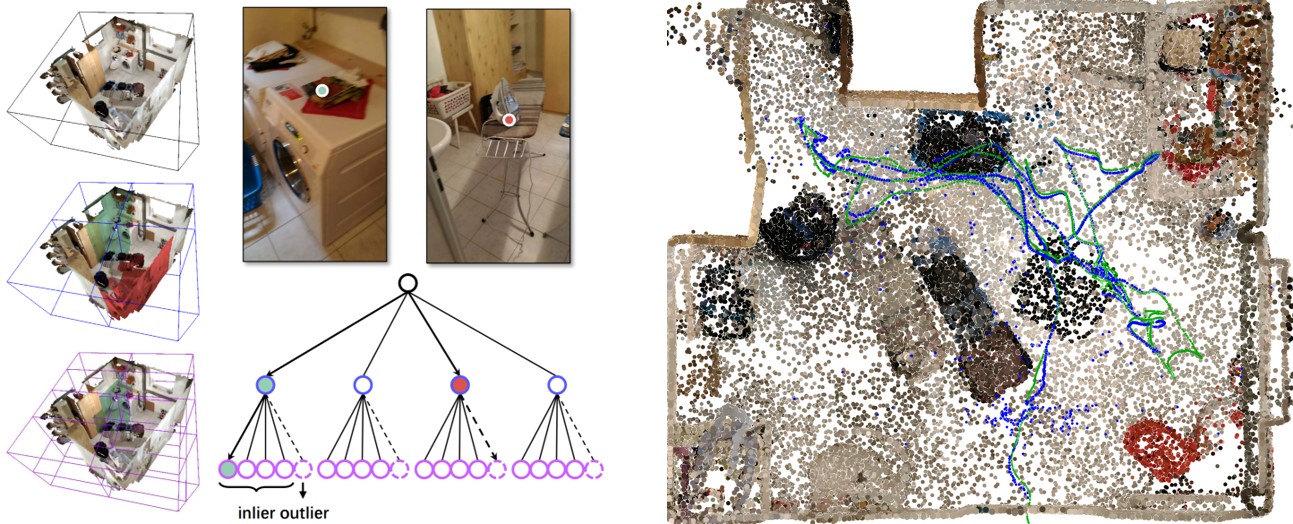

We provide the implementation of NeuralRouting, a novel outlier-aware neural tree model for estimating camera poses in dynamic environments. The model is built on three key components:

(a) a hierarchical space partition over the indoor scene that defines a decision tree;
(b) a neural routing function, implemented as a deep classification network, used for better 3D scene understanding;
(c) an outlier rejection module that filters out dynamic points during the hierarchical routing process.

Once camera-to-world 3D-3D correspondences are established, a Kabsch-based RANSAC solves for the camera pose.
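The sketch below illustrates components (b) and (c). It performs greedy routing through a space-partition tree, with outlier rejection at every node. It assumes each node's classifier returns scores over its children plus one extra "outlier" class. `RoutingNode`, `route`, and the 0.5 threshold are hypothetical names and values, not taken from this repository. The toy lambdas stand in for the deep networks the repository actually trains.

```python
import numpy as np


class RoutingNode:
    """Hypothetical internal node of a space-partition tree.

    Assumption: classifier(feature) returns len(children) + 1 scores, where the
    last score is an extra "outlier" class used to reject dynamic points.
    `children` holds RoutingNode objects or integer leaf-region ids.
    """

    def __init__(self, classifier, children):
        self.classifier = classifier
        self.children = children


def softmax(scores):
    z = np.asarray(scores, dtype=float)
    e = np.exp(z - z.max())  # subtract the max for numerical stability
    return e / e.sum()


def route(node, feature, outlier_threshold=0.5):
    """Descend greedily from `node`; return a leaf id, or None if rejected."""
    while isinstance(node, RoutingNode):
        probs = softmax(node.classifier(feature))
        if probs[-1] > outlier_threshold:
            return None  # treated as a dynamic/outlier point at this level
        node = node.children[int(np.argmax(probs[:-1]))]
    return node


# Toy two-level tree with hand-written "classifiers".
inner = RoutingNode(lambda f: [f[0], -f[0], -10.0], [0, 1])
root = RoutingNode(lambda f: [5.0, 0.0, -10.0], [inner, 2])
print(route(root, np.array([1.0])))  # 0
```

Greedy argmax descent keeps the sketch short. A real model might instead keep several candidate branches per level.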

Overall, our algorithm consists of two steps: a scene coordinate regressor that establishes 3D-3D correspondences, and a Kabsch-based RANSAC algorithm that optimizes the camera pose. The coordinate regressor is scene-specific and is trained separately for each scene. At inference time, the camera pose is estimated by running the coordinate regressor followed by the RANSAC algorithm.
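The second step can be illustrated with a Kabsch-based RANSAC over 3D-3D correspondences, sketched here in NumPy. This is a generic textbook version, not the spaint-based implementation this repository uses. The function names, the 3-point minimal sample, the iteration count, and the 5 cm inlier threshold are illustrative assumptions.

```python
import numpy as np


def kabsch(src, dst):
    """Least-squares rigid transform (R, t) such that dst ≈ src @ R.T + t."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)  # 3x3 cross-covariance matrix
    U, _, Vt = np.linalg.svd(H)
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0  # avoid reflections
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = dst_c - R @ src_c
    return R, t


def ransac_pose(cam_pts, world_pts, iters=256, inlier_thresh=0.05, seed=0):
    """Camera-to-world pose (4x4) from (N, 3) camera/world correspondences.

    Assumption: inlier_thresh is in the same units as the points (metres here).
    """
    rng = np.random.default_rng(seed)
    n = len(cam_pts)
    best = np.zeros(n, dtype=bool)
    for _ in range(iters):
        idx = rng.choice(n, size=3, replace=False)  # minimal sample for a rigid pose
        R, t = kabsch(cam_pts[idx], world_pts[idx])
        residuals = np.linalg.norm(cam_pts @ R.T + t - world_pts, axis=1)
        inliers = residuals < inlier_thresh
        if inliers.sum() > best.sum():
            best = inliers
    if best.sum() < 3:
        raise ValueError("no pose hypothesis with enough inliers")
    R, t = kabsch(cam_pts[best], world_pts[best])  # refit on all inliers
    pose = np.eye(4)
    pose[:3, :3], pose[:3, 3] = R, t
    return pose, best
```

Refitting on all inliers of the best hypothesis is a common final step. It reduces the noise of the 3-point estimate.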

If you find our work helpful in your research, please consider citing:

@InProceedings{Dong_2021_CVPR,
  author = {Dong, Siyan and Fan, Qingnan and Wang, He and Shi, Ji and Yi, Li and Funkhouser, Thomas and Chen, Baoquan and Guibas, Leonidas J.},
  title = {Robust Neural Routing Through Space Partitions for Camera Relocalization in Dynamic Indoor Environments},
  booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
  month = {June},
  year = {2021},
  pages = {8544-8554}
}

The code in this repository has been tested with Python 3.7.3, PyTorch 1.1.0, CUDA 10.0, OpenCV 4.5.1, and scikit-learn 0.21.2. We recommend running it in the Docker container `siyandong/neuralrouting:ransac_v0.2`:

docker run -v <dataset folder>:/opt/dataset -it --gpus all --rm --entrypoint /bin/bash --name <container name> siyandong/neuralrouting:ransac_v0.2

In the container, run `source activate NeuralRouting` to activate the environment.

The data follows the format of the RIO10 benchmark. To use the code, please download the dataset first.

In our experiments, we apply a random transformation to the original ground-truth camera poses of each scene. The pre-computed parameters and pre-trained model weights depend on this transformed coordinate system. Therefore, run the following script to convert the coordinate system before running our algorithm:

python random_transformation.py --dataset_folder <dataset folder>

For example, `python random_transformation.py --dataset_folder /opt/dataset`. After camera pose estimation, run the same script with different arguments to convert the estimated poses back to the original coordinate system (see the pose conversion step below).
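Conceptually, the conversion re-expresses every camera-to-world pose in a new world frame. The sketch below assumes poses are 4x4 camera-to-world matrices and that the per-scene transformation is a single rigid 4x4 matrix `T`. The function names are hypothetical, and the sketch does not reflect how `random_transformation.py` actually stores or applies its parameters.

```python
import numpy as np


def random_rigid_transform(rng):
    """A random rigid 4x4 transform (rotation taken from a QR decomposition)."""
    Q, _ = np.linalg.qr(rng.normal(size=(3, 3)))
    if np.linalg.det(Q) < 0:
        Q[:, 0] = -Q[:, 0]  # flip one axis so Q is a proper rotation (det = +1)
    T = np.eye(4)
    T[:3, :3] = Q
    T[:3, 3] = rng.uniform(-1.0, 1.0, size=3)
    return T


def to_transformed_frame(poses, T):
    """Assumption: poses are camera-to-world, so the new world frame gives T @ P."""
    return [T @ P for P in poses]


def to_original_frame(poses, T):
    """Undo the transformation on estimated poses: inv(T) @ P'."""
    T_inv = np.linalg.inv(T)
    return [T_inv @ P for P in poses]
```

Because `T` multiplies from the left, relative poses between frames, `inv(P1) @ P2`, are unchanged and only the world frame moves. This is why the final estimates must be mapped back before they can be compared with the original ground truth.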

In the training stage, we build the space partition tree and train the neural routing functions to memorize the static points while rejecting dynamic ones.
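The sketch below shows roughly how a hierarchical space partition yields one classification target per tree level. It recursively splits scene points into equal-count chunks along the longest bounding-box axis. This splitting rule, the `depth` and `branching` parameters, and the function names are assumptions for illustration; the repository's partition scheme may differ.

```python
import numpy as np


def build_partition(points, depth, branching=2):
    """Hypothetical partition: recursive equal-count splits along the longest axis.

    Returns labels of shape (N, depth); labels[i, l] is the child index point i
    falls into at level l, i.e. the target of a level-l routing classifier.
    Nodes with fewer than `branching` points are not split further (label 0).
    """
    labels = np.zeros((len(points), depth), dtype=int)

    def split(idx, level):
        if level == depth or len(idx) < branching:
            return
        pts = points[idx]
        axis = int(np.argmax(pts.max(axis=0) - pts.min(axis=0)))  # longest extent
        order = idx[np.argsort(pts[:, axis], kind="stable")]
        for child, chunk in enumerate(np.array_split(order, branching)):
            labels[chunk, level] = child
            split(chunk, level + 1)

    split(np.arange(len(points)), 0)
    return labels


def leaf_ids(labels, branching=2):
    """Collapse per-level labels into a single leaf-region id per point."""
    depth = labels.shape[1]
    return labels @ (branching ** np.arange(depth - 1, -1, -1))
```

Each column of `labels` provides the targets for one level of the tree. This fits the README's description of training the tree level by level.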

Set dataset_folder and scene_id in config.py.

Run the script

python train.py --exp_name <checkpoint folder>

For example, `python train.py --exp_name rio10_scene01`.

It will build the tree, train it level by level, and save the model parameters in the folder ./experiment/<checkpoint folder>.

You can find the pre-trained checkpoints here.

Set dataset_folder and scene_id in config.py.

Run the script

python test.py --exp_name <checkpoint folder> --test_seq <sequence name>

For example, `python test.py --exp_name rio10_scene01 --test_seq seq01_02`.

It will infer pixel-wise scene coordinates, represented as Gaussian mixture models (GMMs), and save them in the folder ./gmm_prediction.
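Each pixel's prediction is a small Gaussian mixture over 3D world coordinates. The sketch below shows two generic operations on such a mixture. It evaluates the mixture's log-density at a point, and it takes the mean of the highest-weight component as a single correspondence. The array layout is an assumption: `weights` (K,), `means` (K, 3), `covs` (K, 3, 3). It is not the on-disk format of ./gmm_prediction.

```python
import numpy as np


def gmm_log_density(x, weights, means, covs):
    """log p(x) of a 3D Gaussian mixture, combined with log-sum-exp."""
    x = np.asarray(x, dtype=float)
    log_terms = []
    for w, mu, cov in zip(weights, np.asarray(means, float), np.asarray(covs, float)):
        diff = x - mu
        _, logdet = np.linalg.slogdet(cov)
        maha = diff @ np.linalg.solve(cov, diff)  # squared Mahalanobis distance
        log_terms.append(np.log(w) - 0.5 * (maha + logdet + 3 * np.log(2 * np.pi)))
    m = max(log_terms)
    return m + np.log(sum(np.exp(t - m) for t in log_terms))


def top_component_mean(weights, means):
    """Assumed point estimate: the mean of the highest-weight component."""
    return np.asarray(means, dtype=float)[int(np.argmax(weights))]
```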

The following two parts are both required to achieve the performance reported in the paper.

Run this part of the code in the Docker container `siyandong/neuralrouting:ransac_v0.2`. In the container, run the following commands:

cd /opt/relocalizer_codes/spaint

python run_ransac.py --data_folder_mask <dataset folder mask> --scene_id <scene id> --sequence_id <sequence id> --prediction_folder <gmm prediction folder>

For example, `python run_ransac.py --data_folder_mask /opt/dataset/scene{:02d}/seq{:02d}/seq{:02d}_{:02d} --scene_id 1 --sequence_id 2 --prediction_folder /opt/relocalizer_codes/NeuralRouting/gmm_prediction/rio10_scene01_seq01_02`.

It will output the estimated camera poses in the folder /opt/relocalizer_codes/spaint/build/bin/apps/relocgui/reloc_poses.
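The `--data_folder_mask` argument above looks like a Python format string. Reading the example together with the prediction folder name `rio10_scene01_seq01_02` and the test sequence name `seq01_02`, scene 1 / sequence 2 appears to resolve as shown below. The helper name is hypothetical. So is the assumption that the first three placeholders all take the scene ID, and the ICP script's two-placeholder mask is not covered.

```python
def sequence_folder(mask, scene_id, sequence_id):
    """Hypothetical helper: expand the four-placeholder RANSAC mask.

    Assumption: the first three placeholders take the scene ID and the last
    one the sequence ID, matching the layout sceneXX/seqXX/seqXX_YY.
    """
    return mask.format(scene_id, scene_id, scene_id, sequence_id)


mask = "/opt/dataset/scene{:02d}/seq{:02d}/seq{:02d}_{:02d}"
print(sequence_folder(mask, 1, 2))  # /opt/dataset/scene01/seq01/seq01_02
```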

Run this part of the code in a different Docker container, `siyandong/neuralrouting:ransac_icp_v0.0`. In the container, run the following commands:

cd /opt/relocalizer_codes/spaint

python run_ransac_icp.py --data_folder_mask <dataset folder mask> --scene_id <scene id> --sequence_id <sequence id> --prediction_folder <gmm prediction folder>

For example, `python run_ransac_icp.py --data_folder_mask /opt/dataset/scene{:02d}/seq{:02d} --scene_id 1 --sequence_id 2 --prediction_folder /opt/relocalizer_codes/NeuralRouting/gmm_prediction/rio10_scene01_seq01_02`.

It will output the estimated camera poses in the folder /opt/relocalizer_codes/spaint/build/bin/apps/spaintgui/reloc_poses.
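The ICP stage refines each RANSAC pose by aligning observed points to the scene. The sketch below is a generic point-to-point ICP that finds nearest neighbours by brute force and solves each iteration with Kabsch. It is an illustrative stand-in, not the spaint/InfiniTAM ICP that `run_ransac_icp.py` invokes. The function names and iteration count are assumptions.

```python
import numpy as np


def kabsch(src, dst):
    """Least-squares rigid transform (R, t) such that dst ≈ src @ R.T + t."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)
    U, _, Vt = np.linalg.svd(H)
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0  # avoid reflections
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    return R, dst_c - R @ src_c


def icp_refine(pose_init, cam_pts, scene_pts, iters=20):
    """Generic point-to-point ICP refining a 4x4 camera-to-world pose.

    Assumptions: cam_pts (N, 3) are points in the camera frame (e.g.
    back-projected depth) and scene_pts (M, 3) are scene points in the world
    frame. Brute-force nearest neighbours, so keep N and M small.
    """
    pose = pose_init.copy()
    for _ in range(iters):
        moved = cam_pts @ pose[:3, :3].T + pose[:3, 3]
        d2 = ((moved[:, None, :] - scene_pts[None, :, :]) ** 2).sum(axis=2)
        matches = scene_pts[np.argmin(d2, axis=1)]  # closest scene point per camera point
        R, t = kabsch(cam_pts, matches)  # re-solve the full pose from the matches
        pose = np.eye(4)
        pose[:3, :3], pose[:3, 3] = R, t
    return pose
```

ICP only converges to the right pose when the initial estimate is already close. That is why it is applied after RANSAC rather than on its own.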

To convert the estimated poses back to the original scene coordinate system of the RIO10 benchmark, run:

python random_transformation.py --pose_folder <camera pose estimation folder> --scene_id <scene id>

For example, `python random_transformation.py --pose_folder /opt/relocalizer_codes/spaint/build/bin/apps/spaintgui/reloc_poses/20210620T112628 --scene_id 1`.

We report our camera pose accuracy on the validation set of each scene of the RIO10 dataset. We also compute a weighted average pose accuracy, weighting each scene by its number of frames, following the pose accuracy calculation of the RIO10 online benchmark.

| (5cm, 5deg) accuracy (%) | scene01 | scene02 | scene03 | scene04 | scene05 | scene06 | scene07 | scene08 | scene09 | scene10 | average | weighted average |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| NeuralRouting (w/o ICP) | 58.62 | 18.70 | 17.02 | 10.59 | 27.14 | 62.06 | 55.54 | 11.55 | 7.63 | 1.69 | 27.05 | 21.59 |
| NeuralRouting (w/ ICP) | 66.80 | 23.40 | 19.48 | 17.81 | 42.08 | 69.15 | 57.79 | 15.24 | 7.52 | 0.62 | 31.99 | 24.05 |
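The table's metric can be sketched as follows. A frame counts as correct when its translation error is below 5 cm and its rotation error is below 5°. The weighted average then weights each scene's accuracy by its number of frames. The function names, 4x4 poses in metres, and strict `<` comparisons are our assumptions. The RIO10 online benchmark's own evaluation code may handle details, such as missing predictions, differently.

```python
import numpy as np


def pose_errors(T_est, T_gt):
    """Translation error (pose units, assumed metres) and rotation error (degrees)."""
    dt = np.linalg.norm(T_est[:3, 3] - T_gt[:3, 3])
    R_rel = T_est[:3, :3].T @ T_gt[:3, :3]
    cos = np.clip((np.trace(R_rel) - 1.0) / 2.0, -1.0, 1.0)  # guard rounding error
    return dt, np.degrees(np.arccos(cos))


def accuracy_5cm_5deg(est_poses, gt_poses, max_trans=0.05, max_rot_deg=5.0):
    """Percentage of frames within both thresholds (strict < is an assumption)."""
    correct = 0
    for est, gt in zip(est_poses, gt_poses):
        dt, dr = pose_errors(est, gt)
        if dt < max_trans and dr < max_rot_deg:
            correct += 1
    return 100.0 * correct / len(gt_poses)


def weighted_average(accuracies, frame_counts):
    """Per-scene accuracies weighted by each scene's number of frames."""
    return sum(a * n for a, n in zip(accuracies, frame_counts)) / sum(frame_counts)
```

The plain "average" column, by contrast, is the unweighted mean of the ten scene columns. For the row without ICP, the scene values sum to 270.54, giving 27.05.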

In this repository, we use parts of the implementations from spaint and InfiniTAM. We thank the respective authors for open-sourcing their code.