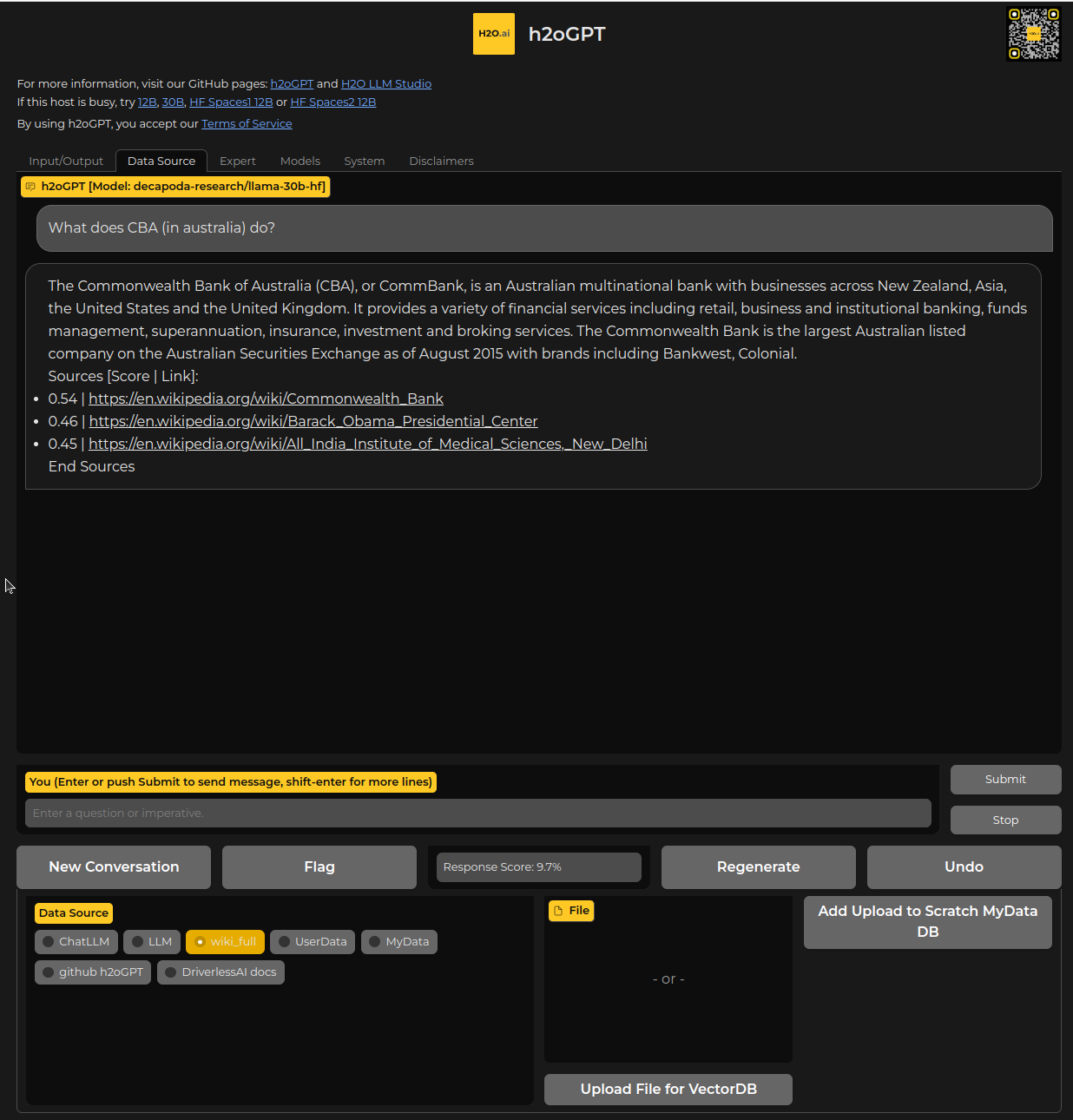

h2oGPT is a large language model (LLM) fine-tuning framework and chatbot UI with document(s) question-answer capabilities. Documents help ground LLMs against hallucinations by providing them with context relevant to the instruction. h2oGPT is a fully permissive, Apache V2 open-source project for 100% private and secure use of LLMs and document embeddings for document question-answer.

Welcome! Join us by opening an issue or a PR, and contribute to making the best fine-tuned LLMs, chatbot UI, and document question-answer framework!

Turn ★ into ⭐ (top-right corner) if you like the project!

Live hosted instances:

- h2oGPT 12B: 🤗 h2oGPT 12B #1, 🤗 h2oGPT 12B #2

- h2oGPT (research) 30B

- Latest LangChain-enabled h2oGPT (temporary link) 12B

For questions, discussion, or just hanging out, come and join our Discord!

GPU mode requires CUDA support via torch and transformers. A 6.9B (or 12B) model in 8-bit uses 7GB (or 13GB) of GPU memory. Loading in 4-bit instead of 8-bit reduces memory requirements further.
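As a rough sanity check on these figures, the weights alone take about parameters × bits / 8 bytes, so 6.9B parameters at 8-bit come to roughly 6.9 GB; the 7GB and 13GB figures above include some runtime overhead on top of that. The helper below is a hypothetical back-of-the-envelope sketch, not part of h2oGPT, and it deliberately ignores activations, the KV cache, and the CUDA context.

```python
def weight_memory_gb(n_params_billion: float, bits: int) -> float:
    """Approximate memory (GB) needed just to hold the model weights.

    Rule of thumb only: bytes = parameters * bits / 8. Activations, the
    KV cache, and CUDA/framework overhead are NOT included.
    """
    if bits not in (4, 8, 16, 32):
        raise ValueError(f"unsupported precision: {bits} bits")
    # 1e9 params * (bits / 8) bytes per param = GB (using 1 GB = 1e9 bytes)
    return n_params_billion * bits / 8


for size in (6.9, 12.0):
    for bits in (16, 8, 4):
        print(f"{size:>4}B @ {bits:>2}-bit ~ {weight_memory_gb(size, bits):.1f} GB")
```

Treat the result as a lower bound when choosing between --load_8bit=True and --load_4bit=True for a given GPU.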

CPU mode uses GPT4All and llama.cpp (e.g., the gpt4all-j model), requiring about 14GB of system RAM in typical use.

GPU and CPU modes have been tested on a variety of NVIDIA GPUs under Ubuntu 18-22, but any modern Linux variant should work. macOS support has been tested on a MacBook Pro running Monterey v12.3.1 in CPU mode.

- LangChain-equipped chatbot integration and streaming responses

- Persistent database using Chroma or in-memory with FAISS

- Links to original content (URLs) and scores that rank content against the query

- Private offline database of any documents (PDFs and more)

- Upload documents via the chatbot into a shared space, or allow only a scratch space

- Control data sources and the context provided to the LLM

- Efficient use of context using instruct-tuned LLMs (no need for many examples)

- API for client-server control

- CPU and GPU support for a variety of Hugging Face models, and CPU support using GPT4All and llama.cpp

- Linux, macOS, and Windows support

- Variety of models (h2oGPT, WizardLM, Vicuna, OpenAssistant, etc.) supported

- Fully commercially usable Apache V2 code, data, and models

- High-quality data cleaning of large open-source instruction datasets

- LoRA (low-rank adaptation) efficient 4-bit, 8-bit, and 16-bit fine-tuning and generation

- Large (up to 65B parameters) models built on commodity or enterprise GPUs (single or multi-node)

- Evaluate performance using RLHF-based reward models
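Conceptually, ranking content against a query means scoring each stored chunk's embedding against the query embedding and returning the best matches. The sketch below illustrates that idea with cosine similarity over hand-made toy vectors; `cosine`, `top_k`, and the vectors are hypothetical, and h2oGPT itself delegates embedding and search to Chroma or FAISS rather than to code like this.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query: list[float], chunks: dict[str, list[float]], k: int = 2) -> list[tuple[str, float]]:
    """Return the k chunk ids with the highest similarity score, best first."""
    scored = [(doc_id, cosine(query, vec)) for doc_id, vec in chunks.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


# Toy 3-dimensional "embeddings"; real ones come from an embedding model.
chunks = {
    "manual.pdf#p1": [0.9, 0.1, 0.0],
    "faq.txt#q3": [0.2, 0.8, 0.1],
    "notes.md#intro": [0.7, 0.3, 0.2],
}
for doc_id, score in top_k([1.0, 0.2, 0.0], chunks):
    print(f"{score:.3f}  {doc_id}")
```

The score shown next to each source is what lets a user judge how relevant a retrieved chunk is to the question.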


All open-source datasets and models are posted on 🤗 H2O.ai's Hugging Face page.

Also check out H2O LLM Studio for our no-code LLM fine-tuning framework!

Roadmap:

- Integration of code and resulting LLMs with downstream applications and low/no-code platforms

- Complement h2oGPT chatbot with search and other APIs

- High-performance distributed training of larger models on trillions of tokens

- Enhance the model's code completion, reasoning, and mathematical capabilities, ensure factual correctness, minimize hallucinations, and avoid repetitive output

- Add other tools like search

- Add agents for SQL and CSV question/answer

For help installing a Python 3.10 environment, see Install Python 3.10 Environment.

For help installing the CUDA toolkit, see CUDA Toolkit.

git clone https://github.com/h2oai/h2ogpt.git

cd h2ogpt

pip install -r requirements.txt

python generate.py --base_model=h2oai/h2ogpt-oig-oasst1-512-6_9b --load_8bit=True

Then point your browser at http://0.0.0.0:7860 (Linux) or http://localhost:7860 (Windows/Mac), or at the public live URL printed by the server (disable the shared link with --share=False). For 4-bit or 8-bit support, older GPUs may require an older version of bitsandbytes, installed as:

pip uninstall bitsandbytes -y ; pip install bitsandbytes==0.38.1
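Loading a model can take a while, so a script may want to wait until the UI answers before opening it. A minimal standard-library sketch, assuming the default port 7860 mentioned above; `wait_for_ui` is a hypothetical helper, not part of h2oGPT.

```python
import http.client
import time
import urllib.request


def wait_for_ui(url: str = "http://localhost:7860", timeout_s: float = 300.0, poll_s: float = 2.0) -> bool:
    """Poll url until it returns a successful response or timeout_s elapses."""
    deadline = time.monotonic() + timeout_s
    while time.monotonic() < deadline:
        try:
            # urlopen raises on connection errors and on HTTP 4xx/5xx responses
            with urllib.request.urlopen(url, timeout=poll_s):
                return True
        except (OSError, http.client.HTTPException):
            # URLError, HTTPError and socket timeouts are OSError subclasses;
            # HTTPException covers malformed responses while the server starts
            time.sleep(poll_s)
    return False
```

For example, call `wait_for_ui()` after starting generate.py in the background and open the browser only once it returns True.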

To quickly use a private document collection for Q/A, place documents (PDFs, text, etc.) into a folder called user_path and run:

pip install -r requirements_optional_langchain.txt

python generate.py --base_model=h2oai/h2ogpt-oig-oasst1-512-6_9b --load_8bit=True --langchain_mode=UserData --user_path=user_path

For more ways to ingest documents from the CLI and to control ingestion, see the LangChain Readme.
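If you script this step, a small helper can copy files into user_path and assemble the same command line. This is a hypothetical convenience sketch: `stage_documents` and `generate_cmd` are not part of h2oGPT, the helper only reuses the --name=value flags shown above, and it uses the current interpreter (`sys.executable`) in place of `python`.

```python
import shutil
import sys
from pathlib import Path


def stage_documents(sources: list[str], user_path: str = "user_path") -> Path:
    """Copy local files into the folder passed to generate.py as --user_path."""
    dest = Path(user_path)
    dest.mkdir(parents=True, exist_ok=True)
    for src in sources:
        shutil.copy2(src, dest / Path(src).name)
    return dest


def generate_cmd(base_model: str, **flags: object) -> list[str]:
    """Assemble a generate.py command line using the --name=value flag style."""
    cmd = [sys.executable, "generate.py", f"--base_model={base_model}"]
    cmd += [f"--{name}={value}" for name, value in flags.items()]
    return cmd


# The same invocation as the command above, as an argv list.
cmd = generate_cmd(
    "h2oai/h2ogpt-oig-oasst1-512-6_9b",
    load_8bit=True,
    langchain_mode="UserData",
    user_path="user_path",
)
print(" ".join(cmd))
```

To launch, call `stage_documents([...])` with your files and then pass the list to `subprocess.run(cmd, check=True)` from inside the h2ogpt checkout.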

For 4-bit support, the latest dev versions of transformers, accelerate, and peft are required, which can be installed by running:

pip uninstall peft transformers accelerate -y

pip install -r requirements_optional_4bit.txt

The uninstall step is needed in case, e.g., peft was previously installed from GitHub. Then, when running generate.py, pass --load_4bit=True, which is only supported for certain architectures such as GPT-NeoX-20B, GPT-J, and LLaMa.
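Before passing --load_4bit=True, it can help to confirm which versions actually ended up installed. A minimal standard-library sketch; `installed_versions` is a hypothetical helper that only reports what is installed and does not know which versions h2oGPT requires, so compare its output against requirements_optional_4bit.txt yourself.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def installed_versions(packages: list[str]) -> dict[str, Optional[str]]:
    """Map each distribution name to its installed version, or None if missing."""
    found: dict[str, Optional[str]] = {}
    for name in packages:
        try:
            found[name] = version(name)
        except PackageNotFoundError:
            found[name] = None
    return found


for pkg, ver in installed_versions(["transformers", "accelerate", "peft", "bitsandbytes"]).items():
    print(f"{pkg:<13} {ver or 'not installed'}")
```

Development builds typically carry a suffix such as `.dev0` in the reported version string.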

Any other instruct-tuned base models can be used, including non-h2oGPT ones. Larger models require more GPU memory.

CPU support is enabled by installing two optional requirements files. GPU support remains available if GPUs are present.

- Install base, LangChain, and GPT4All dependencies:

git clone https://github.com/h2oai/h2ogpt.git

cd h2ogpt

pip install -r requirements.txt -c req_constraints.txt

pip install -r requirements_optional_langchain.txt -c req_constraints.txt

pip install -r requirements_optional_gpt4all.txt -c req_constraints.txt

If you encounter any issues, see GPT4All for detailed installation instructions.

One can run make req_constraints.txt to ensure that the constraints file is consistent with requirements.txt.

- Change the model name in .env_gpt4all if desired.

# model path and model_kwargs

model_path_gptj=ggml-gpt4all-j-v1.3-groovy.bin
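The file uses simple KEY=value lines with # comments. The hypothetical parser below only illustrates that format, for example to check which model file a given .env_gpt4all points at; h2oGPT's own loading code may handle quoting and other details differently.

```python
def read_env_file(text: str) -> dict[str, str]:
    """Parse KEY=value lines, skipping blank lines and # comments."""
    settings: dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if sep:  # ignore lines that contain no '='
            settings[key.strip()] = value.strip()
    return settings


example = """
# model path and model_kwargs
model_path_gptj=ggml-gpt4all-j-v1.3-groovy.bin
"""
print(read_env_file(example)["model_path_gptj"])
```

To inspect a real file, pass it the contents of `Path(".env_gpt4all").read_text()` (with `from pathlib import Path`).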

You can choose a different model than our default by selecting a GPT4All-J compatible model in the GPT4All Model explorer. There is no need to download it yourself: the gpt4all package downloads the model at runtime and places it in .cache, as Hugging Face does.

See llama.cpp for instructions on obtaining a model for the --base_model=llama case.

- Run generate.py

For LangChain support using documents in user_path folder, run h2oGPT like:

python generate.py --base_model=gptj --score_model=None --langchain_mode='UserData' --user_path=user_path

See the LangChain Readme for more details. For no LangChain support (the LangChain package is still used as a model wrapper), run:

python generate.py --base_model=gptj --score_model=None

For macOS, all instructions are the same as for the GPU or CPU installation, except that Rust must be installed first:

curl --proto '=https' --tlsv1.2 -sSf https://sh.rustup.rs | sh

Then open a new shell and test: rustc --version

When running a Mac with Intel hardware (not M1), you may run into _clang: error: the clang compiler does not support '-march=native'_ during pip install.

If so, set ARCHFLAGS during pip install, e.g.:

ARCHFLAGS="-arch x86_64" pip3 install -r requirements.txt
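When pip is driven from a script, the same workaround can be applied by setting ARCHFLAGS in the child process's environment. A sketch assuming `platform.machine()` reports `x86_64` on Intel Macs; `pip_env` and `pip_install_requirements` are hypothetical helpers, and `pip3` is invoked as `python -m pip`.

```python
import os
import platform
import subprocess
import sys
from typing import Optional


def pip_env(system: Optional[str] = None, machine: Optional[str] = None) -> dict[str, str]:
    """Copy the current environment, adding ARCHFLAGS only on Intel (x86_64) Macs."""
    system = system or platform.system()
    machine = machine or platform.machine()
    env = dict(os.environ)
    if system == "Darwin" and machine == "x86_64":
        env["ARCHFLAGS"] = "-arch x86_64"
    return env


def pip_install_requirements(path: str = "requirements.txt") -> None:
    """Run `pip install -r <path>` with the adjusted environment."""
    subprocess.run([sys.executable, "-m", "pip", "install", "-r", path], env=pip_env(), check=True)
```

Other platforms get the unchanged environment, so the same script can be used everywhere.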

If you encounter an error while building a wheel during the pip install process, you may need to install a C++ compiler on your computer.

For Windows, all instructions are the same as for the GPU or CPU installation, except that a C++ compiler is also needed, installed as follows:

- Install Visual Studio 2022.

- Make sure the following components are selected:

  - Universal Windows Platform development

  - C++ CMake tools for Windows

- Download the MinGW installer from the MinGW website.

- Run the installer and select the gcc component.

For GPU support of 4-bit and 8-bit, run:

pip install https://github.com/jllllll/bitsandbytes-windows-webui/raw/main/bitsandbytes-0.39.0-py3-none-any.whl

If you encounter issues on older GPUs, an older bitsandbytes may be required, installed as:

pip install https://github.com/jllllll/bitsandbytes-windows-webui/raw/main/bitsandbytes-0.38.1-py3-none-any.whl

The CLI can be used instead of the Gradio UI. For example, with some base model, run:

python generate.py --base_model=gptj --cli=True

For LangChain, run:

python make_db.py --user_path=user_path --collection_name=UserData

python generate.py --base_model=gptj --cli=True --langchain_mode=UserData

with documents in the user_path folder, or directly run:

python generate.py --base_model=gptj --cli=True --langchain_mode=UserData --user_path=user_path

which builds the database the first time. Any other model can also be used, e.g.:

python generate.py --base_model=h2oai/h2ogpt-oig-oasst1-512-6_9b --cli=True

- To create a development environment for training and generation, follow the installation instructions.

- To fine-tune any LLM models on your data, follow the fine-tuning instructions.

- To create a container for deployment, follow the Docker instructions.

For help installing flash attention support, see Flash Attention

You can also use Docker for inference.

- Some training code was based upon the March 24 version of Alpaca-LoRA.

- Used high-quality data created by OpenAssistant.

- Used base models by EleutherAI.

- Used OIG data created by LAION.

Our Makers at H2O.ai have built several world-class Machine Learning, Deep Learning and AI platforms:

- #1 open-source machine learning platform for the enterprise H2O-3

- The world's best AutoML (Automatic Machine Learning) with H2O Driverless AI

- No-Code Deep Learning with H2O Hydrogen Torch

- Document Processing with Deep Learning in Document AI

We also built platforms for deployment and monitoring, and for data wrangling and governance:

- H2O MLOps to deploy and monitor models at scale

- H2O Feature Store in collaboration with AT&T

- Open-source Low-Code AI App Development Frameworks Wave and Nitro

- Open-source Python datatable (the engine for H2O Driverless AI feature engineering)

Many of our customers are creating models and deploying them enterprise-wide and at scale in the H2O AI Cloud:

- Multi-Cloud or on Premises

- Managed Cloud (SaaS)

- Hybrid Cloud

- AI Appstore

We are proud to have over 25 (of the world's 280) Kaggle Grandmasters call H2O home, including three Kaggle Grandmasters who have made it to world #1.

Please read this disclaimer carefully before using the large language model provided in this repository. Your use of the model signifies your agreement to the following terms and conditions.

- Biases and Offensiveness: The large language model is trained on a diverse range of internet text data, which may contain biased, racist, offensive, or otherwise inappropriate content. By using this model, you acknowledge and accept that the generated content may sometimes exhibit biases or produce content that is offensive or inappropriate. The developers of this repository do not endorse, support, or promote any such content or viewpoints.

- Limitations: The large language model is an AI-based tool and not a human. It may produce incorrect, nonsensical, or irrelevant responses. It is the user's responsibility to critically evaluate the generated content and use it at their discretion.

- Use at Your Own Risk: Users of this large language model must assume full responsibility for any consequences that may arise from their use of the tool. The developers and contributors of this repository shall not be held liable for any damages, losses, or harm resulting from the use or misuse of the provided model.

- Ethical Considerations: Users are encouraged to use the large language model responsibly and ethically. By using this model, you agree not to use it for purposes that promote hate speech, discrimination, harassment, or any form of illegal or harmful activities.

- Reporting Issues: If you encounter any biased, offensive, or otherwise inappropriate content generated by the large language model, please report it to the repository maintainers through the provided channels. Your feedback will help improve the model and mitigate potential issues.

- Changes to this Disclaimer: The developers of this repository reserve the right to modify or update this disclaimer at any time without prior notice. It is the user's responsibility to periodically review the disclaimer to stay informed about any changes.

By using the large language model provided in this repository, you agree to accept and comply with the terms and conditions outlined in this disclaimer. If you do not agree with any part of this disclaimer, you should refrain from using the model and any content generated by it.