IBM Developer - Data Asset Exchange

Curated free and open datasets under open data licenses for enterprise data science.

Link to download: https://developer.ibm.com/exchanges/data/all/fashion-mnist/

The model is developed using the TensorFlow framework.

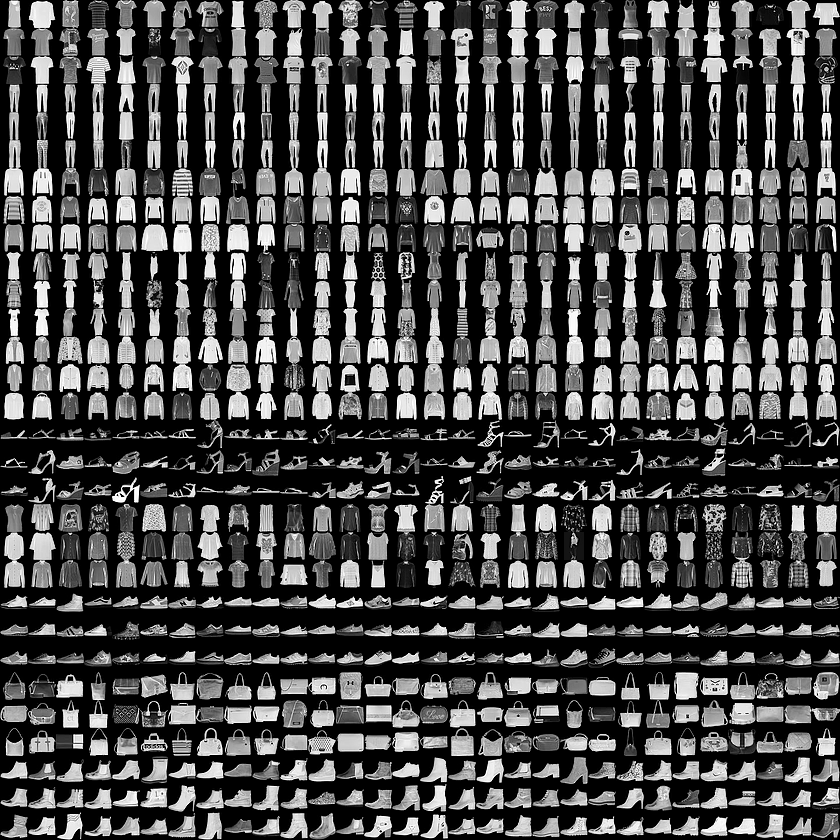

Each training and test example is assigned to one of the following labels:

| Label | Description |
|---|---|
| 0 | T-shirt/top |
| 1 | Trouser |
| 2 | Pullover |
| 3 | Dress |
| 4 | Coat |
| 5 | Sandal |
| 6 | Shirt |
| 7 | Sneaker |
| 8 | Bag |
| 9 | Ankle boot |
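The model returns a numeric label, so a small lookup can make the output easier to read. The sketch below copies the class names from the table above; `FASHION_MNIST_LABELS` and `label_name` are hypothetical names used only for illustration and are not part of the template.

```python
# Class names indexed by label, copied from the label table.
FASHION_MNIST_LABELS = [
    "T-shirt/top", "Trouser", "Pullover", "Dress", "Coat",
    "Sandal", "Shirt", "Sneaker", "Bag", "Ankle boot",
]


def label_name(label: int) -> str:
    """Return the description for a label in the range 0-9 (hypothetical helper)."""
    if not 0 <= label < len(FASHION_MNIST_LABELS):
        raise ValueError(f"label must be between 0 and 9, got {label}")
    return FASHION_MNIST_LABELS[label]


print(label_name(9))
```

A helper like this could be called from the post-processing code if the API should return a description alongside the numeric prediction.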

Prerequisites:

- Docker
- Python IDE or code editor

To wrap a model, you need:

- Pre-trained model weights stored in a downloadable location
- List of required Python packages
- Input pre-processing code
- Prediction/inference code
- Output variables
- Output post-processing code

Steps:

- Fork the template and clone the repository
- Update the Dockerfile
- Update the package requirements
- Update the API and model metadata
- Update the scripts
- Build the model Docker image
- Run the model server

- Log in to GitHub and go to MAX Skeleton.
- Click `Use this template` and provide a name for the repo.
- Clone the newly created repository using the command below:

      $ git clone https://github.com/......

Open the `Dockerfile` and update the following:

- `ARG model_bucket=` with the link to the public storage location from which the model file can be downloaded
- `ARG model_file=` with the model file name

For testing purposes, use the following values:

    model_bucket = https://github.com/SSaishruthi/max_mnist/raw/master/samples
    model_file = fashion_mnist.h5
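Before building the image, it can help to see the URL these two values combine into. The sketch below assumes the Dockerfile downloads the model from `model_bucket` and `model_file` joined by a slash; `model_url` is a hypothetical helper, not part of the template.

```python
def model_url(model_bucket: str, model_file: str) -> str:
    """Join bucket and file name with exactly one slash (hypothetical helper)."""
    return model_bucket.rstrip("/") + "/" + model_file.lstrip("/")


url = model_url(
    "https://github.com/SSaishruthi/max_mnist/raw/master/samples",
    "fashion_mnist.h5",
)
print(url)
```

Opening the printed URL in a browser is a quick way to confirm the file is publicly downloadable before running the build.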

Add the Python packages required for running the model prediction to `requirements.txt`.

The following packages are required for this model:

- numpy==1.14.1

- Pillow==5.4.1

- h5py==2.9.0

- tensorflow==1.14

- In `config.py`, update the API metadata:
  - `API_TITLE`
  - `API_DESC`
  - `API_VERSION`
- Set `MODEL_NAME = 'fashion_mnist.h5'`

  NOTE: Model files are always downloaded to the `assets` folder inside the Docker image.

- In `code/model.py`, fill in the `MODEL_META_DATA`:
  - Model id
  - Model name
  - Description of the model
  - Model type based on what the model does (e.g. Digit recognition)
  - Source to which the model belongs
  - Model license
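As a rough illustration of the metadata described above, the sketch below fills in both files with placeholder values. All values are made up for this example, and the key names in `MODEL_META_DATA` are an assumption that mirrors the six fields listed; check the names used in your copy of the template.

```python
# config.py (placeholder values; replace them with your own)
API_TITLE = "MAX Fashion MNIST"
API_DESC = "Classify clothing images into one of the 10 Fashion-MNIST classes"
API_VERSION = "0.1"
MODEL_NAME = "fashion_mnist.h5"

# code/model.py (assumed key names, placeholder values)
MODEL_META_DATA = {
    "id": "max-fashion-mnist",
    "name": "MAX Fashion MNIST",
    "description": "Keras classifier trained on Fashion-MNIST",
    "type": "Image classification",
    "source": "https://example.com/your-model-source",
    "license": "your-model-license",
}

# Simple sanity check: every metadata field should be filled in.
missing = [key for key, value in MODEL_META_DATA.items() if not value]
print("missing fields:", missing)
```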

All you need to start wrapping your model is pre-processing, prediction and post-processing code.

- In `code/model.py`, load the model in the `__init__()` method. Here, the saved `.h5` model can be loaded using the code below:

      # At the top of code/model.py
      import tensorflow as tf
      from tensorflow.keras.backend import set_session

      # Inside __init__(): keep the session and graph so _predict can reuse them
      global sess
      global graph
      sess = tf.Session()
      graph = tf.get_default_graph()
      set_session(sess)
      self.model = tf.keras.models.load_model(path)

- In `code/model.py`, the pre-processing required for the input goes into the `_pre_process` function. Here, the input image needs to be read and converted into an array of acceptable shape.

      # At the top of code/model.py
      import io
      import numpy as np
      from PIL import Image

      # Inside _pre_process(): open the input image
      img = Image.open(io.BytesIO(inp))
      print('reading image..', img.size)
      # Convert the PIL image instance into a numpy array and
      # add a leading batch dimension.
      image = tf.keras.preprocessing.image.img_to_array(img)
      print('image array shape..', image.shape)
      image = np.expand_dims(image, axis=0)
      print('image array shape..', image.shape)
      return image

  NOTE: Pre-processing is followed by the prediction function, which accepts only one input, so create a dictionary to hold the returned results if needed. In this case, there is only one input, so no dictionary is required.
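To make the shape change in the pre-processing step concrete without TensorFlow installed, here is a pure-Python sketch. It assumes a 28x28 grayscale input (the Fashion-MNIST image size) and mimics the effect of `img_to_array` followed by `np.expand_dims(..., axis=0)`: a trailing channel axis and a leading batch axis. `to_batch` and `shape` are hypothetical helpers used only for illustration.

```python
def to_batch(pixels):
    """Turn a 2-D list (height x width) into a nested list of shape
    (1, height, width, 1): batch axis first, channel axis last."""
    with_channel = [[[float(p)] for p in row] for row in pixels]
    return [with_channel]


def shape(nested):
    """Return the dimensions of a regular nested list as a tuple."""
    dims = []
    while isinstance(nested, list):
        dims.append(len(nested))
        nested = nested[0]
    return tuple(dims)


image = [[0] * 28 for _ in range(28)]  # blank 28x28 grayscale image
batch = to_batch(image)
print(shape(image), "->", shape(batch))
```

If the input were an RGB image, the channel axis would have length 3 instead of 1, which a model trained on grayscale input would likely reject.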

- The predicted class label and its probability are the expected outputs. Add these two fields to `label_prediction` in `api/predict.py`:

      label_prediction = MAX_API.model('LabelPrediction', {
          'prediction': fields.Integer(required=True),
          'probability': fields.Float(required=True)
      })

  NOTE: These fields can vary depending on the model.

- Place the prediction code in the `_predict` method in `code/model.py`. The previous step defined two outputs; now these two results need to be extracted from the model.

  NOTE: Prediction is followed by the post-processing function, which accepts only one input, so create a dictionary to hold the results if the function returns multiple outputs.

      with graph.as_default():
          set_session(sess)
          predict_result = self.model.predict(x)
          print(predict_result)
          return predict_result

- Post-processing goes in the `_post_process` method in `code/model.py`. The result from the previous step is the input to this step. Here, it contains the prediction probabilities for all 10 classes (labels 0 to 9).

  The output response has two fields, `status` and `predictions`, as defined in `api/predict.py`:

      predict_response = MAX_API.model('ModelPredictResponse', {
          'status': fields.String(required=True, description='Response status message'),
          'predictions': fields.List(fields.Nested(label_prediction), description='Predicted labels and probabilities')
      })

  `predictions` is a list that holds the model results. Create a dictionary inside a list with the key names used in `label_prediction` (defined earlier in `api/predict.py`) and fill in the model results accordingly:

      # Extract the prediction probability using `amax` and
      # the predicted class label using `argmax`
      return [{'probability': np.amax(result),
               'prediction': np.argmax(result)}]

- Assign the result from post-processing to the appropriate response field in `api/predict.py`:

      # Assign result
      result['predictions'] = preds

- Add test images to `samples/`.
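The post-processing and response-assignment steps can be tried out without a trained model. The sketch below is a pure-Python stand-in: `max` over the indices plays the role of `np.argmax`, and the selected value plays the role of `np.amax` (the two agree for a batch of one image). The probabilities are made up, and the initial `'error'` status is an assumption about how the handler in `api/predict.py` starts out.

```python
def post_process(result):
    """Pick the most likely class from a (1, n_classes) batch of probabilities."""
    probabilities = result[0]
    best = max(range(len(probabilities)), key=probabilities.__getitem__)
    return [{"probability": probabilities[best], "prediction": best}]


# Hypothetical model output for one image: label 9 is the most likely class.
predict_result = [[0.01, 0.01, 0.02, 0.01, 0.05, 0.05, 0.02, 0.1, 0.03, 0.7]]

response = {"status": "error"}  # pessimistic default until prediction succeeds
response["predictions"] = post_process(predict_result)
response["status"] = "ok"
print(response)
```

The keys in each prediction dictionary match the `label_prediction` fields, so the response can be marshalled by the `predict_response` model as is.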

To build the Docker image locally, run:

    $ docker build -t max-mnist .

If you want to print debugging messages, make sure to set `DEBUG=True` in `config.py`.

To run the Docker image, which automatically starts the model serving API, run:

    $ docker run -it -p 5000:5000 max-mnist

- Add a few integration tests using pytest in `tests/test.py` to check that your model works.

  Example:

  - Update the model endpoint and the sample input file path:

        model_endpoint = 'http://localhost:5000/model/predict'
        file_path = 'samples/1.jpeg'

  - Check that the prediction is `0`:

        assert response['predictions'][0]['prediction'] == 0

- To enable Travis CI testing, uncomment the docker commands and the pytest command in `.travis.yml`.