By finishing the "LLM Twin: Building Your Production-Ready AI Replica" free course, you will learn how to design, train, and deploy a production-ready LLM twin of yourself powered by LLMs, vector DBs, and LLMOps good practices.

Why should you care? 🫵

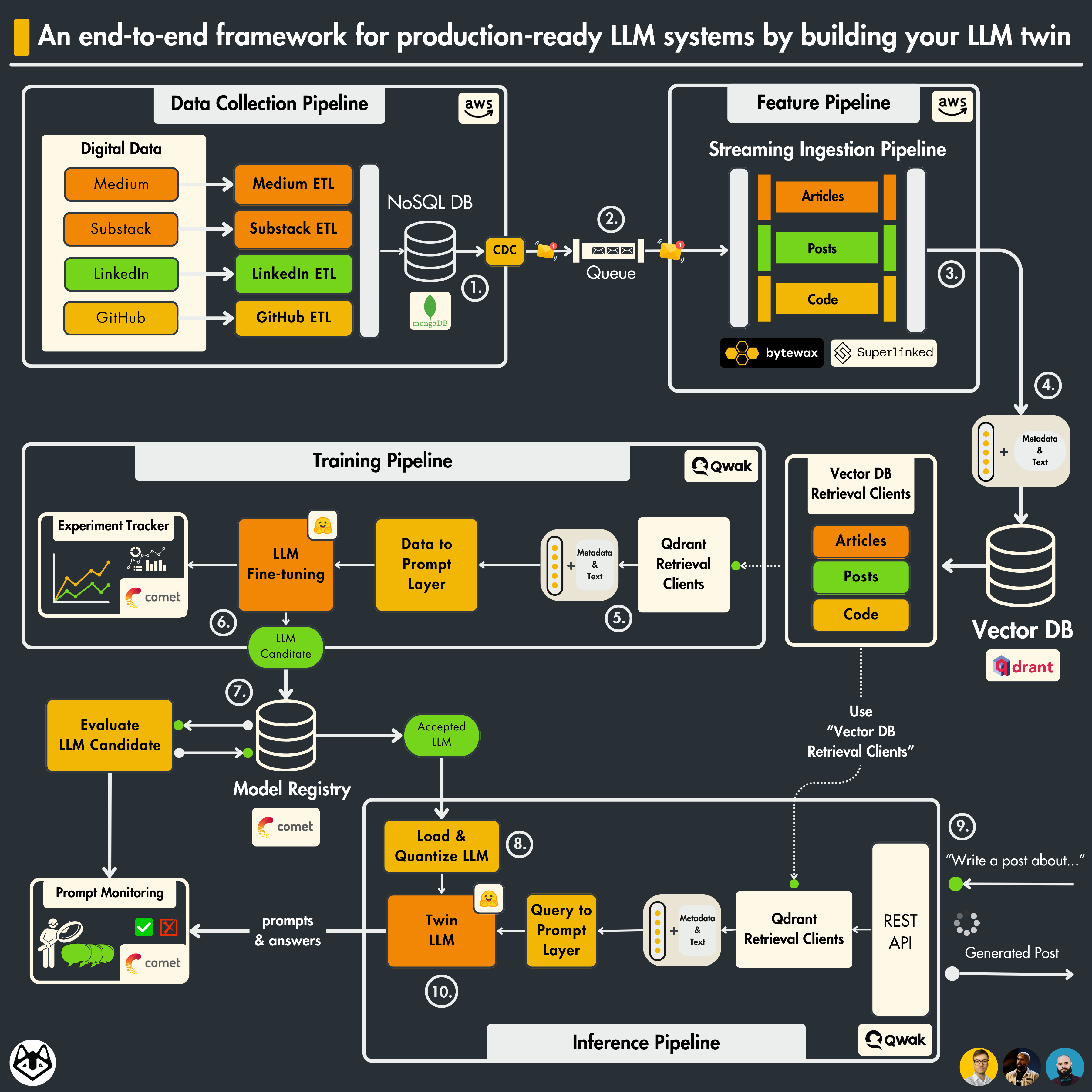

→ No more isolated scripts or Notebooks! Learn production ML by building and deploying an end-to-end production-grade LLM system.

You will learn how to architect and build a real-world LLM system from start to finish - from data collection to deployment.

You will also learn to leverage MLOps best practices, such as experiment trackers, model registries, prompt monitoring, and versioning.

The end goal? Build and deploy your own LLM twin.

What is an LLM Twin? It is an AI character that learns to write like a specific person by incorporating their style and personality into an LLM.

- Crawl your digital data from various social media platforms.

- Clean, normalize and load the data to a Mongo NoSQL DB through a series of ETL pipelines.

- Send database changes to a RabbitMQ queue using the CDC pattern.

- ☁️ Deployed on AWS.

- Consume messages from a queue through a Bytewax streaming pipeline.

- Every message will be cleaned, chunked, embedded (using Superlinked), and loaded into a Qdrant vector DB in real time.

- ☁️ Deployed on AWS.

- Create a custom dataset based on your digital data.

- Fine-tune an LLM using QLoRA.

- Use Comet ML's experiment tracker to monitor the experiments.

- Evaluate and save the best model to Comet's model registry.

- ☁️ Deployed on Qwak.

- Load and quantize the fine-tuned LLM from Comet's model registry.

- Deploy it as a REST API.

- Enhance the prompts using RAG.

- Generate content using your LLM twin.

- Monitor the LLM using Comet's prompt monitoring dashboard.

- ☁️ Deployed on Qwak.
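To make the "cleaned, chunked" part of the streaming step above more concrete, here is a minimal, standard-library-only sketch. It is not the course's implementation: the function names, the message shape (`id`, `content`), and the word-based chunking with overlap are assumptions chosen for illustration. Embedding (Superlinked), the Bytewax dataflow, and loading into Qdrant are deliberately left out.

```python
import re


def clean_text(text: str) -> str:
    """Collapse whitespace runs into single spaces and trim both ends."""
    return re.sub(r"\s+", " ", text).strip()


def chunk_text(text: str, chunk_size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into windows of `chunk_size` words that share `overlap` words."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this window already reached the end of the text
    return chunks


def process_message(message: dict, chunk_size: int = 50, overlap: int = 10) -> list[dict]:
    """Turn one queue message (hypothetical shape) into chunk records.

    Each record would next be embedded and written to the vector DB;
    those steps are outside the scope of this sketch.
    """
    cleaned = clean_text(message["content"])
    return [
        {"source_id": message["id"], "chunk_index": i, "text": chunk}
        for i, chunk in enumerate(chunk_text(cleaned, chunk_size, overlap))
    ]


if __name__ == "__main__":
    example = {"id": "post-1", "content": "  one two\n three four   five six seven eight "}
    for record in process_message(example, chunk_size=5, overlap=2):
        print(record)
```

The overlap keeps some shared context between neighboring chunks, which is a common choice when chunks are later retrieved independently for RAG. The sizes used here are arbitrary.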

Alongside the 4 microservices, you will learn to integrate 3 serverless tools: Comet, Qdrant, and Qwak.

Audience: MLE, DE, DS, or SWE who want to learn to engineer production-ready LLM systems using LLMOps good principles.

Level: intermediate

Prerequisites: basic knowledge of Python, ML, and the cloud

The course contains 11 hands-on written lessons and the open-source code you can access on GitHub.

You can read everything and try out the code at your own pace.

The articles and code are completely free. They will always remain free.

If you plan to run the code while reading, be aware that we use several cloud tools that might generate additional costs.

The cloud computing platforms (AWS, Qwak) have pay-as-you-go pricing plans. Qwak offers a few hours of free computing, and we did our best to keep costs to a minimum.

For the other serverless tools (Qdrant, Comet), we will stick to their free tiers.

Important: The course is a work in progress. We plan to release a new lesson every 2 weeks.

To understand the entire code step-by-step, check out our articles ↓

The course is split into 11 lessons. Every Medium article will be its own lesson.

- An End-to-End Framework for Production-Ready LLM Systems by Building Your LLM Twin

- The Importance of Data Pipelines in the Era of Generative AI

- CDC [Module 1] …WIP

- Streaming ingestion pipeline [Module 2] …WIP

- Vector DB retrieval clients [Module 2] …WIP

- Training data preparation [Module 3] …WIP

- Fine-tuning LLM [Module 3] …WIP

- LLM evaluation [Module 4] …WIP

- Quantization [Module 5] …WIP

- Build the digital twin inference pipeline [Module 6] …WIP

- Deploy the digital twin as a REST API [Module 6] …WIP

The course is created under the Decoding ML umbrella by:

- Paul Iusztin, Senior ML & MLOps Engineer

- Alexandru Vesa, Senior AI Engineer

- Răzvanț Alexandru, Senior ML Engineer

This course is an open-source project released under the MIT license. Thus, as long as you distribute our LICENSE and acknowledge our work, you can safely clone or fork this project and use it as a source of inspiration for whatever you want (e.g., university projects, college degree projects, personal projects, etc.).

A big "Thank you 🙏" to all our contributors! This course is possible only because of their efforts.