Benchmark for federated noisy label learning.


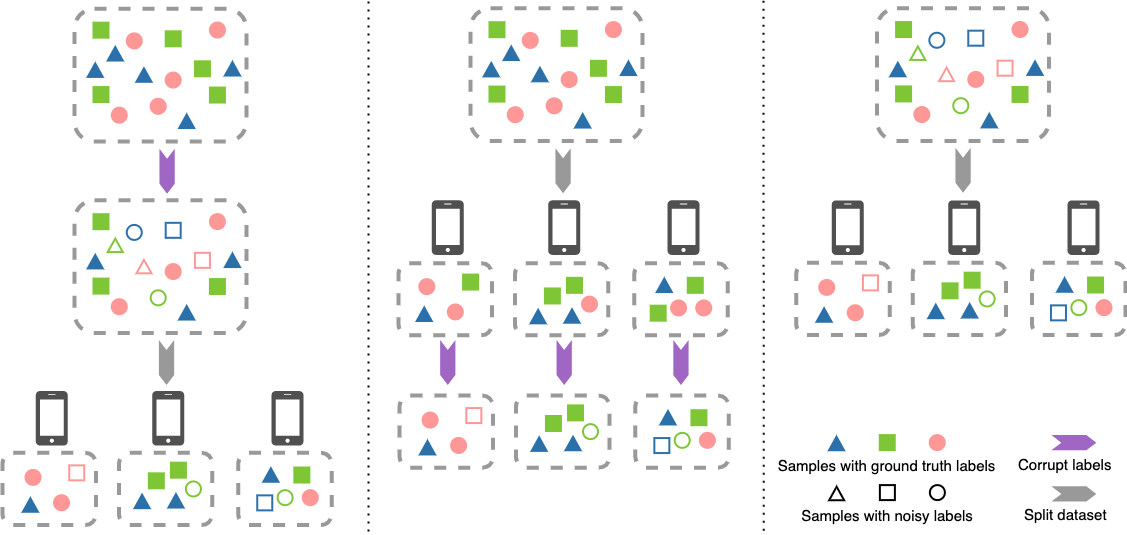

Federated learning has gained popularity for distributed learning without aggregating sensitive data from clients. Meanwhile, the distributed and isolated nature of the data makes data quality harder to control, leaving federated learning more vulnerable to noisy labels. Many efforts exist to defend against the negative impacts of noisy labels in centralized or federated settings. However, a benchmark that comprehensively considers the impact of noisy labels across a wide variety of typical FL settings is still lacking.

In this work, we present the first standardized benchmark that helps researchers fully explore potential federated noisy settings. We also conduct comprehensive experiments to explore the characteristics of these data settings and to uncover challenging scenarios in federated noisy label learning, which may guide future method development. We highlight the 20 basic settings for more than 5 datasets proposed in our benchmark, along with a standardized simulation pipeline for federated noisy label learning. We hope this benchmark can facilitate idea verification in federated learning with noisy labels.

PyTorch installation via conda:

    $ conda install pytorch==1.8.0 torchvision==0.9.0 torchaudio==0.8.0 cudatoolkit=11.1 -c pytorch -c nvidia -y

Extra dependencies:

    $ pip install -r requirements.txt

Currently supported datasets:

| Noise Scene | Dataset | #Train | #Validation | #Test | #Class | Image Size | Noise Ratio (%) |
|---|---|---|---|---|---|---|---|
| Globalized & Localized | MNIST | 60K | N/A | 10K | 10 | 28×28 | N/A |
| Globalized & Localized | SVHN | 73K | N/A | 26K | 10 | 32×32×3 | N/A |
| Globalized & Localized | CIFAR-10 | 50K | N/A | 10K | 10 | 32×32×3 | N/A |
| Globalized & Localized | CIFAR-100 | 50K | N/A | 10K | 100 | 32×32×3 | N/A |
| Real-world | Clothing1M | 1M | 14K | 10K | 14 | 224×224×3 | ≈39.46 |
| Real-world | WebVision | 2.4M | 50K | 50K | 1000 | 256×256×3 | ≈20.00 |

Federated noise scenes provided in

Raw datasets should be downloaded into a local folder before the data-build process. The folder structure can be:

    .
    ├── FedNoisy
    │   ├── LICENSE
    │   ├── README.md
    │   ├── build_dataset_fed.py
    │   ├── fednoisy
    │   ├── imgs
    │   ├── requirements.txt
    │   └── scripts
    ├── rawdata
    │   ├── cifar10
    │   │   ├── cifar-10-batches-py
    │   │   └── cifar-10-python.tar.gz
    │   ├── cifar100
    │   │   ├── cifar-100-python
    │   │   └── cifar-100-python.tar.gz
    │   ├── mnist
    │   │   └── MNIST
    │   │       └── raw
    │   │           ├── t10k-images-idx3-ubyte
    │   │           ...
    │   │           └── train-labels-idx1-ubyte.gz
    │   ├── clothing1M/
    │   │   ├── category_names_chn.txt
    │   │   ├── category_names_eng.txt
    │   │   ├── clean_label_kv.txt
    │   │   ├── clean_test_key_list.txt
    │   │   ├── clean_train_key_list.txt
    │   │   ├── clean_val_key_list.txt
    │   │   ├── images
    │   │   │   ├── 0
    │   │   │   ├── 1
    │   │   │   ...
    │   │   │   └── 9
    │   │   ├── noisy_label_kv.txt
    │   │   ├── noisy_train_key_list.txt
    │   │   ├── README.md
    │   │   └── venn.png
    │   └── svhn
    │       ├── extra_32x32.mat
    │       ├── test_32x32.mat
    │       └── train_32x32.mat
    ├── fedNLLdata # to store fedNLL data settings
    └── Fed-Noisy-checkpoint # to store algorithm logging output and final models

- Use `torchvision.datasets` to download CIFAR-10/CIFAR-100/SVHN/MNIST directly:

      import torchvision

      # MNIST
      mnist_train = torchvision.datasets.MNIST(root="rawdata/mnist", train=True, download=True)
      mnist_test = torchvision.datasets.MNIST(root="rawdata/mnist", train=False, download=True)

      # CIFAR-10
      cifar10_train = torchvision.datasets.CIFAR10(root="rawdata/cifar10", train=True, download=True)
      cifar10_test = torchvision.datasets.CIFAR10(root="rawdata/cifar10", train=False, download=True)

      # CIFAR-100
      cifar100_train = torchvision.datasets.CIFAR100(root="rawdata/cifar100", train=True, download=True)
      cifar100_test = torchvision.datasets.CIFAR100(root="rawdata/cifar100", train=False, download=True)

      # SVHN
      svhn_train = torchvision.datasets.SVHN(root="rawdata/svhn", split="train", download=True)
      svhn_test = torchvision.datasets.SVHN(root="rawdata/svhn", split="test", download=True)

- To download Clothing1M, please contact tong.xiao.work[at]gmail[dot]com to get the download link. Untar the images and unzip the annotations under `rawdata/clothing1M`.
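Before running the data-build step, it can help to confirm that the raw data landed where the folder structure above expects it. The following is a minimal sketch, not part of FedNoisy: the helper name `missing_rawdata` and the selection of paths to check are assumptions, picked from the tree shown above.

```python
from pathlib import Path

# A few representative paths from the folder structure above (illustrative
# selection, relative to the rawdata/ folder).
EXPECTED = {
    "cifar10": ["cifar-10-batches-py"],
    "cifar100": ["cifar-100-python"],
    "mnist": ["MNIST/raw"],
    "svhn": ["train_32x32.mat", "test_32x32.mat"],
    "clothing1M": ["images", "noisy_label_kv.txt", "clean_label_kv.txt"],
}


def missing_rawdata(root, datasets=None):
    """Return the expected paths (relative to `root`) that do not exist."""
    root = Path(root)
    names = datasets if datasets is not None else list(EXPECTED)
    missing = []
    for name in names:
        for entry in EXPECTED[name]:
            path = root / name / entry
            if not path.exists():
                missing.append(path.relative_to(root).as_posix())
    return missing
```

For example, `missing_rawdata("rawdata", ["cifar10", "svhn"])` returns an empty list once both datasets are in place, and otherwise lists the paths that still need to be downloaded.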

The basic command usage is:

    $ cd FedNoisy
    # under dir FedNoisy/
    $ python build_dataset_fed.py --dataset cifar10 \
        --partition iid \
        --num_clients 10 \
        --globalize \
        --noise_mode clean \
        --raw_data_dir ../rawdata/cifar10 \
        --data_dir ../fedNLLdata/cifar10 \
        --seed 1
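When many data settings have to be built, the command above can be assembled programmatically. The sketch below is only an illustration: the helper `build_dataset_command` is hypothetical and simply arranges the flags shown in the command above into an argument list.

```python
import shlex


def build_dataset_command(dataset, partition, num_clients, noise_args,
                          raw_data_dir, data_dir, seed=1):
    """Assemble an argument list for build_dataset_fed.py (hypothetical helper)."""
    return [
        "python", "build_dataset_fed.py",
        "--dataset", dataset,
        "--partition", partition,
        "--num_clients", str(num_clients),
        *noise_args,  # noise-related flags, passed through unchanged
        "--raw_data_dir", raw_data_dir,
        "--data_dir", data_dir,
        "--seed", str(seed),
    ]


cmd = build_dataset_command(
    "cifar10", "iid", 10,
    ["--globalize", "--noise_mode", "clean"],
    "../rawdata/cifar10", "../fedNLLdata/cifar10",
)
# shlex.join produces a shell-safe string that can be pasted into a terminal.
print(shlex.join(cmd))
```

The list form can be passed directly to `subprocess.run(cmd, check=True)` from inside the `FedNoisy` directory. `shlex.join` also quotes values that contain shell-special characters such as `#`.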

- Clean: `--globalize --noise_mode clean` for a data setting without noise
- Globalized noise
  - `--globalize --noise_ratio 0.4 --noise_mode sym` for globalized symmetric noise $\varepsilon_{global}=0.4$
  - `--globalize --noise_ratio 0.4 --noise_mode asym` for globalized asymmetric noise $\varepsilon_{global}=0.4$
- Localized noise
  - `--min_noise_ratio 0.3 --max_noise_ratio 0.5 --noise_mode sym` for localized symmetric noise $\varepsilon_k \sim \mathcal{U}(0.3, 0.5)$
  - `--min_noise_ratio 0.3 --max_noise_ratio 0.5 --noise_mode asym` for localized asymmetric noise $\varepsilon_k \sim \mathcal{U}(0.3, 0.5)$
- Real noise (only works for Clothing1M): `--dataset clothing1m --globalize --noise_mode real --num_samples 64000`
  - `--num_samples` specifies the number of training samples used for Clothing1M; the default is 64000
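To make the difference between the globalized and localized settings concrete, here is a minimal sketch of symmetric label flipping and of per-client noise ratios drawn from $\mathcal{U}(0.3, 0.5)$. It is an illustration under stated assumptions, not the benchmark's own code: the function names are hypothetical, and flipping only to a class different from the true one is a choice made here (some implementations also allow the original class). Asymmetric noise, which uses a class-dependent flipping rule, is not shown.

```python
import random


def symmetric_flip(labels, noise_ratio, num_classes, rng):
    """Flip each label with probability `noise_ratio` to a different class,
    chosen uniformly at random (assumption: the true class is excluded)."""
    noisy = []
    for y in labels:
        if rng.random() < noise_ratio:
            candidates = [c for c in range(num_classes) if c != y]
            noisy.append(rng.choice(candidates))
        else:
            noisy.append(y)
    return noisy


def localized_noise_ratios(num_clients, min_ratio, max_ratio, rng):
    """Draw one noise ratio per client from U(min_ratio, max_ratio)."""
    return [rng.uniform(min_ratio, max_ratio) for _ in range(num_clients)]


rng = random.Random(1)
labels = [i % 10 for i in range(10000)]

# Globalized: one noise ratio shared by all data.
noisy = symmetric_flip(labels, 0.4, 10, rng)
observed = sum(a != b for a, b in zip(labels, noisy)) / len(labels)
print(f"observed globalized noise ratio: {observed:.3f}")

# Localized: each client k gets its own ratio epsilon_k.
print([round(r, 3) for r in localized_noise_ratios(10, 0.3, 0.5, rng)])
```

In the localized case, each client's labels would then be flipped with its own ratio, for instance by calling `symmetric_flip` once per client.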

- MNIST: `--dataset mnist`
  - IID: `--partition iid --num_clients 10`
  - Non-IID quantity skew: `--partition noniid-quantity --num_clients 10 --dir_alpha 0.1`
  - Non-IID Dirichlet-based label skew: `--partition noniid-labeldir --dir_alpha 0.1 --num_clients 10`
  - Non-IID quantity-based label skew: `--partition noniid-#label --major_classes_num 3 --num_clients 10`
- SVHN: `--dataset svhn`
- CIFAR-10: `--dataset cifar10`
- CIFAR-100: `--dataset cifar100`
- Clothing1M: `--dataset clothing1m`
  - IID: `--partition iid --num_clients 10`
  - Non-IID quantity skew: `--partition noniid-quantity --num_clients 10 --dir_alpha 0.1`
  - Non-IID Dirichlet-based label skew: `--partition noniid-labeldir --dir_alpha 0.1 --num_clients 10`
  - Non-IID quantity-based label skew: `--partition noniid-#label --major_classes_num 5 --num_clients 10`
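The Dirichlet-based label skew listed above can be pictured as follows: for each class, a proportion vector over clients is drawn from a symmetric Dirichlet distribution with concentration `dir_alpha`, and that class's samples are split accordingly. The sketch below uses only the standard library and is an assumption about the general technique; the partitioner used by the benchmark may differ in details such as minimum client sizes. The function names are hypothetical.

```python
import random
from collections import defaultdict


def dirichlet_sample(alpha, size, rng):
    """One draw from a symmetric Dirichlet(alpha), via normalized Gamma draws."""
    draws = [rng.gammavariate(alpha, 1.0) for _ in range(size)]
    total = sum(draws) or 1.0  # guard against an all-zero underflow
    return [d / total for d in draws]


def partition_label_dirichlet(labels, num_clients, dir_alpha, seed=1):
    """Split sample indices across clients with Dirichlet class proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, y in enumerate(labels):
        by_class[y].append(idx)

    client_indices = [[] for _ in range(num_clients)]
    for y in sorted(by_class):
        indices = by_class[y]
        rng.shuffle(indices)
        proportions = dirichlet_sample(dir_alpha, num_clients, rng)
        start, cumulative = 0, 0.0
        for k in range(num_clients):
            cumulative += proportions[k]
            if k == num_clients - 1:
                end = len(indices)  # last client takes the remainder
            else:
                end = int(round(cumulative * len(indices)))
            end = max(start, min(end, len(indices)))
            client_indices[k].extend(indices[start:end])
            start = end
    return client_indices


labels = [i % 10 for i in range(1000)]
parts = partition_label_dirichlet(labels, num_clients=10, dir_alpha=0.1)
print([len(p) for p in parts])
```

A small `dir_alpha` such as 0.1 concentrates each class on a few clients, while a large value approaches an IID split.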

| Federated Algorithm | Noisy Label Category | Noisy Label Method | Paper |
|---|---|---|---|
| FedAvg | Robust Regularization | Mixup | [2018 ICLR] Mixup: Beyond empirical risk minimization |
| FedAvg | Robust Loss Function | SCE | [2019 ICCV] Symmetric Cross Entropy for Robust Learning with Noisy Labels |
| FedAvg | Robust Loss Function | GCE | [2018 NeurIPS] Generalized Cross Entropy Loss for Training Deep Neural Networks with Noisy Labels |
| FedAvg | Robust Loss Function | MAE | [2017 AAAI] Robust Loss Functions under Label Noise for Deep Neural Networks |
| FedAvg | Loss Adjustment | M-DYR-H | [2019 ICML] Unsupervised Label Noise Modeling and Loss Correction |
| FedAvg | Loss Adjustment | M-DYR-S | [2019 ICML] Unsupervised Label Noise Modeling and Loss Correction |
| FedAvg | Loss Adjustment | DM-DYR-SH | [2019 ICML] Unsupervised Label Noise Modeling and Loss Correction |
| FedAvg | Sample Selection | Co-teaching | [2018 NeurIPS] Co-teaching: Robust Training of Deep Neural Networks with Extremely Noisy Labels |
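As an illustration of the robust loss functions in the table, the sketch below computes Symmetric Cross Entropy (SCE) for a single sample as a weighted sum of the usual cross entropy and a reverse cross entropy term. It is a dependency-free sketch, not the benchmark's implementation: the clipping constants `label_floor` and `pred_floor` are assumptions, as is the reading that `--sce_alpha` and `--sce_beta` weight the two terms in this order.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sce_loss(logits, target, alpha, beta, label_floor=1e-4, pred_floor=1e-7):
    """alpha * CE + beta * RCE for one sample.

    CE  = -log p[target]
    RCE = -sum_k p[k] * log q[k], where q is the one-hot label with its
          zero entries clipped to `label_floor` so that log(0) is avoided.
    """
    probs = [max(p, pred_floor) for p in softmax(logits)]
    ce = -math.log(probs[target])
    rce = -sum(
        p * math.log(1.0 if k == target else label_floor)
        for k, p in enumerate(probs)
    )
    return alpha * ce + beta * rce


# A confident prediction that agrees with the label gives a small loss;
# the same prediction against a different (possibly noisy) label gives a
# large but bounded reverse term.
print(sce_loss([10.0, 0.0, 0.0], target=0, alpha=0.01, beta=1.0))
print(sce_loss([10.0, 0.0, 0.0], target=1, alpha=0.01, beta=1.0))
```

Because the reverse term is bounded by `-log(label_floor)`, a mislabeled sample cannot dominate the gradient the way it can under plain cross entropy.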

- FedAvg

  - Vanilla FedAvg

        # under dir FedNoisy/
        $ python fednoisy/algorithms/fedavg/main.py --dataset mnist \
            --model SimpleCNN \
            --partition iid \
            --num_clients 10 \
            --globalize \
            --noise_mode sym \
            --noise_ratio 0.4 \
            --seed 1 \
            --sample_ratio 1.0 \
            --com_round 500 \
            --epochs 5 \
            --momentum 0.9 \
            --lr 0.01 \
            --weight_decay 0.0005 \
            --data_dir ../fedNLLdata/mnist \
            --out_dir ../Fed-Noisy-checkpoint/mnist/

  - FedAvg + Symmetric Cross Entropy

        # under dir FedNoisy/
        $ python fednoisy/algorithms/fedavg/main.py --dataset mnist \
            --model SimpleCNN \
            --partition iid \
            --num_clients 10 \
            --globalize \
            --noise_mode sym \
            --noise_ratio 0.4 \
            --data_dir ../fedNLLdata/mnist \
            --out_dir ../Fed-Noisy-checkpoint/mnist/ \
            --com_round 500 \
            --epochs 5 \
            --sample_ratio 1.0 \
            --lr 0.01 \
            --momentum 0.9 \
            --weight_decay 0.0005 \
            --criterion sce \
            --sce_alpha 0.01 \
            --sce_beta 1.0 \
            --seed 1

  - FedAvg + DM-DYR-SH

        # under dir FedNoisy/
        $ python fednoisy/algorithms/fedavg/main.py --dataset mnist \
            --model SimpleCNN \
            --partition iid \
            --num_clients 10 \
            --globalize \
            --noise_mode sym \
            --noise_ratio 0.4 \
            --data_dir ../fedNLLdata/mnist \
            --out_dir ../Fed-Noisy-checkpoint/mnist/ \
            --com_round 500 \
            --epochs 5 \
            --sample_ratio 1.0 \
            --lr 0.01 \
            --momentum 0.9 \
            --weight_decay 1e-4 \
            --dynboot \
            --dynboot_alpha 32 \
            --dynboot_mixup dynamic \
            --dynboot_reg 1.0 \
            --seed 1

  - FedAvg + Mixup

        # under dir FedNoisy/
        $ python fednoisy/algorithms/fedavg/main.py --dataset mnist \
            --model SimpleCNN \
            --partition iid \
            --num_clients 10 \
            --globalize \
            --noise_mode sym \
            --noise_ratio 0.4 \
            --data_dir ../fedNLLdata/mnist \
            --out_dir ../Fed-Noisy-checkpoint/mnist/ \
            --com_round 500 \
            --epochs 5 \
            --sample_ratio 1.0 \
            --lr 0.01 \
            --momentum 0.9 \
            --weight_decay 5e-4 \
            --mixup \
            --mixup_alpha 1.0 \
            --seed 1

  - FedAvg + Co-teaching

        # under dir FedNoisy/
        # --coteaching_forget_rate depends on the noise ratio; a possible value is the noise ratio itself.
        # --coteaching_num_gradual defaults to 10 for 200 epochs; here it is set to a similar ratio of the number of global rounds.
        $ python fednoisy/algorithms/fedavg/main.py --dataset mnist \
            --model SimpleCNN \
            --partition iid \
            --num_clients 10 \
            --globalize \
            --noise_mode sym \
            --noise_ratio 0.4 \
            --data_dir ../fedNLLdata/mnist \
            --out_dir ../Fed-Noisy-checkpoint/mnist/ \
            --com_round 500 \
            --epochs 5 \
            --sample_ratio 1.0 \
            --lr 0.01 \
            --momentum 0.9 \
            --weight_decay 5e-4 \
            --coteaching \
            --coteaching_forget_rate 0.4 \
            --coteaching_num_gradual 25 \
            --seed 1
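The two Co-teaching flags above interact: the forget rate is usually not applied from the start but ramped up gradually. The following sketch shows one common form of that schedule together with small-loss sample selection. It rests on assumptions: the linear ramp, the function names, and counting the ramp in global rounds rather than local epochs are illustrative choices, and the benchmark's own schedule may differ.

```python
def forget_rate_schedule(forget_rate, num_gradual, num_rounds, exponent=1.0):
    """Forget rate per round: ramps from 0 to `forget_rate` over the first
    `num_gradual` rounds, then stays constant."""
    schedule = []
    for t in range(num_rounds):
        if num_gradual > 0:
            ramp = min((t / num_gradual) ** exponent, 1.0)
        else:
            ramp = 1.0
        schedule.append(forget_rate * ramp)
    return schedule


def select_small_loss(losses, forget_rate):
    """Indices of the samples kept after dropping the `forget_rate` fraction
    with the largest loss (the presumed noisy ones)."""
    num_keep = int((1.0 - forget_rate) * len(losses))
    order = sorted(range(len(losses)), key=lambda i: losses[i])
    return order[:num_keep]


schedule = forget_rate_schedule(forget_rate=0.4, num_gradual=25, num_rounds=500)
print(schedule[0], schedule[25], schedule[-1])

# In Co-teaching, each of the two networks selects small-loss samples
# for its peer to train on.
print(select_small_loss([0.1, 5.0, 0.2, 3.0], forget_rate=0.5))
```

Starting with a forget rate of 0 lets both networks learn from all samples early on, when deep networks tend to fit clean patterns first, and only later discards the high-loss samples.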

For more scripts, please check the `scripts` folder.
