This repository is the official implementation of HRN.

A Hierarchical Representation Network for Accurate and Detailed Face Reconstruction from In-The-Wild Images

Biwen Lei, Jianqiang Ren, Mengyang Feng, Miaomiao Cui, Xuansong Xie

In CVPR 2023

DAMO Academy, Alibaba Group, Hangzhou, China

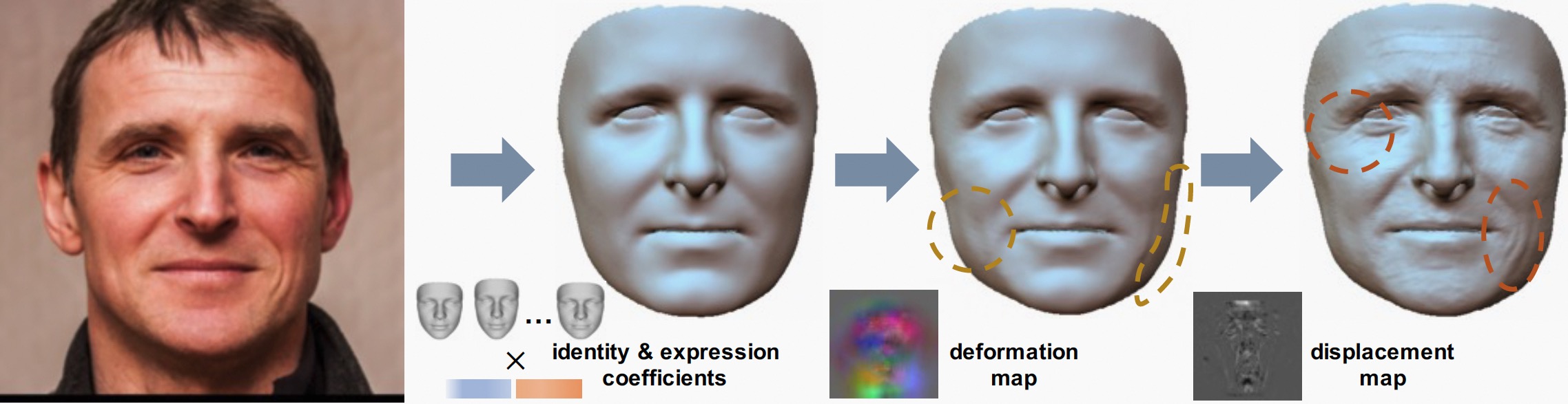

We present a novel hierarchical representation network (HRN) to achieve accurate and detailed face reconstruction from a single image. Specifically, we implement the geometry disentanglement and introduce the hierarchical representation to fulfill detailed face modeling.

- [05/06/2023] The ModelScope demo and Colab demo are available now!

- [04/21/2023] Added the code for exporting meshes with high-frequency details.

- [04/19/2023] The source code is available!

- [03/01/2023] HRN achieved top-1 results on the single-image face reconstruction benchmark REALY!

- [02/28/2023] Paper HRN released!

- [Chinese version] Integrated into ModelScope. Try out the Web Demo.

- Integrated into Colab notebook. Try out the colab demo.

Clone the repo:

git clone https://github.com/youngLBW/HRN.git

cd HRN

This implementation has only been tested on Ubuntu/CentOS with Nvidia GPUs and CUDA installed.

- Python >= 3.8

- PyTorch >= 1.6

- Basic requirements, you can run

conda create -n HRN python=3.8

source activate HRN

pip install -r requirements.txt

- pytorch3d

- nvdiffrast

If you hit a "[F glutil.cpp:338] eglInitialize() failed" error, try changing every "dr.RasterizeGLContext" in util/nv_diffrast.py to "dr.RasterizeCudaContext".

cd ..

git clone https://github.com/NVlabs/nvdiffrast.git

cd nvdiffrast

pip install .

apt-get install freeglut3-dev

apt-get install binutils-gold g++ cmake libglew-dev mesa-common-dev build-essential libglew1.5-dev libglm-dev

apt-get install mesa-utils

apt-get install libegl1-mesa-dev

apt-get install libgles2-mesa-dev

apt-get install libnvidia-gl-525
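The eglInitialize() workaround described above amounts to a plain text substitution in util/nv_diffrast.py. The following is a minimal sketch of how it could be scripted, assuming it is run from the HRN repository root. The helper names `switch_to_cuda_rasterizer` and `patch_file` are hypothetical and not part of the repository, and the `.bak` backup is an added precaution rather than something the original instructions ask for.

```python
from pathlib import Path
from typing import Tuple

# Identifiers taken from the note above.
OLD_CONTEXT = "dr.RasterizeGLContext"
NEW_CONTEXT = "dr.RasterizeCudaContext"


def switch_to_cuda_rasterizer(source: str) -> Tuple[str, int]:
    """Return the patched source text and the number of replacements made."""
    count = source.count(OLD_CONTEXT)
    return source.replace(OLD_CONTEXT, NEW_CONTEXT), count


def patch_file(path: Path) -> int:
    """Patch one file in place and return the number of replacements.

    The original content is saved next to the file with a .bak suffix,
    but only when there is something to replace.
    """
    original = path.read_text(encoding="utf-8")
    patched, count = switch_to_cuda_rasterizer(original)
    if count:
        backup = path.with_suffix(path.suffix + ".bak")
        backup.write_text(original, encoding="utf-8")
        path.write_text(patched, encoding="utf-8")
    return count


if __name__ == "__main__":
    target = Path("util/nv_diffrast.py")  # file named in the note above
    if target.exists():
        print(f"replaced {patch_file(target)} occurrence(s) in {target}")
    else:
        print(f"{target} not found; run this from the HRN repository root")
```

Running the script a second time leaves the file untouched, since no "dr.RasterizeGLContext" occurrences remain.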

- Prepare assets and pretrained models

Please refer to this README to download the assets and pretrained models.

- Run demos

a. single-view face reconstruction

CUDA_VISIBLE_DEVICES=0 python demo.py --input_type single_view --input_root ./assets/examples/single_view_image --output_root ./assets/examples/single_view_image_results

b. multi-view face reconstruction

CUDA_VISIBLE_DEVICES=0 python demo.py --input_type multi_view --input_root ./assets/examples/multi_view_images --output_root ./assets/examples/multi_view_image_results

Here, "input_root" is the directory that holds the multi-view images of the same subject.
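If you want to launch the demos from a script, for example to process several folders in a row, the two commands above can be wrapped as follows. This is only a sketch: `build_demo_command` is a hypothetical helper, not part of the repository, and it assumes that "single_view" and "multi_view" are the only input types, since those are the only ones shown above. The flags and the CUDA_VISIBLE_DEVICES variable are taken directly from the commands above.

```python
import os
import subprocess
import sys
from typing import Dict, List, Tuple

# Only the two input types shown in the README commands are assumed valid.
VALID_INPUT_TYPES = ("single_view", "multi_view")


def build_demo_command(
    input_type: str, input_root: str, output_root: str, gpu_id: int = 0
) -> Tuple[List[str], Dict[str, str]]:
    """Build the argument list and environment for one demo.py run."""
    if input_type not in VALID_INPUT_TYPES:
        raise ValueError(f"input_type must be one of {VALID_INPUT_TYPES}")
    args = [
        sys.executable, "demo.py",
        "--input_type", input_type,
        "--input_root", input_root,
        "--output_root", output_root,
    ]
    # Same effect as prefixing the shell command with CUDA_VISIBLE_DEVICES=<id>.
    env = dict(os.environ, CUDA_VISIBLE_DEVICES=str(gpu_id))
    return args, env


if __name__ == "__main__":
    args, env = build_demo_command(
        "single_view",
        "./assets/examples/single_view_image",
        "./assets/examples/single_view_image_results",
    )
    if os.path.exists("demo.py"):
        subprocess.run(args, env=env, check=True)
    else:
        # Outside the repository root, just show what would be executed.
        print("demo.py not found; command would be:", " ".join(args))
```

Passing the arguments as a list avoids shell quoting problems when the input or output paths contain spaces.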

- Inference time

The pure inference time of HRN for single-view reconstruction is less than 1 second. The pipeline also runs some visualization code, which brings the overall time to about 5 to 10 seconds. Multi-view reconstruction with MV-HRN involves a fitting process, and the overall time is about 1 minute.

We haven't released the training code yet.

- This implementation makes a few changes to the original HRN to improve quality and robustness:

- Introduce a valid mask to alleviate the interference caused by the occlusion of objects such as hair.

- Re-implement texture map generation and re-alignment module, which is faster than the original implementation.

- Introduce two trainable parameters α and β to improve the training stability at the beginning stage.

- The displacement map is designed to be applied during rendering, so an exported mesh with high-frequency details may not look as good as the rendered 2D image.

If you have any questions, please contact Biwen Lei (biwen1996@gmail.com).

If you use our work in your research, please cite our publication:

@misc{lei2023hierarchical,

title={A Hierarchical Representation Network for Accurate and Detailed Face Reconstruction from In-The-Wild Images},

author={Biwen Lei and Jianqiang Ren and Mengyang Feng and Miaomiao Cui and Xuansong Xie},

year={2023},

eprint={2302.14434},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

Some functions and scripts in this implementation are based on external sources. We thank the authors for their excellent work.

Here are some great resources we benefited from:

- Deep3DFaceRecon_pytorch for the base model of HRN.

- DECA, Pytorch3D, nvdiffrast for rendering.

- pix2pix for the image translation network.

- retinaface for face detection.

- face-alignment for cropping.

- FAN for landmark detection.

- Liu for face mask.

- face3d for generating face albedo map.

We would also like to thank these great datasets and benchmarks that allow us to easily perform quantitative and qualitative comparisons :)