ETH Zurich

CVPR'19 (oral presentation)

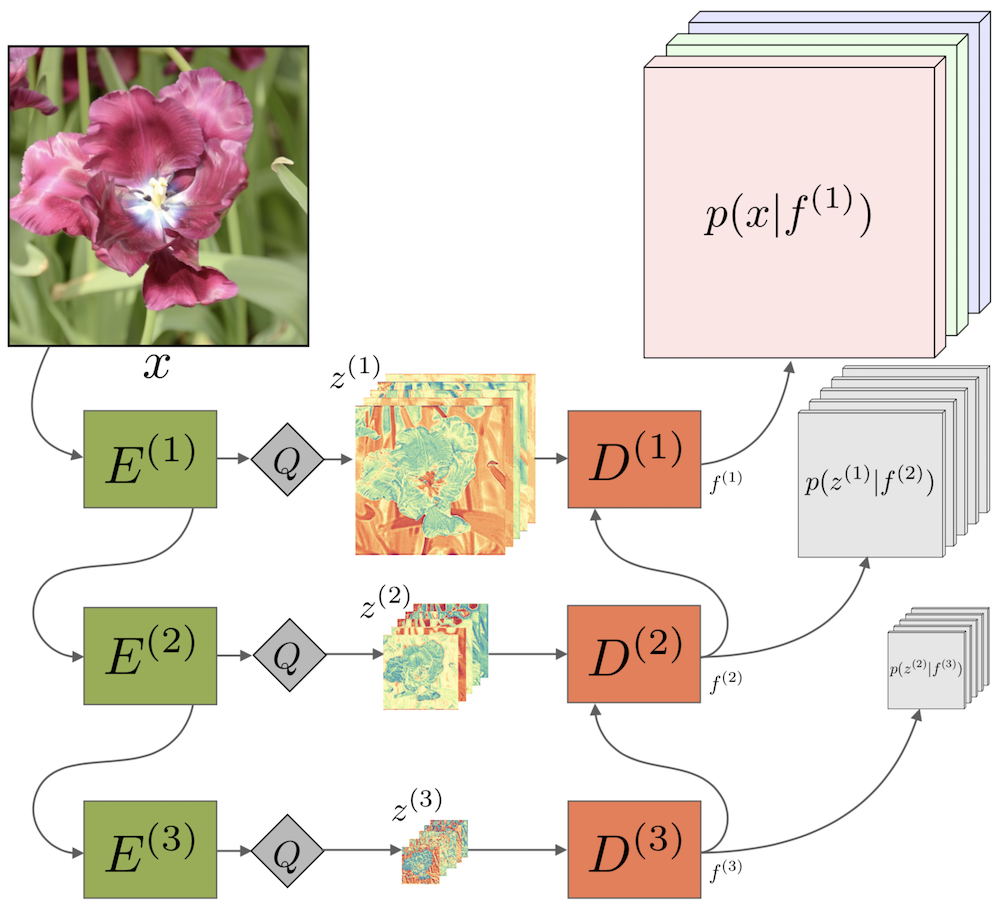

We propose the first practical learned lossless image compression system, L3C, and show that it outperforms the popular engineered codecs, PNG, WebP and JPEG 2000. At the core of our method is a fully parallelizable hierarchical probabilistic model for adaptive entropy coding which is optimized end-to-end for the compression task. In contrast to recent autoregressive discrete probabilistic models such as PixelCNN, our method i) models the image distribution jointly with learned auxiliary representations instead of exclusively modeling the image distribution in RGB space, and ii) only requires three forward-passes to predict all pixel probabilities instead of one for each pixel. As a result, L3C obtains over two orders of magnitude speedups when sampling compared to the fastest PixelCNN variant (Multiscale-PixelCNN). Furthermore, we find that learning the auxiliary representation is crucial and outperforms predefined auxiliary representations such as an RGB pyramid significantly.

Clone the repo and create a conda environment as follows:

conda create --name l3c_env python=3.7 pip --yes

conda activate l3c_env

We need PyTorch, CUDA, and some PIP packages:

conda install pytorch torchvision cudatoolkit=10.0 -c pytorch

pip install -r pip_requirements.txt

To test our entropy coding, you must also install torchac, as described below.

- We tested this code with Python 3.7 and PyTorch 1.1.
- The training code also works with PyTorch 0.4, but for testing, we use the torchac module, which needs PyTorch 1.0 or newer to build, see below.
- The code relies on tensorboardX==1.2, even though TensorBoard is now part of PyTorch (since 1.1).
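To confirm that an environment satisfies the version notes above, a small check like the following can help. This is a minimal sketch and not part of the repo: the helper names (`parse_version`, `meets_minimum`, `installed_version`, `report`) are hypothetical, and it assumes the package exposes a `__version__` attribute, as PyTorch does.

```python
import importlib
from itertools import takewhile


def parse_version(text):
    """Turn '1.1.0' or '1.1.0+cu100' into a tuple of ints such as (1, 1, 0)."""
    parts = []
    for piece in text.split("+")[0].split("."):
        digits = "".join(takewhile(str.isdigit, piece))
        if not digits:
            break
        parts.append(int(digits))
    return tuple(parts)


def meets_minimum(installed, minimum):
    """Compare two version strings, padding the shorter one with zeros."""
    a, b = parse_version(installed), parse_version(minimum)
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b


def installed_version(module_name):
    """Return module.__version__, or None if the module is missing or unversioned."""
    try:
        module = importlib.import_module(module_name)
    except ImportError:
        return None
    return getattr(module, "__version__", None)


def report(minimums):
    """Print one status line per package in the {name: minimum_version} mapping."""
    for name, minimum in minimums.items():
        found = installed_version(name)
        if found is None:
            print(f"{name}: not found (need >= {minimum})")
        else:
            status = "ok" if meets_minimum(found, minimum) else "too old"
            print(f"{name}: {found} ({status}, need >= {minimum})")


if __name__ == "__main__":
    # torchac needs PyTorch 1.0 or newer to build, per the notes above.
    report({"torch": "1.0"})
```

Run it inside the activated l3c_env so that it inspects the same interpreter that train.py and test.py will use.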

We release the following trained models:

| | Name | Training Set | ID | Download Model |
|---|---|---|---|---|
| Main Model | L3C | Open Images | 0524_0001 | L3C.tar.gz |
| Baseline | RGB Shared | Open Images | 0524_0002 | RGB_Shared.tar.gz |
| Baseline | RGB | Open Images | 0524_0003 | RGB.tar.gz |
| Main Model | L3C | ImageNet32 | 0524_0004 | L3C_inet32.tar.gz |
| Main Model | L3C | ImageNet64 | 0524_0005 | L3C_inet64.tar.gz |

See Evaluation of Models to learn how to evaluate on a dataset.

To train a model yourself, you have to first prepare the data as shown in Prepare Open Images Train. Then, use one of the following commands, explained in more detail below:

| Model | Train with the following flags to train.py |

|---|---|

| L3C | configs/ms/cr.cf configs/dl/oi.cf log_dir |

| RGB Shared | configs/ms/cr_rgb_shared.cf configs/dl/oi.cf log_dir |

| RGB | configs/ms/cr_rgb.cf configs/dl/oi.cf log_dir |

| L3C ImageNet32 | configs/ms/cr.cf configs/dl/in32.cf -p lr.schedule=exp_0.75_e1 log_dir |

| L3C ImageNet64 | configs/ms/cr.cf configs/dl/in64.cf -p lr.schedule=exp_0.75_e1 log_dir |

Each of the released models was trained for around 5 days on a Titan Xp.

Note: We do not provide code for multi-GPU training. To incorporate nn.DataParallel, the code must be changed

slightly: In net.py, EncOut and DecOut are namedtuples, which is not supported by nn.DataParallel.
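The following sketch illustrates one plausible reason why namedtuples cause trouble, and one possible workaround. It rests on an assumption about PyTorch internals that is not verified here: that generic gather code rebuilds an output container by calling its type on a single iterable. The `EncOut` below is a hypothetical stand-in with made-up fields, not the class from net.py, and `rebuild_like`, `to_plain`, and `from_plain` are illustrative helpers.

```python
from collections import namedtuple

# Hypothetical stand-in; the real EncOut in net.py may have different fields.
EncOut = namedtuple("EncOut", ["features", "symbols"])


def rebuild_like(template, values):
    """Mimic container code that rebuilds an output as type(template)(iterable)."""
    return type(template)(values)


def to_plain(out):
    """Convert a namedtuple to a plain tuple before returning it from forward()."""
    return tuple(out)


def from_plain(cls, values):
    """Restore the namedtuple on the caller's side."""
    return cls._make(values)


if __name__ == "__main__":
    out = EncOut(features=1, symbols=2)

    # A plain tuple can be rebuilt from one iterable ...
    print(rebuild_like(to_plain(out), [1, 2]))

    # ... but a namedtuple expects one positional argument per field.
    try:
        rebuild_like(out, [1, 2])
    except TypeError as error:
        print("namedtuple rebuild failed:", error)

    print(from_plain(EncOut, to_plain(out)))
```

Under that assumption, returning plain tuples from the wrapped module and restoring the namedtuples afterwards would be one way to adapt the code.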

To test an experiment, use test.py. For example, to test L3C and the baselines, run

python test.py /path/to/logdir 0524_0001,0524_0002,0524_0003 /some/imgdir,/some/other/imgdir \

--names "L3C,RGB Shared,RGB" --recursive=auto

To use the entropy coder and get timings for encoding/decoding, use --write_to_files (this needs torchac,

see below):

python test.py /path/to/logdir 0524_0001 /some/imgdir --write_to_files=files_out_dir

More flags available with python test.py -h.

When preparing this repo, we found that removing one approximation in the loss originally introduced by the PixelCNN++ code slightly improved the final bitrates of L3C, while performance of the baselines got slightly worse.

The code contains the loss without the approximation. We note that arXiv v2 is the same as the CVPR Camera Ready version, and the results in there were obtained with the approximation.

However, if you re-train with the provided code, you'll get the new results. For clarity, we compare the new results as obtained by the released code, with the results in the Camera Ready:

| Model | Released [bpsp OI] | Camera Ready [bpsp OI] |

|---|---|---|

| L3C | 2.578 | 2.604 |

| RGB Shared | 2.948 | 2.918 |

| RGB | 2.832 | 2.819 |

Here, bpsp OI means bits per sub-pixel on Open Images Test.

We did not re-train the ImageNet32 and ImageNet64 models.
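For readers unfamiliar with the unit, bits per sub-pixel divides the compressed size in bits by the number of channel values in the image (height × width × channels). The sketch below shows that arithmetic; the function name `bits_per_subpixel` is illustrative and not taken from the repo.

```python
def bits_per_subpixel(num_bytes, height, width, channels=3):
    """Compressed size in bits divided by the number of sub-pixels (H * W * C)."""
    num_subpixels = height * width * channels
    if num_subpixels <= 0:
        raise ValueError("image must have at least one sub-pixel")
    return 8 * num_bytes / num_subpixels


if __name__ == "__main__":
    # A 4 x 4 RGB image has 48 sub-pixels. Stored raw at 8 bits each it takes
    # 48 bytes, i.e. 8.0 bpsp; a 24-byte encoding of it would be 4.0 bpsp.
    print(bits_per_subpixel(48, 4, 4))
    print(bits_per_subpixel(24, 4, 4))
```

An uncompressed 8-bit image is therefore at 8 bpsp, which is the reference point for the numbers in the table.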

Whenever train.py is executed, a new experiment is started.

Every experiment is based on a specific configuration file for the network, stored in configs/ms and

another file for the dataloading, stored in configs/dl.

An experiment is uniquely identified by the log date, which is just date and time (e.g. 0506_1107).

The config files are parsed with the parser from fjcommon, which allows hierarchies of configs.

Additionally, there is global_config.py, to allow quick changes by passing

additional parameters via the -p flag, which are then available everywhere in the code, see below.

The config files plus the global_config flags specify all parameters needed to train a network.
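To illustrate the idea of passing `key=value` overrides on the command line and reading them from anywhere in the code, here is a minimal sketch. It is not how global_config.py is implemented; `parse_overrides` and `GlobalConfig` are hypothetical names, and values are kept as plain strings.

```python
def parse_overrides(pairs):
    """Turn ['upsampling=deconv', ...] into {'upsampling': 'deconv', ...}."""
    overrides = {}
    for pair in pairs:
        key, sep, value = pair.partition("=")
        if not sep or not key:
            raise ValueError(f"expected key=value, got {pair!r}")
        overrides[key] = value
    return overrides


class GlobalConfig:
    """Tiny key/value store that other modules could import and query."""

    def __init__(self):
        self._values = {}

    def update(self, overrides):
        self._values.update(overrides)

    def get(self, key, default=None):
        return self._values.get(key, default)


if __name__ == "__main__":
    config = GlobalConfig()
    config.update(parse_overrides(["upsampling=deconv", "lr.schedule=exp_0.75_e1"]))
    print(config.get("upsampling"))
    print(config.get("not_set", "fallback"))
```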

When an experiment is started, a directory with all this information is created in the folder passed as

LOG_DIR_ROOT to train.py (see python train.py -h).

For example, running

python train.py configs/ms/cr.cf configs/dl/oi.cf log_dir -p upsampling=deconv

results in a folder log_dir, and in there another folder called

0502_1213 cr oi upsampling=deconv

Checkpoints (weights) will be stored in a subfolder called ckpts.
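The naming scheme of the experiment folder can be sketched as follows. This is inferred only from the examples above (0506_1107, 0502_1213), assuming the log date is formatted as MMDD_HHMM and is followed by the base names of the two config files and any `-p` flags. The helper names are hypothetical and the actual code in the repo may differ.

```python
import os
from datetime import datetime


def config_name(path):
    """'configs/ms/cr.cf' -> 'cr'."""
    return os.path.splitext(os.path.basename(path))[0]


def experiment_dir_name(when, ms_config, dl_config, overrides=()):
    """Join log date, config base names, and -p flags with spaces."""
    log_date = when.strftime("%m%d_%H%M")  # assumed MMDD_HHMM format
    parts = [log_date, config_name(ms_config), config_name(dl_config)]
    parts.extend(overrides)
    return " ".join(parts)


if __name__ == "__main__":
    # The year is arbitrary here; it does not appear in the log date.
    started = datetime(2019, 5, 2, 12, 13)
    name = experiment_dir_name(
        started, "configs/ms/cr.cf", "configs/dl/oi.cf", ["upsampling=deconv"]
    )
    print(name)  # 0502_1213 cr oi upsampling=deconv
```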

This experiment can then be evaluated simply by passing the log date to test.py, in addition to some image folders:

python test.py logs 0502_1213 data/openimages_test,data/raise1k

where we test on images in data/openimages_test and data/raise1k.

To use another model as a pretrained model, use --restore and --restore_restart:

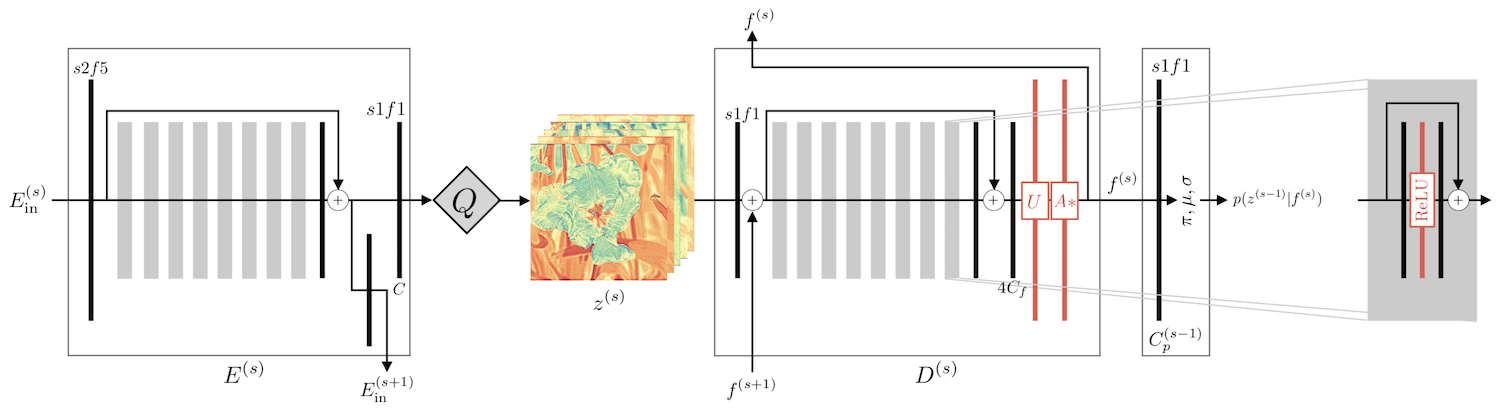

python train.py configs/ll/cr.cf configs/dl/oi.cf logs --restore 0502_1213 --restore_restart| Name in Paper | Symbol | Name in Code | Short | Class |

|---|---|---|---|---|

| Feature Extractor | E |

Encoder | enc |

EDSRLikeEnc |

| Predictor | D |

Decoder | dec |

EDSRLikeDec |

| Quantizer | Q |

Quantizer | q |

Quantizer |

| Final box, outputting pi, mu, sigma | Probability Classifier | prob_clf |

AtrousProbabilityClassifier |

See also the notes in src/multiscale_network/multiscale.py.

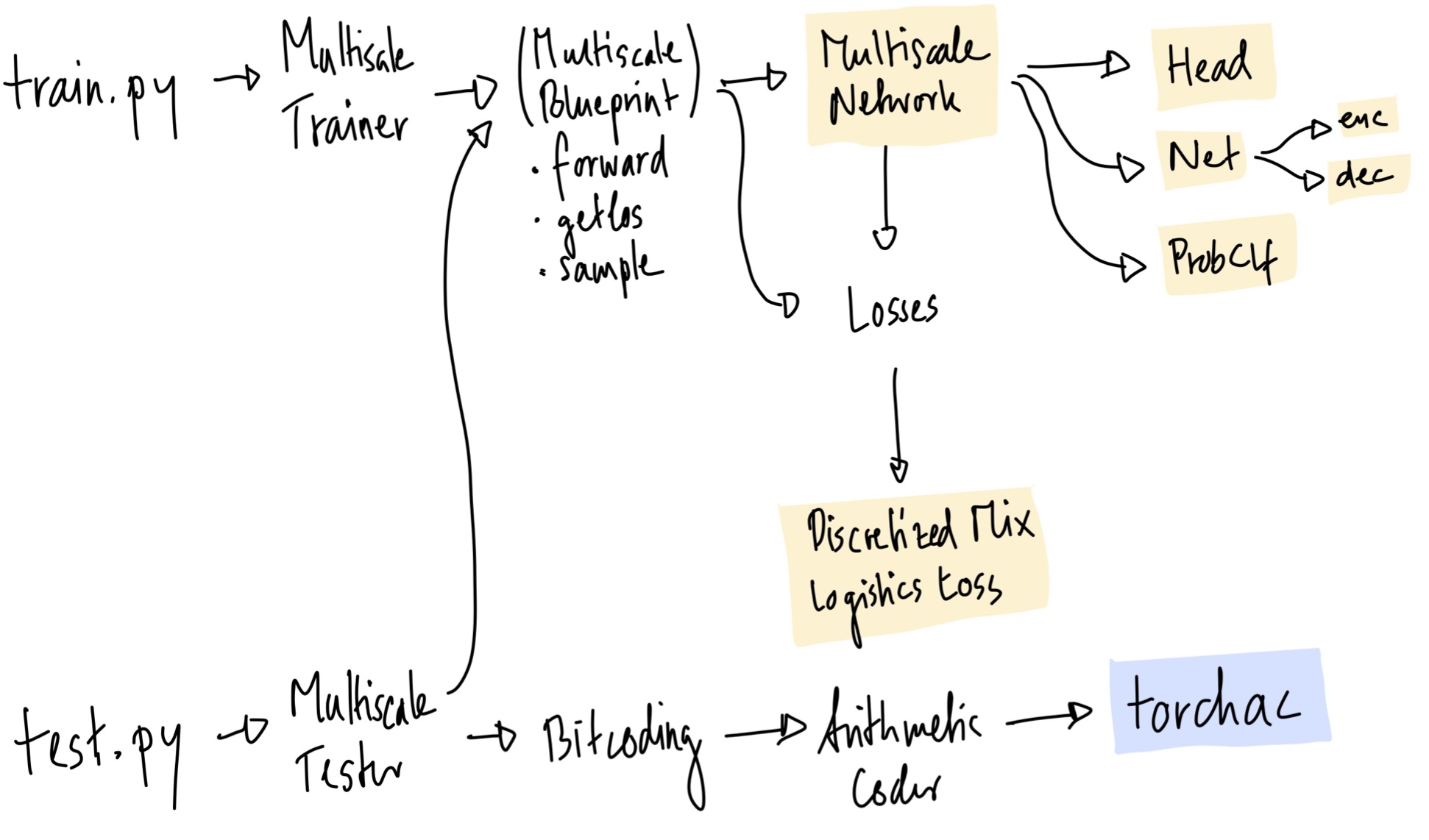

The code is quite modular, as it was used to experiment with different things. At the heart is the

MultiscaleBlueprint class, which has the following main functions: forward, get_loss, sample. It is used by the

MultiscaleTrainer and MultiscaleTester. The network is created by MultiscaleNetwork, which pulls together all

the PyTorch modules needed. The discretized mixture of logistics loss is in DiscretizedMixLogisticsLoss, which is

usually referred to as dmll or dmol in the code.

For bitcoding, there is the Bitcoding class, which uses the ArithmeticCoding class, which in turn uses my

torchac module, written in C++, and described below.

We implemented an entropy coding module as a C++ extension for PyTorch, because no existing fast Python entropy coding module was available. You'll need to build it if you plan to use the --write_to_files flag for test.py (see Evaluation of Models).

The implementation is based on this blog post,

meaning that we implement arithmetic coding.

It is not optimized; however, it is much faster than doing the equivalent thing in pure Python (because of all the bit shifts etc.). Encoding an entire 512 x 512 image happens in 0.202s (see Appendix A in the paper).

A good starting point for optimizing the code would probably be the range_coder.cc implementation of TFC.
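To make the idea of arithmetic coding concrete, here is a minimal sketch that uses exact fractions instead of the fixed-precision integer arithmetic and bit shifts of a practical coder. It is not the torchac implementation; the function names and the toy probability table are illustrative assumptions. Each symbol narrows the current interval in proportion to its probability, and any number inside the final interval identifies the whole message.

```python
from fractions import Fraction


def build_intervals(probs):
    """Assign each symbol a [low, high) slice of [0, 1) sized by its probability."""
    intervals = {}
    low = Fraction(0)
    for symbol, p in probs.items():
        high = low + Fraction(p)
        intervals[symbol] = (low, high)
        low = high
    if low != 1:
        raise ValueError("probabilities must sum to exactly 1")
    return intervals


def encode(symbols, probs):
    """Return the final interval [low, high) that represents the message."""
    intervals = build_intervals(probs)
    low, width = Fraction(0), Fraction(1)
    for symbol in symbols:
        s_low, s_high = intervals[symbol]
        low = low + width * s_low
        width = width * (s_high - s_low)
    return low, low + width


def decode(code, length, probs):
    """Recover `length` symbols from a number inside the encoded interval."""
    intervals = build_intervals(probs)
    low, width = Fraction(0), Fraction(1)
    decoded = []
    for _ in range(length):
        position = (code - low) / width
        for symbol, (s_low, s_high) in intervals.items():
            if s_low <= position < s_high:
                decoded.append(symbol)
                low = low + width * s_low
                width = width * (s_high - s_low)
                break
        else:
            raise ValueError("code does not fall into any symbol interval")
    return decoded


if __name__ == "__main__":
    probs = {"a": Fraction(1, 2), "b": Fraction(1, 4), "c": Fraction(1, 4)}
    low, high = encode("aabc", probs)
    print(low, high)  # 11/64 3/16
    print(decode(low, 4, probs))  # ['a', 'a', 'b', 'c']
```

The final interval has width 1/64, so about 6 bits are enough to pin down a number inside it. This matches the information content of the message under the toy model (1 + 1 + 2 + 2 bits).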

The module can be built with or without CUDA. The only difference between the CUDA and non-CUDA versions is:

With CUDA, _get_uint16_cdf from torchac.py is done with a simple/non-optimized CUDA kernel (torchac_kernel.cu),

which has one benefit: we can directly write into shared memory! This saves an expensive copying step from GPU to CPU.
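Integer arithmetic coders typically consume cumulative distributions as fixed-precision integers rather than floats. As an illustration of what such a conversion can look like, here is a plain-Python sketch. It is a guess at the kind of computation the name `_get_uint16_cdf` suggests, not the logic of torchac.py or torchac_kernel.cu. The function name `pmf_to_uint16_cdf` and the rule of reserving one count per symbol are assumptions.

```python
def pmf_to_uint16_cdf(pmf):
    """Map probabilities to a strictly increasing integer CDF within 0..65535.

    One count is reserved per symbol so that even zero-probability symbols
    keep a non-empty range; the remaining counts are shared proportionally.
    """
    top = (1 << 16) - 1  # largest value that fits into an unsigned 16-bit int
    n = len(pmf)
    if n == 0 or n >= top:
        raise ValueError("need between 1 and 65534 symbols")
    if any(p < 0 for p in pmf):
        raise ValueError("probabilities must be non-negative")
    total = sum(pmf)
    if total <= 0:
        raise ValueError("probabilities must not all be zero")

    cdf = [0]
    running = 0.0
    for i, p in enumerate(pmf):
        running += p
        cdf.append(round(running / total * (top - n)) + (i + 1))
    cdf[-1] = top  # guard against floating-point rounding in the last entry
    return cdf


if __name__ == "__main__":
    print(pmf_to_uint16_cdf([0.5, 0.25, 0.25]))  # [0, 32767, 49151, 65535]
```

Symbol i then owns the integer range from cdf[i] up to, but not including, cdf[i + 1].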

However, compiling with CUDA is probably a hassle. On our machines, it works with a GCC newer than version 5 but older than 6 (tested with 5.5), in combination with nvcc 9. We did not test other configurations, but they may work. Please comment if you have insights into which other configurations work (or don't).

The main part (arithmetic coding) is always on CPU.

Make sure a recent gcc is available in $PATH (do gcc --version, tested with 5.5).

For CUDA, make sure nvcc -V gives the desired version (tested with 9.0).

Then do:

conda activate l3c_env

cd src/torchac

COMPILE_CUDA=auto python setup.py

- COMPILE_CUDA=auto: Use CUDA if a gcc between 5 and 6, and nvcc 9 is available
- COMPILE_CUDA=force: Use CUDA, don't check gcc or nvcc
- COMPILE_CUDA=no: Don't use CUDA

This installs a package called torchac-backend-cpu or torchac-backend-gpu in your pip. To test if it works,

you can do

conda activate l3c_env

cd src/torchac

python -c "import torchac"

It should not print anything.

To sample from L3C, use test.py with --sample:

python test.py /path/to/logdir 0524_0001 /some/imgdir --sample=samples

This produces outputs in a directory samples. Per image, you'll get something like

# Ground Truth

0_IMGNAME_3.549_gt.png

# Sampling from RGB scale, resulting bitcost 1.013bpsp

0_IMGNAME_rgb_1.013.png

# Sampling from RGB scale and z1, resulting bitcost 0.342bpsp

0_IMGNAME_rgb+bn0_0.342.png

# Sampling from RGB scale and z1 and z2, resulting bitcost 0.121bpsp

0_IMGNAME_rgb+bn0+bn1_0.121.png

See Section 5.4 ("Sampling Representations") in the paper.
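If you want to collect the bitcosts from a directory of samples, the file names listed above can be parsed with a small helper. The pattern below is inferred only from the four example names; the regular expressions and the function `parse_sample_name` are hypothetical, and real output names may contain variations not covered here. What the number in the ground-truth file name means is not stated above, so it is returned under the same key without interpretation.

```python
import re

# <index>_<image name>_<number>_gt.png
_GT = re.compile(r"^(\d+)_(.+)_(\d+\.\d+)_gt\.png$")
# <index>_<image name>_rgb[+bnK...]_<bitcost>.png
_SAMPLE = re.compile(r"^(\d+)_(.+)_(rgb(?:\+bn\d+)*)_(\d+\.\d+)\.png$")


def parse_sample_name(filename):
    """Split a sample file name into index, image name, scales, and number."""
    match = _GT.match(filename)
    if match:
        return {
            "index": int(match.group(1)),
            "image": match.group(2),
            "scales": None,  # ground truth, nothing was sampled
            "bitcost": float(match.group(3)),
        }
    match = _SAMPLE.match(filename)
    if match:
        return {
            "index": int(match.group(1)),
            "image": match.group(2),
            "scales": match.group(3).split("+"),
            "bitcost": float(match.group(4)),
        }
    raise ValueError(f"unrecognized sample file name: {filename!r}")


if __name__ == "__main__":
    print(parse_sample_name("0_IMGNAME_rgb+bn0_0.342.png"))
    print(parse_sample_name("0_IMGNAME_3.549_gt.png"))
```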

To encode/decode a single image, use l3c.py. This requires torchac:

# Encode to out.l3c

python l3c.py /path/to/logdir 0524_0001 enc /path/to/img out.l3c

# Decode from out.l3c, save to decoded.png

python l3c.py /path/to/logdir 0524_0001 dec out.l3c decoded.png

Use the prep_openimages.sh script. Run it in an environment with

- Python 3,
- skimage (pip install scikit-image, tested with version 0.13.1), and
- awscli (pip install awscli):

cd src

./prep_openimages.sh <DATA_DIR>

This will download all images to DATA_DIR. Make sure there is enough space there, as this script will create around 300 GB of data. Also, it will probably run for a few hours.

After ./prep_openimages.sh is done, everything is in DATA_DIR/train_oi and DATA_DIR/val_oi. Follow the

instructions printed by ./prep_openimages.sh to update the config file. You may rm -rf DATA_DIR/download and

rm -rf DATA_DIR/imported to free up some space.

- Download Open Images training sets and validation set. We used the parts 0, 1, 2, plus the validation set:

  aws s3 --no-sign-request cp s3://open-images-dataset/tar/train_0.tar.gz train_0.tar.gz

  aws s3 --no-sign-request cp s3://open-images-dataset/tar/train_1.tar.gz train_1.tar.gz

  aws s3 --no-sign-request cp s3://open-images-dataset/tar/train_2.tar.gz train_2.tar.gz

  aws s3 --no-sign-request cp s3://open-images-dataset/tar/validation.tar.gz validation.tar.gz

- Extract to a folder, let's say data. Now you should have data/train_0, data/train_1, data/train_2, as well as data/validation.

- (Optional) To do the same preprocessing as in our paper, run the following. Note that it requires the skimage package. To speed this up, you can distribute it on some server by implementing a task_array.sh, see import_train_images.py.

  python import_train_images.py data train_0 train_1 train_2 validation

- Put all (preprocessed) images into a train and a validation folder, let's say data/train_oi and data/validation_oi.

- (Optional) If you are on a slow file system, it helps to cache the contents of data/train_oi. Run

  cd src

  export CACHE_P="data/cache.pkl" # <--- Change this

  export PYTHONPATH=$(pwd)

  python dataloaders/images_loader.py update data/train_oi "$CACHE_P" --min_size 128

  The --min_size makes sure to skip smaller images. NOTE: If you skip this step, make sure no files with dimensions smaller than 128 are in your training folder. If they are there, training might crash.

- Update the dataloader config, configs/dl/oi.cf: Set train_imgs_glob = 'data/train_oi' (or whatever folder you used). If you did the previous step, set image_cache_pkl = 'data/cache.pkl'; if you did not, set image_cache_pkl = None. Finally, update val_glob = 'data/validation_oi'.

- (Optional) It helps to have one fixed validation image to monitor training. You may put any image at src/train/fixedimg.jpg and it will be used for that (see multiscale_trainer.py).
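The caching step in the list above avoids listing a large directory on every start, which can be slow on network file systems. The sketch below shows the general idea with a pickled list of paths. It is not the cache format written by dataloaders/images_loader.py, it does not apply the --min_size filter (that would require decoding image dimensions), and `build_cache` and `load_cache` are hypothetical names.

```python
import glob
import os
import pickle


def build_cache(img_dir, cache_path, extensions=(".png", ".jpg", ".jpeg")):
    """Scan img_dir recursively once and store the sorted image paths in a pickle."""
    pattern = os.path.join(img_dir, "**", "*")
    paths = sorted(
        p for p in glob.glob(pattern, recursive=True)
        if os.path.isfile(p) and p.lower().endswith(extensions)
    )
    with open(cache_path, "wb") as f:
        pickle.dump(paths, f)
    return paths


def load_cache(cache_path):
    """Read the stored list of paths without touching the image directory."""
    with open(cache_path, "rb") as f:
        return pickle.load(f)


if __name__ == "__main__":
    paths = build_cache("data/train_oi", "data/cache.pkl")
    print(f"cached {len(paths)} image paths")
    assert load_cache("data/cache.pkl") == paths
```

The cache must be rebuilt whenever images are added to or removed from the folder.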

- Add support for nn.DataParallel.
- Incorporate TensorBoard support from PyTorch, instead of pip package.

- p.6: "On ImageNet32/64, we increase the batch size to 120 [...]." → Batch size is actually also 30, like for the other experiments.

- p.13, Fig A2: There should not be any arrows between predictors D(1), because we only train one predictor.

If you use the work released here for your research, please cite this paper:

@inproceedings{mentzer2019practical,

Author = {Mentzer, Fabian and Agustsson, Eirikur and Tschannen, Michael and Timofte, Radu and Van Gool, Luc},

Booktitle = {Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},

Title = {Practical Full Resolution Learned Lossless Image Compression},

Year = {2019}}