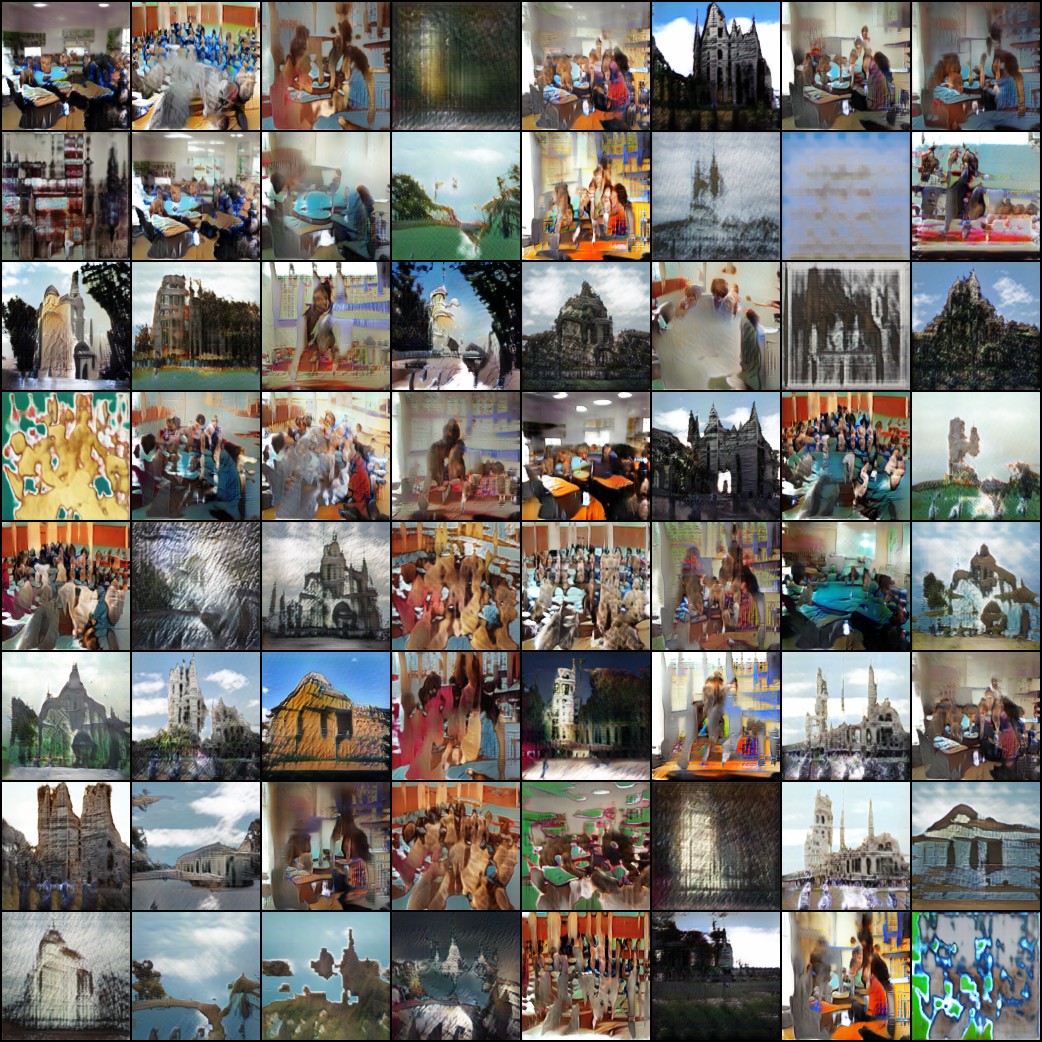

PyTorch implementation of LARGE SCALE GAN TRAINING FOR HIGH FIDELITY NATURAL IMAGE SYNTHESIS (BigGAN)

for 128*128*3 resolution

python main.py --batch_size 64 --dataset imagenet --adv_loss hinge --version biggan_imagenet --image_path /data/datasets

python main.py --batch_size 64 --dataset lsun --adv_loss hinge --version biggan_lsun --image_path /data1/datasets/lsun/lsun
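For reference, `--adv_loss hinge` in the commands above selects the hinge version of the adversarial loss. The sketch below shows the standard hinge formulation on plain Python floats so it runs without dependencies. The function names are hypothetical and not taken from this repository; in PyTorch the same expressions would apply to score tensors via `torch.relu` and `.mean()`.

```python
from typing import Sequence


def _mean(values: Sequence[float]) -> float:
    """Arithmetic mean of a non-empty sequence."""
    return sum(values) / len(values)


def d_hinge_loss(real_scores: Sequence[float], fake_scores: Sequence[float]) -> float:
    """Discriminator hinge loss: E[max(0, 1 - D(x))] + E[max(0, 1 + D(G(z)))]."""
    real_term = _mean([max(0.0, 1.0 - s) for s in real_scores])
    fake_term = _mean([max(0.0, 1.0 + s) for s in fake_scores])
    return real_term + fake_term


def g_hinge_loss(fake_scores: Sequence[float]) -> float:
    """Generator hinge loss: -E[D(G(z))]."""
    return -_mean(fake_scores)


# Illustrative discriminator outputs (made-up numbers, not real training data).
real = [2.0, 0.5]
fake = [-2.0, 0.0]
d_loss = d_hinge_loss(real, fake)
g_loss = g_hinge_loss(fake)
```

Real samples scored above 1 and fake samples scored below -1 contribute nothing to the discriminator loss, so only samples inside the margin drive its gradient.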

python main.py --batch_size 64 --dataset lsun --adv_loss hinge --version biggan_lsun --parallel True --gpus 0,1,2,3 --use_tensorboard True
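The multi-GPU command passes booleans as the strings `True`/`False` and the GPU list as a comma-separated string. I have not reproduced how `main.py` actually parses these, so the following is only a sketch of one way to do it with `argparse`; the helper names `str2bool`, `parse_gpus`, and `build_parser` are assumptions made for illustration. A custom converter matters here because `type=bool` would turn the string `"False"` into `True`.

```python
import argparse
from typing import List


def str2bool(value: str) -> bool:
    """Convert 'True'/'False' style strings into real booleans."""
    lowered = value.lower()
    if lowered in ("true", "1", "yes"):
        return True
    if lowered in ("false", "0", "no"):
        return False
    raise argparse.ArgumentTypeError(f"expected a boolean, got {value!r}")


def parse_gpus(value: str) -> List[int]:
    """Turn a string such as '0,1,2,3' into a list of device ids."""
    return [int(part) for part in value.split(",") if part.strip()]


def build_parser() -> argparse.ArgumentParser:
    """Hypothetical parser covering only the flags used in the command above."""
    parser = argparse.ArgumentParser(description="BigGAN training flags (sketch)")
    parser.add_argument("--batch_size", type=int, default=64)
    parser.add_argument("--parallel", type=str2bool, default=False)
    parser.add_argument("--gpus", type=parse_gpus, default=[0])
    parser.add_argument("--use_tensorboard", type=str2bool, default=False)
    return parser


# Parse the multi-GPU flags from an explicit list instead of sys.argv.
args = build_parser().parse_args(
    ["--parallel", "True", "--gpus", "0,1,2,3", "--use_tensorboard", "True"]
)
```

With values parsed this way, `args.gpus` could be handed to `torch.nn.DataParallel(model, device_ids=args.gpus)` when `args.parallel` is set.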

- Cross-replica BatchNorm (Ioffe & Szegedy, 2015) is not used in G

- CPU

- GPU

LSUN Pretrained model Download

The paper describes several methods to avoid model collapse; please see the paper and retrain your model.

- In fact, as mentioned in the paper, the model will collapse

- I trained this model on the LSUN dataset, which may explain the poor performance, since the classroom class is more complex than ImageNet

LSUN dataset (two classes): classroom and church_outdoor