AIConfig - the open-source framework for building production-grade AI applications

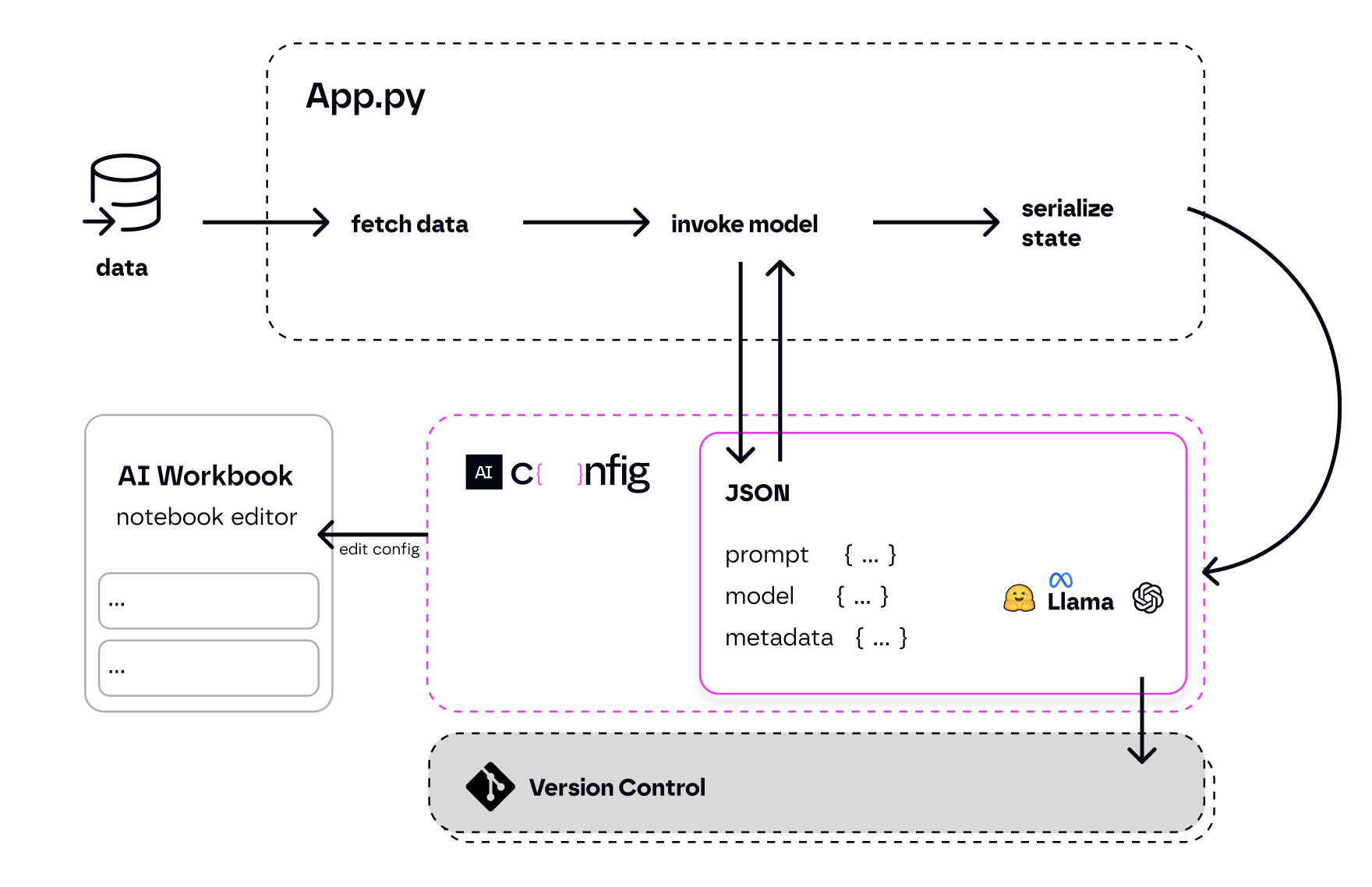

AIConfig is a framework that makes it easy to build generative AI applications for production. It manages generative AI prompts, models and model parameters as JSON-serializable configs that can be version controlled, evaluated, monitored and opened in a local editor for rapid prototyping.

It allows you to store and iterate on generative AI behavior separately from your application code, offering a streamlined AI development workflow.

For VS Code Users:

- Install the AIConfig Editor VS Code Extension

If you're not using VS Code, follow these steps:

```bash
pip3 install python-aiconfig
export OPENAI_API_KEY='your-key'
aiconfig edit
```

Check out the full Getting Started tutorial.

```bash
# for Python installation:
pip3 install python-aiconfig
# or using poetry: poetry add python-aiconfig

# for Node.js installation:
npm install aiconfig
# or using yarn: yarn add aiconfig
```

Note: You need to install the Python AIConfig package to use AIConfig Editor to create and iterate on prompts, even if you plan to use the Node SDK to interact with your aiconfig in your application code.

You must specify your OpenAI API key. Open your terminal and add this line, replacing `your-api-key-here` with your API key: `export OPENAI_API_KEY='your-api-key-here'`.
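Since the key is supplied through the `OPENAI_API_KEY` environment variable, a script can check for it up front so that a missing key surfaces with a clear message before any prompt is run. The helper below, `require_openai_key`, is a hypothetical standard-library sketch and not part of AIConfig:

```python
import os


def require_openai_key(env_var: str = "OPENAI_API_KEY") -> str:
    """Return the API key from the environment, or raise a clear error.

    Hypothetical helper (not part of AIConfig): it only checks that the
    exported variable is present and non-empty.
    """
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(
            f"{env_var} is not set; run: export {env_var}='your-api-key-here'"
        )
    return key
```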

AIConfig Editor helps you visually create and edit the prompts and model parameters stored as AIConfigs.

- Install the AIConfig Editor for VS Code

- Open the `travel.aiconfig.json` file in VS Code. This automatically opens the AIConfig Editor in VS Code.

With AIConfig Editor, you can create and run prompts with complex chaining and variables. The editor auto-saves every 15 seconds, and you can manually save with the Save button. Your updates will be reflected in the AIConfig JSON file. See this example of a prompt chain created with the editor:

Corresponding AIConfig JSON file, `travel.aiconfig.json`:

```json
{
  "name": "NYC Trip Planner",
  "description": "Intrepid explorer with ChatGPT and AIConfig",
  "schema_version": "latest",
  "metadata": {
    "models": {
      "gpt-3.5-turbo": {
        "model": "gpt-3.5-turbo",
        "top_p": 1,
        "temperature": 1
      },
      "gpt-4": {
        "model": "gpt-4",
        "max_tokens": 3000
      }
    },
    "default_model": "gpt-3.5-turbo"
  },
  "prompts": [
    {
      "name": "get_activities",
      "input": "Tell me 10 fun attractions to do in NYC."
    },
    {
      "name": "gen_itinerary",
      "input": "Generate an itinerary ordered by {{order_by}} for these activities: {{get_activities.output}}.",
      "metadata": {
        "model": "gpt-4",
        "parameters": {
          "order_by": "geographic location"
        }
      }
    }
  ]
}
```
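To make the structure of this file concrete, here is a standard-library sketch of how its fields fit together: each prompt uses its own `metadata.model` if set, otherwise `default_model`; `{{order_by}}` is filled from parameters; and `{{get_activities.output}}` makes `gen_itinerary` depend on `get_activities`, so that prompt has to run first. This is an illustration under assumptions, not the SDK's resolution logic: the helper names (`model_for`, `dependencies`, `render`, `run_chain`, `call_model`) are hypothetical, the `{{name}}` substitution is a deliberately tiny stand-in for the Handlebars-style templating AIConfig uses, and call-time params are assumed to override the prompt's stored parameters (as the `params={"order_by": "location"}` call in the SDK example below suggests).

```python
from __future__ import annotations

import json
import re
from typing import Callable

# Matches {{name}} and {{prompt_name.output}}. Deliberately simplified: the
# real SDK uses Handlebars-style templates, which support much more.
PLACEHOLDER = re.compile(r"\{\{\s*([\w.]+)\s*\}\}")


def load_config(path: str) -> dict:
    """Read an aiconfig JSON file into a plain dict."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)


def model_for(config: dict, prompt: dict) -> tuple[str, dict]:
    """Pick the prompt's metadata.model, else default_model, plus its settings."""
    meta = config["metadata"]
    name = prompt.get("metadata", {}).get("model") or meta["default_model"]
    return name, meta.get("models", {}).get(name, {})


def dependencies(prompt: dict) -> list[str]:
    """Prompts referenced as {{<prompt_name>.output}} in this prompt's input."""
    refs = PLACEHOLDER.findall(prompt["input"])
    return [r[: -len(".output")] for r in refs if r.endswith(".output")]


def render(prompt: dict, params: dict, outputs: dict) -> str:
    """Fill placeholders; call-time params override the prompt's own parameters."""
    values = {**prompt.get("metadata", {}).get("parameters", {}), **params}
    values.update({f"{name}.output": text for name, text in outputs.items()})

    def fill(match: re.Match) -> str:
        key = match.group(1)
        if key not in values:
            raise KeyError(f"unresolved placeholder: {key}")
        return str(values[key])

    return PLACEHOLDER.sub(fill, prompt["input"])


def run_chain(config: dict, target: str, params: dict,
              call_model: Callable[[str, dict, str], str]) -> dict:
    """Run `target` after the prompts it depends on (assumes no cycles)."""
    prompts = {p["name"]: p for p in config["prompts"]}
    outputs: dict[str, str] = {}

    def run(name: str) -> None:
        if name in outputs:
            return
        prompt = prompts[name]
        for dep in dependencies(prompt):
            run(dep)  # dependencies first, depth-first
        model, settings = model_for(config, prompt)
        outputs[name] = call_model(model, settings, render(prompt, params, outputs))

    run(target)
    return outputs
```

With the file above, `run_chain(load_config("travel.aiconfig.json"), "gen_itinerary", {"order_by": "location"}, call_model)` would call `call_model` twice: first for `get_activities` with the `gpt-3.5-turbo` settings, then for `gen_itinerary` with the `gpt-4` settings and `order_by` set to `location`.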

You can run the prompts from the aiconfig generated by AIConfig Editor in your application code using either the Python or the Node SDK. The Python SDK is shown below.

```python
import asyncio

from aiconfig import AIConfigRuntime, InferenceOptions

# load your AIConfig
config = AIConfigRuntime.load("travel.aiconfig.json")

# set up streaming
inference_options = InferenceOptions(stream=True)

# run a prompt; run_with_dependencies=True also runs get_activities,
# whose output gen_itinerary references
async def gen_nyc_itinerary():
    gen_itinerary_response = await config.run(
        "gen_itinerary",
        params={"order_by": "location"},
        options=inference_options,
        run_with_dependencies=True,
    )
    return gen_itinerary_response

asyncio.run(gen_nyc_itinerary())

# save the aiconfig to disk and serialize outputs from the model run
config.save("updated_travel.aiconfig.json", include_outputs=True)
```

You can quickly iterate on and edit your aiconfig using AIConfig Editor.

- Open your terminal

- Run this command:

```bash
aiconfig edit --aiconfig-path=travel.aiconfig.json
```

A new tab with AIConfig Editor opens in your default browser at http://localhost:8080/ with the prompts, chaining logic, and settings from travel.aiconfig.json. The editor auto-saves every 15 seconds, and you can manually save with the Save button. Your updates will be reflected in the AIConfig file.

Today, application code is tightly coupled with the gen AI settings for the application: prompts, parameters, and model-specific logic are all jumbled in with app code. This coupling:

- increases complexity

- makes it hard to iterate on prompts or try different models

- makes it hard to evaluate prompt/model performance

AIConfig helps unwind complexity by separating prompts, model parameters, and model-specific logic from your application.

- simplifies application code: simply call `config.run()`

- open the `aiconfig` in a playground to iterate quickly

- version control and evaluate the `aiconfig`, the AI artifact for your application

- Prompts as Configs: standardized JSON format to store prompts and model settings in source control.

- Editor for Prompts: Prototype and quickly iterate on your prompts and model settings with AIConfig Editor.

- Model-agnostic and multimodal SDK: Python & Node SDKs to use `aiconfig` in your application code. AIConfig is designed to be model-agnostic and multimodal, so you can extend it to work with any generative AI model, including text, image and audio.

- Extensible: Extend AIConfig to work with any model and your own endpoints.

- Collaborative Development: AIConfig enables different people to work on prompts and app development, and to collaborate by sharing the `aiconfig` artifact.

AIConfig makes it easy to work with complex prompt chains, various models, and advanced generative AI workflows. Start with these recipes and access more in /cookbooks:

- RAG with AIConfig

- Function Calling with OpenAI

- CLI Chatbot

- Prompt Routing

- Multi-LLM Consistency

- Safety Guardrails for LLMs - LLaMA Guard

- Chain-of-Verification

AIConfig supports the following models out of the box. See examples:

- OpenAI models (GPT-3, GPT-3.5, GPT-4, DALL-E 3)

- Gemini

- LLaMA

- LLaMA Guard

- Google PaLM models (PaLM chat)

- Hugging Face Text Generation Task models (e.g., Mistral-7B)

If you need to use a model that isn't provided out of the box, you can implement a ModelParser for it.

See instructions on how to support a new model in AIConfig.
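The idea behind a ModelParser is that each model family gets its own adapter, and the runtime dispatches on the model name stored in the config. The sketch below illustrates only that dispatch pattern, using hypothetical names (`ToyModelParser`, `EchoParser`, `PARSERS`, `register_parser`, `run_prompt`). It is not the SDK's interface: the real `ModelParser` base class defines its own abstract methods (among them `serialize`, `deserialize` and `run`), so follow the instructions referenced above when writing an actual extension.

```python
from __future__ import annotations

from abc import ABC, abstractmethod


class ToyModelParser(ABC):
    """Hypothetical stand-in for a model adapter (not the aiconfig ModelParser)."""

    @abstractmethod
    def id(self) -> str:
        """Model name this parser handles, as it appears in the config."""

    @abstractmethod
    def run(self, prompt_text: str, settings: dict) -> str:
        """Send the resolved prompt to the model and return its text output."""


# Registry mapping model names to parser instances.
PARSERS: dict[str, ToyModelParser] = {}


def register_parser(parser: ToyModelParser) -> None:
    """Make a parser available under the model name it reports."""
    PARSERS[parser.id()] = parser


def run_prompt(model_name: str, prompt_text: str, settings: dict) -> str:
    """Dispatch to whichever parser is registered for model_name."""
    try:
        parser = PARSERS[model_name]
    except KeyError:
        raise LookupError(f"no parser registered for model {model_name!r}") from None
    return parser.run(prompt_text, settings)


class EchoParser(ToyModelParser):
    """Example 'model' that just upper-cases the prompt; handy in tests."""

    def id(self) -> str:
        return "echo-model"

    def run(self, prompt_text: str, settings: dict) -> str:
        return prompt_text.upper()


register_parser(EchoParser())
```

Keeping the registry keyed by model name mirrors how the config refers to models by name, so supporting a new model means adding one adapter rather than touching application code.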

AIConfig is designed to be customized and extended for your use case. The Extensibility guide goes into more detail.

Currently, there are 3 core ways to extend AIConfig:

- Supporting other models: define a ModelParser extension

- Callback event handlers: tracing and monitoring

- Custom metadata: save custom fields in `aiconfig`
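Callback event handlers are the hook for tracing and monitoring: the runtime emits an event around each step, and every registered handler receives it. The following is a minimal sketch of that pattern only. `SimpleCallbackManager`, `Event`, `traced_run` and the event names `on_run_start`, `on_run_complete` and `on_run_error` are hypothetical and chosen for illustration; the SDK's own callback classes and event names may differ.

```python
from __future__ import annotations

import time
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Event:
    """One traced step; the name and fields here are hypothetical."""
    name: str
    data: dict[str, Any]
    ts: float = field(default_factory=time.time)


Handler = Callable[[Event], None]


class SimpleCallbackManager:
    """Fans each event out to every handler, in registration order."""

    def __init__(self, handlers: list[Handler] | None = None) -> None:
        self.handlers: list[Handler] = list(handlers or [])

    def emit(self, name: str, **data: Any) -> None:
        event = Event(name, data)
        for handler in self.handlers:
            handler(event)


def traced_run(manager: SimpleCallbackManager, prompt_name: str,
               call: Callable[[], str]) -> str:
    """Wrap a prompt run with start and complete (or error) events."""
    manager.emit("on_run_start", prompt_name=prompt_name)
    start = time.perf_counter()
    try:
        output = call()
    except Exception as exc:
        manager.emit("on_run_error", prompt_name=prompt_name, error=repr(exc))
        raise  # tracing must not swallow the failure
    manager.emit("on_run_complete", prompt_name=prompt_name,
                 output=output, seconds=time.perf_counter() - start)
    return output
```

A handler can be as simple as a function that appends events to a log or forwards them to a monitoring service.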

We are rapidly developing AIConfig! We welcome PR contributions and ideas for how to improve the project.

- Join the conversation on Discord in the `#aiconfig` channel

- Open an issue for feature requests

- Read our contributing guide

We currently release new tagged versions of the PyPI and npm packages every week. Hotfixes go out when completed.
