Simple static web-based mask drawer, supporting semantic drawing with Segment Anything Model (SAM) and Video Segmentation Propagation with XMem.

- Video Segmentation with XMem

(Demo panels, left to right: original video, first frame, segmentation, VideoSeg)

The tools, from top to bottom:

- Clear image

- Drawer

- SAM point-segmenter (needs backend)

- SAM rect-segmenter (needs backend)

- SAM Seg-Everything (needs backend)

- Undo

- Eraser

- Download

- VideoSeg (needs backend)

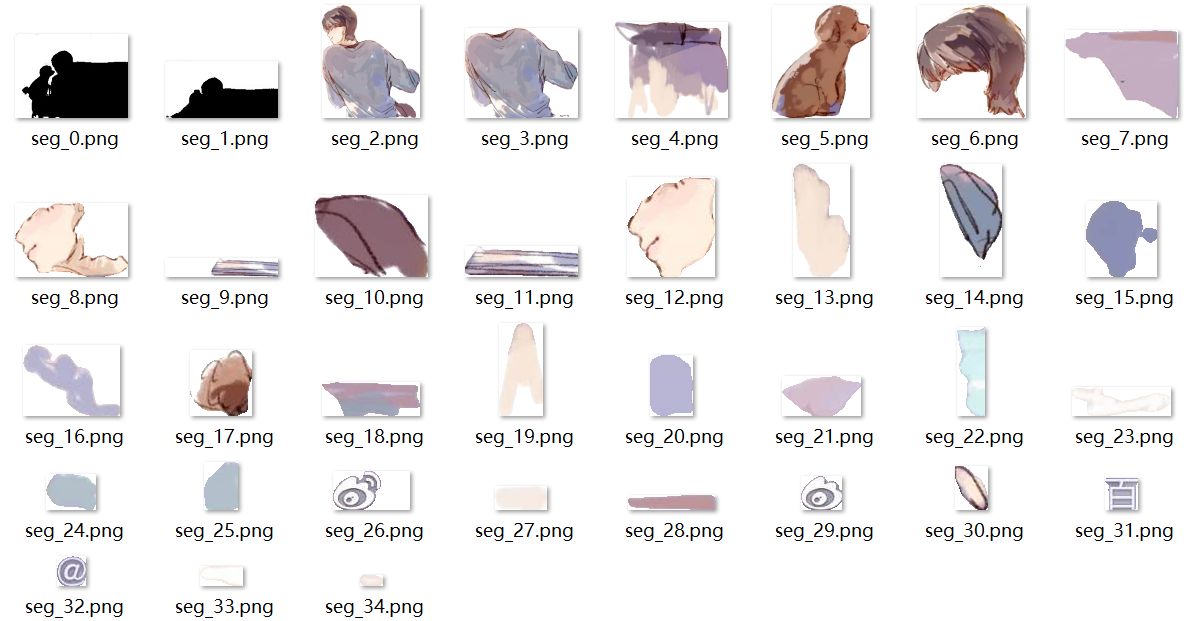

After Seg-Everything, the downloaded files also include a .zip file that contains all the cut-outs.
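If you want to post-process the cut-outs in a script, the archive can be unpacked with Python's standard `zipfile` module. This is a minimal sketch: the function name `extract_cutoffs` and the archive name in the usage line are hypothetical, and nothing is assumed about how the files inside the archive are named.

```python
import zipfile
from pathlib import Path


def extract_cutoffs(zip_path, out_dir):
    """Extract every file in the archive and return the extracted names, sorted."""
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(zip_path) as archive:
        # Skip directory entries; keep only real files.
        names = sorted(n for n in archive.namelist() if not n.endswith("/"))
        archive.extractall(out_dir, members=names)
    return names
```

For example, `extract_cutoffs("downloaded.zip", "cutoffs")` would unpack a downloaded archive (whatever name your browser saved it under) into a `cutoffs` folder.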

For video segmentation, XMem requires an initial segmentation map, which is easy to produce with SAM. Upload a video the same way you upload an image and draw a segmentation on it. Then click the last button, VideoSeg, to send it to the server and wait for the video segmentation result to download automatically.

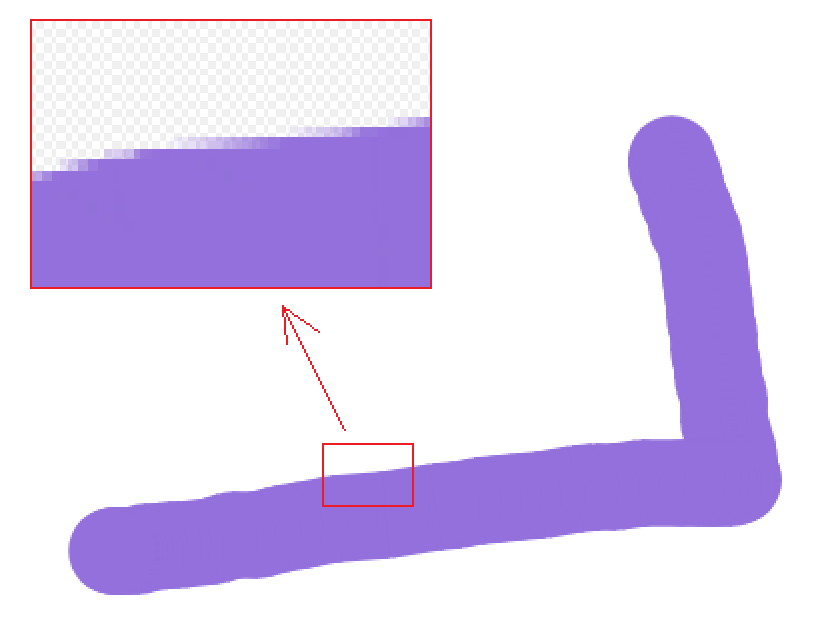

Note: avoid drawing the segmentation map by hand with the Drawer tool (the same applies to Eraser). Hand-drawn strokes produce regions that are not a single solid color, especially along the edges, which is not good for XMem video segmentation. For more details, please refer to the original paper.
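One way to check a mask for this problem before uploading is to count its distinct colors and, if needed, snap every pixel to the nearest color of a known palette. The sketch below uses NumPy and is not part of this tool. It assumes the mask is already loaded as an `(H, W, C)` integer array and that you know which colors the mask is supposed to contain; `distinct_colors` and `snap_to_palette` are hypothetical helper names.

```python
import numpy as np


def distinct_colors(mask):
    """Return the unique colors of an (H, W, C) mask as an (N, C) array."""
    return np.unique(mask.reshape(-1, mask.shape[-1]), axis=0)


def snap_to_palette(mask, palette):
    """Replace every pixel with the nearest palette color (squared Euclidean distance)."""
    palette = np.asarray(palette, dtype=np.int32)               # (K, C)
    pixels = mask.reshape(-1, mask.shape[-1]).astype(np.int32)  # (H*W, C)
    # Distance from every pixel to every palette entry: shape (H*W, K).
    dist = ((pixels[:, None, :] - palette[None, :, :]) ** 2).sum(axis=2)
    nearest = palette[dist.argmin(axis=1)]
    return nearest.reshape(mask.shape).astype(mask.dtype)
```

A mask with one object on a black background should report exactly two distinct colors. If it reports more, blended edge pixels are present, and `snap_to_palette(mask, [(0, 0, 0), object_color])` would remove them. The distance table holds `H*W*K*C` integers, so this is meant for small palettes.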

If you don't need SAM for segmentation, just open segDrawer.html and use the tools other than the SAM segmenters.

To use the SAM segmenters, follow these steps (running on CPU can be slow):

- Download the models as described in segment-anything and XMem. For example:

wget https://dl.fbaipublicfiles.com/segment_anything/sam_vit_l_0b3195.pth

wget -P ./XMem/saves/ https://github.com/hkchengrex/XMem/releases/download/v1.0/XMem.pth

- Launch the backend

python server.py

- Open the page in a browser

http://127.0.0.1:8000
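Before launching the backend, it can help to confirm that both checkpoints landed where the wget commands above put them. The following is a small sketch rather than part of the repository: the helper name `missing_checkpoints` is hypothetical, and the paths assume you downloaded the `vit_l` SAM checkpoint into the working directory and `XMem.pth` into `./XMem/saves/`.

```python
from pathlib import Path

# Paths taken from the wget commands above; adjust them if you
# downloaded a different SAM checkpoint.
EXPECTED_CHECKPOINTS = (
    Path("sam_vit_l_0b3195.pth"),
    Path("XMem") / "saves" / "XMem.pth",
)


def missing_checkpoints(root="."):
    """Return the expected checkpoint paths that are absent or empty under root."""
    root = Path(root)
    missing = []
    for rel in EXPECTED_CHECKPOINTS:
        path = root / rel
        # An empty file usually means an interrupted download.
        if not path.is_file() or path.stat().st_size == 0:
            missing.append(rel)
    return missing
```

Calling `missing_checkpoints()` from the repository root returns an empty list when both files are in place.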

To configure CPU/GPU and the model, change the following lines in server.py:

sam_checkpoint = "sam_vit_l_0b3195.pth" # "sam_vit_l_0b3195.pth" or "sam_vit_h_4b8939.pth"

model_type = "vit_l" # "vit_l" or "vit_h"

device = "cuda" # "cuda" if torch.cuda.is_available() else "cpu"
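The checkpoint name and `model_type` have to agree, and `"cuda"` only works when a GPU is available. As an illustration, the three settings could be derived instead of edited by hand. This is a sketch under assumptions, not what server.py does: `infer_model_type` and `pick_device` are hypothetical helpers, and the file-name pattern is based only on the two checkpoint names listed above.

```python
import re


def infer_model_type(checkpoint_name):
    """Derive the SAM model type ("vit_l", "vit_h", ...) from a checkpoint file name."""
    match = re.search(r"sam_(vit_[a-z])_", checkpoint_name)
    if match is None:
        raise ValueError(f"cannot infer model type from {checkpoint_name!r}")
    return match.group(1)


def pick_device():
    """Return "cuda" when PyTorch reports a usable GPU, otherwise "cpu"."""
    try:
        import torch
    except ImportError:
        # Without PyTorch there is no GPU path to choose.
        return "cpu"
    return "cuda" if torch.cuda.is_available() else "cpu"


sam_checkpoint = "sam_vit_l_0b3195.pth"
model_type = infer_model_type(sam_checkpoint)
device = pick_device()
```

With this approach, switching to `sam_vit_h_4b8939.pth` only requires changing `sam_checkpoint`.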

Follow this Colab example, or run it on Colab directly. You need to register an ngrok account and replace "{your_token}" with your own token.