Official repository for "Simple but Effective: CLIP Embeddings for Embodied AI".

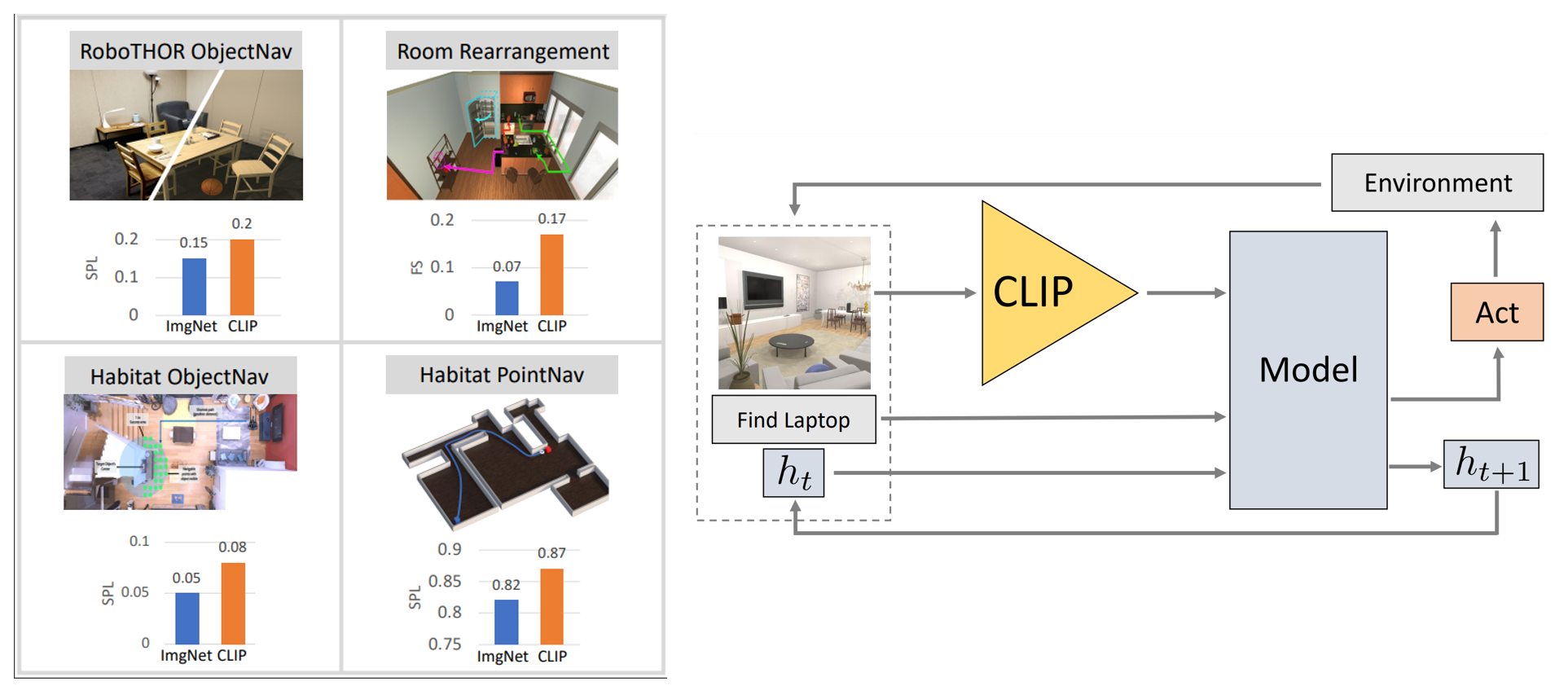

We show that frozen visual representations from CLIP achieve competitive performance on navigation-heavy tasks in Embodied AI.

This repository includes all code and pretrained models needed to replicate the experiments in our paper. Forks of other repositories are included as branches, which lets us centralize our experiments and track changes in one place.

See the following links for detailed instructions on replicating each experiment:

- Baselines
  - RoboTHOR ObjectNav (Sec. 4.1)
  - iTHOR Rearrangement (Sec. 4.2)
  - Habitat ObjectNav (Sec. 4.3)
  - Habitat PointNav (Sec. 4.4)
- Probing for Navigational Primitives (Sec. 5)
- ImageNet Acc vs. ObjectNav Success (Sec. 6)
- Zero-shot ObjectNav in RoboTHOR (Sec. 7)

@inproceedings{khandelwal2022:embodied-clip,
    author    = {Khandelwal, Apoorv and Weihs, Luca and Mottaghi, Roozbeh and Kembhavi, Aniruddha},
    title     = {Simple but Effective: CLIP Embeddings for Embodied AI},
    booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
    month     = {June},
    year      = {2022}
}