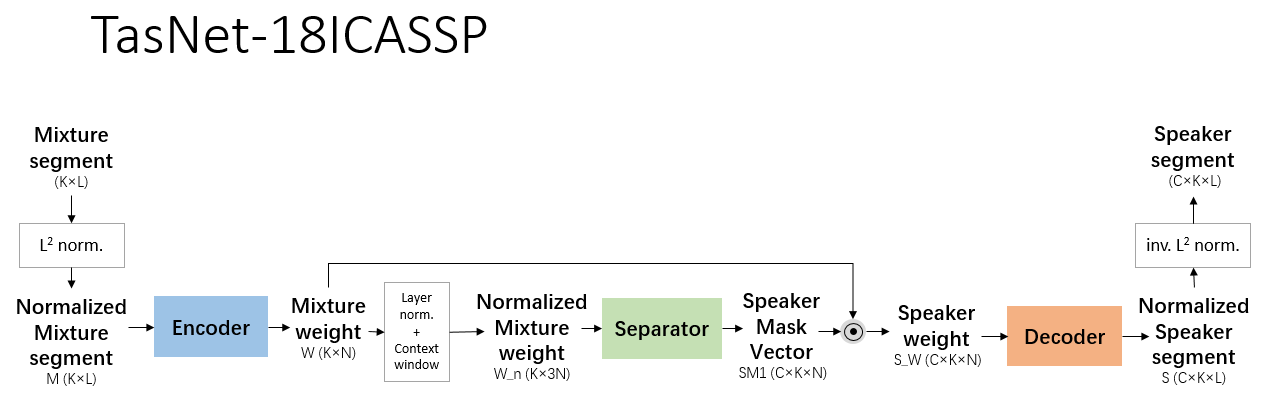

This is a TensorFlow implementation of "TasNet: Time-domain Audio Separation Network for Real-time, single-channel speech separation", published at ICASSP 2018 by Yi Luo and Nima Mesgarani.

This implementation takes ododoyo's as a reference, especially for the SI-SNR and PIT training parts. An extra MSE training objective and a PIT training policy were implemented by me. Note that this implementation does not yet support variable-length segments in training.
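For readers unfamiliar with the two terms above, here is a minimal NumPy sketch of an SI-SNR score combined with permutation invariant training (PIT). It is an illustration only, not the code in `tf_net.py`: the function names `si_snr` and `pit_si_snr`, the `eps` value, and the `(n_sources, n_samples)` array layout are all assumptions made for this example.

```python
import itertools

import numpy as np


def si_snr(estimate, target, eps=1e-8):
    """Scale-invariant SNR in dB between two 1-D signals (hypothetical helper)."""
    # Remove the mean so a DC offset does not affect the score.
    estimate = estimate - np.mean(estimate)
    target = target - np.mean(target)
    # Project the estimate onto the target to get the scaled reference.
    s_target = np.dot(estimate, target) * target / (np.dot(target, target) + eps)
    # Everything not explained by the target counts as noise.
    e_noise = estimate - s_target
    ratio = (np.dot(s_target, s_target) + eps) / (np.dot(e_noise, e_noise) + eps)
    return 10.0 * np.log10(ratio)


def pit_si_snr(estimates, targets):
    """Best mean SI-SNR over all output-to-speaker assignments.

    Both arrays are assumed to have shape (n_sources, n_samples).
    Returns (best_score, best_perm), where best_perm[i] is the index of
    the estimate assigned to target i.
    """
    n_src = targets.shape[0]
    best_score, best_perm = -np.inf, None
    for perm in itertools.permutations(range(n_src)):
        score = np.mean([si_snr(estimates[p], targets[i])
                         for i, p in enumerate(perm)])
        if score > best_score:
            best_score, best_perm = score, perm
    return best_score, best_perm
```

In training, the negative of the best score would typically serve as the loss, so the network is never penalized for emitting the speakers in a different order than the labels.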

Discussion, (friendly) criticism, and suggestions are always welcome!

- tensorflow 1.8.0

- python 3.5

- librosa

- `params.py` defines all global parameters.

- `data_generator.py` builds the WSJ0 2-mix datasets (following the ICASSP 2016 Deep Clustering paper) and generates batch data for training. Run this file first to generate the datasets, then change the dataset path in `tf_train.py`.

- `tf_net.py` defines the TasNet structure, loss, training optimizer, etc.

- `tf_train.py` trains the model. Replace the dataset path with your own.

- `tf_test.py` evaluates the model performance. This code is not yet well written and is still under repair.

- `mir_eval.py` and `mir_util.py` are forked from ododoyo's and implement the bss_eval calculation in Python rather than MATLAB.
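As a rough illustration of the data preparation step described above, the sketch below mixes two utterances at a chosen signal-to-noise ratio and cuts the result into fixed-length segments. This matters because variable-length segments are not supported in training. It is not the code in `data_generator.py`: the helper names `mix_at_snr` and `fixed_segments`, the SNR value, and the segment length are assumptions for this example, and the waveforms are assumed to be 1-D float arrays already loaded (for instance with `librosa.load`).

```python
import numpy as np


def mix_at_snr(s1, s2, snr_db):
    """Scale s2 so that s1 is snr_db dB stronger, then sum (hypothetical helper)."""
    # Truncate both signals to the shorter length.
    n = min(len(s1), len(s2))
    s1, s2 = s1[:n], s2[:n]
    # Average power of each source; the small constant avoids division by zero.
    p1 = np.mean(s1 ** 2)
    p2 = np.mean(s2 ** 2) + 1e-12
    # Gain that makes power(s1) / power(gain * s2) equal 10^(snr_db / 10).
    gain = np.sqrt(p1 / (p2 * 10.0 ** (snr_db / 10.0)))
    s2 = gain * s2
    return s1 + s2, s1, s2


def fixed_segments(signal, seg_len):
    """Split a 1-D signal into non-overlapping segments, dropping the remainder."""
    n_seg = len(signal) // seg_len
    return signal[: n_seg * seg_len].reshape(n_seg, seg_len)
```

For example, `mix_at_snr(a, b, 5.0)` returns the mixture together with the two scaled sources, and `fixed_segments(mixture, 4000)` would yield equal-length training segments; both numbers are placeholders rather than values taken from `params.py`.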