A Unified Transformer Framework for Group-based Segmentation: Co-Segmentation, Co-Saliency Detection and Video Salient Object Detection

08/09/2022 Video Inpainting script and model coming soon!

22/07/2022 Add demo to Huggingface Spaces with Gradio.

| Paper Link | Huggingface Demo |
|---|---|
| [paper] |  |

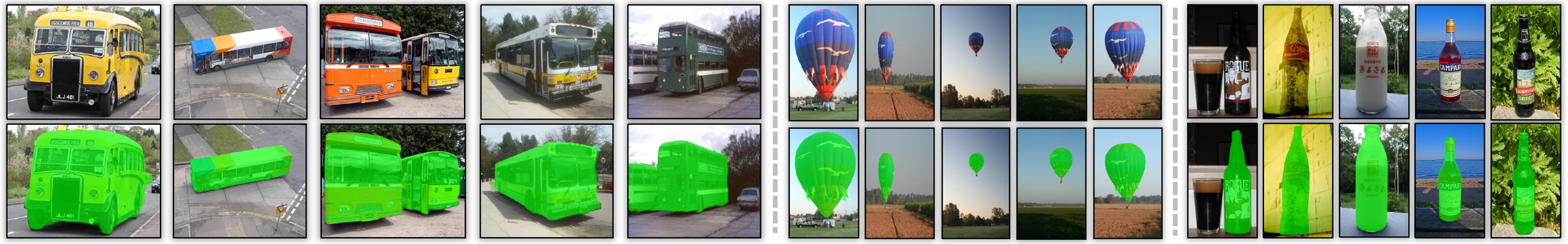

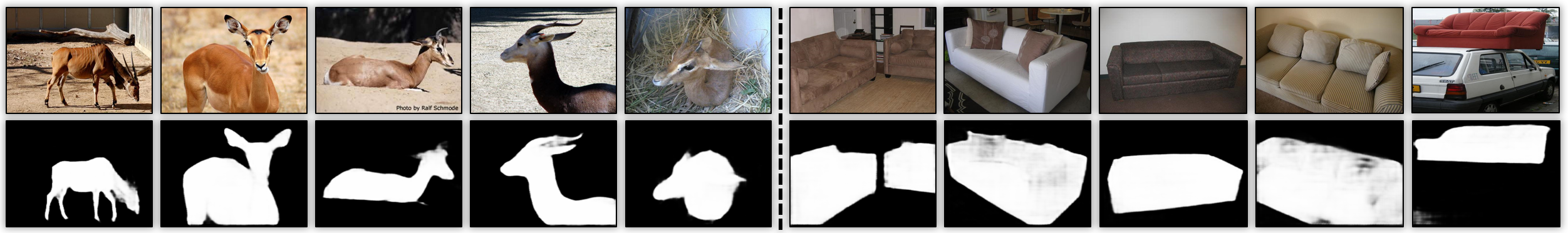

UFO is a simple and unified framework for addressing co-object segmentation tasks: co-segmentation, co-saliency detection and video salient object detection. Humans tend to mine objects by learning from a group of images or several frames of video, since we live in a dynamic world. In computer vision, much research focuses on co-segmentation (CoS), co-saliency detection (CoSD) and video salient object detection (VSOD) to discover co-occurrent objects. However, previous approaches design a separate network for each of these tasks, which limits how easily such deep learning frameworks can be used. In this paper, we introduce a unified framework to tackle these issues, termed UFO (Unified Framework for Co-Object Segmentation). All tasks share the same framework.

torch >= 1.7.0

torchvision >= 0.7.0

python3

Training on group-based images. We use the COCO2017 train set with the provided group split dict.npy.

python main.py

Training on video (w/o flow). We load the weights pre-trained on the static image dataset and use DAVIS and FBMS to train our framework.

python finetune.py --model=models/image_best.pth --use_flow=False

Training on video (w/ flow). Same as above, except that we use DAVIS_flow and FBMS_flow to train our network.

python finetune.py --model=models/image_best.pth --use_flow=True

Generate the image results [checkpoint]

python test.py --model=models/image_best.pth --data_path=CoSdatasets/MSRC7/ --output_dir=CoS_results/MSRC7 --task=CoS_CoSD

Generate the video results [checkpoint]

python test.py --model=models/video_best.pth --data_path=VSODdatasets/DAVIS/ --output_dir=VSOD_results/wo_optical_flow/DAVIS --task=VSOD

Generate the video results with optical flow [checkpoint]

python test.py --model=models/video_flow_best.pth --data_path=VSODdatasets/DAVIS_flow/ --output_dir=VSOD_results/w_optical_flow/w_optical_flow --use_flow=True --task=VSOD

- Pre-Computed Results: Please download the prediction results of our framework from the Results section.

- Evaluation Toolbox: We use the standard evaluation toolbox from the CoCA benchmark.
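For a quick sanity check of a predicted map against its ground-truth mask before running the full toolbox, the sketch below computes two measures that are commonly reported for saliency and segmentation: mean absolute error and intersection over union. It is an assumption that these match what the evaluation toolbox reports; the function names are hypothetical and this is not the toolbox's implementation. Maps are passed as flattened sequences of values in [0, 1] so the example has no dependencies.

```python
from typing import Sequence


def mean_absolute_error(pred: Sequence[float], gt: Sequence[float]) -> float:
    """Average per-pixel absolute difference between two flattened maps."""
    if len(pred) != len(gt) or len(pred) == 0:
        raise ValueError("pred and gt must be non-empty and of equal length")
    return sum(abs(p - g) for p, g in zip(pred, gt)) / len(pred)


def intersection_over_union(pred: Sequence[float], gt: Sequence[float],
                            threshold: float = 0.5) -> float:
    """Jaccard index after binarizing both maps at `threshold`."""
    if len(pred) != len(gt):
        raise ValueError("pred and gt must be of equal length")
    pred_bin = [p >= threshold for p in pred]
    gt_bin = [g >= threshold for g in gt]
    intersection = sum(p and g for p, g in zip(pred_bin, gt_bin))
    union = sum(p or g for p, g in zip(pred_bin, gt_bin))
    # Two empty masks agree perfectly, so define their IoU as 1.0.
    return intersection / union if union else 1.0


# Toy 4-pixel example (hypothetical values, not real predictions).
toy_pred = [0.9, 0.8, 0.1, 0.4]
toy_gt = [1.0, 1.0, 0.0, 1.0]
toy_mae = mean_absolute_error(toy_pred, toy_gt)
toy_iou = intersection_over_union(toy_pred, toy_gt)
```

Reported benchmark numbers should still come from the toolbox itself, since thresholding and averaging conventions differ between benchmarks.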

- Co-Segmentation (CoS) on PASCAL-VOC, iCoseg, Internet and MSRC [Pre-computed Results]

- Co-Saliency Detection (CoSD) on CoCA, CoSOD3k and CoSal2015 [Pre-computed Results]

- Video Salient Object Detection (VSOD) on DAVIS16 val set [Pre-computed Results]

- [Optional] Single Object Tracking (SOT) on GOT-10k val set

- Video inpainting on YouTube-VOS test set (Currently not fully trained)

python demo.py --data_path=./demo_mp4/video/kobe.mp4 --output_dir=./demo_mp4/result

bash demo_bullet_chat.sh
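The `test.py` invocations in this README differ only in their flag values, so they can be assembled programmatically when running several datasets in a row. The sketch below builds the argument list from the flags shown in the commands (`--model`, `--data_path`, `--output_dir`, `--use_flow`, `--task`); the helper name `build_test_command` is hypothetical and is not part of the repository.

```python
import shlex
from typing import List


def build_test_command(model: str, data_path: str, output_dir: str,
                       task: str, use_flow: bool = False) -> List[str]:
    """Return the argv list for one test.py run, mirroring the README commands."""
    cmd = [
        "python", "test.py",
        f"--model={model}",
        f"--data_path={data_path}",
        f"--output_dir={output_dir}",
    ]
    if use_flow:
        # The README passes this flag only for the optical-flow model.
        cmd.append("--use_flow=True")
    cmd.append(f"--task={task}")
    return cmd


# Values copied from the image and video commands in this README.
image_cmd = build_test_command(
    model="models/image_best.pth",
    data_path="CoSdatasets/MSRC7/",
    output_dir="CoS_results/MSRC7",
    task="CoS_CoSD",
)
video_cmd = build_test_command(
    model="models/video_best.pth",
    data_path="VSODdatasets/DAVIS/",
    output_dir="VSOD_results/wo_optical_flow/DAVIS",
    task="VSOD",
)

# Print shell-ready strings for inspection before running anything.
for cmd in (image_cmd, video_cmd):
    print(shlex.join(cmd))
```

Each list can be passed to `subprocess.run(cmd, check=True)` from the repository root to execute the run; `shlex.join` requires Python 3.8 or later.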

If you find the code useful, please consider citing our paper using the following BibTeX entries.

@misc{2203.04708,

Author = {Yukun Su and Jingliang Deng and Ruizhou Sun and Guosheng Lin and Qingyao Wu},

Title = {A Unified Transformer Framework for Group-based Segmentation: Co-Segmentation, Co-Saliency Detection and Video Salient Object Detection},

Year = {2022},

Eprint = {arXiv:2203.04708},

}

@article{su2023unified,

title = {A Unified Transformer Framework for Group-based Segmentation: Co-Segmentation, Co-Saliency Detection and Video Salient Object Detection},

author = {Yukun Su and Jingliang Deng and Ruizhou Sun and Guosheng Lin and Qingyao Wu},

journal = {IEEE Transactions on Multimedia},

year = {2023},

publisher = {IEEE}

}

Our project references code from the following repos.