Reverse engineered ChatGPT proxy (bypass Cloudflare 403 Access Denied)

If this project is helpful to you, please consider donating to support its continued maintenance, or you can pay for consulting and technical support services.

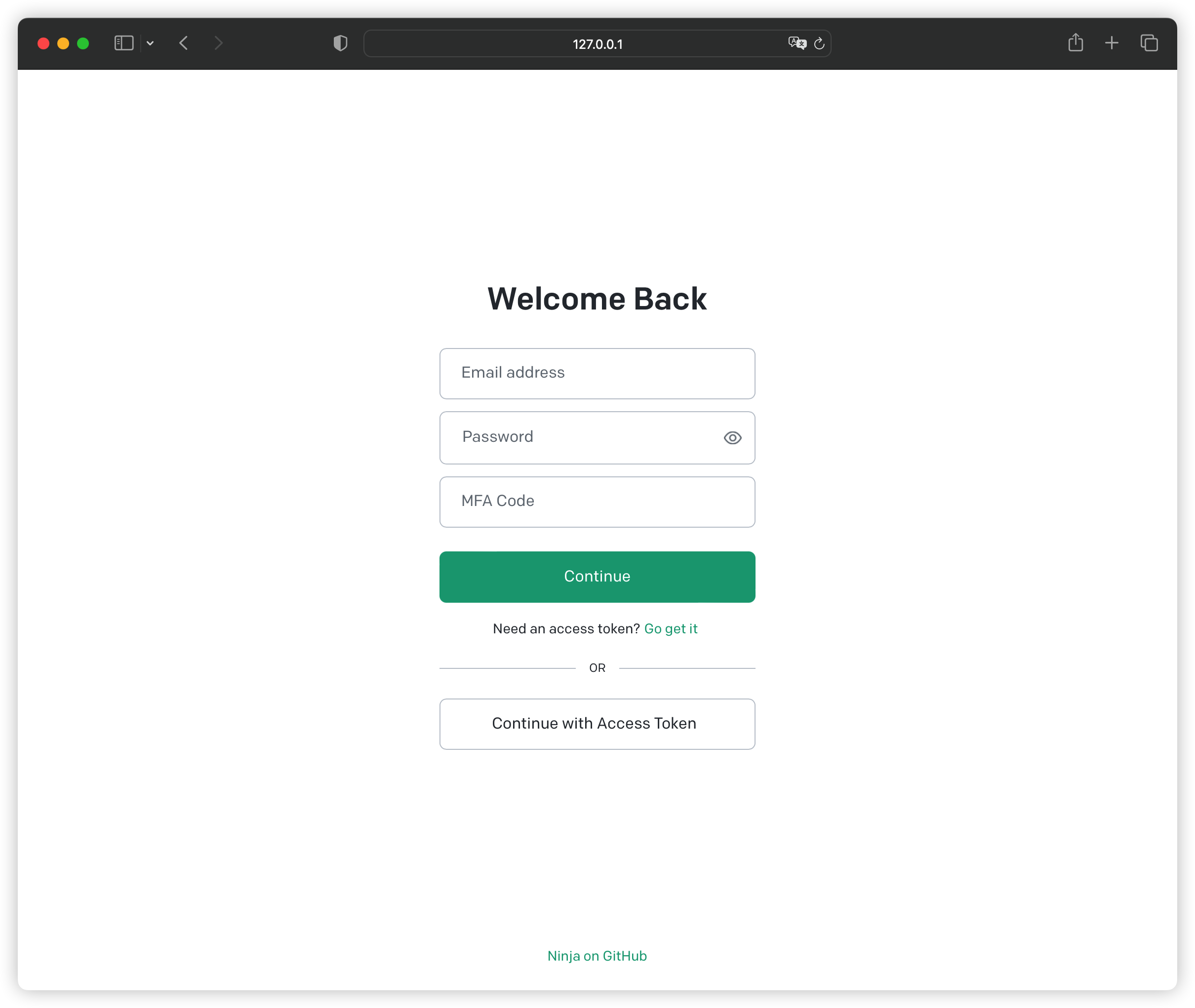

- API key acquisition

- Email/password account authentication (Google/Microsoft third-party login not supported)

- Supports obtaining RefreshToken

- `ChatGPT-API`/`OpenAI-API`/`ChatGPT-to-API` HTTP API proxy (for third-party client access)

- IP proxy pool support (an IPv6 subnet can be used as the proxy pool)

- ChatGPT WebUI

- Very small memory footprint

Limitations: This cannot bypass OpenAI's outright IP ban

Sending a `GPT-4` or `GPT-3.5` conversation, or creating an API key, requires an `Arkose Token` as a parameter. Only two solutions are supported for the time being.

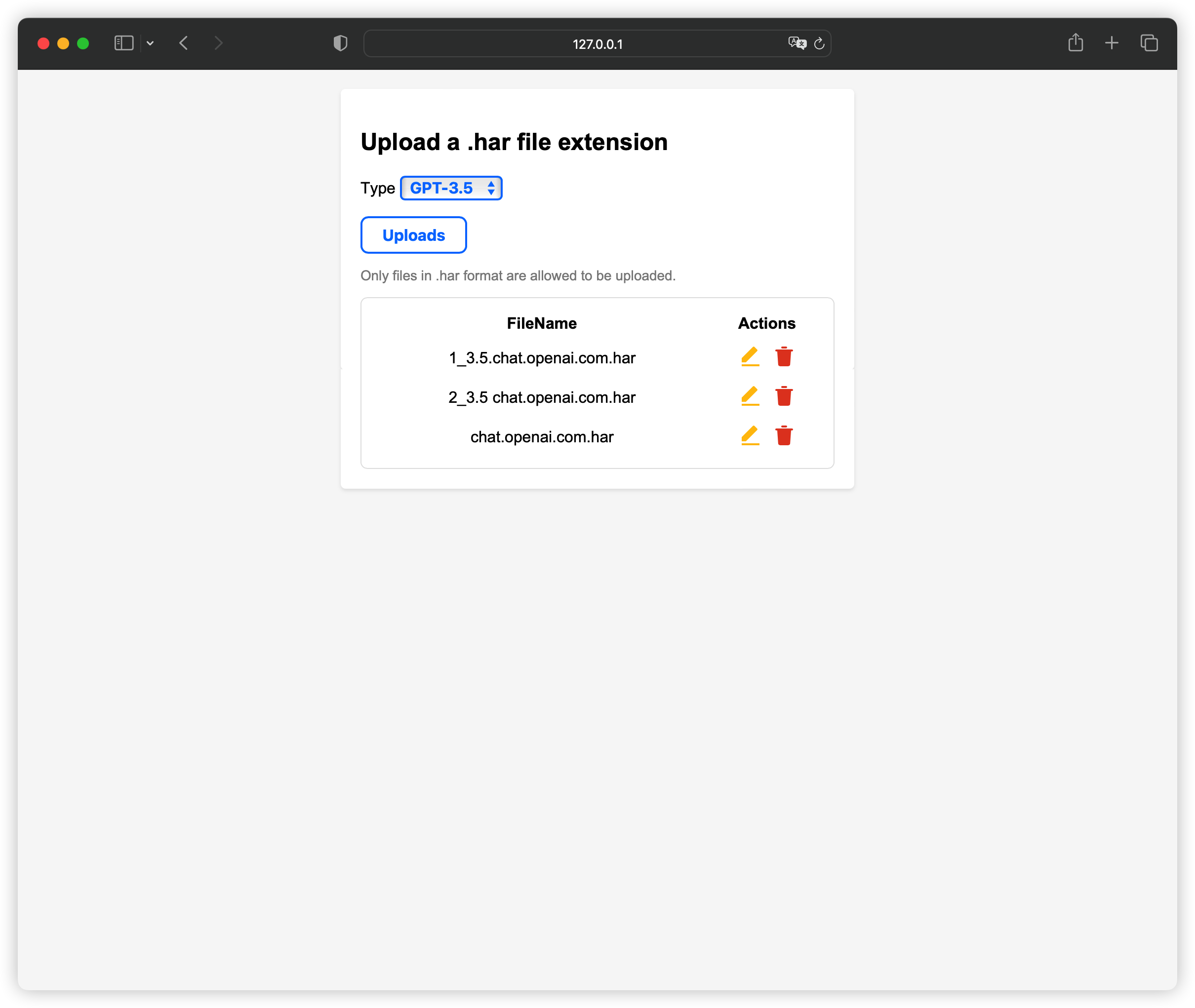

- Use HAR

  - Supports HAR feature pooling: multiple HARs can be uploaded at the same time and are used in rotation

  Send a `GPT-4` conversation message on the official `ChatGPT` website, then use the browser's `F12` developer tools to download the HAR log file for the `https://tcr9i.chat.openai.com/fc/gt2/public_key/35536E1E-65B4-4D96-9D97-6ADB7EFF8147` interface. Use the startup parameter `--arkose-gpt4-har-dir` to specify the HAR directory path (if no path is specified, the default path `~/.gpt4` is used, and HARs can be uploaded and updated there directly). The same method applies to `GPT-3.5` and other types. HARs can also be uploaded and updated through the WebUI; the request path is `/har/upload`, and the optional upload authentication parameter is `--arkose-har-upload-key`.

- Use YesCaptcha / CapSolver

  These platforms solve the captcha. Use the startup parameter `--arkose-solver` to select the platform (`YesCaptcha` by default) and `--arkose-solver-key` to supply the `Client Key`.

- When both solutions are configured, the priority is: `HAR` > `YesCaptcha`/`CapSolver`

- `YesCaptcha`/`CapSolver` is recommended to be used together with HAR. When a captcha is triggered, the solver is called to handle it; after verification, the HAR lasts longer.
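The rotation and priority rules above can be sketched as follows. This is an illustration only, not ninja's implementation: the class name, the `*.har` glob, and the returned labels are assumptions made for the example.

```python
from itertools import cycle
from pathlib import Path
from typing import Iterator, Optional


class ArkoseTokenSource:
    """Hypothetical selector: HAR files first (in rotation), solver second."""

    def __init__(self, har_dir: Path, solver_name: Optional[str] = None) -> None:
        # Collect the HAR files once, sorted so the rotation order is stable.
        hars = sorted(har_dir.glob("*.har")) if har_dir.is_dir() else []
        self._rotation: Optional[Iterator[Path]] = cycle(hars) if hars else None
        self._solver_name = solver_name

    def next_source(self) -> str:
        """Name the source that would serve the next Arkose Token request."""
        if self._rotation is not None:
            # HAR has priority; successive calls walk the pool round-robin.
            return f"har:{next(self._rotation).name}"
        if self._solver_name is not None:
            # No HAR available: fall back to the configured solver platform.
            return f"solver:{self._solver_name}"
        raise RuntimeError("no HAR files found and no solver configured")
```

With two files `a.har` and `b.har` in the directory, successive calls alternate between them; with an empty directory and `solver_name="yescaptcha"`, every call falls through to the solver.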

`OpenAI` has updated `Login` so that it now requires `Arkose Token` verification. The solution is the same as for `GPT-4`: use the startup parameter `--arkose-auth-har-dir` to specify the HAR directory. To create an API key, you need to upload the HAR feature file for the Platform (startup parameter `--arkose-platform-har-dir`); it is obtained in the same way as above.

Recently, `OpenAI` removed `Arkose` verification for `GPT-3.5`, so it can be used without uploading HAR feature files (files already uploaded are not affected). Because `Arkose` verification may be turned on again, the startup parameter `--arkose-gpt3-experiment` is provided for compatibility: it enables `Arkose` verification handling for the `GPT-3.5` model. The WebUI is not affected.
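The HAR-based option depends on the capture actually containing the Arkose `public_key` request. The following is a minimal sketch, not part of ninja, for checking a capture before uploading it. It assumes the standard HAR layout (`log.entries[].request.url`); the function names are hypothetical.

```python
import json
from pathlib import Path
from typing import Any, Dict, List

# Path fragment of the Arkose public_key interface named in this README.
ARKOSE_PATH_FRAGMENT = "/fc/gt2/public_key/"


def arkose_entries(har: Dict[str, Any]) -> List[str]:
    """Return the URLs of HAR entries that hit the Arkose public_key interface."""
    entries = har.get("log", {}).get("entries", [])
    return [
        entry["request"]["url"]
        for entry in entries
        if ARKOSE_PATH_FRAGMENT in entry.get("request", {}).get("url", "")
    ]


def check_har_file(path: Path) -> bool:
    """True if the HAR file at `path` contains at least one matching entry."""
    # utf-8-sig tolerates the byte-order mark some browsers write.
    with path.open(encoding="utf-8-sig") as fh:
        return bool(arkose_entries(json.load(fh)))
```

For example, `check_har_file(Path("chat.openai.com.har"))` returns `False` for a capture that was saved before the `GPT-4` message was sent, which is a hint to record it again.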

- ChatGPT-API

  - `/public-api/*`
  - `/backend-api/*`

- OpenAI-API

  - `/v1/*`

- Platform-API

  - `/dashboard/*`

- ChatGPT-To-API

  - `/to/v1/chat/completions`

  To use `ChatGPT`-to-`API`, pass the `AccessToken` directly as the `API Key`. Interface path: `/to/v1/chat/completions`

- Files-API

  - `/files/*`

  Image and file upload and download API proxy. The API returned by the `/backend-api/files` interface has been converted to `/files/*`.

- Authorization

  - Login: `/auth/token`. The form parameter `option` is optional. The default is `web` login, which returns `AccessToken` and `Session`; with `apple` or `platform`, it returns `AccessToken` and `RefreshToken`
  - Refresh `RefreshToken`: `/auth/refresh_token`
  - Revoke `RefreshToken`: `/auth/revoke_token`
  - Refresh `Session`: `/api/auth/session`. Send a cookie named `__Secure-next-auth.session-token` to refresh the `Session`; a new `AccessToken` is returned

  Web login returns a cookie named `__Secure-next-auth.session-token` by default. The client only needs to save this cookie; calling `/api/auth/session` can also refresh the `AccessToken`.

  To obtain a `RefreshToken`, use the `ChatGPT App` login method of the `Apple` platform. The principle is to use the built-in MITM proxy: when the `Apple device` is connected to the proxy, you can log in on the `Apple platform` to obtain a `RefreshToken`. This is only suitable for small quantities or personal use (large quantities will get the device banned, use with caution). For detailed usage, see the startup parameter description.

      # Generate certificate
      ninja genca

      ninja run --pbind 0.0.0.0:8888

      # Set the proxy in your phone's network settings to your listening address, for example: http://192.168.1.1:8888
      # Then open http://192.168.1.1:8888/preauth/cert in the browser, download the certificate, install and trust it, then open iOS ChatGPT and you can play happily
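As a rough illustration of two of the paths listed above, the sketch below builds (but does not send) a `ChatGPT-To-API` request and a `Session` refresh request using only the Python standard library. Several details are assumptions, not statements from this README: the `Bearer` authorization scheme, the OpenAI-style request body and model name, the `GET` method for `/api/auth/session`, and the local base URL.

```python
import json
import urllib.request
from typing import Dict, List

# Assumption: ninja is running locally on its default bind port.
BASE_URL = "http://127.0.0.1:7999"


def chat_completion_request(
    access_token: str,
    messages: List[Dict[str, str]],
    model: str = "gpt-3.5-turbo",  # assumption: OpenAI-style model name
    base_url: str = BASE_URL,
) -> urllib.request.Request:
    """Build a POST to /to/v1/chat/completions with the AccessToken as API key."""
    body = json.dumps({"model": model, "messages": messages}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/to/v1/chat/completions",
        data=body,
        method="POST",
        headers={
            # Assumption: the key is passed the way OpenAI clients pass it.
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
    )


def session_refresh_request(
    session_token: str, base_url: str = BASE_URL
) -> urllib.request.Request:
    """Build a request to /api/auth/session carrying the session cookie."""
    return urllib.request.Request(
        f"{base_url}/api/auth/session",
        method="GET",  # assumption: the README does not state the method
        headers={"Cookie": f"__Secure-next-auth.session-token={session_token}"},
    )
```

Either request can be sent with `urllib.request.urlopen(req)` once the server is running; the response format is whatever the proxied service returns.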

- ChatGPT WebUI

- Expose `ChatGPT-API`/`OpenAI-API` proxies

- `API` prefix is consistent with the official one

- `ChatGPT` to `API`

- Can access third-party clients

- Can access IP proxy pool to improve concurrency

- Supports obtaining RefreshToken

- Support file feature pooling in HAR format

- `--level`, environment variable `LOG`, log level: default info

- `--bind`, environment variable `BIND`, service listening address: default 0.0.0.0:7999

- `--tls-cert`, environment variable `TLS_CERT`, TLS certificate public key. Supported format: EC/PKCS8/RSA

- `--tls-key`, environment variable `TLS_KEY`, TLS certificate private key

- `--proxies`, proxy; supports a proxy pool, with multiple proxies separated by `,`, format: protocol://user:pass@ip:port. If the local IP is banned, turn off direct connections when using the proxy pool: `--disable-direct` turns off direct connection, otherwise your banned local IP will still be used according to load balancing

- `--workers`, worker threads: default 1

- `--disable-webui`, if you don't want to use the default built-in WebUI, use this parameter to turn it off

- `--enable-file-proxy`, environment variable `ENABLE_FILE_PROXY`, turns on the file upload and download API proxy
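Since the proxy list is a single comma-separated string, a small pre-flight check can catch typos before the value is handed to `--proxies`. The sketch below is a hypothetical helper, not how ninja itself parses the option; it accepts the protocols named in `ninja run --help` (http/https/socks5) and, as an assumption drawn from the documented format, requires each entry to carry a host and a port.

```python
from typing import List
from urllib.parse import urlsplit

# Protocols listed for --proxies in `ninja run --help`.
SUPPORTED_SCHEMES = {"http", "https", "socks5"}


def parse_proxies(value: str) -> List[str]:
    """Split a comma-separated proxy list and validate each entry."""
    proxies: List[str] = []
    for raw in value.split(","):
        item = raw.strip()
        if not item:
            continue  # tolerate trailing commas and stray spaces
        parts = urlsplit(item)
        if parts.scheme not in SUPPORTED_SCHEMES:
            raise ValueError(f"unsupported protocol in {item!r}")
        # Assumption: protocol://user:pass@ip:port implies host and port are required.
        if parts.hostname is None or parts.port is None:
            raise ValueError(f"expected protocol://user:pass@ip:port, got {item!r}")
        proxies.append(item)
    return proxies
```

For example, `parse_proxies("socks5://user:pass@10.0.0.2:1080, http://10.0.0.3:8080")` returns both entries with whitespace stripped, while an `ftp://` entry raises `ValueError`.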

GitHub Releases provides precompiled deb packages and binaries. On Ubuntu, for example:

wget https://github.com/gngpp/ninja/releases/download/v0.8.4/ninja-0.8.4-x86_64-unknown-linux-musl.tar.gz

tar -xf ninja-0.8.4-x86_64-unknown-linux-musl.tar.gz

./ninja run

There are precompiled ipk files in GitHub Releases, currently provided for aarch64/x86_64 and other architectures. After downloading, install them with opkg. Using a NanoPi R4S as an example:

wget https://github.com/gngpp/ninja/releases/download/v0.8.4/ninja_0.8.4_aarch64_generic.ipk

wget https://github.com/gngpp/ninja/releases/download/v0.8.4/luci-app-ninja_1.1.6-1_all.ipk

wget https://github.com/gngpp/ninja/releases/download/v0.8.4/luci-i18n-ninja-zh-cn_1.1.6-1_all.ipk

opkg install ninja_0.8.4_aarch64_generic.ipk

opkg install luci-app-ninja_1.1.6-1_all.ipk

opkg install luci-i18n-ninja-zh-cn_1.1.6-1_all.ipk

Supported image sources: `gngpp/ninja:latest` / `ghcr.io/gngpp/ninja:latest`

    docker run --rm -it -p 7999:7999 --name=ninja \
      -e WORKERS=1 \
      -e LOG=info \
      gngpp/ninja:latest run

- Docker Compose

If `CloudFlare Warp` is not supported in your region (China), delete it; if your `VPS` IP can connect directly to `OpenAI`, you can delete it as well.

    version: '3'

    services:
      ninja:
        image: ghcr.io/gngpp/ninja:latest
        container_name: ninja
        restart: unless-stopped
        environment:
          - TZ=Asia/Shanghai
          - PROXIES=socks5://warp:10000
        command: run --disable-direct
        ports:
          - "8080:7999"
        depends_on:
          - warp

      warp:
        container_name: warp
        image: ghcr.io/gngpp/warp:latest
        restart: unless-stopped

      watchtower:
        container_name: watchtower
        image: containrrr/watchtower
        volumes:
          - /var/run/docker.sock:/var/run/docker.sock
        command: --interval 3600 --cleanup
        restart: unless-stopped

    $ ninja --help
    Reverse engineered ChatGPT proxy

    Usage: ninja [COMMAND]

    Commands:
      run      Run the HTTP server
      stop     Stop the HTTP server daemon
      start    Start the HTTP server daemon
      restart  Restart the HTTP server daemon
      status   Status of the Http server daemon process
      log      Show the Http server daemon log
      genca    Generate MITM CA certificate
      gt       Generate config template file (toml format file)
      update   Update the application
      help     Print this message or the help of the given subcommand(s)

    Options:
      -h, --help     Print help
      -V, --version  Print version

    $ ninja run --help
    Run the HTTP server

    Usage: ninja run [OPTIONS]

    Options:
      -L, --level <LEVEL>
              Log level (info/debug/warn/trace/error) [env: LOG=] [default: info]
      -C, --config <CONFIG>
              Configuration file path (toml format file) [env: CONFIG=]
      -b, --bind <BIND>
              Server bind address [env: BIND=] [default: 0.0.0.0:7999]
      -W, --workers <WORKERS>
              Server worker-pool size (Recommended number of CPU cores) [default: 1]
          --concurrent-limit <CONCURRENT_LIMIT>
              Enforces a limit on the concurrent number of requests the underlying [default: 1024]
      -x, --proxies <PROXIES>
              Server proxies pool, Only support http/https/socks5 protocol [env: PROXIES=]
      -i, --interface <INTERFACE>
              Bind address for outgoing connections (or IPv6 subnet fallback to Ipv4) [env: INTERFACE=]
      -I, --ipv6-subnet <IPV6_SUBNET>
              IPv6 subnet, Example: 2001:19f0:6001:48e4::/64 [env: IPV6_SUBNET=]
          --disable-direct
              Disable direct connection [env: DISABLE_DIRECT=]
          --cookie-store
              Enabled Cookie Store [env: COOKIE_STORE=]
          --timeout <TIMEOUT>
              Client timeout (seconds) [default: 360]
          --connect-timeout <CONNECT_TIMEOUT>
              Client connect timeout (seconds) [default: 20]
          --tcp-keepalive <TCP_KEEPALIVE>
              TCP keepalive (seconds) [default: 60]
          --pool-idle-timeout <POOL_IDLE_TIMEOUT>
              Set an optional timeout for idle sockets being kept-alive [default: 90]
          --tls-cert <TLS_CERT>
              TLS certificate file path [env: TLS_CERT=]
          --tls-key <TLS_KEY>
              TLS private key file path (EC/PKCS8/RSA) [env: TLS_KEY=]
          --cf-site-key <CF_SITE_KEY>
              Cloudflare turnstile captcha site key [env: CF_SECRET_KEY=]
          --cf-secret-key <CF_SECRET_KEY>
              Cloudflare turnstile captcha secret key [env: CF_SITE_KEY=]
      -A, --auth-key <AUTH_KEY>
              Login Authentication Key [env: AUTH_KEY=]
      -D, --disable-webui
              Disable WebUI [env: DISABLE_WEBUI=]
      -F, --enable-file-proxy
              Enable file proxy [env: ENABLE_FILE_PROXY=]
          --arkose-endpoint <ARKOSE_ENDPOINT>
              Arkose endpoint, Example: https://client-api.arkoselabs.com
      -E, --arkose-gpt3-experiment
              Enable Arkose GPT-3.5 experiment
          --arkose-gpt3-har-dir <ARKOSE_GPT3_HAR_DIR>
              About the browser HAR directory path requested by ChatGPT GPT-3.5 ArkoseLabs
          --arkose-gpt4-har-dir <ARKOSE_GPT4_HAR_DIR>
              About the browser HAR directory path requested by ChatGPT GPT-4 ArkoseLabs
          --arkose-auth-har-dir <ARKOSE_AUTH_HAR_DIR>
              About the browser HAR directory path requested by Auth ArkoseLabs
          --arkose-platform-har-dir <ARKOSE_PLATFORM_HAR_DIR>
              About the browser HAR directory path requested by Platform ArkoseLabs
      -K, --arkose-har-upload-key <ARKOSE_HAR_UPLOAD_KEY>
              HAR file upload authenticate key
      -s, --arkose-solver <ARKOSE_SOLVER>
              About ArkoseLabs solver platform [default: yescaptcha]
      -k, --arkose-solver-key <ARKOSE_SOLVER_KEY>
              About the solver client key by ArkoseLabs
      -T, --tb-enable
              Enable token bucket flow limitation
          --tb-store-strategy <TB_STORE_STRATEGY>
              Token bucket store strategy (mem/redis) [default: mem]
          --tb-redis-url <TB_REDIS_URL>
              Token bucket redis connection url [default: redis://127.0.0.1:6379]
          --tb-capacity <TB_CAPACITY>
              Token bucket capacity [default: 60]
          --tb-fill-rate <TB_FILL_RATE>
              Token bucket fill rate [default: 1]
          --tb-expired <TB_EXPIRED>
              Token bucket expired (seconds) [default: 86400]
      -B, --pbind <PBIND>
              Preauth MITM server bind address [env: PREAUTH_BIND=]
      -X, --pupstream <PUPSTREAM>
              Preauth MITM server upstream proxy, Only support http/https/socks5 protocol [env: PREAUTH_UPSTREAM=]
          --pcert <PCERT>
              Preauth MITM server CA certificate file path [default: ca/cert.crt]
          --pkey <PKEY>
              Preauth MITM server CA private key file path [default: ca/key.pem]
      -h, --help
              Print help

- Linux

  - `x86_64-unknown-linux-musl`
  - `aarch64-unknown-linux-musl`
  - `armv7-unknown-linux-musleabi`
  - `armv7-unknown-linux-musleabihf`
  - `arm-unknown-linux-musleabi`
  - `arm-unknown-linux-musleabihf`
  - `armv5te-unknown-linux-musleabi`

- Windows

  - `x86_64-pc-windows-msvc`

- MacOS

  - `x86_64-apple-darwin`
  - `aarch64-apple-darwin`

- Compiling on Linux, using an Ubuntu machine as an example:

apt install build-essential

apt install cmake

apt install libclang-dev

git clone https://github.com/gngpp/ninja.git && cd ninja

cargo build --release

- OpenWrt Compile

cd package

svn co https://github.com/gngpp/ninja/trunk/openwrt

cd -

make menuconfig # choose LUCI->Applications->luci-app-ninja

make V=s

- This open source project may be modified, but please keep the original author information to avoid losing technical support.

- This project stands on the shoulders of giants, thanks!

- Submit an issue if there are errors, bugs, etc., and I will fix them.