Earthformer

By Zhihan Gao, Xingjian Shi, Hao Wang, Yi Zhu, Yuyang Wang, Mu Li, Dit-Yan Yeung.

This repo is the official implementation of "Earthformer: Exploring Space-Time Transformers for Earth System Forecasting" that will appear in NeurIPS 2022.

Introduction

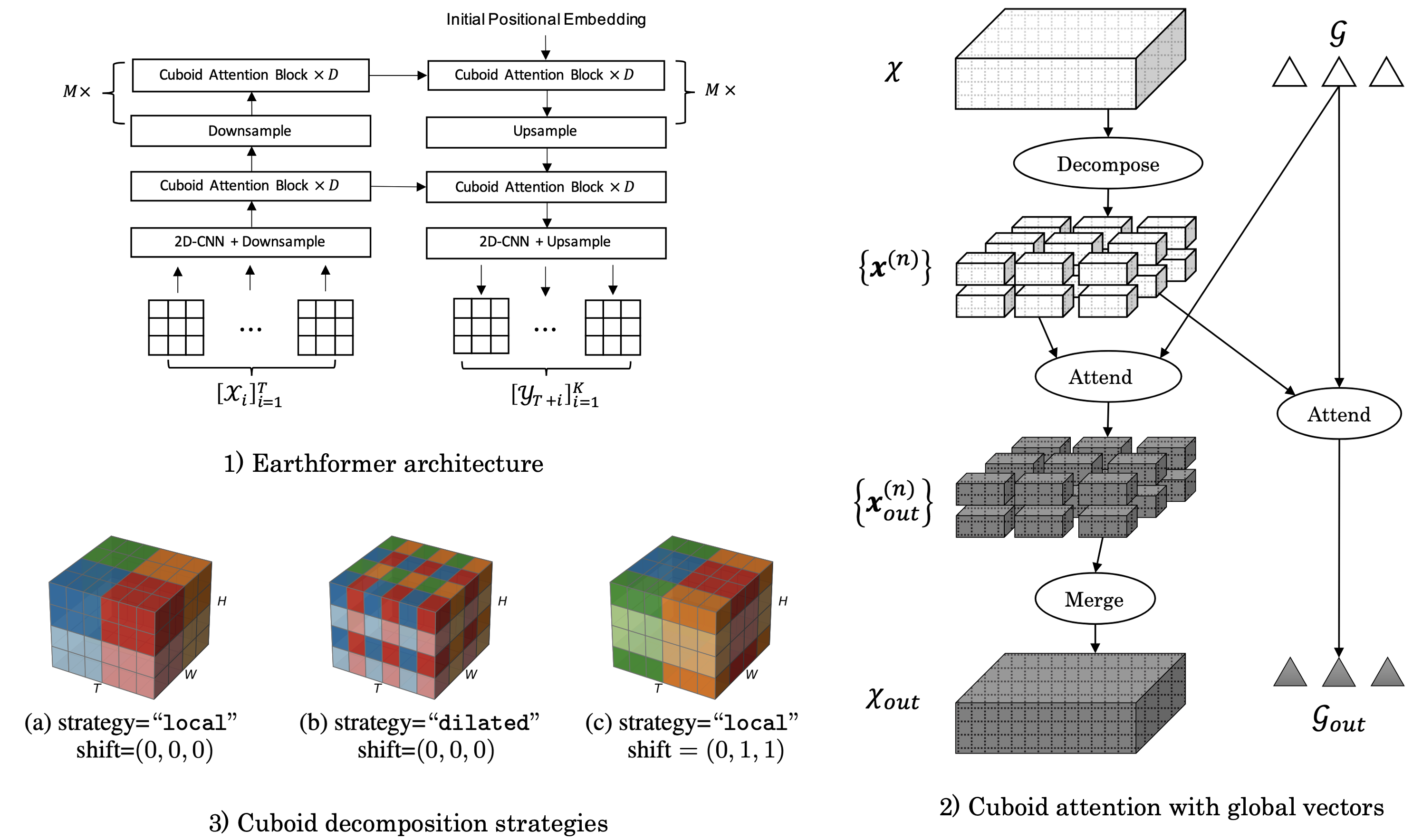

Conventionally, Earth system (e.g., weather and climate) forecasting relies on numerical simulation with complex physical models and is hence both computationally expensive and demanding of domain expertise. With the explosive growth of spatiotemporal Earth observation data in the past decade, data-driven models that apply Deep Learning (DL) are demonstrating impressive potential for various Earth system forecasting tasks. The Transformer, an emerging DL architecture, has seen limited adoption in this area despite its broad success in other domains. In this paper, we propose Earthformer, a space-time Transformer for Earth system forecasting. Earthformer is based on a generic, flexible and efficient space-time attention block, named Cuboid Attention. The idea is to decompose the data into cuboids and apply cuboid-level self-attention in parallel. These cuboids are further connected with a collection of global vectors.
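To make the cuboid decomposition concrete, here is a minimal sketch that partitions the indices of a (T, H, W) grid into non-overlapping cuboids. It is an illustration only, not the repository's implementation: the function name `split_into_cuboids` and its arguments are hypothetical, and the sketch covers neither the attention computation nor the global vectors.

```python
from itertools import product


def split_into_cuboids(shape, cuboid_size):
    """Group the (t, h, w) indices of a grid into non-overlapping cuboids.

    Hypothetical helper for illustration; assumes each dimension of `shape`
    is divisible by the matching dimension of `cuboid_size`.
    """
    T, H, W = shape
    bt, bh, bw = cuboid_size
    if T % bt or H % bh or W % bw:
        raise ValueError("shape must be divisible by cuboid_size in this sketch")
    cuboids = []
    # Iterate over the origin corner of every cuboid.
    for t0, h0, w0 in product(range(0, T, bt), range(0, H, bh), range(0, W, bw)):
        cuboids.append([
            (t, h, w)
            for t, h, w in product(range(t0, t0 + bt),
                                   range(h0, h0 + bh),
                                   range(w0, w0 + bw))
        ])
    return cuboids


# A 4x4x4 grid split into 2x2x2 cuboids gives 8 groups of 8 positions each.
groups = split_into_cuboids((4, 4, 4), (2, 2, 2))
print(len(groups), len(groups[0]))
```

Self-attention would then run independently inside each group, which is what allows the cuboids to be processed in parallel.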

Earthformer achieves strong results on synthetic datasets such as MovingMNIST and N-body MNIST, and also outperforms non-Transformer models (such as ConvLSTM and CNN-U-Net) on SEVIR (precipitation nowcasting) and ICAR-ENSO2021 (El Niño/Southern Oscillation forecasting).

Installation

We recommend managing the environment through Anaconda.

First, find out where CUDA is installed on your machine. It is usually under /usr/local/cuda or /opt/cuda.
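If you prefer to script this check, the following sketch looks for a CUDA root in the two locations mentioned above and parses the release number from the text printed by `nvcc --version`. The helper names (`find_cuda_home`, `parse_cuda_release`, `wheel_tag`) are hypothetical, and the sketch assumes a Linux-style layout with the compiler at `bin/nvcc`.

```python
import os
import re


def find_cuda_home(candidates=("/usr/local/cuda", "/opt/cuda"), env=None):
    """Return the first directory that contains bin/nvcc, or None."""
    env = os.environ if env is None else env
    # CUDA_HOME, when set, takes priority over the conventional locations.
    for path in (env.get("CUDA_HOME"), *candidates):
        if path and os.path.isfile(os.path.join(path, "bin", "nvcc")):
            return path
    return None


def parse_cuda_release(nvcc_output):
    """Extract 'MAJOR.MINOR' from the text printed by `nvcc --version`."""
    match = re.search(r"release (\d+)\.(\d+)", nvcc_output)
    return f"{match.group(1)}.{match.group(2)}" if match else None


def wheel_tag(release):
    """Map a release such as '11.6' to a wheel suffix such as 'cu116'."""
    return "cu" + release.replace(".", "")


print(find_cuda_home())
print(wheel_tag(parse_cuda_release("Cuda compilation tools, release 11.6, V11.6.124")))
```

The resulting tag corresponds to the `+cu116` or `+cu117` suffix used in the PyTorch install commands in this section.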

Next, check which version of CUDA you have installed on your machine:

nvcc --version

Then, create a new conda environment:

conda create -n earthformer python=3.9

conda activate earthformer

Lastly, install dependencies. For example, if you have CUDA 11.6 installed under /usr/local/cuda, run:

python3 -m pip install torch==1.12.1+cu116 torchvision==0.13.1+cu116 -f https://download.pytorch.org/whl/torch_stable.html

python3 -m pip install pytorch_lightning==1.6.4

python3 -m pip install xarray netcdf4 opencv-python

cd ROOT_DIR/earth-forecasting-transformer

python3 -m pip install -U -e . --no-build-isolation

# Install Apex

CUDA_HOME=/usr/local/cuda python3 -m pip install -v --no-cache-dir --global-option="--cpp_ext" --global-option="--cuda_ext" pytorch-extension git+https://github.com/NVIDIA/apex.git

If you have CUDA 11.7 installed under /opt/cuda, run:

python3 -m pip install torch==1.13.0+cu117 torchvision==0.14.0+cu117 -f https://download.pytorch.org/whl/torch_stable.html

python3 -m pip install pytorch_lightning==1.7.7

python3 -m pip install xarray netcdf4 opencv-python

cd ROOT_DIR/earth-forecasting-transformer

python3 -m pip install -U -e . --no-build-isolation

# Install Apex

CUDA_HOME=/opt/cuda python3 -m pip install -v --no-cache-dir --global-option="--cpp_ext" --global-option="--cuda_ext" pytorch-extension git+https://github.com/NVIDIA/apex.git

Dataset

MovingMNIST

Following Unsupervised Learning of Video Representations using LSTMs (ICML 2015), we use MovingMNIST, which contains 10,000 sequences, each of length 20, showing 2 digits moving in a 64×64 frame.

Our MovingMNIST DataModule automatically downloads it to datasets/moving_mnist.

N-body MNIST

The underlying dynamics of the N-body MNIST dataset are governed by Newton's law of universal gravitation.
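As a rough illustration of the gravitational dynamics mentioned above, the sketch below computes the acceleration on each body from all the others under the textbook inverse-square law in 2D. The function name, the default `G`, and the `softening` term are assumptions made for this example; the dataset generation script may use a different formulation.

```python
import math


def gravitational_accelerations(positions, masses, G=1.0, softening=1e-3):
    """Acceleration on each body due to all others (2D, inverse-square law).

    `softening` is an assumed small distance added to avoid division by
    zero when two bodies nearly overlap.
    """
    accels = []
    for i, (xi, yi) in enumerate(positions):
        ax = ay = 0.0
        for j, (xj, yj) in enumerate(positions):
            if i == j:
                continue
            dx, dy = xj - xi, yj - yi
            dist = math.hypot(dx, dy) + softening
            # a_i += G * m_j * (x_j - x_i) / dist^3
            ax += G * masses[j] * dx / dist ** 3
            ay += G * masses[j] * dy / dist ** 3
        accels.append((ax, ay))
    return accels


# Two bodies one unit apart attract each other along the x-axis.
print(gravitational_accelerations([(0.0, 0.0), (1.0, 0.0)], [1.0, 2.0], softening=0.0))
```

With softening set to zero, the mass-weighted accelerations of the two bodies cancel, as expected from Newton's third law.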

Run the following commands to generate the N-body MNIST dataset.

cd ROOT_DIR/earth-forecasting-transformer

python ./scripts/datasets/nbody/generate_nbody_dataset.py --cfg ./scripts/datasets/nbody/cfg.yaml

SEVIR

The Storm EVent ImageRy (SEVIR) dataset is a spatiotemporally aligned dataset containing over 10,000 weather events.

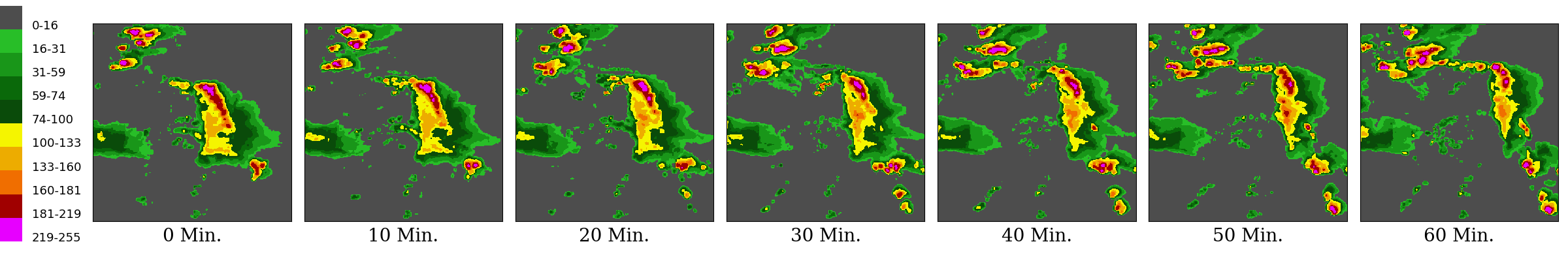

We adopt NEXRAD Vertically Integrated Liquid (VIL) mosaics in SEVIR for benchmarking precipitation nowcasting, i.e., predicting future VIL up to 60 minutes ahead given 65 minutes of context VIL.

With SEVIR VIL frames at 5-minute intervals and 384×384 pixels, the resolution is thus 13×384×384 → 12×384×384.
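The frame counts implied by the 65-minute context and 60-minute horizon can be worked out as below. This small sketch assumes SEVIR VIL frames are 5 minutes apart; the helper `num_frames` is hypothetical and only illustrates the arithmetic.

```python
def num_frames(duration_minutes, interval_minutes=5):
    """Number of frames covering a duration at a fixed sampling interval.

    The 5-minute default is an assumption about the SEVIR VIL frame spacing.
    """
    if duration_minutes % interval_minutes:
        raise ValueError("duration must be a multiple of the interval")
    return duration_minutes // interval_minutes


context_frames = num_frames(65)  # input sequence length
target_frames = num_frames(60)   # output sequence length
print(context_frames, target_frames)
```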

To download the SEVIR dataset from AWS S3, run:

cd ROOT_DIR/earth-forecasting-transformer

python ./scripts/datasets/sevir/download_sevir.py --dataset sevir

A visualization example of SEVIR VIL sequence:

ICAR-ENSO

ICAR-ENSO consists of historical climate observation and simulation data provided by the Institute for Climate and Application Research (ICAR). We forecast SST anomalies up to 14 steps ahead (2 steps beyond one year, needed to compute the three-month moving average), given a context of 12 steps (one year) of observed SST anomalies.
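The reason for forecasting 14 steps rather than 12 can be seen from a small sketch: a three-step moving average over 14 values yields exactly 12 smoothed values, i.e., one per month of the target year. The function name and the placeholder input are assumptions for illustration, not code or data from this repository.

```python
def three_month_moving_average(values, window=3):
    """Average each run of `window` consecutive values (no padding)."""
    return [
        sum(values[i:i + window]) / window
        for i in range(len(values) - window + 1)
    ]


# Placeholder forecast of 14 monthly SST anomaly values (not real data).
forecast = [float(k) for k in range(14)]
smoothed = three_month_moving_average(forecast)
print(len(forecast), len(smoothed))
```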

To download the dataset, you need to follow the instructions on the official website.

You can download a zip-file named enso_round1_train_20210201.zip. Put it under ./datasets/ and extract the zip file with the following command:

unzip datasets/enso_round1_train_20210201.zip -d datasets/icar_enso_2021

EarthNet2021

You may follow the official instructions for downloading the EarthNet2021 dataset. We recommend downloading it via the earthnet_toolkit.

# python3 -m pip install earthnet

import earthnet as en

en.Downloader.get("./datasets/earthnet2021", "all")

It requires 455G disk space in total.

Earthformer Training

Find detailed instructions in the corresponding training script folder

Inference with Pretrained Models

TBA

Citing Earthformer

@inproceedings{gao2022earthformer,

title={Earthformer: Exploring Space-Time Transformers for Earth System Forecasting},

author={Gao, Zhihan and Shi, Xingjian and Wang, Hao and Zhu, Yi and Wang, Yuyang and Li, Mu and Yeung, Dit-Yan},

booktitle={NeurIPS},

year={2022}

}

Security

See CONTRIBUTING for more information.

Credits

Third-party libraries:

License

This project is licensed under the Apache-2.0 License.