This project is a Python command-line interface (CLI) that converts speech to text. It uses a pre-trained model from Hugging Face to perform the transcription.

- Develop a Python CLI with OpenAI Whisper

- Use GitHub Codespaces and GitHub Copilot

- Integrate libtorch and Hugging Face pre-trained models into a Python CLI project

- Install Python

- Install dependencies

```bash
make install
```

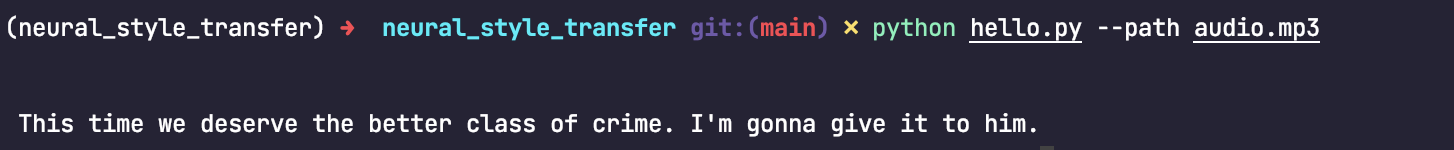

- Run the CLI

```bash
python hello.py --path audio.mp3
```
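The contents of `hello.py` are not shown here. As a rough illustration only, a minimal CLI with the same `--path` option might look like the sketch below. It assumes the Hugging Face `transformers` library's `pipeline` API and the `openai/whisper-tiny` checkpoint; the checkpoint choice and the function names (`parse_args`, `transcribe`, `main`) are hypothetical and not taken from this repo.

```python
import argparse
from typing import Optional, Sequence

# Assumed checkpoint: any Whisper model on the Hugging Face Hub could be used here.
MODEL_ID = "openai/whisper-tiny"


def parse_args(argv: Optional[Sequence[str]] = None) -> argparse.Namespace:
    """Parse the single --path option used in the README's example command."""
    parser = argparse.ArgumentParser(description="Convert speech in an audio file to text.")
    parser.add_argument("--path", required=True, help="path to the audio file, e.g. audio.mp3")
    return parser.parse_args(argv)


def transcribe(path: str, model_id: str = MODEL_ID) -> str:
    """Transcribe one audio file with a Hugging Face speech-recognition pipeline."""
    # Imported lazily so argument parsing (and --help) works without transformers installed.
    from transformers import pipeline

    # chunk_length_s splits recordings longer than Whisper's 30-second window into chunks.
    asr = pipeline("automatic-speech-recognition", model=model_id, chunk_length_s=30)
    # Decoding a file path such as audio.mp3 requires ffmpeg on the system PATH.
    result = asr(path)
    return result["text"]


def main(argv: Optional[Sequence[str]] = None) -> None:
    """Entry point: parse arguments, transcribe, and print the text."""
    args = parse_args(argv)
    print(transcribe(args.path))
```

Saved as a script, this would end with the usual `if __name__ == "__main__": main()` guard and be invoked as `python hello.py --path audio.mp3`. Importing `transformers` inside `transcribe` keeps startup cheap and lets argument errors surface before any model is downloaded.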

- Install dependencies

```bash
make setup
```

- Run the CLI

```bash
whisper --path audio.mp3
```

- The main branch of this repo is automatically published to Docker Hub by CI/CD. Pull the image with:

```bash
docker pull szheng3/whisper-ml-cli:latest
```

- Run the Docker image:

```bash
docker run szheng3/whisper-ml-cli:latest 'audio.mp3'
```

- To transcribe your own audio file, mount its directory into the container:

```bash
docker run -v /path/to/your/audio:/app/audio szheng3/whisper-ml-cli:latest /app/audio/audio.mp3
```
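The `-v` flag maps a host directory onto `/app/audio` inside the container, so the file argument must be the in-container path rather than the host path. A minimal sketch of a helper that builds that command from a local file path, assuming a POSIX host (`docker_run_command` is a hypothetical name, not part of this repo):

```python
import os
from typing import List

IMAGE = "szheng3/whisper-ml-cli:latest"
CONTAINER_DIR = "/app/audio"  # mount point used in the README's example


def docker_run_command(host_file: str) -> List[str]:
    """Build a `docker run` argv that mounts the file's directory at /app/audio."""
    # Split the absolute host path into its directory (to mount) and file name.
    host_dir, filename = os.path.split(os.path.abspath(host_file))
    return [
        "docker", "run",
        "-v", f"{host_dir}:{CONTAINER_DIR}",
        IMAGE,
        # The CLI inside the container sees the file under the mount point.
        f"{CONTAINER_DIR}/{filename}",
    ]
```

You could then run it with `subprocess.run(docker_run_command("/path/to/your/audio/audio.mp3"), check=True)`. Note that the whole directory containing the file is mounted into the container, not just the file itself.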

GitHub Actions workflows are configured in `.github/workflows`.

- Configure GitHub Codespaces

- Initialise a Python project with a pre-trained model from Hugging Face

- CI/CD with GitHub Actions

- Tags and releases