This project implements Assignment 2 of Practical Machine Learning.

- tensorflow >= 1.4

- numpy

- Download the training dataset, tiny-imagenet, and put it in the `data` directory.

- Download a pretrained VGG16 model from http://download.tensorflow.org/models/vgg_16_2016_08_28.tar.gz, extract it, and put `vgg16.ckpt` in the `pretrained` directory.

- (Optional) To evaluate on the assignment test files, download them from http://www.suyongeum.com/ML/assignments.php and put them in `data/test`.

- Run `train.py` or `predict.py`.
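The directory layout described in the steps above can be checked before training. The following is a minimal sketch, not part of this repository: the helper name `missing_paths` and the dictionary `EXPECTED_PATHS` are hypothetical, and the paths are taken from the setup steps as written.

```python
from pathlib import Path

# Paths come from the setup steps; the helper itself is a hypothetical
# convenience and does not exist in this repository.
# The optional data/test directory is deliberately not checked.
EXPECTED_PATHS = {
    "training data": Path("data"),
    "pretrained checkpoint": Path("pretrained") / "vgg16.ckpt",
}


def missing_paths(root="."):
    """Return the labels of required paths that do not exist under root."""
    root = Path(root)
    return [label for label, rel in EXPECTED_PATHS.items()
            if not (root / rel).exists()]
```

Calling `missing_paths()` from the repository root returns an empty list when both the dataset directory and the checkpoint are in place.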

I used the VGG-16 model. Its architecture is listed below in detail.

- input layer: output=64x64x3

- conv1 layer: output=64x64x64, ksize=[3, 3], stride=1

- pool1 layer: output=32x32x64, func=max, ksize=[2, 2]

- conv2 layer: output=32x32x128, ksize=[3, 3], stride=1

- pool2 layer: output=16x16x128, func=max, ksize=[2, 2]

- conv3 layer: output=16x16x256, ksize=[3, 3], stride=1

- pool3 layer: output=8x8x256, func=max, ksize=[2, 2]

- conv4 layer: output=8x8x512, ksize=[3, 3], stride=1

- pool4 layer: output=4x4x512, func=max, ksize=[2, 2]

- conv5 layer: output=4x4x512, ksize=[3, 3], stride=1

- pool5 layer: output=2x2x512, func=max, ksize=[2, 2]

- fc1 layer: output=512

- fc2 layer: output=200

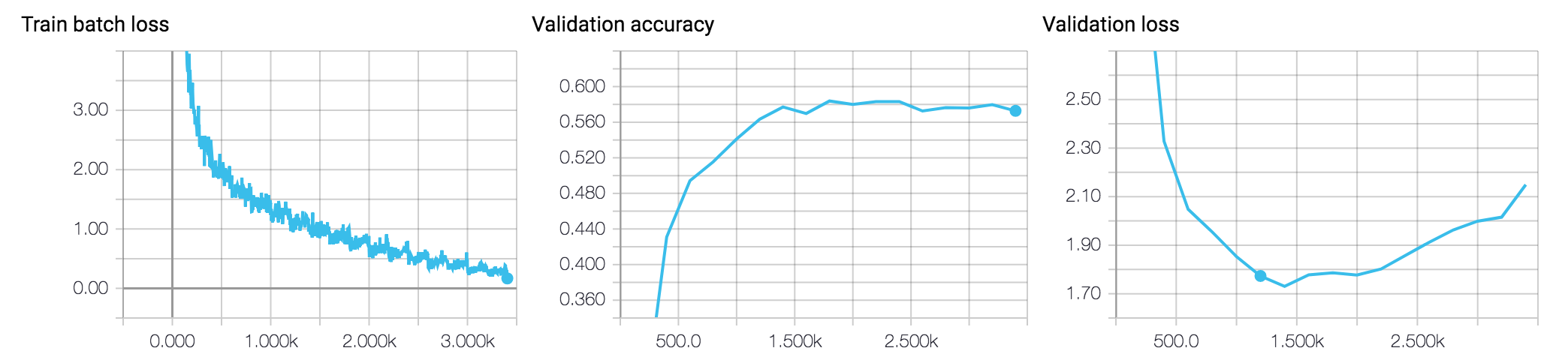
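The output sizes listed above can be reproduced with a few lines of shape arithmetic. This is a sketch under two assumptions that the list implies but does not state: the convolutions use "same" padding (so stride 1 keeps the spatial size), and each pooling layer uses a stride equal to its kernel size. The function names are hypothetical, and each conv entry is treated as one shape-preserving step.

```python
def conv_same(shape, filters):
    """3x3 convolution, stride 1, 'same' padding (assumed): only depth changes."""
    height, width, _ = shape
    return (height, width, filters)


def max_pool(shape, ksize=2):
    """Max pooling with stride == ksize (assumed): height and width shrink."""
    height, width, channels = shape
    return (height // ksize, width // ksize, channels)


def trace_shapes(input_shape=(64, 64, 3), filters=(64, 128, 256, 512, 512)):
    """Return (layer name, output shape) pairs for the conv/pool stack."""
    shapes = [("input", input_shape)]
    shape = input_shape
    for i, depth in enumerate(filters, start=1):
        shape = conv_same(shape, depth)
        shapes.append((f"conv{i}", shape))
        shape = max_pool(shape)
        shapes.append((f"pool{i}", shape))
    return shapes


for name, shape in trace_shapes():
    print(name, shape)
```

The last entry is `pool5` with shape 2x2x512, so the first fully connected layer receives 2 * 2 * 512 = 2048 values per image once that output is flattened.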

- optimization: Adam (lr=0.01)

- batch size: 500

- epoch: 10000

- regularization: weight decay (the L2 norm of the weights is added to the loss)

- activation function (all layers): ReLU

- data augmentation:

  - brightness

  - contrast

  - saturation

  - crop

  - flip the image left or right

- normalization

- initialization: load the prepared pretrained model before training
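The weight decay entry in the list above amounts to adding a scaled sum of squared weights to the data loss. The sketch below shows that arithmetic in plain Python. The function names and the coefficient `coeff` are assumptions for illustration, since the settings above do not give the value used in training.

```python
def l2_penalty(weights, coeff):
    """Return coeff times the sum of squared weights over all layers."""
    return coeff * sum(w * w for layer in weights for w in layer)


def total_loss(data_loss, weights, coeff=5e-4):
    """Data loss plus the L2 penalty; the default coeff is a placeholder."""
    return data_loss + l2_penalty(weights, coeff)
```

For example, with weights `[[1.0, 2.0], [3.0]]` the sum of squares is 14, so `total_loss(1.0, [[1.0, 2.0], [3.0]], coeff=0.1)` adds a penalty of 1.4 to the data loss.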
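Several of the augmentation steps listed above can be sketched with NumPy alone. This is an illustration, not the code in `train.py`: the function names, the padding width `pad`, and the brightness and contrast ranges are assumptions, images are assumed to be float arrays in [0, 1], and saturation is left out because it needs a colour-space conversion. The crop pads the image and cuts a window of the original size, so the result still matches the 64x64x3 input layer.

```python
import numpy as np


def random_crop(image, rng, pad=4):
    """Reflect-pad the image, then cut a random window of the original size."""
    height, width, _ = image.shape
    padded = np.pad(image, ((pad, pad), (pad, pad), (0, 0)), mode="reflect")
    top = rng.integers(0, 2 * pad + 1)   # upper bound is exclusive
    left = rng.integers(0, 2 * pad + 1)
    return padded[top:top + height, left:left + width, :]


def augment(image, rng, max_delta=0.2, contrast_range=(0.8, 1.2)):
    """Apply crop, horizontal flip, brightness and contrast to one image."""
    out = random_crop(image, rng)
    # Flip left to right with probability 0.5.
    if rng.random() < 0.5:
        out = out[:, ::-1, :]
    # Brightness: add the same random offset to every pixel.
    out = out + rng.uniform(-max_delta, max_delta)
    # Contrast: scale each channel's deviation from its mean.
    mean = out.mean(axis=(0, 1), keepdims=True)
    out = (out - mean) * rng.uniform(*contrast_range) + mean
    return np.clip(out, 0.0, 1.0)


def normalize(image, eps=1e-8):
    """Per-image standardization: roughly zero mean and unit variance."""
    return (image - image.mean()) / (image.std() + eps)


rng = np.random.default_rng(0)
image = rng.random((64, 64, 3))          # stand-in for a real training image
batch_item = normalize(augment(image, rng))
print(batch_item.shape)
```

TensorFlow's `tf.image` module offers comparable operations such as `tf.image.random_brightness`, `tf.image.random_contrast`, `tf.image.random_saturation` and `tf.image.random_flip_left_right`.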