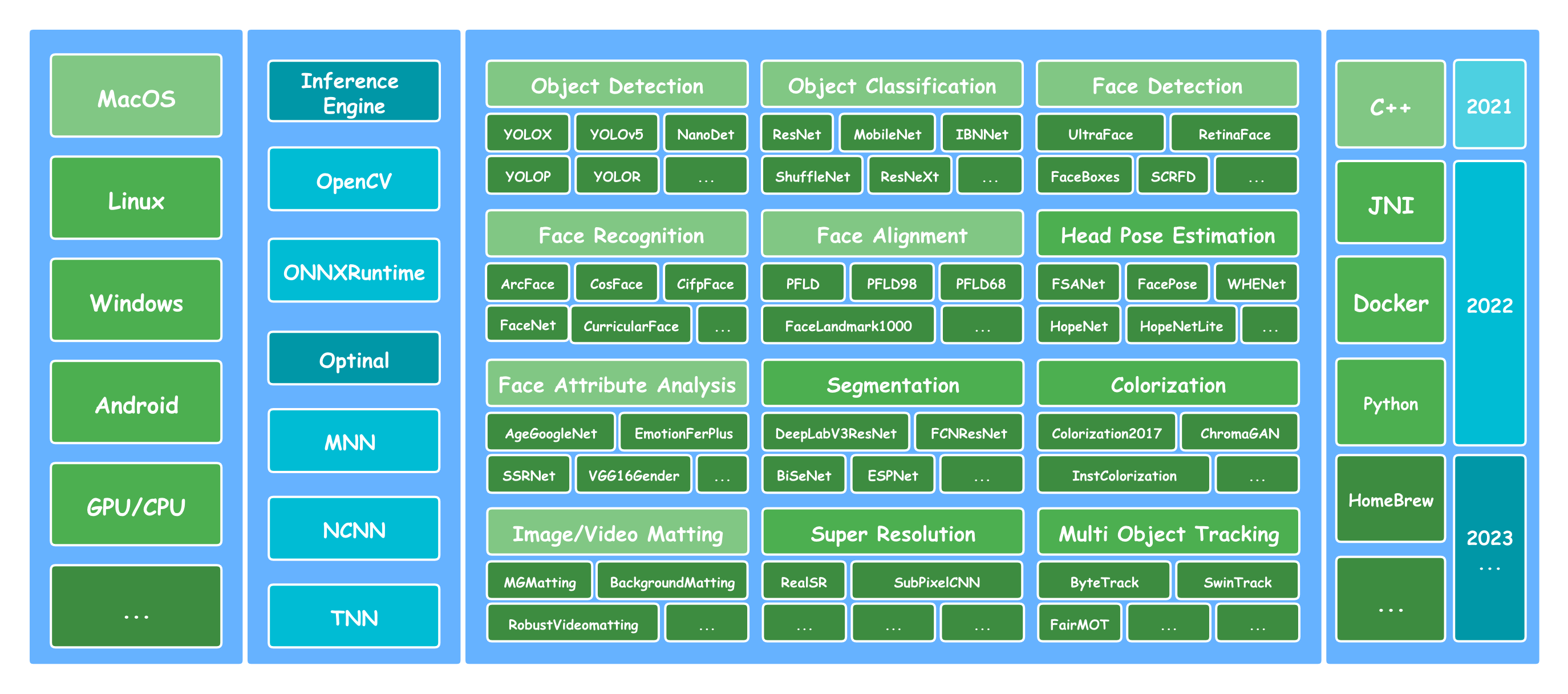

🛠 Lite.Ai.ToolKit: a lite C++ toolkit of awesome AI models, covering Object Detection, Face Detection, Face Recognition, Segmentation, Matting, and more. See Model Zoo and ONNX Hub, MNN Hub, TNN Hub, NCNN Hub. ❤️ Star 🌟 this repo to support me if it helps you, thanks ~

English | Chinese Docs | MacOS | Linux | Windows

- Simple and user friendly. Simple and consistent syntax like lite::cv::Type::Class, see examples.

- Minimum dependencies. Only OpenCV and ONNXRuntime are required by default, see build.

- Lots of algorithm modules. Contains 300+ C++ re-implementations and 500+ weights.

Consider citing it as follows if you use Lite.Ai.ToolKit in your projects.

@misc{lite.ai.toolkit2021,
  title={lite.ai.toolkit: A lite C++ toolkit of awesome AI models.},
  url={https://github.com/DefTruth/lite.ai.toolkit},
  note={Open-source software available at https://github.com/DefTruth/lite.ai.toolkit},
  author={Yan Jun},
  year={2021}
}

A high-level training and evaluation toolkit for face landmarks detection is available at torchlm.

Some prebuilt lite.ai.toolkit libs for MacOS (x64) and Linux (x64) are available; you can download them from the release links. Prebuilt libs for Windows (x64) and Android are coming soon ~ Please see issues#48 for details of the prebuilt plan and refer to releases for more available prebuilt libs.

- lite0.1.1-osx10.15.x-ocv4.5.2-ffmpeg4.2.2-onnxruntime1.8.1.zip

- lite0.1.1-osx10.15.x-ocv4.5.2-ffmpeg4.2.2-onnxruntime1.9.0.zip

- lite0.1.1-osx10.15.x-ocv4.5.2-ffmpeg4.2.2-onnxruntime1.10.0.zip

- lite0.1.1-ubuntu18.04-ocv4.5.2-ffmpeg4.2.2-onnxruntime1.8.1.zip

- lite0.1.1-ubuntu18.04-ocv4.5.2-ffmpeg4.2.2-onnxruntime1.9.0.zip

- lite0.1.1-ubuntu18.04-ocv4.5.2-ffmpeg4.2.2-onnxruntime1.10.0.zip

On Linux, to link the prebuilt libs, you need to export lite.ai.toolkit/lib to LD_LIBRARY_PATH first.

export LD_LIBRARY_PATH=YOUR-PATH-TO/lite.ai.toolkit/lib:$LD_LIBRARY_PATH

export LIBRARY_PATH=YOUR-PATH-TO/lite.ai.toolkit/lib:$LIBRARY_PATH # (may be needed)

To quickly set up lite.ai.toolkit, you can follow the CMakeLists.txt listed below. 👇👀

set(LITE_AI_DIR ${CMAKE_SOURCE_DIR}/lite.ai.toolkit)

include_directories(${LITE_AI_DIR}/include)

link_directories(${LITE_AI_DIR}/lib)

set(TOOLKIT_LIBS lite.ai.toolkit onnxruntime)

set(OpenCV_LIBS opencv_core opencv_imgcodecs opencv_imgproc opencv_video opencv_videoio)

add_executable(lite_yolov5 examples/test_lite_yolov5.cpp)

target_link_libraries(lite_yolov5 ${TOOLKIT_LIBS} ${OpenCV_LIBS})

- Core Features

- Quick Start

- RoadMap

- Important Updates

- Supported Models Matrix

- Build Docs

- Model Zoo

- Examples

- License

- References

- Contribute

#include "lite/lite.h"

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/yolov5s.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_yolov5_1.jpg";

std::string save_img_path = "../../../logs/test_lite_yolov5_1.jpg";

auto *yolov5 = new lite::cv::detection::YoloV5(onnx_path);

std::vector<lite::types::Boxf> detected_boxes;

cv::Mat img_bgr = cv::imread(test_img_path);

yolov5->detect(img_bgr, detected_boxes);

lite::utils::draw_boxes_inplace(img_bgr, detected_boxes);

cv::imwrite(save_img_path, img_bgr);

delete yolov5;

}

Details of Important Updates:

| Date | Model | C++ | Paper | Code | Awesome | Type |
|---|---|---|---|---|---|---|
| 【2022/04/03】 | MODNet | link | AAAI 2022 | code |  | matting |
| 【2022/03/23】 | PIPNet | link | CVPR 2021 | code |  | face::align |
| 【2022/01/19】 | YOLO5Face | link | arXiv 2021 | code |  | face::detect |
| 【2022/01/07】 | SCRFD | link | CVPR 2021 | code |  | face::detect |
| 【2021/12/27】 | NanoDetPlus | link | blog | code |  | detection |
| 【2021/12/08】 | MGMatting | link | CVPR 2021 | code |  | matting |
| 【2021/11/11】 | YoloV5_V_6_0 | link | doi | code |  | detection |
| 【2021/10/26】 | YoloX_V_0_1_1 | link | arXiv 2021 | code |  | detection |
| 【2021/10/02】 | NanoDet | link | blog | code |  | detection |
| 【2021/09/20】 | RobustVideoMatting | link | WACV 2022 | code |  | matting |
| 【2021/09/02】 | YOLOP | link | arXiv 2021 | code |  | detection |

- / = not supported now.

- ✅ = known to work and officially supported now.

- ✔️ = known to work, but not officially supported now.

- ❔ = planned, but not coming soon, maybe a few months later.

| Class | Size | Type | Demo | ONNXRuntime | MNN | NCNN | TNN | MacOS | Linux | Windows | Android |

|---|---|---|---|---|---|---|---|---|---|---|---|

| YoloV5 | 28M | detection | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| YoloV3 | 236M | detection | demo | ✅ | / | / | / | ✅ | ✔️ | ✔️ | / |

| TinyYoloV3 | 33M | detection | demo | ✅ | / | / | / | ✅ | ✔️ | ✔️ | / |

| YoloV4 | 176M | detection | demo | ✅ | / | / | / | ✅ | ✔️ | ✔️ | / |

| SSD | 76M | detection | demo | ✅ | / | / | / | ✅ | ✔️ | ✔️ | / |

| SSDMobileNetV1 | 27M | detection | demo | ✅ | / | / | / | ✅ | ✔️ | ✔️ | / |

| YoloX | 3.5M | detection | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| TinyYoloV4VOC | 22M | detection | demo | ✅ | / | / | / | ✅ | ✔️ | ✔️ | / |

| TinyYoloV4COCO | 22M | detection | demo | ✅ | / | / | / | ✅ | ✔️ | ✔️ | / |

| YoloR | 39M | detection | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| ScaledYoloV4 | 270M | detection | demo | ✅ | / | / | / | ✅ | ✔️ | ✔️ | / |

| EfficientDet | 15M | detection | demo | ✅ | / | / | / | ✅ | ✔️ | ✔️ | / |

| EfficientDetD7 | 220M | detection | demo | ✅ | / | / | / | ✅ | ✔️ | ✔️ | / |

| EfficientDetD8 | 322M | detection | demo | ✅ | / | / | / | ✅ | ✔️ | ✔️ | / |

| YOLOP | 30M | detection | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| NanoDet | 1.1M | detection | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| NanoDetPlus | 4.5M | detection | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| NanoDetEffi... | 12M | detection | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| YoloX_V_0_1_1 | 3.5M | detection | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| YoloV5_V_6_0 | 7.5M | detection | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| GlintArcFace | 92M | faceid | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| GlintCosFace | 92M | faceid | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | / |

| GlintPartialFC | 170M | faceid | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | / |

| FaceNet | 89M | faceid | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | / |

| FocalArcFace | 166M | faceid | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | / |

| FocalAsiaArcFace | 166M | faceid | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | / |

| TencentCurricularFace | 249M | faceid | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | / |

| TencentCifpFace | 130M | faceid | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | / |

| CenterLossFace | 280M | faceid | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | / |

| SphereFace | 80M | faceid | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | / |

| PoseRobustFace | 92M | faceid | demo | ✅ | / | / | / | ✅ | ✔️ | ✔️ | / |

| NaivePoseRobustFace | 43M | faceid | demo | ✅ | / | / | / | ✅ | ✔️ | ✔️ | / |

| MobileFaceNet | 3.8M | faceid | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| CavaGhostArcFace | 15M | faceid | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| CavaCombinedFace | 250M | faceid | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | / |

| MobileSEFocalFace | 4.5M | faceid | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| RobustVideoMatting | 14M | matting | demo | ✅ | ✅ | / | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| MGMatting | 113M | matting | demo | ✅ | ✅ | / | ✅ | ✅ | ✔️ | ✔️ | / |

| MODNet | 24M | matting | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | / |

| MODNetDyn | 24M | matting | demo | ✅ | / | / | / | ✅ | ✔️ | ✔️ | / |

| BackgroundMattingV2 | 20M | matting | demo | ✅ | ✅ | / | ✅ | ✅ | ✔️ | ✔️ | / |

| BackgroundMattingV2Dyn | 20M | matting | demo | ✅ | / | / | / | ✅ | ✔️ | ✔️ | / |

| UltraFace | 1.1M | face::detect | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| RetinaFace | 1.6M | face::detect | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| FaceBoxes | 3.8M | face::detect | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| FaceBoxesV2 | 3.8M | face::detect | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| SCRFD | 2.5M | face::detect | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| YOLO5Face | 4.8M | face::detect | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| PFLD | 1.0M | face::align | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| PFLD98 | 4.8M | face::align | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| MobileNetV268 | 9.4M | face::align | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| MobileNetV2SE68 | 11M | face::align | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| PFLD68 | 2.8M | face::align | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| FaceLandmark1000 | 2.0M | face::align | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| PIPNet98 | 44.0M | face::align | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| PIPNet68 | 44.0M | face::align | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| PIPNet29 | 44.0M | face::align | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| PIPNet19 | 44.0M | face::align | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| FSANet | 1.2M | face::pose | demo | ✅ | ✅ | / | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| AgeGoogleNet | 23M | face::attr | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| GenderGoogleNet | 23M | face::attr | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| EmotionFerPlus | 33M | face::attr | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| VGG16Age | 514M | face::attr | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | / |

| VGG16Gender | 512M | face::attr | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | / |

| SSRNet | 190K | face::attr | demo | ✅ | ✅ | / | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| EfficientEmotion7 | 15M | face::attr | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| EfficientEmotion8 | 15M | face::attr | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| MobileEmotion7 | 13M | face::attr | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| ReXNetEmotion7 | 30M | face::attr | demo | ✅ | ✅ | / | ✅ | ✅ | ✔️ | ✔️ | / |

| EfficientNetLite4 | 49M | classification | demo | ✅ | ✅ | / | ✅ | ✅ | ✔️ | ✔️ | / |

| ShuffleNetV2 | 8.7M | classification | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| DenseNet121 | 30.7M | classification | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | / |

| GhostNet | 20M | classification | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| HdrDNet | 13M | classification | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| IBNNet | 97M | classification | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | / |

| MobileNetV2 | 13M | classification | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| ResNet | 44M | classification | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | / |

| ResNeXt | 95M | classification | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | / |

| DeepLabV3ResNet101 | 232M | segmentation | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | / |

| FCNResNet101 | 207M | segmentation | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | / |

| FastStyleTransfer | 6.4M | style | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| Colorizer | 123M | colorization | demo | ✅ | ✅ | / | ✅ | ✅ | ✔️ | ✔️ | / |

| SubPixelCNN | 234K | resolution | demo | ✅ | ✅ | / | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| InsectDet | 27M | detection | demo | ✅ | ✅ | / | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| InsectID | 22M | classification | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ |

| PlantID | 30M | classification | demo | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✔️ | ✔️ |

| YOLOv5BlazeFace | 3.4M | face::detect | demo | ✅ | ✅ | / | / | ✅ | ✔️ | ✔️ | ❔ |

| YoloV5_V_6_1 | 7.5M | detection | demo | ✅ | ✅ | / | / | ✅ | ✔️ | ✔️ | ❔ |

| HeadSeg | 31M | segmentation | demo | ✅ | ✅ | / | ✅ | ✅ | ✔️ | ✔️ | ❔ |

| FemalePhoto2Cartoon | 15M | style | demo | ✅ | ✅ | / | ✅ | ✅ | ✔️ | ✔️ | ❔ |

- MacOS: Build the shared lib of Lite.Ai.ToolKit for MacOS from source. Note that Lite.Ai.ToolKit uses onnxruntime as its default backend because onnxruntime supports most ONNX operators.

git clone --depth=1 https://github.com/DefTruth/lite.ai.toolkit.git # latest

cd lite.ai.toolkit && sh ./build.sh # On MacOS, you can use the built OpenCV, ONNXRuntime, MNN, NCNN and TNN libs in this repo.

💡 Linux and Windows.

- lite.ai.toolkit/opencv2

cp -r your-path-to-downloaded-or-built-opencv/include/opencv4/opencv2 lite.ai.toolkit/opencv2

- lite.ai.toolkit/onnxruntime

cp -r your-path-to-downloaded-or-built-onnxruntime/include/onnxruntime lite.ai.toolkit/onnxruntime

- lite.ai.toolkit/MNN

cp -r your-path-to-downloaded-or-built-MNN/include/MNN lite.ai.toolkit/MNN

- lite.ai.toolkit/ncnn

cp -r your-path-to-downloaded-or-built-ncnn/include/ncnn lite.ai.toolkit/ncnn

- lite.ai.toolkit/tnn

cp -r your-path-to-downloaded-or-built-TNN/include/tnn lite.ai.toolkit/tnn

Then put the libs into the lite.ai.toolkit/lib/(linux|windows) directory. Please refer to build-docs1 for third_party.

- lite.ai.toolkit/lib/(linux|windows)

cp your-path-to-downloaded-or-built-opencv/lib/*opencv* lite.ai.toolkit/lib/(linux|windows)/
cp your-path-to-downloaded-or-built-onnxruntime/lib/*onnxruntime* lite.ai.toolkit/lib/(linux|windows)/
cp your-path-to-downloaded-or-built-MNN/lib/*MNN* lite.ai.toolkit/lib/(linux|windows)/
cp your-path-to-downloaded-or-built-ncnn/lib/*ncnn* lite.ai.toolkit/lib/(linux|windows)/
cp your-path-to-downloaded-or-built-TNN/lib/*TNN* lite.ai.toolkit/lib/(linux|windows)/

Note that you also need to install ffmpeg (<=4.2.2) on Linux to support the opencv videoio module. See issue#203. On MacOS, ffmpeg 4.2.2 is already packaged into lite.ai.toolkit, so no installation is needed. On Windows, ffmpeg is packaged into the opencv dll prebuilt by the OpenCV team. Please make sure -DWITH_FFMPEG=ON is set and check the configuration info when building opencv.

- first, build ffmpeg(<=4.2.2) from source.

git clone --depth=1 https://git.ffmpeg.org/ffmpeg.git -b n4.2.2

cd ffmpeg

./configure --enable-shared --disable-x86asm --prefix=/usr/local/opt/ffmpeg --disable-static

make -j8

make install

- then, build opencv with -DWITH_FFMPEG=ON, just like

#!/bin/bash

mkdir build

cd build

cmake .. \

-D CMAKE_BUILD_TYPE=Release \

-D CMAKE_INSTALL_PREFIX=your-path-to-custom-dir \

-D BUILD_TESTS=OFF \

-D BUILD_PERF_TESTS=OFF \

-D BUILD_opencv_python3=OFF \

-D BUILD_opencv_python2=OFF \

-D BUILD_SHARED_LIBS=ON \

-D BUILD_opencv_apps=OFF \

-D WITH_FFMPEG=ON

make -j8

make install

cd ..

After building opencv, you can follow the steps to build lite.ai.toolkit.

- Windows: You can refer to issue#6.

- Linux: The docs and Docker image for Linux will be coming soon ~ issue#2

- Happy News !!! : 🚀 You can download the latest official ONNXRuntime prebuilt libs for Windows, Linux, MacOS and Arm !!! Both CPU and GPU versions are available, so there is no need to build it from source. Download the official prebuilt libs from v1.8.1. I currently use version 1.7.0 for Lite.Ai.ToolKit, which you can download from v1.7.0, but version 1.8.1 should also work, I guess ~ 🙃🤪🍀. For OpenCV, try building from source (Linux) or download the official prebuilt libs (Windows) from OpenCV 4.5.3. Then put the includes and libs into the corresponding directories of Lite.Ai.ToolKit.

- GPU Compatibility for Windows: See issue#10.

- GPU Compatibility for Linux: See issue#97.

🔑️ How to link Lite.Ai.ToolKit?

* To link Lite.Ai.ToolKit, you can follow the CMakeLists.txt listed below.

cmake_minimum_required(VERSION 3.10)

project(lite.ai.toolkit.demo)

set(CMAKE_CXX_STANDARD 11)

# setting up lite.ai.toolkit

set(LITE_AI_DIR ${CMAKE_SOURCE_DIR}/lite.ai.toolkit)

set(LITE_AI_INCLUDE_DIR ${LITE_AI_DIR}/include)

set(LITE_AI_LIBRARY_DIR ${LITE_AI_DIR}/lib)

include_directories(${LITE_AI_INCLUDE_DIR})

link_directories(${LITE_AI_LIBRARY_DIR})

set(OpenCV_LIBS

opencv_highgui

opencv_core

opencv_imgcodecs

opencv_imgproc

opencv_video

opencv_videoio

)

# add your executable

set(EXECUTABLE_OUTPUT_PATH ${CMAKE_SOURCE_DIR}/examples/build)

add_executable(lite_rvm examples/test_lite_rvm.cpp)

target_link_libraries(lite_rvm

lite.ai.toolkit

onnxruntime

MNN # need, if built lite.ai.toolkit with ENABLE_MNN=ON, default OFF

ncnn # need, if built lite.ai.toolkit with ENABLE_NCNN=ON, default OFF

TNN # need, if built lite.ai.toolkit with ENABLE_TNN=ON, default OFF

${OpenCV_LIBS}) # link lite.ai.toolkit & other libs.

cd ./build/lite.ai.toolkit/lib && otool -L liblite.ai.toolkit.0.0.1.dylib

liblite.ai.toolkit.0.0.1.dylib:

@rpath/liblite.ai.toolkit.0.0.1.dylib (compatibility version 0.0.1, current version 0.0.1)

@rpath/libopencv_highgui.4.5.dylib (compatibility version 4.5.0, current version 4.5.2)

@rpath/libonnxruntime.1.7.0.dylib (compatibility version 0.0.0, current version 1.7.0)

...

cd ../ && tree .

├── bin
├── include
│   ├── lite
│   │   ├── backend.h
│   │   ├── config.h
│   │   └── lite.h
│   └── ort
└── lib
    └── liblite.ai.toolkit.0.0.1.dylib

- Run the built examples:

cd ./build/lite.ai.toolkit/bin && ls -lh | grep lite

-rwxr-xr-x 1 root staff 301K Jun 26 23:10 liblite.ai.toolkit.0.0.1.dylib

...

-rwxr-xr-x 1 root staff 196K Jun 26 23:10 lite_yolov4

-rwxr-xr-x 1 root staff 196K Jun 26 23:10 lite_yolov5

...

./lite_yolov5

LITEORT_DEBUG LogId: ../../../hub/onnx/cv/yolov5s.onnx

=============== Input-Dims ==============

...

detected num_anchors: 25200

generate_bboxes num: 66

Default Version Detected Boxes Num: 5

To link the lite.ai.toolkit shared lib, you need to make sure that OpenCV and onnxruntime are linked correctly. A minimal example showing how to link the shared lib of Lite.AI.ToolKit correctly in your own project can be found at CMakeLists.txt.

Lite.Ai.ToolKit now contains 100+ AI models with 500+ frozen pretrained files. Most of the files were converted by myself. You can use them through the lite::cv::Type::Class syntax, such as lite::cv::detection::YoloV5. More details can be found at Examples for Lite.Ai.ToolKit. Note that for Google Drive, I cannot upload all the *.onnx files because of the storage limit (15G).

| File | Baidu Drive | Google Drive | Docker Hub | Hub (Docs) |

|---|---|---|---|---|

| ONNX | Baidu Drive code: 8gin | Google Drive | ONNX Docker v0.1.22.01.08 (28G), v0.1.22.02.02 (400M) | ONNX Hub |

| MNN | Baidu Drive code: 9v63 | ❔ | MNN Docker v0.1.22.01.08 (11G), v0.1.22.02.02 (213M) | MNN Hub |

| NCNN | Baidu Drive code: sc7f | ❔ | NCNN Docker v0.1.22.01.08 (9G), v0.1.22.02.02 (197M) | NCNN Hub |

| TNN | Baidu Drive code: 6o6k | ❔ | TNN Docker v0.1.22.01.08 (11G), v0.1.22.02.02 (217M) | TNN Hub |

docker pull qyjdefdocker/lite.ai.toolkit-onnx-hub:v0.1.22.01.08 # (28G)

docker pull qyjdefdocker/lite.ai.toolkit-mnn-hub:v0.1.22.01.08 # (11G)

docker pull qyjdefdocker/lite.ai.toolkit-ncnn-hub:v0.1.22.01.08 # (9G)

docker pull qyjdefdocker/lite.ai.toolkit-tnn-hub:v0.1.22.01.08 # (11G)

docker pull qyjdefdocker/lite.ai.toolkit-onnx-hub:v0.1.22.02.02 # (400M) + YOLO5Face

docker pull qyjdefdocker/lite.ai.toolkit-mnn-hub:v0.1.22.02.02 # (213M) + YOLO5Face

docker pull qyjdefdocker/lite.ai.toolkit-ncnn-hub:v0.1.22.02.02 # (197M) + YOLO5Face

docker pull qyjdefdocker/lite.ai.toolkit-tnn-hub:v0.1.22.02.02 # (217M) + YOLO5Face

❇️ Lite.Ai.ToolKit modules.

| Namespace | Details |

|---|---|

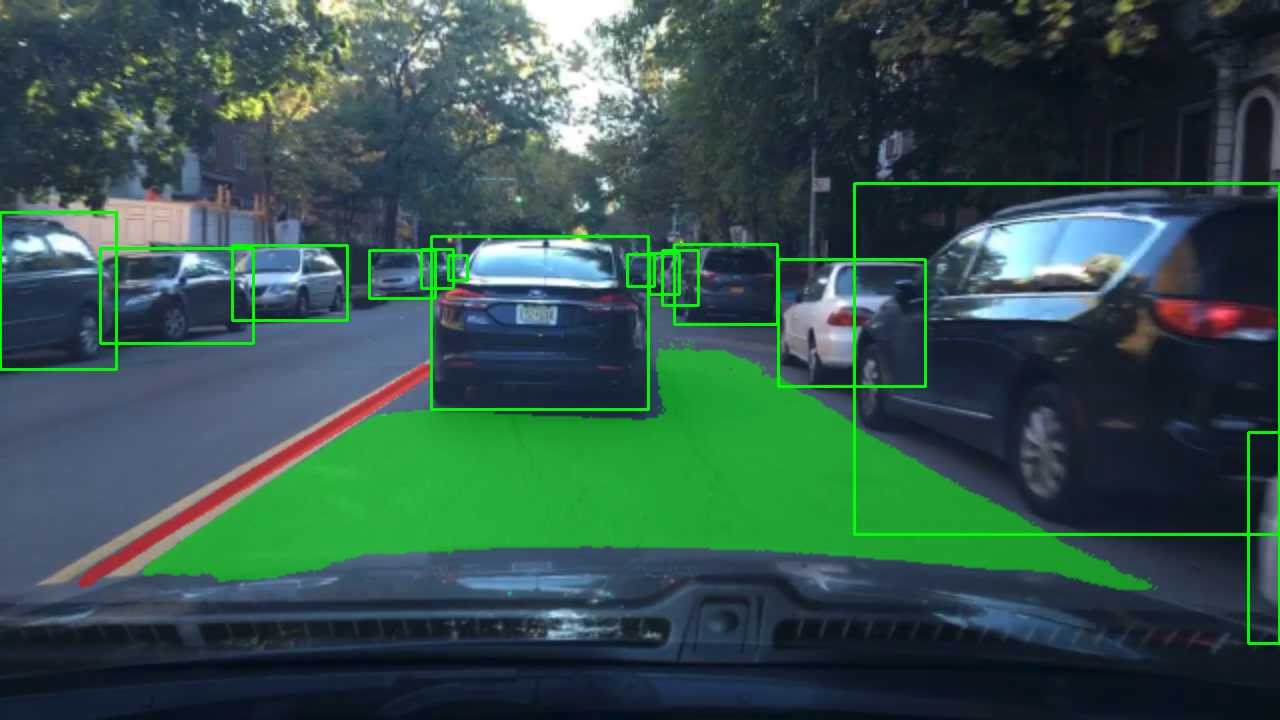

| lite::cv::detection | Object Detection. one-stage and anchor-free detectors, YoloV5, YoloV4, SSD, etc. ✅ |

| lite::cv::classification | Image Classification. DensNet, ShuffleNet, ResNet, IBNNet, GhostNet, etc. ✅ |

| lite::cv::faceid | Face Recognition. ArcFace, CosFace, CurricularFace, etc. ❇️ |

| lite::cv::face | Face Analysis. detect, align, pose, attr, etc. ❇️ |

| lite::cv::face::detect | Face Detection. UltraFace, RetinaFace, FaceBoxes, PyramidBox, etc. ❇️ |

| lite::cv::face::align | Face Alignment. PFLD(106), FaceLandmark1000(1000 landmarks), PRNet, etc. ❇️ |

| lite::cv::face::align3d | 3D Face Alignment. FaceMesh(468 3D landmarks), IrisLandmark(71+5 3D landmarks), etc. ❇️ |

| lite::cv::face::pose | Head Pose Estimation. FSANet, etc. ❇️ |

| lite::cv::face::attr | Face Attributes. Emotion, Age, Gender. EmotionFerPlus, VGG16Age, etc. ❇️ |

| lite::cv::segmentation | Object Segmentation. Such as FCN, DeepLabV3, etc. ❇️ ️ |

| lite::cv::style | Style Transfer. Contains neural style transfer now, such as FastStyleTransfer. |

| lite::cv::matting | Image Matting. Object and Human matting. ❇️ ️ |

| lite::cv::colorization | Colorization. Make Gray image become RGB. |

| lite::cv::resolution | Super Resolution. |

The correspondence between the classes in Lite.AI.ToolKit and the pretrained model files can be found at lite.ai.toolkit.hub.onnx.md. For example, the pretrained model files for lite::cv::detection::YoloV5 and lite::cv::detection::YoloX are listed as follows.

| Class | Pretrained ONNX Files | Rename or Converted From (Repo) | Size |

|---|---|---|---|

| lite::cv::detection::YoloV5 | yolov5l.onnx | yolov5 (🔥🔥💥↑) | 188Mb |

| lite::cv::detection::YoloV5 | yolov5m.onnx | yolov5 (🔥🔥💥↑) | 85Mb |

| lite::cv::detection::YoloV5 | yolov5s.onnx | yolov5 (🔥🔥💥↑) | 29Mb |

| lite::cv::detection::YoloV5 | yolov5x.onnx | yolov5 (🔥🔥💥↑) | 351Mb |

| lite::cv::detection::YoloX | yolox_x.onnx | YOLOX (🔥🔥!!↑) | 378Mb |

| lite::cv::detection::YoloX | yolox_l.onnx | YOLOX (🔥🔥!!↑) | 207Mb |

| lite::cv::detection::YoloX | yolox_m.onnx | YOLOX (🔥🔥!!↑) | 97Mb |

| lite::cv::detection::YoloX | yolox_s.onnx | YOLOX (🔥🔥!!↑) | 34Mb |

| lite::cv::detection::YoloX | yolox_tiny.onnx | YOLOX (🔥🔥!!↑) | 19Mb |

| lite::cv::detection::YoloX | yolox_nano.onnx | YOLOX (🔥🔥!!↑) | 3.5Mb |

This means you can load any of the yolov5*.onnx and yolox_*.onnx files, according to your application, through the same Lite.AI.ToolKit classes, such as YoloV5, YoloX, etc.

auto *yolov5 = new lite::cv::detection::YoloV5("yolov5x.onnx"); // for server

auto *yolov5 = new lite::cv::detection::YoloV5("yolov5l.onnx");

auto *yolov5 = new lite::cv::detection::YoloV5("yolov5m.onnx");

auto *yolov5 = new lite::cv::detection::YoloV5("yolov5s.onnx"); // for mobile device

auto *yolox = new lite::cv::detection::YoloX("yolox_x.onnx");

auto *yolox = new lite::cv::detection::YoloX("yolox_l.onnx");

auto *yolox = new lite::cv::detection::YoloX("yolox_m.onnx");

auto *yolox = new lite::cv::detection::YoloX("yolox_s.onnx");

auto *yolox = new lite::cv::detection::YoloX("yolox_tiny.onnx");

auto *yolox = new lite::cv::detection::YoloX("yolox_nano.onnx"); // 3.5Mb only !

🔑️ How to download Model Zoo from Docker Hub?

- Firstly, pull the image from docker hub.

docker pull qyjdefdocker/lite.ai.toolkit-mnn-hub:v0.1.22.01.08 # (11G)
docker pull qyjdefdocker/lite.ai.toolkit-ncnn-hub:v0.1.22.01.08 # (9G)
docker pull qyjdefdocker/lite.ai.toolkit-tnn-hub:v0.1.22.01.08 # (11G)
docker pull qyjdefdocker/lite.ai.toolkit-onnx-hub:v0.1.22.01.08 # (28G)

- Secondly, run the container with a local share dir using docker run -idt xxx. A minimal example is shown as follows.

  - make a share dir on your local device.

mkdir share # any name is ok.

  - write a run_mnn_docker_hub.sh script like:

#!/bin/bash
PORT1=6072
PORT2=6084
SERVICE_DIR=/Users/xxx/Desktop/your-path-to/share
CONTAINER_DIR=/home/hub/share
CONTAINER_NAME=mnn_docker_hub_d
docker run -idt -p ${PORT2}:${PORT1} -v ${SERVICE_DIR}:${CONTAINER_DIR} --shm-size=16gb --name ${CONTAINER_NAME} qyjdefdocker/lite.ai.toolkit-mnn-hub:v0.1.22.01.08

- Finally, copy the model weights from /home/hub/mnn/cv to your local share dir.

# activate mnn docker.
sh ./run_mnn_docker_hub.sh
docker exec -it mnn_docker_hub_d /bin/bash
# copy the models to the share dir.
cd /home/hub
cp -rf mnn/cv share/

The pretrained and converted ONNX files provided by lite.ai.toolkit are listed as follows. Also, see Model Zoo and ONNX Hub, MNN Hub, TNN Hub, NCNN Hub for more details.

More examples can be found at examples.

#include "lite/lite.h"

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/yolov5s.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_yolov5_1.jpg";

std::string save_img_path = "../../../logs/test_lite_yolov5_1.jpg";

auto *yolov5 = new lite::cv::detection::YoloV5(onnx_path);

std::vector<lite::types::Boxf> detected_boxes;

cv::Mat img_bgr = cv::imread(test_img_path);

yolov5->detect(img_bgr, detected_boxes);

lite::utils::draw_boxes_inplace(img_bgr, detected_boxes);

cv::imwrite(save_img_path, img_bgr);

delete yolov5;

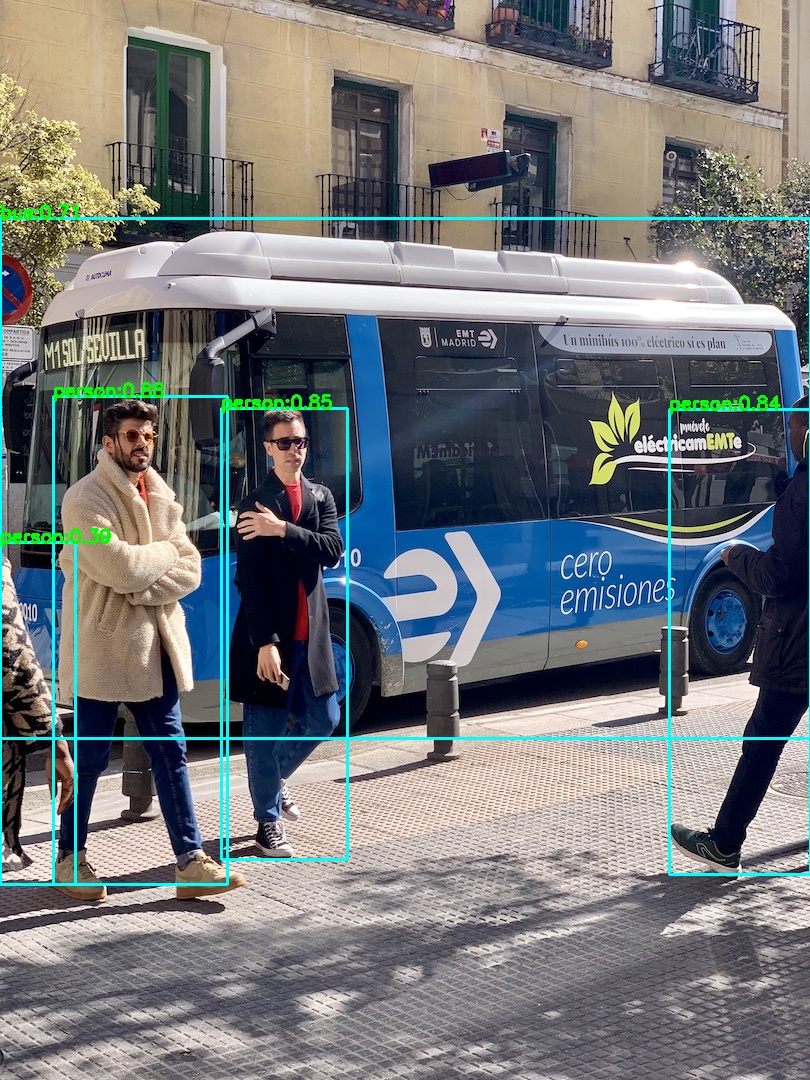

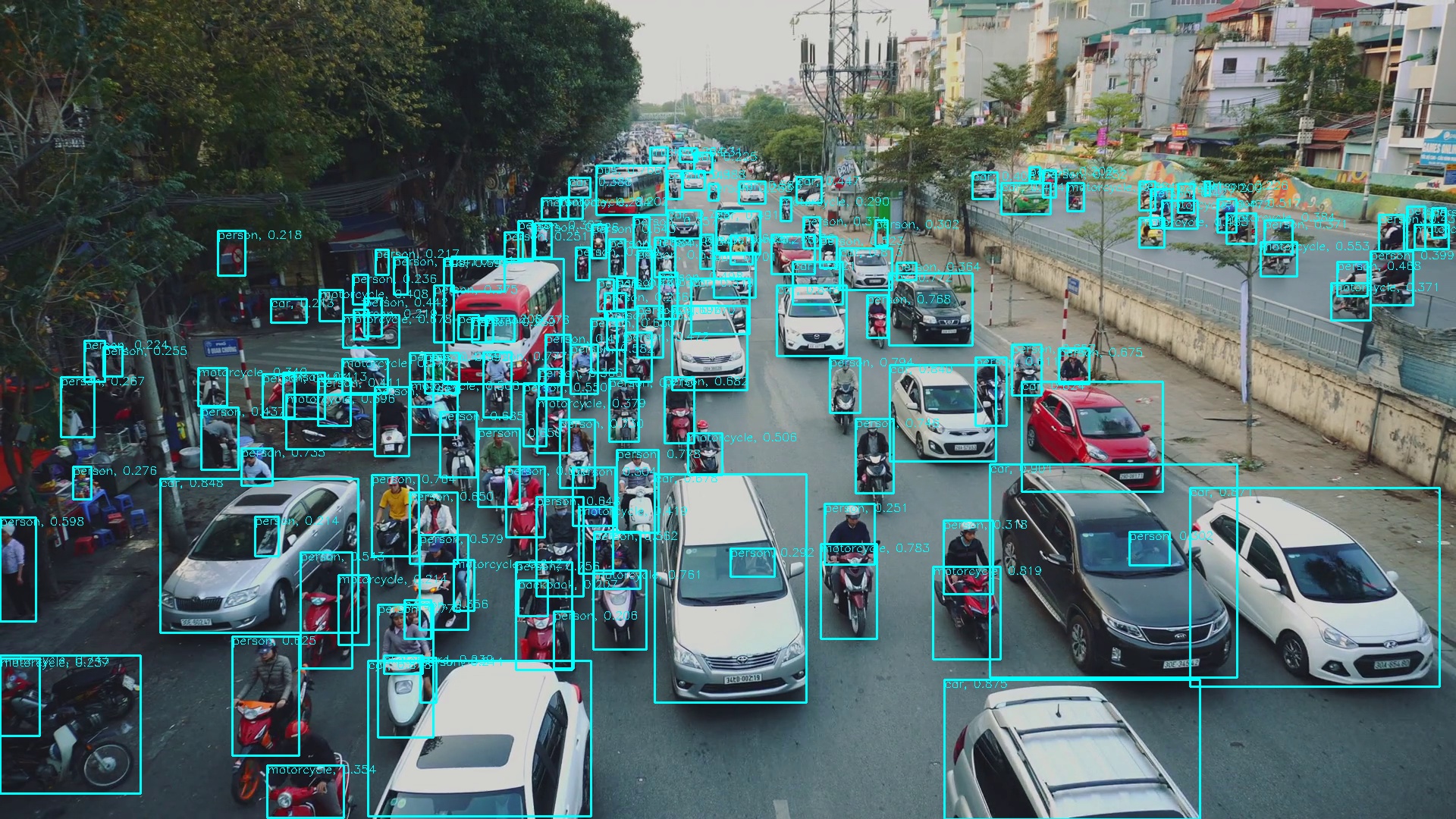

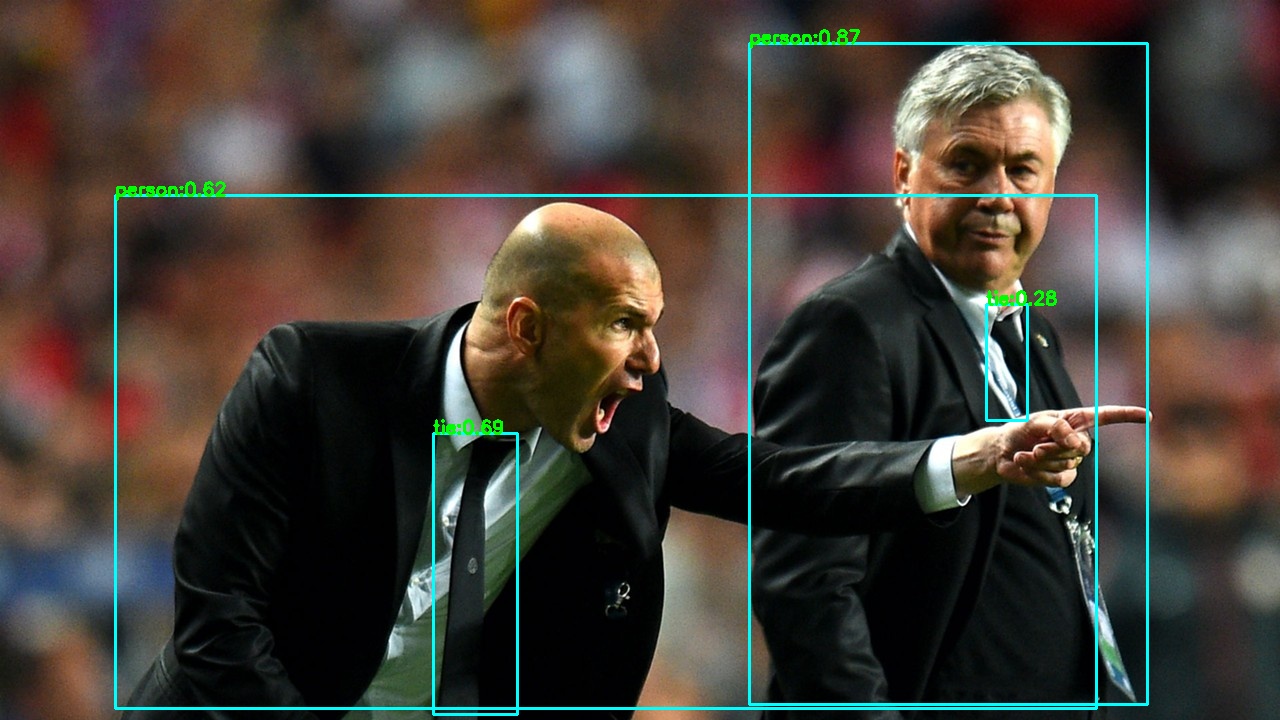

}The output is:

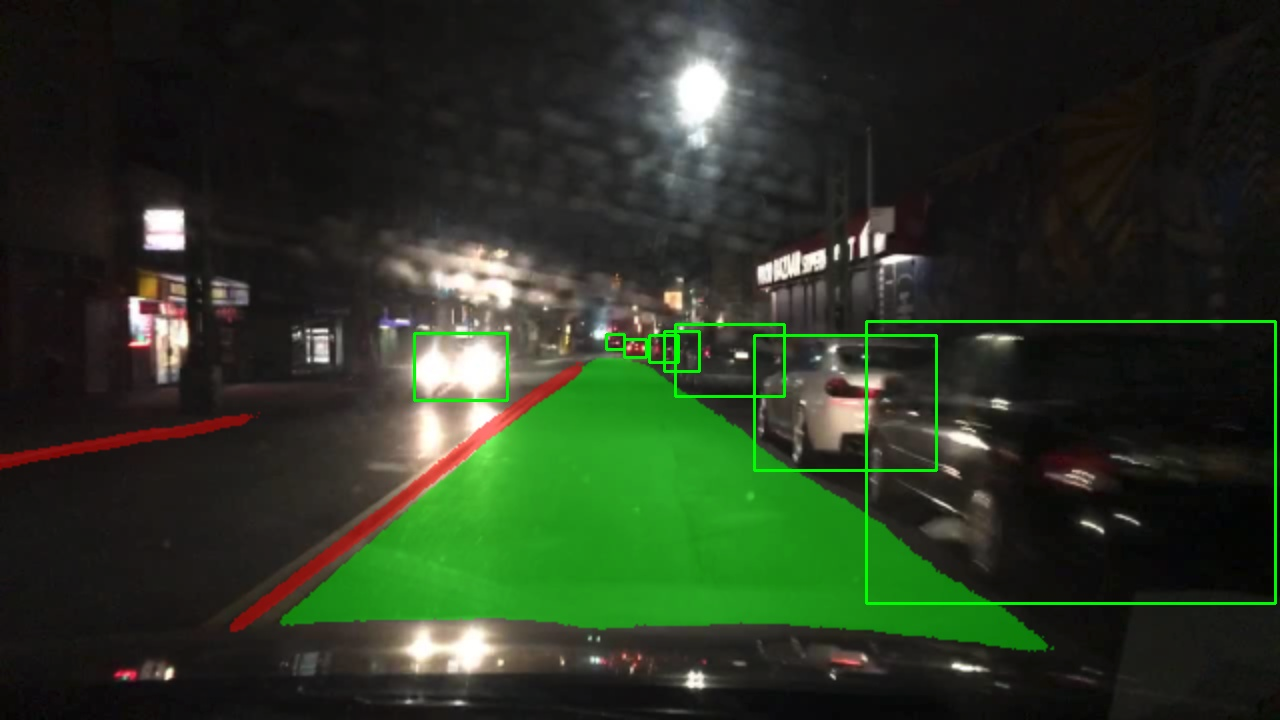

Or you can use the newest 🔥🔥 YOLO-series detectors, YOLOX or YoloR. They give similar results.
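The yolov5s run log in the build section reports 25200 anchors, 66 generated boxes and 5 final boxes. Detectors of this kind commonly get from the candidate boxes to the final ones with greedy non-maximum suppression (NMS). The block below is a minimal, standalone sketch of that idea. It is not the toolkit's implementation: `Box`, `iou` and `nms` are hypothetical names introduced here for illustration, and the toolkit's own box type is lite::types::Boxf.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Hypothetical box type for this sketch (the toolkit uses lite::types::Boxf).
struct Box
{
    float x1, y1, x2, y2; // top-left and bottom-right corners
    float score;          // detection confidence
};

// Intersection-over-union of two axis-aligned boxes.
inline float iou(const Box &a, const Box &b)
{
    const float iw = std::max(0.0f, std::min(a.x2, b.x2) - std::max(a.x1, b.x1));
    const float ih = std::max(0.0f, std::min(a.y2, b.y2) - std::max(a.y1, b.y1));
    const float inter = iw * ih;
    const float uni = (a.x2 - a.x1) * (a.y2 - a.y1) +
                      (b.x2 - b.x1) * (b.y2 - b.y1) - inter;
    return uni > 0.0f ? inter / uni : 0.0f;
}

// Greedy NMS: visit boxes from highest to lowest score and keep a box only
// if its IoU with every already kept box is at most iou_threshold.
inline std::vector<Box> nms(std::vector<Box> boxes, float iou_threshold)
{
    assert(iou_threshold >= 0.0f && iou_threshold <= 1.0f);
    std::sort(boxes.begin(), boxes.end(),
              [](const Box &a, const Box &b) { return a.score > b.score; });
    std::vector<Box> kept;
    for (const Box &candidate : boxes)
    {
        bool suppressed = false;
        for (const Box &k : kept)
        {
            if (iou(candidate, k) > iou_threshold)
            {
                suppressed = true;
                break;
            }
        }
        if (!suppressed) kept.push_back(candidate);
    }
    return kept;
}
```

Calling `nms(candidates, 0.5f)` on two heavily overlapping boxes and one distant box keeps the higher-scoring box of the overlapping pair plus the distant one. The 0.5 threshold is only an example value.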

More classes for general object detection (80 classes, COCO).

auto *detector = new lite::cv::detection::YoloX(onnx_path); // Newest YOLO detector !!! 2021-07

auto *detector = new lite::cv::detection::YoloV4(onnx_path);

auto *detector = new lite::cv::detection::YoloV3(onnx_path);

auto *detector = new lite::cv::detection::TinyYoloV3(onnx_path);

auto *detector = new lite::cv::detection::SSD(onnx_path);

auto *detector = new lite::cv::detection::YoloV5(onnx_path);

auto *detector = new lite::cv::detection::YoloR(onnx_path); // Newest YOLO detector !!! 2021-05

auto *detector = new lite::cv::detection::TinyYoloV4VOC(onnx_path);

auto *detector = new lite::cv::detection::TinyYoloV4COCO(onnx_path);

auto *detector = new lite::cv::detection::ScaledYoloV4(onnx_path);

auto *detector = new lite::cv::detection::EfficientDet(onnx_path);

auto *detector = new lite::cv::detection::EfficientDetD7(onnx_path);

auto *detector = new lite::cv::detection::EfficientDetD8(onnx_path);

auto *detector = new lite::cv::detection::YOLOP(onnx_path);

auto *detector = new lite::cv::detection::NanoDet(onnx_path); // Super fast and tiny!

auto *detector = new lite::cv::detection::NanoDetPlus(onnx_path); // Super fast and tiny! 2021/12/25

auto *detector = new lite::cv::detection::NanoDetEfficientNetLite(onnx_path); // Super fast and tiny!

auto *detector = new lite::cv::detection::YoloV5_V_6_0(onnx_path);

auto *detector = new lite::cv::detection::YoloV5_V_6_1(onnx_path);

auto *detector = new lite::cv::detection::YoloX_V_0_1_1(onnx_path); // Newest YOLO detector !!! 2021-07

Example1: Video Matting using RobustVideoMatting2021🔥🔥🔥. Download model from Model-Zoo2.

#include "lite/lite.h"

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/rvm_mobilenetv3_fp32.onnx";

std::string video_path = "../../../examples/lite/resources/test_lite_rvm_0.mp4";

std::string output_path = "../../../logs/test_lite_rvm_0.mp4";

std::string background_path = "../../../examples/lite/resources/test_lite_matting_bgr.jpg";

auto *rvm = new lite::cv::matting::RobustVideoMatting(onnx_path, 16); // 16 threads

std::vector<lite::types::MattingContent> contents;

// 1. video matting.

cv::Mat background = cv::imread(background_path);

rvm->detect_video(video_path, output_path, contents, false, 0.4f,

20, true, true, background);

delete rvm;

}

The output is:

More classes for matting (image matting, video matting, trimap/mask-free, trimap/mask-based)

auto *matting = new lite::cv::matting::RobustVideoMatting:(onnx_path); // WACV 2022.

auto *matting = new lite::cv::matting::MGMatting(onnx_path); // CVPR 2021

auto *matting = new lite::cv::matting::MODNet(onnx_path); // AAAI 2022

auto *matting = new lite::cv::matting::MODNetDyn(onnx_path); // AAAI 2022 Dynamic Shape Inference.

auto *matting = new lite::cv::matting::BackgroundMattingV2(onnx_path); // CVPR 2020

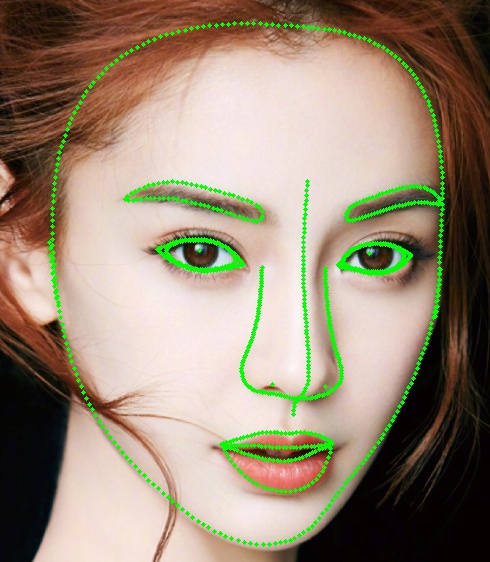

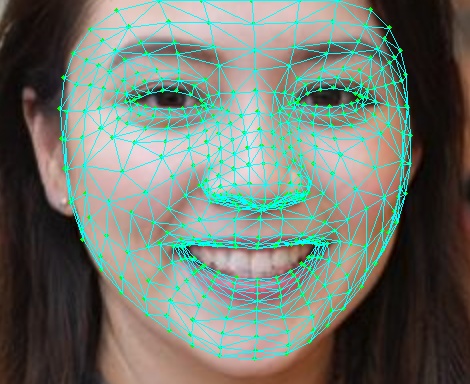

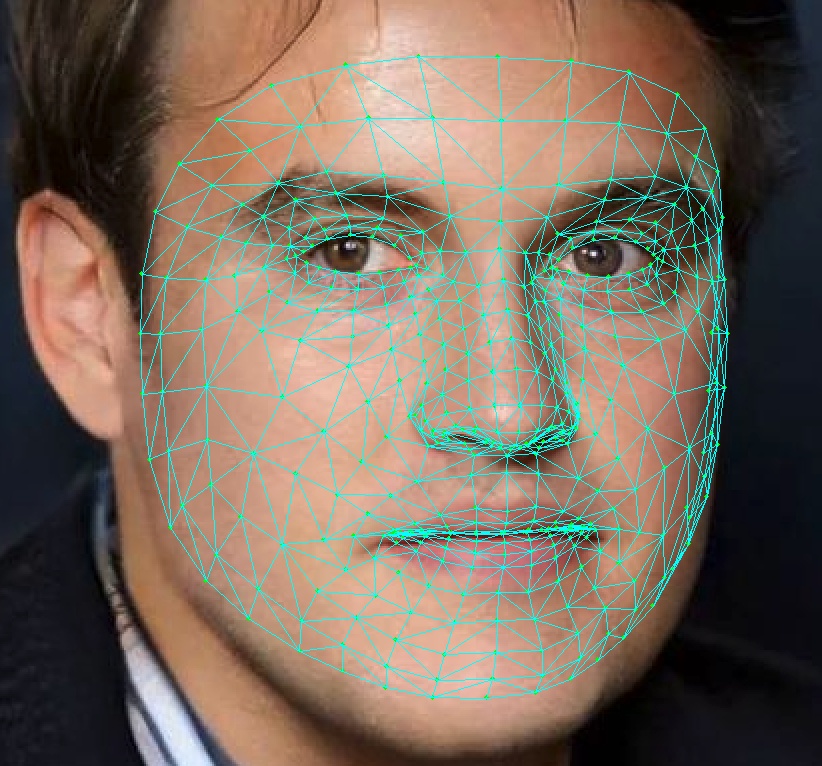

auto *matting = new lite::cv::matting::BackgroundMattingV2Dyn(onnx_path); // CVPR 2020 Dynamic Shape Inference.Example2: 1000 Facial Landmarks Detection using FaceLandmarks1000. Download model from Model-Zoo2.

#include "lite/lite.h"

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/FaceLandmark1000.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_face_landmarks_0.png";

std::string save_img_path = "../../../logs/test_lite_face_landmarks_1000.jpg";

auto *face_landmarks_1000 = new lite::cv::face::align::FaceLandmark1000(onnx_path);

lite::types::Landmarks landmarks;

cv::Mat img_bgr = cv::imread(test_img_path);

face_landmarks_1000->detect(img_bgr, landmarks);

lite::utils::draw_landmarks_inplace(img_bgr, landmarks);

cv::imwrite(save_img_path, img_bgr);

delete face_landmarks_1000;

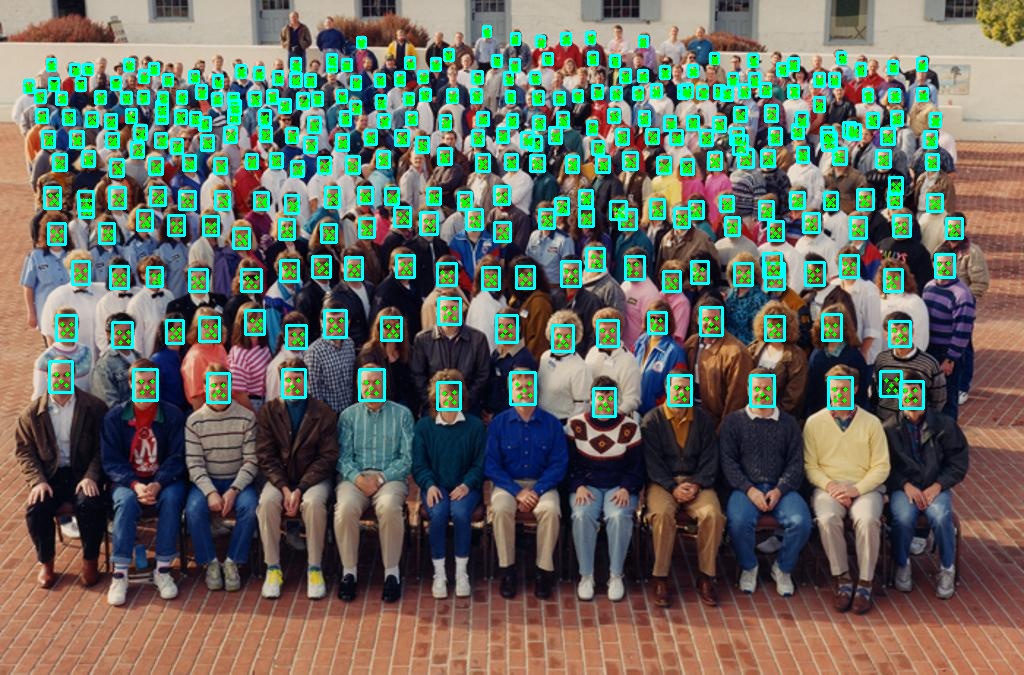

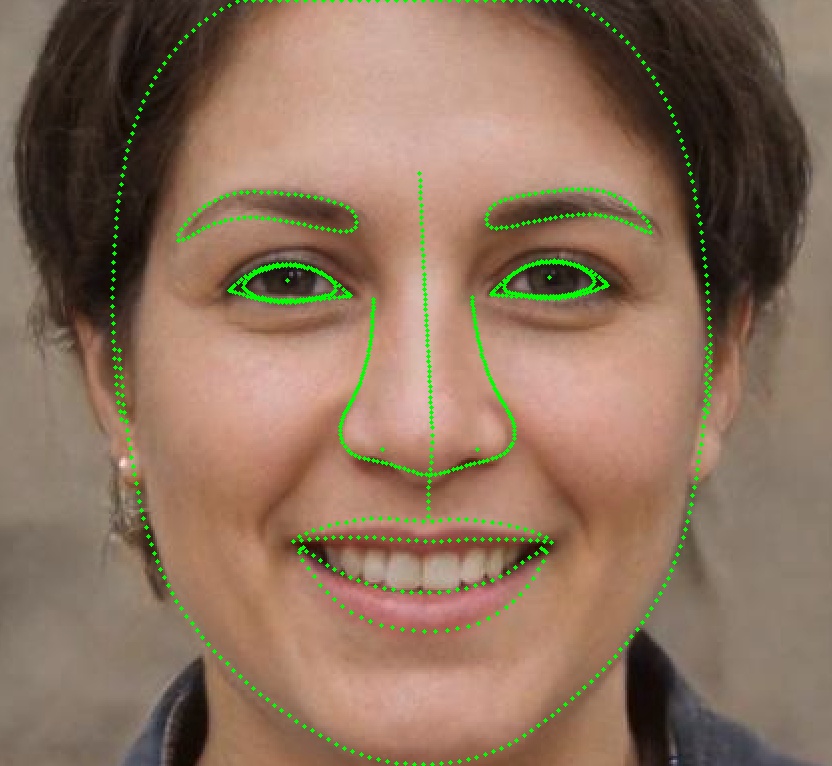

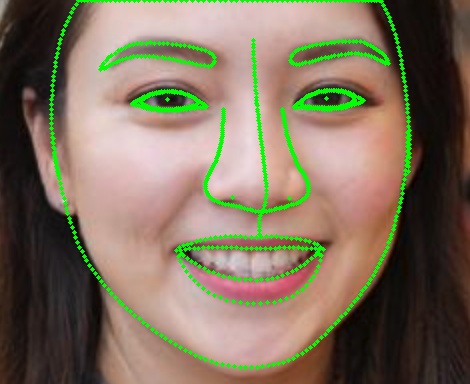

}The output is:

More classes for face alignment (68 points, 98 points, 106 points, 1000 points)

auto *align = new lite::cv::face::align::PFLD(onnx_path); // 106 landmarks, 1.0Mb only!

auto *align = new lite::cv::face::align::PFLD98(onnx_path); // 98 landmarks, 4.8Mb only!

auto *align = new lite::cv::face::align::PFLD68(onnx_path); // 68 landmarks, 2.8Mb only!

auto *align = new lite::cv::face::align::MobileNetV268(onnx_path); // 68 landmarks, 9.4Mb only!

auto *align = new lite::cv::face::align::MobileNetV2SE68(onnx_path); // 68 landmarks, 11Mb only!

auto *align = new lite::cv::face::align::FaceLandmark1000(onnx_path); // 1000 landmarks, 2.0Mb only!

auto *align = new lite::cv::face::align::PIPNet98(onnx_path); // 98 landmarks, CVPR2021!

auto *align = new lite::cv::face::align::PIPNet68(onnx_path); // 68 landmarks, CVPR2021!

auto *align = new lite::cv::face::align::PIPNet29(onnx_path); // 29 landmarks, CVPR2021!

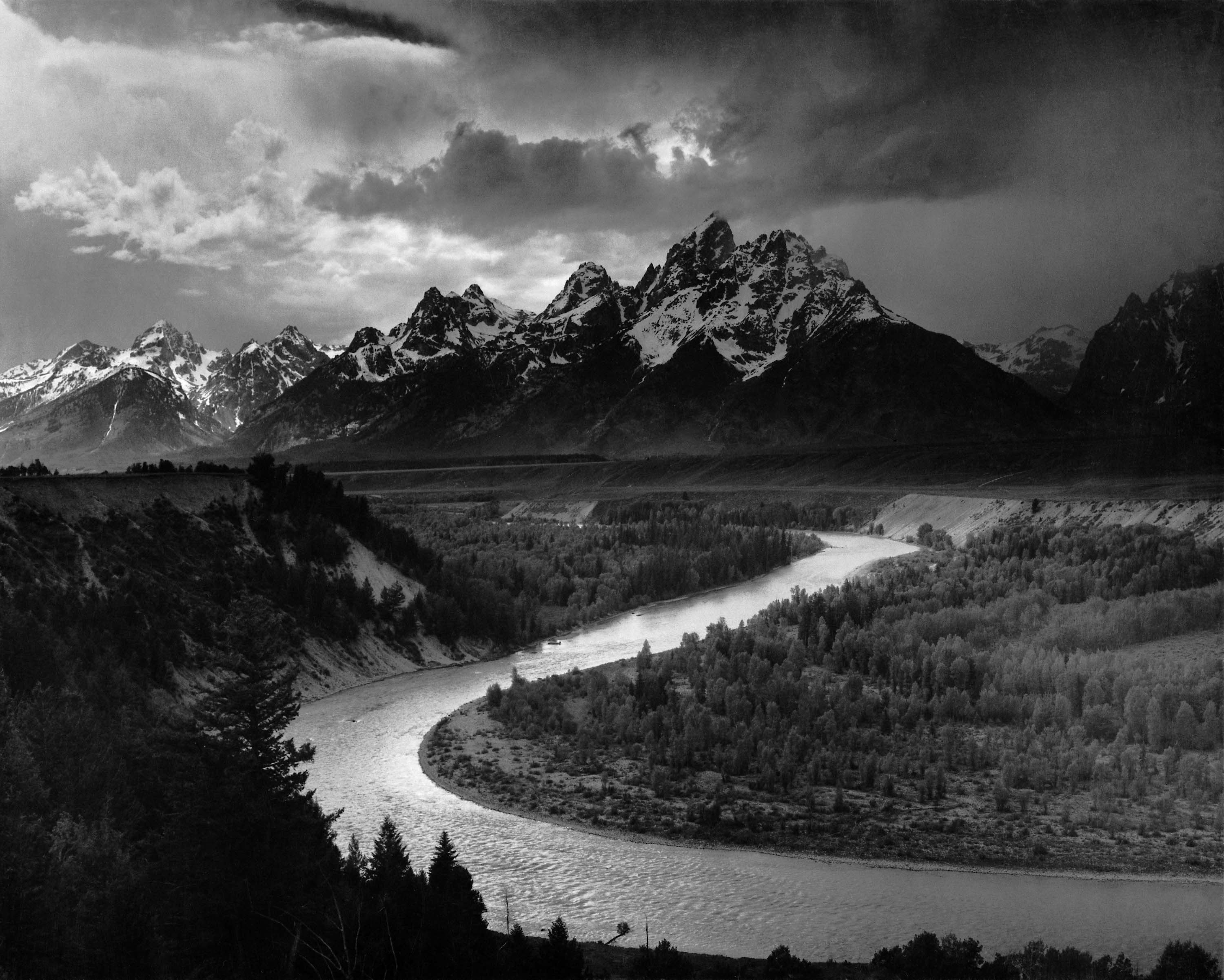

auto *align = new lite::cv::face::align::PIPNet19(onnx_path); // 19 landmarks, CVPR2021!Example3: Colorization using colorization. Download model from Model-Zoo2.

#include "lite/lite.h"

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/eccv16-colorizer.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_colorizer_1.jpg";

std::string save_img_path = "../../../logs/test_lite_eccv16_colorizer_1.jpg";

auto *colorizer = new lite::cv::colorization::Colorizer(onnx_path);

cv::Mat img_bgr = cv::imread(test_img_path);

lite::types::ColorizeContent colorize_content;

colorizer->detect(img_bgr, colorize_content);

if (colorize_content.flag) cv::imwrite(save_img_path, colorize_content.mat);

delete colorizer;

}

The output is:

More classes for colorization (gray to rgb)

auto *colorizer = new lite::cv::colorization::Colorizer(onnx_path);

Example4: Face Recognition using GlintArcFace.

#include "lite/lite.h"

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/ms1mv3_arcface_r100.onnx";

std::string test_img_path0 = "../../../examples/lite/resources/test_lite_faceid_0.png";

std::string test_img_path1 = "../../../examples/lite/resources/test_lite_faceid_1.png";

std::string test_img_path2 = "../../../examples/lite/resources/test_lite_faceid_2.png";

auto *glint_arcface = new lite::cv::faceid::GlintArcFace(onnx_path);

lite::types::FaceContent face_content0, face_content1, face_content2;

cv::Mat img_bgr0 = cv::imread(test_img_path0);

cv::Mat img_bgr1 = cv::imread(test_img_path1);

cv::Mat img_bgr2 = cv::imread(test_img_path2);

glint_arcface->detect(img_bgr0, face_content0);

glint_arcface->detect(img_bgr1, face_content1);

glint_arcface->detect(img_bgr2, face_content2);

if (face_content0.flag && face_content1.flag && face_content2.flag)

{

float sim01 = lite::utils::math::cosine_similarity<float>(

face_content0.embedding, face_content1.embedding);

float sim02 = lite::utils::math::cosine_similarity<float>(

face_content0.embedding, face_content2.embedding);

std::cout << "Detected Sim01: " << sim01 << " Sim02: " << sim02 << std::endl;

}

delete glint_arcface;

}

The output is:

Detected Sim01: 0.721159 Sim02: -0.0626267
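The two scores above compare face embeddings by cosine similarity: values near 1 mean the embeddings point the same way, values near 0 or below mean they do not. As a rough, standalone illustration of that measure (not the toolkit's lite::utils::math::cosine_similarity, whose internals are not shown here), the sketch below computes it for two plain float vectors. The name `cosine_sim` and the choice to return 0 for a zero-norm vector are assumptions made for this example.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical helper for illustration: cosine similarity of two embeddings
// of equal length. Returns 0 when either vector has zero norm, so that the
// function never divides by zero.
inline float cosine_sim(const std::vector<float> &a, const std::vector<float> &b)
{
    assert(a.size() == b.size());
    double dot = 0.0, norm_a = 0.0, norm_b = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i)
    {
        dot += static_cast<double>(a[i]) * b[i];
        norm_a += static_cast<double>(a[i]) * a[i];
        norm_b += static_cast<double>(b[i]) * b[i];
    }
    if (norm_a == 0.0 || norm_b == 0.0) return 0.0f;
    return static_cast<float>(dot / (std::sqrt(norm_a) * std::sqrt(norm_b)));
}
```

In practice a threshold on this score decides whether two faces match. A suitable threshold depends on the model and data, so it should be tuned rather than copied from an example.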

More classes for face recognition (face id vector extract)

auto *recognition = new lite::cv::faceid::GlintCosFace(onnx_path); // DeepGlint(insightface)

auto *recognition = new lite::cv::faceid::GlintArcFace(onnx_path); // DeepGlint(insightface)

auto *recognition = new lite::cv::faceid::GlintPartialFC(onnx_path); // DeepGlint(insightface)

auto *recognition = new lite::cv::faceid::FaceNet(onnx_path);

auto *recognition = new lite::cv::faceid::FocalArcFace(onnx_path);

auto *recognition = new lite::cv::faceid::FocalAsiaArcFace(onnx_path);

auto *recognition = new lite::cv::faceid::TencentCurricularFace(onnx_path); // Tencent(TFace)

auto *recognition = new lite::cv::faceid::TencentCifpFace(onnx_path); // Tencent(TFace)

auto *recognition = new lite::cv::faceid::CenterLossFace(onnx_path);

auto *recognition = new lite::cv::faceid::SphereFace(onnx_path);

auto *recognition = new lite::cv::faceid::PoseRobustFace(onnx_path);

auto *recognition = new lite::cv::faceid::NaivePoseRobustFace(onnx_path);

auto *recognition = new lite::cv::faceid::MobileFaceNet(onnx_path); // 3.8Mb only !

auto *recognition = new lite::cv::faceid::CavaGhostArcFace(onnx_path);

auto *recognition = new lite::cv::faceid::CavaCombinedFace(onnx_path);

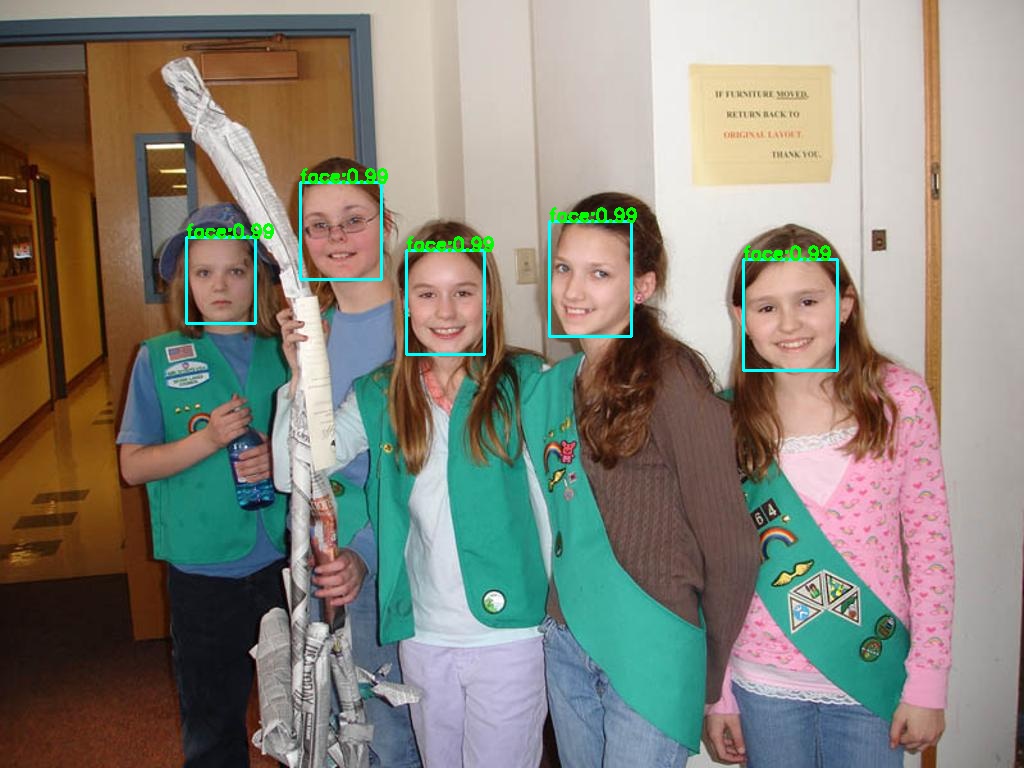

auto *recognition = new lite::cv::faceid::MobileSEFocalFace(onnx_path); // 4.5Mb only !Example5: Face Detection using SCRFD 2021. Download model from Model-Zoo2.

#include "lite/lite.h"

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/scrfd_2.5g_bnkps_shape640x640.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_face_detector.jpg";

std::string save_img_path = "../../../logs/test_lite_scrfd.jpg";

auto *scrfd = new lite::cv::face::detect::SCRFD(onnx_path);

std::vector<lite::types::BoxfWithLandmarks> detected_boxes;

cv::Mat img_bgr = cv::imread(test_img_path);

scrfd->detect(img_bgr, detected_boxes);

lite::utils::draw_boxes_with_landmarks_inplace(img_bgr, detected_boxes);

cv::imwrite(save_img_path, img_bgr);

std::cout << "Default Version Done! Detected Face Num: " << detected_boxes.size() << std::endl;

delete scrfd;

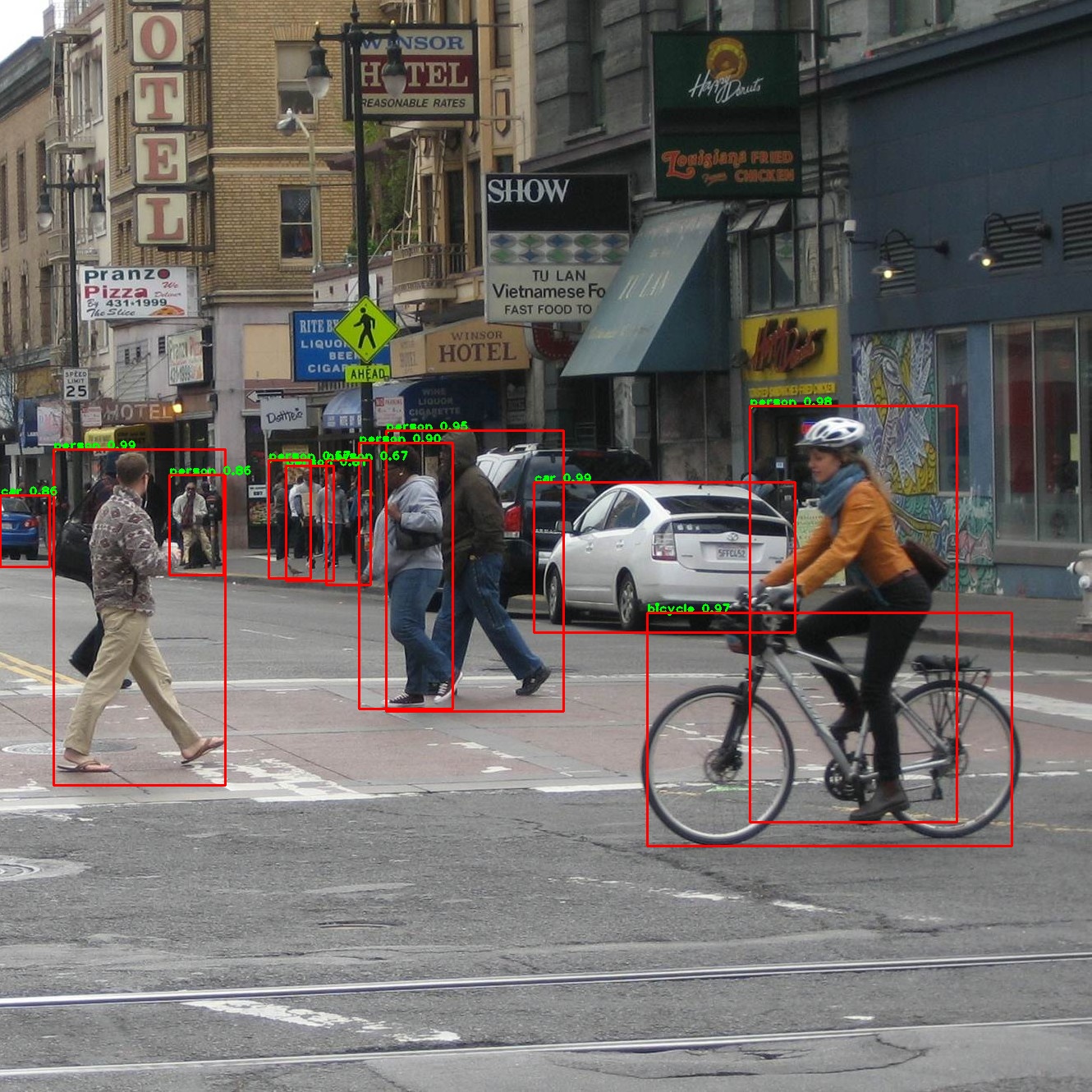

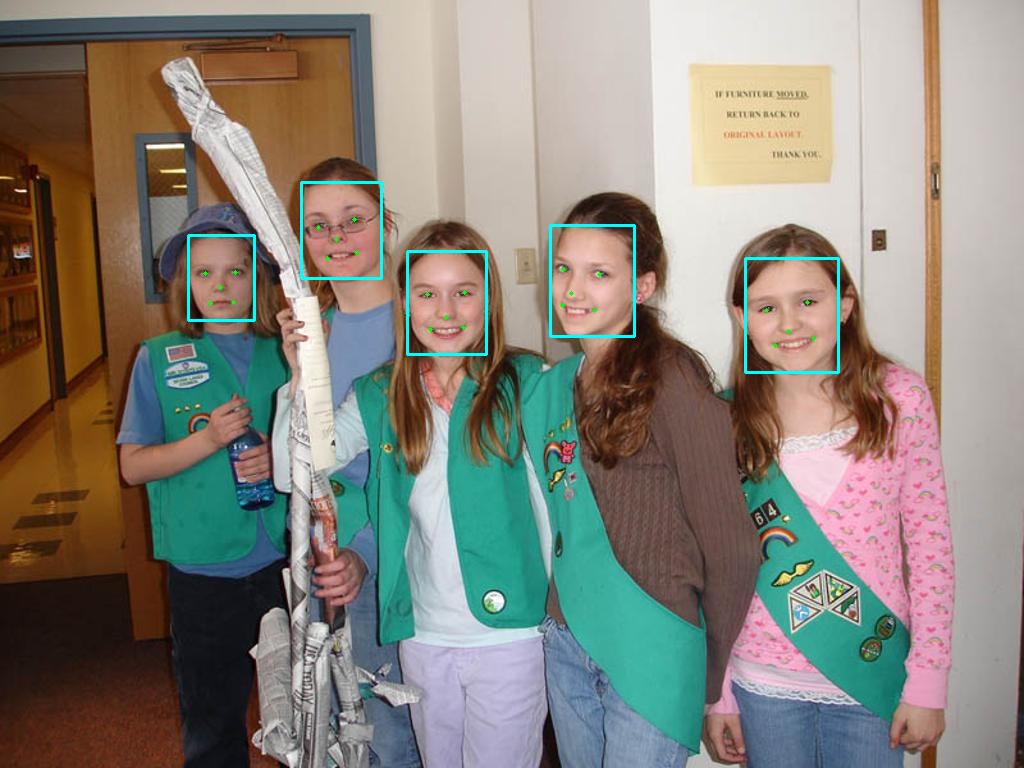

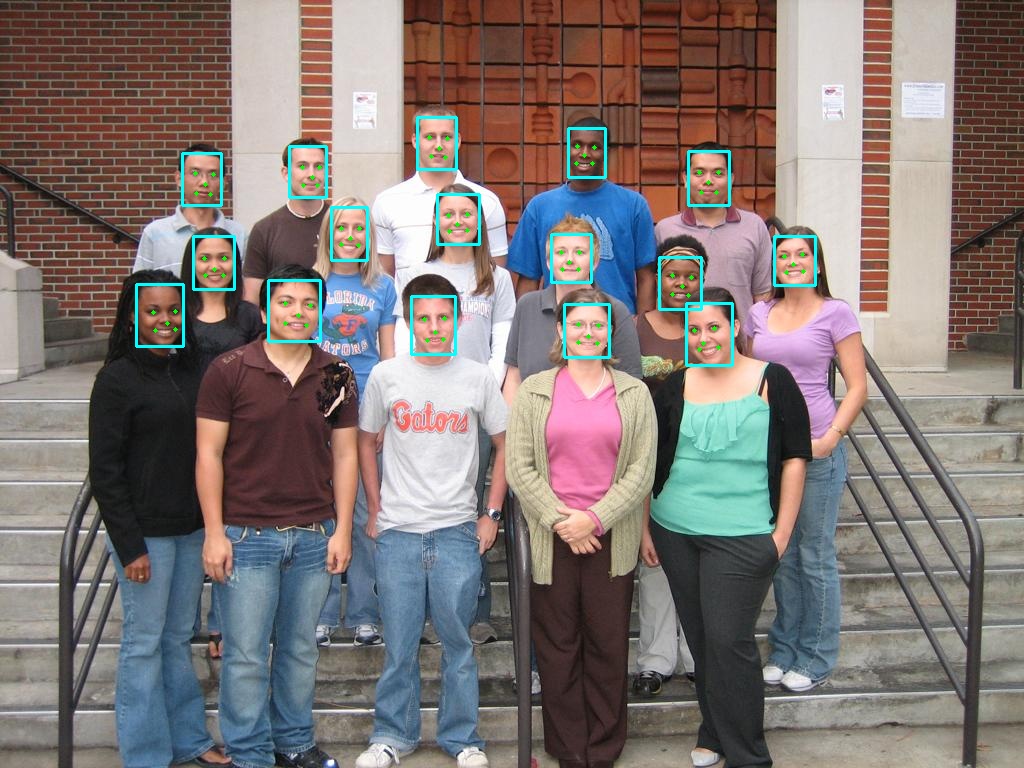

}The output is:

More classes for face detection (super fast face detection)

auto *detector = new lite::cv::face::detect::UltraFace(onnx_path); // 1.1Mb only !

auto *detector = new lite::cv::face::detect::FaceBoxes(onnx_path); // 3.8Mb only !

auto *detector = new lite::cv::face::detect::FaceBoxesv2(onnx_path); // 4.0Mb only !

auto *detector = new lite::cv::face::detect::RetinaFace(onnx_path); // 1.6Mb only ! CVPR2020

auto *detector = new lite::cv::face::detect::SCRFD(onnx_path); // 2.5Mb only ! CVPR2021, Super fast and accurate!!

auto *detector = new lite::cv::face::detect::YOLO5Face(onnx_path); // 2021, Super fast and accurate!!

auto *detector = new lite::cv::face::detect::YOLOv5BlazeFace(onnx_path); // 2021, Super fast and accurate!!

Example6: Object Segmentation using DeepLabV3ResNet101. Download model from Model-Zoo2.

#include "lite/lite.h"

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/deeplabv3_resnet101_coco.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_deeplabv3_resnet101.png";

std::string save_img_path = "../../../logs/test_lite_deeplabv3_resnet101.jpg";

auto *deeplabv3_resnet101 = new lite::cv::segmentation::DeepLabV3ResNet101(onnx_path, 16); // 16 threads

lite::types::SegmentContent content;

cv::Mat img_bgr = cv::imread(test_img_path);

deeplabv3_resnet101->detect(img_bgr, content);

if (content.flag)

{

cv::Mat out_img;

cv::addWeighted(img_bgr, 0.2, content.color_mat, 0.8, 0., out_img);

cv::imwrite(save_img_path, out_img);

if (!content.names_map.empty())

{

for (auto it = content.names_map.begin(); it != content.names_map.end(); ++it)

{

std::cout << it->first << " Name: " << it->second << std::endl;

}

}

}

delete deeplabv3_resnet101;

}

The output is:

More classes for object segmentation (general objects segmentation)

auto *segment = new lite::cv::segmentation::FCNResNet101(onnx_path);

auto *segment = new lite::cv::segmentation::DeepLabV3ResNet101(onnx_path);#include "lite/lite.h"

static void test_default()

{

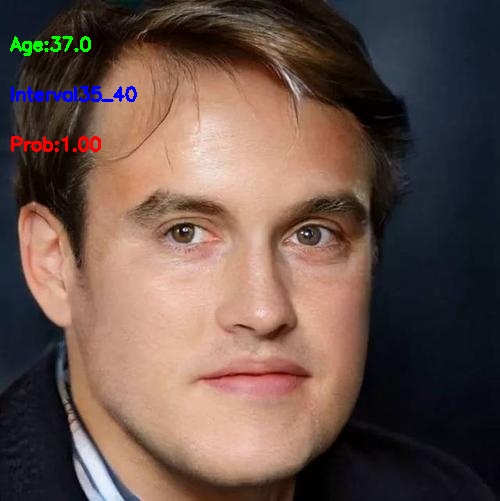

std::string onnx_path = "../../../hub/onnx/cv/ssrnet.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_ssrnet.jpg";

std::string save_img_path = "../../../logs/test_lite_ssrnet.jpg";

auto *ssrnet = new lite::cv::face::attr::SSRNet(onnx_path);

lite::types::Age age;

cv::Mat img_bgr = cv::imread(test_img_path);

ssrnet->detect(img_bgr, age);

lite::utils::draw_age_inplace(img_bgr, age);

cv::imwrite(save_img_path, img_bgr);

std::cout << "Default Version Done! Detected SSRNet Age: " << age.age << std::endl;

delete ssrnet;

}

The output is:

More classes for face attributes analysis (age, gender, emotion)

auto *attribute = new lite::cv::face::attr::AgeGoogleNet(onnx_path);

auto *attribute = new lite::cv::face::attr::GenderGoogleNet(onnx_path);

auto *attribute = new lite::cv::face::attr::EmotionFerPlus(onnx_path);

auto *attribute = new lite::cv::face::attr::VGG16Age(onnx_path);

auto *attribute = new lite::cv::face::attr::VGG16Gender(onnx_path);

auto *attribute = new lite::cv::face::attr::EfficientEmotion7(onnx_path); // 7 emotions, 15Mb only!

auto *attribute = new lite::cv::face::attr::EfficientEmotion8(onnx_path); // 8 emotions, 15Mb only!

auto *attribute = new lite::cv::face::attr::MobileEmotion7(onnx_path); // 7 emotions, 13Mb only!

auto *attribute = new lite::cv::face::attr::ReXNetEmotion7(onnx_path); // 7 emotions

auto *attribute = new lite::cv::face::attr::SSRNet(onnx_path); // age estimation, 190kb only!!!#include "lite/lite.h"

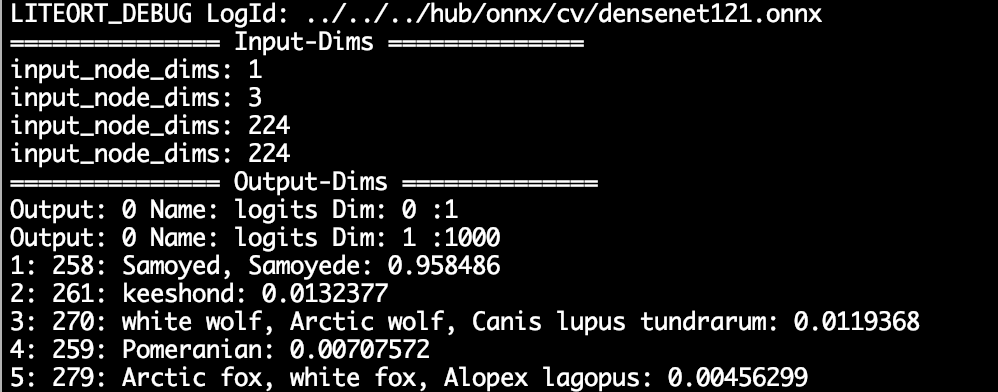

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/densenet121.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_densenet.jpg";

auto *densenet = new lite::cv::classification::DenseNet(onnx_path);

lite::types::ImageNetContent content;

cv::Mat img_bgr = cv::imread(test_img_path);

densenet->detect(img_bgr, content);

if (content.flag)

{

const unsigned int top_k = content.scores.size();

if (top_k > 0)

{

for (unsigned int i = 0; i < top_k; ++i)

std::cout << i + 1

<< ": " << content.labels.at(i)

<< ": " << content.texts.at(i)

<< ": " << content.scores.at(i)

<< std::endl;

}

}

delete densenet;

}

The output is:

More classes for image classification (1000 classes)

auto *classifier = new lite::cv::classification::EfficientNetLite4(onnx_path);

auto *classifier = new lite::cv::classification::ShuffleNetV2(onnx_path); // 8.7Mb only!

auto *classifier = new lite::cv::classification::GhostNet(onnx_path);

auto *classifier = new lite::cv::classification::HdrDNet(onnx_path);

auto *classifier = new lite::cv::classification::IBNNet(onnx_path);

auto *classifier = new lite::cv::classification::MobileNetV2(onnx_path); // 13Mb only!

auto *classifier = new lite::cv::classification::ResNet(onnx_path);

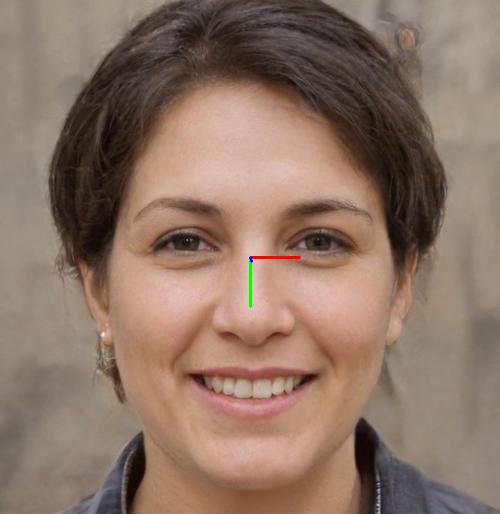

auto *classifier = new lite::cv::classification::ResNeXt(onnx_path);#include "lite/lite.h"

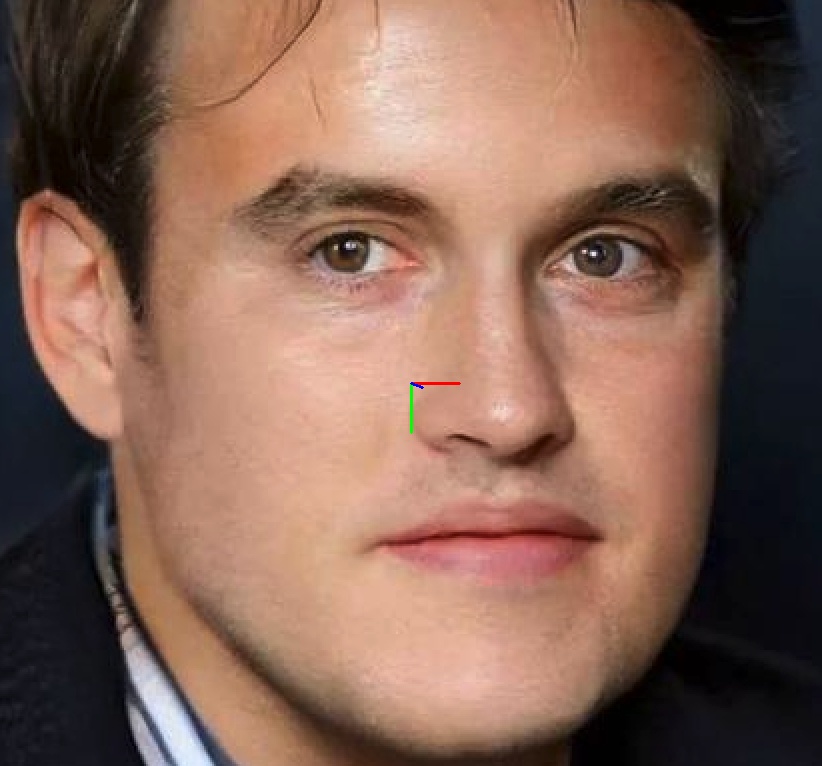

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/fsanet-var.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_fsanet.jpg";

std::string save_img_path = "../../../logs/test_lite_fsanet.jpg";

auto *fsanet = new lite::cv::face::pose::FSANet(onnx_path);

cv::Mat img_bgr = cv::imread(test_img_path);

lite::types::EulerAngles euler_angles;

fsanet->detect(img_bgr, euler_angles);

if (euler_angles.flag)

{

lite::utils::draw_axis_inplace(img_bgr, euler_angles);

cv::imwrite(save_img_path, img_bgr);

std::cout << "yaw:" << euler_angles.yaw << " pitch:" << euler_angles.pitch << " roll:" << euler_angles.roll << std::endl;

}

delete fsanet;

}

The output is:

More classes for head pose estimation (euler angle, yaw, pitch, roll)

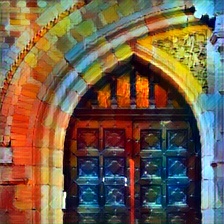

auto *pose = new lite::cv::face::pose::FSANet(onnx_path); // 1.2Mb only!Example10: Style Transfer using FastStyleTransfer. Download model from Model-Zoo2.

#include "lite/lite.h"

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/style-candy-8.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_fast_style_transfer.jpg";

std::string save_img_path = "../../../logs/test_lite_fast_style_transfer_candy.jpg";

auto *fast_style_transfer = new lite::cv::style::FastStyleTransfer(onnx_path);

lite::types::StyleContent style_content;

cv::Mat img_bgr = cv::imread(test_img_path);

fast_style_transfer->detect(img_bgr, style_content);

if (style_content.flag) cv::imwrite(save_img_path, style_content.mat);

delete fast_style_transfer;

}

The output is:

More classes for style transfer (neural style transfer, others)

auto *transfer = new lite::cv::style::FastStyleTransfer(onnx_path); // 6.4Mb only

#include "lite/lite.h"

static void test_default()

{

std::string onnx_path = "../../../hub/onnx/cv/minivision_head_seg.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_head_seg.png";

std::string save_img_path = "../../../logs/test_lite_head_seg.jpg";

auto *head_seg = new lite::cv::segmentation::HeadSeg(onnx_path, 4); // 4 threads

lite::types::HeadSegContent content;

cv::Mat img_bgr = cv::imread(test_img_path);

head_seg->detect(img_bgr, content);

if (content.flag) cv::imwrite(save_img_path, content.mask * 255.f);

delete head_seg;

}

The output is:

More classes for human segmentation (head, portrait, others)

auto *segment = new lite::cv::segmentation::HeadSeg(onnx_path);

Example12: Photo to Cartoon transfer using Photo2Cartoon. Download model from Model-Zoo2.

#include "lite/lite.h"

static void test_default()

{

std::string head_seg_onnx_path = "../../../hub/onnx/cv/minivision_head_seg.onnx";

std::string cartoon_onnx_path = "../../../hub/onnx/cv/minivision_female_photo2cartoon.onnx";

std::string test_img_path = "../../../examples/lite/resources/test_lite_female_photo2cartoon.jpg";

std::string save_mask_path = "../../../logs/test_lite_female_photo2cartoon_seg.jpg";

std::string save_cartoon_path = "../../../logs/test_lite_female_photo2cartoon_cartoon.jpg";

auto *head_seg = new lite::cv::segmentation::HeadSeg(head_seg_onnx_path, 4); // 4 threads

auto *female_photo2cartoon = new lite::cv::style::FemalePhoto2Cartoon(cartoon_onnx_path, 4); // 4 threads

lite::types::HeadSegContent head_seg_content;

cv::Mat img_bgr = cv::imread(test_img_path);

head_seg->detect(img_bgr, head_seg_content);

if (head_seg_content.flag && !head_seg_content.mask.empty())

{

cv::imwrite(save_mask_path, head_seg_content.mask * 255.f);

// Female Photo2Cartoon Style Transfer

lite::types::FemalePhoto2CartoonContent female_cartoon_content;

female_photo2cartoon->detect(img_bgr, head_seg_content.mask, female_cartoon_content);

if (female_cartoon_content.flag && !female_cartoon_content.cartoon.empty())

cv::imwrite(save_cartoon_path, female_cartoon_content.cartoon);

}

delete head_seg;

delete female_photo2cartoon;

}

The output is:

More classes for photo style transfer.

auto *transfer = new lite::cv::style::FemalePhoto2Cartoon(onnx_path);

The code of Lite.Ai.ToolKit is released under the GPL-3.0 License.

Many thanks to the following projects. All of Lite.Ai.ToolKit's models are sourced from these repos.

- RobustVideoMatting (🔥🔥🔥new!!↑)

- nanodet (🔥🔥🔥↑)

- YOLOX (🔥🔥🔥new!!↑)

- YOLOP (🔥🔥new!!↑)

- YOLOR (🔥🔥new!!↑)

- ScaledYOLOv4 (🔥🔥🔥↑)

- insightface (🔥🔥🔥↑)

- yolov5 (🔥🔥💥↑)

- TFace (🔥🔥↑)

- YOLOv4-pytorch (🔥🔥🔥↑)

- Ultra-Light-Fast-Generic-Face-Detector-1MB (🔥🔥🔥↑)

- headpose-fsanet-pytorch (🔥↑)

- pfld_106_face_landmarks (🔥🔥↑)

- onnx-models (🔥🔥🔥↑)

- SSR_Net_Pytorch (🔥↑)

- colorization (🔥🔥🔥↑)

- SUB_PIXEL_CNN (🔥↑)

- torchvision (🔥🔥🔥↑)

- facenet-pytorch (🔥↑)

- face.evoLVe.PyTorch (🔥🔥🔥↑)

- center-loss.pytorch (🔥🔥↑)

- sphereface_pytorch (🔥🔥↑)

- DREAM (🔥🔥↑)

- MobileFaceNet_Pytorch (🔥🔥↑)

- cavaface.pytorch (🔥🔥↑)

- CurricularFace (🔥🔥↑)

- face-emotion-recognition (🔥↑)

- face_recognition.pytorch (🔥🔥↑)

- PFLD-pytorch (🔥🔥↑)

- pytorch_face_landmark (🔥🔥↑)

- FaceLandmark1000 (🔥🔥↑)

- Pytorch_Retinaface (🔥🔥🔥↑)

- FaceBoxes (🔥🔥↑)

In addition, MNN, NCNN and TNN support for some models will be added in the future. Because of operator compatibility and other issues, there is no guarantee that every model supported by ONNXRuntime C++ will also run on MNN, NCNN and TNN. So, if you want to use all the models supported by this repo and don't care about a performance gap of 1~2ms, just keep ONNXRuntime as the default inference engine for this repo. Otherwise, follow the steps below to build with MNN, NCNN or TNN support.

- Change build.sh to set -DENABLE_MNN=ON, -DENABLE_NCNN=ON or -DENABLE_TNN=ON, such as

# INCLUDE_OPENCV: whether to package OpenCV into lite.ai.toolkit, default ON; otherwise, you need to set up OpenCV yourself.
# ENABLE_MNN: whether to build with MNN, default OFF; only some models are supported now.
# ENABLE_NCNN: whether to build with NCNN, default OFF; only some models are supported now.
# ENABLE_TNN: whether to build with TNN, default OFF; only some models are supported now.
cd build && cmake \
  -DCMAKE_BUILD_TYPE=MinSizeRel \
  -DINCLUDE_OPENCV=ON \
  -DENABLE_MNN=ON \
  -DENABLE_NCNN=OFF \
  -DENABLE_TNN=OFF \
  .. && make -j8

- Use the MNN, NCNN or TNN version of the interface (see demo), such as

auto *nanodet = new lite::mnn::cv::detection::NanoDet(mnn_path);

auto *nanodet = new lite::tnn::cv::detection::NanoDet(proto_path, model_path);

auto *nanodet = new lite::ncnn::cv::detection::NanoDet(param_path, bin_path);

How to add your own models and become a contributor? See CONTRIBUTING.zh.md.