This repository mostly provides a Windows-focused Gradio GUI for Kohya's Stable Diffusion trainers, but support for Linux is also provided through community contributions. macOS support is not great at the moment.

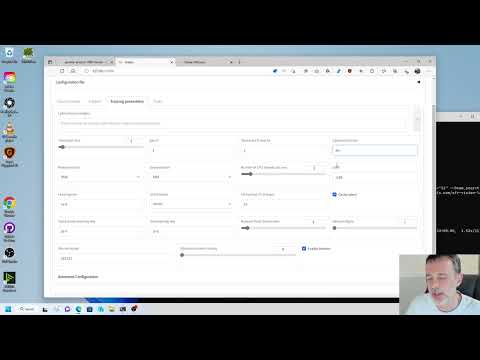

The GUI allows you to set the training parameters and generate and run the required CLI commands to train the model.

- Kohya's GUI

How to Create a LoRA Part 1: Dataset Preparation

How to Create a LoRA Part 2: Training the Model

Generate Studio Quality Realistic Photos By Kohya LoRA Stable Diffusion Training - Full Tutorial

First Ever SDXL Training With Kohya LoRA - Stable Diffusion XL Training Will Replace Older Models

Become A Master Of SDXL Training With Kohya SS LoRAs - Combine Power Of Automatic1111 & SDXL LoRAs

How To Do SDXL LoRA Training On RunPod With Kohya SS GUI Trainer & Use LoRAs With Automatic1111 UI

SDXL training is now available in the `sdxl` branch as an experimental feature.

Sep 3, 2023: The feature will be merged into the main branch soon. The following are the changes from the previous version.

- ControlNet-LLLite is added. See documentation for details.

- JPEG XL is supported. #786

- Peak memory usage is reduced. #791

- Input perturbation noise is added. See #798 for details.

- Dataset subsets now have `caption_prefix` and `caption_suffix` options. The strings are added to the beginning and the end of the captions before shuffling. You can specify the options in `.toml`.

- Other minor changes.

- Thanks for contributions from Isotr0py, vvern999, lansing and others!
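To illustrate the ordering described for `caption_prefix` and `caption_suffix`, here is a minimal sketch using a hypothetical `build_caption` helper (not part of sd-scripts). It assumes the prefix and suffix are concatenated exactly as given, so separators such as `", "` must be part of the strings, and that shuffling operates on comma-separated tags.

```python
import random


def build_caption(caption, prefix="", suffix="", shuffle=False, seed=None):
    """Hypothetical helper: attach a prefix/suffix, then optionally shuffle tags."""
    # Assumption: the strings are concatenated as given (include your own separators).
    caption = f"{prefix}{caption}{suffix}"
    if shuffle:
        # Shuffling happens after the prefix/suffix are attached,
        # so they are shuffled along with the rest of the tags.
        tags = [tag.strip() for tag in caption.split(",")]
        random.Random(seed).shuffle(tags)
        caption = ", ".join(tags)
    return caption


print(build_caption("1girl, smile", prefix="sks, ", suffix=", best quality"))
# sks, 1girl, smile, best quality
```

Because the prefix and suffix are attached first, in this sketch they take part in shuffling like any other tag.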

Aug 13, 2023:

- LoRA-FA is added experimentally. Specify the `--network_module networks.lora_fa` option instead of `--network_module networks.lora`. The trained model can be used as a normal LoRA model.

Aug 12, 2023:

- The default value of noise offset when omitted has been changed to 0 from 0.0357.

- Different learning rates for each U-Net block are now supported. Specify them with the `--block_lr` option: 23 values separated by commas, like `--block_lr 1e-3,1e-3 ... 1e-3`.

- The 23 values correspond to 0: time/label embed, 1-9: input blocks 0-8, 10-12: mid blocks 0-2, 13-21: output blocks 0-8, 22: out.

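To avoid miscounting the 23 positions, a pair of hypothetical helpers (not shipped with the scripts) can build the `--block_lr` string and name each position using the mapping listed above. The values are formatted with Python's `g` format, so `1e-3` is written as `0.001`.

```python
NUM_BLOCK_LRS = 23


def block_lr_arg(default_lr, overrides=None):
    """Build the comma-separated value for --block_lr (hypothetical helper)."""
    lrs = [default_lr] * NUM_BLOCK_LRS
    for index, lr in (overrides or {}).items():
        if not 0 <= index < NUM_BLOCK_LRS:
            raise ValueError(f"block index must be 0-{NUM_BLOCK_LRS - 1}, got {index}")
        lrs[index] = lr
    return ",".join(f"{lr:g}" for lr in lrs)


def block_name(index):
    """Name the U-Net block controlled by a --block_lr position."""
    if index == 0:
        return "time/label embed"
    if 1 <= index <= 9:
        return f"input block {index - 1}"
    if 10 <= index <= 12:
        return f"mid block {index - 10}"
    if 13 <= index <= 21:
        return f"output block {index - 13}"
    if index == 22:
        return "out"
    raise ValueError(f"block index must be 0-{NUM_BLOCK_LRS - 1}, got {index}")


# Same rate everywhere except a lower rate for the final "out" block
print(block_lr_arg(1e-3, {22: 5e-4}))
print(block_name(13))  # output block 0
```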

Aug 6, 2023:

- SAI Model Spec metadata is now supported partially.

- `hash_sha256` is not supported yet.

- The main items are set automatically.

- You can set the title, author, description, license and tags with the `--metadata_xxx` options in each training script.

- Merging scripts also support minimum SAI Model Spec metadata. See the help message for the usage.

- Metadata editor will be available soon.

- SDXL LoRA now uses `sdxl_base_v1-0` for the `ss_base_model_version` metadata item, instead of `v0-9`.

Aug 4, 2023:

- `bitsandbytes` is now optional. Please install it if you want to use it. The instructions are in a later section.

- `albumentations` is no longer required.

- An issue with the pooled output for Textual Inversion training is fixed.

- The `--v_pred_like_loss ratio` option is added. This option adds a loss similar to the v-prediction loss in SDXL training. `0.1` means that 10% of the v-prediction loss is added. The default value is None (disabled).

- In v-prediction, the loss is higher in the early timesteps (near the noise). This option can be used to increase the loss in the early timesteps.

- Arbitrary options can be passed to Diffusers' schedulers, for example `--lr_scheduler_args "lr_end=1e-8"`.

- `sdxl_gen_img.py` supports batch size > 1.

- ControlNet is fixed to work with attention couple and regional LoRA in `gen_img_diffusers.py`.
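The arithmetic behind "10% of the v-prediction loss" can be sketched as below. This is an assumption-based illustration, not the script's code: it uses the standard v-parameterization (x_t = αx₀ + σε, v = αε − σx₀, with α² + σ² = 1), under which an epsilon-prediction error maps to a v error scaled by 1/α, so the squared error grows by 1/α² = (SNR + 1) / SNR. Low SNR corresponds to the early, noisy timesteps, which is where the extra term is largest.

```python
def v_pred_like_total_loss(eps_loss, snr, ratio=None):
    """Add `ratio` times a v-prediction-like loss to an epsilon-prediction loss.

    Sketch assuming MSE_v = MSE_eps * (snr + 1) / snr (standard v-parameterization).
    """
    if ratio is None:  # option omitted: disabled
        return eps_loss
    v_like_loss = eps_loss * (snr + 1.0) / snr
    return eps_loss + ratio * v_like_loss


# Same epsilon loss at a very noisy timestep (low SNR) vs. a nearly clean one (high SNR)
print(v_pred_like_total_loss(1.0, snr=0.01, ratio=0.1))
print(v_pred_like_total_loss(1.0, snr=100.0, ratio=0.1))
```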

Summary of the features:

- `tools/cache_latents.py` is added. This script can be used to cache the latents to disk in advance.

- The options are almost the same as `sdxl_train.py`. See the help message for the usage.

- Please launch the script as follows:

```
accelerate launch --num_cpu_threads_per_process 1 tools/cache_latents.py ...
```

- This script should work with multi-GPU, but it is not tested in my environment.

- `tools/cache_text_encoder_outputs.py` is added. This script can be used to cache the text encoder outputs to disk in advance.

- The options are almost the same as `cache_latents.py` and `sdxl_train.py`. See the help message for the usage.


- `sdxl_train.py` is a script for SDXL fine-tuning. The usage is almost the same as `fine_tune.py`, but it also supports DreamBooth datasets.

- The `--full_bf16` option is added. Thanks to KohakuBlueleaf!

- This option enables full bfloat16 training (including gradients). This option is useful to reduce GPU memory usage.

- However, bitsandbytes==0.35 doesn't seem to support this. Please use a newer version of bitsandbytes or another optimizer.

- I cannot find bitsandbytes>0.35.0 that works correctly on Windows.

- In addition, the full bfloat16 training might be unstable. Please use it at your own risk.

- `prepare_buckets_latents.py` now supports SDXL fine-tuning.

- `sdxl_train_network.py` is a script for LoRA training for SDXL. The usage is almost the same as `train_network.py`.

Both scripts have the following additional options:

- `--cache_text_encoder_outputs` and `--cache_text_encoder_outputs_to_disk`: Cache the outputs of the text encoders. This option is useful to reduce GPU memory usage. It cannot be used with the options for shuffling or dropping the captions.

- `--no_half_vae`: Disable the half-precision (mixed-precision) VAE. The SDXL VAE seems to produce NaNs in some cases. This option is useful to avoid the NaNs.
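The restriction on caption shuffling and dropout follows from what is being cached: the text encoder outputs are computed in advance from fixed captions, so options that change the caption from step to step cannot be reflected in them. A hypothetical pre-flight check could look like this; the argument names are modeled on sd-scripts' caption options but are assumptions here.

```python
def check_caption_options(cache_text_encoder_outputs, shuffle_caption=False,
                          caption_dropout_rate=0.0, caption_tag_dropout_rate=0.0):
    """Hypothetical pre-flight check for options that conflict with caching."""
    if not cache_text_encoder_outputs:
        return
    conflicts = []
    if shuffle_caption:
        conflicts.append("shuffle_caption")
    if caption_dropout_rate > 0:
        conflicts.append("caption_dropout_rate")
    if caption_tag_dropout_rate > 0:
        conflicts.append("caption_tag_dropout_rate")
    if conflicts:
        # The cached outputs were computed from fixed captions, so per-step
        # caption changes could not be reflected in them.
        raise ValueError("--cache_text_encoder_outputs cannot be combined with: "
                         + ", ".join(conflicts))


check_caption_options(True)  # caching alone is fine
check_caption_options(False, shuffle_caption=True)  # shuffling without caching is fine
```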

- Image generation during training is now available. The `--no_half_vae` option also works to avoid black images.

- The `--weighted_captions` option is not supported yet for either script.

- The `--min_timestep` and `--max_timestep` options are added to each training script. These options can be used to train the U-Net with different timesteps. The default values are 0 and 1000.
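Conceptually, these two options narrow the range from which each sample's noise timestep is drawn. The sketch below assumes uniform sampling with an exclusive upper bound (so the defaults cover 0 to 999); `sample_timesteps` is a hypothetical name, not a function in the scripts.

```python
import random


def sample_timesteps(batch_size, min_timestep=0, max_timestep=1000, rng=None):
    """Draw one timestep per sample, uniformly from [min_timestep, max_timestep)."""
    if not 0 <= min_timestep < max_timestep:
        raise ValueError("need 0 <= min_timestep < max_timestep")
    rng = rng or random.Random()
    return [rng.randrange(min_timestep, max_timestep) for _ in range(batch_size)]


# Restrict training to the lower half of the timestep range
print(sample_timesteps(4, min_timestep=0, max_timestep=500, rng=random.Random(0)))
```

With the usual diffusion convention that smaller timesteps carry less noise, a range such as 0 to 500 would concentrate training on the less noisy steps.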

- `sdxl_train_textual_inversion.py` is a script for Textual Inversion training for SDXL. The usage is almost the same as `train_textual_inversion.py`.

- `--cache_text_encoder_outputs` is not supported.

- `token_string` must currently consist of letters only, due to a limitation of the open-clip tokenizer.

- There are two options for captions:

- Training with captions. All captions must include the token string. The token string is replaced with multiple tokens.

- Use the `--use_object_template` or `--use_style_template` option. The captions are generated from the template. The existing captions are ignored.

- See below for the format of the embeddings.

- `sdxl_gen_img.py` is added. This script can be used to generate images with SDXL, including LoRA. See the help message for the usage.

- Textual Inversion is supported, but the name for the embeds in the caption becomes alphabet-only. For example, `neg_hand_v1.safetensors` can be activated with `neghandv`.

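The filename-to-name rule for Textual Inversion embeds in `sdxl_gen_img.py` can be expressed as a one-line transformation. This sketch assumes that every character other than an ASCII letter is simply dropped from the file stem, which reproduces the `neghandv` example; `embedding_trigger` is a hypothetical name.

```python
import re
from pathlib import Path


def embedding_trigger(filename):
    """Alphabet-only name for an embedding file (assumed rule: drop non-letters)."""
    return re.sub(r"[^A-Za-z]", "", Path(filename).stem)


print(embedding_trigger("neg_hand_v1.safetensors"))  # neghandv
```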

- `requirements.txt` is updated to support SDXL training.

- The default resolution of SDXL is 1024x1024.

- Fine-tuning can be done with 24GB of GPU memory at a batch size of 1. For a 24GB GPU, the following options are recommended:

- Train U-Net only.

- Use gradient checkpointing.

- Use the `--cache_text_encoder_outputs` option and cache latents.

- Use the Adafactor optimizer. RMSprop 8bit or Adagrad 8bit may work. AdamW 8bit doesn't seem to work.

- LoRA training can be done with 8GB of GPU memory (10GB recommended). To reduce GPU memory usage, the following options are recommended:

- Train U-Net only.

- Use gradient checkpointing.

- Use the `--cache_text_encoder_outputs` option and cache latents.

- Use one of the 8bit optimizers or the Adafactor optimizer.

- Use a lower dim (4 to 8 for an 8GB GPU).

- The `--network_train_unet_only` option is highly recommended for SDXL LoRA. Because SDXL has two text encoders, training them may produce unexpected results.

- PyTorch 2 seems to use slightly less GPU memory than PyTorch 1.

- `--bucket_reso_steps` can be set to 32 instead of the default value of 64. Values smaller than 32 will not work for SDXL training.
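`--bucket_reso_steps` sets the granularity of the bucket resolutions. The following is a simplified sketch, not the script's exact bucketing algorithm, that enumerates bucket sizes whose sides are multiples of the step and whose area stays within a 1024x1024 budget. The `min_size` and `max_size` defaults are illustrative assumptions.

```python
import math


def bucket_resolutions(max_reso=(1024, 1024), min_size=256, max_size=2048, steps=64):
    """Simplified sketch: (width, height) buckets with sides divisible by `steps`."""
    if min_size % steps:
        raise ValueError("min_size must be a multiple of steps")
    max_area = max_reso[0] * max_reso[1]
    # Square bucket closest to the area budget
    side = int(math.sqrt(max_area) // steps) * steps
    resos = {(side, side)}
    width = min_size
    while width <= max_size:
        # Largest multiple-of-steps height that keeps width * height <= max_area
        height = min(max_size, (max_area // width) // steps * steps)
        if height >= min_size:
            resos.add((width, height))
            resos.add((height, width))
        width += steps
    return sorted(resos)


print(len(bucket_resolutions(steps=64)), len(bucket_resolutions(steps=32)))
```

A step of 32 yields more, finer-grained aspect ratios than 64, so images need less cropping to fit a bucket.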

Example of the optimizer settings for Adafactor with a fixed learning rate:

```toml
optimizer_type = "adafactor"
optimizer_args = [ "scale_parameter=False", "relative_step=False", "warmup_init=False" ]
lr_scheduler = "constant_with_warmup"
lr_warmup_steps = 100
learning_rate = 4e-7 # SDXL original learning rate
```

🚦 WIP 🚦

This Colab notebook was not created or maintained by me; however, it appears to function effectively. The source can be found at: https://github.com/camenduru/kohya_ss-colab.

I would like to express my gratitude to camenduru for their valuable contribution. If you encounter any issues with the Colab notebook, please report them on their repository.

| Colab | Info |
|---|---|
| kohya_ss_gui_colab |

To install the necessary dependencies on a Windows system, follow these steps:

- Install Python 3.10.

- During the installation process, ensure that you select the option to add Python to the 'PATH' environment variable.

- Install Git.

- Install the Visual Studio 2015, 2017, 2019, and 2022 redistributable.

To set up the project, follow these steps:

- Open a terminal and navigate to the desired installation directory.

- Clone the repository by running the following command:

```
git clone https://github.com/bmaltais/kohya_ss.git
```

- Change into the `kohya_ss` directory:

```
cd kohya_ss
```

- Run the setup script by executing the following command:

```
.\setup.bat
```

During the accelerate config step, use the default values proposed during the configuration unless you know your hardware demands otherwise. The amount of VRAM on your GPU does not affect the values used.

The following steps are optional but can improve the learning speed for owners of NVIDIA 30X0/40X0 GPUs. These steps enable larger training batch sizes and faster training speeds.

Please note that the CUDNN 8.6 DLLs needed for this process cannot be hosted on GitHub due to file size limitations. You can download them here to boost sample generation speed (almost 50% on a 4090 GPU). After downloading the ZIP file, follow the installation steps below:

- Unzip the downloaded file and place the `cudnn_windows` folder in the root directory of the `kohya_ss` repository.

- Run `.\setup.bat` and select the option to install cuDNN.

To install the necessary dependencies on a Linux system, ensure that you fulfill the following requirements:

- Ensure that `venv` support is pre-installed. You can install it on Ubuntu 22.04 using the command: `apt install python3.10-venv`

- Install the cuDNN drivers by following the instructions provided in this link.

- Make sure you have Python version 3.10.6 or higher (but lower than 3.11.0) installed on your system.

- If you are using WSL2, set the `LD_LIBRARY_PATH` environment variable by executing the following command:

```
export LD_LIBRARY_PATH=/usr/lib/wsl/lib/
```

To set up the project on Linux or macOS, perform the following steps:

- Open a terminal and navigate to the desired installation directory.

- Clone the repository by running the following command:

```
git clone https://github.com/bmaltais/kohya_ss.git
```

- Change into the `kohya_ss` directory:

```
cd kohya_ss
```

- If you encounter permission issues, make the `setup.sh` script executable by running the following command:

```
chmod +x ./setup.sh
```

- Run the setup script by executing the following command:

```
./setup.sh
```

Note: If you need additional options or information about the Runpod environment, you can use `setup.sh -h` or `setup.sh --help` to display the help message.

The default installation location on Linux is the directory where the script is located. If a previous installation is detected in that location, the setup will proceed there. Otherwise, the installation will fall back to /opt/kohya_ss. If /opt is not writable, the fallback location will be $HOME/kohya_ss. Finally, if none of the previous options are viable, the installation will be performed in the current directory.
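The Linux fallback order can be summarized in a short sketch. The directory checks are passed in as functions so the decision logic can be tried without touching the filesystem, and "viable" is interpreted as "writable" (an assumption). `choose_install_dir` and its parameters are hypothetical and do not mirror `setup.sh` line by line.

```python
import os
from pathlib import Path


def choose_install_dir(script_dir, has_previous_install, is_writable):
    """Pick an install location following the documented Linux fallback order."""
    script_dir = Path(script_dir)
    # 1. Reuse the script's directory if a previous installation is found there.
    if has_previous_install(script_dir):
        return script_dir
    # 2. Otherwise prefer /opt/kohya_ss when /opt is writable.
    if is_writable(Path("/opt")):
        return Path("/opt/kohya_ss")
    # 3. Then fall back to $HOME/kohya_ss.
    home = Path.home()
    if is_writable(home):
        return home / "kohya_ss"
    # 4. As a last resort, install in the current directory.
    return Path.cwd()


# Example with real write-permission checks and no previous installation
print(choose_install_dir(".", lambda d: False, lambda d: os.access(d, os.W_OK)))
```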

For macOS and other non-Linux systems, the installation process will attempt to detect the previous installation directory based on where the script is run. If a previous installation is not found, the default location will be $HOME/kohya_ss. You can override this behavior by specifying a custom installation directory using the -d or --dir option when running the setup script.

If you choose to use the interactive mode, the default values for the accelerate configuration screen will be "This machine," "None," and "No" for the remaining questions. These default answers are the same as the Windows installation.

To install the necessary components for Runpod and run kohya_ss, follow these steps:

- Select the Runpod pytorch 2.0.1 template. This is important. Other templates may not work.

- SSH into the Runpod.

- Clone the repository by running the following commands:

```
cd /workspace
git clone https://github.com/bmaltais/kohya_ss.git
```

- Run the setup script:

```
cd kohya_ss
./setup-runpod.sh
```

- Run the GUI with:

```
./gui.sh --share --headless
```

or with this if you expose port 7860 directly via the Runpod configuration:

```
./gui.sh --listen=0.0.0.0 --headless
```

- Connect to the public URL displayed after the installation process is completed.

To run from a pre-built Runpod template you can:

- Open the Runpod template by clicking on https://runpod.io/gsc?template=ya6013lj5a&ref=w18gds2n

- Deploy the template on the desired host.

- Once deployed, connect to the Runpod on HTTP port 3010 to reach the kohya_ss GUI. You can also connect to auto1111 on HTTP port 3000.

If you prefer to use Docker, follow the instructions below:

- Ensure that you have Git and Docker installed on your Windows or Linux system.

- Open your OS shell (Command Prompt or Terminal) and run the following commands:

```
git clone https://github.com/bmaltais/kohya_ss.git
cd kohya_ss
docker compose build
docker compose run --service-ports kohya-ss-gui
```

Note: The initial run may take up to 20 minutes to complete.

Please be aware of the following limitations when using Docker:

- All training data must be placed in the `dataset` subdirectory, as the Docker container cannot access files from other directories.

- The file picker feature is not functional. You need to manually set the folder path and config file path.

- Dialogs may not work as expected, and it is recommended to use unique file names to avoid conflicts.

- There is no built-in auto-update support. To update the system, you must run update scripts outside of Docker and rebuild using `docker compose build`.

If you are running Linux, an alternative Docker container port with fewer limitations is available here.


You may want to use the following Dockerfile repos to build the images:

- Standalone Kohya_ss template: https://github.com/ashleykleynhans/kohya-docker

- Auto1111 + Kohya_ss GUI template: https://github.com/ashleykleynhans/stable-diffusion-docker

To upgrade your installation to a new version, follow the instructions below.

If a new release becomes available, you can upgrade your repository by running the following commands from the root directory of the project:

- Pull the latest changes from the repository:

```
git pull
```

- Run the setup script:

```
.\setup.bat
```

To upgrade your installation on Linux or macOS, follow these steps:

- Open a terminal and navigate to the root directory of the project.

- Pull the latest changes from the repository:

```
git pull
```

- Refresh and update everything:

```
./setup.sh
```

To launch the GUI service, you can use the provided scripts or run the `kohya_gui.py` script directly. Use the command line arguments listed below to configure the underlying service.

- `--listen`: Specify the IP address to listen on for connections to Gradio.

- `--username`: Set a username for authentication.

- `--password`: Set a password for authentication.

- `--server_port`: Define the port to run the server listener on.

- `--inbrowser`: Open the Gradio UI in a web browser.

- `--share`: Share the Gradio UI.

- `--language`: Set a custom language.

On Windows, you can use either the `gui.ps1` or `gui.bat` script located in the root directory. Choose the script that suits your preference and run it in a terminal, providing the desired command line arguments. Here's an example:

```
gui.ps1 --listen 127.0.0.1 --server_port 7860 --inbrowser --share
```

or

```
gui.bat --listen 127.0.0.1 --server_port 7860 --inbrowser --share
```

To launch the GUI on Linux or macOS, run the `gui.sh` script located in the root directory. Provide the desired command line arguments as follows:

```
gui.sh --listen 127.0.0.1 --server_port 7860 --inbrowser --share
```

For specific instructions on using the Dreambooth solution, please refer to the Dreambooth README.

For specific instructions on using the Finetune solution, please refer to the Finetune README.

For specific instructions on training a network, please refer to the Train network README.

To train a LoRA, you can currently use the train_network.py code. You can create a LoRA network by using the all-in-one GUI.

Once you have created the LoRA network, you can generate images using auto1111 by installing this extension.

The following are the names of LoRA types used in this repository:

- LoRA-LierLa: LoRA for Linear layers and Conv2d layers with a 1x1 kernel.

- LoRA-C3Lier: LoRA for Conv2d layers with a 3x3 kernel, in addition to LoRA-LierLa.

LoRA-LierLa is the default LoRA type for `train_network.py` (without the `conv_dim` network argument). You can use LoRA-LierLa with our extension for AUTOMATIC1111's Web UI or the built-in LoRA feature of the Web UI.

To use LoRA-C3Lier with the Web UI, please use our extension.
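The two LoRA type definitions can be restated as a small classification function. This is an illustrative sketch rather than code from `train_network.py`; `lora_type_for_layer` is hypothetical, and its `conv_dim` parameter stands in for the network argument of the same name (left unset, only LoRA-LierLa layers are covered).

```python
def lora_type_for_layer(kind, kernel_size=None, conv_dim=None):
    """Return the LoRA type that covers a layer, or None if no LoRA is applied.

    kind: "Linear" or "Conv2d"; kernel_size: (h, w) for Conv2d layers.
    """
    if kind == "Linear":
        return "LoRA-LierLa"
    if kind == "Conv2d" and tuple(kernel_size) == (1, 1):
        return "LoRA-LierLa"
    if kind == "Conv2d" and tuple(kernel_size) == (3, 3):
        # 3x3 convolutions are only trained when conv_dim is given (LoRA-C3Lier)
        return "LoRA-C3Lier" if conv_dim else None
    return None


print(lora_type_for_layer("Conv2d", (3, 3)))  # None
print(lora_type_for_layer("Conv2d", (3, 3), conv_dim=4))  # LoRA-C3Lier
```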

A prompt file might look like this, for example:

```
# prompt 1
masterpiece, best quality, (1girl), in white shirts, upper body, looking at viewer, simple background --n low quality, worst quality, bad anatomy, bad composition, poor, low effort --w 768 --h 768 --d 1 --l 7.5 --s 28

# prompt 2
masterpiece, best quality, 1boy, in business suit, standing at street, looking back --n (low quality, worst quality), bad anatomy, bad composition, poor, low effort --w 576 --h 832 --d 2 --l 5.5 --s 40
```

Lines beginning with `#` are comments. You can specify options for the generated image with options like `--n` after the prompt. The following options can be used:

- `--n`: Negative prompt up to the next option.

- `--w`: Specifies the width of the generated image.

- `--h`: Specifies the height of the generated image.

- `--d`: Specifies the seed of the generated image.

- `--l`: Specifies the CFG scale of the generated image.

- `--s`: Specifies the number of steps in the generation.

Prompt weighting such as `( )` and `[ ]` works.
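To make the prompt-file option syntax concrete, here is a hypothetical parser for a single line. It is not the trainer's own implementation; it assumes each option is a single letter preceded by `--` and separated from its value by whitespace, as in the example file.

```python
import re

# Option letter -> (result key, converter), following the option list above.
OPTIONS = {
    "n": ("negative_prompt", str),
    "w": ("width", int),
    "h": ("height", int),
    "d": ("seed", int),
    "l": ("cfg_scale", float),
    "s": ("steps", int),
}


def parse_prompt_line(line):
    """Return None for blank/comment lines, else the prompt and its options."""
    line = line.strip()
    if not line or line.startswith("#"):
        return None
    # The capture group keeps the option letters in the split result:
    # [prompt, letter, value, letter, value, ...]
    parts = re.split(r"\s+--([nwhdls])\s+", line)
    result = {"prompt": parts[0].strip()}
    for letter, value in zip(parts[1::2], parts[2::2]):
        key, convert = OPTIONS[letter]
        result[key] = convert(value.strip())
    return result


example = ("masterpiece, best quality, 1boy, in business suit, standing at street, looking back "
           "--n (low quality, worst quality), bad anatomy, bad composition, poor, low effort "
           "--w 576 --h 832 --d 2 --l 5.5 --s 40")
print(parse_prompt_line(example))
```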

If you encounter any issues, refer to the troubleshooting steps below.

If you encounter an X error related to the page file, you may need to increase the page file size limit in Windows.

If you encounter an error indicating that the module tkinter is not found, try reinstalling Python 3.10 on your system.

If you come across a FileNotFoundError, it is likely due to an installation issue. Make sure you do not have any locally installed Python modules that could conflict with the ones installed in the virtual environment. You can uninstall them by following these steps:

- Open a new PowerShell terminal and ensure that no virtual environment is active.

- Run the following commands to create a backup file of your locally installed pip packages and then uninstall them:

```
pip freeze > uninstall.txt
pip uninstall -r uninstall.txt
```

After uninstalling the local packages, redo the installation steps within the `kohya_ss` virtual environment.

The documentation in this section will be moved to a separate document later.

- `sdxl_train.py` is a script for SDXL fine-tuning. The usage is almost the same as `fine_tune.py`, but it also supports DreamBooth datasets.

- The `--full_bf16` option is added. Thanks to KohakuBlueleaf!

- This option enables full bfloat16 training (including gradients). This option is useful to reduce GPU memory usage.

- Full bfloat16 training might be unstable. Please use it at your own risk.

- Different learning rates for each U-Net block are now supported in `sdxl_train.py`. Specify them with the `--block_lr` option: 23 values separated by commas, like `--block_lr 1e-3,1e-3 ... 1e-3`.

- The 23 values correspond to 0: time/label embed, 1-9: input blocks 0-8, 10-12: mid blocks 0-2, 13-21: output blocks 0-8, 22: out.


- `prepare_buckets_latents.py` now supports SDXL fine-tuning.

- `sdxl_train_network.py` is a script for LoRA training for SDXL. The usage is almost the same as `train_network.py`.

Both scripts have the following additional options:

- `--cache_text_encoder_outputs` and `--cache_text_encoder_outputs_to_disk`: Cache the outputs of the text encoders. This option is useful to reduce GPU memory usage. It cannot be used with the options for shuffling or dropping the captions.

- `--no_half_vae`: Disable the half-precision (mixed-precision) VAE. The SDXL VAE seems to produce NaNs in some cases. This option is useful to avoid the NaNs.

- The `--weighted_captions` option is not supported yet for either script.

- `sdxl_train_textual_inversion.py` is a script for Textual Inversion training for SDXL. The usage is almost the same as `train_textual_inversion.py`.

- `--cache_text_encoder_outputs` is not supported.

- There are two options for captions:

- Training with captions. All captions must include the token string. The token string is replaced with multiple tokens.

- Use the `--use_object_template` or `--use_style_template` option. The captions are generated from the template. The existing captions are ignored.

- See below for the format of the embeddings.

- The `--min_timestep` and `--max_timestep` options are added to each training script. These options can be used to train the U-Net with different timesteps. The default values are 0 and 1000.

- `tools/cache_latents.py` is added. This script can be used to cache the latents to disk in advance.

- The options are almost the same as `sdxl_train.py`. See the help message for the usage.

- Please launch the script as follows:

```
accelerate launch --num_cpu_threads_per_process 1 tools/cache_latents.py ...
```

- This script should work with multi-GPU, but it is not tested in my environment.

- `tools/cache_text_encoder_outputs.py` is added. This script can be used to cache the text encoder outputs to disk in advance.

- The options are almost the same as `cache_latents.py` and `sdxl_train.py`. See the help message for the usage.


- `sdxl_gen_img.py` is added. This script can be used to generate images with SDXL, including LoRA, Textual Inversion and ControlNet-LLLite. See the help message for the usage.

- The default resolution of SDXL is 1024x1024.

- Fine-tuning can be done with 24GB of GPU memory at a batch size of 1. For a 24GB GPU, the following options are recommended:

- Train U-Net only.

- Use gradient checkpointing.

- Use the `--cache_text_encoder_outputs` option and cache latents.

- Use the Adafactor optimizer. RMSprop 8bit or Adagrad 8bit may work. AdamW 8bit doesn't seem to work.

- LoRA training can be done with 8GB of GPU memory (10GB recommended). To reduce GPU memory usage, the following options are recommended:

- Train U-Net only.

- Use gradient checkpointing.

- Use the `--cache_text_encoder_outputs` option and cache latents.

- Use one of the 8bit optimizers or the Adafactor optimizer.

- Use a lower dim (4 to 8 for an 8GB GPU).

- The `--network_train_unet_only` option is highly recommended for SDXL LoRA. Because SDXL has two text encoders, training them may produce unexpected results.

- PyTorch 2 seems to use slightly less GPU memory than PyTorch 1.

- `--bucket_reso_steps` can be set to 32 instead of the default value of 64. Values smaller than 32 will not work for SDXL training.

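Example of the optimizer settings for Adafactor with a fixed learning rate:

```toml
optimizer_type = "adafactor"
optimizer_args = [ "scale_parameter=False", "relative_step=False", "warmup_init=False" ]
lr_scheduler = "constant_with_warmup"
lr_warmup_steps = 100
learning_rate = 4e-7 # SDXL original learning rate
```

Textual Inversion embeddings for SDXL are saved in the following format (the tensors below are placeholders):

```python
import torch
from safetensors.torch import save_file

embs_for_text_encoder_1280 = torch.zeros(4, 1280)  # placeholder: 4 vectors (hypothetical) x 1280 dims
embs_for_text_encoder_768 = torch.zeros(4, 768)  # placeholder: same vectors x 768 dims
state_dict = {"clip_g": embs_for_text_encoder_1280, "clip_l": embs_for_text_encoder_768}
save_file(state_dict, "embedding.safetensors")  # hypothetical file name
```

ControlNet-LLLite, a novel method for ControlNet with SDXL, is added. See documentation for details.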
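The Adafactor examples pair a fixed `learning_rate` with `lr_scheduler = "constant_with_warmup"` and `lr_warmup_steps = 100`. Assuming the linear-warmup shape used by `get_constant_schedule_with_warmup` in Hugging Face's Diffusers and Transformers libraries, the effective learning rate per step can be sketched as follows (`constant_with_warmup_lr` is a hypothetical helper):

```python
def constant_with_warmup_lr(step, base_lr=4e-7, warmup_steps=100):
    """Learning rate at `step`: linear ramp from 0, then constant at base_lr."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    return base_lr


for step in (0, 50, 100, 5000):
    print(step, constant_with_warmup_lr(step))
```

With Adafactor's `relative_step=False` and `scale_parameter=False`, the optimizer does not compute its own time-dependent step size or scale it by parameter magnitude, so this schedule and `learning_rate` determine the rate actually applied.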

- 2023/10/10 (v22.1.0)

- Remove support for torch 1 to align with the kohya_ss sd-scripts code base.

- Add Intel ARC GPU support with IPEX on Linux / WSL.

- Users need to set these manually:

- Mixed precision: BF16

- Attention: SDPA

- Optimizer: AdamW (or any other non-8-bit optimizer)

- Run setup with:

```
./setup.sh --use-ipex
```

- Run the GUI with:

```
./gui.sh --use-ipex
```


- Merging main branch of sd-scripts:

- `tag_images_by_wd_14_tagger.py` now supports ONNX. If you use ONNX, TensorFlow is not required anymore. #864 Thanks to Isotr0py!

- The `--onnx` option is added. If you use ONNX, specify the `--onnx` option.

- Please install ONNX and other required packages.

- Uninstall TensorFlow.

```
pip install tensorboard==2.14.1
```

This is required for the specified version of protobuf.

```
pip install protobuf==3.20.3
```

This is required for ONNX.

```
pip install onnx==1.14.1
pip install onnxruntime-gpu==1.16.0
```

or

```
pip install onnxruntime==1.16.0
```

- The `--append_tags` option is added to `tag_images_by_wd_14_tagger.py`. This option appends the tags to the existing tags, instead of replacing them. #858 Thanks to a-l-e-x-d-s-9!

- OFT is now supported.

- You can use `networks.oft` for the network module in `sdxl_train_network.py`. The usage is the same as `networks.lora`. Some options are not supported.

- `sdxl_gen_img.py` also supports OFT as `--network_module`.

- OFT only supports SDXL currently, because the current OFT tweaks Q/K/V and O in the transformer, and SD1/2 have far fewer transformers than SDXL.

- The implementation is heavily based on laksjdjf's OFT implementation. Thanks to laksjdjf!


- Other bug fixes and improvements.
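The difference between the tagger's default behaviour and `--append_tags` can be sketched with a hypothetical `merge_tags` helper. It assumes comma-separated tag strings and keeps duplicates; whether the tagger removes duplicate tags when appending is not covered here.

```python
def merge_tags(existing_caption, new_tags, append_tags=False):
    """Return the caption text to write for one image (illustrative sketch)."""
    if not append_tags or not existing_caption.strip():
        # Default: the tagger output replaces whatever caption was there.
        return ", ".join(new_tags)
    # --append_tags: new tags follow the existing ones.
    return existing_caption.strip().rstrip(",") + ", " + ", ".join(new_tags)


print(merge_tags("1girl, smile", ["solo", "outdoors"]))  # solo, outdoors
print(merge_tags("1girl, smile", ["solo", "outdoors"], append_tags=True))  # 1girl, smile, solo, outdoors
```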