This repository serves as a prototype for Saint Gobain UK's AI and Machine Learning projects. It is implemented in Python with Scikit-Learn and MLFlow, using the Kaggle Titanic challenge as the example problem.

The focus isn't the model, but the framework, standards and tools used to structure the project and deploy the model. These are as follows:

- Git feature/branch workflow

- PEP8 code standards with numpy docstrings

- Abstraction of complex shell commands with make via the Makefile

- Reproducibility via a conda environment and associated environment.yaml

- Managing development and test configurations via the .env.dev and .env.test files

- Separating the application configuration (.env), metadata (parameters.yaml) and functionality (src)

- Tests for functions via pytest and test coverage via coverage

- Logging generated by loguru. This needs replacing (see TODO)

- Plac to simplify the creation of command line options

- Model experimentation and deployment with MLFlow

- Machine Learning visualisation produced via yellowbrick and scikitplot

Links to the packages mentioned here can be found in the Useful Links section.

Over time, I will be expanding upon these topics with documentation, sessions and tutorials etc. to ensure that the "why", "what" and "how" get properly explained.

1. Ensure that you have the following software installed:

   - Windows Subsystem for Linux (requested through IT)
   - Anaconda
   - Git
   - Make

2. Clone the repository from Bitbucket to your local machine with git clone TBC and change into the directory by running cd titanic-mlflow.

3. Copy the data and configuration files from OneDrive (saved in E:\titanic-data) as follows:

   - Save the .env.dev and .env.test files into the root of the repository.
   - From the data-dev directory that you downloaded, save the train_test_raw.csv and holdout_raw.csv files into the data/dev directory. This is the live data used to train and test the model.
   - From the data-dummy directory that you downloaded, save the train_test_raw.csv and holdout_raw.csv files into the data/dummy directory. This is dummy data created to run the tests on the overall application.

4. Create the SQLite databases that store MLFlow experiments by running make create-db-dev and make create-db-test. This creates two databases in the db/ directory to store MLFlow experiment data for development and testing respectively.

5. Run make create-environment to create the conda environment. This installs the packages listed in requirements-conda.txt and requirements-pip.txt via conda and pip respectively, and saves a full list of package dependencies to environment.yaml.

6. To run an experiment, run make run-experiment. You can update the model's hyperparameters by changing the appropriate sections in parameters.yaml.

7. To start the MLFlow dashboard, run make mlflow-ui. This starts a web server for the UI, which you can access at localhost:5001 in your browser.

8. To stage a model for deployment, run make run-deployment. This saves the model, ready to be deployed, in the artifacts/dev/models directory. Note that the command will fail if a model with the same name already exists, so you'll need to either delete the model directory in artifacts/dev/models or change the model_name parameter in parameters.yaml.

9. To start the model deployment server, run make mlflow-serve-model. This serves the model at localhost:1235. It is best accessed through the query_model_server.ipynb notebook, which contains some pre-baked code to generate a prediction from it.

10. (Optional) To install the environment as a Jupyter kernel, run make create-kernel. You shouldn't need this to run the query_model_server.ipynb notebook, however.

11. (Optional) To run the tests, run make tests. This runs the tests in the tests directory using pytest and outputs a coverage report alongside them using coverage.

12. (Optional) To install extra packages, add the package names to requirements-conda.txt and/or requirements-pip.txt and run the appropriate command:

    - make install-all-requirements
    - make install-conda-requirements
    - make install-pip-requirements

13. (Optional) To remove the environment, run make remove-environment.

14. (Optional) To remove the kernel, run make remove-kernel.

If you don't want to use Make, you can run everything manually: the commands are all recorded in the Makefile and most can be copied and pasted into the terminal. Note that you will likely need to replace the environment variables with their actual values for the commands to work.
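As a rough illustration of that substitution, the sketch below replaces Make-style $(NAME) and ${NAME} references in a copied command with values you supply. The variable names DB_URI and UI_PORT, and the command itself, are hypothetical placeholders rather than what the Makefile actually contains.

```python
import re

# Matches Make-style variable references such as $(UI_PORT) or ${UI_PORT}
MAKE_VAR = re.compile(r"\$[({](\w+)[)}]")


def expand_make_variables(command: str, values: dict) -> str:
    """Replace $(NAME) / ${NAME} with values[NAME], leaving unknown names untouched."""
    return MAKE_VAR.sub(lambda match: values.get(match.group(1), match.group(0)), command)


# Hypothetical command and values, for illustration only
command = "mlflow ui --backend-store-uri $(DB_URI) --port $(UI_PORT)"
print(expand_make_variables(command, {"DB_URI": "sqlite:///db/dev.db", "UI_PORT": "5001"}))
# prints: mlflow ui --backend-store-uri sqlite:///db/dev.db --port 5001
```

Unknown names are deliberately left as-is, so anything still unresolved is easy to spot before pasting the command into the terminal.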

The entrypoint for the application is main.py in the root of the repository. The application functionality is made up of a number of modules stored in the src directory which are explained in more detail below. Complex command line executions are simplified through make and the Makefile. Run make help for a comprehensive list of these.

To run an experiment, run make run-experiment. The results can then be viewed in the MLFlow UI, which is started via make mlflow-ui and served on localhost through the port specified in the configuration.

To stage a model for deployment, run make run-deployment. The model can then be deployed with make mlflow-serve-model, which serves it on localhost through the port specified in the configuration.

The create_db.py file is used to create sqlite databases to store MLFlow data. These are stored in the db directory. This only needs to be executed once each for the dev and dummy databases.
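If it helps to see what this involves, the sketch below creates an empty SQLite file, which MLFlow can then populate with its tracking tables when pointed at it with a tracking URI such as sqlite:///db/dev.db. The function name and file names are assumptions; the actual create_db.py may do this differently.

```python
from __future__ import annotations

import sqlite3
from pathlib import Path


def create_sqlite_db(db_path: str | Path) -> Path:
    """Create an empty SQLite database file (and its parent directory) if it does not exist."""
    path = Path(db_path)
    path.parent.mkdir(parents=True, exist_ok=True)
    # Opening a connection creates the file if it is missing; an existing file is left as-is
    connection = sqlite3.connect(path)
    connection.close()
    return path
```

For example, calling create_sqlite_db("db/dev.db") and create_sqlite_db("db/dummy.db") would cover the dev and dummy databases (hypothetical file names). Because an existing file is simply reopened, running it twice is harmless.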

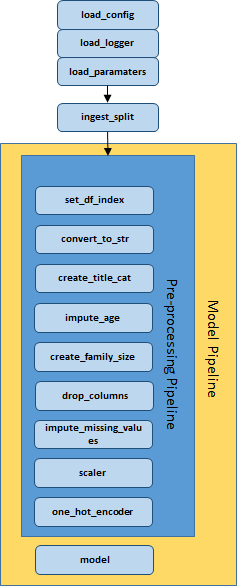

The design of the pipeline is as follows:

The ingest_split module:

- Imports the train_test and holdout CSV files from the location specified in the .env.dev file.

- Converts any int columns to floats, as int columns can cause problems with missing values (note that this step should be moved into preprocessing).

- Performs a train_test_split on the train_test file.
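The three steps above can be sketched with pandas and scikit-learn as follows. The function names, the Survived target column and the split settings are assumptions for illustration; in the application the file locations come from .env.dev and the settings from parameters.yaml.

```python
import pandas as pd
from sklearn.model_selection import train_test_split


def ints_to_floats(df: pd.DataFrame) -> pd.DataFrame:
    """Cast integer columns to float64 so they can hold missing values (NaN)."""
    int_cols = [col for col in df.columns if pd.api.types.is_integer_dtype(df[col])]
    return df.astype({col: "float64" for col in int_cols})


def load_and_split(train_test_path, holdout_path, target="Survived", test_size=0.2, random_state=42):
    """Load the raw CSVs, convert int columns and split train_test into train/test sets."""
    train_test = ints_to_floats(pd.read_csv(train_test_path))
    holdout = ints_to_floats(pd.read_csv(holdout_path))
    X = train_test.drop(columns=[target])
    y = train_test[target]
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, test_size=test_size, random_state=random_state
    )
    return X_train, X_test, y_train, y_test, holdout
```

Note that the target column is cast to float as well; scikit-learn classifiers accept 0.0/1.0 labels, but you may prefer to exclude it from the conversion.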

The preprocessing module builds, in preprocessing.py, a scikit-learn pipeline that preprocesses the data prior to modelling. It applies a number of transformations that are defined as functions in transforms.py, with the arguments for each function stored in parameters.yaml. New transforms can be created and slotted into the pipeline as appropriate.

The transformations in this module must also ship with the model for MLFlow deployment. This ensures that users can pass unprocessed data to the model in order to generate predictions.
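A minimal sketch of that pattern: plain functions (standing in for transforms.py) are wrapped in scikit-learn FunctionTransformer steps, with their keyword arguments taken from a dictionary standing in for parameters.yaml. The transform names, column names and values are hypothetical.

```python
from __future__ import annotations

import pandas as pd
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import FunctionTransformer


# Hypothetical transforms standing in for functions in transforms.py
def fill_missing(df: pd.DataFrame, columns: list[str], value: float) -> pd.DataFrame:
    out = df.copy()
    out[columns] = out[columns].fillna(value)
    return out


def add_family_size(df: pd.DataFrame, sibsp_col: str, parch_col: str, new_col: str) -> pd.DataFrame:
    out = df.copy()
    out[new_col] = out[sibsp_col] + out[parch_col] + 1  # +1 counts the passenger
    return out


# Hypothetical stand-in for the transform arguments stored in parameters.yaml
PARAMETERS = {
    "fill_missing": {"columns": ["Age"], "value": 28.0},
    "add_family_size": {"sibsp_col": "SibSp", "parch_col": "Parch", "new_col": "FamilySize"},
}


def create_preprocessing_pipeline(params: dict) -> Pipeline:
    """Chain each transform into a Pipeline, passing its arguments via kw_args."""
    return Pipeline(
        steps=[
            ("fill_missing", FunctionTransformer(fill_missing, kw_args=params["fill_missing"])),
            ("add_family_size", FunctionTransformer(add_family_size, kw_args=params["add_family_size"])),
        ]
    )
```

Because the result is an ordinary Pipeline, a model can later be appended to it and the whole object logged together, which is what allows unprocessed data to be passed to the deployed model.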

The models module contains the various models created during experimentation, with a separate .py file for each model. To switch between models, replace the existing create_logreg_model() call in main.py with a new function from the models module. There are currently two models: Logistic Regression and SVC.
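One possible way to make that switch a one-line change (not what the repository currently does) is to give each model file a factory function with the same shape and look it up by name. create_logreg_model is the name used above; its signature here, create_svc_model and the MODEL_FACTORIES registry are hypothetical.

```python
from sklearn.linear_model import LogisticRegression
from sklearn.svm import SVC


def create_logreg_model(params: dict) -> LogisticRegression:
    """Build a logistic regression model from a dict of hyperparameters."""
    return LogisticRegression(**params)


def create_svc_model(params: dict) -> SVC:
    """Build a support vector classifier from a dict of hyperparameters."""
    return SVC(**params)


# Registry mapping a model name (e.g. read from parameters.yaml) to its factory
MODEL_FACTORIES = {
    "logreg": create_logreg_model,
    "svc": create_svc_model,
}


def create_model(name: str, params: dict):
    """Look up and build a model by name, failing clearly on unknown names."""
    try:
        factory = MODEL_FACTORIES[name]
    except KeyError:
        raise ValueError(f"Unknown model {name!r}; expected one of {sorted(MODEL_FACTORIES)}") from None
    return factory(params)
```

With this shape, choosing a model becomes a parameter change (for example create_model("svc", {"C": 0.5})) rather than an edit to main.py.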

The model_pipeline module contains two files. The evaluate.py file runs the preprocessing pipeline, fits the model and evaluates it using a number of different scoring methods; the model parameters, evaluation metadata and model are then recorded in MLFlow. The model_pipeline.py file appends the model to the preprocessing pipeline to create the overall model pipeline, generates an input signature telling the model what format data should be provided in, and formally logs the model with MLFlow for deployment.
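The core of those two files can be sketched in plain scikit-learn: the model is appended to the preprocessing pipeline as a final step, and the fitted pipeline is scored on held-out data. The step names, the SimpleImputer stand-in for the real preprocessing pipeline and the choice of metrics are assumptions; the MLFlow logging and the signature generation (for which MLFlow provides mlflow.models.infer_signature) are left out.

```python
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score, f1_score, precision_score, recall_score
from sklearn.pipeline import Pipeline


def create_model_pipeline(preprocessing: Pipeline, model) -> Pipeline:
    """Append the model to the preprocessing pipeline so both ship together."""
    return Pipeline(steps=[("preprocessing", preprocessing), ("model", model)])


def evaluate(model_pipeline: Pipeline, X_train, y_train, X_test, y_test) -> dict:
    """Fit the full pipeline on the training data and score it on the test data."""
    model_pipeline.fit(X_train, y_train)
    predictions = model_pipeline.predict(X_test)
    return {
        "accuracy": accuracy_score(y_test, predictions),
        "precision": precision_score(y_test, predictions, zero_division=0),
        "recall": recall_score(y_test, predictions, zero_division=0),
        "f1": f1_score(y_test, predictions, zero_division=0),
    }


# Hypothetical stand-in for the real preprocessing pipeline
preprocessing = Pipeline(steps=[("impute", SimpleImputer(strategy="median"))])
model_pipeline = create_model_pipeline(preprocessing, LogisticRegression(max_iter=1000))
```

The returned dictionary has the flat name-to-number shape that metric logging expects, so each entry could be passed to mlflow.log_metric in turn.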

The utils module contains a single utils.py file with utility functions to load the configuration from either .env.dev or .env.test, load the parameters from parameters.yaml, and create the logger, which outputs logs to the logs/dev and logs/dummy directories.

The configuration of the application is stored as Linux environment variables in the .env.dev and .env.test files and passed to the application via the load_config() function in the src.utils module. The artifacts, data, logs and db folders contain separate directories and files for development and test executions, with references to these stored in the .env.dev and .env.test files.
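For readers unfamiliar with the pattern, the sketch below shows one way a .env file of KEY=VALUE lines can be parsed and exported as environment variables using only the standard library. It is a hypothetical load_env_file helper, not the repository's load_config(), whose implementation may differ; the variable names in the test are placeholders.

```python
from __future__ import annotations

import os
from pathlib import Path


def load_env_file(env_file: str | Path, override: bool = False) -> dict[str, str]:
    """Parse KEY=VALUE lines from an .env file and export them as environment variables."""
    config: dict[str, str] = {}
    for raw_line in Path(env_file).read_text().splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        if line.startswith("export "):
            line = line[len("export "):]  # allow shell-style "export KEY=VALUE"
        key, sep, value = line.partition("=")
        if not sep:
            continue  # skip malformed lines without "="
        key = key.strip()
        value = value.strip().strip('"').strip("'")  # drop surrounding quotes
        config[key] = value
        # Existing environment variables win unless override=True
        if override or key not in os.environ:
            os.environ[key] = value
    return config
```

Switching between development and test runs then amounts to passing .env.dev or .env.test as the file to load.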

Tests are stored in the tests/ directory, and the .env.test configuration is used when executing them. This ensures that test data, MLFlow artifacts and logs are recorded and managed separately from development runs. Dummy test data has been created to support this and is stored in the data/dummy directory.

Tests can be executed with the command make tests and are performed using the pytest Python package which automatically runs the tests, and the coverage Python package which generates a test coverage report as part of the testing process.

Note that the dummy naming convention has been used for things that might traditionally be called test, to avoid confusion with the test data used to validate a Machine Learning model's accuracy.

TODO

├── LICENSE

├── Makefile # Used to simplify command line execution

├── README.md

├── artifacts # Stored MLFlow files

│ ├── dev # Live files created during processing

│ └── dummy # Fake files created during testing.

├── assets # Stores files associated with the repo

├── create_db.py # Creates sqlite databases for MLflow

├── data # Data for the application

│ ├── dev # Live data used for development

│ └── dummy # Fake data used for testing

├── db # Database files for MLFlow tracking

├── environment.yaml # Package data for the environment

├── logs # Logs produced during processing

├── logs-dummy # Logs produced during testing

├── main.py # Entrypoint for the application

├── mlruns # Local store for MLFlow tracking

├── notebooks # Notebooks storage

├── parameters.yaml # Parameters for processing

├── query_model_server.ipynb # Used to test the MLFlow API

├── requirements-conda.txt # Conda package dependencies

├── requirements-pip.txt # Pip package dependencies

├── src # Functionality for the application

└── tests # Tests for the application

MLFlow is used to monitor model experiments and to serve the model. The base syntax for this is as follows:

import mlflow

mlflow.start_run() # Starts MLFlow tracking (alternatively, "with mlflow.start_run():" ends the run automatically)

mlflow.log_param("parameter_name", parameter) # Logs a parameter

mlflow.log_metric("metric_name", metric) # Logs a metric

mlflow.log_artifacts(artifact_dir) # Logs a directory of artifacts (mlflow.log_artifact logs a single file)

mlflow.end_run() # Ends MLFlow tracking

Additional MLFlow commands for saving and logging models can be found in the src/model_pipeline/model_pipeline.py file.

To serve a model, you must first save it by running make run-deployment. It can then be served via make mlflow-serve-model.
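If you want to query the server without the notebook, the sketch below posts rows to the server's /invocations endpoint using only the standard library. It assumes MLFlow 2.x, whose scoring server accepts a JSON body in the dataframe_split format, and the localhost:1235 address given in the setup steps; the column names in the test are placeholders and must match the model's input signature.

```python
import json
import urllib.request

DEFAULT_URL = "http://localhost:1235/invocations"


def build_request(rows: list, url: str = DEFAULT_URL) -> urllib.request.Request:
    """Build a POST request with rows encoded in MLFlow's dataframe_split JSON format."""
    columns = list(rows[0].keys())
    payload = {
        "dataframe_split": {
            "columns": columns,
            "data": [[row[col] for col in columns] for row in rows],
        }
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def query_model(rows: list, url: str = DEFAULT_URL) -> dict:
    """Send the rows to a running model server and return the decoded JSON response."""
    with urllib.request.urlopen(build_request(rows, url), timeout=10) as response:
        return json.loads(response.read().decode("utf-8"))
```

query_model only works while make mlflow-serve-model is running; build_request can be inspected offline to check the payload shape.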

- Implement a better logger than loguru: the current one produces horrific error messages in Jupyter. This will improve debugging speed and can be re-implemented in other pipelines. Bonus points if it can produce coloured output.

- Replace the convert_to_str() step in the pipeline with a step that ensures type safety for all columns, not just strings. This will improve the reliability of the pipeline and can be re-implemented in other pipelines. Additionally, some of the type transformation processing in ingest_split can then be removed, resulting in a cleaner application.

- Implement feature selection as the last step in the SKL pipeline and log the selected features as a parameter in MLFlow. This will allow greater experimentation and control over models and can be re-implemented in other pipelines.

- Implement SHAP, or a similar explainability framework. This will allow greater model transparency and make the model easier to explain to stakeholders.

- Implement Docker for easier production deployment.

- Create end-to-end tests for the application.
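As a starting point for the logger item above, the standard library's logging module can produce coloured console output with a small custom Formatter that wraps each message in ANSI colour codes. This sketch assumes ANSI codes render in your terminal and in Jupyter output; the create_logger name, the format string and the colour choices are hypothetical.

```python
from __future__ import annotations

import logging
import sys


class ColourFormatter(logging.Formatter):
    """Formatter that wraps each formatted record in an ANSI colour for its level."""

    COLOURS = {
        logging.DEBUG: "\033[36m",       # cyan
        logging.INFO: "\033[32m",        # green
        logging.WARNING: "\033[33m",     # yellow
        logging.ERROR: "\033[31m",       # red
        logging.CRITICAL: "\033[1;31m",  # bold red
    }
    RESET = "\033[0m"

    def format(self, record: logging.LogRecord) -> str:
        message = super().format(record)
        colour = self.COLOURS.get(record.levelno)
        return f"{colour}{message}{self.RESET}" if colour else message


def create_logger(name: str, log_file: str | None = None, level: int = logging.INFO) -> logging.Logger:
    """Return a logger with a coloured console handler and an optional plain-text file handler."""
    logger = logging.getLogger(name)
    logger.setLevel(level)
    logger.propagate = False  # avoid duplicate lines via the root logger
    if logger.handlers:  # re-running a notebook cell should not stack handlers
        return logger
    fmt = "%(asctime)s | %(levelname)-8s | %(name)s:%(funcName)s:%(lineno)d - %(message)s"
    console = logging.StreamHandler(sys.stderr)
    console.setFormatter(ColourFormatter(fmt))
    logger.addHandler(console)
    if log_file is not None:
        file_handler = logging.FileHandler(log_file)
        file_handler.setFormatter(logging.Formatter(fmt))  # keep ANSI codes out of log files
        logger.addHandler(file_handler)
    return logger
```

Tracebacks logged with logger.exception(...) are formatted by the standard Formatter, so they come out as ordinary Python tracebacks, just coloured red.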
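For the type-safety item, one possible shape is a small scikit-learn transformer that casts every listed column to a declared dtype and fails loudly if a column is missing. The class name EnforceDtypes and the column/dtype mapping are hypothetical. The pandas nullable "string" dtype is used for text columns because it keeps missing values as missing, whereas astype(str) can turn them into text such as "nan" depending on the pandas version.

```python
from __future__ import annotations

import pandas as pd
from sklearn.base import BaseEstimator, TransformerMixin


class EnforceDtypes(BaseEstimator, TransformerMixin):
    """Cast the listed DataFrame columns to declared dtypes inside a Pipeline."""

    def __init__(self, dtypes: dict | None = None):
        # Stored unchanged so scikit-learn's get_params/set_params and clone() work
        self.dtypes = dtypes

    def _check_columns(self, X: pd.DataFrame) -> None:
        missing = set(self.dtypes or {}) - set(X.columns)
        if missing:
            raise ValueError(f"Missing expected columns: {sorted(missing)}")

    def fit(self, X: pd.DataFrame, y=None):
        self._check_columns(X)
        return self

    def transform(self, X: pd.DataFrame) -> pd.DataFrame:
        self._check_columns(X)
        # astype with a dict casts only the listed columns; other columns pass through
        return X.astype(self.dtypes) if self.dtypes else X.copy()
```

Placed as the first step of the preprocessing pipeline, a step like this would apply the same casting at training and serving time, which is what would let the int-to-float conversion in ingest_split be dropped.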