Omri Avrahami, Ohad Fried, Dani Lischinski

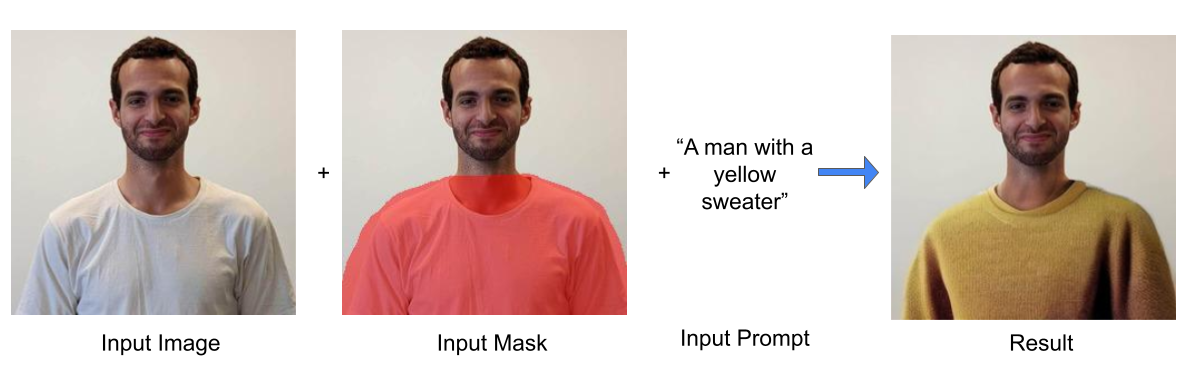

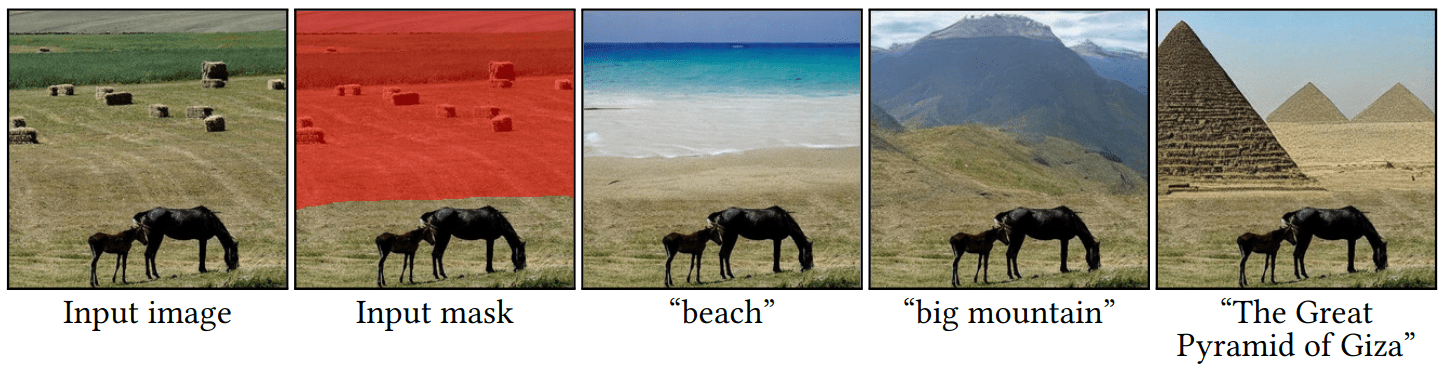

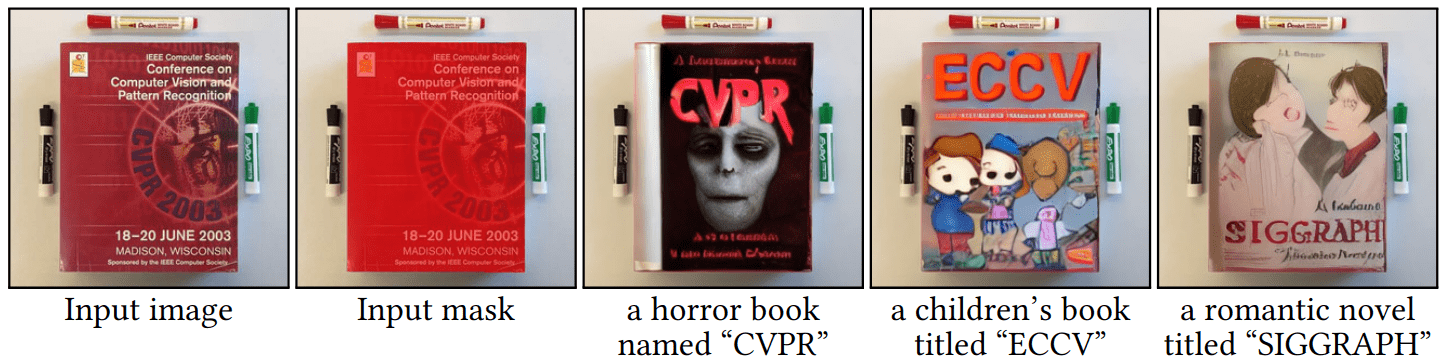

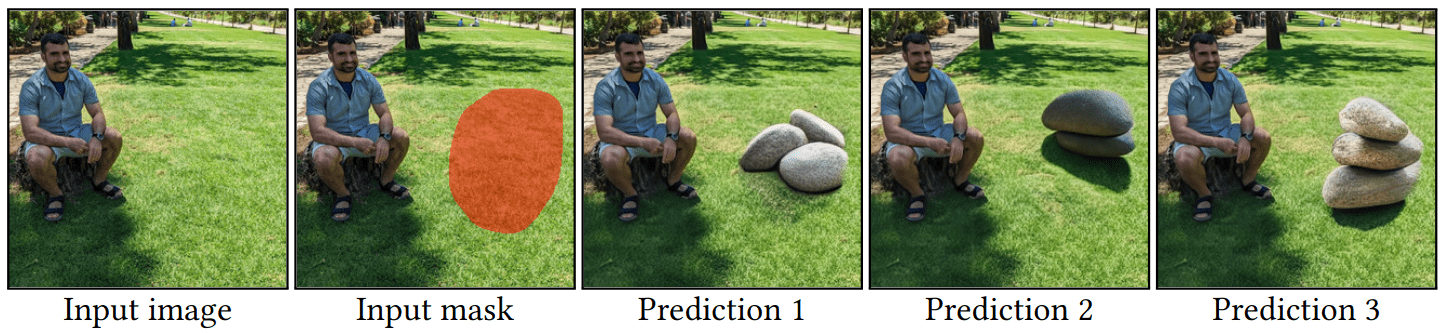

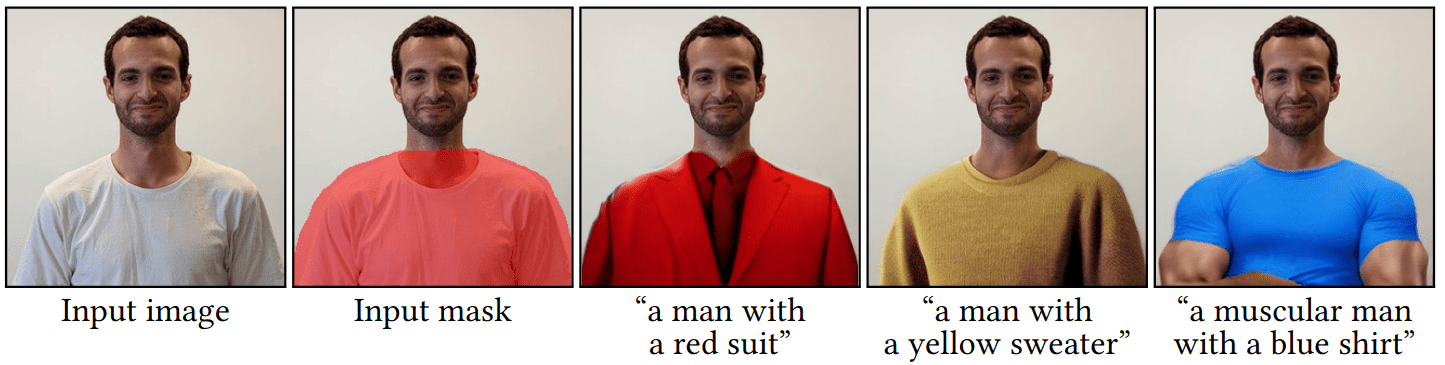

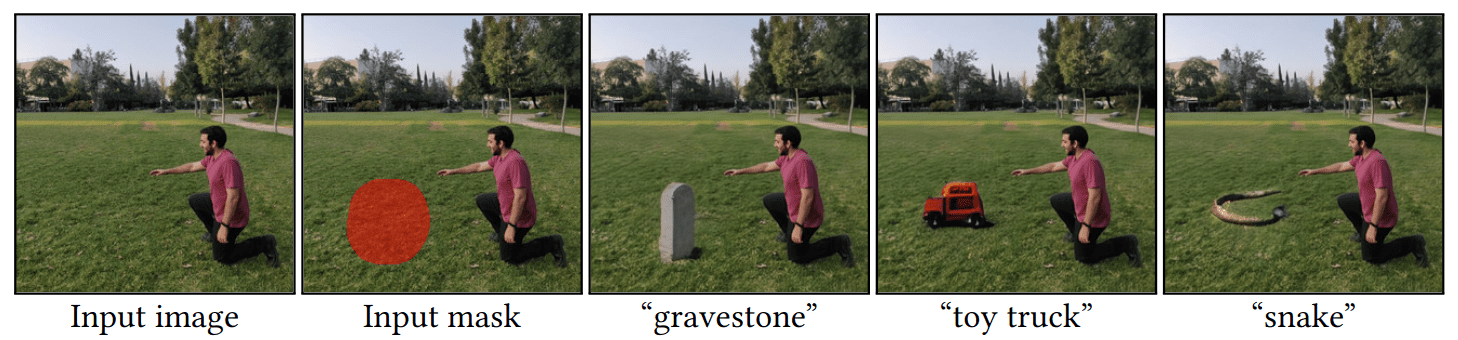

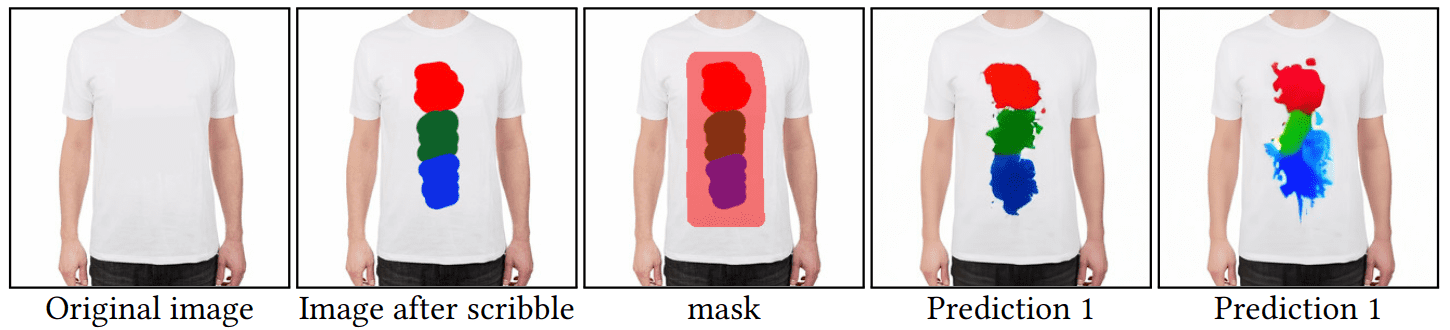

Abstract: The tremendous progress in neural image generation, coupled with the emergence of seemingly omnipotent vision-language models, has finally enabled text-based interfaces for creating and editing images. Handling generic images requires a diverse underlying generative model, hence the latest works utilize diffusion models, which were shown to surpass GANs in terms of diversity. One major drawback of diffusion models, however, is their relatively slow inference time. In this paper, we present an accelerated solution to the task of local text-driven editing of generic images, where the desired edits are confined to a user-provided mask. Our solution leverages a recent text-to-image Latent Diffusion Model (LDM), which speeds up diffusion by operating in a lower-dimensional latent space. We first convert the LDM into a local image editor by incorporating Blended Diffusion into it. Next, we propose an optimization-based solution for the inherent inability of this LDM to accurately reconstruct images. Finally, we address the scenario of performing local edits using thin masks. We evaluate our method against the available baselines both qualitatively and quantitatively, and demonstrate that in addition to being faster, our method achieves better precision than the baselines while mitigating some of their artifacts.
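To make the idea of mask-confined editing in latent space more concrete, here is a minimal NumPy sketch of a single blending step. It is an illustration under stated assumptions, not the repository's implementation: the function names `downsample_mask` and `blend_latents` are hypothetical, the downsampling factor of 8 is only inferred from the `f8` in the checkpoint name, and max pooling is one plausible way to bring the mask to latent resolution (it is not the paper's treatment of thin masks).

```python
import numpy as np


def downsample_mask(mask: np.ndarray, factor: int = 8) -> np.ndarray:
    """Reduce a binary (H, W) mask to latent resolution with max pooling.

    Assumption: H and W are divisible by `factor`. A latent cell is marked
    as editable if any pixel it covers is inside the mask.
    """
    h, w = mask.shape
    if h % factor or w % factor:
        raise ValueError("mask size must be divisible by factor")
    blocks = mask.reshape(h // factor, factor, w // factor, factor)
    return blocks.max(axis=(1, 3)).astype(np.float32)


def blend_latents(z_fg: np.ndarray, z_bg: np.ndarray, latent_mask: np.ndarray) -> np.ndarray:
    """Keep the text-guided latent inside the mask and the background latent outside.

    z_fg, z_bg: latents of shape (C, h, w); latent_mask: shape (h, w) in [0, 1].
    """
    return latent_mask * z_fg + (1.0 - latent_mask) * z_bg


if __name__ == "__main__":
    mask = np.zeros((16, 16), dtype=np.float32)
    mask[0:8, 0:8] = 1.0                          # edit the top-left quadrant
    latent_mask = downsample_mask(mask, factor=8)  # shape (2, 2)

    z_fg = np.ones((4, 2, 2), dtype=np.float32)    # stand-in for the edited latent
    z_bg = np.zeros((4, 2, 2), dtype=np.float32)   # stand-in for the noised input latent
    print(blend_latents(z_fg, z_bg, latent_mask)[0])
```

In a blended sampling loop, a step of this kind would typically be applied after every denoising step, with `z_bg` being the input image's latent noised to the current noise level.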

Install the conda virtual environment:

conda env create -f environment.yaml

conda activate ldm

Download the pre-trained weights (5.7GB):

mkdir -p models/ldm/text2img-large/

wget -O models/ldm/text2img-large/model.ckpt https://ommer-lab.com/files/latent-diffusion/nitro/txt2img-f8-large/model.ckpt

If the above link is broken, you can use this Google Drive mirror.

python scripts/text_editing.py --prompt "a pink yarn ball" --init_image "inputs/img.png" --mask "inputs/mask.png"

The predictions will be saved in outputs/edit_results/samples.

You can use a larger batch size by setting --n_samples to the largest number of samples that fits on your GPU.

If you want to reconstruct the original image background, you can run the following:

python scripts/reconstruct.py --init_image "inputs/img.png" --mask "inputs/mask.png" --selected_indices 0 1

You can choose the specific image indices that you want to reconstruct. The results will be saved in outputs/edit_results/samples/reconstructed_optimization.
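If you want to drive the two scripts above from Python (for example, to loop over several prompts), the command lines can be assembled as argument lists. This is a small sketch that only uses the flags shown in this README; the helper names `build_edit_command` and `build_reconstruct_command` are hypothetical, and the `n_samples=4` value in the usage part is an arbitrary example, not a recommended setting.

```python
import shlex
from typing import List, Optional, Sequence


def build_edit_command(prompt: str, init_image: str, mask: str,
                       n_samples: Optional[int] = None) -> List[str]:
    """Assemble the argument list for scripts/text_editing.py."""
    cmd = ["python", "scripts/text_editing.py",
           "--prompt", prompt,
           "--init_image", init_image,
           "--mask", mask]
    if n_samples is not None:
        cmd += ["--n_samples", str(n_samples)]
    return cmd


def build_reconstruct_command(init_image: str, mask: str,
                              selected_indices: Sequence[int]) -> List[str]:
    """Assemble the argument list for scripts/reconstruct.py."""
    return ["python", "scripts/reconstruct.py",
            "--init_image", init_image,
            "--mask", mask,
            "--selected_indices", *[str(i) for i in selected_indices]]


if __name__ == "__main__":
    edit = build_edit_command("a pink yarn ball", "inputs/img.png",
                              "inputs/mask.png", n_samples=4)
    recon = build_reconstruct_command("inputs/img.png", "inputs/mask.png", [0, 1])
    # Print shell-quoted commands; pass the lists to subprocess.run(...) to execute them.
    print(shlex.join(edit))
    print(shlex.join(recon))
```

Passing the lists to `subprocess.run(cmd, check=True)` from the repository root, inside the activated `ldm` environment, would run the edit first and the reconstruction second.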

If you find this useful for your research, please cite the following:

@article{avrahami2022blended_latent,
  title={Blended Latent Diffusion},
  author={Avrahami, Omri and Fried, Ohad and Lischinski, Dani},
  journal={arXiv preprint arXiv:2206.02779},
  year={2022}
}

This code is based on Latent Diffusion Models.