Information retrieval on your private data using Embeddings Vector Search and LLMs.

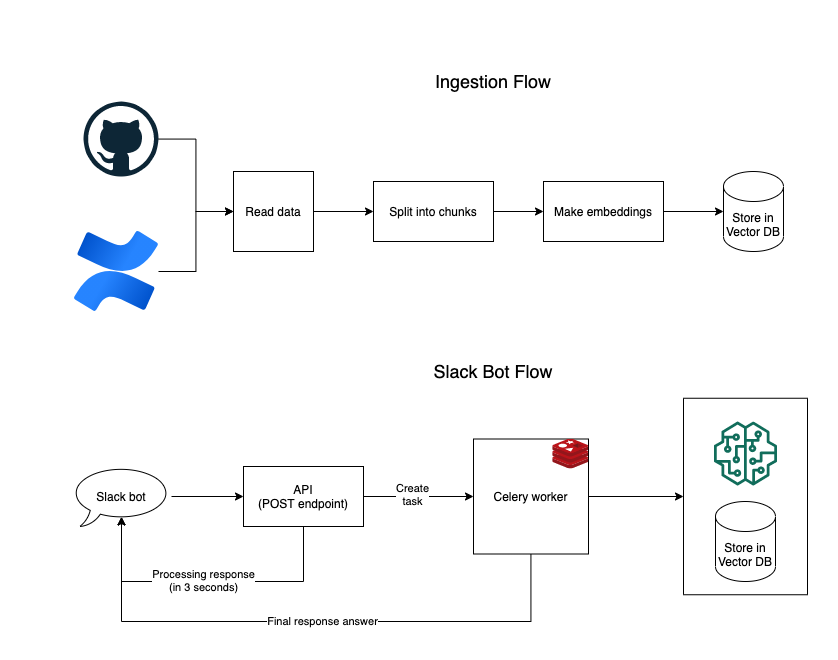

- The data is first scraped from the internal sources.

- The text data is then converted into embedding vectors using pre-trained models.

- These embeddings are stored in a vector database; this project uses Chroma. A vector database makes it easy to run nearest-neighbor search over the embedding vectors.

- Once all data is ingested into the DB, we take the user query, fetch the top `k` matching documents from the DB, and feed them into the LLM of our choice. The LLM then generates a summarized answer using the question and the documents. This process is orchestrated by LangChain's `RetrievalQA` chain.

- The package also has an `api` folder, which can be used to integrate it as a Slack slash command.
  - The API is built with FastAPI and provides a `/slack` POST endpoint that acts as the request URL for the slash command.
  - Since a slash command has to respond within 3 seconds, we offload the querying work to Celery and immediately return a "processing" response to the user.
  - Celery then performs the retrieval and summarization task for the query and sends the final response to the endpoint provided by Slack.
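The embed, store, and retrieve steps above can be sketched with the standard library alone. This is only an illustration of the idea, not the package's code: `embed` is a toy bag-of-words function standing in for a pre-trained embedding model, `ToyVectorStore` is a hypothetical in-memory stand-in for Chroma, and `build_prompt` only assembles the text that would be handed to an LLM (the LLM call itself is left out).

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector (stand-in for a pre-trained model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class ToyVectorStore:
    """Hypothetical in-memory stand-in for a vector database such as Chroma."""

    def __init__(self) -> None:
        self._rows: list[tuple[str, Counter]] = []

    def add(self, docs: list[str]) -> None:
        # Store each document next to its embedding.
        self._rows.extend((doc, embed(doc)) for doc in docs)

    def top_k(self, query: str, k: int = 2) -> list[str]:
        # Brute-force nearest-neighbor search: rank every row by similarity.
        q = embed(query)
        ranked = sorted(self._rows, key=lambda row: cosine(q, row[1]), reverse=True)
        return [doc for doc, _ in ranked[:k]]


def build_prompt(question: str, docs: list[str]) -> str:
    """Assemble the text that would be sent to the LLM."""
    context = "\n".join(f"- {d}" for d in docs)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


store = ToyVectorStore()
store.add([
    "Deployments are triggered from the release branch.",
    "VPN access requires a hardware token.",
    "The release branch is cut every second Tuesday.",
])
question = "When is the release branch cut?"
matches = store.top_k(question, k=2)
prompt = build_prompt(question, matches)
```

A real vector database replaces the brute-force ranking in `top_k` with an index, which is what keeps the search fast as the number of documents grows.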
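The acknowledge-then-process pattern used for the slash command can be sketched as follows. This is a simplified illustration under stated assumptions: a background thread and a `queue.Queue` stand in for Celery, `answer` is a placeholder for the retrieval and summarization step, and `post_to_slack` only records what would be sent to the URL Slack provides instead of making an HTTP request. All function names here are hypothetical and are not taken from the package.

```python
import queue
import threading

jobs: queue.Queue = queue.Queue()
delivered: list[tuple[str, str]] = []  # records what would be POSTed back to Slack


def answer(query: str) -> str:
    """Placeholder for the slow retrieval + summarization step."""
    return f"Summary for: {query}"


def post_to_slack(response_url: str, text: str) -> None:
    """Placeholder for the HTTP POST to the Slack-provided URL."""
    delivered.append((response_url, text))


def worker() -> None:
    """Background worker that drains the queue (the role Celery plays in the project)."""
    while True:
        job = jobs.get()
        if job is None:  # sentinel: stop the worker
            break
        query, response_url = job
        post_to_slack(response_url, answer(query))


def handle_slash_command(text: str, response_url: str) -> dict:
    """Enqueue the work and return right away, inside Slack's 3-second window."""
    jobs.put((text, response_url))
    return {"response_type": "ephemeral", "text": "Processing your query..."}


thread = threading.Thread(target=worker, daemon=True)
thread.start()

ack = handle_slash_command("How do I request VPN access?", "https://example.invalid/response")
jobs.put(None)  # no more work in this demo
thread.join()   # wait for the background job to finish
```

The handler never waits on the slow step, so its response time does not depend on how long retrieval and summarization take.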

The package can be installed with pip using the following command:

    pip install info_gpt[api] git+https://github.com/techytushar/info_gpt

For local development:

- This package uses Poetry for dependency management, so install Poetry first by following its official installation instructions.
- [Optional] Configure Poetry to create the virtual environment inside the project using `poetry config virtualenvs.in-project true`.
- Run `poetry install --all-extras` to install all dependencies.
- Install the `pre-commit` hooks for linting and formatting using `pre-commit install`.

All configuration is driven through `constants.py` and `api/constants.py`. Most settings have a default value, but some, such as secrets and tokens, must be provided explicitly.
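One common way to organize such settings is to give optional values a default and fail fast when a required secret is missing. The sketch below illustrates that pattern only. The helper `load_setting`, the `INFO_GPT_DEMO_TOP_K` name, and the use of environment variables are assumptions made for illustration; they are not taken from the package's `constants.py`.

```python
import os
from typing import Optional


def load_setting(name: str, default: Optional[str] = None) -> str:
    """Return the setting from the environment, falling back to a default if one is given."""
    value = os.environ.get(name, default)
    if value is None:
        raise RuntimeError(f"Missing required setting: {name}")
    return value


# Demo only: pretend the secret was exported in the shell.
os.environ["SLACK_TOKEN"] = "xoxb-placeholder"

# Required secret: no default, so a missing value raises immediately.
SLACK_TOKEN = load_setting("SLACK_TOKEN")

# Hypothetical optional setting with a default value.
TOP_K = int(load_setting("INFO_GPT_DEMO_TOP_K", "4"))
```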

Data is ingested through the `Ingest` class:

    import asyncio

    from info_gpt.ingest import Ingest

    ingester = Ingest()

    # Ingest data from GitHub
    asyncio.run(ingester.load_github("<org_name_here>", ".md"))

    # Ingest data from Confluence pages
    ingester.load_confluence()

- Build the Docker image locally using `docker build --build-arg SLACK_TOKEN=$SLACK_TOKEN -t info-gpt .`
- Run the API using Docker Compose: `docker compose up`
- You can use ngrok to expose the localhost URL to the internet using `ngrok http 8000 --host-header="localhost:8000"`

---- WIP ----