Hanoona Rasheed*, Muhammad Maaz*, Sahal Shaji, Abdelrahman Shaker, Salman Khan, Hisham Cholakkal, Rao M. Anwer, Eric Xing, Ming-Hsuan Yang and Fahad Khan

* Equally contributing first authors

Mohamed bin Zayed University of AI, Australian National University, Aalto University, Carnegie Mellon University, University of California - Merced, Linköping University, Google Research

- Dec-27-23: GLaMM training and evaluation codes, pretrained checkpoints and GranD-f dataset are released (click for details). 🔥🔥

- Nov-29-23: GLaMM online interactive demo is released (demo link). 🔥

- Nov-07-23: GLaMM paper is released (arxiv link). 🌟

- 🌟 Featured: GLaMM is now highlighted at the top on AK's Daily Papers page on HuggingFace! 🌟

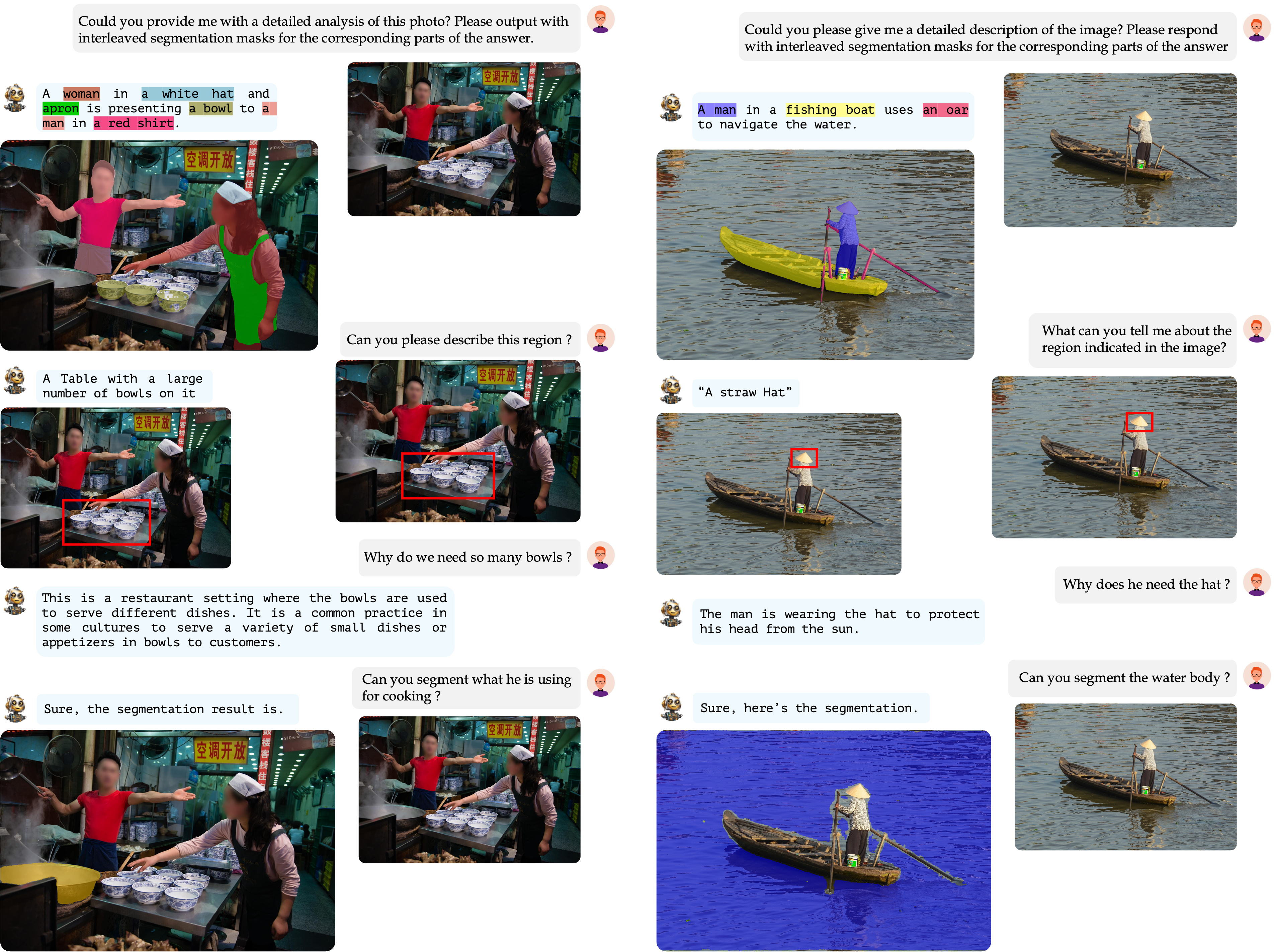

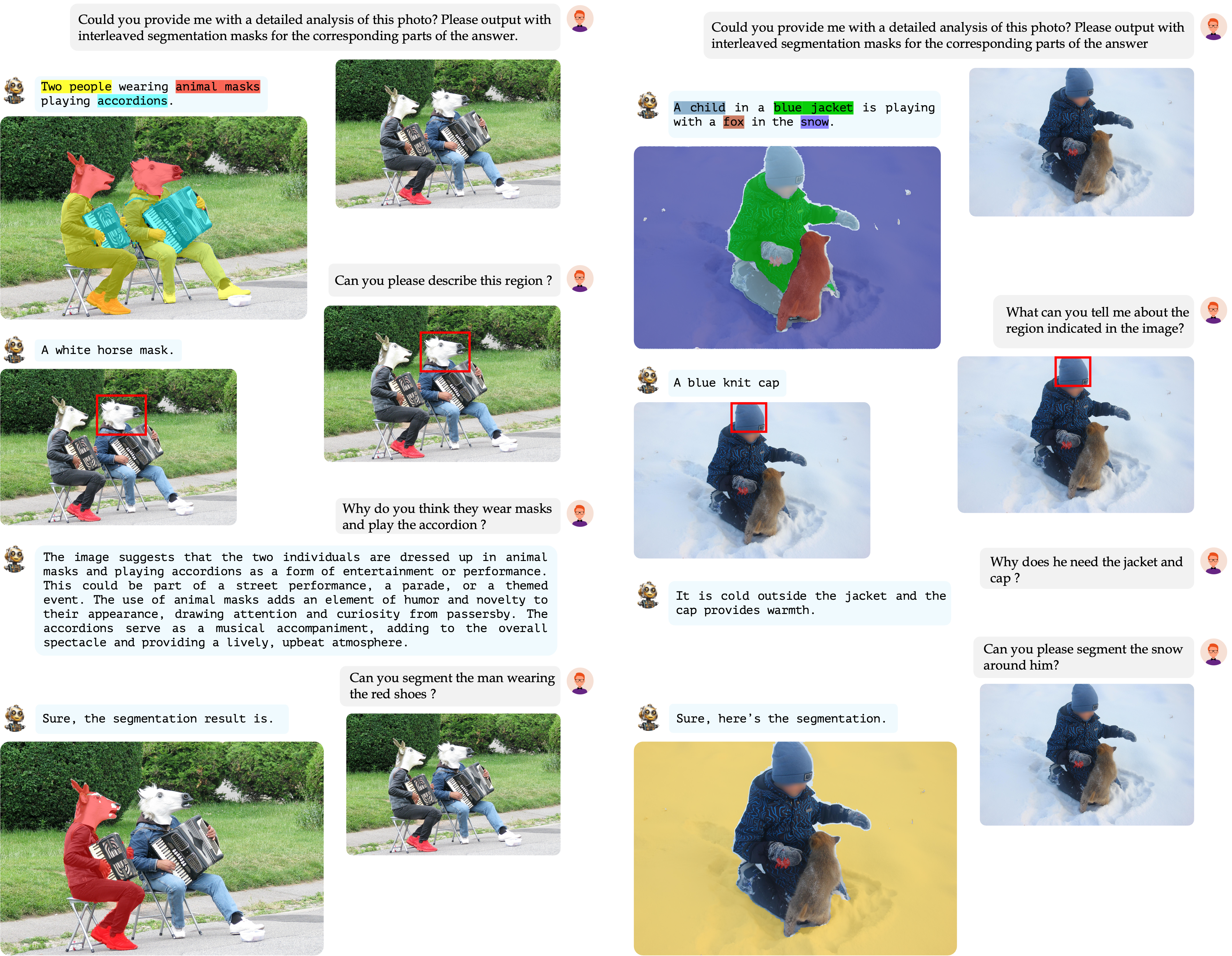

Grounding Large Multimodal Model (GLaMM) is an end-to-end trained LMM which provides visual grounding capabilities with the flexibility to process both image and region inputs. This enables the new unified task of Grounded Conversation Generation that combines phrase grounding, referring expression segmentation, and vision-language conversations. Equipped with the capability for detailed region understanding, pixel-level groundings, and conversational abilities, GLaMM offers a versatile capability to interact with visual inputs provided by the user at multiple granularity levels.

- GLaMM Introduction. We present the Grounding Large Multimodal Model (GLaMM), the first-of-its-kind model capable of generating natural language responses that are seamlessly integrated with object segmentation masks.

- Novel Task & Evaluation. We propose a new task of Grounded Conversation Generation (GCG). We also introduce a comprehensive evaluation protocol for this task.

- GranD Dataset Creation. We create the GranD - Grounding-anything Dataset, a large-scale densely annotated dataset with 7.5M unique concepts grounded in 810M regions.

Delve into the core of GLaMM with our detailed guides on the model's Training and Evaluation methodologies.

- Installation: Provides a guide to set up the conda environment for running GLaMM training, evaluation and demo.

- Datasets: Provides detailed instructions to download and arrange the datasets required for training and evaluation.

- Model Zoo: Provides download links to all pretrained GLaMM checkpoints.

- Training: Provides instructions on how to train the GLaMM model for its various capabilities, including Grounded Conversation Generation (GCG), region-level captioning, and referring expression segmentation.

- Evaluation: Outlines the procedures for evaluating the GLaMM model using pretrained checkpoints, covering Grounded Conversation Generation (GCG), region-level captioning, and referring expression segmentation, as reported in our paper.

- Demo: Guides you through setting up a local demo to showcase GLaMM's functionalities.

The components of GLaMM are cohesively designed to handle both textual and optional visual prompts (image level and region of interest), allowing for interaction at multiple levels of granularity, and generating grounded text responses.

The Grounding-anything Dataset (GranD) is a large-scale dataset built with an automated annotation pipeline for detailed region-level understanding and segmentation masks. GranD comprises 7.5M unique concepts anchored in a total of 810M regions, each with a segmentation mask.

Below we present some examples of the GranD dataset.

GranD-f is designed for the GCG task and provides about 214K image-grounded text pairs as higher-quality data for the fine-tuning stage.

Grounded Conversation Generation (GCG) is a task to create image-level captions tied to segmentation masks, enhancing the model’s visual grounding in natural language captioning.

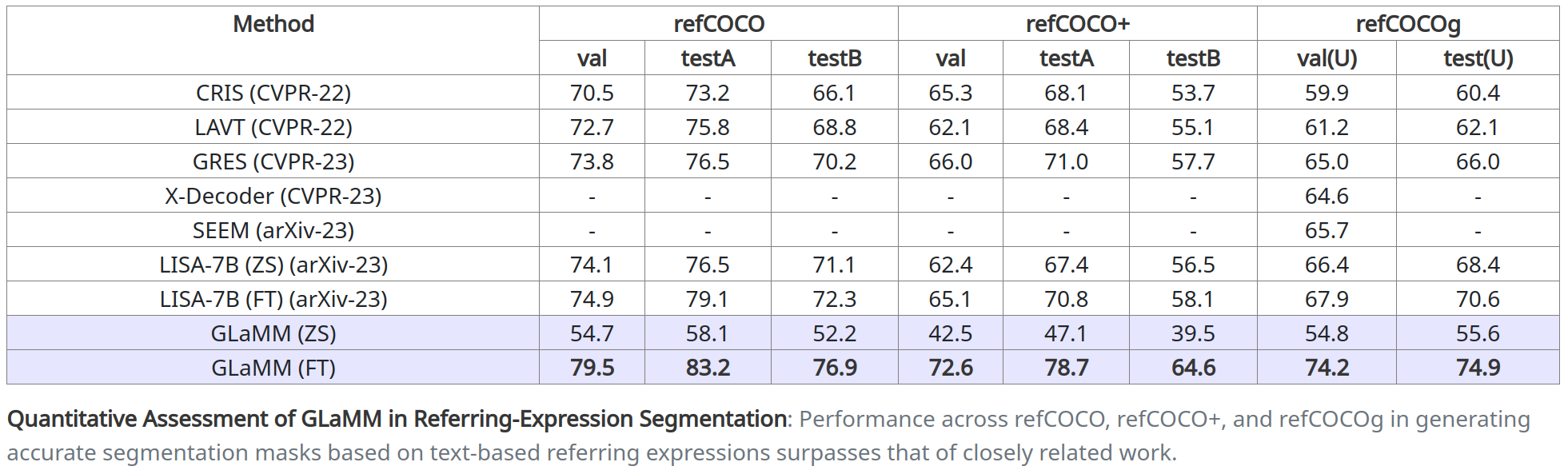
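To make the idea of a caption "tied to segmentation masks" concrete, here is a minimal sketch of how a GCG-style result could be represented in memory. It is an illustration only, not GLaMM's actual output format: the names `GroundedPhrase`, `GroundedCaption`, `mask_area`, and `build_example`, the character-span encoding of phrases, and the nested-list binary masks are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical mask representation: rows of 0/1 values, one row per image row.
Mask = List[List[int]]


@dataclass
class GroundedPhrase:
    """A caption phrase (as a character span) paired with one segmentation mask."""
    start: int  # inclusive character offset into the caption
    end: int    # exclusive character offset into the caption
    mask: Mask


@dataclass
class GroundedCaption:
    """An image-level caption whose phrases are each grounded in a mask."""
    text: str
    phrases: List[GroundedPhrase]

    def phrase_text(self, phrase: GroundedPhrase) -> str:
        return self.text[phrase.start:phrase.end]

    def validate(self, height: int, width: int) -> None:
        """Check that spans lie inside the caption and masks match the image size."""
        for p in self.phrases:
            if not (0 <= p.start < p.end <= len(self.text)):
                raise ValueError(f"span ({p.start}, {p.end}) is outside the caption")
            if len(p.mask) != height or any(len(row) != width for row in p.mask):
                raise ValueError("mask shape does not match the image size")


def mask_area(mask: Mask) -> int:
    """Number of pixels covered by a binary mask."""
    return sum(sum(row) for row in mask)


def build_example() -> GroundedCaption:
    """Toy 2x3 'image' with two grounded phrases (made-up data)."""
    text = "A dog sits on the grass"
    dog = GroundedPhrase(0, 5, [[1, 0, 0], [0, 0, 0]])
    grass = GroundedPhrase(14, 23, [[0, 0, 0], [1, 1, 1]])
    return GroundedCaption(text, [dog, grass])


if __name__ == "__main__":
    caption = build_example()
    caption.validate(height=2, width=3)
    for p in caption.phrases:
        print(caption.phrase_text(p), mask_area(p.mask))
```

Keeping the phrase as a span into the caption, rather than as a separate string, means each mask stays attached to a specific position in the text even when the same words occur more than once.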

Our model excels in creating segmentation masks from text-based referring expressions.

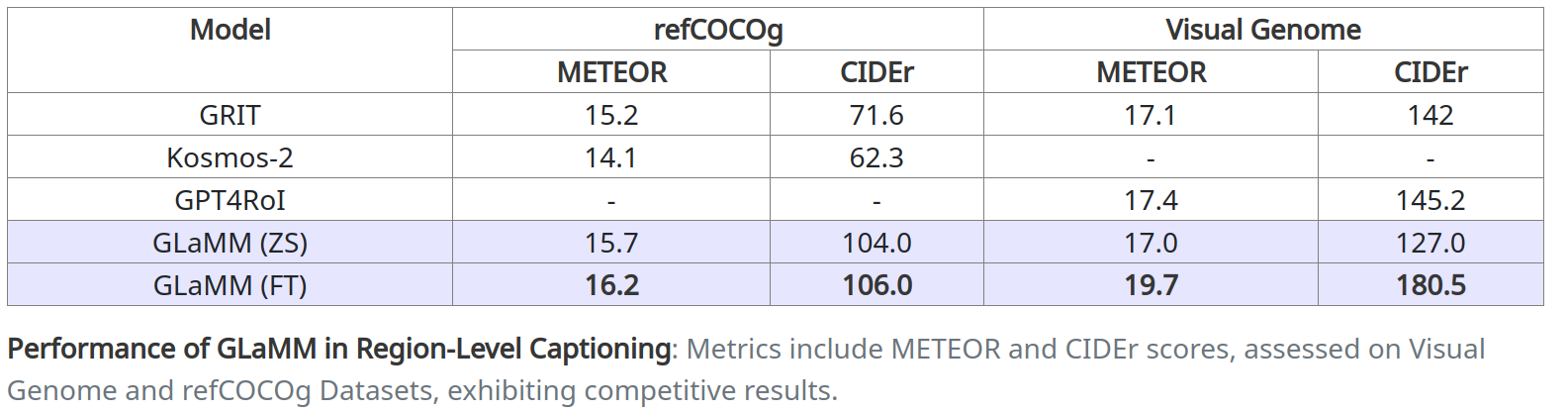

GLaMM generates detailed region-specific captions and answers reasoning-based visual questions.

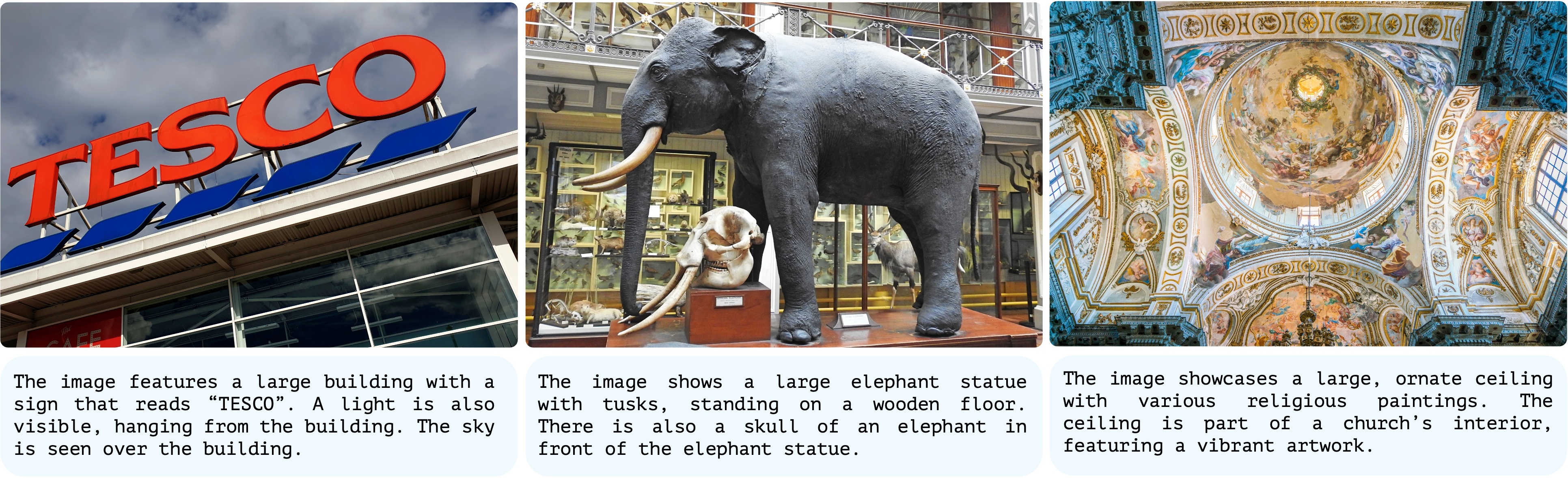

Comparing favorably to specialized models, GLaMM provides high-quality image captioning.

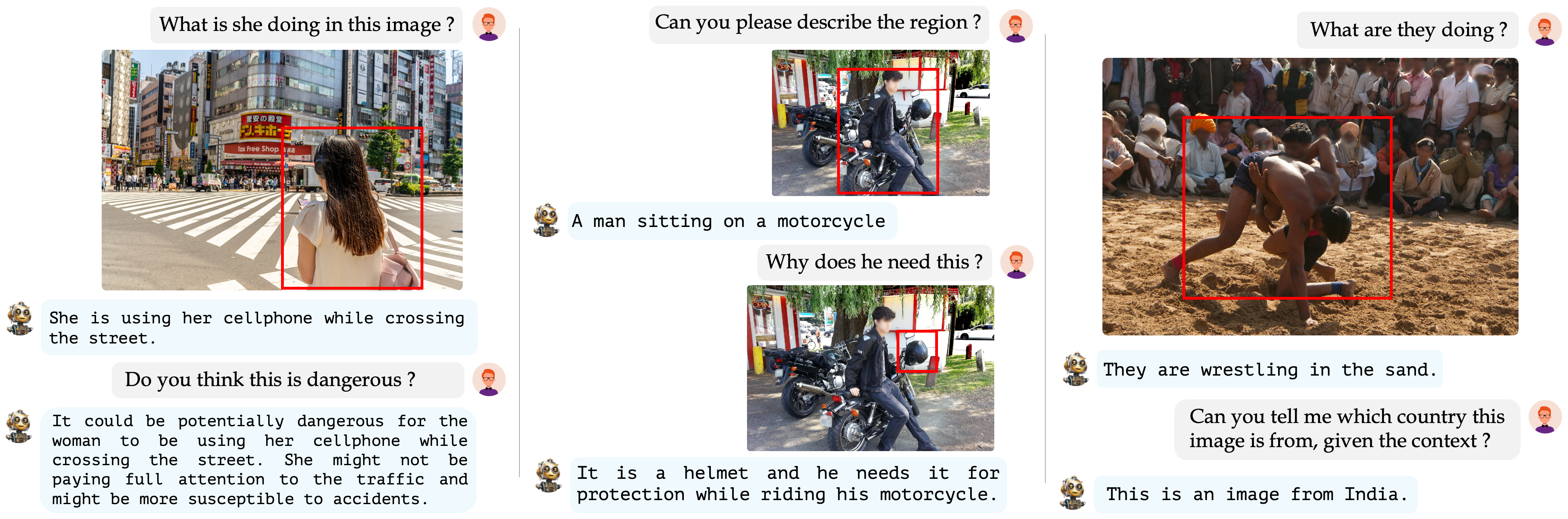

GLaMM engages in detailed, region-specific, and grounded conversations. This highlights its adaptability in intricate vision-language interactions while robustly retaining the reasoning capabilities inherent to LLMs.

@article{hanoona2023GLaMM,
  title={GLaMM: Pixel Grounding Large Multimodal Model},
  author={Rasheed, Hanoona and Maaz, Muhammad and Shaji, Sahal and Shaker, Abdelrahman and Khan, Salman and Cholakkal, Hisham and Anwer, Rao M. and Xing, Eric and Yang, Ming-Hsuan and Khan, Fahad S.},
  journal={ArXiv 2311.03356},
  year={2023}
}

We are thankful to LLaVA, GPT4ROI, and LISA for releasing their models and code as open-source contributions.